1. Get the FileSystem

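    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.FileSystem;

    /**
     * Get the FileSystem.
     *
     * @return a FileSystem handle for the configured HDFS address
     * @throws Exception if the file system cannot be obtained
     */
    public static FileSystem getFileSystem() throws Exception {
        Configuration configuration = new Configuration();
        // fs.defaultFS tells the client which file system URI to connect to
        configuration.set("fs.defaultFS", "hdfs://chenzy-1:9000");
        // get filesystem
        FileSystem fileSystem = FileSystem.get(configuration);
        System.out.println(fileSystem);
        return fileSystem;
    }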

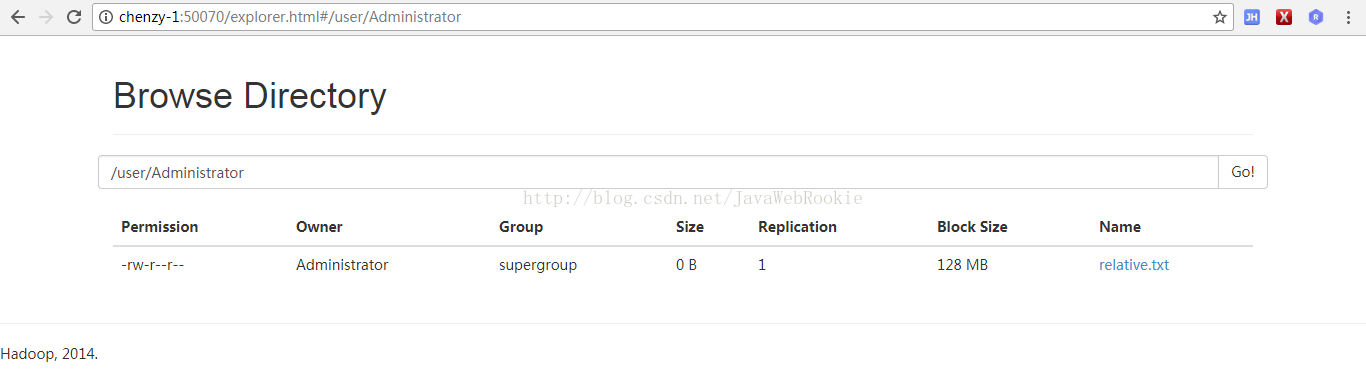

2. Create a file

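    import org.apache.hadoop.fs.FileSystem;
    import org.apache.hadoop.fs.Path;

    // Relative path: the file is created at /user/Administrator/<file name>
    private static void relativePath() throws Exception {
        // get filesystem
        FileSystem fileSystem = getFileSystem();
        // createNewFile returns false if the file already exists
        boolean b = fileSystem.createNewFile(new Path("relative.txt"));
        System.out.println(b);
        fileSystem.close();
    }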

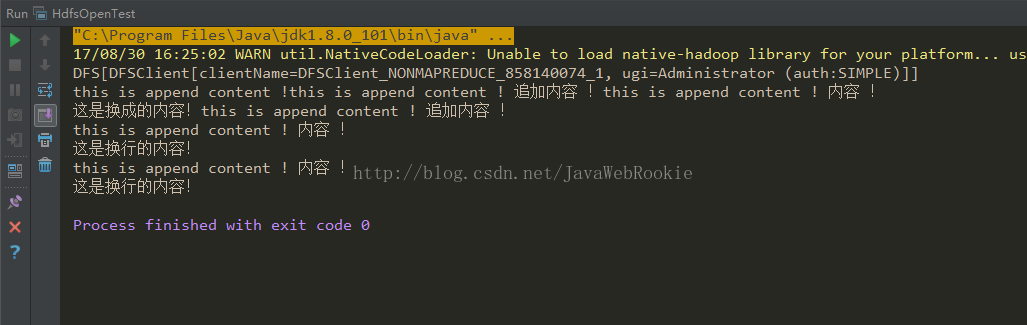
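The file lands under `/user/Administrator/` because HDFS resolves a relative path against the current user's home directory. The following is a rough, stdlib-only sketch of that resolution using `java.net.URI`. It does not call Hadoop, and the class and method names (`RelativePathSketch`, `resolve`) are made up for illustration; the home directory is assumed from the comment in the code above.

```java
import java.net.URI;

class RelativePathSketch {
    // Hypothetical helper: resolve a path against a home directory URI.
    static URI resolve(String homeDir, String path) {
        // The trailing slash matters: without it, URI resolution would
        // replace the last segment instead of appending to it.
        String base = homeDir.endsWith("/") ? homeDir : homeDir + "/";
        return URI.create(base).resolve(path);
    }

    public static void main(String[] args) {
        String home = "hdfs://chenzy-1:9000/user/Administrator";
        // A relative name is appended to the home directory.
        System.out.println(resolve(home, "relative.txt"));
        // An absolute path ignores the home directory.
        System.out.println(resolve(home, "/chenzy/relative.txt"));
    }
}
```

On a real cluster, `fileSystem.makeQualified(new Path("relative.txt"))` should report the fully qualified location that Hadoop itself would use.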

3. Append content to a file

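    import java.io.BufferedWriter;
    import java.io.OutputStreamWriter;
    import java.nio.charset.StandardCharsets;
    import org.apache.hadoop.fs.FSDataOutputStream;
    import org.apache.hadoop.fs.FileSystem;
    import org.apache.hadoop.fs.Path;

    // Append raw bytes to an existing file
    private static void appendContent() throws Exception {
        // get filesystem
        FileSystem fileSystem = getFileSystem();
        Path path = new Path("/chenzy/mapreduce/wordcount/input/test.txt");
        FSDataOutputStream append = fileSystem.append(path);
        // Encode as UTF-8 explicitly instead of using the platform default charset
        append.write("this is append content ! 追加内容 !".getBytes(StandardCharsets.UTF_8));
        append.close();
        fileSystem.close();
    }

    // Append text line by line through a BufferedWriter
    private static void bufferedContent() throws Exception {
        // get filesystem
        FileSystem fileSystem = getFileSystem();
        Path path = new Path("/chenzy/mapreduce/wordcount/input/test.txt");
        FSDataOutputStream append = fileSystem.append(path);
        BufferedWriter bufferedWriter =
                new BufferedWriter(new OutputStreamWriter(append, StandardCharsets.UTF_8));
        bufferedWriter.newLine();
        bufferedWriter.write("this is append content ! 内容 !");
        bufferedWriter.newLine();
        bufferedWriter.write("这是换行的内容!");
        bufferedWriter.newLine();
        // Closing the writer flushes it and closes the underlying HDFS stream
        bufferedWriter.close();
        fileSystem.close();
    }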
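The appended strings contain Chinese characters, so the charset used to turn them into bytes decides what ends up in the file. A minimal stdlib-only sketch of the encoding step follows. The class and helper names (`Utf8BytesSketch`, `encode`, `decode`) are hypothetical, and the sample text is the four characters 追加内容 written as Unicode escapes so the snippet compiles regardless of source file encoding.

```java
import java.nio.charset.StandardCharsets;

class Utf8BytesSketch {
    // Hypothetical helper: encode text with an explicit charset.
    static byte[] encode(String text) {
        return text.getBytes(StandardCharsets.UTF_8);
    }

    // Hypothetical helper: decode bytes with the same charset.
    static String decode(byte[] bytes) {
        return new String(bytes, StandardCharsets.UTF_8);
    }

    public static void main(String[] args) {
        String text = "\u8ffd\u52a0\u5185\u5bb9"; // four CJK characters
        byte[] bytes = encode(text);
        // Each of these characters takes three bytes in UTF-8.
        System.out.println(bytes.length); // prints 12
        // Decoding with the same charset restores the original string.
        System.out.println(decode(bytes).equals(text)); // prints true
    }
}
```

Calling `getBytes()` with no argument would use the JVM's default charset instead, which can differ between a Windows client and a Linux cluster node and may leave the file unreadable when it is opened as UTF-8.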

4. Read a file

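    import java.io.BufferedReader;
    import java.io.InputStream;
    import java.io.InputStreamReader;
    import java.nio.charset.StandardCharsets;
    import org.apache.hadoop.fs.FileSystem;
    import org.apache.hadoop.fs.Path;

    // Read a file line by line
    private static void openRead() throws Exception {
        // get filesystem
        FileSystem fileSystem = getFileSystem();
        InputStream is = fileSystem.open(new Path("/chenzy/mapreduce/wordcount/input/test.txt"));
        BufferedReader bufferedReader =
                new BufferedReader(new InputStreamReader(is, StandardCharsets.UTF_8));
        String line;
        while ((line = bufferedReader.readLine()) != null) {
            System.out.println(line);
        }
        // Closing the reader also closes the wrapped input stream
        bufferedReader.close();
        fileSystem.close();
    }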
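If `readLine()` throws in the method above, none of the `close()` calls run. One common way to avoid that is try-with-resources, sketched below against a plain `InputStream` so it runs without a cluster. `ReadLinesSketch` and `readLines` are hypothetical names, and the `ByteArrayInputStream` is only a stand-in for the stream that `fileSystem.open(...)` returns.

```java
import java.io.BufferedReader;
import java.io.ByteArrayInputStream;
import java.io.IOException;
import java.io.InputStream;
import java.io.InputStreamReader;
import java.io.UncheckedIOException;
import java.nio.charset.StandardCharsets;
import java.util.ArrayList;
import java.util.List;

class ReadLinesSketch {
    // Hypothetical helper: read every line of a stream as UTF-8 text.
    static List<String> readLines(InputStream in) {
        List<String> lines = new ArrayList<>();
        // The reader (and the stream it wraps) is closed even if readLine() throws.
        try (BufferedReader reader =
                     new BufferedReader(new InputStreamReader(in, StandardCharsets.UTF_8))) {
            String line;
            while ((line = reader.readLine()) != null) {
                lines.add(line);
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return lines;
    }

    public static void main(String[] args) {
        // Stand-in for an HDFS stream: two lines held in memory.
        byte[] data = "first line\nsecond line\n".getBytes(StandardCharsets.UTF_8);
        for (String line : readLines(new ByteArrayInputStream(data))) {
            System.out.println(line);
        }
    }
}
```

Because the stream returned by `fileSystem.open(...)` is an `InputStream`, the same helper should accept it unchanged; the `FileSystem` itself is also closeable and could be placed in the same `try` header.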

5. Copy between the local file system and HDFS

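    import org.apache.hadoop.fs.FileSystem;
    import org.apache.hadoop.fs.Path;

    // Copy from the local file system to HDFS
    private static void copyFromlocal() throws Exception {
        // get filesystem
        FileSystem fileSystem = getFileSystem();
        // First argument: local source; second argument: HDFS destination
        fileSystem.copyFromLocalFile(new Path("E:\\from\\new.txt"),
                new Path("/chenzy/mapreduce/wordcount/newMkdirs/test.txt"));
        fileSystem.close();
    }

    // Copy from HDFS to the local file system
    private static void copyTolocal() throws Exception {
        // get filesystem
        FileSystem fileSystem = getFileSystem();
        // First argument: HDFS source; second argument: local destination
        fileSystem.copyToLocalFile(new Path("/chenzy/mapreduce/wordcount/newMkdirs/test.txt"),
                new Path("E:\\from\\new2.txt"));
        fileSystem.close();
    }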
