1 Map Parameters

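While reading the official MapReduce documentation closely, I noticed that the section on Map parameters says metadata is stored in "accounting buffers", and I had no idea what that meant. Here is the original passage from the official documentation: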

A record emitted from a map will be serialized into a buffer and metadata will be stored into accounting buffers. As described in the following options, when either the serialization buffer or the metadata exceed a threshold, the contents of the buffers will be sorted and written to disk in the background while the map continues to output records. If either buffer fills completely while the spill is in progress, the map thread will block. When the map is finished, any remaining records are written to disk and all on-disk segments are merged into a single file. Minimizing the number of spills to disk can decrease map time, but a larger buffer also decreases the memory available to the mapper.

| Name | Type | Description |
|---|---|---|
| mapreduce.task.io.sort.mb | int | The cumulative size of the serialization and accounting buffers storing records emitted from the map, in megabytes. |
| mapreduce.map.sort.spill.percent | float | The soft limit in the serialization buffer. Once reached, a thread will begin to spill the contents to disk in the background. |
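To make the two parameters concrete, here is a minimal sketch of how they translate into byte counts. It mirrors the arithmetic of the `init()` method quoted later in this post; the class and method names (`SortBufferSizing`, `bufferBytes`, `softLimit`) are made up for illustration and are not part of Hadoop.

```java
// Hypothetical helper, not a Hadoop class: reproduces the sizing arithmetic
// used by MapOutputBuffer.init() as quoted later in this post.
final class SortBufferSizing {
    // One metadata entry is 4 ints = 16 bytes (METASIZE in the Hadoop source).
    static final int METASIZE = 16;

    /** Buffer size in bytes for a given mapreduce.task.io.sort.mb value. */
    static int bufferBytes(int sortMb) {
        int bytes = sortMb << 20;          // megabytes -> bytes
        return bytes - (bytes % METASIZE); // align to a whole metadata entry
    }

    /** Soft limit in bytes for a given mapreduce.map.sort.spill.percent value. */
    static int softLimit(int bufferBytes, float spillPercent) {
        return (int) (bufferBytes * spillPercent);
    }

    public static void main(String[] args) {
        int bytes = bufferBytes(100);      // 100 MB, the default used in this post
        System.out.println("buffer bytes = " + bytes);
        System.out.println("soft limit   = " + softLimit(bytes, 0.8f));
    }
}
```

With the values used in this post (100 MB and 0.8), the buffer is 104857600 bytes and the background spill is triggered once roughly 80% of it is in use.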

Other notes

- If either spill threshold is exceeded while a spill is in progress, collection will continue until the spill is finished. For example, if mapreduce.map.sort.spill.percent is set to 0.33, and the remainder of the buffer is filled while the spill runs, the next spill will include all the collected records, or 0.66 of the buffer, and will not generate additional spills. In other words, the thresholds are defining triggers, not blocking.

- A record larger than the serialization buffer will first trigger a spill, then be spilled to a separate file. It is undefined whether or not this record will first pass through the combiner.

2 Accounting buffer

2.1 Mapper task output

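- If the job needs no Reduce phase, the DirectMapOutputCollector class is used and the map output is written directly to the job output (HDFS). A record larger than the serialization buffer is a different case: as collect() in section 2.2.3 shows, it is spilled to its own file through spillSingleRecord.

- Otherwise the MapOutputBuffer class is used, and records are written into a circular buffer. The accounting buffer is the metadata region of that same buffer.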

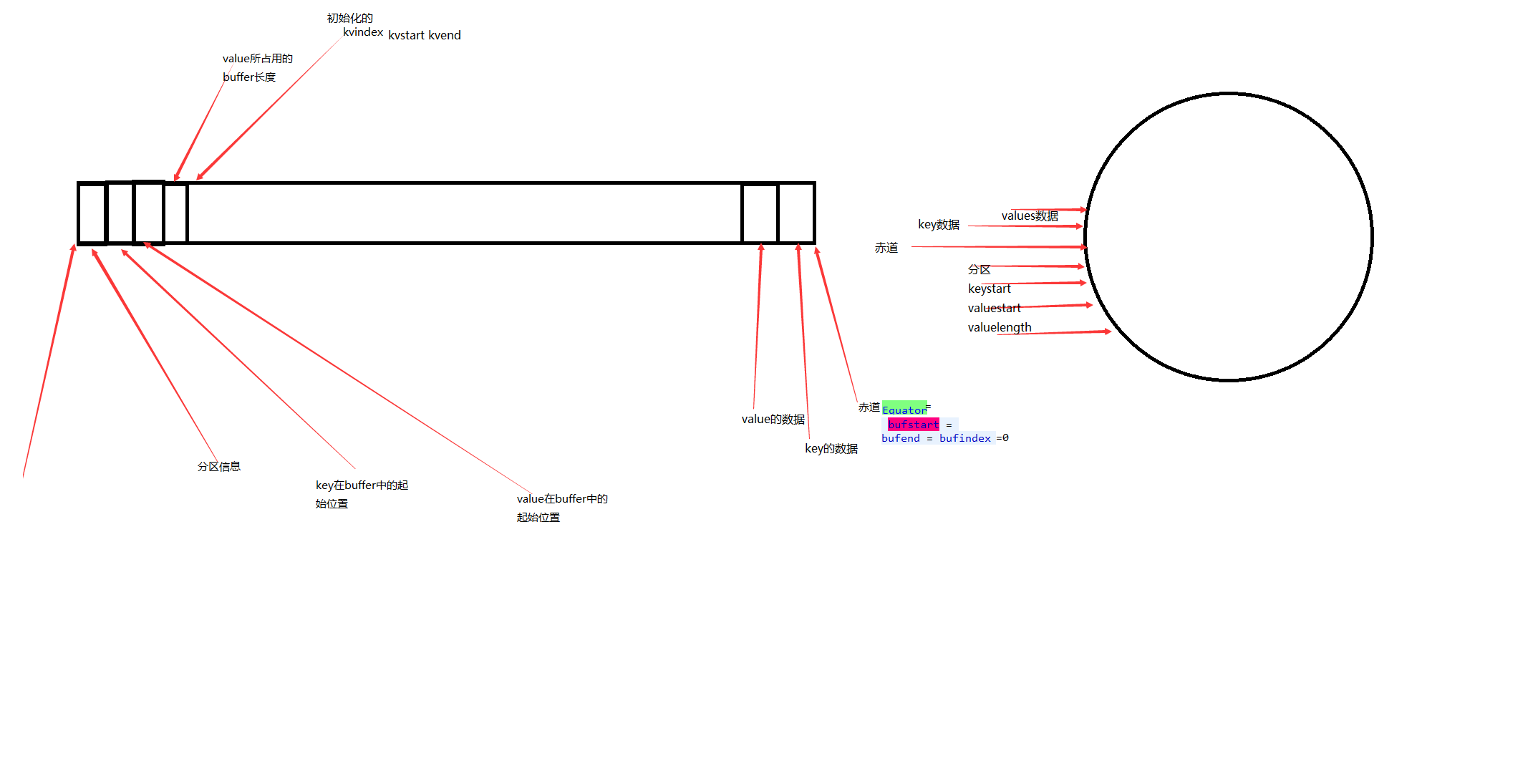

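- MapOutputBuffer index structure: the total size is set by the mapreduce.task.io.sort.mb property in the configuration file (default 100 MB). The figure above shows the storage layout.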
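The key point of this layout is that the serialized data and the metadata live in one and the same byte array: the metadata is only an int view over it. The following small sketch shows that idea with plain `java.nio`; the class `SharedBufferDemo` and its methods are hypothetical names used for illustration, and the 64-byte buffer stands in for the real one.

```java
import java.nio.ByteBuffer;
import java.nio.ByteOrder;
import java.nio.IntBuffer;

// Hypothetical demo class: shows that an IntBuffer view shares storage
// with the byte array it wraps, which is how kvmeta overlays kvbuffer.
final class SharedBufferDemo {

    /** Writes an int through the int view, then reads it back from the raw bytes. */
    static int roundTrip(int intIndex, int value) {
        byte[] kvbuffer = new byte[64];
        IntBuffer kvmeta = ByteBuffer.wrap(kvbuffer)
                .order(ByteOrder.nativeOrder())
                .asIntBuffer();
        kvmeta.put(intIndex, value); // write via the int view
        // int index i corresponds to byte offset 4 * i in the same array
        return ByteBuffer.wrap(kvbuffer)
                .order(ByteOrder.nativeOrder())
                .getInt(4 * intIndex);
    }

    /** Number of ints visible through the view of a byte array of the given length. */
    static int viewCapacity(int byteLength) {
        return ByteBuffer.wrap(new byte[byteLength]).asIntBuffer().capacity();
    }

    public static void main(String[] args) {
        System.out.println(roundTrip(15, 42));
        System.out.println(viewCapacity(64));
    }
}
```

This is also why the source code below keeps converting between an int index and a byte offset with a factor of 4.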

2.2 Accounting buffer parameters

2.2.1 The MapOutputBuffer class

@InterfaceAudience.LimitedPrivate({"MapReduce"})

@InterfaceStability.Unstable

public static class MapOutputBuffer<K extends Object, V extends Object>

implements MapOutputCollector<K, V>, IndexedSortable {

private int partitions;

private JobConf job;

private TaskReporter reporter;

private Class<K> keyClass;

private Class<V> valClass;

private RawComparator<K> comparator;

private SerializationFactory serializationFactory;

private Serializer<K> keySerializer;

private Serializer<V> valSerializer;

private CombinerRunner<K,V> combinerRunner;

private CombineOutputCollector<K, V> combineCollector;

// Compression for map-outputs

private CompressionCodec codec;

// k/v accounting

private IntBuffer kvmeta; // buffer that stores the metadata

int kvstart; // marks origin of spill metadata

int kvend; // marks end of spill metadata

int kvindex; // marks end of fully serialized records

int equator; // marks origin of meta/serialization: the boundary between metadata and serialized data

int bufstart; // marks beginning of spill

int bufend; // marks beginning of collectable, i.e. the end of the data being spilled

int bufmark; // marks end of record

int bufindex; // marks end of collected: the current write position for serialized data

int bufvoid; // marks the point where we should stop

// reading at the end of the buffer

byte[] kvbuffer; // main output buffer

private final byte[] b0 = new byte[0]; // empty byte array, used for zero-length writes

private static final int VALSTART = 0; // val offset in acct: slot of the value start within a metadata entry

private static final int KEYSTART = 1; // key offset in acct: slot of the key start within a metadata entry

private static final int PARTITION = 2; // partition offset in acct: slot of the partition within a metadata entry

private static final int VALLEN = 3; // length of value

private static final int NMETA = 4; // num meta ints: ints per metadata entry

private static final int METASIZE = NMETA * 4; // size in bytes of one metadata entry

// spill accounting

private int maxRec;

private int softLimit;

boolean spillInProgress;

int bufferRemaining;

volatile Throwable sortSpillException = null;

int numSpills = 0;

private int minSpillsForCombine;

private IndexedSorter sorter;

final ReentrantLock spillLock = new ReentrantLock();

final Condition spillDone = spillLock.newCondition();

final Condition spillReady = spillLock.newCondition();

final BlockingBuffer bb = new BlockingBuffer();

volatile boolean spillThreadRunning = false;

final SpillThread spillThread = new SpillThread();

private FileSystem rfs;

// Counters

private Counters.Counter mapOutputByteCounter;

private Counters.Counter mapOutputRecordCounter;

private Counters.Counter fileOutputByteCounter;

final ArrayList<SpillRecord> indexCacheList =

new ArrayList<SpillRecord>();

private int totalIndexCacheMemory;

private int indexCacheMemoryLimit;

private static final int INDEX_CACHE_MEMORY_LIMIT_DEFAULT = 1024 * 1024;

private MapTask mapTask;

private MapOutputFile mapOutputFile;

private Progress sortPhase;

private Counters.Counter spilledRecordsCounter;

public MapOutputBuffer() {

}

...

}

2.2.2 MapOutputBuffer parameter initialization
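One detail of `init()` that is easy to skip over is the sanity check `(sortmb & 0x7FF) != sortmb`. A minimal sketch of what it accepts, assuming nothing beyond the expression itself (the class name `SortMbCheck` is made up for illustration): only values from 0 to 2047 pass, which keeps `sortmb << 20` inside the range of a positive `int`.

```java
// Hypothetical demo class: isolates the bit-mask check that init() applies
// to mapreduce.task.io.sort.mb.
final class SortMbCheck {

    /** True when the value survives the 11-bit mask, i.e. lies in 0..2047. */
    static boolean isValid(int sortMb) {
        return (sortMb & 0x7FF) == sortMb;
    }

    public static void main(String[] args) {
        for (int mb : new int[] {100, 2047, 2048, -1}) {
            // Shifting by 20 converts megabytes to bytes; 2048 << 20 overflows int.
            System.out.println(mb + " valid=" + isValid(mb) + " bytes=" + (mb << 20));
        }
    }
}
```

The upper bound follows from the buffer being a single Java `byte[]`, whose length is an `int`.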

// Initialize the buffer

public void init(MapOutputCollector.Context context

) throws IOException, ClassNotFoundException {

job = context.getJobConf();

reporter = context.getReporter();

mapTask = context.getMapTask();

mapOutputFile = mapTask.getMapOutputFile();

sortPhase = mapTask.getSortPhase();

spilledRecordsCounter = reporter.getCounter(TaskCounter.SPILLED_RECORDS);

partitions = job.getNumReduceTasks();

rfs = ((LocalFileSystem)FileSystem.getLocal(job)).getRaw();

//sanity checks

final float spillper =

job.getFloat(JobContext.MAP_SORT_SPILL_PERCENT, (float)0.8);

final int sortmb = job.getInt(MRJobConfig.IO_SORT_MB,

MRJobConfig.DEFAULT_IO_SORT_MB); // read the sort buffer size from the configuration; default 100 MB

indexCacheMemoryLimit = job.getInt(JobContext.INDEX_CACHE_MEMORY_LIMIT,

INDEX_CACHE_MEMORY_LIMIT_DEFAULT);

if (spillper > (float)1.0 || spillper <= (float)0.0) {

throw new IOException("Invalid \"" + JobContext.MAP_SORT_SPILL_PERCENT +

"\": " + spillper);

}

if ((sortmb & 0x7FF) != sortmb) {

throw new IOException(

"Invalid \"" + JobContext.IO_SORT_MB + "\": " + sortmb);

}

sorter = ReflectionUtils.newInstance(job.getClass(

MRJobConfig.MAP_SORT_CLASS, QuickSort.class,

IndexedSorter.class), job);

// buffers and accounting

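int maxMemUsage = sortmb << 20; // convert megabytes to bytes

maxMemUsage -= maxMemUsage % METASIZE;

kvbuffer = new byte[maxMemUsage]; // allocate the byte array

bufvoid = kvbuffer.length; // end of the buffer, i.e. the length of the byte array

kvmeta = ByteBuffer.wrap(kvbuffer) // view the byte array as an int buffer; it shares storage with kvbuffer, one int per four bytes

.order(ByteOrder.nativeOrder())

.asIntBuffer();

setEquator(0); // initialize the equator (the boundary between metadata and serialized data) and related fields

bufstart = bufend = bufindex = equator; // initialize the spill start and end positions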

kvstart = kvend = kvindex;

maxRec = kvmeta.capacity() / NMETA;

softLimit = (int)(kvbuffer.length * spillper); // compute the spill threshold; 80% of the buffer by default

bufferRemaining = softLimit;

LOG.info(JobContext.IO_SORT_MB + ": " + sortmb);

LOG.info("soft limit at " + softLimit);

LOG.info("bufstart = " + bufstart + "; bufvoid = " + bufvoid);

LOG.info("kvstart = " + kvstart + "; length = " + maxRec);

// k/v serialization setup

comparator = job.getOutputKeyComparator();

keyClass = (Class<K>)job.getMapOutputKeyClass();

valClass = (Class<V>)job.getMapOutputValueClass();

serializationFactory = new SerializationFactory(job);

keySerializer = serializationFactory.getSerializer(keyClass);

keySerializer.open(bb);

valSerializer = serializationFactory.getSerializer(valClass);

valSerializer.open(bb);

// output counters

mapOutputByteCounter = reporter.getCounter(TaskCounter.MAP_OUTPUT_BYTES);

mapOutputRecordCounter =

reporter.getCounter(TaskCounter.MAP_OUTPUT_RECORDS);

fileOutputByteCounter = reporter

.getCounter(TaskCounter.MAP_OUTPUT_MATERIALIZED_BYTES);

// compression

if (job.getCompressMapOutput()) {

Class<? extends CompressionCodec> codecClass =

job.getMapOutputCompressorClass(DefaultCodec.class);

codec = ReflectionUtils.newInstance(codecClass, job);

} else {

codec = null;

}

// combiner

final Counters.Counter combineInputCounter =

reporter.getCounter(TaskCounter.COMBINE_INPUT_RECORDS);

combinerRunner = CombinerRunner.create(job, getTaskID(),

combineInputCounter,

reporter, null);

if (combinerRunner != null) {

final Counters.Counter combineOutputCounter =

reporter.getCounter(TaskCounter.COMBINE_OUTPUT_RECORDS);

combineCollector= new CombineOutputCollector<K,V>(combineOutputCounter, reporter, job);

} else {

combineCollector = null;

}

spillInProgress = false;

minSpillsForCombine = job.getInt(JobContext.MAP_COMBINE_MIN_SPILLS, 3);

// spilling is handled by a dedicated thread

spillThread.setDaemon(true);

spillThread.setName("SpillThread");

spillLock.lock();

try {

spillThread.start();

while (!spillThreadRunning) {

spillDone.await();

}

} catch (InterruptedException e) {

throw new IOException("Spill thread failed to initialize", e);

} finally {

spillLock.unlock();

}

if (sortSpillException != null) {

throw new IOException("Spill thread failed to initialize",

sortSpillException);

}

}

2.2.3 Buffering data in MapOutputBuffer

/**

* Serialize the key, value to intermediate storage.

* When this method returns, kvindex must refer to sufficient unused

* storage to store one METADATA.

*/

public synchronized void collect(K key, V value, final int partition

) throws IOException {

reporter.progress();

if (key.getClass() != keyClass) {

throw new IOException("Type mismatch in key from map: expected "

+ keyClass.getName() + ", received "

+ key.getClass().getName());

}

if (value.getClass() != valClass) {

throw new IOException("Type mismatch in value from map: expected "

+ valClass.getName() + ", received "

+ value.getClass().getName());

}

if (partition < 0 || partition >= partitions) {

throw new IOException("Illegal partition for " + key + " (" +

partition + ")");

}

checkSpillException();

bufferRemaining -= METASIZE; // every collected record reserves METASIZE (16) bytes, i.e. four metadata ints

if (bufferRemaining <= 0) { // not enough room left: check whether a spill must be started

// start spill if the thread is not running and the soft limit has been

// reached

spillLock.lock();

try {

do {

if (!spillInProgress) {

final int kvbidx = 4 * kvindex;

final int kvbend = 4 * kvend;

// serialized, unspilled bytes always lie between kvindex and

// bufindex, crossing the equator. Note that any void space

// created by a reset must be included in "used" bytes

final int bUsed = distanceTo(kvbidx, bufindex);

final boolean bufsoftlimit = bUsed >= softLimit;

if ((kvbend + METASIZE) % kvbuffer.length !=

equator - (equator % METASIZE)) {

// spill finished, reclaim space

resetSpill();

bufferRemaining = Math.min(

distanceTo(bufindex, kvbidx) - 2 * METASIZE,

softLimit - bUsed) - METASIZE;

continue;

} else if (bufsoftlimit && kvindex != kvend) {

// spill records, if any collected; check latter, as it may

// be possible for metadata alignment to hit spill pcnt

startSpill();

final int avgRec = (int)

(mapOutputByteCounter.getCounter() /

mapOutputRecordCounter.getCounter());

// leave at least half the split buffer for serialization data

// ensure that kvindex >= bufindex

final int distkvi = distanceTo(bufindex, kvbidx);

final int newPos = (bufindex +

Math.max(2 * METASIZE - 1,

Math.min(distkvi / 2,

distkvi / (METASIZE + avgRec) * METASIZE)))

% kvbuffer.length;

setEquator(newPos);

bufmark = bufindex = newPos;

final int serBound = 4 * kvend;

// bytes remaining before the lock must be held and limits

// checked is the minimum of three arcs: the metadata space, the

// serialization space, and the soft limit

bufferRemaining = Math.min(

// metadata max

distanceTo(bufend, newPos),

Math.min(

// serialization max

distanceTo(newPos, serBound),

// soft limit

softLimit)) - 2 * METASIZE;

}

}

} while (false);

} finally {

spillLock.unlock();

}

}

try {

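// serialize key bytes into buffer; this path is reached whether or not a spill was started above

int keystart = bufindex;

keySerializer.serialize(key); // serializes the key into the buffer and advances bufindex as a side effect (section 2.2.4 shows how writing the bytes moves the index)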

if (bufindex < keystart) {

// wrapped the key; must make contiguous

bb.shiftBufferedKey();

keystart = 0;

}

// serialize value bytes into buffer

final int valstart = bufindex;

valSerializer.serialize(value); // serializes the value into the buffer and advances bufindex

// It's possible for records to have zero length, i.e. the serializer

// will perform no writes. To ensure that the boundary conditions are

// checked and that the kvindex invariant is maintained, perform a

// zero-length write into the buffer. The logic monitoring this could be

// moved into collect, but this is cleaner and inexpensive. For now, it

// is acceptable.

bb.write(b0, 0, 0);

// the record must be marked after the preceding write, as the metadata

// for this record are not yet written

int valend = bb.markRecord();

mapOutputRecordCounter.increment(1);

mapOutputByteCounter.increment(

distanceTo(keystart, valend, bufvoid));

// write accounting info

kvmeta.put(kvindex + PARTITION, partition); // store the partition number at kvindex + PARTITION

kvmeta.put(kvindex + KEYSTART, keystart); // store the start offset of the key at kvindex + KEYSTART

kvmeta.put(kvindex + VALSTART, valstart); // store the start offset of the value at kvindex + VALSTART

kvmeta.put(kvindex + VALLEN, distanceTo(valstart, valend)); // store the length of the value at kvindex + VALLEN

// advance kvindex

kvindex = (kvindex - NMETA + kvmeta.capacity()) % kvmeta.capacity(); // move kvindex back by NMETA ints (16 bytes), wrapping around the buffer

} catch (MapBufferTooSmallException e) {

LOG.info("Record too large for in-memory buffer: " + e.getMessage());

spillSingleRecord(key, value, partition);

mapOutputRecordCounter.increment(1);

return;

}

}
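The accounting part at the end of collect() can be isolated into a small sketch: each record gets a four-int metadata entry, and kvindex moves backwards through the buffer, wrapping around at zero. The class `MetaRing` and its `record` method are hypothetical names for illustration, and the sketch ignores spilling and the serialized data entirely.

```java
import java.nio.ByteBuffer;
import java.nio.ByteOrder;
import java.nio.IntBuffer;

// Hypothetical demo class: models only the metadata bookkeeping of collect().
final class MetaRing {
    static final int VALSTART = 0;  // slot of the value start offset
    static final int KEYSTART = 1;  // slot of the key start offset
    static final int PARTITION = 2; // slot of the partition number
    static final int VALLEN = 3;    // slot of the value length
    static final int NMETA = 4;     // ints per metadata entry

    final IntBuffer kvmeta;
    int kvindex;

    MetaRing(int bytes) {
        kvmeta = ByteBuffer.wrap(new byte[bytes])
                .order(ByteOrder.nativeOrder())
                .asIntBuffer();
        // Same starting point that setEquator(0) computes: the last entry slot.
        kvindex = kvmeta.capacity() - NMETA;
    }

    /** Writes one metadata entry and returns the int index it was written at. */
    int record(int partition, int keystart, int valstart, int vallen) {
        int at = kvindex;
        kvmeta.put(at + PARTITION, partition);
        kvmeta.put(at + KEYSTART, keystart);
        kvmeta.put(at + VALSTART, valstart);
        kvmeta.put(at + VALLEN, vallen);
        // Step back one entry; adding capacity() keeps the modulo non-negative.
        kvindex = (kvindex - NMETA + kvmeta.capacity()) % kvmeta.capacity();
        return at;
    }

    public static void main(String[] args) {
        MetaRing ring = new MetaRing(64); // 16 ints, room for 4 entries
        for (int i = 0; i < 5; i++) {
            System.out.println("entry written at int index " + ring.record(0, i, i, 1));
        }
    }
}
```

In the real buffer the serialized data grows in the opposite direction from the same equator, so the two regions approach each other around the ring until a spill frees space.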

// Set the boundary between metadata and serialized data

private void setEquator(int pos) {

equator = pos;

// set index prior to first entry, aligned at meta boundary

final int aligned = pos - (pos % METASIZE);

// Cast one of the operands to long to avoid integer overflow

kvindex = (int)

(((long)aligned - METASIZE + kvbuffer.length) % kvbuffer.length) / 4; // with the default 100 MB buffer and pos = 0 this is 26214396, i.e. (104857600 - 16) / 4: the slot where the first metadata entry is written

LOG.info("(EQUATOR) " + pos + " kvi " + kvindex +

"(" + (kvindex * 4) + ")");

}

2.2.4 Serializing data

1. By default, serializing an object calls the write method that Buffer inherits from its parent class OutputStream:

public void write(byte b[]) throws IOException {

write(b, 0, b.length);

}

2. The write method as implemented by Buffer, which extends OutputStream:

// write data into the buffer

public void write(byte b[], int off, int len)

throws IOException {

// must always verify the invariant that at least METASIZE bytes are

// available beyond kvindex, even when len == 0

bufferRemaining -= len;

if (bufferRemaining <= 0) {

// writing these bytes could exhaust available buffer space or fill

// the buffer to soft limit. check if spill or blocking are necessary

boolean blockwrite = false;

spillLock.lock();

try {

do {

checkSpillException();

final int kvbidx = 4 * kvindex;

final int kvbend = 4 * kvend;

// ser distance to key index

final int distkvi = distanceTo(bufindex, kvbidx);

// ser distance to spill end index

final int distkve = distanceTo(bufindex, kvbend);

// if kvindex is closer than kvend, then a spill is neither in

// progress nor complete and reset since the lock was held. The

// write should block only if there is insufficient space to

// complete the current write, write the metadata for this record,

// and write the metadata for the next record. If kvend is closer,

// then the write should block if there is too little space for

// either the metadata or the current write. Note that collect

// ensures its metadata requirement with a zero-length write

blockwrite = distkvi <= distkve

? distkvi <= len + 2 * METASIZE

: distkve <= len || distanceTo(bufend, kvbidx) < 2 * METASIZE;

if (!spillInProgress) {

if (blockwrite) {

if ((kvbend + METASIZE) % kvbuffer.length !=

equator - (equator % METASIZE)) {

// spill finished, reclaim space

// need to use meta exclusively; zero-len rec & 100% spill

// pcnt would fail

resetSpill(); // resetSpill doesn't move bufindex, kvindex

bufferRemaining = Math.min(

distkvi - 2 * METASIZE,

softLimit - distanceTo(kvbidx, bufindex)) - len;

continue;

}

// we have records we can spill; only spill if blocked

if (kvindex != kvend) {

startSpill();

// Blocked on this write, waiting for the spill just

// initiated to finish. Instead of repositioning the marker

// and copying the partial record, we set the record start

// to be the new equator

setEquator(bufmark);

} else {

// We have no buffered records, and this record is too large

// to write into kvbuffer. We must spill it directly from

// collect

final int size = distanceTo(bufstart, bufindex) + len;

setEquator(0);

bufstart = bufend = bufindex = equator;

kvstart = kvend = kvindex;

bufvoid = kvbuffer.length;

throw new MapBufferTooSmallException(size + " bytes");

}

}

}

if (blockwrite) {

// wait for spill

try {

while (spillInProgress) {

reporter.progress();

spillDone.await();

}

} catch (InterruptedException e) {

throw new IOException(

"Buffer interrupted while waiting for the writer", e);

}

}

} while (blockwrite);

} finally {

spillLock.unlock();

}

}

// here, we know that we have sufficient space to write

if (bufindex + len > bufvoid) {

final int gaplen = bufvoid - bufindex;

System.arraycopy(b, off, kvbuffer, bufindex, gaplen);

len -= gaplen;

off += gaplen;

bufindex = 0;

}

System.arraycopy(b, off, kvbuffer, bufindex, len);

bufindex += len; // advance bufindex

}

}
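The last few lines of write() are what make the buffer circular: a write that would run past the end is split into two copies. Below is a minimal sketch of just that part, with all the spill and blocking logic left out. The class `RingWrite` is a hypothetical name, it uses the array length where the original uses bufvoid, and the `distanceTo` helper is not shown in the excerpts above, so its body here is an assumption that is consistent with how it is called.

```java
// Hypothetical demo class: only the wrap-around copy at the end of write().
final class RingWrite {
    final byte[] buf;
    int bufindex;

    RingWrite(int size, int start) {
        buf = new byte[size];
        bufindex = start;
    }

    /** Copies len bytes into the ring, wrapping to index 0 when the end is reached. */
    void write(byte[] b, int off, int len) {
        if (bufindex + len > buf.length) {
            int gaplen = buf.length - bufindex;              // room left before the end
            System.arraycopy(b, off, buf, bufindex, gaplen); // first part fills the tail
            len -= gaplen;
            off += gaplen;
            bufindex = 0;                                    // continue at the start
        }
        System.arraycopy(b, off, buf, bufindex, len);
        bufindex += len;                                     // this is how bufindex moves
    }

    /** Assumed definition: distance from i forward to j on a ring of size mod. */
    static int distanceTo(int i, int j, int mod) {
        return i <= j ? j - i : mod - i + j;
    }

    public static void main(String[] args) {
        RingWrite ring = new RingWrite(8, 6);
        ring.write(new byte[] {1, 2, 3, 4}, 0, 4);
        System.out.println(java.util.Arrays.toString(ring.buf) + " bufindex=" + ring.bufindex);
    }
}
```

This also answers the question raised in collect(): serializing a key or value ends up in write(), and write() is where bufindex is advanced.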
