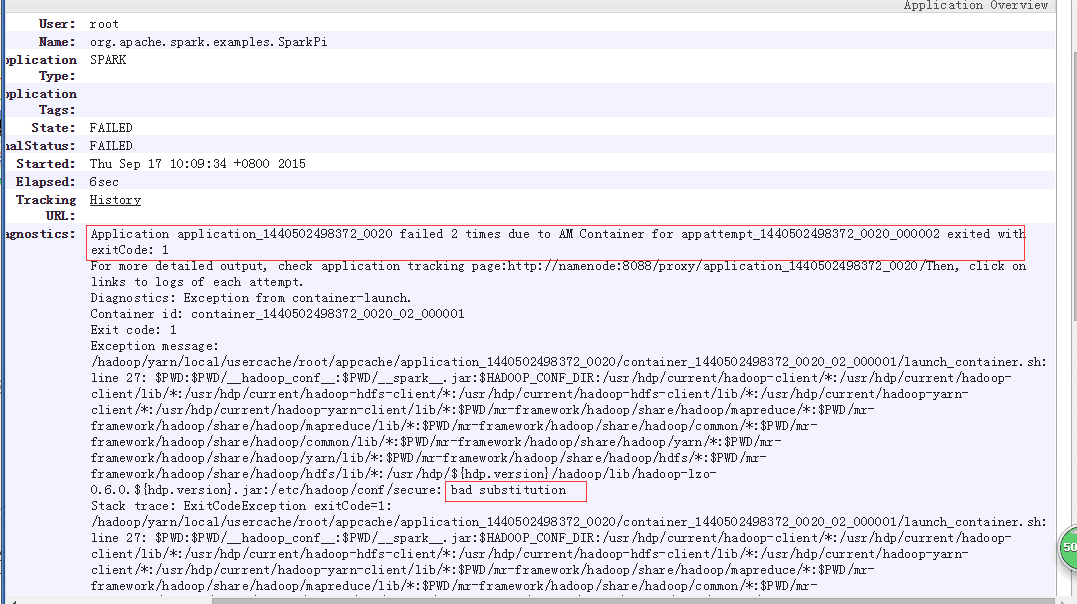

error : bad substitution

/hadoop/yarn/local/usercache/root/appcache/application_1440502498372_0012/container_1440502498372_0012_02_000001/launch_container.sh: line 27: $PWD:$PWD/__hadoop_conf__:$PWD/__spark__.jar:$HADOOP_CONF_DIR:/usr/hdp/current/hadoop-client/*:/usr/hdp/current/hadoop-client/lib/*:/usr/hdp/current/hadoop-hdfs-client/*:/usr/hdp/current/hadoop-hdfs-client/lib/*:/usr/hdp/current/hadoop-yarn-client/*:/usr/hdp/current/hadoop-yarn-client/lib/*:$PWD/mr-framework/hadoop/share/hadoop/mapreduce/*:$PWD/mr-framework/hadoop/share/hadoop/mapreduce/lib/*:$PWD/mr-framework/hadoop/share/hadoop/common/*:$PWD/mr-framework/hadoop/share/hadoop/common/lib/*:$PWD/mr-framework/hadoop/share/hadoop/yarn/*:$PWD/mr-framework/hadoop/share/hadoop/yarn/lib/*:$PWD/mr-framework/hadoop/share/hadoop/hdfs/*:$PWD/mr-framework/hadoop/share/hadoop/hdfs/lib/*:/usr/hdp/${hdp.version}/hadoop/lib/hadoop-lzo-0.6.0.${hdp.version}.jar:/etc/hadoop/conf/secure: bad substitution

Application application_ failed 2 times due to AM Container for appattempt_ exited with exitCode: 1
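The "bad substitution" text comes from the shell that runs launch_container.sh, not from Spark itself: the hdp.version placeholder in the classpath above was never filled in, and a shell variable name cannot contain a dot. A minimal reproduction, assuming bash is installed; the variable names `status` and `out` are only for this illustration:

```shell
# Illustrative reproduction, not taken from the real launch_container.sh.
# Variable names may contain only letters, digits and underscores,
# so bash refuses to expand ${hdp.version} and aborts the command.
status=0
out=$(bash -c 'echo "/usr/hdp/${hdp.version}/hadoop/lib"' 2>&1) || status=$?

echo "exit status: $status"
echo "$out"
```

The command fails with a non-zero exit status and a message containing "bad substitution", which is why the fix is to give hdp.version a concrete value before the script is generated.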

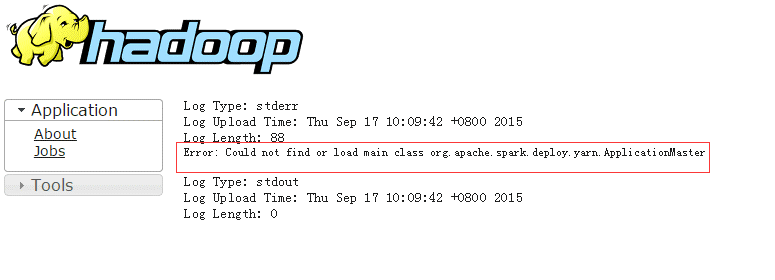


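The big-data stack in this article was installed with Ambari. Spark was installed separately afterwards, and that is when the problem above appeared.

Once you have found the cause of a problem, it is more than half solved.

This one cost me a whole afternoon, most of it spent locating the error. Until the error is located, the right fix is naturally hard to find.

Solution:

1) Add the following two lines to spark/conf/spark-defaults.conf: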

spark.driver.extraJavaOptions -Dhdp.version=2.2.6.0-2800

spark.yarn.am.extraJavaOptions -Dhdp.version=2.2.6.0-2800

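2) In /etc/hadoop/conf/mapred-site.xml, replace every ${hdp.version} with 2.2.6.0-2800 (the HDP version actually installed on the server). In vim, for example (the trailing g replaces every occurrence on a line, not just the first):

:1,$s/${hdp.version}/2.2.6.0-2800/g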
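Both steps can also be scripted instead of edited by hand. The following is only a sketch under assumptions: the function names, the `hdp_version` variable, and the sample XML line (modeled on the classpath entry in the error message) are illustrative, and the demo runs on temporary files rather than the live configuration.

```shell
# Sketch of both steps on scratch files; names and sample contents are illustrative.
hdp_version="2.2.6.0-2800"   # assumption: use the version installed on your server

# Step 1: append the two properties unless the key is already present.
add_hdp_version_opts() {
  conf_file=$1
  for key in spark.driver.extraJavaOptions spark.yarn.am.extraJavaOptions; do
    if ! grep -q "^${key}[[:space:]]" "$conf_file" 2>/dev/null; then
      printf '%s -Dhdp.version=%s\n' "$key" "$hdp_version" >> "$conf_file"
    fi
  done
}

# Step 2: replace every literal ${hdp.version}, keeping a .bak copy of the input.
replace_hdp_placeholder() {
  site_file=$1
  cp "$site_file" "$site_file.bak"
  sed 's/\${hdp\.version}/'"$hdp_version"'/g' "$site_file.bak" > "$site_file"
}

# Demo on temporary files rather than the live configuration.
demo_conf=$(mktemp)
demo_site=$(mktemp)
cat > "$demo_site" <<'EOF'
<value>/usr/hdp/${hdp.version}/hadoop/lib/hadoop-lzo-0.6.0.${hdp.version}.jar</value>
EOF
add_hdp_version_opts "$demo_conf"
replace_hdp_placeholder "$demo_site"
cat "$demo_conf" "$demo_site"
```

If one of the two Spark keys is already defined with other options, the first function leaves it untouched, so the -Dhdp.version flag would have to be merged into that line by hand. Once the output looks right, the same functions can be pointed at the real files.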

Reference: http://zh.hortonworks.com/community/forums/topic/bad-substitution-error-with-spark-on-hdp-2-2/
