Job description:

A Spark job submitted in YARN cluster mode fails with an error in launch_container.sh.

Exception details:

Spark error: `__spark_conf__/__hadoop_conf__: bad substitution`

19/09/11 08:34:42 ERROR SparkContext: Error initializing SparkContext.

org.apache.spark.SparkException: Yarn application has already ended! It might have been killed or unable to launch application master.

at org.apache.spark.scheduler.cluster.YarnClientSchedulerBackend.waitForApplication(YarnClientSchedulerBackend.scala:89)

.....

19/09/11 08:34:42 WARN YarnSchedulerBackend$YarnSchedulerEndpoint: Attempted to request executors before the AM has registered!

19/09/11 08:34:42 WARN MetricsSystem: Stopping a MetricsSystem that is not running

org.apache.spark.SparkException: Yarn application has already ended! It might have been killed or unable to launch application master.

at org.apache.spark.scheduler.cluster.YarnClientSchedulerBackend.waitForApplication(YarnClientSchedulerBackend.scala:89)

YARN logs:

Log Type: prelaunch.out

Log Upload Time: Wed Sep 11 08:34:41 +0800 2019

Log Length: 25

Setting up env variables

Log Type: prelaunch.err

Log Upload Time: Wed Sep 11 08:34:41 +0800 2019

Log Length: 1100

/hadoop/yarn/local/usercache/root/appcache/application_1568108384753_0002/container_e04_1568108384753_0002_02_000001/launch_container.sh: line 29: $PWD:$PWD/__spark_conf__:$PWD/__spark_libs__/*:/usr/hdp/2.6.5.0-292/hadoop/conf:/usr/hdp/2.6.5.0-292/hadoop/*:/usr/hdp/2.6.5.0-292/hadoop/lib/*:/usr/hdp/current/hadoop-hdfs-client/*:/usr/hdp/current/hadoop-hdfs-client/lib/*:/usr/hdp/current/hadoop-yarn-client/*:/usr/hdp/current/hadoop-yarn-client/lib/*:/usr/hdp/current/ext/hadoop/*:$PWD/mr-framework/hadoop/share/hadoop/mapreduce/*:$PWD/mr-framework/hadoop/share/hadoop/mapreduce/lib/*:$PWD/mr-framework/hadoop/share/hadoop/common/*:$PWD/mr-framework/hadoop/share/hadoop/common/lib/*:$PWD/mr-framework/hadoop/share/hadoop/yarn/*:$PWD/mr-framework/hadoop/share/hadoop/yarn/lib/*:$PWD/mr-framework/hadoop/share/hadoop/hdfs/*:$PWD/mr-framework/hadoop/share/hadoop/hdfs/lib/*:$PWD/mr-framework/hadoop/share/hadoop/tools/lib/*:/usr/hdp/${hdp.version}/hadoop/lib/hadoop-lzo-0.6.0.${hdp.version}.jar:/etc/hadoop/conf/secure:/usr/hdp/current/ext/hadoop/*:$PWD/__spark_conf__/__hadoop_conf__: bad substitution

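Root cause analysis:

The variable ${hdp.version} cannot be resolved. It reaches launch_container.sh as literal text, and bash then tries to expand it as a shell parameter. Because a dot is not allowed in a shell variable name, bash aborts with "bad substitution".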

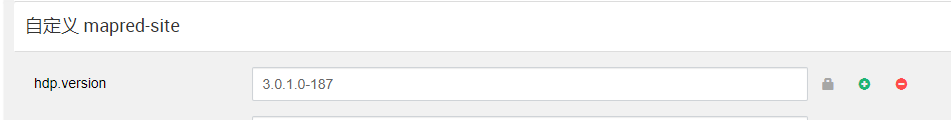
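To see which placeholders in a classpath line bash would reject, here is a minimal Python sketch. It is an illustration only: the function name `find_bad_substitutions` is hypothetical, the classpath string is a shortened excerpt of the one in prelaunch.err, and the check deliberately ignores bash modifiers such as `${VAR:-default}`.

```python
import re

# Matches ${...} placeholders; the captured group is the text between the braces.
PLACEHOLDER = re.compile(r"\$\{([^}]*)\}")
# A plain shell variable name: letters, digits and underscores, not starting with a digit.
VALID_NAME = re.compile(r"[A-Za-z_][A-Za-z0-9_]*\Z")


def find_bad_substitutions(line):
    """Return the placeholder names in `line` that are not plain shell variable names.

    Simplification: expansions with modifiers (for example ${VAR:-default})
    are also reported, although bash accepts them.
    """
    return [name for name in PLACEHOLDER.findall(line) if not VALID_NAME.match(name)]


# Shortened excerpt of the CLASSPATH value from prelaunch.err.
classpath = (
    "$PWD:$PWD/__spark_conf__:"
    "/usr/hdp/${hdp.version}/hadoop/lib/hadoop-lzo-0.6.0.${hdp.version}.jar:"
    "/etc/hadoop/conf/secure"
)

print(find_bad_substitutions(classpath))  # prints ['hdp.version', 'hdp.version']
```

Only the two `${hdp.version}` occurrences are reported. `$PWD` is left alone because it uses the unbraced form and is a valid name.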

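Solution (recommended):

Most fixes found online add spark.driver.extraJavaOptions -Dhdp.version=XXX and spark.yarn.am.extraJavaOptions -Dhdp.version=XXX to supply the missing hdp.version, but that still does not cover the --deploy-mode cluster case. Looking closely at the error, it is the mr… group of paths and the like that references ${hdp.version}. I checked mapred-site, the MapReduce2 configuration file, and it does reference this variable. So all we need to do is define that variable there.

It really is that simple: adding one variable to mapred-site is enough. That resolves all of the bad substitution errors, with no other changes required.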
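As a rough way to check the fix, the Python sketch below parses a mapred-site style XML document and lists every `${...}` reference that no property in the same file defines. Several things here are assumptions rather than facts from the post: the property name `mapreduce.application.classpath`, the use of a property literally named `hdp.version`, the value `2.6.5.0-292` (taken from the paths in the log), and both helper function names. As far as I know, Hadoop can also resolve such references from JVM system properties, which this sketch does not model.

```python
import re
import xml.etree.ElementTree as ET

# Illustrative mapred-site content; property names and values are assumptions.
SAMPLE = """<configuration>
  <property>
    <name>mapreduce.application.classpath</name>
    <value>/usr/hdp/${hdp.version}/hadoop/lib/hadoop-lzo-0.6.0.${hdp.version}.jar</value>
  </property>
  <property>
    <name>hdp.version</name>
    <value>2.6.5.0-292</value>
  </property>
</configuration>"""


def load_properties(xml_text):
    """Parse Hadoop-style <property> entries into a name -> value dict."""
    root = ET.fromstring(xml_text)
    return {
        prop.findtext("name"): prop.findtext("value") or ""
        for prop in root.findall("property")
    }


def unresolved_references(props):
    """Map each property name to the ${...} names it uses that no property defines."""
    missing = {}
    for name, value in props.items():
        refs = [ref for ref in re.findall(r"\$\{([^}]+)\}", value) if ref not in props]
        if refs:
            missing[name] = refs
    return missing


props = load_properties(SAMPLE)
print(unresolved_references(props))  # prints {} because hdp.version is defined

# Drop the hdp.version property to reproduce the broken configuration.
broken = {k: v for k, v in props.items() if k != "hdp.version"}
print(unresolved_references(broken))
```

With the `hdp.version` property present, nothing is reported. Without it, the classpath property shows up with two dangling `hdp.version` references, which matches the two occurrences in the failing launch_container.sh line.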
