Error after adding the phoenix-core dependency

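The jars bundled with the Flink cluster conflict with those that phoenix-core brings in, so the job fails at runtime with an error saying the newStub method cannot be found.

The error message is as follows: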

java.sql.SQLException: ERROR 2006 (INT08): Incompatible jars detected between client and server. Ensure that phoenix.jar is put on the classpath of HBase in every region server: Can't find method newStub in org.apache.phoenix.coprocessor.generated.MetaDataProtos$MetaDataService!

at org.apache.phoenix.exception.SQLExceptionCode$Factory$1.newException(SQLExceptionCode.java:464)

at org.apache.phoenix.exception.SQLExceptionInfo.buildException(SQLExceptionInfo.java:150)

at org.apache.phoenix.query.ConnectionQueryServicesImpl.checkClientServerCompatibility(ConnectionQueryServicesImpl.java:1228)

at org.apache.phoenix.query.ConnectionQueryServicesImpl.access$400(ConnectionQueryServicesImpl.java:228)

at org.apache.phoenix.query.ConnectionQueryServicesImpl$13.call(ConnectionQueryServicesImpl.java:2384)

at org.apache.phoenix.query.ConnectionQueryServicesImpl$13.call(ConnectionQueryServicesImpl.java:2352)

at org.apache.phoenix.util.PhoenixContextExecutor.call(PhoenixContextExecutor.java:76)

at org.apache.phoenix.query.ConnectionQueryServicesImpl.init(ConnectionQueryServicesImpl.java:2352)

at org.apache.phoenix.jdbc.PhoenixDriver.getConnectionQueryServices(PhoenixDriver.java:232)

at org.apache.phoenix.jdbc.PhoenixEmbeddedDriver.createConnection(PhoenixEmbeddedDriver.java:147)

at org.apache.phoenix.jdbc.PhoenixDriver.connect(PhoenixDriver.java:202)

at java.sql.DriverManager.getConnection(DriverManager.java:664)

at java.sql.DriverManager.getConnection(DriverManager.java:270)

at cn.com.bluemoon.bd.flink.dws.moonr.cleaner.schedule.web.function.PhoenixSinkFunction2.open(PhoenixSinkFunction2.java:31)

at org.apache.flink.api.common.functions.util.FunctionUtils.openFunction(FunctionUtils.java:34)

at org.apache.flink.streaming.api.operators.AbstractUdfStreamOperator.open(AbstractUdfStreamOperator.java:102)

at org.apache.flink.streaming.api.operators.StreamSink.open(StreamSink.java:46)

at org.apache.flink.streaming.runtime.tasks.OperatorChain.initializeStateAndOpenOperators(OperatorChain.java:442)

at org.apache.flink.streaming.runtime.tasks.StreamTask.restoreGates(StreamTask.java:582)

at org.apache.flink.streaming.runtime.tasks.StreamTaskActionExecutor$1.call(StreamTaskActionExecutor.java:55)

at org.apache.flink.streaming.runtime.tasks.StreamTask.executeRestore(StreamTask.java:562)

at org.apache.flink.streaming.runtime.tasks.StreamTask.runWithCleanUpOnFail(StreamTask.java:647)

at org.apache.flink.streaming.runtime.tasks.StreamTask.restore(StreamTask.java:537)

at org.apache.flink.runtime.taskmanager.Task.doRun(Task.java:759)

at org.apache.flink.runtime.taskmanager.Task.run(Task.java:566)

at java.lang.Thread.run(Thread.java:748)

Caused by: java.lang.IllegalArgumentException: Can't find method newStub in org.apache.phoenix.coprocessor.generated.MetaDataProtos$MetaDataService!

at org.apache.hadoop.hbase.util.Methods.call(Methods.java:45)

at org.apache.hadoop.hbase.protobuf.ProtobufUtil.newServiceStub(ProtobufUtil.java:1672)

at org.apache.hadoop.hbase.client.HTable$15.call(HTable.java:1731)

at java.util.concurrent.FutureTask.run(FutureTask.java:266)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)

... 1 more

Caused by: java.lang.NoSuchMethodException: org.apache.phoenix.coprocessor.generated.MetaDataProtos$MetaDataService.newStub(com.google.protobuf.RpcChannel)

at java.lang.Class.getMethod(Class.java:1786)

at org.apache.hadoop.hbase.util.Methods.call(Methods.java:38)

... 6 more

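Next, work out which transitive dependency the missing method comes from, and check whether phoenix-core pulls in that dependency. If the job runs normally once it is excluded, the problem is solved.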

<!--hbase: Version 1.2.0-cdh5.16.2-->

<dependency>

<groupId>org.apache.phoenix</groupId>

<artifactId>phoenix-core</artifactId>

<version>4.13.2-cdh5.11.2</version>

<exclusions>

<!-- newStub method not found -->

<exclusion>

<groupId>com.google.protobuf</groupId>

<artifactId>protobuf-java</artifactId>

</exclusion>

<!-- A separate error: LinkedMap cannot be cast, so this transitive dependency has to be excluded as well -->

<exclusion>

<groupId>commons-collections</groupId>

<artifactId>commons-collections</artifactId>

</exclusion>

</exclusions>

</dependency>
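To decide which transitive dependency to exclude, it helps to see which jar a class from the stack trace is actually loaded from at runtime. The following is a minimal sketch using only the JDK; the class name JarLocator and its locate method are hypothetical helpers, not part of Phoenix, HBase or Flink, and the class names in main are simply the ones mentioned in this article.

```java
import java.security.CodeSource;

final class JarLocator {
    /** Returns the jar (or directory) a class was loaded from, or a short note if that is unknown. */
    static String locate(String className) {
        ClassLoader loader = Thread.currentThread().getContextClassLoader();
        if (loader == null) {
            loader = JarLocator.class.getClassLoader();
        }
        try {
            // initialize=false: load the class without running its static initializers
            Class<?> clazz = Class.forName(className, false, loader);
            CodeSource source = clazz.getProtectionDomain().getCodeSource();
            // JDK classes loaded by the bootstrap class loader have no code source
            return source == null ? "<JDK / bootstrap class loader>" : source.getLocation().toString();
        } catch (ClassNotFoundException | LinkageError e) {
            return "<not on classpath: " + e + ">";
        }
    }

    public static void main(String[] args) {
        // Class names taken from the errors discussed in this article (illustrative only)
        String[] names = {
            "org.apache.phoenix.coprocessor.generated.MetaDataProtos$MetaDataService",
            "com.google.protobuf.RpcChannel",
            "org.apache.commons.collections.map.LinkedMap"
        };
        for (String name : names) {
            System.out.println(name + " -> " + locate(name));
        }
    }
}
```

Calling locate from inside the sink's open method (temporarily, for debugging) would report the jars as the Flink task sees them, which can differ from what the IDE shows locally.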

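If it still fails after excluding the transitive dependency, check whether you need to add a newer version of that dependency explicitly. The dependencies involved will not necessarily be the ones shown in this article; you need to trace the method-not-found or class-cast error back to the dependency it comes from.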

<!--hbase: Version 2.1.0-cdh6.3.2-->

<dependency>

<groupId>org.apache.phoenix</groupId>

<artifactId>phoenix-core</artifactId>

<version>5.0.0-HBase-2.0</version>

<exclusions>

<exclusion>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-common</artifactId>

</exclusion>

<exclusion>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-auth</artifactId>

</exclusion>

</exclusions>

</dependency>

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-common</artifactId>

<version>3.0.0</version>

</dependency>
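The NoSuchMethodException in the stack trace comes from a reflective lookup of newStub with a com.google.protobuf.RpcChannel parameter. One way to see what the loaded class really offers is to list its public methods of that name together with their parameter types. This is a rough sketch using only JDK reflection; MethodProbe and describe are hypothetical names, and whether the output reveals the mismatch in a given cluster is an assumption to verify.

```java
import java.lang.reflect.Method;
import java.util.ArrayList;
import java.util.List;

final class MethodProbe {
    /** Lists every public method named methodName on className, with its parameter type names. */
    static List<String> describe(String className, String methodName) {
        List<String> found = new ArrayList<>();
        ClassLoader loader = Thread.currentThread().getContextClassLoader();
        if (loader == null) {
            loader = MethodProbe.class.getClassLoader();
        }
        try {
            Class<?> clazz = Class.forName(className, false, loader);
            for (Method m : clazz.getMethods()) {
                if (!m.getName().equals(methodName)) {
                    continue;
                }
                StringBuilder sb = new StringBuilder(methodName).append('(');
                Class<?>[] params = m.getParameterTypes();
                for (int i = 0; i < params.length; i++) {
                    if (i > 0) {
                        sb.append(", ");
                    }
                    sb.append(params[i].getName());
                }
                found.add(sb.append(')').toString());
            }
        } catch (ClassNotFoundException | LinkageError e) {
            found.add("<cannot load " + className + ": " + e + ">");
        }
        return found;
    }

    public static void main(String[] args) {
        // Class and method names taken from the stack trace in this article
        System.out.println(describe(
            "org.apache.phoenix.coprocessor.generated.MetaDataProtos$MetaDataService", "newStub"));
    }
}
```

If the list is empty, or the parameter type printed is not com.google.protobuf.RpcChannel, the Phoenix classes on the classpath were probably built against a different protobuf than the one the HBase client is looking for.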

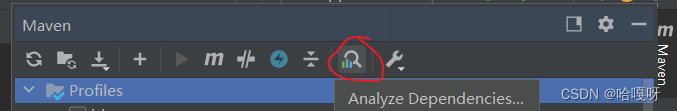

It may also be worth checking the version of the guava artifact (com.google.guava) that phoenix-core pulls in; IDEA's tooling can help identify which packages conflict.
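Outside the IDE, a similar check can be approximated at runtime by asking the class loader for every copy of a given class file. More than one result usually means two jars ship the same class. The sketch below relies only on the JDK's ClassLoader.getResources; DuplicateClassFinder and copiesOf are hypothetical names, and the guava and protobuf class names in main are just examples.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.net.URL;
import java.util.ArrayList;
import java.util.Enumeration;
import java.util.List;

final class DuplicateClassFinder {
    /** Returns the location of every copy of the given class file visible to the class loader. */
    static List<URL> copiesOf(String className) {
        String resource = className.replace('.', '/') + ".class";
        ClassLoader loader = Thread.currentThread().getContextClassLoader();
        if (loader == null) {
            loader = ClassLoader.getSystemClassLoader();
        }
        List<URL> urls = new ArrayList<>();
        try {
            Enumeration<URL> found = loader.getResources(resource);
            while (found.hasMoreElements()) {
                urls.add(found.nextElement());
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return urls;
    }

    public static void main(String[] args) {
        // Example class names only; substitute the class named in your own error
        String[] names = {"com.google.common.collect.ImmutableList", "com.google.protobuf.RpcChannel"};
        for (String name : names) {
            List<URL> copies = copiesOf(name);
            System.out.println(name + ": " + copies.size() + " copies");
            for (URL url : copies) {
                System.out.println("  " + url);
            }
        }
    }
}
```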
