C/C++ FFmpeg audio/video development: screen recording and camera

These are the development notes I wrote while learning. Please be kind if they are not to your taste, and thanks to everyone.

Importing the FFmpeg libraries: the FFmpeg SDK can be downloaded from the official website.

mp4_to_mov

extern "C"

{

#include <libavformat/avformat.h>

}

#include <iostream>

using namespace std;

#pragma comment(lib,"avformat.lib")

#pragma comment(lib,"avcodec.lib")

#pragma comment(lib,"avutil.lib")

int main()

{

char infile[] = "test.mp4";

char outfile[] = "test.mov";

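//Register all muxers and demuxers; container formats such as avi, mp4 and wmv

//can only be recognized after registration (av_register_all is deprecated since FFmpeg 4.0, which no longer needs it)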

av_register_all();

//1,open input file

//Open the input container (demuxing context)

AVFormatContext* ic = NULL;

avformat_open_input(&ic, infile, 0, 0);

if (!ic)

{

cout << "avformat_open_input failed! error" << endl;

getchar();

return -1;

}

cout << "open " << infile << " success!" << endl;

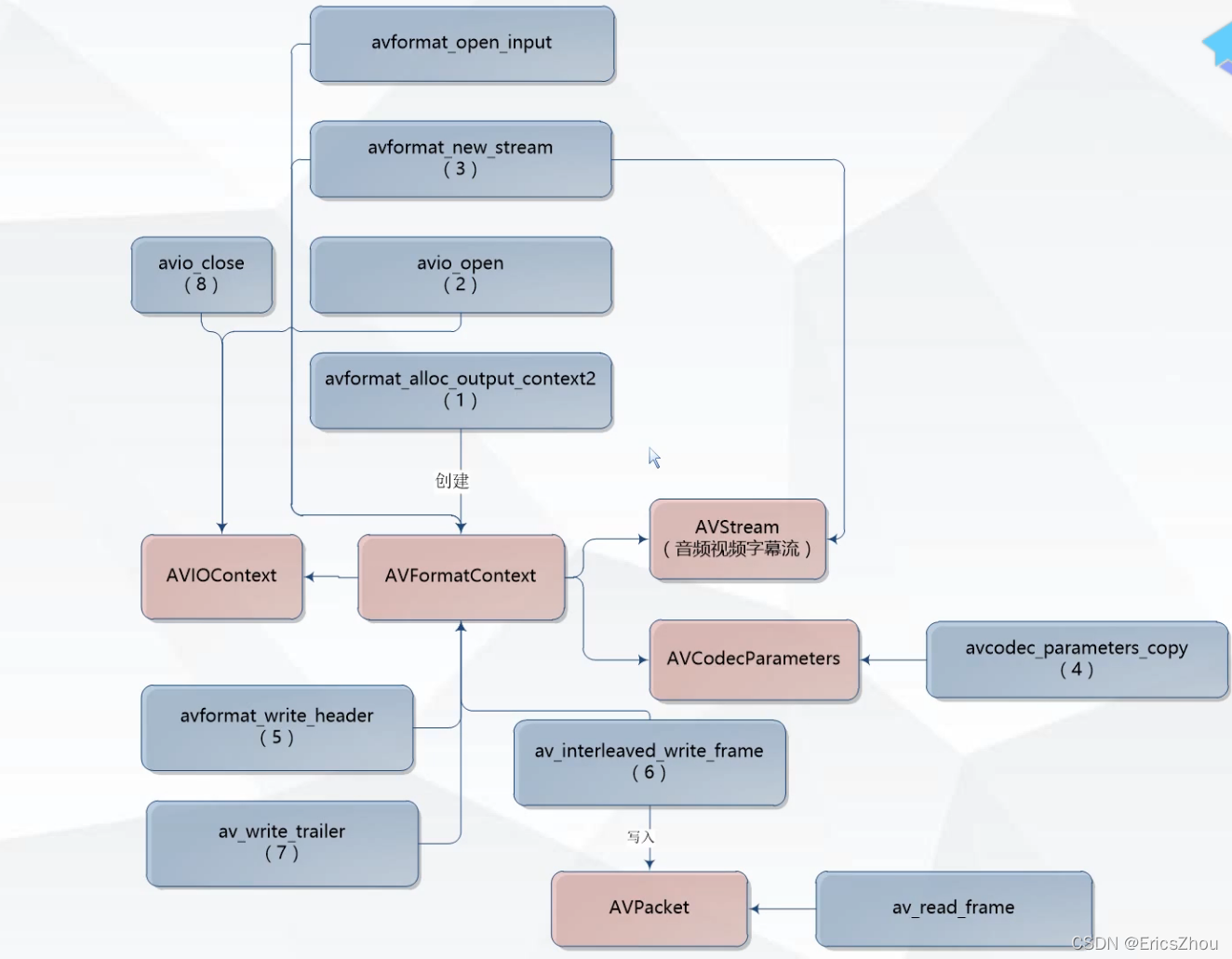

//2,create output context

AVFormatContext* oc = NULL;

avformat_alloc_output_context2(&oc, NULL, NULL, outfile);

if (!oc)

{

cerr << "avformat_alloc_output_context2 failed" << outfile << endl;

getchar();

return -1;

}

//3,add to stream

AVStream *videoStream = avformat_new_stream(oc, NULL);

AVStream* audioStream = avformat_new_stream(oc, NULL);

avcodec_parameters_copy(videoStream->codecpar, ic->streams[0]->codecpar);

avcodec_parameters_copy(audioStream->codecpar, ic->streams[1]->codecpar);

videoStream->codecpar->codec_tag = 0;

audioStream->codecpar->codec_tag = 0;

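//Print the format information. Parameters of av_dump_format: 1, the format context; 2, the stream index (0 is fine here); 3, the url; 4, whether the context is used for output (1) or input (0).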

av_dump_format(ic, 0, infile, 0);

cout << "=============================================" << endl;

av_dump_format(oc, 0, outfile, 1);

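//5,open the output file io, write the header

//avio_open parameters: 1, receives the opened io handle, which is stored in oc's member pb (plain file input/output, possibly network io); 2, the file path; 3, the open flags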

int ret = avio_open(&oc->pb, outfile, AVIO_FLAG_WRITE);

if (ret < 0)

{

cerr << "avio open failed!" << endl;

getchar();

return -1;

}

//Write the header. Parameters: 1, the output context; 2, options

ret = avformat_write_header(oc, NULL);

if (ret < 0)

{

cerr << "avformat_write_header failed!" << endl;

getchar();

return -1;

}

AVPacket pkt;

for (;;)

{

//av_read_frame parameters: 1, the opened input context; 2, the packet that receives the data

int re = av_read_frame(ic, &pkt);

//Stop once the whole file has been read

if (re < 0)

break;

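//pts is the presentation timestamp, dts is the decoding timestamp

//avformat_write_header may change the time base of the output streams; if the timestamps stored in pkt do not match that time base, writing fails and the program exits

//The pkt read from the input must therefore be converted to the output stream's time_base. A time_base holds two values, e.g. num=1, den=12800, meaning one second is divided into 12800 ticks

//av_rescale_q_rnd performs the conversion: parameter 1 is the source value, parameter 2 the source time_base, parameter 3 the destination time_base. We do not know whether the packet is video or audio, so the stream index selects the stream

//Here stream 0 is assumed to be video and stream 1 audio; otherwise a check must be added. Parameter 4 is the rounding method, cast to AVRounding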

pkt.pts = av_rescale_q_rnd(pkt.pts, ic->streams[pkt.stream_index]->time_base,

oc->streams[pkt.stream_index]->time_base,(AVRounding)(AV_ROUND_INF | AV_ROUND_PASS_MINMAX));

pkt.dts = av_rescale_q_rnd(pkt.dts, ic->streams[pkt.stream_index]->time_base,

oc->streams[pkt.stream_index]->time_base, (AVRounding)(AV_ROUND_INF | AV_ROUND_PASS_MINMAX));

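//pos is the byte position in the file; it changes when we write, so set it to -1 and let the muxer recompute it

pkt.pos = -1;

//duration is how long this packet lasts; pts plus duration gives the next pts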

pkt.duration= av_rescale_q_rnd(pkt.duration, ic->streams[pkt.stream_index]->time_base,

oc->streams[pkt.stream_index]->time_base, (AVRounding)(AV_ROUND_INF | AV_ROUND_PASS_MINMAX));

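//Write the audio/video packet

av_write_frame(oc, &pkt);

//The packet size changes from frame to frame, so pkt keeps requesting new buffers; the previous buffer must be released manually or memory leaks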

av_packet_unref(&pkt);

cout << ".";

}

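//Write the trailer. Why is there a trailer? Seeking to any position in a video relies on an index that stores the relative position of every frame; without this call the video duration would be zero

//When the header is written the index is not yet known; it is built as the frames are written

av_write_trailer(oc);

avio_close(oc->pb);

//Free the output context and close the input

avformat_free_context(oc);

avformat_close_input(&ic);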

cout << "==============endl==================" << endl;

getchar();

return 0;

}

rgb_to_mp4

extern "C"

{

#include <libavformat/avformat.h>

#include <libswscale/swscale.h>

}

#include <iostream>

using namespace std;

#pragma comment(lib,"avformat.lib")

#pragma comment(lib,"avcodec.lib")

#pragma comment(lib,"avutil.lib")

#pragma comment(lib,"swscale.lib")

#pragma warning (disable : 4996)

int main()

{

char infile[] = "out.rgb";

char outfile[] = "rgb.mp4";

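//Register all muxers and demuxers; container formats such as avi, mp4 and wmv

//can only be recognized after registration

av_register_all();

//Register all encoders and decoders

avcodec_register_all();

//Open the input file. fopen parameters: 1, the input file; 2, the mode: r means read-only, b means binary, which is required here or reading goes wrong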

FILE* fp = fopen(infile, "rb");

if (!fp)

{

cout << infile << " open file failed" << endl;

getchar();

return -1;

}

//Width and height; for raw rgb data these must be known in advance

int width = 1920;

int height = 1080;

//Frame rate used for encoding

int fps = 25;

//create codec (find the encoder)

AVCodec *codec = avcodec_find_encoder(AV_CODEC_ID_H264);

if (!codec)

{

cout << "avcodec_find_encoder AV_CODEC_ID_H264 failed" << endl;

getchar();

return -1;

}

//create codec context (the encoder context)

AVCodecContext *c = avcodec_alloc_context3(codec);

if (!c)

{

cout << "avcodec_alloc_context3 failed" << endl;

getchar();

return -1;

}

//Bit rate: how many bits of compressed video are produced per second (this value is 400 Mbit/s)

c->bit_rate = 400000000;

c->width = width;

c->height = height;

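//Time base used by the encoder

c->time_base = { 1,fps };

c->framerate = { fps,1 };

//Group of pictures size (distance between key frames)

c->gop_size = 50;

//More B-frames give better compression; 0 disables them

c->max_b_frames = 0;

//Pixel format

c->pix_fmt = AV_PIX_FMT_YUV420P;

c->codec_id = AV_CODEC_ID_H264;

c->thread_count = 8;

//Put the codec headers in global extradata instead of in every key frame

c->flags |= AV_CODEC_FLAG_GLOBAL_HEADER;

//Open the encoder. Parameters: 1, the encoder context; 2, the encoder; 3, options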

int ret = avcodec_open2(c, codec, NULL);

if (ret < 0)

{

cerr << "avcodec_open2 failed" << endl;

getchar();

return -1;

}

cout << "open the success !" << endl;

//2,create output context

AVFormatContext* oc = NULL;

avformat_alloc_output_context2(&oc, 0, 0, outfile);

//3,add video stream

AVStream *st = avformat_new_stream(oc, NULL);

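//Encoder

//st->codec = c;

st->id = 0; //0 is also the default

//codecpar is where the stream parameters are stored; set codec_tag to zero here

st->codecpar->codec_tag = 0;

//Can a reader of the file know how the data was encoded? Not by itself, so it must be recorded in the container: which codec was used, the size of the original video, and so on

//codecpar therefore has to be filled in, and most of it matches the encoder context c configured above; FFmpeg provides a function that copies it directly from the encoder context

avcodec_parameters_from_context(st->codecpar, c);

//Print the format information. Parameters of av_dump_format: 1, the output format context; 2, the stream index (0 is fine here); 3, the url; 4, whether the context is used for output (1) or input (0).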

cout << "========================================================" << endl;

av_dump_format(oc, 0, outfile, 1);

cout << "============================end============================" << endl;

//4,rgb to yuv

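//The converter handles pixel format and size conversion

SwsContext* ctx = NULL;

//Why is ctx both passed in and returned? If sws_getCachedContext is called repeatedly with the same ctx pointer and the context already exists with the same parameters, the existing one is returned; if the parameters differ from

//those of the existing ctx, a new one is created. Parameters: 1, the context; 2, input width; 3, input height; 4, input format; 5, output width; 6, output height; 7, output format; 8, the scaling algorithm

//9 and 10, the source and destination filters; the last one is extra parameters for the scaler (NULL for defaults)

ctx = sws_getCachedContext(ctx,width,height,AV_PIX_FMT_BGRA,width,height, AV_PIX_FMT_YUV420P,SWS_BICUBIC,NULL,NULL,NULL);

//Start the conversion; first we need a buffer to read the video data into

//Input buffer: rgb holds one frame of video data; this is the space we prepare for it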

unsigned char* rgb = new unsigned char[width*height*4];

AVFrame* yuv = av_frame_alloc();

//Output buffer: set up the yuv frame

yuv->format = AV_PIX_FMT_YUV420P;

yuv->width = width;

yuv->height = height;

//av_frame_alloc only allocates the AVFrame object itself; the pixel buffers of yuv are not allocated yet. av_frame_get_buffer allocates them. Parameters: 1, the frame; 2, the alignment

ret = av_frame_get_buffer(yuv, 32);

if (ret < 0)

{

cerr << "av_frame_get_buffer failed" << endl;

getchar();

return -1;

}

//open oc

ret = avio_open(&oc->pb, outfile, AVIO_FLAG_WRITE);

if (ret < 0)

{

cerr << "avio_open failed" << endl;

getchar();

return -1;

}

//5,write mp4 head

ret = avformat_write_header(oc,NULL);

if (ret < 0)

{

cerr << "avformat_write_header failed" << endl;

getchar();

return -1;

}

int p = 0;

//Read the data frame by frame

for (;;)

{

int len = fread(rgb, 1, width * height * 4, fp);

if (len <= 0)break;

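//One frame has been read and must be converted from rgb to yuv. The output goes into the AVFrame yuv (AVFrame holds raw, uncompressed data); the encoded data is stored in an AVPacket

uint8_t* indata[AV_NUM_DATA_POINTERS] = { 0 };

indata[0] = rgb;

int inlinesize[AV_NUM_DATA_POINTERS] = { 0 };

inlinesize[0] = width * 4;

//Convert. Parameter 4 is the first row of the slice (0), parameter 5 is the slice height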

int h = sws_scale(ctx,indata,inlinesize,0,height,yuv->data,yuv->linesize);

if (h <= 0)

break;

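//6,encode frame

yuv->pts = p;

//3600 follows from st->time_base: assuming a time base of 1/90000 and 25 fps, one frame lasts 90000 / 25 = 3600 ticks

p += 3600;

//Send the frame to the encoder, which encodes it on its own threads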

ret = avcodec_send_frame(c, yuv);

if (ret != 0)

continue;

AVPacket pkt;

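//Initialize pkt

av_init_packet(&pkt);

//Receive the encoded packet

ret = avcodec_receive_packet(c, &pkt);

if (ret != 0)

continue;

//Write the video packet

/*av_write_frame(oc,&pkt);

av_packet_unref(&pkt);*/

//This improves on the version above: packets are written in dts (decoding) order, and the pkt buffer does not have to be released manually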

av_interleaved_write_frame(oc, &pkt);

cout << "<"<<pkt.size<<">";

}

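//Write the trailer (video index)

av_write_trailer(oc);

//Close the output io

avio_close(oc->pb);

//Free the output format context

avformat_free_context(oc);

//Close the encoder

avcodec_close(c);

//Free the encoder context

avcodec_free_context(&c);

//Free the pixel conversion context

sws_freeContext(ctx);

cout << "============================end=============================" << endl;

//rgb was allocated with new[], so it must be released with delete[]

delete[] rgb;

fclose(fp);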

getchar();

return 0;

}

pcm_to_aac

//extern "C"

//{

// #include <libavformat/avformat.h>

// #include <libswscale/swscale.h>

// #include <libswresample/swresample.h>

//}

//#include <iostream>

//using namespace std;

//

//#pragma comment(lib,"avformat.lib")

//#pragma comment(lib,"avcodec.lib")

//#pragma comment(lib,"avutil.lib")

//#pragma comment(lib,"swscale.lib")

//#pragma comment(lib,"swresample.lib")

//#pragma warning (disable : 4996)

//int main()

//{

// char infile[] = "out.pcm";

// char outfile[] = "out.aac";

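// //Register all muxers and demuxers; container formats such as avi, mp4 and wmv

// //can only be recognized after registration

// av_register_all();

//

// //Register all encoders and decoders

// avcodec_register_all();

//

// //1,open the audio encoder

// //Find the encoder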

// AVCodec* codec = avcodec_find_encoder(AV_CODEC_ID_AAC);

// if (!codec)

// {

// cout << "avcodec_find_encoder error" << endl;

// getchar();

// return -1;

// }

//

// //Create the encoder context

// AVCodecContext* c = avcodec_alloc_context3(codec);

// if (!c)

// {

// cout << "avcodec_alloc_context3 error" << endl;

// getchar();

// return -1;

// }

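// //Configure the parameters

// //Bit rate: the size of the compressed data per second

// c->bit_rate = 64000;

// //Sample rate: 44100, the CD rate

// c->sample_rate = 44100;

// //Sample format

// c->sample_fmt = AV_SAMPLE_FMT_FLTP;

// //Channel layout: AV_CH_LAYOUT_STEREO is stereo

// c->channel_layout = AV_CH_LAYOUT_STEREO;

// //Number of channels

// c->channels = 2;

//

// //With this flag all following audio frames share one global header

// c->flags |= AV_CODEC_FLAG_GLOBAL_HEADER;

//

// //Open the encoder. Parameters: 1, the encoder context; 2, the encoder; 3, options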

// int ret = avcodec_open2(c, codec, NULL);

// if (ret <0)

// {

// cout << "avcodec_open2 error" << endl;

// getchar();

// return -1;

// }

// cout << "avcodec_open2 success! " << endl;

//

// //2,create the muxer (output format) context

// AVFormatContext* oc = NULL;

// avformat_alloc_output_context2(&oc, NULL,NULL,outfile);

// if (!oc)

// {

// cout << "avformat_alloc_output_context2 error" << endl;

// getchar();

// return -1;

// }

//

// //Create the output stream

// AVStream* st = avformat_new_stream(oc, NULL);

// st->codecpar->codec_tag = 0;

// //This copies the configuration of the encoder context into the stream

// avcodec_parameters_from_context(st->codecpar, c);

//

// //Print the format information

// av_dump_format(oc, 0, outfile,1);

//

// //3 open io ,write head

// ret = avio_open(&oc->pb, outfile, AVIO_FLAG_WRITE);

// if (ret < 0)

// {

// cout << "avio_open error" << endl;

// getchar();

// return -1;

// }

//

// ret = avformat_write_header(oc, NULL);

//

// //44100 16 2

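// //4,create the audio resampling context

// SwrContext* actx = NULL;

// //Set the parameters

// //swr_alloc_set_opts parameters: 1, the resampling context; then the output channel layout, sample format and sample rate; then the input ones: AV_CH_LAYOUT_STEREO (stereo) channel layout, AV_SAMPLE_FMT_S16 sample format, 44100 sample rate; the last two are the logging offset and logging context

// actx = swr_alloc_set_opts(actx, c->channel_layout, c->sample_fmt,c->sample_rate, //output format

// AV_CH_LAYOUT_STEREO, AV_SAMPLE_FMT_S16, 44100, 0, 0 //input format

// );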

// if (!actx)

// {

// cout << "swr_alloc_set_opts error" << endl;

// getchar();

// return -1;

// }

// //Initialize

// ret = swr_init(actx);

// if (ret < 0)

// {

// cout << "swr_init error" << endl;

// getchar();

// return -1;

// }

//

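// //5,open the input audio file and resample

// //frame holds uncompressed data; allocate it with av_frame_alloc

// AVFrame* frame = av_frame_alloc();

// //Sample format

// frame->format = AV_SAMPLE_FMT_FLTP;

// //Number of channels

// frame->channels = 2;

// //Channel layout

// frame->channel_layout = AV_CH_LAYOUT_STEREO;

// //Number of samples (per channel) stored in one audio frame

// frame->nb_samples = 1024;

// //Allocate the buffers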

// ret = av_frame_get_buffer(frame, 0);

// if (ret < 0)

// {

// cout << "av_frame_get_buffer error" << endl;

// getchar();

// return -1;

// }

//

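// //How much to read? Number of samples * sample size: samples are 16-bit, hence * 2; the second * 2 is because nb_samples counts samples per channel and there are two channels

// int readSize = frame->nb_samples * 2 * 2;

// //Allocate the read buffer

// char* pcm = new char[readSize];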

//

// FILE* fp = fopen(infile, "rb");

//

// for ( ; ; )

// {

// //Read data with fread. Parameters: 1, the destination buffer; 2, the element size; 3, the element count; 4, the file stream

// int len = fread(pcm, 1, readSize, fp);

// if (len <= 0)break;

// //cout << len << endl;

//

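// //Resample with swr_convert. Parameters: 1, the context; 2, the output buffers; 3, the output sample count; then the input buffers and the input sample count

// const uint8_t* data[1];

// data[0] = (uint8_t*)pcm;

// len = swr_convert(actx, frame->data, frame->nb_samples, //output

// data, frame->nb_samples); //input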

// if (len <= 0)

// break;

//

//

// //6 encode the audio

// AVPacket pkt;

// av_init_packet(&pkt);

//

// ret = avcodec_send_frame(c, frame);

// if (ret != 0) continue;

// ret = avcodec_receive_packet(c, &pkt);

// if (ret != 0) continue;

//

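// //7 mux the audio into the aac file

// //Set pkt's stream_index to zero: this file has a single audio stream and stream indexes start at zero; with two streams they would have to be told apart

// pkt.stream_index = 0;

// pkt.pts = 0;

// pkt.dts = 0;

// //av_interleaved_write_frame writes the packet and releases the pkt buffer automatically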

// ret = av_interleaved_write_frame(oc, &pkt);

// cout << "[" << len << "]";

//

// }

// delete[] pcm;

// pcm = NULL;

// //Write the trailer (audio index)

// av_write_trailer(oc);

//

// //Close the audio output io

// avio_close(oc->pb);

//

// //Free the output format context

// avformat_free_context(oc);

//

// //Close the encoder

// avcodec_close(c);

//

// //Free the encoder context

// avcodec_free_context(&c);

//

// cout << "============================end=============================" << endl;

// getchar();

// return 0;

//}

extern "C"

{

#include <libavformat/avformat.h>

#include <libswscale/swscale.h>

#include <libswresample/swresample.h>

}

#include <iostream>

using namespace std;

#pragma comment(lib,"avformat.lib")

#pragma comment(lib,"avcodec.lib")

#pragma comment(lib,"avutil.lib")

#pragma comment(lib,"swscale.lib")

#pragma comment(lib,"swresample.lib")

#pragma warning (disable : 4996)

int main()

{

char infile[] = "out.pcm";

char outfile[] = "out.aac";

//Register all muxers and demuxers

av_register_all();

avcodec_register_all();

//1 open the audio encoder

AVCodec* codec = avcodec_find_encoder(AV_CODEC_ID_AAC);

if (!codec)

{

cout << "avcodec_find_encoder error" << endl;

getchar();

return -1;

}

AVCodecContext* c = avcodec_alloc_context3(codec);

if (!c)

{

cout << "avcodec_alloc_context3 error" << endl;

getchar();

return -1;

}

c->bit_rate = 64000;

c->sample_rate = 44100;

c->sample_fmt = AV_SAMPLE_FMT_FLTP;

c->channel_layout = AV_CH_LAYOUT_STEREO;

c->channels = 2;

c->flags |= AV_CODEC_FLAG_GLOBAL_HEADER;

int ret = avcodec_open2(c, codec, NULL);

if (ret < 0)

{

cout << "avcodec_open2 error" << endl;

getchar();

return -1;

}

cout << "avcodec_open2 success!" << endl;

//2 create the output format context

AVFormatContext* oc = NULL;

avformat_alloc_output_context2(&oc, NULL, NULL, outfile);

if (!oc)

{

cout << "avformat_alloc_output_context2 error" << endl;

getchar();

return -1;

}

AVStream* st = avformat_new_stream(oc, NULL);

st->codecpar->codec_tag = 0;

avcodec_parameters_from_context(st->codecpar, c);

av_dump_format(oc, 0, outfile, 1);

//3 open io ,write head

ret = avio_open(&oc->pb, outfile, AVIO_FLAG_WRITE);

if (ret < 0)

{

cout << "avio_open error" << endl;

getchar();

return -1;

}

ret = avformat_write_header(oc, NULL);

//44100 16 2

//4 create the audio resampling context

SwrContext* actx = NULL;

actx = swr_alloc_set_opts(actx,

c->channel_layout, c->sample_fmt, c->sample_rate, //output format

AV_CH_LAYOUT_STEREO, AV_SAMPLE_FMT_S16, 44100, //input format

0, 0);

if (!actx)

{

cout << "swr_alloc_set_opts error" << endl;

getchar();

return -1;

}

ret = swr_init(actx);

if (ret < 0)

{

cout << "swr_init error" << endl;

getchar();

return -1;

}

//5 open the input audio file and resample

AVFrame* frame = av_frame_alloc();

frame->format = AV_SAMPLE_FMT_FLTP;

frame->channels = 2;

frame->channel_layout = AV_CH_LAYOUT_STEREO;

frame->nb_samples = 1024;//number of samples (per channel) stored in one audio frame

ret = av_frame_get_buffer(frame, 0);

if (ret < 0)

{

cout << "av_frame_get_buffer error" << endl;

getchar();

return -1;

}

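//Bytes per frame: samples per channel * 2 channels * 2 bytes per 16-bit sample

int readSize = frame->nb_samples * 2 * 2;

char* pcm = new char[readSize];

FILE* fp = fopen(infile, "rb");

if (!fp)

{

cout << "fopen " << infile << " failed" << endl;

getchar();

return -1;

}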

for (;;)

{

int len = fread(pcm, 1, readSize, fp);

if (len <= 0)break;

const uint8_t* data[1];

data[0] = (uint8_t*)pcm;

len = swr_convert(actx, frame->data, frame->nb_samples,

data, frame->nb_samples

);

if (len <= 0)

break;

AVPacket pkt;

av_init_packet(&pkt);

//6 encode the audio

ret = avcodec_send_frame(c, frame);

if (ret != 0) continue;

ret = avcodec_receive_packet(c, &pkt);

if (ret != 0) continue;

//7 mux the audio into the aac file

pkt.stream_index = 0;

pkt.pts = 0;

pkt.dts = 0;

ret = av_interleaved_write_frame(oc, &pkt);

cout << "[" << len << "]";

}

delete[] pcm;

pcm = NULL;

fclose(fp);

//Write the trailer (audio index)

av_write_trailer(oc);

//Close the output io

avio_close(oc->pb);

//Free the output format context

avformat_free_context(oc);

//Close the encoder

avcodec_close(c);

//Free the encoder context

avcodec_free_context(&c);

cout << "======================end=========================" << endl;

getchar();

return 0;

}

rgb_pcm_to_mp4

#include "XVideoWriter.h"

#include <iostream>

using namespace std;

#pragma warning (disable : 4996)

int main()

{

char outfile[] = "rgbpcm.mp4";

char rgbfile[] = "test.rgb";

char pcmfile[] = "test.pcm";

XVideoWriter *xw = XVideoWriter::Get(0);

cout << xw->Init(outfile);

cout << xw->AddVideoStream(); //stream index 0 by default

xw->AddAudioStream(); //stream index 1 by default

FILE* fp = fopen(rgbfile,"rb");

if (!fp)

{

cout << "fopen " << rgbfile << " failed! " << endl;

getchar();

return -1;

}

FILE* fa = fopen(pcmfile, "rb");

if (!fa)

{

cout << "fopen " << pcmfile << " failed! " << endl;

getchar();

return -1;

}

int size = xw->inWidth*xw->inHeight * 4;

unsigned char* rgb = new unsigned char[size];

int asize = xw->nb_Sample * xw->inChannels * 2;

unsigned char* pcm = new unsigned char[asize]; //the audio buffer only needs asize bytes

xw->WriteHead();

AVPacket* pkt = NULL;

int len = 0;

for (;;)

{

if (xw->IsVideoDefer())

{

int len = fread(rgb, 1, size, fp);

if (len <= 0)

break;

pkt = xw->EncodeVideo(rgb);

if (pkt) cout << ".";

else

{

cout << "-";

continue;

}

if (xw->WriteFrame(pkt))

{

cout << "+";

}

}

else

{

len = fread(pcm, 1, asize, fa);

if (len <= 0) break;

pkt = xw->EncodeAudio(pcm);

xw->WriteFrame(pkt);

}

}

xw->WriteEnd();

delete[] rgb;

rgb = NULL;

delete[] pcm;

pcm = NULL;

fclose(fp);

fclose(fa);

cout << "\n=============================end============================" << endl;

//rgb to yuv

//encode the video frames

getchar();

return 0;

}

TestDirectx

#include <d3d9.h>

#include <iostream>

#pragma comment (lib,"d3d9.lib")

#pragma warning (disable : 4996)

//Screen capture function: captures the whole screen

void CaptureScreen(void *data)

{

//1,create the Direct3D object

static IDirect3D9* d3d = NULL;

if (!d3d)

{

d3d = Direct3DCreate9(D3D_SDK_VERSION);

}

if (!d3d) return;

//2,create the device object for the graphics card

static IDirect3DDevice9* device = NULL;

if (!device)

{

D3DPRESENT_PARAMETERS pa;

ZeroMemory(&pa, sizeof(pa));

pa.Windowed = true;

pa.Flags = D3DPRESENTFLAG_LOCKABLE_BACKBUFFER;

pa.SwapEffect = D3DSWAPEFFECT_DISCARD;

pa.hDeviceWindow = GetDesktopWindow();

d3d->CreateDevice(D3DADAPTER_DEFAULT, D3DDEVTYPE_HAL, 0, D3DCREATE_HARDWARE_VERTEXPROCESSING, &pa, &device);

}

if (!device)return;

//3,create the off-screen surface

int w = GetSystemMetrics(SM_CXSCREEN);

int h = GetSystemMetrics(SM_CYSCREEN);

static IDirect3DSurface9* sur = NULL;

if (!sur)

{

device->CreateOffscreenPlainSurface(w, h, D3DFMT_A8R8G8B8, D3DPOOL_SCRATCH, &sur, 0);

}

if (!sur) return;

//4,capture the screen

device->GetFrontBufferData(0, sur);

//5,copy the data out

D3DLOCKED_RECT rect;

ZeroMemory(&rect, sizeof(rect));

if (sur->LockRect(&rect, 0, 0) != S_OK)

{

return;

}

memcpy(data, rect.pBits, w * h * 4);

sur->UnlockRect();

std::cout << ".";

}

int main()

{

FILE* fp = fopen("out.rgb", "wb");

int size = 1920 * 1080 * 4;

char* buf = new char[size];

for (int i = 0; i < 1000; i++)

{

CaptureScreen(buf);

fwrite(buf, 1, size, fp);

Sleep(100);

}

fclose(fp);

delete[] buf;

return 0;

}

qt_audio_input

#include <QAudioInput>

#include <iostream>

using namespace std;

int main(int argc, char* argv[])

{

QAudioFormat fmt;

fmt.setSampleRate(44100);

fmt.setChannelCount(2);

fmt.setSampleSize(16);

fmt.setSampleType(QAudioFormat::SignedInt); //16-bit PCM is signed, matching AV_SAMPLE_FMT_S16 used when encoding

fmt.setByteOrder(QAudioFormat::LittleEndian);

fmt.setCodec("audio/pcm");

QAudioInput* input = new QAudioInput(fmt);

QIODevice* io = input->start();

FILE* fp = fopen("out.pcm", "wb");

char* buf = new char[1024];

int total = 0;

for (;;)

{

//input->bytesReady() returns how many recorded bytes are available to read; store it in br

int br = input->bytesReady();

if (br < 1024) continue;

int len = io->read(buf, 1024);

fwrite(buf, 1, len, fp);

cout << len << "|";

total += len;

if (total > 1024 * 1024)

break;

}

fclose(fp);

return 0;

}

XScreen (this source file is the application built by combining all of the pieces above)

#include "xscreen.h"

#include <iostream>

#include <QTime>

#include "XSceenRecord.h"

static bool isRecord = false;

#define RECORDQSS "\

QPushButton:!hover \

{background-image: url(:/XScreen/record_normal.png);}\

QPushButton:hover\

{background-image: url(:/XScreen/record_hot.png);}\

QPushButton:pressed{\

background-image: url(:/XScreen/record_pressed.png);\

background-color: rgba(255, 255, 255, 0);}"

static QTime rtime;

XScreen::XScreen(QWidget *parent)

: QWidget(parent)

{

ui.setupUi(this);

//No title bar

setWindowFlags(Qt::FramelessWindowHint);

//Make the widget background transparent

setAttribute(Qt::WA_TranslucentBackground);

startTimer(100);

}

void XScreen::timerEvent(QTimerEvent* e)

{

if (isRecord)

{

//elapsed() returns the time passed since restart(), in milliseconds

int es = rtime.elapsed() / 1000;

char buf[1024] = { 0 };

sprintf(buf, "%03d:%02d", es / 60, es % 60);

ui.timelabel->setText(buf);

}

}

void XScreen::Record()

{

isRecord = !isRecord;

std::cout << "Record succeed";

if (isRecord)

{

rtime.restart();

ui.recordButton->setStyleSheet("background-image: url(:/XScreen/stop.png);background-color: rgba(255, 255, 255, 0);");

QDateTime t = QDateTime::currentDateTime();

QString filename = t.toString("yyyyMMdd_hhmmss");

filename = "xcreen_" + filename;

filename += ".mp4";

filename = ui.urlEdit->text() + "\\" + filename;

XSceenRecord::Get()->outWidth = ui.widthEdit->text().toInt();

XSceenRecord::Get()->outHeight = ui.heightEdit_2->text().toInt();

XSceenRecord::Get()->fps = ui.fpsEdit->text().toInt();

if (XSceenRecord::Get()->Start(filename.toLocal8Bit()))

{

return;

}

isRecord = false;

}

{

ui.recordButton->setStyleSheet(RECORDQSS);

XSceenRecord::Get()->Stop();

}

}
