1. Requirement

Recommend people a user may know, that is, friends of the user's friends.

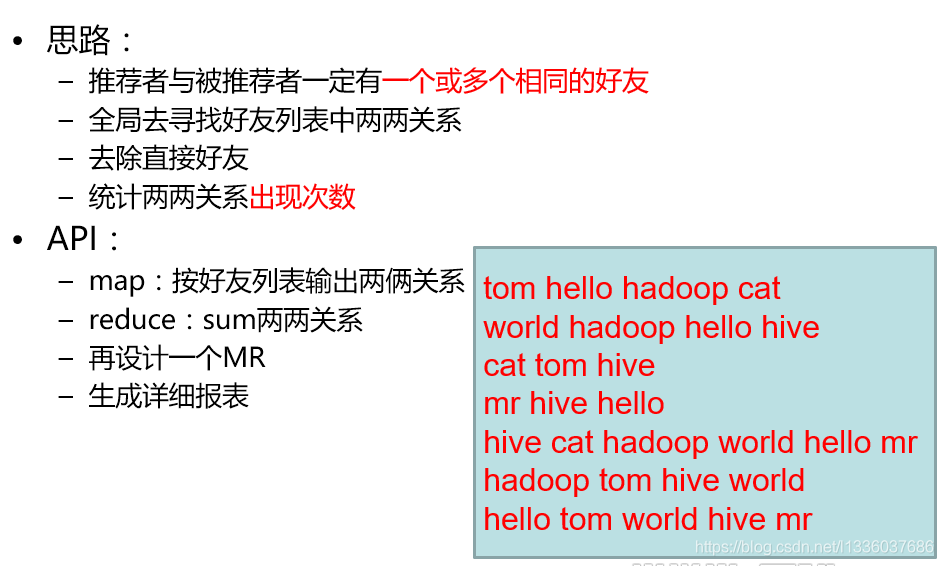

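Initial dataset. Each line starts with a user's name, followed by that user's direct friends, separated by single spaces:

    tom hello hadoop cat
    world hadoop hello hive
    cat tom hive
    mr hive hello
    hive cat hadoop world hello mr
    hadoop tom hive world
    hello tom world hive mr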

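Every pair of names is tagged as either a direct relationship (0) or an indirect relationship (1). A user and each name on that user's line are direct friends (0). Any two names on the same line are indirect (1), because they share the line's owner as a mutual friend. The same pair can receive both tags: hadoop and hive both appear on world's line (1), but hive's own line lists hadoop (0). A pair is recommended only if it never receives a 0, and its score is the number of 1 tags, which equals the number of mutual friends.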
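As a small illustration of the tagging rule, the sketch below processes a single dataset line in plain Java, without any Hadoop classes. The class name `FofEmitSketch` and the methods `pairKey` and `emit` are hypothetical names chosen for this example; the ordering of the two names in a key follows the `getFOF` helper shown later in the post.

```java
import java.util.ArrayList;
import java.util.List;

// Plain-Java illustration of the 0/1 tagging; no Hadoop classes involved.
class FofEmitSketch {

    // Orders the two names so that (a, b) and (b, a) map to the same key.
    static String pairKey(String a, String b) {
        return a.compareTo(b) > 0 ? a + " " + b : b + " " + a;
    }

    // Returns "pair<TAB>tag" records for one input line.
    static List<String> emit(String line) {
        String[] names = line.trim().split("\\s+");
        List<String> out = new ArrayList<>();
        for (int i = 1; i < names.length; i++) {
            // The line's owner and each listed friend: direct (0).
            out.add(pairKey(names[0], names[i]) + "\t0");
            // Any two listed friends: indirect (1), linked through the owner.
            for (int j = i + 1; j < names.length; j++) {
                out.add(pairKey(names[i], names[j]) + "\t1");
            }
        }
        return out;
    }

    public static void main(String[] args) {
        // Third line of the dataset: cat is friends with tom and hive.
        for (String record : emit("cat tom hive")) {
            System.out.println(record);
        }
    }
}
```

For the line `cat tom hive` this yields three records: `tom cat` tagged 0, `tom hive` tagged 1, and `hive cat` tagged 0. Only `tom hive` is a candidate recommendation from this line, with cat as the mutual friend.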

2. Code

2.1 MyFOF.java

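    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.FileSystem;
    import org.apache.hadoop.fs.Path;
    import org.apache.hadoop.io.IntWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Job;
    import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
    import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

    /**
     * Client: configures and submits the friend-of-friend (FOF) job.
     *
     * @author LGX
     */
    public class MyFOF {

        public static void main(String[] args) throws Exception {
            Configuration conf = new Configuration(true);

            Job job = Job.getInstance(conf);
            job.setJobName("analyse-fof");
            job.setJarByClass(MyFOF.class);

            // Input and output paths
            Path inputPath = new Path("/data/FOF.txt");
            FileInputFormat.addInputPath(job, inputPath);

            Path outputPath = new Path("/data/analyse/");
            // Delete a stale output directory; the job fails if it already exists.
            FileSystem fs = outputPath.getFileSystem(conf);
            if (fs.exists(outputPath)) {
                fs.delete(outputPath, true);
            }
            FileOutputFormat.setOutputPath(job, outputPath);

            // Map task
            job.setMapperClass(FMapper.class);
            job.setMapOutputKeyClass(Text.class);
            job.setMapOutputValueClass(IntWritable.class);

            // Reduce task
            job.setReducerClass(FReducer.class);
            // Declare the final output types explicitly (same as the map output here).
            job.setOutputKeyClass(Text.class);
            job.setOutputValueClass(IntWritable.class);

            // Exit with a non-zero status if the job fails.
            System.exit(job.waitForCompletion(true) ? 0 : 1);
        }
    }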

2.2 FMapper.java

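    import java.io.IOException;

    import org.apache.hadoop.io.IntWritable;
    import org.apache.hadoop.io.LongWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Mapper;
    // Assumption: the post does not show its imports; Hadoop's own StringUtils is used here.
    import org.apache.hadoop.util.StringUtils;

    /**
     * Emits every pair found on an input line, tagged 0 (direct) or 1 (indirect).
     *
     * @author LGX
     */
    public class FMapper extends Mapper<LongWritable, Text, Text, IntWritable> {

        // Reused across calls to avoid allocating new objects per record.
        private final Text mkey = new Text();
        private final IntWritable mval = new IntWritable();

        @Override
        public void map(LongWritable key, Text value, Context context)
                throws IOException, InterruptedException {
            // strs[0] is the user; strs[1..] are that user's direct friends.
            String[] strs = StringUtils.split(value.toString(), ' ');

            // 0 marks a direct relationship, which is never recommended;
            // 1 marks an indirect relationship, which is a candidate recommendation.
            for (int i = 1; i < strs.length; i++) {
                // The user and one listed friend: direct.
                mkey.set(getFOF(strs[0], strs[i]));
                mval.set(0);
                context.write(mkey, mval);

                // Two listed friends: indirect, with strs[0] as the mutual friend.
                for (int j = i + 1; j < strs.length; j++) {
                    mkey.set(getFOF(strs[i], strs[j]));
                    mval.set(1);
                    context.write(mkey, mval);
                }
            }
        }

        // Orders the two names so that (a, b) and (b, a) produce the same key.
        private static String getFOF(String s1, String s2) {
            if (s1.compareTo(s2) > 0) {
                return s1 + " " + s2;
            } else {
                return s2 + " " + s1;
            }
        }
    }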

2.3 FReducer.java

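    import java.io.IOException;

    import org.apache.hadoop.io.IntWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Reducer;

    // Keeps only pairs that are not already direct friends and counts their mutual friends.
    public class FReducer extends Reducer<Text, IntWritable, Text, IntWritable> {

        private final IntWritable rval = new IntWritable();

        @Override
        protected void reduce(Text key, Iterable<IntWritable> values, Context context)
                throws IOException, InterruptedException {
            boolean direct = false; // becomes true if any value is 0
            int sum = 0;            // number of 1 tags, i.e. mutual friends

            for (IntWritable value : values) {
                if (value.get() == 0) {
                    direct = true;
                }
                sum += value.get();
            }

            // Write the pair only if the two users are not already direct friends.
            if (!direct) {
                rval.set(sum);
                context.write(key, rval);
            }
        }
    }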
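To try the logic without a cluster, the map, grouping, and reduce steps can be replayed in plain Java. The following is a minimal sketch under that assumption, not the Hadoop job itself: `FofLocalReplay`, `mapAndGroup`, `reduce`, and `DATASET` are hypothetical names, and a `TreeMap` stands in for the framework's sort and shuffle phase.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Local replay of the map, group, and reduce steps; no Hadoop classes involved.
class FofLocalReplay {

    // The seven lines of the initial dataset.
    static final String[] DATASET = {
        "tom hello hadoop cat",
        "world hadoop hello hive",
        "cat tom hive",
        "mr hive hello",
        "hive cat hadoop world hello mr",
        "hadoop tom hive world",
        "hello tom world hive mr"
    };

    // Same ordering rule as getFOF: the larger name comes first.
    static String pairKey(String a, String b) {
        return a.compareTo(b) > 0 ? a + " " + b : b + " " + a;
    }

    // Map + group: collect every tag emitted for each pair key, sorted by key.
    static Map<String, List<Integer>> mapAndGroup(String[] lines) {
        Map<String, List<Integer>> grouped = new TreeMap<>();
        for (String line : lines) {
            String[] n = line.trim().split("\\s+");
            for (int i = 1; i < n.length; i++) {
                grouped.computeIfAbsent(pairKey(n[0], n[i]), k -> new ArrayList<>()).add(0);
                for (int j = i + 1; j < n.length; j++) {
                    grouped.computeIfAbsent(pairKey(n[i], n[j]), k -> new ArrayList<>()).add(1);
                }
            }
        }
        return grouped;
    }

    // Reduce: drop pairs that carry any 0 tag; sum the 1 tags of the rest.
    static Map<String, Integer> reduce(Map<String, List<Integer>> grouped) {
        Map<String, Integer> result = new TreeMap<>();
        for (Map.Entry<String, List<Integer>> e : grouped.entrySet()) {
            boolean direct = false;
            int sum = 0;
            for (int tag : e.getValue()) {
                if (tag == 0) {
                    direct = true;
                }
                sum += tag;
            }
            if (!direct) {
                result.put(e.getKey(), sum);
            }
        }
        return result;
    }

    public static void main(String[] args) {
        reduce(mapAndGroup(DATASET))
            .forEach((pair, count) -> System.out.println(pair + "\t" + count));
    }
}
```

On the seven dataset lines this leaves ten pairs, for example `hello hadoop` with 3 and `tom hive` with 3, while pairs such as `tom hello` are dropped because they are direct friends.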

3. Execution and results

Package the project as a jar, upload it to the Hadoop cluster, and run it.

Output:

    hadoop cat 2
    hello cat 2
    hello hadoop 3
    mr cat 1
    mr hadoop 1
    tom hive 3
    tom mr 1
    world cat 1
    world mr 2
    world tom 2

cat and hadoop have two mutual friends

cat and hello have two mutual friends

…
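The job stops at pair counts. One possible follow-up, which is not part of the original job, is to turn those pairs into a per-user list ordered by the number of mutual friends. The sketch below assumes the ten result lines are available as strings; `FofRanking`, `rank`, and `RESULT` are hypothetical names, and breaking ties by name is an arbitrary choice made for this example.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Turns "nameA nameB count" result lines into per-user ranked suggestions.
class FofRanking {

    // The ten result lines copied from the job output.
    static final String[] RESULT = {
        "hadoop cat 2", "hello cat 2", "hello hadoop 3", "mr cat 1", "mr hadoop 1",
        "tom hive 3", "tom mr 1", "world cat 1", "world mr 2", "world tom 2"
    };

    // Returns user -> candidates, most mutual friends first, ties in name order.
    static Map<String, List<String>> rank(String[] resultLines) {
        Map<String, Map<String, Integer>> scores = new TreeMap<>();
        for (String line : resultLines) {
            String[] f = line.trim().split("\\s+");
            int count = Integer.parseInt(f[2]);
            // Each pair is a suggestion in both directions.
            scores.computeIfAbsent(f[0], k -> new TreeMap<>()).put(f[1], count);
            scores.computeIfAbsent(f[1], k -> new TreeMap<>()).put(f[0], count);
        }

        Map<String, List<String>> ranked = new TreeMap<>();
        for (Map.Entry<String, Map<String, Integer>> e : scores.entrySet()) {
            List<Map.Entry<String, Integer>> candidates = new ArrayList<>(e.getValue().entrySet());
            // List.sort is stable, so equal counts keep the TreeMap's name order.
            candidates.sort((x, y) -> Integer.compare(y.getValue(), x.getValue()));
            List<String> names = new ArrayList<>();
            for (Map.Entry<String, Integer> c : candidates) {
                names.add(c.getKey());
            }
            ranked.put(e.getKey(), names);
        }
        return ranked;
    }

    public static void main(String[] args) {
        rank(RESULT).forEach((user, names) -> System.out.println(user + " -> " + names));
    }
}
```

With the output above, tom's suggestions come out as hive, world, mr, and cat's as hadoop, hello, mr, world.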
