Preparation: if you are working in a virtual machine, set its network adapter to bridged mode.

Part 1: Install ZooKeeper and Kafka

# Pull the Docker images

docker pull docker.io/wurstmeister/zookeeper

docker pull docker.io/wurstmeister/kafka:2.12-2.1.0

# Start the containers

docker run -d --name zookeeper -p 2181:2181 wurstmeister/zookeeper

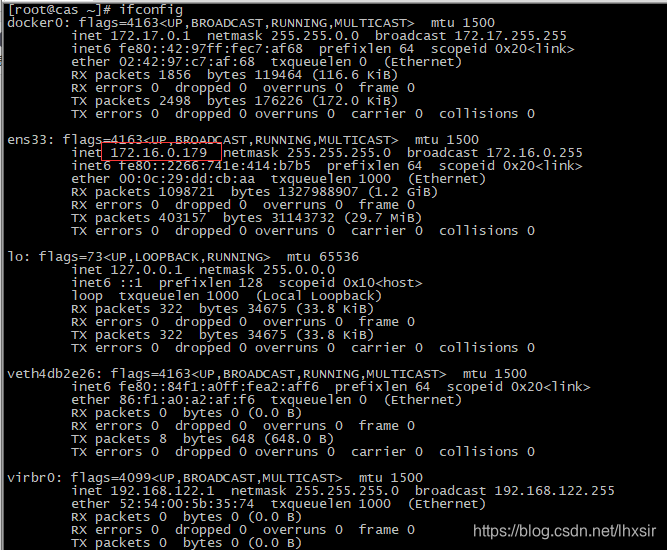

# 172.16.0.179 is the IP address of the Docker host; replace it with your own host's IP

docker run -d --name kafka -p 9092:9092 -e KAFKA_BROKER_ID=0 -e KAFKA_ZOOKEEPER_CONNECT=172.16.0.179:2181 -e KAFKA_ADVERTISED_LISTENERS=PLAINTEXT://172.16.0.179:9092 -e KAFKA_LISTENERS=PLAINTEXT://0.0.0.0:9092 -t wurstmeister/kafka:2.12-2.1.0
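The host IP appears in several places (the ZooKeeper address, the advertised listener, and later in every client), so it can help to generate the command from a single variable. The sketch below assumes a hypothetical helper `kafka_run_args` that only builds the argument list and never calls Docker. It illustrates the key distinction: `KAFKA_LISTENERS` binds on all interfaces inside the container, while `KAFKA_ADVERTISED_LISTENERS` must carry an address that clients outside the container (for example, the Windows machine in Part 3) can actually reach.

```python
import shlex
from typing import List


def kafka_run_args(host_ip: str, broker_id: int = 0, port: int = 9092,
                   zk_port: int = 2181,
                   image: str = "wurstmeister/kafka:2.12-2.1.0") -> List[str]:
    """Build the `docker run` argv for the Kafka container (hypothetical helper)."""
    return [
        "docker", "run", "-d", "--name", "kafka",
        "-p", f"{port}:{port}",
        "-e", f"KAFKA_BROKER_ID={broker_id}",
        # ZooKeeper is reached via the host IP because its port is published on the host
        "-e", f"KAFKA_ZOOKEEPER_CONNECT={host_ip}:{zk_port}",
        # The address handed back to clients; it must be reachable from outside the container
        "-e", f"KAFKA_ADVERTISED_LISTENERS=PLAINTEXT://{host_ip}:{port}",
        # Bind on every interface inside the container
        "-e", f"KAFKA_LISTENERS=PLAINTEXT://0.0.0.0:{port}",
        "-t", image,
    ]


if __name__ == "__main__":
    # Replace with your own host's IP address
    print(shlex.join(kafka_run_args("172.16.0.179")))
```

With `172.16.0.179` the script prints exactly the `docker run` command for Kafka shown above; passing a different IP updates all three address settings together.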

Part 2: Test Kafka inside Docker

# List the running containers

docker ps

# Open a shell in the Kafka container (by its name, or by the CONTAINER ID shown by docker ps)

docker exec -it kafka /bin/bash
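Container IDs differ on every machine and every run. As a minimal sketch, assuming the default `docker ps` table layout (the ID is the first column and the container name is the last), a hypothetical helper `container_id_by_name` can look up the ID from captured `docker ps` output. The sample output is made up for illustration.

```python
from typing import Optional

# Illustrative `docker ps` output (made up for this sketch, not captured from a real run)
SAMPLE_PS = """\
CONTAINER ID   IMAGE                           COMMAND            CREATED         STATUS         PORTS                    NAMES
d5e603c20a32   wurstmeister/kafka:2.12-2.1.0   "start-kafka.sh"   2 minutes ago   Up 2 minutes   0.0.0.0:9092->9092/tcp   kafka
"""


def container_id_by_name(ps_output: str, name: str) -> Optional[str]:
    """Return the CONTAINER ID whose NAMES column contains `name` (hypothetical helper).

    Assumes the default `docker ps` table: the first whitespace-separated
    field is the ID and the last field is the name(s).
    """
    lines = ps_output.strip().splitlines()
    for line in lines[1:]:  # skip the header row
        fields = line.split()
        if not fields:
            continue
        # NAMES can hold several comma-separated names for linked containers
        if name in fields[-1].split(","):
            return fields[0]
    return None
```

In practice, `docker ps --filter "name=kafka" --format "{{.ID}}"` asks Docker for the ID directly, and `docker exec` also accepts the container name in place of the ID.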

# Change to Kafka's bin directory

cd /opt/kafka_2.12-2.1.0/bin/

# Single-node setup: create a topic

kafka-topics.sh --create --zookeeper 172.16.0.179:2181 --replication-factor 1 --partitions 1 --topic mykafka

# Start a console producer (each line you type is sent as one message)

kafka-console-producer.sh --broker-list 172.16.0.179:9092 --topic mykafka

# Start a console consumer in another shell; --from-beginning reads the topic from its first message

kafka-console-consumer.sh --bootstrap-server 172.16.0.179:9092 --topic mykafka --from-beginning
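To see why `--from-beginning` matters, here is a toy in-memory model (not Kafka code; the names `PartitionLog` and `start_offset` are hypothetical). A single partition behaves like an append-only log in which each message gets the next offset. A console consumer with `--from-beginning` starts at offset 0. Without it, and with no committed offset, it starts at the end of the log and sees only messages produced after it starts.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class PartitionLog:
    """Toy stand-in for one Kafka partition: an append-only list of messages."""
    messages: List[str] = field(default_factory=list)

    def append(self, value: str) -> int:
        """Append a message and return the offset it was assigned."""
        self.messages.append(value)
        return len(self.messages) - 1

    def read_from(self, offset: int) -> List[str]:
        """Return every message at or after `offset`."""
        return self.messages[offset:]


def start_offset(log: PartitionLog, from_beginning: bool) -> int:
    """Offset where a new consumer starts: 0 with --from-beginning, else the log end."""
    return 0 if from_beginning else len(log.messages)
```

For example, after appending `"hello"` and `"kafka"`, a consumer using `start_offset(log, True)` reads both messages, while one using `start_offset(log, False)` reads nothing until new messages arrive.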

# Clear topic data by deleting the topic (mykafka was created above; myflink only if you created it)

kafka-topics.sh --zookeeper 172.16.0.179:2181 --delete --topic mykafka

kafka-topics.sh --zookeeper 172.16.0.179:2181 --delete --topic myflink

Part 3: Test Kafka from a local Windows machine

Install the Python 3 package:

pip install pykafka

Producer code:

from pykafka import KafkaClient

host = '172.16.0.179:9092'
client = KafkaClient(hosts=host)
topic = client.topics["mytopic".encode()]

# Produce messages synchronously: each produce() call returns only after the message has been confirmed as delivered to the cluster
with topic.get_sync_producer() as producer:
    for i in range(10):
        producer.produce(('test message ' + str(i ** 2)).encode())
# The with block stops the producer on exit
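The sketch below assumes pykafka is installed and the broker from Part 1 is reachable. It moves payload construction into a small pure function (the hypothetical name `build_payloads`) so that part can be checked without a broker, and it relies on the `with` block to stop the producer. pykafka is imported inside `send()` only so the payload helper can run on its own.

```python
from typing import List

HOST = "172.16.0.179:9092"  # the broker's advertised address from Part 1
TOPIC = b"mytopic"


def build_payloads(count: int) -> List[bytes]:
    """Encode the same messages as the producer above: 'test message <i squared>'."""
    return [f"test message {i ** 2}".encode("utf-8") for i in range(count)]


def send(payloads: List[bytes]) -> None:
    """Send each payload with a synchronous producer (requires pykafka and a running broker)."""
    from pykafka import KafkaClient  # imported lazily so build_payloads() works without pykafka

    client = KafkaClient(hosts=HOST)
    topic = client.topics[TOPIC]
    # In sync mode, produce() blocks until the broker confirms delivery;
    # leaving the with block stops the producer.
    with topic.get_sync_producer() as producer:
        for payload in payloads:
            producer.produce(payload)
```

Usage: `send(build_payloads(10))` sends the same ten messages as the producer code above.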

Consumer code:

# -*- coding: utf-8 -*-
from pykafka import KafkaClient

host = '172.16.0.179:9092'
client = KafkaClient(hosts=host)
print(client.topics)

# Consumer: join group test_group and auto-commit consumed offsets (every 1 ms here)
topic = client.topics['mytopic'.encode()]
consumer = topic.get_simple_consumer(consumer_group=b'test_group', auto_commit_enable=True, auto_commit_interval_ms=1, consumer_id=b'test_id')

for message in consumer:
    if message is not None:
        print(message.offset, message.value.decode('utf-8'))
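The loop above blocks forever waiting for new messages. The following is a minimal variant, assuming pykafka's `SimpleConsumer` iterator ends once no message arrives within `consumer_timeout_ms` (as its documentation describes). It separates formatting into a pure function so that part can be checked offline. `format_messages`, `StubMessage` and `consume_available` are hypothetical names, and the one-second commit interval is a suggested value, not the original's.

```python
from dataclasses import dataclass
from typing import Any, Iterable, List


def format_messages(messages: Iterable[Any]) -> List[str]:
    """Render consumed messages as 'offset: text', skipping None entries.

    Each message only needs `.offset` (int) and `.value` (bytes),
    which pykafka's consumer messages provide.
    """
    return [f"{m.offset}: {m.value.decode('utf-8')}" for m in messages if m is not None]


@dataclass
class StubMessage:
    """Hypothetical stand-in for a pykafka message, for checking format_messages offline."""
    offset: int
    value: bytes


def consume_available(host: str = "172.16.0.179:9092", topic_name: bytes = b"mytopic") -> List[str]:
    """Read what is currently in the topic, then return (requires pykafka and a broker)."""
    from pykafka import KafkaClient  # imported lazily so the helpers above work without pykafka

    client = KafkaClient(hosts=host)
    topic = client.topics[topic_name]
    consumer = topic.get_simple_consumer(
        consumer_group=b"test_group",
        auto_commit_enable=True,
        auto_commit_interval_ms=1000,  # commit once per second instead of every 1 ms
        consumer_timeout_ms=5000,      # end iteration after 5 s with no new message
    )
    try:
        return format_messages(consumer)
    finally:
        consumer.stop()
```

A larger `auto_commit_interval_ms` trades a little re-delivery after a crash for far fewer offset commits to the broker.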
