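Symptom

During a recent round of security-driven software upgrades, I upgraded hive-jdbc from 1.2.1 to 2.3.3. After the upgrade, the feature in our system that fetches the Hive schema through spark.sql stopped working. The error is shown below.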

Error message

Full error:

Caused by: java.lang.NoSuchFieldError: HIVE_STATS_JDBC_TIMEOUT

at org.apache.spark.sql.hive.HiveUtils$.formatTimeVarsForHiveClient(HiveUtils.scala:204)

at org.apache.spark.sql.hive.HiveUtils$.newClientForMetadata(HiveUtils.scala:285)

at org.apache.spark.sql.hive.HiveExternalCatalog.client$lzycompute(HiveExternalCatalog.scala:66)

at org.apache.spark.sql.hive.HiveExternalCatalog.client(HiveExternalCatalog.scala:65)

at org.apache.spark.sql.hive.HiveExternalCatalog$$anonfun$databaseExists$1.apply$mcZ$sp(HiveExternalCatalog.scala:215)

at org.apache.spark.sql.hive.HiveExternalCatalog$$anonfun$databaseExists$1.apply(HiveExternalCatalog.scala:215)

at org.apache.spark.sql.hive.HiveExternalCatalog$$anonfun$databaseExists$1.apply(HiveExternalCatalog.scala:215)

at org.apache.spark.sql.hive.HiveExternalCatalog.withClient(HiveExternalCatalog.scala:97)

at org.apache.spark.sql.hive.HiveExternalCatalog.databaseExists(HiveExternalCatalog.scala:214)

at org.apache.spark.sql.internal.SharedState.externalCatalog$lzycompute(SharedState.scala:116)

at org.apache.spark.sql.internal.SharedState.externalCatalog(SharedState.scala:104)

at org.apache.spark.sql.internal.SharedState.globalTempViewManager$lzycompute(SharedState.scala:143)

at org.apache.spark.sql.internal.SharedState.globalTempViewManager(SharedState.scala:138)

at org.apache.spark.sql.hive.HiveSessionStateBuilder$$anonfun$2.apply(HiveSessionStateBuilder.scala:55)

at org.apache.spark.sql.hive.HiveSessionStateBuilder$$anonfun$2.apply(HiveSessionStateBuilder.scala:55)

at org.apache.spark.sql.catalyst.catalog.SessionCatalog.globalTempViewManager$lzycompute(SessionCatalog.scala:91)

at org.apache.spark.sql.catalyst.catalog.SessionCatalog.globalTempViewManager(SessionCatalog.scala:91)

at org.apache.spark.sql.catalyst.catalog.SessionCatalog.isTemporaryTable(SessionCatalog.scala:736)

at org.apache.spark.sql.execution.command.DescribeTableCommand.run(tables.scala:584)

at org.apache.spark.sql.execution.command.ExecutedCommandExec.sideEffectResult$lzycompute(commands.scala:70)

at org.apache.spark.sql.execution.command.ExecutedCommandExec.sideEffectResult(commands.scala:68)

at org.apache.spark.sql.execution.command.ExecutedCommandExec.executeCollect(commands.scala:79)

at org.apache.spark.sql.Dataset$$anonfun$6.apply(Dataset.scala:194)

at org.apache.spark.sql.Dataset$$anonfun$6.apply(Dataset.scala:194)

at org.apache.spark.sql.Dataset$$anonfun$53.apply(Dataset.scala:3369)

at org.apache.spark.sql.execution.SQLExecution$$anonfun$withNewExecutionId$1.apply(SQLExecution.scala:80)

at org.apache.spark.sql.execution.SQLExecution$.withSQLConfPropagated(SQLExecution.scala:127)

at org.apache.spark.sql.execution.SQLExecution$.withNewExecutionId(SQLExecution.scala:75)

at org.apache.spark.sql.Dataset.org$apache$spark$sql$Dataset$$withAction(Dataset.scala:3368)

at org.apache.spark.sql.Dataset.<init>(Dataset.scala:194)

Investigation

Root cause analysis

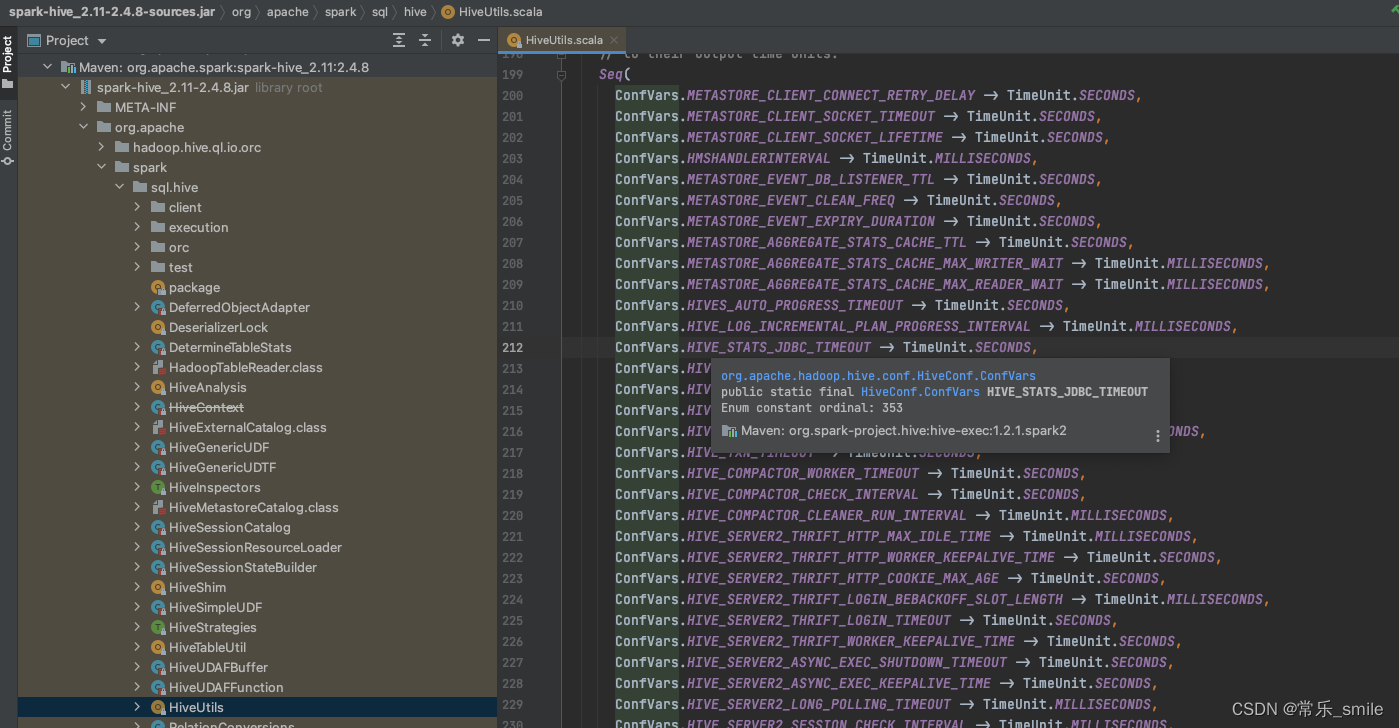

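The key line is Caused by: java.lang.NoSuchFieldError: HIVE_STATS_JDBC_TIMEOUT. The keyword is HIVE_STATS_JDBC_TIMEOUT and the error type is NoSuchFieldError, which points to a class conflict: the calling code was compiled against a version of a class that has this field, but a version without it is loaded at runtime. Opening the source of org.apache.spark.sql.hive.HiveUtils at line 212 (the stack trace above reports HiveUtils.scala:204) shows that the code references an enum value in HiveConf.ConfVars. So the conflicting class is HiveConf, and that is why the HIVE_STATS_JDBC_TIMEOUT field cannot be found.

The next step is to work out how to resolve the HiveConf class conflict.

Investigation steps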
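One way to confirm this kind of diagnosis is to ask the running JVM which jar a class was actually loaded from and whether it exposes the field. The following is a minimal sketch, not part of the original investigation: the class name ClassOriginCheck and its helper methods are hypothetical, and the default arguments assume the enum lives at org.apache.hadoop.hive.conf.HiveConf$ConfVars. It relies only on standard JDK reflection.

```java
import java.security.CodeSource;
import java.security.ProtectionDomain;

// Hypothetical diagnostic helper; run it with the same classpath as the failing application.
class ClassOriginCheck {

    /** Returns the jar or directory a class was loaded from, or a placeholder if unknown. */
    public static String locationOf(Class<?> cls) {
        ProtectionDomain pd = cls.getProtectionDomain();
        CodeSource src = (pd == null) ? null : pd.getCodeSource();
        if (src == null || src.getLocation() == null) {
            return "<bootstrap or unknown>";
        }
        return src.getLocation().toString();
    }

    /** True if the class exposes a public field with this name (enum constants count). */
    public static boolean hasField(Class<?> cls, String fieldName) {
        try {
            cls.getField(fieldName);
            return true;
        } catch (NoSuchFieldException e) {
            return false;
        }
    }

    public static void main(String[] args) {
        // Assumed defaults: the nested enum's binary name and the field from the error.
        String className = args.length > 0 ? args[0] : "org.apache.hadoop.hive.conf.HiveConf$ConfVars";
        String fieldName = args.length > 1 ? args[1] : "HIVE_STATS_JDBC_TIMEOUT";
        try {
            // initialize=false: load the class without running its static initializers.
            Class<?> cls = Class.forName(className, false, ClassOriginCheck.class.getClassLoader());
            System.out.println("loaded from: " + locationOf(cls));
            System.out.println("has " + fieldName + ": " + hasField(cls, fieldName));
        } catch (ClassNotFoundException e) {
            System.out.println(className + " is not on the classpath");
        }
    }
}
```

If the reported location is a hive-common 2.3.3 jar and the field check prints false, that would match the NoSuchFieldError seen above.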

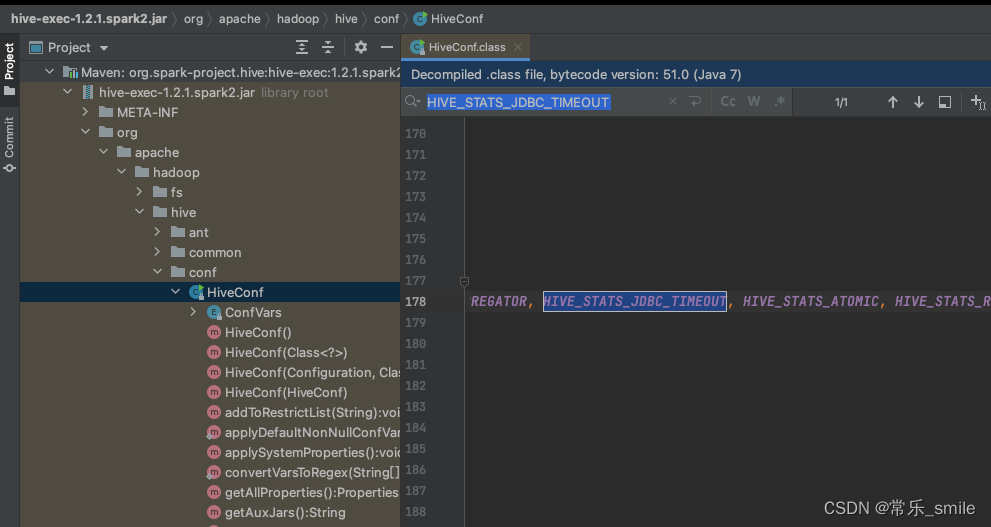

1) In org.spark-project.hive:hive-exec:1.2.1.spark2, the HiveConf class does contain the HIVE_STATS_JDBC_TIMEOUT enum value.

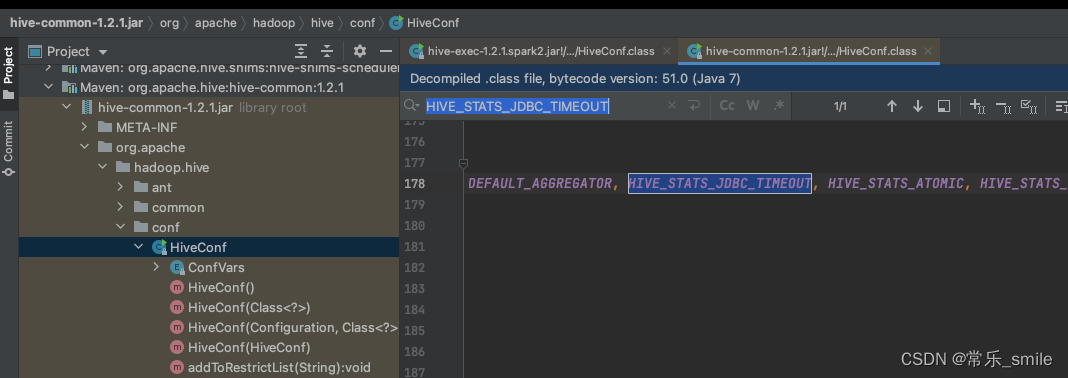

2) In org.apache.hive:hive-common:1.2.1, the HiveConf class also contains the HIVE_STATS_JDBC_TIMEOUT enum value.

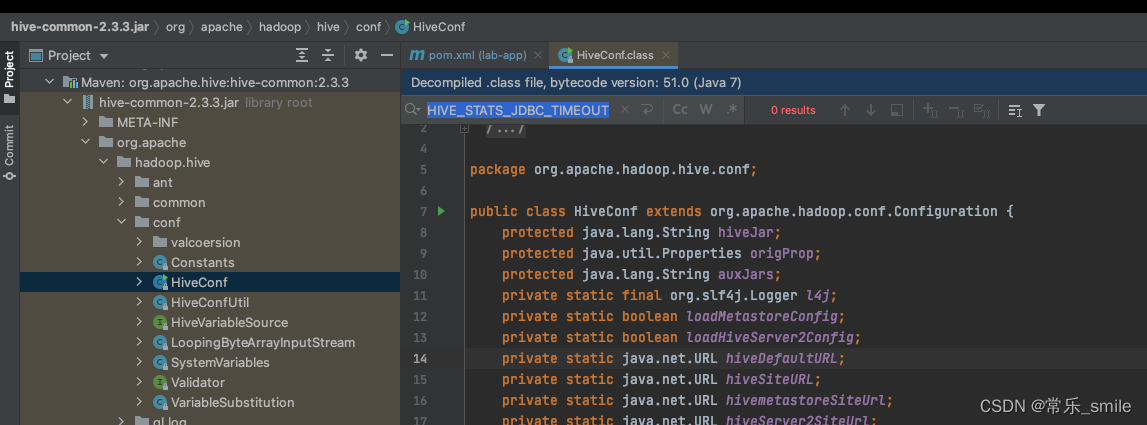

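3) In org.apache.hive:hive-common:2.3.3, the HiveConf class does not contain the HIVE_STATS_JDBC_TIMEOUT enum value.

Solution

In the end I had no choice but to roll hive-common back to 1.2.1, so that the HiveConf class loaded at runtime contains HIVE_STATS_JDBC_TIMEOUT again.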
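The three checks above were done by browsing the jars by hand. The same inventory can be approximated at runtime by listing every copy of a class file that is visible on the classpath. This is a minimal sketch under assumptions: DuplicateClassFinder and its methods are hypothetical names, and the default class name assumes HiveConf lives at org.apache.hadoop.hive.conf.HiveConf. It uses only the standard ClassLoader.getResources call.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.net.URL;
import java.util.Collections;
import java.util.List;

// Hypothetical helper that lists every classpath entry providing a given class.
class DuplicateClassFinder {

    /** Converts a class name to its resource path, e.g. a.b.C becomes a/b/C.class. */
    public static String toResourcePath(String className) {
        return className.replace('.', '/') + ".class";
    }

    /** Returns the URLs of all copies of the class file visible to the given loader. */
    public static List<URL> copiesOf(String className, ClassLoader loader) {
        try {
            return Collections.list(loader.getResources(toResourcePath(className)));
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        // Assumed default: the HiveConf class discussed in this post.
        String className = args.length > 0 ? args[0] : "org.apache.hadoop.hive.conf.HiveConf";
        ClassLoader loader = Thread.currentThread().getContextClassLoader();
        if (loader == null) {
            loader = ClassLoader.getSystemClassLoader();
        }
        List<URL> copies = copiesOf(className, loader);
        System.out.println(copies.size() + " copy/copies of " + className);
        for (URL url : copies) {
            System.out.println("  " + url);
        }
    }
}
```

More than one URL in the output means several jars ship the same class, and which one wins depends on classpath order. That is the situation described in the steps above.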
