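The base environment for this article is described in "flink 1.10.1 Java wordcount demo (nc + socket)". This article builds on that setup and additionally writes the results to Kafka.

1. Add the dependency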

<dependency>
    <groupId>org.apache.flink</groupId>
    <artifactId>flink-connector-kafka_2.11</artifactId>
    <version>1.10.1</version>
</dependency>

2. Add the code

package com.demo.redis;

import org.apache.flink.api.common.functions.FlatMapFunction;
import org.apache.flink.api.common.functions.MapFunction;
import org.apache.flink.api.common.serialization.SimpleStringSchema;
import org.apache.flink.api.java.tuple.Tuple2;
import org.apache.flink.streaming.api.datastream.DataStream;
import org.apache.flink.streaming.api.datastream.SingleOutputStreamOperator;
import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;
import org.apache.flink.streaming.connectors.kafka.FlinkKafkaProducer;
import org.apache.flink.util.Collector;

/**
 * Writes Flink results to Kafka.
 */

public class FlinkKafkaSinkDemo {

    public static void main(String[] args) throws Exception {
        StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();
        env.setParallelism(1);

        // Read lines of text from the socket fed by nc.
        DataStream<String> dataStream = env.socketTextStream("192.168.0.181", 9000);

        // Split each line into words on single spaces.
        SingleOutputStreamOperator<String> flatMap = dataStream.flatMap(new FlatMapFunction<String, String>() {
            @Override
            public void flatMap(String value, Collector<String> out) throws Exception {
                String[] strings = value.split(" ");
                for (String s : strings) {
                    out.collect(s);
                }
            }
        });

        // Pair each word with an initial count of 1.
        SingleOutputStreamOperator<Tuple2<String, Integer>> map = flatMap.map(new MapFunction<String, Tuple2<String, Integer>>() {
            @Override
            public Tuple2<String, Integer> map(String value) throws Exception {
                return Tuple2.of(value, 1);
            }
        });

        // Group by the word (field f0) and keep a running sum of the counts (field 1).
        SingleOutputStreamOperator<Tuple2<String, Integer>> sum = map.keyBy("f0").sum(1);

        // Format each result as "word:count" so it can be written to Kafka as a string.
        DataStream<String> result = sum.map(new MapFunction<Tuple2<String, Integer>, String>() {
            @Override
            public String map(Tuple2<String, Integer> data) throws Exception {
                return data.f0 + ":" + data.f1;
            }
        });

        // Kafka sink: broker list, target topic, and a plain string serializer.
        FlinkKafkaProducer<String> kafkaProducer = new FlinkKafkaProducer<String>(
                "localhost:9092", "flink-topic", new SimpleStringSchema()
        );
        result.addSink(kafkaProducer);

        // Also print to the console for comparison with the Kafka output.
        result.print();

        env.execute();
    }
}

The computed results are converted to strings with map so that they can be written to Kafka as plain strings.

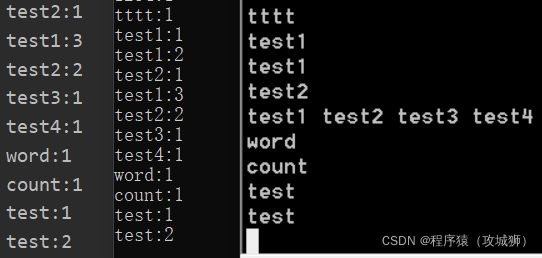
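The producer in the code above is configured with just a broker list string. As a minimal sketch of an alternative, the producer settings can be collected in a `java.util.Properties` object and handed to the `FlinkKafkaProducer(topic, schema, properties)` constructor. The class name `KafkaSinkConfigSketch`, the helper `producerProperties`, and the `acks=all` setting are assumptions made for illustration and are not part of the original demo. The Flink call is shown only as a comment so the snippet runs without Flink on the classpath.

```java
import java.util.Properties;

// Hypothetical helper class, not part of the original demo.
final class KafkaSinkConfigSketch {

    // Builds Kafka producer settings for the given broker list.
    static Properties producerProperties(String bootstrapServers) {
        Properties props = new Properties();
        props.setProperty("bootstrap.servers", bootstrapServers);
        // Assumed setting for illustration: wait for all in-sync replicas to acknowledge.
        props.setProperty("acks", "all");
        return props;
    }

    public static void main(String[] args) {
        Properties props = producerProperties("localhost:9092");
        // In the Flink job, these properties could replace the broker-list argument:
        // new FlinkKafkaProducer<>("flink-topic", new SimpleStringSchema(), props);
        System.out.println(props.getProperty("bootstrap.servers"));
    }
}
```

Keeping the settings in one `Properties` object makes it easier to add further producer options later without changing the sink construction itself.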

3. Check the output

Enter test data through nc. The computed results appear in both the IDEA console and the Kafka consumer console.
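To make it concrete what both consoles should show, here is a plain-Java sketch that mimics the job's logic without Flink: split each line on spaces, keep a running count per word, and emit a `word:count` string for every incoming word. The class name `RunningWordCountSketch` and the sample input are assumptions for illustration. The sketch only models the single-parallelism case used in this demo.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Hypothetical stand-in for the Flink pipeline, for illustration only.
final class RunningWordCountSketch {

    // Returns one "word:count" record per input word, in arrival order,
    // mirroring how the keyed running sum emits an update for every element.
    static List<String> process(List<String> lines) {
        Map<String, Integer> counts = new HashMap<>();
        List<String> emitted = new ArrayList<>();
        for (String line : lines) {
            for (String word : line.split(" ")) {
                int count = counts.merge(word, 1, Integer::sum);
                emitted.add(word + ":" + count);
            }
        }
        return emitted;
    }

    public static void main(String[] args) {
        // Sample input, as if "hello world hello" had been typed into nc.
        for (String record : process(Arrays.asList("hello world hello"))) {
            System.out.println(record);
        }
    }
}
```

For the sample line, the sketch emits `hello:1`, `world:1`, and `hello:2`: a repeated word produces a new record with the updated count rather than replacing the earlier one, which is also what a Kafka consumer reading the topic would be expected to see.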
