References: https://blog.csdn.net/u010312436/article/details/78656723/ and the book 《TensorFlow技术解析与实践》 (*TensorFlow: Technical Analysis and Practice*).

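The referenced blog post is very detailed, so I'll keep this one short. The example is an MNIST training script that deliberately misbehaves. We'll use the TensorFlow Debugger (tfdbg) to find where things go wrong, then fix it. The code of debug_mnist.py is as follows: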

```python
#!/usr/bin/env python
# -*- coding: utf-8 -*-
# author: 袁阳平 yyp
# time: 2019/9/5
# Writing code == happiness

# Copyright 2016 The TensorFlow Authors. All Rights Reserved.
#
# Licensed under the Apache License, Version 2.0 (the "License");
# you may not use this file except in compliance with the License.
# You may obtain a copy of the License at
#
#     http://www.apache.org/licenses/LICENSE-2.0
#
# Unless required by applicable law or agreed to in writing, software
# distributed under the License is distributed on an "AS IS" BASIS,
# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
# See the License for the specific language governing permissions and
# limitations under the License.
# ==============================================================================
"""Demo of the tfdbg curses CLI: Locating the source of bad numerical values.

The neural network in this demo is largely based on the tutorial at:
  tensorflow/examples/tutorials/mnist/mnist_with_summaries.py

But modifications are made so that problematic numerical values (infs and nans)
appear in nodes of the graph during training.
"""
from __future__ import absolute_import
from __future__ import division
from __future__ import print_function

import argparse
import sys
import tempfile

import tensorflow
from tensorflow.examples.tutorials.mnist import input_data
from tensorflow.python import debug as tf_debug

tf = tensorflow.compat.v1

IMAGE_SIZE = 28
HIDDEN_SIZE = 500
NUM_LABELS = 10
RAND_SEED = 42


def main(_):
    # Import data
    mnist = input_data.read_data_sets(FLAGS.data_dir,
                                      one_hot=True,
                                      fake_data=FLAGS.fake_data)

    def feed_dict(train):
        if train or FLAGS.fake_data:
            xs, ys = mnist.train.next_batch(FLAGS.train_batch_size,
                                            fake_data=FLAGS.fake_data)
        else:
            xs, ys = mnist.test.images, mnist.test.labels
        return {x: xs, y_: ys}

    sess = tf.InteractiveSession()

    # Create the MNIST neural network graph.

    # Input placeholders.
    with tf.name_scope("input"):
        x = tf.placeholder(
            tf.float32, [None, IMAGE_SIZE * IMAGE_SIZE], name="x-input")
        y_ = tf.placeholder(tf.float32, [None, NUM_LABELS], name="y-input")

    def weight_variable(shape):
        """Create a weight variable with appropriate initialization."""
        initial = tf.truncated_normal(shape, stddev=0.1, seed=RAND_SEED)
        return tf.Variable(initial)

    def bias_variable(shape):
        """Create a bias variable with appropriate initialization."""
        initial = tf.constant(0.1, shape=shape)
        return tf.Variable(initial)

    def nn_layer(input_tensor, input_dim, output_dim, layer_name, act=tf.nn.relu):
        """Reusable code for making a simple neural net layer."""
        # Adding a name scope ensures logical grouping of the layers in the graph.
        with tf.name_scope(layer_name):
            # This Variable will hold the state of the weights for the layer
            with tf.name_scope("weights"):
                weights = weight_variable([input_dim, output_dim])
            with tf.name_scope("biases"):
                biases = bias_variable([output_dim])
            with tf.name_scope("Wx_plus_b"):
                preactivate = tf.matmul(input_tensor, weights) + biases

            activations = act(preactivate)
            return activations

    hidden = nn_layer(x, IMAGE_SIZE**2, HIDDEN_SIZE, "hidden")
    logits = nn_layer(hidden, HIDDEN_SIZE, NUM_LABELS, "output", tf.identity)
    y = tf.nn.softmax(logits)

    with tf.name_scope("cross_entropy"):
        # The following line is the culprit of the bad numerical values that appear
        # during training of this graph. Log of zero gives inf, which is first seen
        # in the intermediate tensor "cross_entropy/Log:0" during the 4th run()
        # call. A multiplication of the inf values with zeros leads to nans,
        # which is first in "cross_entropy/mul:0".
        #
        # You can use the built-in, numerically-stable implementation to fix this
        # issue:
        #   diff = tf.nn.softmax_cross_entropy_with_logits(labels=y_, logits=logits)
        diff = -(y_ * tf.log(y))
        with tf.name_scope("total"):
            cross_entropy = tf.reduce_mean(diff)

    with tf.name_scope("train"):
        train_step = tf.train.AdamOptimizer(FLAGS.learning_rate).minimize(
            cross_entropy)

    with tf.name_scope("accuracy"):
        with tf.name_scope("correct_prediction"):
            correct_prediction = tf.equal(tf.argmax(y, 1), tf.argmax(y_, 1))
        with tf.name_scope("accuracy"):
            accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))

    sess.run(tf.global_variables_initializer())

    if FLAGS.debug and FLAGS.tensorboard_debug_address:
        raise ValueError(
            "The --debug and --tensorboard_debug_address flags are mutually "
            "exclusive.")
    if FLAGS.debug:
        # config_file_path = (tempfile.mktemp(".tfdbg_config")
        #                     if FLAGS.use_random_config_path else None)
        sess = tf_debug.LocalCLIDebugWrapperSession(
            sess,
            ui_type=FLAGS.ui_type)
        sess.add_tensor_filter("has_inf_or_nan", tf_debug.has_inf_or_nan)
    elif FLAGS.tensorboard_debug_address:
        sess = tf_debug.TensorBoardDebugWrapperSession(
            sess, FLAGS.tensorboard_debug_address)

    # At this point, sess is a debug wrapper around the actual Session if
    # FLAGS.debug is true. In that case, calling run() will launch the CLI.
    for i in range(FLAGS.max_steps):
        acc = sess.run(accuracy, feed_dict=feed_dict(False))
        print("Accuracy at step %d: %s" % (i, acc))

        sess.run(train_step, feed_dict=feed_dict(True))


if __name__ == "__main__":
    parser = argparse.ArgumentParser()
    parser.register("type", "bool", lambda v: v.lower() == "true")
    parser.add_argument(
        "--max_steps",
        type=int,
        default=100,
        help="Number of steps to run trainer.")
    parser.add_argument(
        "--train_batch_size",
        type=int,
        default=100,
        help="Batch size used during training.")
    parser.add_argument(
        "--learning_rate",
        type=float,
        default=0.025,
        help="Initial learning rate.")
    parser.add_argument(
        "--data_dir",
        type=str,
        default="/tmp/mnist_data",
        help="Directory for storing data")
    parser.add_argument(
        "--ui_type",
        type=str,
        default="curses",
        help="Command-line user interface type (curses | readline)")
    parser.add_argument(
        "--fake_data",
        type="bool",
        nargs="?",
        const=True,
        default=False,
        help="Use fake MNIST data for unit testing")
    parser.add_argument(
        "--debug",
        type="bool",
        nargs="?",
        const=True,
        default=False,
        help="Use debugger to track down bad values during training. "
             "Mutually exclusive with the --tensorboard_debug_address flag.")
    parser.add_argument(
        "--tensorboard_debug_address",
        type=str,
        default=None,
        help="Connect to the TensorBoard Debugger Plugin backend specified by "
             "the gRPC address (e.g., localhost:1234). Mutually exclusive with the "
             "--debug flag.")
    parser.add_argument(
        "--use_random_config_path",
        type="bool",
        nargs="?",
        const=True,
        default=False,
        help="""If set, set config file path to a random file in the temporary
        directory.""")
    FLAGS, unparsed = parser.parse_known_args()
    with tf.Graph().as_default():
        tf.app.run(main=main, argv=[sys.argv[0]] + unparsed)
```

Let's first run the script directly, without the debugger:

```shell
python debug_mnist.py
```

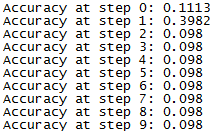

After 10 training steps, the results are as follows:

As you can see, accuracy rises after the first training step but then stays at a low level.

Next, let's debug this with the TensorFlow Debugger. Only the following 3 lines need to be added to the original file:

```python
from tensorflow.python import debug as tf_debug  # import the debug module

# Put these two lines right after sess.run(tf.global_variables_initializer()):
sess = tf_debug.LocalCLIDebugWrapperSession(sess, ui_type=FLAGS.ui_type)
sess.add_tensor_filter("has_inf_or_nan", tf_debug.has_inf_or_nan)
```

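The added code does two things. It wraps the session in a debugger session. It also registers a tensor filter named has_inf_or_nan, which checks whether any intermediate tensor in the graph contains nan or inf values.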
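To make the filter mechanism concrete, here is a rough NumPy sketch of a predicate in the same spirit as `tf_debug.has_inf_or_nan`. It is not the library's source. The name `my_has_inf_or_nan` is hypothetical. The sketch assumes that tfdbg passes each filter a `datum` plus the dumped tensor value as a NumPy array, the same `(datum, tensor)` signature used by the `my_filter_callable` example later in this post.

```python
import numpy as np


def my_has_inf_or_nan(datum, tensor):
    """Hypothetical stand-in for tf_debug.has_inf_or_nan (not the library code).

    `datum` (tensor metadata) is ignored; only the dumped value is inspected.
    """
    _ = datum
    # Skip anything that is not a NumPy array.
    if not isinstance(tensor, np.ndarray):
        return False
    # Only inexact dtypes (float/complex) can hold inf or nan.
    if not np.issubdtype(tensor.dtype, np.inexact):
        return False
    return bool(np.any(~np.isfinite(tensor)))


print(my_has_inf_or_nan(None, np.array([1.0, np.nan])))  # True
print(my_has_inf_or_nan(None, np.array([1, 2])))         # False
```

Registering it would look like `sess.add_tensor_filter("my_has_inf_or_nan", my_has_inf_or_nan)`. After that, `run -f my_has_inf_or_nan` should stop on the same float tensors as the built-in filter.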

Now let's turn on debug mode to find out why accuracy does not improve. First run:

```shell
python debug_mnist.py --debug=True
```

This opens the debugger interface, shown below:

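This is the run-start UI. At the `tfdbg>` prompt you can type interactive commands. For example, `run` (or its abbreviation `r`) proceeds with the run and brings up the run-end UI, shown below: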

The figure above shows no abnormal values. Next, the following command runs 10 times in a row:

```text
tfdbg> run -t 10
```

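The following command keeps running until the first nan or inf value appears in the graph. This is similar to setting a breakpoint in a conventional debugger:

```text
tfdbg> run -f has_inf_or_nan
```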
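The breakpoint-like behaviour of `run -f` can be pictured with a toy model: keep performing runs, and stop at the first run in which any dumped tensor satisfies the filter. The sketch below is only a mental model built on that assumption, not how tfdbg is implemented. `first_run_matching` and the per-run dump dictionaries are hypothetical, and the tensor values are synthetic.

```python
import numpy as np


def first_run_matching(runs, tensor_filter):
    """Toy model of `run -f <filter>` (hypothetical, not tfdbg internals).

    `runs` is a list of {tensor_name: value} dicts, one per Session.run().
    Returns (0-based run index, matching tensor names) for the first run
    that has at least one match, or None if no run matches.
    """
    for run_index, dumps in enumerate(runs):
        hits = [name for name, value in dumps.items() if tensor_filter(None, value)]
        if hits:
            return run_index, hits
    return None


def has_nonfinite(datum, tensor):
    return bool(np.any(~np.isfinite(tensor)))


# Synthetic dumps for three Session.run() calls (made-up values).
runs = [
    {"Softmax:0": np.array([0.6, 0.4]), "cross_entropy/Log:0": np.log([0.6, 0.4])},
    {"Softmax:0": np.array([0.9, 0.1]), "cross_entropy/Log:0": np.log([0.9, 0.1])},
    {"Softmax:0": np.array([1.0, 0.0]), "cross_entropy/Log:0": np.array([0.0, -np.inf])},
]
print(first_run_matching(runs, has_nonfinite))  # (2, ['cross_entropy/Log:0'])
```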

The result:

Based on the figure above, take a closer look at the `Softmax:0` tensor with the `pt` (print_tensor) command:

```text
tfdbg> pt Softmax:0
```

The result:

Use the `-t` flag of the `ni` (node_info) command to trace back to where the node was created:

```text
tfdbg> ni -t Softmax:0
```

The traceback is shown below:

The traceback points to line 104, `y = tf.nn.softmax(logits)`. The values of `logits` are problematic as well.
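One plausible way for softmax to produce the bad values downstream is underflow. In float32 (the dtype of this graph's placeholders), `exp()` of a sufficiently negative shifted logit rounds to exactly 0. The NumPy sketch below assumes a common max-subtracted softmax formulation and uses made-up logits. It does not reproduce TensorFlow's kernel.

```python
import numpy as np


def softmax(logits):
    """Softmax with the usual max subtraction (prevents overflow, not underflow)."""
    shifted = logits - np.max(logits, axis=-1, keepdims=True)
    exp = np.exp(shifted)
    return exp / np.sum(exp, axis=-1, keepdims=True)


# Made-up float32 logits with a large spread between classes.
logits = np.array([[30.0, -80.0, 5.0]], dtype=np.float32)
y = softmax(logits)

with np.errstate(divide="ignore"):
    log_y = np.log(y)  # log(0) evaluates to -inf; the warning is silenced

print(y)      # the middle probability has underflowed to exactly 0.0
print(log_y)  # the middle entry is -inf
```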

Next, inspect the `cross_entropy/Log:0` tensor:

```text
tfdbg> pt cross_entropy/Log:0
```

The result:

Again, use the `-t` flag of `ni` to trace it back:

```text
tfdbg> ni -t cross_entropy/Log
```

The traceback:

The traceback shows that line 117 of debug_mnist.py is the culprit:

```python
diff = -(y_ * tf.log(y))
```

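The `y` in this line is produced by the earlier `y = tf.nn.softmax(logits)`, so the bad values originate there. When a softmax output is exactly 0, `tf.log(y)` returns -inf. Multiplying that -inf by the zeros in the one-hot `y_` then produces nan. The fix is to clip `y` before taking the log:

```python
diff = -(y_ * tf.log(tf.clip_by_value(y, 1e-8, 1.0)))
```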
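A minimal NumPy sketch shows why the original line produces nan and the clipped line does not. It assumes a one-hot label and a softmax output that has already underflowed to 0; both arrays are made up. `np.clip` stands in for `tf.clip_by_value`.

```python
import numpy as np

y_ = np.array([[1.0, 0.0]], dtype=np.float32)  # one-hot label (made up)
y = np.array([[1.0, 0.0]], dtype=np.float32)   # softmax output with an underflowed 0

with np.errstate(divide="ignore", invalid="ignore"):
    bad = -(y_ * np.log(y))                    # log(0) = -inf, then 0 * -inf = nan

good = -(y_ * np.log(np.clip(y, 1e-8, 1.0)))   # log(1e-8) is finite (about -18.4)

print(np.isnan(bad).any())      # True
print(np.isfinite(good).all())  # True
```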

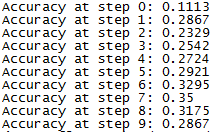

Running the modified code for 10 steps gives:

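Accuracy no longer hovers at a low value, and training behaves normally again. The accuracy is still not high, but that is likely due to factors such as the small number of training steps.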
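Clipping is one fix. The comment in the `cross_entropy` name scope of debug_mnist.py suggests another: `tf.nn.softmax_cross_entropy_with_logits`, which it describes as numerically stable. The NumPy sketch below illustrates the general log-sum-exp idea behind computing the loss directly from logits. It is not TensorFlow's implementation, and `naive_cross_entropy` / `stable_cross_entropy` are hypothetical names.

```python
import numpy as np


def naive_cross_entropy(labels, logits):
    """Mirrors the buggy graph: softmax first, then log (can give inf/nan)."""
    exp = np.exp(logits - np.max(logits, axis=-1, keepdims=True))
    probs = exp / np.sum(exp, axis=-1, keepdims=True)
    with np.errstate(divide="ignore", invalid="ignore"):
        return -np.sum(labels * np.log(probs), axis=-1)


def stable_cross_entropy(labels, logits):
    """Log-sum-exp form: log_softmax = shifted - log(sum(exp(shifted)))."""
    shifted = logits - np.max(logits, axis=-1, keepdims=True)
    log_softmax = shifted - np.log(np.sum(np.exp(shifted), axis=-1, keepdims=True))
    return -np.sum(labels * log_softmax, axis=-1)


logits = np.array([[0.0, -800.0]])  # made-up, extreme logits
labels = np.array([[0.0, 1.0]])     # the true class got probability ~0

print(naive_cross_entropy(labels, logits))   # [inf]
print(stable_cross_entropy(labels, logits))  # [800.]
```

With these made-up logits, the naive path returns inf. The log-sum-exp path never takes the log of an underflowed probability, so it returns a finite loss of 800.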

Finally, a brief discussion. Where does the has_inf_or_nan in `run -f has_inf_or_nan` come from, and can other filters be used?

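The name comes from the first argument of `sess.add_tensor_filter("has_inf_or_nan", tf_debug.has_inf_or_nan)`: the string "has_inf_or_nan" is the name under which the filter callable is registered.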

Other filters can be used too: you can build your own tensor filter. For example, the following code finds zero-valued scalar tensors:

```python
def my_filter_callable(datum, tensor):
    # A filter that detects zero-valued scalars.
    return len(tensor.shape) == 0 and tensor == 0.0

sess.add_tensor_filter('my_filter', my_filter_callable)
```

Once it is registered, use it with:

```text
tfdbg> run -f my_filter
```
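Note that `my_filter_callable` only matches scalars (`len(tensor.shape) == 0`). To catch whole tensors that are all zeros, such as a layer whose ReLU outputs have all died, a variant might look like the one below. `all_zero_filter` and the filter name `all_zero` are hypothetical. The sketch assumes the dumped value arrives as a NumPy array.

```python
import numpy as np


def all_zero_filter(datum, tensor):
    """Hypothetical tfdbg filter: matches numeric tensors of any shape
    whose elements are all zero."""
    _ = datum  # tensor metadata is unused in this sketch
    if not isinstance(tensor, np.ndarray) or tensor.size == 0:
        return False
    # Skip bool/string tensors; only numeric dtypes are checked.
    if not np.issubdtype(tensor.dtype, np.number):
        return False
    return bool(np.all(tensor == 0))


# Registered the same way as my_filter, then used via `run -f all_zero`:
# sess.add_tensor_filter("all_zero", all_zero_filter)
```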
