Liu Y, Zhong S H, Li W. Query-oriented multi-document summarization via unsupervised deep learning[C]// Twenty-Sixth AAAI Conference on Artificial Intelligence. AAAI Press, 2012:1699-1705.

Abstract

- Achieve the largest coverage of the documents' content.

- Concentrate distributed information into hidden units layer by layer.

- The whole deep architecture is fine-tuned by minimizing the information loss in reconstruction validation.

Related Work

- It is very difficult to bridge the gap between the semantic meanings of the documents and the basic textual units.

- The paper proposes a novel framework by referencing the architecture of the **human neocortex and the procedure of intelligent perception** via deep learning.

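Existing Models

- support vector machine (SVM); further reading: the support vector machine write-ups on CSDN and 博客园 (cnblogs)

- deep belief network (DBN)

This is the first paper to apply deep networks to query-oriented MDS (multi-document summarization).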

Paper Content

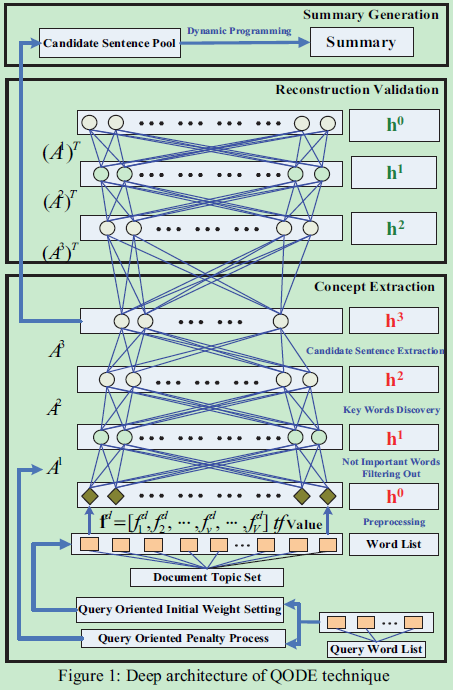

The framework has three parts: query-oriented concept extraction, reconstruction validation for global adjustment, and summary generation via dynamic programming.

Model

Deep Learning for Query-oriented Multidocuments Summarization

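- Dozens of cortical layers are involved in generating even the simplest lexical-semantic processing.

- Deep learning has two attractive characteristics:

  - The nonlinear structure of multiple hidden layers lets a deep model represent a complex problem very concisely. This fits summarization well, where as much information as possible must be packed into the allowed length.

  - Because most deep models learn by pairwise reconstruction between adjacent hidden layers, distributed information can be concentrated layer by layer even without supervision. This property pays off more on large datasets.

- Deep learning is applicable to most domains, e.g. image classification, image generation, and audio event classification.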

Deep Architecture

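- The feature vector is f_d = [f_d1, f_d2, …, f_dv, …, f_dV], where f_dv is the tf (term frequency) value in document d of the v-th word in the vocabulary.

- For the hidden layers, Restricted Boltzmann Machines (RBMs) are used as building blocks.

- The output is S = [s1, s2, s3, …, sT].

Further reading: "Restricted Boltzmann machine study notes" (very thorough).

An RBM is a two-layer recurrent neural network in which stochastic binary inputs and outputs are connected by symmetrically weighted connections. RBMs are used as the building blocks of deep models because the bottom-up connections can infer more compact high-level representations from low-level features, and the top-down connections can validate the compact representations that were generated. Except for the input layer, the parameter space of the deep architecture is randomly initialized. The initial parameters of the first RBM are also determined by the query words.

- In the concept extraction stage, three hidden layers H1, H2, and H3 are used to abstract the documents using a greedy layer-wise extraction algorithm.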

- Implementation:

  - H1 is used to filter out words that appear accidentally.

  - H2 is supposed to discover the key words.

  - H3 is used for candidate sentence extraction.

- The reconstruction validation part intends to reconstruct the data distribution by fine-tuning the whole deep architecture globally.

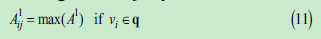
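To make the stacking idea concrete, here is a minimal sketch of tf feature vectors fed into three RBMs trained greedily, one layer at a time, with one-step contrastive divergence (CD-1). It is an illustration under stated assumptions, not the paper's implementation: the helper names (`tf_vector`, `RBM`, `train_stack`), the layer sizes, the learning rate, the use of CD-1, and the scaling of tf values into [0, 1] are all choices made here. The parameter names `A`, `b`, `c` follow the notes; which of `b` and `c` is the hidden bias is assumed.

```python
import math
import random

def tf_vector(tokens, vocab):
    """Term-frequency vector of one document over a fixed vocabulary."""
    return [tokens.count(word) for word in vocab]

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

class RBM:
    """Tiny RBM: weights A, hidden biases b, visible biases c (naming assumed)."""
    def __init__(self, n_visible, n_hidden, rng):
        self.rng = rng
        self.A = [[rng.gauss(0.0, 0.01) for _ in range(n_hidden)]
                  for _ in range(n_visible)]
        self.b = [0.0] * n_hidden
        self.c = [0.0] * n_visible

    def up(self, v):
        """Bottom-up pass: hidden activation probabilities given visible units."""
        return [sigmoid(self.b[j] + sum(v[i] * self.A[i][j] for i in range(len(v))))
                for j in range(len(self.b))]

    def down(self, h):
        """Top-down pass: reconstruct visible units from hidden units."""
        return [sigmoid(self.c[i] + sum(h[j] * self.A[i][j] for j in range(len(h))))
                for i in range(len(self.c))]

    def cd1_step(self, v0, lr=0.1):
        """One CD-1 update on a single example."""
        h0 = self.up(v0)
        h0_sample = [1.0 if self.rng.random() < p else 0.0 for p in h0]
        v1 = self.down(h0_sample)
        h1 = self.up(v1)
        for i in range(len(v0)):
            for j in range(len(h0)):
                self.A[i][j] += lr * (v0[i] * h0[j] - v1[i] * h1[j])
            self.c[i] += lr * (v0[i] - v1[i])
        for j in range(len(h0)):
            self.b[j] += lr * (h0[j] - h1[j])

def train_stack(data, layer_sizes, epochs=50, seed=0):
    """Greedy layer-wise training: each RBM is trained on the layer below's output."""
    rng = random.Random(seed)
    rbms, inputs = [], data
    for n_hidden in layer_sizes:
        rbm = RBM(len(inputs[0]), n_hidden, rng)
        for _ in range(epochs):
            for v in inputs:
                rbm.cd1_step(v)
        rbms.append(rbm)
        inputs = [rbm.up(v) for v in inputs]  # becomes the next layer's input
    return rbms

vocab = ["deep", "learning", "summary", "query", "cat"]
docs = [["deep", "learning", "summary", "deep"], ["query", "summary", "summary"]]
tf = [tf_vector(d, vocab) for d in docs]
peak = max(max(row) for row in tf)
data = [[x / peak for x in row] for row in tf]  # assumption: scale tf into [0, 1]
rbms = train_stack(data, layer_sizes=[4, 3, 2])  # stands in for H1, H2, H3
```

Each trained layer only ever sees the output of the layer below it, which is what "greedy layer-wise" means here; nothing in this sketch adjusts all layers jointly.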

Query-oriented Concept Extraction

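- To integrate the query information into document summarization, two distinct processes are used: query-oriented initial weight setting and query-oriented penalty processing. In classical neural networks, the initial weights are all drawn at random from a Gaussian distribution with parameters (0, 0.01).

- In contrast, the influence of the query is strengthened here. After the random initialization, a node in H0 whose word vi belongs to the query gets query-oriented initial weights.

In the penalty process, reconstruction errors on query words are penalized more heavily than errors on other words.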

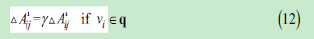
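The two query-oriented steps can be sketched as follows. This is a hedged illustration only: the notes do not give the exact update rule, so the multiplicative `boost` and `penalty` factors, the function names, and the reading of 0.01 as a standard deviation are assumptions made for the example.

```python
import random

def init_weights(vocab, query_words, n_hidden, boost=2.0, seed=0):
    """Random Gaussian initialization, then strengthen rows of query words.

    Multiplying by `boost` is a hypothetical rule; 0.01 is treated as the
    standard deviation, which the notes do not specify.
    """
    rng = random.Random(seed)
    weights = [[rng.gauss(0.0, 0.01) for _ in range(n_hidden)] for _ in vocab]
    for i, word in enumerate(vocab):
        if word in query_words:
            weights[i] = [w * boost for w in weights[i]]
    return weights

def weighted_reconstruction_error(original, reconstructed, vocab, query_words,
                                  penalty=2.0):
    """Squared reconstruction error where query words count `penalty` times more."""
    total = 0.0
    for word, x, x_hat in zip(vocab, original, reconstructed):
        factor = penalty if word in query_words else 1.0
        total += factor * (x - x_hat) ** 2
    return total

vocab = ["deep", "summary", "cat"]
query = {"summary"}
weights = init_weights(vocab, query, n_hidden=2)
error = weighted_reconstruction_error([1, 1, 1], [0, 0, 0], vocab, query)
```

With `penalty=2.0`, a reconstruction mistake on a query word costs twice as much as the same mistake on any other word, so training is pushed toward preserving the query terms.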

- AF: importance matrix

DP (dynamic programming) is used to maximize the query-oriented importance of the generated summary under the summary length constraint.

Reconstruction Validation for Global Adjustment

- A greedy layer-by-layer algorithm is used to learn a deep model for concept extraction. This algorithm has good global search ability.

- Backpropagation through the whole deep model is then used to fine-tune the parameters [A, b, c] for optimal reconstruction. Backpropagation is good at searching for a local optimum.

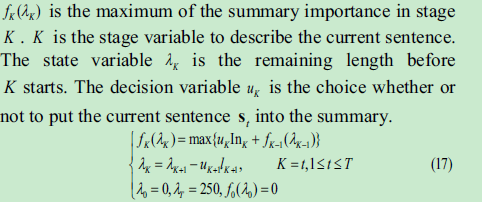
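As a rough illustration of fine-tuning for reconstruction, the sketch below runs backpropagation on a single encode/decode pair with tied weights and a squared reconstruction error. The paper fine-tunes the whole deep model; the single layer, the tied weights, the loss, the learning rate, and the function names are simplifying assumptions made here.

```python
import math
import random

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def reconstruct(v, A, b, c):
    """Encode v into h, then decode h back into v_hat using the same weights A."""
    h = [sigmoid(b[j] + sum(v[i] * A[i][j] for i in range(len(v))))
         for j in range(len(b))]
    v_hat = [sigmoid(c[i] + sum(h[j] * A[i][j] for j in range(len(h))))
             for i in range(len(c))]
    return h, v_hat

def loss(data, A, b, c):
    """Total squared reconstruction error over the dataset."""
    return sum(0.5 * (x_hat - x) ** 2
               for v in data
               for x, x_hat in zip(v, reconstruct(v, A, b, c)[1]))

def finetune(data, A, b, c, lr=0.5, epochs=200):
    """Stochastic gradient descent on the reconstruction error (updates in place)."""
    for _ in range(epochs):
        for v in data:
            h, v_hat = reconstruct(v, A, b, c)
            # Error signal at the reconstructed layer.
            d_out = [(v_hat[i] - v[i]) * v_hat[i] * (1 - v_hat[i])
                     for i in range(len(v))]
            # Error signal propagated back to the hidden layer.
            d_hid = [sum(d_out[i] * A[i][j] for i in range(len(v))) * h[j] * (1 - h[j])
                     for j in range(len(h))]
            for i in range(len(v)):
                for j in range(len(h)):
                    # Tied weights: decoder and encoder gradients add up.
                    A[i][j] -= lr * (d_out[i] * h[j] + d_hid[j] * v[i])
                c[i] -= lr * d_out[i]
            for j in range(len(h)):
                b[j] -= lr * d_hid[j]
    return A, b, c

rng = random.Random(0)
data = [[1.0, 0.0, 1.0, 0.0], [0.0, 1.0, 0.0, 1.0]]
A = [[rng.gauss(0.0, 0.01) for _ in range(2)] for _ in range(4)]
b, c = [0.0, 0.0], [0.0] * 4
before = loss(data, A, b, c)
finetune(data, A, b, c)
after = loss(data, A, b, c)
print(before, after)  # the reconstruction error should drop after fine-tuning
```

In the full model the starting point would be the parameters found by the greedy layer-wise stage rather than a fresh random initialization.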

Summary Generation via Dynamic Programming

- DP is used to maximize the importance of the summary under the length constraint.

State transition equation:
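The notes do not reproduce the state transition equation. A minimal sketch, assuming the selection is a standard 0/1 knapsack over candidate sentences (which may differ from the paper's exact formulation), uses the recurrence best[i][l] = max(best[i-1][l], best[i-1][l - len_i] + score_i). The function name, the integer sentence lengths, and the importance scores below are hypothetical.

```python
def select_sentences(lengths, scores, max_len):
    """Pick sentences maximizing total importance with total length <= max_len."""
    n = len(lengths)
    # best[i][l]: best total score using the first i sentences within budget l.
    best = [[0.0] * (max_len + 1) for _ in range(n + 1)]
    for i in range(1, n + 1):
        length, score = lengths[i - 1], scores[i - 1]
        for l in range(max_len + 1):
            best[i][l] = best[i - 1][l]  # skip sentence i
            if length <= l and best[i - 1][l - length] + score > best[i][l]:
                best[i][l] = best[i - 1][l - length] + score  # take sentence i
    # Walk back through the table to recover which sentences were taken.
    chosen, l = [], max_len
    for i in range(n, 0, -1):
        if best[i][l] != best[i - 1][l]:
            chosen.append(i - 1)
            l -= lengths[i - 1]
    chosen.reverse()
    return best[n][max_len], chosen

total, picked = select_sentences(lengths=[3, 4, 2], scores=[4.0, 5.0, 3.0], max_len=6)
print(total, picked)  # 8.0 [1, 2]
```

Here the second and third sentences fit exactly in the budget of 6 and beat every other feasible combination.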

Conclusion

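The paper proposes a novel deep learning model for query-oriented multi-document summarization. The framework inherits the strong extraction ability of deep learning and effectively derives the important concepts. Empirical validation on three standard datasets not only demonstrates the distinguishing extraction ability of QODE, but also clearly shows the authors' intention of providing human-like natural language processing for multi-document summarization.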
