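tritonserver study notes, part 2: building tritonserver

tritonserver study notes, part 3: the tritonserver execution flow

tritonserver study notes, part 6: custom C++ and Python backends in practice

tritonserver study notes, part 8: redis_caches in practice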

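1. Protocols supported by tritonserver

Once tritonserver is serving a model, clients can reach the deployed model over HTTP or gRPC. For gRPC, the system uses the asynchronous mode, mainly for the following reasons:

High concurrency: gRPC's asynchronous mode lets the server handle multiple client requests at the same time, so waiting on the response to one request does not block the others. This lets TritonServer make full use of system resources and handle a large volume of model inference requests more efficiently.

Resource utilization: in asynchronous mode the server does not create a separate thread or process for each request. Requests are placed on a queue and handled by an event loop, which reduces resource overhead and lets TritonServer handle more requests with limited resources.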

2. The gRPC asynchronous mode

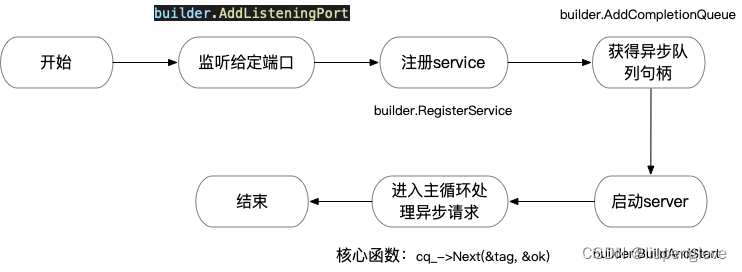

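gRPC uses the CompletionQueue API for asynchronous operations. The basic workflow is:

- Create a CompletionQueue and bind it to the RPC calls.

- Identify each read or write operation with a unique void* pointer (the tag).

- Register the handler, usually with a pointer to a class object as the unique tag.

- Call CompletionQueue::Next, which blocks until the next event arrives.

- When a request arrives, use the tag pointer to dispatch to the code that handles it.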
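The workflow above can be tried without a gRPC installation. The following is a minimal sketch, assuming a hypothetical `MockCompletionQueue` that only imitates the blocking `Next(&tag, &ok)` behaviour of the real queue; `Handler` and `DrainAndDispatch` are likewise illustrative names, not part of gRPC or Triton.

```cpp
#include <cassert>
#include <condition_variable>
#include <mutex>
#include <queue>

// Hypothetical stand-in for grpc::CompletionQueue: it stores (tag, ok) events
// and hands them out one at a time through a blocking Next().
class MockCompletionQueue {
 public:
  // Post an event. In gRPC the runtime does this when an operation completes.
  void Push(void* tag, bool ok) {
    {
      std::lock_guard<std::mutex> lock(mu_);
      events_.push({tag, ok});
    }
    cv_.notify_one();
  }

  // After Shutdown(), Next() drains what is left and then returns false.
  void Shutdown() {
    {
      std::lock_guard<std::mutex> lock(mu_);
      shutdown_ = true;
    }
    cv_.notify_all();
  }

  // Block until an event is available; return false once shut down and empty.
  bool Next(void** tag, bool* ok) {
    std::unique_lock<std::mutex> lock(mu_);
    cv_.wait(lock, [this] { return shutdown_ || !events_.empty(); });
    if (events_.empty()) return false;
    *tag = events_.front().tag;
    *ok = events_.front().ok;
    events_.pop();
    return true;
  }

 private:
  struct Event {
    void* tag;
    bool ok;
  };
  std::mutex mu_;
  std::condition_variable cv_;
  std::queue<Event> events_;
  bool shutdown_ = false;
};

// A handler object whose address serves as the tag.
struct Handler {
  int calls = 0;
  void Proceed() { ++calls; }
};

// Drain the queue, dispatching each event to the object its tag points at.
// Returns the number of events taken from the queue.
int DrainAndDispatch(MockCompletionQueue& cq) {
  int handled = 0;
  void* tag = nullptr;
  bool ok = false;
  while (cq.Next(&tag, &ok)) {
    if (ok) static_cast<Handler*>(tag)->Proceed();
    ++handled;
  }
  return handled;
}
```

Pushing the address of a `Handler` and later casting the tag back to `Handler*` is the same round trip the real server makes with its call objects; only the queue itself is faked here.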

The main startup flow of the gRPC asynchronous mode, taken from the gRPC example code:

```cpp
void Run(uint16_t port) {
  std::string server_address = absl::StrFormat("0.0.0.0:%d", port);

  ServerBuilder builder;
  // Listen on the given address without any authentication mechanism.
  builder.AddListeningPort(server_address, grpc::InsecureServerCredentials());
  // Register "service_" as the instance through which we'll communicate with
  // clients. In this case it corresponds to an *asynchronous* service.
  builder.RegisterService(&service_);
  // Get hold of the completion queue used for the asynchronous communication
  // with the gRPC runtime.
  cq_ = builder.AddCompletionQueue();
  // Finally assemble the server.
  server_ = builder.BuildAndStart();
  std::cout << "Server listening on " << server_address << std::endl;

  // Proceed to the server's main loop.
  HandleRpcs();
}
```

Registering the handler:

```cpp
service_->RequestSayHello(&ctx_, &request_, &responder_, cq_, cq_, this);
```

Here this is the unique tag: a pointer to the object itself.

Note also that the completion queue is obtained from builder.AddCompletionQueue, and a single server can have more than one. Triton uses three completion queues, one each for ordinary requests, inference requests, and streaming inference requests.
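In gRPC, the completion queue passed when a call is requested (the two `cq_` arguments of `RequestSayHello` above) decides where that call's events are delivered, so giving each request category its own queue keeps the categories apart. The sketch below illustrates only that separation, using plain FIFOs instead of gRPC queues; `RequestKind`, `Request`, and `QueueSet` are hypothetical names. That separation keeps a backlog of one kind from delaying another is an assumption about the motivation, which the source does not state.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <queue>
#include <string>

// Request categories mirroring the three kinds named in the text.
enum class RequestKind { kCommon = 0, kInfer = 1, kStreamInfer = 2 };

struct Request {
  RequestKind kind;
  std::string payload;
};

// Hypothetical container holding one FIFO per request kind.
class QueueSet {
 public:
  // Route the request to the queue that matches its kind.
  void Enqueue(const Request& request) {
    queues_[Index(request.kind)].push(request);
  }

  // Number of requests waiting in the queue of the given kind.
  std::size_t Pending(RequestKind kind) const {
    return queues_[Index(kind)].size();
  }

  // Pop the oldest request of one kind; returns false if that queue is empty.
  bool Dequeue(RequestKind kind, Request* out) {
    std::queue<Request>& q = queues_[Index(kind)];
    if (q.empty()) return false;
    *out = q.front();
    q.pop();
    return true;
  }

 private:
  static std::size_t Index(RequestKind kind) {
    return static_cast<std::size_t>(kind);
  }
  std::array<std::queue<Request>, 3> queues_;
};
```

With this layout a consumer that only drains `RequestKind::kCommon` sees a health check immediately, even when inference requests are already waiting in their own queue.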

Complete server-side example code:

```cpp
/*
 *
 * Copyright 2015 gRPC authors.
 *
 * Licensed under the Apache License, Version 2.0 (the "License");
 * you may not use this file except in compliance with the License.
 * You may obtain a copy of the License at
 *
 *     http://www.apache.org/licenses/LICENSE-2.0
 *
 * Unless required by applicable law or agreed to in writing, software
 * distributed under the License is distributed on an "AS IS" BASIS,
 * WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
 * See the License for the specific language governing permissions and
 * limitations under the License.
 *
 */

#include <iostream>
#include <memory>
#include <string>
#include <thread>

#include "absl/flags/flag.h"
#include "absl/flags/parse.h"
#include "absl/strings/str_format.h"

#include <grpc/support/log.h>
#include <grpcpp/grpcpp.h>

#ifdef BAZEL_BUILD
#include "examples/protos/helloworld.grpc.pb.h"
#else
#include "helloworld.grpc.pb.h"
#endif

ABSL_FLAG(uint16_t, port, 50051, "Server port for the service");

using grpc::Server;
using grpc::ServerAsyncResponseWriter;
using grpc::ServerBuilder;
using grpc::ServerCompletionQueue;
using grpc::ServerContext;
using grpc::Status;
using helloworld::Greeter;
using helloworld::HelloReply;
using helloworld::HelloRequest;

class ServerImpl final {
 public:
  ~ServerImpl() {
    server_->Shutdown();
    // Always shutdown the completion queue after the server.
    cq_->Shutdown();
  }

  // There is no shutdown handling in this code.
  void Run(uint16_t port) {
    std::string server_address = absl::StrFormat("0.0.0.0:%d", port);

    ServerBuilder builder;
    // Listen on the given address without any authentication mechanism.
    builder.AddListeningPort(server_address, grpc::InsecureServerCredentials());
    // Register "service_" as the instance through which we'll communicate with
    // clients. In this case it corresponds to an *asynchronous* service.
    builder.RegisterService(&service_);
    // Get hold of the completion queue used for the asynchronous communication
    // with the gRPC runtime.
    cq_ = builder.AddCompletionQueue();
    // Finally assemble the server.
    server_ = builder.BuildAndStart();
    std::cout << "Server listening on " << server_address << std::endl;

    // Proceed to the server's main loop.
    HandleRpcs();
  }

 private:
  // Class encompassing the state and logic needed to serve a request.
  class CallData {
   public:
    // Take in the "service" instance (in this case representing an asynchronous
    // server) and the completion queue "cq" used for asynchronous communication
    // with the gRPC runtime.
    CallData(Greeter::AsyncService* service, ServerCompletionQueue* cq)
        : service_(service), cq_(cq), responder_(&ctx_), status_(CREATE) {
      // Invoke the serving logic right away.
      Proceed();
    }

    void Proceed() {
      if (status_ == CREATE) {
        // Make this instance progress to the PROCESS state.
        status_ = PROCESS;

        // As part of the initial CREATE state, we *request* that the system
        // start processing SayHello requests. In this request, "this" acts as
        // the tag uniquely identifying the request (so that different CallData
        // instances can serve different requests concurrently), in this case
        // the memory address of this CallData instance.
        service_->RequestSayHello(&ctx_, &request_, &responder_, cq_, cq_,
                                  this);
      } else if (status_ == PROCESS) {
        // Spawn a new CallData instance to serve new clients while we process
        // the one for this CallData. The instance will deallocate itself as
        // part of its FINISH state.
        new CallData(service_, cq_);

        // The actual processing.
        std::string prefix("Hello ");
        reply_.set_message(prefix + request_.name());

        // And we are done! Let the gRPC runtime know we've finished, using the
        // memory address of this instance as the uniquely identifying tag for
        // the event.
        status_ = FINISH;
        responder_.Finish(reply_, Status::OK, this);
      } else {
        GPR_ASSERT(status_ == FINISH);
        // Once in the FINISH state, deallocate ourselves (CallData).
        delete this;
      }
    }

   private:
    // The means of communication with the gRPC runtime for an asynchronous
    // server.
    Greeter::AsyncService* service_;
    // The producer-consumer queue used for asynchronous server notifications.
    ServerCompletionQueue* cq_;
    // Context for the rpc, allowing to tweak aspects of it such as the use
    // of compression, authentication, as well as to send metadata back to the
    // client.
    ServerContext ctx_;

    // What we get from the client.
    HelloRequest request_;
    // What we send back to the client.
    HelloReply reply_;

    // The means to get back to the client.
    ServerAsyncResponseWriter<HelloReply> responder_;

    // Let's implement a tiny state machine with the following states.
    enum CallStatus { CREATE, PROCESS, FINISH };
    CallStatus status_;  // The current serving state.
  };

  // This can be run in multiple threads if needed.
  void HandleRpcs() {
    // Spawn a new CallData instance to serve new clients.
    new CallData(&service_, cq_.get());
    void* tag;  // uniquely identifies a request.
    bool ok;
    while (true) {
      // Block waiting to read the next event from the completion queue. The
      // event is uniquely identified by its tag, which in this case is the
      // memory address of a CallData instance.
      // The return value of Next should always be checked. This return value
      // tells us whether there is any kind of event or cq_ is shutting down.
      GPR_ASSERT(cq_->Next(&tag, &ok));
      GPR_ASSERT(ok);
      static_cast<CallData*>(tag)->Proceed();
    }
  }

  std::unique_ptr<ServerCompletionQueue> cq_;
  Greeter::AsyncService service_;
  std::unique_ptr<Server> server_;
};

int main(int argc, char** argv) {
  absl::ParseCommandLine(argc, argv);
  ServerImpl server;
  server.Run(absl::GetFlag(FLAGS_port));

  return 0;
}
```

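Example code on GitHub: https://github.com/grpc/grpc/tree/master/examples/cpp/helloworld

The example above only shows the basic usage of gRPC's asynchronous mode. Handling several kinds of requests calls for a more careful design, and Triton's design is well worth studying.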
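The CREATE, PROCESS, FINISH life cycle of `CallData` in the example can be traced without gRPC. The following is a minimal single-threaded sketch under stated assumptions: `FakeRuntime`, `SimulateIncomingCall`, and `DrainEvents` are hypothetical helpers that stand in for the gRPC runtime and the `HandleRpcs` loop, and the log strings exist only to make the transitions visible.

```cpp
#include <cassert>
#include <deque>
#include <string>
#include <vector>

// Hypothetical replacement for the gRPC runtime plus its completion queue.
struct FakeRuntime {
  std::deque<void*> waiting;     // tags registered for a future call
  std::deque<void*> events;      // completed events ready for the loop
  std::vector<std::string> log;  // trace of state transitions
  int live = 0;                  // number of CallData objects alive
};

class CallData {
 public:
  explicit CallData(FakeRuntime* rt) : rt_(rt) {
    ++rt_->live;
    Proceed();  // run the CREATE step right away, as in the example
  }
  ~CallData() { --rt_->live; }

  void Proceed() {
    if (status_ == CREATE) {
      // Stands in for service_->RequestSayHello(..., this).
      status_ = PROCESS;
      rt_->waiting.push_back(this);
      rt_->log.push_back("registered");
    } else if (status_ == PROCESS) {
      // Replace ourselves so the next call finds a CallData waiting.
      new CallData(rt_);
      rt_->log.push_back("processed");
      status_ = FINISH;
      // Stands in for responder_.Finish(reply_, Status::OK, this).
      rt_->events.push_back(this);
    } else {
      rt_->log.push_back("finished");
      delete this;
    }
  }

 private:
  enum CallStatus { CREATE, PROCESS, FINISH };
  FakeRuntime* rt_;
  CallStatus status_ = CREATE;
};

// Simulate one client call arriving: the oldest registered tag becomes an event.
void SimulateIncomingCall(FakeRuntime* rt) {
  rt->events.push_back(rt->waiting.front());
  rt->waiting.pop_front();
}

// Dispatch events until none remain, as HandleRpcs does with cq_->Next.
void DrainEvents(FakeRuntime* rt) {
  while (!rt->events.empty()) {
    void* tag = rt->events.front();
    rt->events.pop_front();
    static_cast<CallData*>(tag)->Proceed();
  }
}
```

After one simulated call, the first object has gone through all three states and deleted itself, while its replacement is still registered and waiting. That is why the real example always has exactly one idle `CallData` per handler loop.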

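3. Triton's gRPC asynchronous mode design

Triton uses three completion queues, for ordinary requests, inference requests, and streaming inference requests:

```cpp
std::unique_ptr<::grpc::ServerCompletionQueue> common_cq_;              // ordinary requests
std::unique_ptr<::grpc::ServerCompletionQueue> model_infer_cq_;         // inference requests
std::unique_ptr<::grpc::ServerCompletionQueue> model_stream_infer_cq_;  // streaming inference requests
```

The code that starts the gRPC service lives in the main function of the server repository: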

TRITONSERVER_Error*

StartGrpcService(

std::unique_ptr<triton::server::grpc::Server>* service,

const std::shared_ptr<TRITONSERVER_Server>& server,

triton::server::TraceManager* trace_manager,

const std::shared_ptr<triton::server::SharedMemoryManager>& shm_manager)

{

TRITONSERVER_Error* err = triton::server::grpc::Server::Create(

server, trace_manager, shm_manager, g_triton_params.grpc_options_,

service);

if (err == nullptr) {

err = (*service)->Start();

}

if (err != nullptr) {

service->reset();

}

return err;

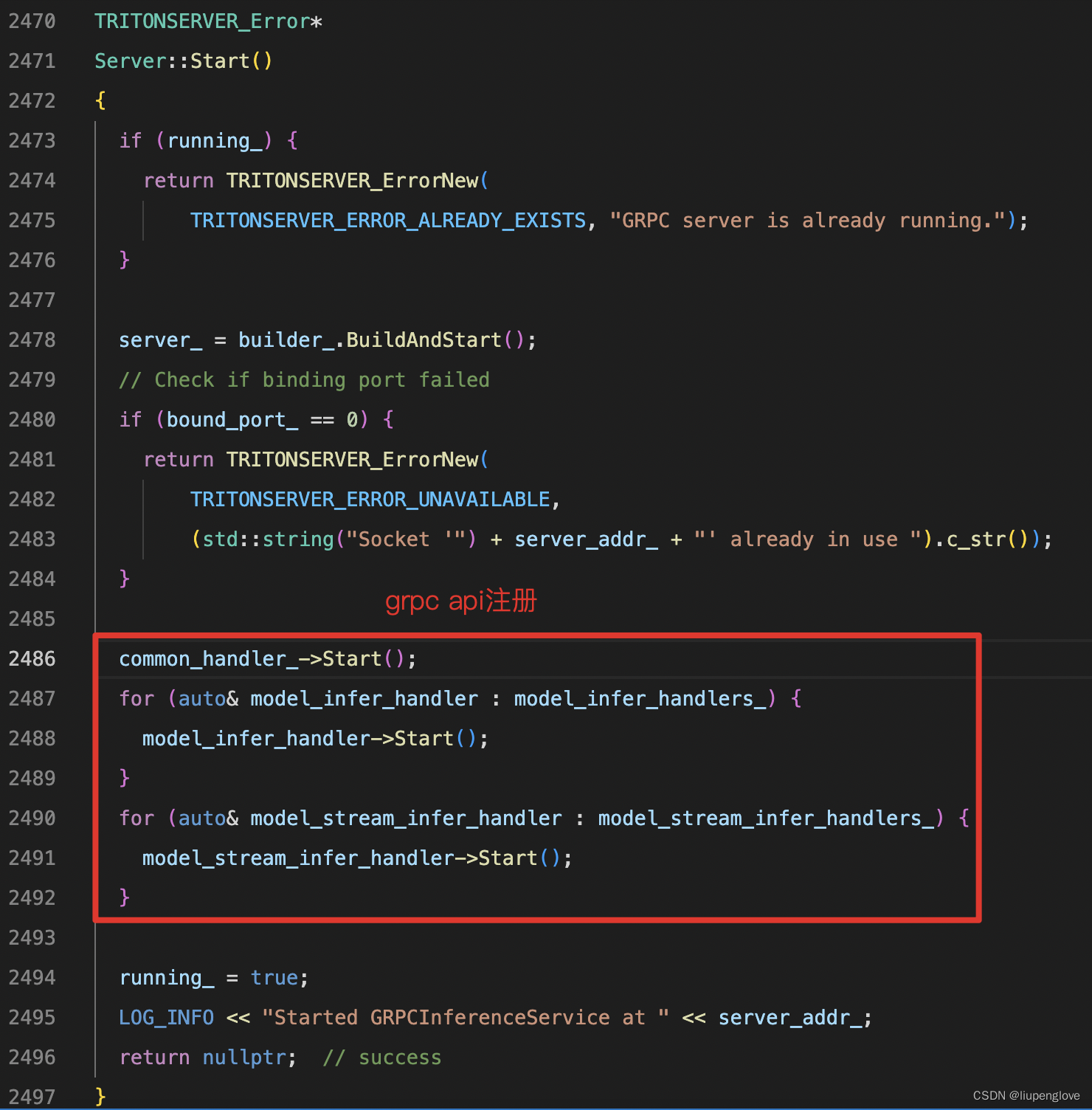

}其中(*service)->Start()函数为核心函数,实现了grpc请求的注册和处理,看如下代码(grpc_server.cc):

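In it, common_handler_->Start() registers the ordinary gRPC requests, model_infer_handler->Start registers inference requests, and model_stream_infer_handler->Start registers streaming inference requests. Both inference handlers are started inside a loop, which means registration runs in multiple threads so that inference can be served by multiple threads.

Let's continue with the implementation, taking common_handler as the example: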

```cpp
void
CommonHandler::Start()
{
  // Use a barrier to make sure we don't return until thread has
  // started.
  auto barrier = std::make_shared<Barrier>(2);

  // Start a thread that registers the APIs and processes requests.
  thread_.reset(new std::thread([this, barrier] {
    // Register all request handlers.
    SetUpAllRequests();
    barrier->Wait();

    void* tag;
    bool ok;

    // Loop, waiting for events to arrive.
    while (cq_->Next(&tag, &ok)) {
      ICallData* call_data = static_cast<ICallData*>(tag);
      if (!call_data->Process(ok)) {
        LOG_VERBOSE(1) << "Done for " << call_data->Name() << ", "
                       << call_data->Id();
        delete call_data;
      }
    }
  }));

  barrier->Wait();
  LOG_VERBOSE(1) << "Thread started for " << Name();
}
```

The newly started thread registers all the APIs and then loops, waiting for RPC requests to arrive. When an event is received, the tag is cast to ICallData* and its member function Process() is called to handle it. ICallData is a base class. It is important, so it is mentioned here, but it is not covered in detail yet.
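The two-party barrier in `CommonHandler::Start()` guarantees that the function returns only after the worker thread has finished registering. The following is a minimal sketch of that handshake, assuming a hypothetical one-shot `SimpleBarrier` built on a mutex and a condition variable. It is not Triton's `Barrier` class, and `StartAndWaitForSetup` is an illustrative name.

```cpp
#include <atomic>
#include <cassert>
#include <condition_variable>
#include <cstddef>
#include <memory>
#include <mutex>
#include <thread>

// Hypothetical one-shot barrier: Wait() returns once `count` threads have
// called it. It must be used by exactly `count` threads, exactly once each.
class SimpleBarrier {
 public:
  explicit SimpleBarrier(std::size_t count) : remaining_(count) {}

  void Wait() {
    std::unique_lock<std::mutex> lock(mu_);
    if (--remaining_ == 0) {
      cv_.notify_all();  // last arrival releases everyone
    } else {
      cv_.wait(lock, [this] { return remaining_ == 0; });
    }
  }

 private:
  std::mutex mu_;
  std::condition_variable cv_;
  std::size_t remaining_;
};

// Start a worker and return only after it has finished its setup step.
// The return value reports whether setup was complete when we returned.
bool StartAndWaitForSetup(std::thread* worker) {
  auto barrier = std::make_shared<SimpleBarrier>(2);
  auto setup_done = std::make_shared<std::atomic<bool>>(false);

  *worker = std::thread([barrier, setup_done] {
    setup_done->store(true);  // stands in for SetUpAllRequests()
    barrier->Wait();
    // The event loop (cq_->Next ...) would run here.
  });

  barrier->Wait();  // blocks until the worker has reached its Wait()
  return setup_done->load();
}
```

The barrier is held in a `std::shared_ptr`, as in the Triton code, so it stays alive until both the caller and the worker lambda have released it, whichever finishes last.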

Next, look at how a request is registered, taking the health check as the example:

```cpp
void
CommonHandler::RegisterHealthCheck()
{
  auto OnRegisterHealthCheck =
      [this](
          ::grpc::ServerContext* ctx,
          ::grpc::health::v1::HealthCheckRequest* request,
          ::grpc::ServerAsyncResponseWriter<
              ::grpc::health::v1::HealthCheckResponse>* responder,
          void* tag) {
        this->health_service_->RequestCheck(
            ctx, request, responder, this->cq_, this->cq_, tag);
      };

  auto OnExecuteHealthCheck = [this](
                                  ::grpc::health::v1::HealthCheckRequest&
                                      request,
                                  ::grpc::health::v1::HealthCheckResponse*
                                      response,
                                  ::grpc::Status* status) {
    bool live = false;
    TRITONSERVER_Error* err =
        TRITONSERVER_ServerIsReady(tritonserver_.get(), &live);

    auto serving_status =
        ::grpc::health::v1::HealthCheckResponse_ServingStatus_UNKNOWN;
    if (err == nullptr) {
      serving_status =
          live ? ::grpc::health::v1::HealthCheckResponse_ServingStatus_SERVING
               : ::grpc::health::v1::
                     HealthCheckResponse_ServingStatus_NOT_SERVING;
    }
    response->set_status(serving_status);

    GrpcStatusUtil::Create(status, err);
    TRITONSERVER_ErrorDelete(err);
  };

  const std::pair<std::string, std::string>& restricted_kv =
      restricted_keys_.Get(RestrictedCategory::HEALTH);
  new CommonCallData<
      ::grpc::ServerAsyncResponseWriter<
          ::grpc::health::v1::HealthCheckResponse>,
      ::grpc::health::v1::HealthCheckRequest,
      ::grpc::health::v1::HealthCheckResponse>(
      "Check", 0, OnRegisterHealthCheck, OnExecuteHealthCheck,
      false /* async */, cq_, restricted_kv, response_delay_);
}
```

This function has three key points:

- The OnRegisterHealthCheck variable: a callable (a lambda here) that registers the asynchronous gRPC API.

- The OnExecuteHealthCheck variable: a callable (also a lambda) that is the handler for the API.

- The CommonCallData object that is created: this object is what actually performs the registration and the request handling.
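The three pieces listed above (a registration callable, an execution callable, and a call-data object that ties them together) can be sketched in isolation. This is a simplified illustration under stated assumptions, not Triton's `CommonCallData`: `ICallDataSketch`, `CallDataSketch`, and the reduced `Step` enum are hypothetical, the callbacks take fewer parameters than the real ones, and the asynchronous execution path is left out.

```cpp
#include <cassert>
#include <functional>
#include <string>
#include <utility>

// Base interface seen by the event loop; the tag is cast back to this type.
class ICallDataSketch {
 public:
  virtual ~ICallDataSketch() = default;
  // Returns false when the object is finished and may be deleted.
  virtual bool Process(bool ok) = 0;
};

template <typename Request, typename Response>
class CallDataSketch : public ICallDataSketch {
 public:
  using RegisterFn = std::function<void(Request*, void* tag)>;
  using ExecuteFn = std::function<void(const Request&, Response*)>;

  CallDataSketch(RegisterFn on_register, ExecuteFn on_execute)
      : on_register_(std::move(on_register)),
        on_execute_(std::move(on_execute)) {
    // Register immediately, passing this object (as its base type) as the tag.
    on_register_(&request_, static_cast<ICallDataSketch*>(this));
  }

  bool Process(bool ok) override {
    // A failed event on a fresh registration is treated as shutdown.
    if (!ok && step_ == Step::kStart) {
      step_ = Step::kFinish;
    }
    if (step_ == Step::kStart) {
      // Register a replacement first so the next request has a handler.
      new CallDataSketch(on_register_, on_execute_);
      on_execute_(request_, &response_);
      step_ = Step::kComplete;  // stands in for writing the response
    } else if (step_ == Step::kComplete) {
      step_ = Step::kFinish;
    }
    return step_ != Step::kFinish;
  }

 private:
  enum class Step { kStart, kComplete, kFinish };
  RegisterFn on_register_;
  ExecuteFn on_execute_;
  Request request_;
  Response response_;
  Step step_ = Step::kStart;
};
```

Because the callables are stored in the object, adding a new API in this design only requires two lambdas and one `new CallDataSketch<...>(...)`; the event loop itself never needs to know which API a tag belongs to.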

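The CommonCallData constructor calls OnRegisterHealthCheck to register the API. The tag passed at registration is the pointer to the CommonCallData object, which uniquely identifies one API request. CommonCallData derives from the ICallData class mentioned above: when the completion queue delivers an event, the tag is cast to a pointer to the ICallData base class, although its dynamic type is CommonCallData, and the request is handled by calling the member function Process through that pointer: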

```cpp
template <typename ResponderType, typename RequestType, typename ResponseType>
bool
CommonCallData<ResponderType, RequestType, ResponseType>::Process(bool rpc_ok)
{
  LOG_VERBOSE(1) << "Process for " << name_ << ", rpc_ok=" << rpc_ok << ", "
                 << id_ << " step " << step_;

  // If RPC failed on a new request then the server is shutting down
  // and so we should do nothing (including not registering for a new
  // request). If RPC failed on a non-START step then there is nothing
  // we can do since we only execute one step.
  const bool shutdown = (!rpc_ok && (step_ == Steps::START));
  if (shutdown) {
    if (async_thread_.joinable()) {
      async_thread_.join();
    }
    step_ = Steps::FINISH;
  }

  if (step_ == Steps::START) {
    // Start a new request to replace this one...
    if (!shutdown) {
      new CommonCallData<ResponderType, RequestType, ResponseType>(
          name_, id_ + 1, OnRegister_, OnExecute_, async_, cq_, restricted_kv_,
          response_delay_);
    }

    if (!async_) {
      // For synchronous calls, execute and write response
      // here.
      Execute();
      WriteResponse();
    } else {
      // For asynchronous calls, delegate the execution to another
      // thread.
      step_ = Steps::ISSUED;
      async_thread_ = std::thread(&CommonCallData::Execute, this);
    }
  } else if (step_ == Steps::WRITEREADY) {
    // Will only come here for asynchronous mode.
    WriteResponse();
  } else if (step_ == Steps::COMPLETE) {
    step_ = Steps::FINISH;
  }

  return step_ != Steps::FINISH;
}
```

That is the full flow of gRPC asynchronous request registration in tritonserver. Corrections and discussion are welcome.

You are also very welcome to follow the official account to get in touch, so we can learn and improve together.
