OpenCV 4.x Deep Learning: Training a Model with TensorFlow 2.3

Part 1: Development environment

1.Win10 x64

2.OpenCV-Python

3.TensorFlow 2.3.0 CPU

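Part 2: Installation

1.Install miniconda

URL: https://docs.conda.io/en/latest/miniconda.html

Accept the defaults throughout the installer, and tick every option it offers.

2.Install the Visual C++ redistributable if you need it. I already had VS2019 installed, so I skipped this step; other tutorials say it is required.

URL: https://support.microsoft.com/zh-cn/topic/%E6%9C%80%E6%96%B0%E6%94%AF%E6%8C%81%E7%9A%84-visual-c-%E4%B8%8B%E8%BD%BD-2647da03-1eea-4433-9aff-95f26a218cc0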

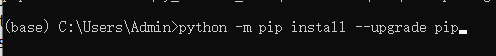

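3.Upgrade pip. If your pip is newer than 19.0, no upgrade is needed.

After installing miniconda, go to the install location and open Anaconda Prompt (miniconda3). Every command below is typed there.

Check the version:

pip -V

Upgrade pip: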

python -m pip install --upgrade pip

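4.Install the third-party libraries matplotlib, notebook, pandas, sklearn, tf_slim

Install whichever of these you need. Note that on PyPI, sklearn is published as scikit-learn.

matplotlib: plotting, for example training curves

notebook: for editing Python code. I have no Python IDE installed, so I just installed notebook.

Install the rest as needed.

If you install notebook, you will want code completion.

Run the following six steps in order: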

a.pip install jupyter_contrib_nbextensions -i https://pypi.mirrors.ustc.edu.cn/simple

b.jupyter contrib nbextension install

c.pip install jupyter_nbextensions_configurator

d.jupyter nbextensions_configurator enable

e.Launch the editor: jupyter notebook

f.Open the Nbextensions tab and tick Hinterland

5.Install TensorFlow x.x.x

pip install tensorflow-cpu==2.3.0 -i https://pypi.douban.com/simple

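tensorflow-cpu selects the CPU-only build; you can also leave off -cpu and install the tensorflow package instead.

==2.3.0 pins the version number.

https://pypi.douban.com/simple is a mirror in China, used to speed up the download.

6.Install OpenCV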

pip install opencv-python
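
Before moving on to training, it can help to confirm what actually got installed. The following is a minimal sketch and not part of the original steps: the helper name `installed_version` is made up for illustration, and it assumes Python 3.8 or newer so that `importlib.metadata` is available.

```python
from importlib import metadata


def installed_version(package):
    """Return the installed version string of a pip package, or None if it is absent."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


# Distribution names as they were passed to pip in the steps above.
for package in ("pip", "tensorflow-cpu", "opencv-python", "matplotlib", "notebook"):
    print(package, "->", installed_version(package) or "not installed")
```

If you installed `tensorflow` rather than `tensorflow-cpu`, look that name up instead.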

Part 3: Model training

import tensorflow as tf
from tensorflow import keras
from tensorflow.python.framework.convert_to_constants import convert_variables_to_constants_v2
import numpy as np
import glob
import matplotlib.pyplot as plt
%matplotlib inline

BATCH_SIZE = 32  # images per batch
IMAGE_WIDTH = 256  # width that input images are resized to
IMAGE_HEIGHT = 256  # height that input images are resized to
PERCENT = 0.8  # 80% of the images for training, 20% for testing
RESULT_NUM = 3  # number of classes to predict; I only have three

# Load one image for training
def load_image(path, label):
    image = tf.io.read_file(path)
    image = tf.image.decode_jpeg(image, channels=3)  # decode as a 3-channel (RGB) image
    image = tf.image.resize(image, [IMAGE_HEIGHT, IMAGE_WIDTH])  # tf.image.resize expects [height, width]
    image = tf.cast(image, tf.float32)
    image = image / 255  # normalize to [0, 1]
    return image, label

# Build a dataset from your own images
def make_dataset(path):
    global RESULT_NUM  # so the class count found here is the one model_net() uses
    img_paths = glob.glob(path)  # list of all image paths
    # Adjust to your own layout. Mine is E:\\my_test\\<class name>\\<image>.jpg,
    # where the class folders are kakou, luosi and redjiao (excuse my English).
    # After .split('\\'): index 0 is E:, index 1 is my_test, index 2 is the class name.
    all_label_name = [filename.split('\\')[2] for filename in img_paths]  # class name of every image
    max_label_name = np.unique(all_label_name)  # distinct class names
    max_label_to_index = dict((name, idx) for idx, name in enumerate(max_label_name))  # class name -> index
    RESULT_NUM = len(max_label_name)
    print("Classes:", len(max_label_name))
    max_index_to_label = dict((idx, name) for name, idx in max_label_to_index.items())  # index -> class name
    all_label = [max_label_to_index.get(name) for name in all_label_name]  # class index of every image
    print("Labels:", max_index_to_label)
    random_index = np.random.permutation(len(img_paths))  # random permutation used to shuffle the data
    img_paths = np.array(img_paths)[random_index]  # shuffle the image paths
    all_label = np.array(all_label)[random_index]  # shuffle the labels the same way
    # Slice: PERCENT for training, (1 - PERCENT) for testing
    per = int(len(img_paths) * PERCENT)
    train_path = img_paths[:per]
    train_label = all_label[:per]
    test_path = img_paths[per:]
    test_label = all_label[per:]
    train_db = tf.data.Dataset.from_tensor_slices((train_path, train_label))
    test_db = tf.data.Dataset.from_tensor_slices((test_path, test_label))
    AUTOTUNE = tf.data.experimental.AUTOTUNE  # let tf.data choose the level of parallelism
    train_db = train_db.map(load_image, num_parallel_calls=AUTOTUNE)
    test_db = test_db.map(load_image, num_parallel_calls=AUTOTUNE)
    train_db = train_db.repeat().shuffle(300).batch(BATCH_SIZE)
    test_db = test_db.batch(BATCH_SIZE)
    print("Total:", len(train_path) + len(test_path))
    print("Training:", len(train_path))
    print("Test:", len(test_path))
    return train_db, test_db, train_path, test_path
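
The labelling and splitting logic in make_dataset can be tried without TensorFlow. Here is a minimal sketch of the same idea; the helper names `class_from_path` and `split_paths` and the demo file names are hypothetical. Instead of a fixed `split('\\')` index, it takes the name of the folder that directly contains each image, which is an alternative to the original approach and keeps working if the data moves to a different directory depth.

```python
import ntpath

import numpy as np


def class_from_path(path):
    """Return the name of the folder that directly contains the image."""
    # ntpath applies Windows path rules on any OS.
    return ntpath.basename(ntpath.dirname(path))


def split_paths(paths, percent=0.8, seed=0):
    """Shuffle paths and integer labels together, then cut into train and test parts."""
    names = [class_from_path(p) for p in paths]
    classes = sorted(set(names))                       # distinct class names, sorted
    to_index = {name: idx for idx, name in enumerate(classes)}
    labels = np.array([to_index[name] for name in names])
    order = np.random.RandomState(seed).permutation(len(paths))
    paths, labels = np.array(paths)[order], labels[order]
    cut = int(len(paths) * percent)
    return (paths[:cut], labels[:cut]), (paths[cut:], labels[cut:]), to_index


# Hypothetical file names in the folder layout described above.
demo = [r"E:\my_test\kakou\1.jpg", r"E:\my_test\luosi\2.jpg",
        r"E:\my_test\redjiao\3.jpg", r"E:\my_test\kakou\4.jpg",
        r"E:\my_test\luosi\5.jpg"]
(train_x, train_y), (test_x, test_y), to_index = split_paths(demo)
print(to_index)
print(len(train_x), len(test_x))
```

Shuffling paths and labels with one shared permutation is what keeps each image paired with its own label after the split.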

# Network model; swap in your own network here
def model_net():
    model = tf.keras.Sequential([
        tf.keras.layers.Conv2D(32, 3, activation='relu', input_shape=(IMAGE_HEIGHT, IMAGE_WIDTH, 3)),
        tf.keras.layers.MaxPooling2D(pool_size=(2, 2)),
        tf.keras.layers.Conv2D(32, 3, activation='relu'),
        tf.keras.layers.MaxPooling2D(pool_size=(2, 2)),
        tf.keras.layers.Conv2D(64, 3, activation='relu'),
        tf.keras.layers.MaxPooling2D(pool_size=(2, 2)),
        tf.keras.layers.Flatten(),
        tf.keras.layers.Dense(64, activation='relu'),
        tf.keras.layers.Dropout(0.5),
        tf.keras.layers.Dense(int(RESULT_NUM), activation='softmax')
    ])
    model.compile(optimizer=tf.keras.optimizers.Adam(0.0001),
                  loss=tf.keras.losses.SparseCategoricalCrossentropy(from_logits=False),
                  metrics=['acc'])
    return model
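
To see how large this network becomes at the Flatten layer, the feature-map sizes can be worked out by hand. The sketch below assumes the Keras defaults used in the model above: Conv2D with 'valid' padding and stride 1, and MaxPooling2D with stride equal to the pool size. The helper names are made up for illustration.

```python
def conv_valid(size, kernel=3):
    """Output size of a stride-1 convolution with 'valid' padding."""
    return size - kernel + 1


def pool(size, window=2):
    """Output size of max pooling whose stride equals the window size."""
    return size // window


size = 256                      # IMAGE_WIDTH == IMAGE_HEIGHT == 256
for _ in range(3):              # three Conv2D + MaxPooling2D pairs
    size = pool(conv_valid(size))

flat_features = size * size * 64            # the last Conv2D has 64 filters
dense_params = flat_features * 64 + 64      # weights + biases of Dense(64)
print(size, flat_features, dense_params)    # 30 57600 3686464
```

Nearly all of the model's parameters sit in that first Dense layer, so a smaller input size or one more pooling stage is the cheapest way to shrink it.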

# Freeze the model so that OpenCV can load it
def model_frozen(model, logdir, name):
    # Convert Keras model to ConcreteFunction
    full_model = tf.function(lambda x: model(x))
    full_model = full_model.get_concrete_function(
        tf.TensorSpec(model.inputs[0].shape, model.inputs[0].dtype))
    # Get frozen ConcreteFunction
    frozen_func = convert_variables_to_constants_v2(full_model)
    frozen_func.graph.as_graph_def()
    layers = [op.name for op in frozen_func.graph.get_operations()]
    print("-" * 50)
    print("Frozen model layers: ")
    for layer in layers:
        print(layer)
    print("-" * 50)
    print("Frozen model inputs: ")
    print(frozen_func.inputs)
    print("Frozen model outputs: ")
    print(frozen_func.outputs)
    # Save frozen graph from frozen ConcreteFunction to hard drive
    tf.io.write_graph(graph_or_graph_def=frozen_func.graph,
                      logdir=logdir,
                      name=name,
                      as_text=False)
    print("Model conversion finished, training complete")

# Train on the data
def train_function(path):
    train_db, test_db, train_path, test_path = make_dataset(path)
    model = model_net()
    # Round up so the final partial batch counts as a step. Floor division + 1 asks for
    # one batch too many whenever the image count is an exact multiple of BATCH_SIZE.
    steps_per_epoch = (len(train_path) + BATCH_SIZE - 1) // BATCH_SIZE
    validation_steps = (len(test_path) + BATCH_SIZE - 1) // BATCH_SIZE
    print("train batch:", steps_per_epoch)
    print("test batch:", validation_steps)
    history = model.fit(train_db,
                        epochs=20,
                        steps_per_epoch=steps_per_epoch,
                        validation_data=test_db,
                        validation_steps=validation_steps)
    model.evaluate(test_db)
    tf.saved_model.save(model, 'E:\\model\\pb_model\\')
    model_frozen(model, 'E:\\model\\frozen_model\\', 'frozen_graph.pb')
    print("Model saved")
    return model, history

model, history = train_function(r'E:\my_test\*\*.jpg')
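
On the step counts in train_function: the number of batches needed to cover N images once is the ceiling of N / BATCH_SIZE. Flooring and adding one gives the same answer except when N is an exact multiple of the batch size, where it asks for one batch too many. A small sketch of the arithmetic, with a hypothetical helper name `steps_for`:

```python
def steps_for(num_items, batch_size):
    """Number of batches needed to cover num_items once (ceiling division)."""
    return (num_items + batch_size - 1) // batch_size


for n in (64, 65, 100):
    print(n, "floor+1:", n // 32 + 1, "ceil:", steps_for(n, 32))
```

For the training set the extra step is harmless because train_db repeats forever. The validation set does not repeat, so an extra validation step would ask for a batch that does not exist.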

Part 4: OpenCV

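import cv2
from cv2 import dnn
import numpy as np

class_name = ['kakou', 'luosi', 'redjiao']

# Path where the converted (frozen) model was saved
net = dnn.readNetFromTensorflow('E:/model/frozen_model/frozen_graph.pb')

# Read the image to classify. np.fromfile loads the file's raw encoded bytes, so uint8 is
# the natural dtype (uint16 also happened to work when I tried it).
# IMREAD_COLOR always decodes to 3 channels, matching channels=3 in training.
img = cv2.imdecode(np.fromfile('E:/my_test/kakou/kakou_25.jpg', dtype=np.uint8), cv2.IMREAD_COLOR)

# This resize is optional: blobFromImage below already resizes to size=(256, 256),
# which is why commenting this line out changes nothing.
img = cv2.resize(img, (256, 256))

# swapRB must be True: OpenCV decodes images as BGR, while the model was trained on RGB
# images from tf.image.decode_jpeg. With swapRB=False my third class (a red object on a
# black background) was never predicted, which fits red and blue being exchanged.
# scalefactor applies the same /255 normalization as load_image.
blob = cv2.dnn.blobFromImage(img, scalefactor=1.0 / 255.0, size=(256, 256), mean=(), swapRB=True, crop=False)

# Run the forward pass
net.setInput(blob)
out = net.forward()
out = out.flatten()
classId = np.argmax(out)

# Output (shown as the cell result in the notebook)
out, classId, class_name[classId]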
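
What the blob step does to the pixels can be reproduced in plain NumPy. This is only a rough equivalent of blobFromImage for an image that is already the right size, with no mean subtraction and no crop; the function name `to_blob` is made up for illustration. It shows why the channel swap matters for a red object.

```python
import numpy as np


def to_blob(img_bgr, scale=1.0 / 255.0, swap_rb=True):
    """Approximate blobFromImage for an already-resized HxWx3 uint8 image."""
    img = img_bgr.astype(np.float32)
    if swap_rb:
        img = img[:, :, ::-1]           # BGR -> RGB, the order used in training
    img = img * scale                   # same [0, 1] range as image / 255
    return np.ascontiguousarray(img.transpose(2, 0, 1)[np.newaxis])  # HWC -> NCHW


# A pure-red image as OpenCV stores it: (B, G, R) = (0, 0, 255).
red_bgr = np.zeros((256, 256, 3), dtype=np.uint8)
red_bgr[:, :, 2] = 255

blob = to_blob(red_bgr)
print(blob.shape)                                    # (1, 3, 256, 256)
print(blob[0, :, 0, 0])                              # red ends up in channel 0
print(to_blob(red_bgr, swap_rb=False)[0, :, 0, 0])   # without the swap it stays in channel 2
```

Without the swap, the network receives what looks like a blue object, which it never saw during training.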

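I am a beginner: I am not fluent in Python or TensorFlow, I have only recently started with OpenCV, and I came over from Halcon. If anything above is wrong or seems elementary, please don't laugh; corrections in the comments are very welcome. Thank you!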
