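YARN Definition

YARN is a resource scheduling platform responsible for allocating cluster resources and managing tasks. It plays a role similar to a computer's operating system, while MapReduce is like an application program that runs on YARN.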

YARN Basic Architecture

YARN consists mainly of four components: ResourceManager, NodeManager, ApplicationMaster, and Container.

YARN architecture diagram:

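1. The client submits a job to the ResourceManager.

2. The ResourceManager starts an AppMaster (the job's agent for this run) on a relatively idle NodeManager.

3. The AppMaster tells the ResourceManager how much memory and CPU the job needs, and the ResourceManager allocates those resources as Containers. The resources are locked: until this job finishes, no other job can use them.

4. The AppMaster monitors the job's progress in real time.

5. Once the job finishes, the AppMaster tells the ResourceManager to reclaim the resources.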
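To make the reserve-then-reclaim idea concrete, here is a toy model in plain Java. It is not the YARN API: `ToyResourceManager`, `allocateContainer`, and `finishApplication` are hypothetical names, and real YARN tracks resources per node and per queue rather than as one cluster-wide pool.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical toy model, NOT the YARN API: a single cluster-wide pool of memory and vcores
final class ToyResourceManager {
    private int freeMemoryMb;
    private int freeVcores;
    // appId -> {memoryMb, vcores} currently locked by that application's containers
    private final Map<String, int[]> reserved = new HashMap<>();

    ToyResourceManager(int memoryMb, int vcores) {
        this.freeMemoryMb = memoryMb;
        this.freeVcores = vcores;
    }

    // Lock resources for one container; returns false if the request cannot be met yet
    boolean allocateContainer(String appId, int memoryMb, int vcores) {
        if (memoryMb > freeMemoryMb || vcores > freeVcores) {
            return false; // other jobs still hold the resources, so this request must wait
        }
        freeMemoryMb -= memoryMb;
        freeVcores -= vcores;
        int[] r = reserved.computeIfAbsent(appId, k -> new int[2]);
        r[0] += memoryMb;
        r[1] += vcores;
        return true;
    }

    // Called when the AppMaster reports the job is done: every locked resource is returned
    void finishApplication(String appId) {
        int[] r = reserved.remove(appId);
        if (r != null) {
            freeMemoryMb += r[0];
            freeVcores += r[1];
        }
    }

    int freeMemoryMb() { return freeMemoryMb; }

    int freeVcores() { return freeVcores; }

    public static void main(String[] args) {
        ToyResourceManager rm = new ToyResourceManager(8192, 8);
        rm.allocateContainer("app-1", 1536, 1); // e.g. the AppMaster's own container
        rm.allocateContainer("app-1", 2048, 1); // a task container
        boolean granted = rm.allocateContainer("app-2", 8192, 1); // blocked while app-1 runs
        System.out.println("app-2 granted: " + granted + ", free MB: " + rm.freeMemoryMb());
        rm.finishApplication("app-1");
        System.out.println("after app-1 finishes, free MB: " + rm.freeMemoryMb());
    }
}
```

Running `main` shows app-2 being refused while app-1 holds 3584 MB, and the full 8192 MB becoming free again once app-1 finishes.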

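How YARN Works

1. The client asks the ResourceManager for a new application.

2. The ResourceManager returns a path to the client where the job's split metadata, jar file, and configuration files are to be stored.

3. The client uploads these resources to the path provided by the ResourceManager (normally on HDFS).

4. The client tells the ResourceManager that the resources have been submitted and requests an MRAppMaster.

5. The ResourceManager wraps the request as a task and places it in the scheduler queue.

6. A relatively idle NodeManager picks up the task.

7. The NodeManager creates a Container, which locks the allocated CPU and memory, and starts the MRAppMaster in it.

8. The Container downloads the job's resources to the local node.

9. The MRAppMaster requests containers for running MapTasks.

10. The ResourceManager assigns the MapTasks to two NodeManagers (in this example), each of which creates a container.

11. The MRAppMaster sends the program launch script to each of the two NodeManagers, and the NodeManagers run the MapTasks.

12. The MRAppMaster asks the ResourceManager for 2 containers to run the ReduceTasks.

13. Each ReduceTask fetches the data for its own partition from the MapTasks.

14. When the program finishes, the MRAppMaster deregisters itself from the ResourceManager.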
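Step 13 relies on every map output key being assigned to exactly one partition, one per ReduceTask. The sketch below uses the same formula as Hadoop's default `HashPartitioner`, `(hashCode & Integer.MAX_VALUE) % numReduceTasks`, but applies it to plain Java `String` keys; Hadoop's `Text` computes its own hash, so the concrete partition numbers here will differ from a real job. `PartitionDemo` is a hypothetical name.

```java
import java.util.ArrayList;
import java.util.List;

// Illustration of hash partitioning; String.hashCode() stands in for Hadoop's Text hash
final class PartitionDemo {

    static int getPartition(String key, int numReduceTasks) {
        // Clear the sign bit so a negative hashCode still yields an index in [0, numReduceTasks)
        return (key.hashCode() & Integer.MAX_VALUE) % numReduceTasks;
    }

    // Group keys by the ReduceTask that will fetch them
    static List<List<String>> partition(List<String> keys, int numReduceTasks) {
        List<List<String>> parts = new ArrayList<>();
        for (int i = 0; i < numReduceTasks; i++) {
            parts.add(new ArrayList<>());
        }
        for (String k : keys) {
            parts.get(getPartition(k, numReduceTasks)).add(k);
        }
        return parts;
    }

    public static void main(String[] args) {
        List<String> keys = List.of("hadoop", "yarn", "hdfs", "yarn", "mapreduce");
        List<List<String>> parts = partition(keys, 2); // 2 ReduceTasks, as in step 12
        for (int i = 0; i < parts.size(); i++) {
            System.out.println("ReduceTask " + i + " fetches " + parts.get(i));
        }
    }
}
```

The important property is that identical keys (both `"yarn"` records) always land in the same partition, so one ReduceTask sees all values for a key.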

Resource Schedulers

Hadoop provides three resource schedulers: FIFO, Capacity Scheduler, and Fair Scheduler.

In Apache Hadoop 2.x, the default resource scheduler is the Capacity Scheduler.

FIFO (first-in, first-out scheduler)

There is only one queue; jobs that arrive first get resources first.

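Capacity Scheduler

There are multiple queues, and the job at the head of each queue can use resources at the same time, so several jobs run in parallel (higher throughput than FIFO).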
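A simplified sketch of how queues can take turns: pick the queue whose usage is lowest relative to its configured capacity, then serve that queue in FIFO order. Everything here is an assumption for illustration (one resource dimension, every job needs one unit, no user limits, priorities, or preemption), and `CapacitySketch`/`JobQueue` are hypothetical names rather than Capacity Scheduler classes.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Deque;
import java.util.List;

// Simplified model of multi-queue scheduling; not the real CapacityScheduler implementation
final class CapacitySketch {

    static final class JobQueue {
        final String name;
        final double capacity;                             // configured share, e.g. 0.6 = 60%
        int used;                                          // units currently used by this queue
        final Deque<String> pending = new ArrayDeque<>();  // FIFO order inside the queue

        JobQueue(String name, double capacity) {
            this.name = name;
            this.capacity = capacity;
        }

        double usageRatio() {
            return used / capacity;
        }
    }

    // Least-utilized queue (relative to its capacity) goes first; returns null if nothing waits
    static String nextJob(List<JobQueue> queues) {
        JobQueue best = null;
        for (JobQueue q : queues) {
            if (!q.pending.isEmpty() && (best == null || q.usageRatio() < best.usageRatio())) {
                best = q;
            }
        }
        if (best == null) {
            return null;
        }
        best.used++;
        return best.name + ":" + best.pending.poll();
    }

    public static void main(String[] args) {
        JobQueue dev = new JobQueue("dev", 0.4);
        JobQueue prod = new JobQueue("prod", 0.6);
        dev.pending.addAll(List.of("d1", "d2"));
        prod.pending.addAll(List.of("p1", "p2"));
        List<JobQueue> queues = List.of(dev, prod);
        List<String> order = new ArrayList<>();
        String job;
        while ((job = nextJob(queues)) != null) {
            order.add(job);
        }
        System.out.println(order); // [dev:d1, prod:p1, prod:p2, dev:d2]
    }
}
```

The head job of each queue (`d1` and `p1`) is started before either queue's second job, which is the behavior the paragraph above describes.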

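Fair Scheduler

Jobs are ordered by deficit, and the job with the largest deficit gets resources first. A job's deficit is the gap between the resources it would receive under an ideal fair share and the resources it actually holds.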
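The "largest deficit first" rule can be sketched as a sort. Assumptions: all jobs have equal weight, so each job's fair share is simply the cluster total divided by the number of jobs, and deficit = fair share minus resources in use. `FairDeficitSketch` and `Job` are hypothetical names; the real Fair Scheduler also handles weights, minimum shares, and nested queues.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;

// Equal-weight model of deficit ordering; not the real FairScheduler code
final class FairDeficitSketch {

    static final class Job {
        final String name;
        final int used; // resource units the job currently holds

        Job(String name, int used) {
            this.name = name;
            this.used = used;
        }
    }

    static List<String> orderByDeficit(List<Job> jobs, int clusterUnits) {
        double fairShare = (double) clusterUnits / jobs.size();
        List<Job> sorted = new ArrayList<>(jobs);
        // Deficit = fairShare - used; the job furthest below its fair share comes first
        sorted.sort(Comparator.comparingDouble((Job j) -> fairShare - j.used).reversed());
        List<String> names = new ArrayList<>();
        for (Job j : sorted) {
            names.add(j.name);
        }
        return names;
    }

    public static void main(String[] args) {
        // 12 units shared by 3 jobs: fair share = 4 units each
        List<Job> jobs = List.of(new Job("A", 6), new Job("B", 1), new Job("C", 3));
        System.out.println(orderByDeficit(jobs, 12)); // deficits A=-2, B=3, C=1 -> [B, C, A]
    }
}
```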

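Speculative Task Execution

A job does not finish until its slowest task finishes. If one or two tasks run much slower than the rest (for example because of a bug or an overloaded node), MapReduce can launch a backup copy of the slow task; whichever copy finishes first supplies the result, and the other one is killed.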

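Prerequisites

1. Each task can have at most one backup task.

2. At least 5% (0.05) of the job's tasks must already be complete.

3. Speculative execution must be enabled. It is on by default; the parameter can be set in mapred-site.xml: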

```xml
<property>
  <name>mapreduce.map.speculative</name>
  <value>true</value>
  <description>If true, then multiple instances of some map tasks may be executed in parallel.</description>
</property>
```

Speculative execution algorithm
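A commonly cited description of the rule: for each running task, estimatedRunTime = (currentTimestamp − taskStartTime) / progress and estimateEndTime = taskStartTime + estimatedRunTime. A backup started now would finish at estimateEndTime' = currentTimestamp + averageRunTime, where averageRunTime is the average duration of already-completed tasks. MapReduce backs up the task with the largest estimateEndTime − estimateEndTime'. The sketch below applies that rule together with prerequisites 1 and 2. It is a simplified model, not Hadoop's speculator code; `SpeculationSketch`, `TaskStatus`, and `pickTaskToSpeculate` are hypothetical names, and requiring the difference to be positive is an added assumption.

```java
import java.util.List;

// Simplified model of speculative-task selection; not Hadoop's actual speculator
final class SpeculationSketch {

    static final class TaskStatus {
        final String id;
        final long startTime;    // ms
        final double progress;   // 0.0 .. 1.0
        final boolean hasBackup; // prerequisite 1: at most one backup per task

        TaskStatus(String id, long startTime, double progress, boolean hasBackup) {
            this.id = id;
            this.startTime = startTime;
            this.progress = progress;
            this.hasBackup = hasBackup;
        }
    }

    // Returns the id of the task to back up, or null if no task qualifies
    static String pickTaskToSpeculate(List<TaskStatus> running, int completedTasks,
                                      int totalTasks, long now, long averageRunTime) {
        // Prerequisite 2: at least 5% of the job's tasks must be complete
        if (totalTasks == 0 || (double) completedTasks / totalTasks < 0.05) {
            return null;
        }
        String best = null;
        double bestGain = 0; // assumption: only speculate if the backup should finish earlier
        for (TaskStatus t : running) {
            if (t.hasBackup || t.progress <= 0) {
                continue; // already backed up, or no progress yet to estimate from
            }
            double estimatedRunTime = (now - t.startTime) / t.progress;
            double estimateEndTime = t.startTime + estimatedRunTime;
            double backupEndTime = now + averageRunTime;
            double gain = estimateEndTime - backupEndTime;
            if (gain > bestGain) {
                bestGain = gain;
                best = t.id;
            }
        }
        return best;
    }

    public static void main(String[] args) {
        List<TaskStatus> running = List.of(
                new TaskStatus("map_01", 0, 0.9, false),      // nearly done
                new TaskStatus("map_02", 10_000, 0.2, false)); // straggler
        System.out.println(pickTaskToSpeculate(running, 10, 20, 100_000, 40_000)); // map_02
    }
}
```

For `map_02` the estimated end time is 460,000 ms versus 140,000 ms for a fresh backup, so it is chosen; `map_01` is expected to finish before a backup could, so it is left alone.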

Reduce Join Hands-On Example

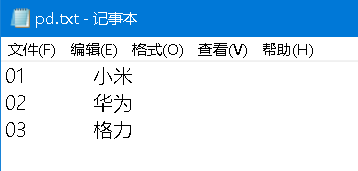

Requirement: join the two tables the way a database joins tables, and write the merged records in the required format.

Expected output:

1. First, create the entity bean and make it serializable by implementing Writable:

```java
package hxy.Order.bean;

import org.apache.hadoop.io.Writable;

import java.io.DataInput;
import java.io.DataOutput;
import java.io.IOException;

// One record from either input table; file_name records which table it came from
public class orderBean implements Writable {

    private String order_id;
    private String pd_id;
    private int amount;
    private String pd_name;
    private String file_name;

    // Hadoop instantiates Writables by reflection, so a public no-arg constructor is required
    public orderBean() {
    }

    public String getOrder_id() {
        return order_id;
    }

    public void setOrder_id(String order_id) {
        this.order_id = order_id;
    }

    public String getPd_id() {
        return pd_id;
    }

    public void setPd_id(String pd_id) {
        this.pd_id = pd_id;
    }

    public int getAmount() {
        return amount;
    }

    public void setAmount(int amount) {
        this.amount = amount;
    }

    public String getPd_name() {
        return pd_name;
    }

    public void setPd_name(String pd_name) {
        this.pd_name = pd_name;
    }

    public String getFile_name() {
        return file_name;
    }

    public void setFile_name(String file_name) {
        this.file_name = file_name;
    }

    // Final output line: order_id \t pd_name \t amount
    @Override
    public String toString() {
        return order_id + '\t' + pd_name + '\t' + amount;
    }

    // Serialization: writeUTF throws NullPointerException on null, so every String field must be set
    @Override
    public void write(DataOutput dataOutput) throws IOException {
        dataOutput.writeUTF(order_id);
        dataOutput.writeUTF(pd_id);
        dataOutput.writeInt(amount);
        dataOutput.writeUTF(pd_name);
        dataOutput.writeUTF(file_name);
    }

    // Deserialization must read the fields in exactly the same order as write()
    @Override
    public void readFields(DataInput dataInput) throws IOException {
        this.order_id = dataInput.readUTF();
        this.pd_id = dataInput.readUTF();
        this.amount = dataInput.readInt();
        this.pd_name = dataInput.readUTF();
        this.file_name = dataInput.readUTF();
    }
}
```
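The `write`/`readFields` pair can be checked without a cluster, because `DataOutputStream` and `DataInputStream` implement the same `DataOutput`/`DataInput` interfaces that `Writable` uses. `OrderRecord` below is a hypothetical Hadoop-free mirror of `orderBean`, used only to show a serialization round trip.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.DataInput;
import java.io.DataInputStream;
import java.io.DataOutput;
import java.io.DataOutputStream;
import java.io.IOException;

// Hypothetical Hadoop-free mirror of orderBean's serialization logic
final class OrderRecord {
    // Defaults are "" because writeUTF(null) throws NullPointerException
    String orderId = "";
    String pdId = "";
    int amount;
    String pdName = "";
    String fileName = "";

    void write(DataOutput out) throws IOException {
        out.writeUTF(orderId);
        out.writeUTF(pdId);
        out.writeInt(amount);
        out.writeUTF(pdName);
        out.writeUTF(fileName);
    }

    void readFields(DataInput in) throws IOException {
        // Same field order as write(); swapping any two reads corrupts the record
        orderId = in.readUTF();
        pdId = in.readUTF();
        amount = in.readInt();
        pdName = in.readUTF();
        fileName = in.readUTF();
    }

    // Serialize to bytes and read back into a fresh object, as happens between map and reduce
    static OrderRecord roundTrip(OrderRecord r) throws IOException {
        ByteArrayOutputStream bytes = new ByteArrayOutputStream();
        r.write(new DataOutputStream(bytes));
        OrderRecord copy = new OrderRecord();
        copy.readFields(new DataInputStream(new ByteArrayInputStream(bytes.toByteArray())));
        return copy;
    }

    public static void main(String[] args) throws IOException {
        OrderRecord r = new OrderRecord();
        r.orderId = "1001";
        r.pdId = "01";
        r.amount = 1;
        r.fileName = "order";
        OrderRecord copy = roundTrip(r);
        System.out.println(copy.orderId + " " + copy.pdId + " " + copy.amount); // 1001 01 1
    }
}
```

The null restriction is also why the mapper fills the fields a table does not have (such as `pd_name` for order records) with `""` instead of leaving them unset.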

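2. Write the Mapper. It gets the name of the file the current split comes from, uses that name to tell the two tables apart, and fills in the bean accordingly: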

```java
package hxy.Order.Ordermr;

import hxy.Order.bean.orderBean;
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Mapper;
import org.apache.hadoop.mapreduce.lib.input.FileSplit;

import java.io.IOException;

public class OrderMapper extends Mapper<LongWritable, Text, Text, orderBean> {

    private String name;
    private final Text k = new Text();
    private final orderBean order = new orderBean();

    @Override
    protected void setup(Context context) {
        // A map task reads a split of a single file, so the file name only needs to be fetched once
        FileSplit inputSplit = (FileSplit) context.getInputSplit();
        name = inputSplit.getPath().getName();
    }

    @Override
    protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {
        String[] split = value.toString().split("\t");

        if (name.startsWith("order")) {
            // Order table: order_id \t pd_id \t amount
            order.setOrder_id(split[0]);
            order.setPd_id(split[1]);
            order.setAmount(Integer.parseInt(split[2]));
            order.setPd_name("");
            order.setFile_name("order");
            k.set(split[1]); // join key: pd_id
        } else {
            // Product table: pd_id \t pd_name
            order.setOrder_id("");
            order.setPd_id(split[0]);
            order.setAmount(0);
            order.setPd_name(split[1]);
            order.setFile_name("pd");
            k.set(split[0]); // join key: pd_id
        }

        // context.write serializes immediately, so reusing k and order across calls is safe
        context.write(k, order);
    }
}
```
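The heart of the mapper is a tagging rule: whichever file a line comes from, emit the product id as the key plus a tag saying which table the record belongs to. The Hadoop-free sketch below isolates that rule as a pure function. `TagSketch`, `tag`, and the sample lines and file names are hypothetical; the column layout is assumed from the split indexes used in `OrderMapper` (order file: order_id, pd_id, amount; product file: pd_id, pd_name).

```java
// Hypothetical pure-function version of the mapper's tagging rule
final class TagSketch {

    // Returns {joinKey, tag, remaining fields...} for one tab-separated input line
    static String[] tag(String fileName, String line) {
        String[] f = line.split("\t");
        if (fileName.startsWith("order")) {
            // Order table: key on pd_id (column 2), keep order_id and amount
            return new String[] {f[1], "order", f[0], f[2]};
        }
        // Product table: key on pd_id (column 1), keep pd_name
        return new String[] {f[0], "pd", f[1]};
    }

    public static void main(String[] args) {
        System.out.println(String.join(" | ", tag("order.txt", "1001\t01\t1"))); // 01 | order | 1001 | 1
        System.out.println(String.join(" | ", tag("pd.txt", "01\tphone")));      // 01 | pd | phone
    }
}
```

Because both records carry the key `01`, the shuffle delivers them to the same `reduce` call, which is what makes the join possible.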

3. Write the Reducer, which takes the orderBean objects sent by the Mapper, fills in the product name, and writes the joined records:

```java
package hxy.Order.Ordermr;

import hxy.Order.bean.orderBean;
import org.apache.commons.beanutils.BeanUtils;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;
import java.lang.reflect.InvocationTargetException;
import java.util.ArrayList;

public class OrderReducer extends Reducer<Text, orderBean, orderBean, NullWritable> {

    @Override
    protected void reduce(Text key, Iterable<orderBean> values, Context context) throws IOException, InterruptedException {
        // All records for one pd_id: any number of orders plus one product record
        ArrayList<orderBean> orderList = new ArrayList<>();
        String pdName = "";

        for (orderBean order : values) {
            if ("order".equals(order.getFile_name())) {
                // Hadoop reuses the same value object on every iteration, so copy each order
                // into a NEW bean; adding one shared bean would make every list entry identical
                orderBean orderCopy = new orderBean();
                try {
                    BeanUtils.copyProperties(orderCopy, order);
                } catch (IllegalAccessException | InvocationTargetException e) {
                    throw new IOException(e);
                }
                orderList.add(orderCopy);
            } else {
                // Product-table record: only the product name is needed
                pdName = order.getPd_name();
            }
        }

        // Fill in the product name and emit one joined line per order
        for (orderBean orderFinal : orderList) {
            orderFinal.setPd_name(pdName);
            context.write(orderFinal, NullWritable.get());
        }
    }
}
```
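Why does the reducer need a fresh bean per order? Hadoop's `values` iterator hands back the same value object on every step, only overwriting its fields, and keeping a single shared bean in the list has the same effect. The Hadoop-free demo below imitates that reuse with a hypothetical iterator (`ReuseDemo`, `Rec`, and `reusingValues` are illustrative names, not Hadoop classes) and compares keeping references against copying.

```java
import java.util.ArrayList;
import java.util.Iterator;
import java.util.List;
import java.util.NoSuchElementException;

// Hypothetical stand-in for Hadoop's value iterator, which reuses one object
final class ReuseDemo {

    static final class Rec {
        String orderId;
    }

    static Iterable<Rec> reusingValues(List<String> ids) {
        Rec shared = new Rec();
        return () -> new Iterator<Rec>() {
            private int i = 0;

            @Override
            public boolean hasNext() {
                return i < ids.size();
            }

            @Override
            public Rec next() {
                if (!hasNext()) {
                    throw new NoSuchElementException();
                }
                shared.orderId = ids.get(i++); // overwrite the single shared instance
                return shared;
            }
        };
    }

    static List<String> orderIds(List<Rec> recs) {
        List<String> out = new ArrayList<>();
        for (Rec r : recs) {
            out.add(r.orderId);
        }
        return out;
    }

    // Buggy pattern: every stored reference points at the same object
    static List<String> collectWithoutCopy(List<String> ids) {
        List<Rec> kept = new ArrayList<>();
        for (Rec r : reusingValues(ids)) {
            kept.add(r);
        }
        return orderIds(kept);
    }

    // Fixed pattern: copy each value into a new object before storing it
    static List<String> collectWithCopy(List<String> ids) {
        List<Rec> kept = new ArrayList<>();
        for (Rec r : reusingValues(ids)) {
            Rec copy = new Rec();
            copy.orderId = r.orderId;
            kept.add(copy);
        }
        return orderIds(kept);
    }

    public static void main(String[] args) {
        List<String> ids = List.of("1001", "1004");
        System.out.println("without copy: " + collectWithoutCopy(ids)); // [1004, 1004]
        System.out.println("with copy:    " + collectWithCopy(ids));    // [1001, 1004]
    }
}
```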

4. Finally, write the Driver class:

```java
package hxy.Order.Ordermr;

import hxy.Order.bean.orderBean;
import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;

public class OrderDriver {

    public static void main(String[] args) throws IOException, ClassNotFoundException, InterruptedException {
        Job job = Job.getInstance(new Configuration());
        // Lets Hadoop locate the jar containing these classes when the job runs on a cluster
        job.setJarByClass(OrderDriver.class);

        job.setMapperClass(OrderMapper.class);
        job.setReducerClass(OrderReducer.class);

        // Map output: key = pd_id (the join key), value = tagged bean
        job.setMapOutputKeyClass(Text.class);
        job.setMapOutputValueClass(orderBean.class);

        // Final output: the joined bean (written via toString) with no value
        job.setOutputKeyClass(orderBean.class);
        job.setOutputValueClass(NullWritable.class);

        // Local Windows paths; the output directory must not exist before the job runs
        FileInputFormat.setInputPaths(job, new Path("E:\\MR\\orderinput"));
        FileOutputFormat.setOutputPath(job, new Path("E:\\MR\\orderOutput"));

        boolean success = job.waitForCompletion(true);
        System.exit(success ? 0 : 1);
    }
}
```
