一、认识Hive:

1数据库与数据仓库

数据库:

mysql、oracle、sqlserver、DB2、sqlite、MDB

数据仓库:

Hive,是MR的客户端,也就是说不必要每台机器都安装部署Hive

1本质是什么?

理性认知:将SQL转换为MapReduce程序

1Hive的特性

(1)操作接口是采用SQL语法,HQL

(2)避免了写MapReduce的繁琐过程

1Hive体系结构

(1)Client

终端命令行

JDBC – 不常用,非常麻烦(相对于前者)

(2)metastore

原本的数据集和字段名称以及数据信息之间的双射关系。

我们目前是存储在Mysql中

(3)Server-Hadoop

在操作Hive的同时,需要将Hadoop的HDFS开启,YARN开启,MAPRED配置好

二、Hive的部署与安装

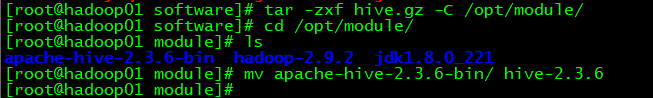

1、解压Hive到安装目录(可修改文件名称)

tar -zxf hive.gz -C /opt/module/

2、重命名配置文件

mv hive-default.xml.template hive-site.xml

mv hive-env.sh.template hive-env.sh

mv hive-log4j2.properties.template hive-log4j2.properties

rm -rf *.cmd

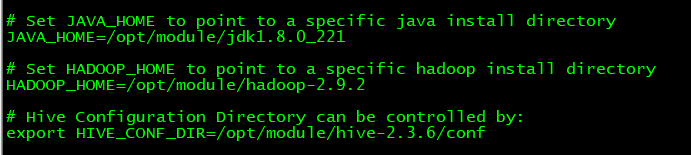

3、配置hive-env.sh中JDK和Hadoop路径并添加环境变量

JAVA_HOME=/opt/module/jdk1.8.0_121

HADOOP_HOME=/opt/module/cdh/hadoop-2.5.0-cdh5.3.6/

<-- hive启动加载的配置文件目录 -->

export HIVE_CONF_DIR=/opt/module/hive-2.3.6/conf

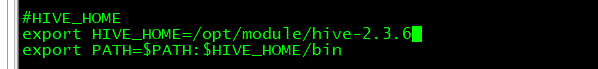

配置环境变量

使环境变量生效:

source /etc/profile

4、安装Mysql

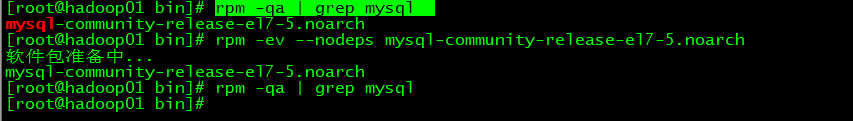

在安装之前,先检查是否安装过mysql,若存在,则删除,没有,则忽略:

rpm -qa | grep mysql

rpm -ev --nodeps mysql-community-release-el7-5.noarch

su - root

yum -y install wget

yum -y install mysql mysql-server mysql-devel

wget http://dev.mysql.com/get/mysql-community-release-el7-5.noarch.rpm

rpm -ivh mysql-community-release-el7-5.noarch.rpm

yum -y install mysql-community-server

若下载速度太慢,可尝试pip加速

临时方法,通过-i参数指定镜像地址(这里用清华大学的)

wget -i https://pypi.tuna.tsinghua.edu.cn/simple

提示:如果使用离线绿色版本(免安装版本)需要手动初始化Mysql数据库

5、配置Mysql

5.1 开启Mysql服务

/bin/systemctl start mysqld.service

service mysqld start(我是用的这个)

5.2 设置root用户密码

mysqladmin -uroot password ‘111111’

5.3 为用户以及其他机器节点授权(让其他机器都有访问MySQL数据库的权限)

进入MySQL:mysql -uroot -p

mysql> grant all on *.* to root@'hadoop-senior01.itguigu.com' identified by '123456';

mysql> grant all on *.* to root@'hadoop101' identified by '1';

mysql> grant all on *.* to root@'hadoop102' identified by '1';

mysql> grant all on *.* to root@'hadoop103' identified by '1';

mysql> grant all on *.* to root@'hadoop104' identified by '000000';

grant:授权

all:所有权限

*.*:数据库名称.表名称

root:操作mysql的用户

@'':主机名

密码:123456

刷新权限

mysql> flush privileges;

5.4 修改hive-site.xml

<property>

<name>javax.jdo.option.ConnectionURL</name>

<value>jdbc:mysql://hadoop10:3306/metastore?createDatabaseIfNotExist=true</value>

<description>JDBC connect string for a JDBC metastore</description>

</property>

<property>

<name>javax.jdo.option.ConnectionDriverName</name>

<value>com.mysql.jdbc.Driver</value>

<description>Driver class name for a JDBC metastore</description>

</property>

<property>

<name>javax.jdo.option.ConnectionUserName</name>

<value>root</value>

<description>username to use against metastore database</description>

</property>

<property>

<name>javax.jdo.option.ConnectionPassword</name>

<value>123456</value>

<description>password to use against metastore database</description>

</property>

5.5 hive-log4j.properties

hive.log.dir=/opt/modules/cdh/hive-0.13.1-cdh5.3.6/logs

5.6 拷贝数据库驱动包到Hive根目录下的lib文件夹(hive去操作MySQL需要用到)

$ cp -a mysql-connector-java-5.1.27-bin.jar /opt/modules/cdh/hive-0.13.1-cdh5.3.6/lib/

cp-amysql-connector-java-5.1.47.jar/opt/module/hive-0.13.1-cdh5.3.6/lib/

5.7 启动Hive

[root@hadoop101 hive-0.13.1-cdh5.3.6]# bin/hive

$ bin/hive

5.8 修改HDFS系统中关于Hive的一些目录权限

$ /opt/module/hadoop-2.5.0-cdh5.3.6/bin/hadoop fs -chmod 777 /tmp/

$ /opt/module/hadoop-2.5.0-cdh5.3.6/bin/hadoop fs -chmod 777 /user/hive/warehouse

5.9 显示数据库名称以及字段名称

hive.cli.print.header

true

Whether to print the names of the columns in query output.

<!-- 是否在当前客户端中显示当前所在数据库名称 -->

<property>

<name>hive.cli.print.current.db</name>

<value>true</value>

<description>Whether to include the current database in the Hive prompt.</description>

</property>

5.10 创建数据库

hive> create database staff;

5.11 进入staff数据库

hive>use staff

5.12 创建表操作(默认分隔符是\t,如果是分隔符需要自己写函数)

hive> create table t1(eid int, name string, sex string,age string) row format delimited fields terminated by ‘\t’;

5.13 导入数据(语句时在hive环境中写)

查看表结构:desc

Mkdir input; create empl.txt;cat empl.txt;

从本地导入

load data local inpath ‘文件路径’ into table tablename;

从HDFS系统导入

load data inpath ‘文件路径’ into table tablename;

load data local inpath ‘opt/module/ hive-0.13.1-cdh5.3.6/input/empl.txt’ into table t1;

<property>

<name>hive.exec.local.scratchdir</name>

<value>/opt/module/hive-2.3.6/tmp//${system:user.name}</value>

<description>Local scratch space for Hive jobs</description>

</property>

<property>

<name>hive.downloaded.resources.dir</name>

<value>/opt/module/hive-2.3.6/tmp//${hive.session.id}_resources</value>

<description>Temporary local directory for added resources in the remote file system.</description>

</property>

<property>

<name>hive.server2.logging.operation.log.location</name>

<value>/opt/module/hive-2.3.6/logs</value>

<description>Top level directory where operation logs are stored if logging functionality is enabled</description>

</property>

747

747

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?