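I. Preparation

- Create a hadoop account.

- Change the IP address of each machine.

- Install Java, then edit /etc/profile to set the environment variable:

`export JAVA_HOME=/usr/java/jdk1.7.0_71`

- Edit the hosts file to map the host names:

`172.16.133.149 hadoop101`

`172.16.133.150 hadoop102`

`172.16.133.151 hadoop103`

- Install ssh and configure passwordless login.

- Extract Hadoop to:

`/hadoop/hadoop-2.6.2`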
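The preparation steps above could be scripted roughly as follows. This is a minimal sketch, not the script used for this post: `append_once` and `setup_passwordless_ssh` are hypothetical helper names, and the sketch assumes the `hadoop` account already exists on every node. The paths and addresses are the ones listed above.

```shell
#!/usr/bin/env bash
# Sketch only: helper names are hypothetical, values are taken from the post.

# Append a line to a file unless an identical line is already there,
# so the script can be re-run without duplicating entries.
append_once() {
  local line="$1" file="$2"
  grep -qxF -- "$line" "$file" 2>/dev/null || printf '%s\n' "$line" >> "$file"
}

# Generate a key pair (if missing) and push the public key to each host,
# so the start scripts can log in without a password.
setup_passwordless_ssh() {
  [ -f "$HOME/.ssh/id_rsa" ] || ssh-keygen -t rsa -N '' -f "$HOME/.ssh/id_rsa"
  local host
  for host in "$@"; do
    ssh-copy-id "hadoop@$host"
  done
}

# Example usage (as root for the two system files, as hadoop for ssh):
#   append_once 'export JAVA_HOME=/usr/java/jdk1.7.0_71' /etc/profile
#   append_once '172.16.133.149 hadoop101' /etc/hosts
#   append_once '172.16.133.150 hadoop102' /etc/hosts
#   append_once '172.16.133.151 hadoop103' /etc/hosts
#   setup_passwordless_ssh hadoop101 hadoop102 hadoop103
```

Nothing runs until the helpers are called, so the file can be sourced and the usage lines executed one at a time.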

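II. Edit the configuration files under conf

Edit hadoop-env.sh, core-site.xml, hdfs-site.xml, mapred-site.xml, yarn-site.xml and the slaves file in turn. In the Hadoop 2.x directory layout these files are in etc/hadoop under the installation directory.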

1.hadoop-env.sh

`# Add JAVA_HOME:`

`export JAVA_HOME=/usr/java/jdk1.7.0_71`

2.core-site.xml

<configuration>

<property>

<name>hadoop.tmp.dir</name>

<value>/hadoop/hadoop-2.6.2/hdfs/tmp</value>

</property>

<property>

<name>fs.default.name</name>

<value>hdfs://hadoop101:9000</value>

</property>

</configuration>

3.hdfs-site.xml

<configuration>

<property>

<name>dfs.replication</name>

<value>1</value>

</property>

<property>

<name>dfs.namenode.name.dir</name>

<value>file:///hadoop/hadoop-2.6.2/hdfs/name</value>

</property>

<property>

<name>dfs.datanode.data.dir</name>

<value>file:///hadoop/hadoop-2.6.2/hdfs/data</value>

</property>

<property>

<name>dfs.namenode.secondary.http-address</name>

<value>hadoop101:9001</value>

</property>

<property>

<name>dfs.permissions</name>

<value>false</value>

</property>

</configuration>
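The three local paths configured so far (`hdfs/tmp`, `hdfs/name` and `hdfs/data` under `/hadoop/hadoop-2.6.2`) can be created ahead of time. Hadoop can usually create them itself when the parent directory is writable by the hadoop user, so treat this as an optional precaution. `make_hdfs_dirs` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Sketch only: make_hdfs_dirs is a hypothetical helper name.

# Create the tmp, name and data directories under <base>/hdfs.
make_hdfs_dirs() {
  local base="$1" d
  for d in tmp name data; do
    mkdir -p "$base/hdfs/$d"
  done
}

# Example usage, as the hadoop user:
#   make_hdfs_dirs /hadoop/hadoop-2.6.2
```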

4.mapred-site.xml

<configuration>

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

</configuration>

5.yarn-site.xml

<configuration>

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<property>

<name>yarn.resourcemanager.hostname</name>

<value>hadoop101</value>

</property>

<property>

<name>yarn.nodemanager.aux-services.mapreduce_shuffle.class</name>

<value>org.apache.hadoop.mapred.ShuffleHandler</value>

</property>

</configuration>
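Before copying the files to the slaves, it can help to read the values back out of the XML and catch typos. The following is a rough sketch using awk, assuming each `<name>` and `<value>` element sits on a single line as in the listings above. It is not a real XML parser, and `get_prop` is a hypothetical helper name. On a working installation, `hdfs getconf -confKey <key>` reports the value Hadoop itself resolves.

```shell
#!/usr/bin/env bash
# Sketch only: get_prop is a hypothetical helper name.

# Print the <value> that follows the <name> matching the given key.
#   $1 = path to a *-site.xml file, $2 = property name
get_prop() {
  awk -v key="$2" '
    /<name>/  { n = $0; gsub(/.*<name>|<\/name>.*/, "", n) }
    /<value>/ {
      v = $0; gsub(/.*<value>|<\/value>.*/, "", v)
      if (n == key) { print v; exit }
    }
  ' "$1"
}

# Example usage (from the directory holding the configuration files):
#   get_prop core-site.xml fs.default.name
#   get_prop hdfs-site.xml dfs.replication
```

An empty result means the key was not found in that file.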

6.slaves

hadoop102

hadoop103

7.Finally, use scp to copy the whole hadoop-2.6.2 folder, including its subfolders, to the same directory on the two slaves (hadoop102 and hadoop103):

`scp -r /hadoop/hadoop-2.6.2/ hadoop@hadoop102:/hadoop/`

`scp -r /hadoop/hadoop-2.6.2/ hadoop@hadoop103:/hadoop/`

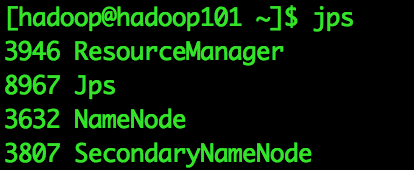

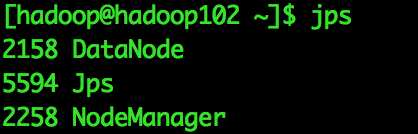

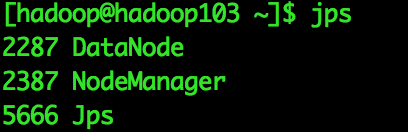
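With more than a couple of slaves, the copy step can be driven from the slaves file instead of typing one scp command per host. A minimal sketch, assuming passwordless ssh is already working and the file holds one hostname per line. `copy_to_slaves` and the `DRY_RUN` switch are hypothetical names introduced here.

```shell
#!/usr/bin/env bash
# Sketch only: copy_to_slaves and DRY_RUN are hypothetical names.

# Copy a directory into the same parent directory on every host listed
# in a slaves file. With DRY_RUN=1 the scp commands are printed instead
# of being executed.
#   $1 = directory to copy, $2 = slaves file, $3 = remote user (default hadoop)
copy_to_slaves() {
  local src="$1" slaves_file="$2" user="${3:-hadoop}"
  local dest host
  dest="$(dirname "$src")/"
  while IFS= read -r host || [ -n "$host" ]; do
    [ -n "$host" ] || continue            # skip blank lines
    if [ "${DRY_RUN:-0}" = "1" ]; then
      echo "scp -r $src $user@$host:$dest"
    else
      # stdin is redirected so scp cannot swallow the rest of the list
      scp -r "$src" "$user@$host:$dest" < /dev/null
    fi
  done < "$slaves_file"
}

# Example usage:
#   DRY_RUN=1 copy_to_slaves /hadoop/hadoop-2.6.2 /hadoop/hadoop-2.6.2/etc/hadoop/slaves
```

Running with `DRY_RUN=1` first shows exactly which commands would be issued.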

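III. Start Hadoop (from inside the hadoop folder)

Format the NameNode:

`hdfs namenode -format`

Start the NameNode, SecondaryNameNode and DataNode:

`[hadoop@hadoop101]$ start-dfs.sh`

Start the ResourceManager and NodeManager:

`[hadoop@hadoop101]$ start-yarn.sh`

If the bin and sbin directories are not on PATH, run these as bin/hdfs, sbin/start-dfs.sh and sbin/start-yarn.sh.

Final result: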

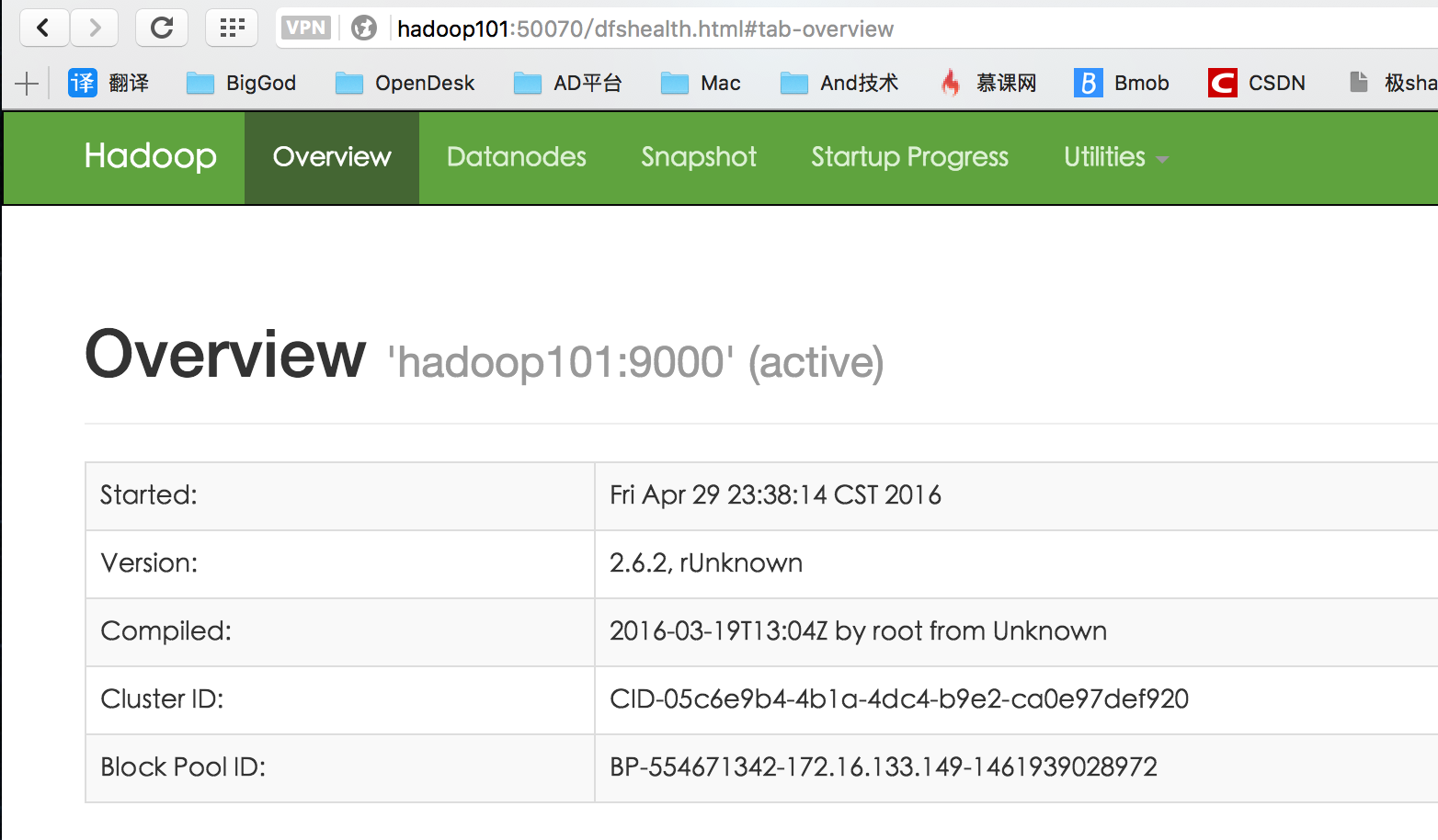
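The same check can be made from the command line with `jps`, which lists the running Java processes. With the slaves file used here, the master (hadoop101) should show NameNode, SecondaryNameNode and ResourceManager, and each slave should show DataNode and NodeManager. The sketch below reads `jps` output and reports what is missing. `missing_daemons` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Sketch only: missing_daemons is a hypothetical helper name.

# Read `jps` output on stdin and print every expected daemon name that
# does not appear in it. Prints nothing when all of them are running.
missing_daemons() {
  local out name
  out="$(cat)"
  for name in "$@"; do
    # jps prints "<pid> <name>"; compare the second field exactly
    printf '%s\n' "$out" \
      | awk -v n="$name" '$2 == n { found = 1 } END { exit !found }' \
      || echo "$name"
  done
}

# Example usage:
#   on hadoop101:  jps | missing_daemons NameNode SecondaryNameNode ResourceManager
#   on a slave:    jps | missing_daemons DataNode NodeManager
```

If a name is printed, the matching log file under the logs directory of the installation is the usual place to look next.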

IV. Test Hadoop

Test HDFS

Open http://<NameNode hostname or IP>:50070 in a browser.

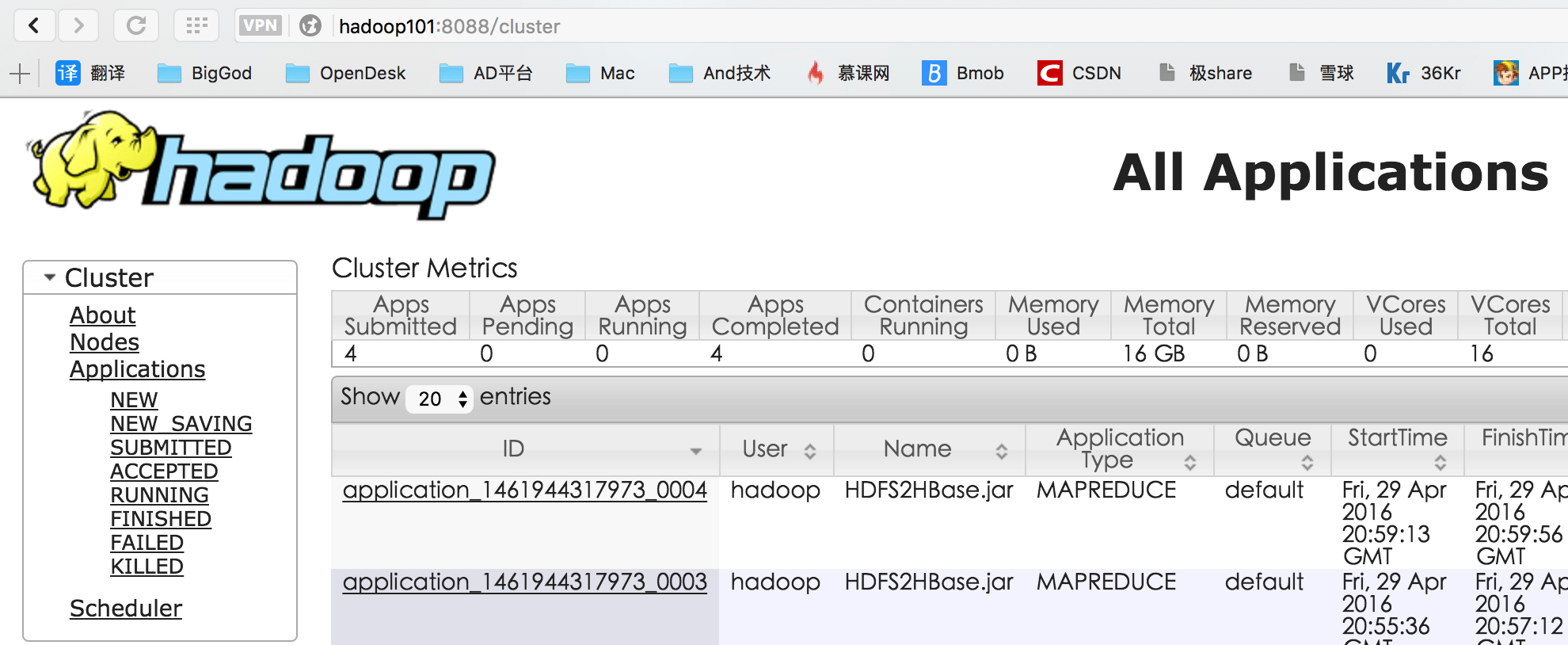

Test the ResourceManager

Open http://<ResourceManager hostname or IP>:8088 in a browser.
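On a machine without a browser, both web UIs can be probed with curl instead. A minimal sketch, assuming curl is installed and that hadoop101 hosts both the NameNode and the ResourceManager as configured above. `http_status` and `is_up` are hypothetical helper names.

```shell
#!/usr/bin/env bash
# Sketch only: http_status and is_up are hypothetical helper names.

# Print the HTTP status code for a URL ("000" if the connection fails).
http_status() {
  curl -s -o /dev/null -m 5 -w '%{http_code}' "$1"
}

# Succeed for any 2xx or 3xx status code.
is_up() {
  case "$1" in
    2??|3??) return 0 ;;
    *)       return 1 ;;
  esac
}

# Example usage with the master host from this post:
#   is_up "$(http_status http://hadoop101:50070)" && echo "HDFS UI reachable"
#   is_up "$(http_status http://hadoop101:8088)"  && echo "YARN UI reachable"
```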
