Original source: http://www.cnblogs.com/linbingdong/p/5806329.html

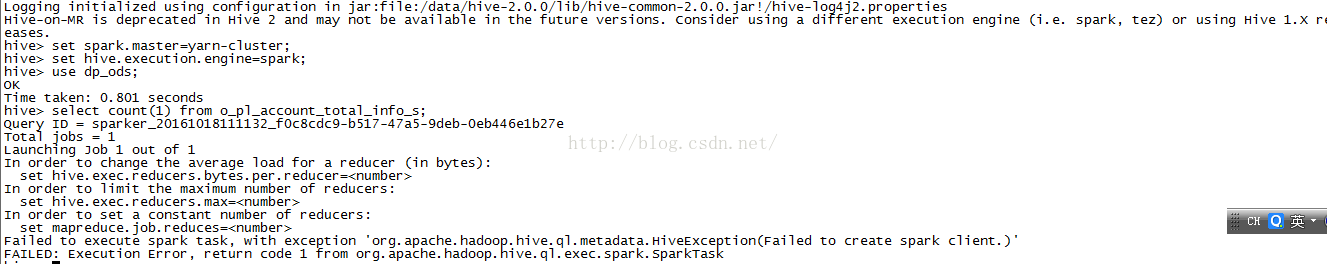

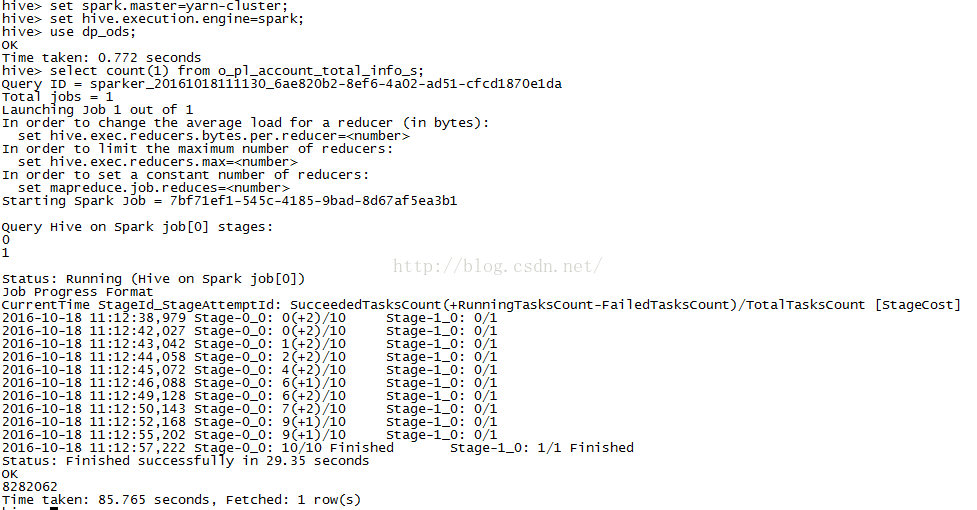

On a working Hive on Spark setup, when two Hive sessions are opened at the same time, one job runs normally and the other does not.

The cause is that another Spark application is already running, so the new Spark job either waits or fails. The screenshots above show the details.

Solution: raise YARN's application concurrency in the Hadoop configuration. In /etc/hadoop/conf/capacity-scheduler.xml, change yarn.scheduler.capacity.maximum-am-resource-percent from 0.1 to 0.5:

<property>
  <name>yarn.scheduler.capacity.maximum-am-resource-percent</name>
  <value>0.5</value>
  <description>
    Maximum percent of resources in the cluster which can be used to run
    application masters i.e. controls number of concurrent running
    applications.
  </description>
</property>
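To see why this setting matters, here is a rough back-of-the-envelope sketch in Python. It is not the scheduler's actual algorithm, which works per queue and also considers vcores and user limits. The function name and the cluster numbers (16 GB of YARN memory, 2 GB per ApplicationMaster container) are assumptions chosen for illustration only.

```python
def max_concurrent_ams(cluster_memory_mb: int,
                       am_memory_mb: int,
                       am_resource_percent: float) -> int:
    """Rough estimate of how many ApplicationMasters can run at once.

    Hypothetical helper: it considers memory only and ignores queues,
    vcores, and per-user limits that the real scheduler also applies.
    """
    # Share of cluster memory that ApplicationMasters may occupy.
    am_budget_mb = cluster_memory_mb * am_resource_percent
    # Assumption: at least one application is always admitted,
    # so the estimate never drops below 1.
    return max(1, int(am_budget_mb // am_memory_mb))


if __name__ == "__main__":
    cluster_mb = 16 * 1024   # assumed total YARN memory
    am_mb = 2 * 1024         # assumed size of one AM container
    for percent in (0.1, 0.5):
        print(percent, max_concurrent_ams(cluster_mb, am_mb, percent))
```

With these assumed numbers, the 0.1 setting leaves room for only one ApplicationMaster, which matches the symptom of the second Hive session waiting, while 0.5 leaves room for several. After editing capacity-scheduler.xml, the change can typically be applied with `yarn rmadmin -refreshQueues` or by restarting the ResourceManager.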
