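I. Basic Elasticsearch concepts

1.1 Introduction

Elasticsearch is a search server built on Lucene. It provides a distributed, multi-tenant full-text search engine exposed through a RESTful web interface. Elasticsearch is written in Java, was released as open source under the Apache license, and is a popular enterprise search engine.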


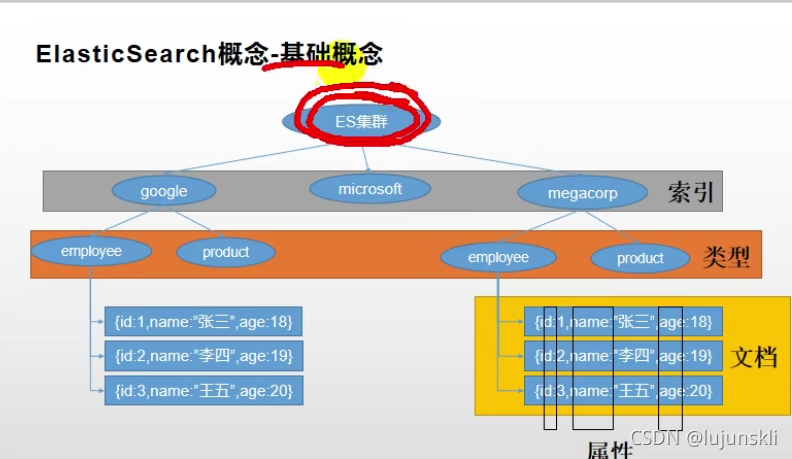

ES has three main concepts:

1. Index: comparable to a database in MySQL

2. Type: comparable to a table in MySQL

3. Document: comparable to the rows stored in a MySQL table

Comparison diagram:

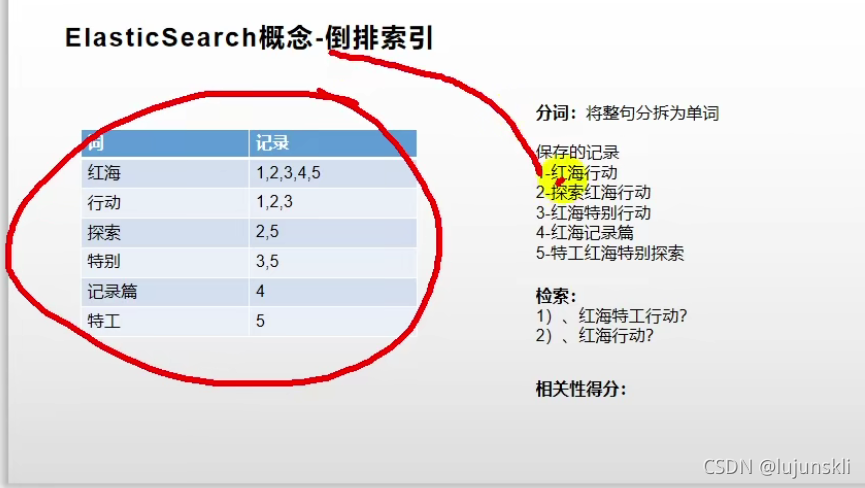

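Why can ES locate data so quickly in a massive data set? Because of the inverted index. Where MySQL would fall back to a full table scan, ES maintains an inverted index as it stores data (as shown in the figure), recording which documents each term appears in. For example, a search for 红海特工行动 ("Red Sea agent operation") is split into three terms: 红海 (Red Sea), 特工 (agent), and 行动 (operation). Each term is looked up in the index, and documents that hit more of the terms are better matches; in the figure, record 3 is a good match because it hits two of them.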
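The idea can be sketched in a few lines of Java. This is only a toy model, not how Lucene stores its index: the five documents are hypothetical, they are already split into terms, and the hit count stands in for a real relevance score.

```java
import java.util.*;

public class InvertedIndexSketch {

    // Build term -> ids of the documents that contain that term.
    static Map<String, Set<Integer>> buildIndex(Map<Integer, List<String>> docs) {
        Map<String, Set<Integer>> index = new HashMap<>();
        for (Map.Entry<Integer, List<String>> doc : docs.entrySet()) {
            for (String term : doc.getValue()) {
                index.computeIfAbsent(term, t -> new TreeSet<>()).add(doc.getKey());
            }
        }
        return index;
    }

    // Count, per document, how many of the query terms it contains.
    static Map<Integer, Integer> hitCounts(Map<String, Set<Integer>> index, List<String> queryTerms) {
        Map<Integer, Integer> hits = new TreeMap<>();
        for (String term : queryTerms) {
            for (Integer id : index.getOrDefault(term, Collections.<Integer>emptySet())) {
                hits.merge(id, 1, Integer::sum);
            }
        }
        return hits;
    }

    // Hypothetical, already tokenized documents (id -> terms).
    static Map<Integer, List<String>> sampleDocs() {
        Map<Integer, List<String>> docs = new LinkedHashMap<>();
        docs.put(1, Arrays.asList("red-sea", "operation"));
        docs.put(2, Arrays.asList("explore", "red-sea", "operation"));
        docs.put(3, Arrays.asList("red-sea", "special", "operation"));
        docs.put(4, Arrays.asList("red-sea", "documentary"));
        docs.put(5, Arrays.asList("agent", "red-sea", "special", "exploration"));
        return docs;
    }

    public static void main(String[] args) {
        Map<String, Set<Integer>> index = buildIndex(sampleDocs());
        // Only the three query terms are looked up; no document is scanned.
        System.out.println(hitCounts(index, Arrays.asList("red-sea", "agent", "operation")));
    }
}
```

The lookup cost depends on the number of query terms and the size of their posting lists, not on the total number of documents, which is the contrast with a full table scan.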

II. Installation

2.1 Installing ES

# 1. Pull the images: ES stores and searches the data, Kibana mainly provides a visual interface

docker pull elasticsearch:7.4.2

docker pull kibana:7.4.2

# 2. Create the directories to mount and the configuration

mkdir -p /mydata/elasticsearch/config

mkdir -p /mydata/elasticsearch/data

# 3. Edit the configuration so that ES accepts remote connections

vi /mydata/elasticsearch/config/elasticsearch.yml

... "http.host: 0.0.0.0" ...

# 4. Create the container with its mounted volumes

docker run --name elasticsearch -p 9200:9200 -p 9300:9300 \

-e "discovery.type=single-node" \

-e ES_JAVA_OPTS="-Xms64m -Xmx512m" \

-v /mydata/elasticsearch/config/elasticsearch.yml:/usr/share/elasticsearch/config/elasticsearch.yml \

-v /mydata/elasticsearch/data:/usr/share/elasticsearch/data \

-v /mydata/elasticsearch/plugins:/usr/share/elasticsearch/plugins \

-d elasticsearch:7.4.2

# 5. ES still cannot start at this point

# Recursively change the permissions, because ES needs access to these directories

chmod -R 777 /mydata/elasticsearch/

# Make elasticsearch start automatically on boot

docker update elasticsearch --restart=always

# To check: open <ip>:9200; if ES information is returned, startup succeeded

# -e specifies the address of the ES instance that Kibana connects to

docker run --name kibana -e ELASTICSEARCH_HOSTS=http://192.168.56.10:9200 -p 5601:5601 -d kibana:7.4.2

# Make kibana start automatically on boot

docker update kibana --restart=always

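2.2 Installing the IK analyzer

- When installing elasticsearch above, we already mapped the container directory "/usr/share/elasticsearch/plugins" to "/mydata/elasticsearch/plugins" on the host, so installing the analyzer is simple: download "elasticsearch-analysis-ik-7.4.2.zip", unzip it into that directory, and restart ES.

- Alternatively, install it from inside the ES container: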

[vagrant@localhost ~]$ sudo docker exec -it elasticsearch /bin/bash

[root@66718a266132 elasticsearch]# pwd

/usr/share/elasticsearch

[root@66718a266132 elasticsearch]# yum install wget

[root@66718a266132 elasticsearch]# wget https://github.com/medcl/elasticsearch-analysis-ik/releases/download/v7.4.2/elasticsearch-analysis-ik-7.4.2.zip

[root@66718a266132 elasticsearch]# unzip elasticsearch-analysis-ik-7.4.2.zip -d ik

[root@66718a266132 elasticsearch]# mv ik plugins/

chmod -R 777 plugins/ik

docker restart elasticsearch

2.3 Installing nginx

docker pull nginx:1.10

# Start a temporary container just to copy its configuration out

docker run -p 80:80 --name nginx -d nginx:1.10

cd /mydata/nginx

docker container cp nginx:/etc/nginx .

The host directory /mydata/nginx/nginx now contains the copied configuration files

mv /mydata/nginx/nginx /mydata/nginx/conf

# Stop and remove the temporary nginx container

docker stop nginx

docker rm nginx

# Create the new nginx container

docker run -p 80:80 --name nginx \

-v /mydata/nginx/html:/usr/share/nginx/html \

-v /mydata/nginx/logs:/var/log/nginx \

-v /mydata/nginx/conf:/etc/nginx \

-d nginx:1.10

# Note that /mydata/nginx/html is mapped to /usr/share/nginx/html, so the nginx configuration file must refer to /usr/share/nginx/html, not /mydata/nginx/html

docker update nginx --restart=always

III. Using the native API

3.1 Getting started (basic CRUD)

3.1.1 _cat

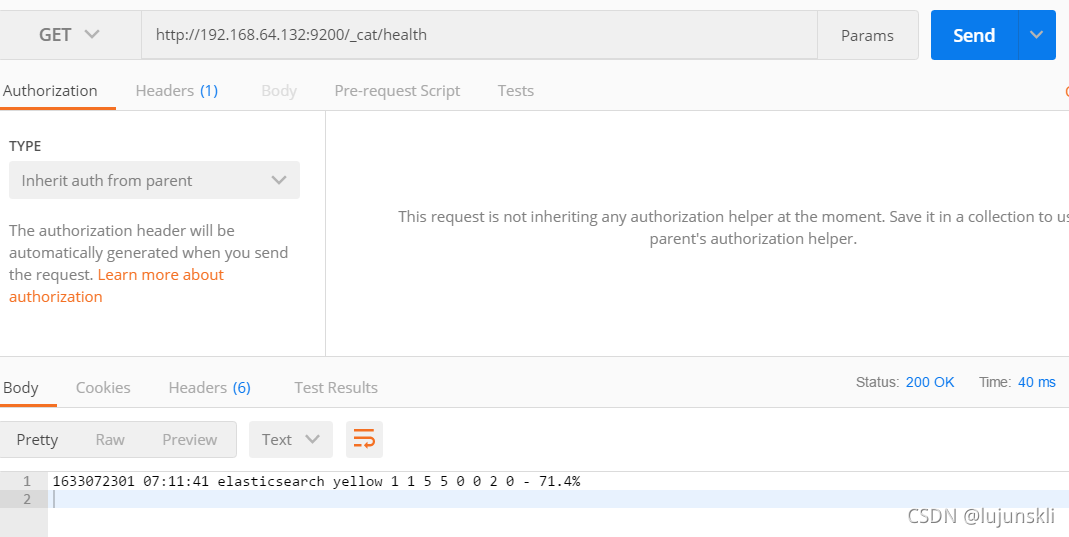

- GET /_cat/nodes: list all nodes

- GET /_cat/health: show ES health information

- GET /_cat/master: show the master node

- GET /_cat/indices: list all indices

- The examples below use Postman

3.1.2 Saving a document

- To save a document, specify the index and type it belongs to and the unique id to store it under

PUT http://192.168.64.132:9200/customer/external/1

# Request body

{

"name":"lujun"

}

# Response

# Fields starting with _ are metadata

{

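# the index (database) the document is in

"_index": "customer",

# the type (table) the document is in

"_type": "external",

# generated automatically if not specified

"_id": "1",

# this is the result of running the request twice; on the first run _version is 1 and result is "created"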

"_version": 2,

"result": "updated",

"_shards": {

"total": 2,

"successful": 1,

"failed": 0

},

"_seq_no": 1,

"_primary_term": 4

}

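PS: both PUT and POST work. POST creates a document; if no id is given, one is generated automatically. If the given id already exists, that document is updated and its version number is incremented.

PUT can both create and update, but it must specify an id; because of that it is generally used for updates. A PUT without an id returns an error.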

3.1.3 Query, update, delete

1. Query

GET http://192.168.64.132:9200/customer/external/1

# Response

{

"_index": "customer",

"_type": "external",

"_id": "1",

"_version": 2,

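# _seq_no: used for concurrency control, incremented on every update

# _primary_term: also used for concurrency control; it changes when the primary shard is reassigned, for example after a restart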

"_seq_no": 1,

"_primary_term": 4,

"found": true,

"_source": {

"name": "lujun"

}

}

2. Update

POST http://192.168.64.132:9200/customer/external/1

{

"name":"lujun2"

}

# Update and add a field at the same time

POST http://192.168.64.132:9200/customer/external/1

{

"name":"lujun2",

"xx":"xx"

}

3. Delete a document

DELETE http://192.168.64.132:9200/customer/external/1

Delete an index

DELETE http://192.168.64.132:9200/customer

Delete a type: not supported

4. Bulk operations (note: run these in Kibana)

POST /customer/external/_bulk

{"index":{"_id":"1"}}

{"name":"luijune"}

{"index":{"_id":"2"}}

{"name":"lujun2"}

A more complex example

POST /_bulk

{"delete":{"_index":"website","_type":"blog","_id":"123"}}

{"create":{"_index":"website","_type":"blog","_id":"123"}}

{"title":"my first blog post"}

{"index":{"_index":"website","_type":"blog"}}

{"title":"my second blog post"}

{"update":{"_index":"website","_type":"blog","_id":"123"}}

{"doc":{"title":"my updated blog post"}}
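The bulk body above is newline-delimited JSON: each operation is one action line, followed by one source line for index, create and update (delete has none), and the body must end with a newline. As a rough sketch of how a client could assemble such a body, assuming the JSON lines are supplied as ready-made strings (the class and method names are illustrative, not part of any ES library):

```java
import java.util.*;

public class BulkBodySketch {

    // Each operation is {actionLine} or {actionLine, sourceLine}.
    static String bulkBody(List<String[]> operations) {
        StringBuilder body = new StringBuilder();
        for (String[] op : operations) {
            body.append(op[0]).append('\n');       // action and metadata
            if (op.length > 1) {
                body.append(op[1]).append('\n');   // document source or partial doc
            }
        }
        return body.toString();                    // ends with '\n', as _bulk requires
    }

    public static void main(String[] args) {
        List<String[]> ops = new ArrayList<>();
        ops.add(new String[] {"{\"index\":{\"_id\":\"1\"}}", "{\"name\":\"lujun\"}"});
        ops.add(new String[] {"{\"delete\":{\"_id\":\"2\"}}"});
        System.out.print(bulkBody(ops));
    }
}
```

Each operation in a bulk request succeeds or fails on its own, so a client should check the per-item results in the response rather than only the HTTP status.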

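3.2 Search

Official documentation: https://www.elastic.co/guide/en/elasticsearch/reference/7.x/getting-started-search.html

There are two main ways to search through the REST API: (a) appending parameters to the URL, and (b) sending a request body.

3.2.1 Basic format

# q is the query condition; sort is given as field:order

GET bank/_search?q=*&sort=account_number:asc

# Request body

# Elasticsearch provides a JSON-style DSL (domain-specific language) for running queries. It is called the Query DSL, and it is very comprehensive.

# Basic format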

GET bank/_search

{

"query": { # the query condition

"match_all": {}

},

"from": 0, # from + size implement pagination

"size": 5,

"_source":["balance"], # the fields to return

"sort": [

{

"account_number": { # the field to sort the results by

"order": "desc" # descending

}

}

]

}
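Since from and size drive pagination, a page number has to be converted to an offset before the request is built. A minimal sketch, assuming 1-based page numbers (the helper names are made up for illustration):

```java
public class PagingSketch {

    // Convert a 1-based page number into the "from" offset used with "size".
    static int fromOffset(int page, int size) {
        if (page < 1 || size < 1) {
            throw new IllegalArgumentException("page and size must be at least 1");
        }
        return (page - 1) * size;
    }

    // Build a match_all request body for the given page.
    static String searchBody(int page, int size) {
        return "{\"query\":{\"match_all\":{}},\"from\":" + fromOffset(page, size)
                + ",\"size\":" + size + "}";
    }

    public static void main(String[] args) {
        System.out.println(searchBody(1, 5)); // first page: from = 0
        System.out.println(searchBody(3, 5)); // third page: from = 10
    }
}
```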

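3.2.2 Match queries: single field (match and match_phrase)

# Every document whose address contains kings is returned

# Note: non-string values are matched exactly; string values are matched by full-text search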

GET bank/_search

{

"query": {

"match": {

"address": "kings"

}

}

}

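# What if we do need a more exact match? (There are two approaches; note the difference)

# match_phrase: searches for the string as a phrase, without matching its words separately

# match on field.keyword: succeeds only when the whole field value matches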

GET bank/_search

{

"query": {

"match_phrase": {

"address": "mill road" # with match, it would search for mill and road separately, and matching either one is enough

}

}

}

# Comparing match on field.keyword with match_phrase

GET bank/_search

{

"query": {

"match_phrase": {

"address": "990 Mill"

}

}

}

# Result

{

.........

},

"hits" : {

"total" : {

"value" : 1,

"relation" : "eq"

},

"max_score" : 10.806405,

"hits" : [

{

"_index" : "bank",

"_type" : "account",

"_id" : "970",

"_score" : 10.806405,

"_source" : {

"account_number" : 970,

"balance" : 19648,

"firstname" : "Forbes",

"lastname" : "Wallace",

"age" : 28,

"gender" : "M",

"address" : "990 Mill Road", # "990 Mill"

"employer" : "Pheast",

"email" : "forbeswallace@pheast.com",

"city" : "Lopezo",

"state" : "AK"

}

}

]

}

}

# Now using match on field.keyword

GET bank/_search

{

"query": {

"match": {

"address.keyword": "990 Mill"

}

}

}

# No results, because this requires the whole field value to match exactly
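The difference between the three approaches can be modeled in plain Java. This is a simplified sketch, not ES code: analyze is a rough stand-in for the standard analyzer (lowercase, split on non-alphanumeric characters), match uses the default OR behavior, and scoring is ignored.

```java
import java.util.*;

public class MatchModesSketch {

    // Rough stand-in for the standard analyzer: lowercase and split into words.
    static List<String> analyze(String text) {
        List<String> tokens = new ArrayList<>();
        for (String t : text.toLowerCase(Locale.ROOT).split("[^a-z0-9]+")) {
            if (!t.isEmpty()) {
                tokens.add(t);
            }
        }
        return tokens;
    }

    // match: any analyzed query token appears in the field.
    static boolean match(String field, String query) {
        List<String> fieldTokens = analyze(field);
        for (String q : analyze(query)) {
            if (fieldTokens.contains(q)) {
                return true;
            }
        }
        return false;
    }

    // match_phrase: the query tokens appear consecutively and in order.
    static boolean matchPhrase(String field, String query) {
        return Collections.indexOfSubList(analyze(field), analyze(query)) >= 0;
    }

    // field.keyword: the whole, unanalyzed value must be equal.
    static boolean keywordMatch(String field, String query) {
        return field.equals(query);
    }

    public static void main(String[] args) {
        System.out.println(match("198 Mill Lane", "mill road"));        // one token is enough
        System.out.println(matchPhrase("198 Mill Lane", "mill road"));  // phrase is not present
        System.out.println(matchPhrase("990 Mill Road", "990 Mill"));   // phrase is present
        System.out.println(keywordMatch("990 Mill Road", "990 Mill"));  // not the whole value
    }
}
```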

3.2.3 Match queries: multiple fields (multi_match)

GET bank/_search

{

"query": {

"multi_match": {

"query": "mill",

"fields": [ # a match on mill in either state or address is enough

"state",

"address"

]

}

}

}

# Result

{

"took" : 28,

"timed_out" : false,

"_shards" : {

"total" : 1,

"successful" : 1,

"skipped" : 0,

"failed" : 0

},

"hits" : {

"total" : {

"value" : 4,

"relation" : "eq"

},

"max_score" : 5.4032025,

"hits" : [

{

"_index" : "bank",

"_type" : "account",

"_id" : "970",

"_score" : 5.4032025,

"_source" : {

"account_number" : 970,

"balance" : 19648,

"firstname" : "Forbes",

"lastname" : "Wallace",

"age" : 28,

"gender" : "M",

"address" : "990 Mill Road",

"employer" : "Pheast",

"email" : "forbeswallace@pheast.com",

"city" : "Lopezo",

"state" : "AK" #

}

},

{

"_index" : "bank",

"_type" : "account",

"_id" : "136",

"_score" : 5.4032025,

"_source" : {

"account_number" : 136,

"balance" : 45801,

"firstname" : "Winnie",

"lastname" : "Holland",

"age" : 38,

"gender" : "M",

"address" : "198 Mill Lane",

"employer" : "Neteria",

"email" : "winnieholland@neteria.com",

"city" : "Urie",

"state" : "IL"

}

},

{

"_index" : "bank",

"_type" : "account",

"_id" : "345",

"_score" : 5.4032025,

"_source" : {

"account_number" : 345,

"balance" : 9812,

"firstname" : "Parker",

"lastname" : "Hines",

"age" : 38,

"gender" : "M",

"address" : "715 Mill Avenue",

"employer" : "Baluba",

"email" : "parkerhines@baluba.com",

"city" : "Blackgum",

"state" : "KY"

}

},

{

"_index" : "bank",

"_type" : "account",

"_id" : "472",

"_score" : 5.4032025,

"_source" : {

"account_number" : 472,

"balance" : 25571,

"firstname" : "Lee",

"lastname" : "Long",

"age" : 32,

"gender" : "F",

"address" : "288 Mill Street", #

"employer" : "Comverges",

"email" : "leelong@comverges.com",

"city" : "Movico",

"state" : "MT" #

}

}

]

}

}

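3.2.4 Compound queries (bool/must)

First, a few keywords:

- must: every condition listed under must has to match

- must_not: none of the conditions listed under must_not may match

- should: the conditions listed under should ought to match (a match raises the score; a miss is fine)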

GET bank/_search

{

"query":{

"bool":{

"must":[

{"match":{"address":"mill"}},

{"match":{"gender":"M"}}

]

}

}

}

# Note the difference

GET bank/_search

{

"query": {

"bool": {

"must": [

{

"match": {

"gender": "M"

}

},

{

"match": {

"address": "mill"

}

}

],

"must_not": [

{

"match": {

"age": "18"

}

}

],

"should": [

{

"match": {

"lastname": "Wallace"

}

}

]

# more clauses can be added here, separated by commas

}

}

}

3.2.5 Filtering results (filter)

filter does not compute a relevance score:

GET bank/_search

{

"query": {

"bool": {

"must": [

{ "match": {"address": "mill" } }

],

"filter": { # note the nesting level

"range": {

"balance": {

"gte": "10000",

"lte": "20000"

}

}

}

}

}

}

# All documents matching mill are found first, and then filtered by our condition

{

"took" : 2,

"timed_out" : false,

"_shards" : {

"total" : 1,

"successful" : 1,

"skipped" : 0,

"failed" : 0

},

"hits" : {

"total" : {

"value" : 1,

"relation" : "eq"

},

"max_score" : 5.4032025,

"hits" : [

{

"_index" : "bank",

"_type" : "account",

"_id" : "970",

"_score" : 5.4032025,

"_source" : {

"account_number" : 970,

"balance" : 19648,

"firstname" : "Forbes",

"lastname" : "Wallace",

"age" : 28,

"gender" : "M",

"address" : "990 Mill Road",

"employer" : "Pheast",

"email" : "forbeswallace@pheast.com",

"city" : "Lopezo",

"state" : "AK"

}

}

]

}

}

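3.2.6 Matching with term

Like match, term matches against a field:

- use match for full-text (text) fields

- use term for other, non-text fields (it behaves like an exact lookup)

Here is an example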

GET bank/_search

{

"query": {

"term": {

"address": "mill Road"

}

}

}

# No results

# Changing term to match returns several documents; note the difference
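Why the term query finds nothing can be shown with a small model. It assumes address is a text field whose value was lowercased and split into words at index time, which is roughly what the standard analyzer does; term then looks up the query string as is, while match analyzes the query first. The class below is an illustration, not ES code.

```java
import java.util.*;

public class TermVsMatchSketch {

    // Tokens stored for a text field: lowercased words.
    static List<String> indexedTokens(String text) {
        return Arrays.asList(text.toLowerCase(Locale.ROOT).split("\\s+"));
    }

    // term: the query value is NOT analyzed; it must equal one stored token.
    static boolean term(String fieldValue, String queryValue) {
        return indexedTokens(fieldValue).contains(queryValue);
    }

    // match: the query value is analyzed the same way as the field.
    static boolean match(String fieldValue, String queryValue) {
        List<String> tokens = indexedTokens(fieldValue);
        for (String q : indexedTokens(queryValue)) {
            if (tokens.contains(q)) {
                return true;
            }
        }
        return false;
    }

    public static void main(String[] args) {
        System.out.println(term("990 Mill Road", "mill Road"));  // no token equals "mill Road"
        System.out.println(term("990 Mill Road", "mill"));       // "mill" is a stored token
        System.out.println(match("990 Mill Road", "mill Road")); // analyzed to mill, road
    }
}
```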

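3.2.7 Aggregations (grouping)

A convenient feature of ES: it keeps the query results, runs the aggregations we ask for on them, and returns both together

"aggs":{ # the aggregation keyword

"aggs_name":{ # any name; it labels this aggregation in the result

"AGG_TYPE":{} # the aggregation type (for example avg or terms): avg computes the mean, terms counts documents per value; do not confuse the terms aggregation with the term query

}

}

# Find the age distribution and average age of everyone whose address contains mill, without returning the matching documents themselves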

GET bank/_search

{

"query": {

"match": {

"address": "Mill"

}

},

"aggs": {

"ageAgg": {

"terms": {

"field": "age",

"size": 10

}

},

"ageAvg": {

"avg": {

"field": "age"

}

},

"balanceAvg": {

"avg": {

"field": "balance"

}

}

},

"size": 0 # do not return the matching documents, only the aggregations

}

# Result

{

"took" : 2,

"timed_out" : false,

"_shards" : {

"total" : 1,

"successful" : 1,

"skipped" : 0,

"failed" : 0

},

"hits" : {

"total" : {

"value" : 4,

"relation" : "eq"

},

"max_score" : null,

"hits" : [ ]

},

"aggregations" : {

"ageAgg" : { // the first aggregation

"doc_count_error_upper_bound" : 0,

"sum_other_doc_count" : 0,

"buckets" : [

{

"key" : 38, # 2 documents have age 38

"doc_count" : 2

},

{

"key" : 28,

"doc_count" : 1

},

{

"key" : 32,

"doc_count" : 1

}

]

},

"ageAvg" : { // the second aggregation

"value" : 34.0

},

"balanceAvg" : {

"value" : 25208.0

}

}

}
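The numbers in this result can be reproduced by hand from the four mill documents shown earlier (ages 28, 38, 38, 32 and balances 19648, 45801, 9812, 25571). The sketch below uses Java streams as an analogy for the terms and avg aggregations; it is not how ES computes them.

```java
import java.util.*;
import java.util.stream.*;

public class AggregationSketch {

    // {age, balance} of the four accounts whose address contains "mill".
    static final int[][] ACCOUNTS = {
        {28, 19648}, {38, 45801}, {38, 9812}, {32, 25571}
    };

    // Like the terms aggregation on age: one bucket per value with its doc_count.
    static Map<Integer, Long> ageBuckets() {
        return Arrays.stream(ACCOUNTS)
                .collect(Collectors.groupingBy(a -> a[0], TreeMap::new, Collectors.counting()));
    }

    // Like the avg aggregation: column 0 is age, column 1 is balance.
    static double average(int column) {
        return Arrays.stream(ACCOUNTS).mapToInt(a -> a[column]).average().orElse(Double.NaN);
    }

    public static void main(String[] args) {
        System.out.println(ageBuckets());  // {28=1, 32=1, 38=2}
        System.out.println(average(0));    // 34.0
        System.out.println(average(1));    // 25208.0
    }
}
```

The TreeMap orders buckets by key, whereas ES orders terms buckets by doc_count by default, which is why 38 comes first in the response above.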

# Sub-aggregations (aggregating on top of a previous aggregation)

# Group by age, and compute the average balance of the people in each age group

GET bank/_search

{

"query": {

"match_all": {}

},

"aggs": {

"ageAgg": {

"terms": {

"field": "age",

"size": 100

},

"aggs": {

"ageAvg": {

"avg": {

"field": "balance"

}

}

}

}

}

}


# Get the full age distribution and, within each age group, the average balance of M, the average balance of F, and the overall average balance

GET bank/_search

{

"query": {

"match_all": {}

},

"aggs": {

"ageAgg": {

"terms": { # first aggregate on age

"field": "age",

"size": 100

},

"aggs": { # sub-aggregations

"genderAgg": {

"terms": {

"field": "gender.keyword"

},

"aggs": {

"balanceAvg": {

"avg": {

"field": "balance"

}

}

}

},

"ageBalanceAvg": {

"avg": {

"field": "balance"

}

}

}

}

},

"size": 0

}

3.2.8 Nested object aggregation

Arrays of objects are flattened (the values of each property across the objects are stored together)

{

"group": "fans",

"user": [

{ "name": "John", "nicheng": "Smith" },

{ "name": "Alice", "nicheng": "White" }

]

}

# How it is stored

{

"group": "fans",

"user.name": [ "alice", "john" ],

"user.nicheng": [ "smith", "white" ]

}

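# This causes problems when querying, because the link between each user's name and nicheng is lost

# To solve this, use the nested type: declare the field as nested when the array holds objects (not needed when it holds plain values)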

https://blog.csdn.net/weixin_40341116/article/details/80778599
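The problem with flattening is easy to reproduce outside ES. In the sketch below (a plain Java model with made-up method names), the flattened form answers yes to "is there a user named Alice with nicheng Smith?" although no such user exists, while keeping each object as a unit, which is what the nested type does, gives the right answer.

```java
import java.util.*;

public class NestedFlatteningSketch {

    // Flattened storage: each property becomes one multi-valued field,
    // so the pairing of name and nicheng is lost.
    static boolean flattenedMatch(List<String[]> users, String name, String nicheng) {
        Set<String> names = new HashSet<>();
        Set<String> nichengs = new HashSet<>();
        for (String[] u : users) {
            names.add(u[0]);
            nichengs.add(u[1]);
        }
        return names.contains(name) && nichengs.contains(nicheng);
    }

    // Nested storage: each object is matched as its own unit.
    static boolean nestedMatch(List<String[]> users, String name, String nicheng) {
        for (String[] u : users) {
            if (u[0].equals(name) && u[1].equals(nicheng)) {
                return true;
            }
        }
        return false;
    }

    public static void main(String[] args) {
        List<String[]> users = Arrays.asList(
                new String[] {"John", "Smith"},
                new String[] {"Alice", "White"});
        System.out.println(flattenedMatch(users, "Alice", "Smith")); // true: a false positive
        System.out.println(nestedMatch(users, "Alice", "Smith"));    // false
    }
}
```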

3.3 Mapping (omitted)

3.4 Tokenization

POST _analyze

{

"analyzer": "standard",

"text": "The 2 Brown-Foxes bone."

}

# The standard analyzer handles English well but not Chinese, so we specify the analyzer we want to use

GET _analyze

{

"analyzer": "ik_smart",

"text":"我是中国人"

}

- Although the request above splits Chinese text correctly, the IK analyzer does not ship with some internet slang, so we need to define custom words.

- Edit IKAnalyzer.cfg.xml in /usr/share/elasticsearch/plugins/ik/config

<?xml version="1.0" encoding="UTF-8"?>

<!DOCTYPE properties SYSTEM "http://java.sun.com/dtd/properties.dtd">

<properties>

<comment>IK Analyzer extension configuration</comment>

<!-- configure your own extension dictionary here -->

<entry key="ext_dict"></entry>

<!-- configure your own extension stopword dictionary here -->

<entry key="ext_stopwords"></entry>

<!-- configure a remote extension dictionary here -->

<entry key="remote_ext_dict">http://192.168.64.132/es/fenci.txt</entry>

<!-- configure a remote extension stopword dictionary here -->

<!-- <entry key="remote_ext_stopwords">words_location</entry> -->

</properties>

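# Remember to restart after editing

docker restart elasticsearch

# ES applies the updated dictionary only to newly indexed data; existing data is not re-tokenized. To re-tokenize existing data, run:

POST my_index/_update_by_query?conflicts=proceed

2. Using nginx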

mkdir /mydata/nginx/html/es

cd /mydata/nginx/html/es

touch fenci.txt

echo "乔碧萝殿下,yyds" > /mydata/nginx/html/es/fenci.txt

GET _analyze

{

"analyzer": "ik_max_word",

"text":"乔碧萝殿下,yyds"

}

IV. Operating ES from a Java client

- PS: spring-data-elasticsearch wraps the ES operations and is simpler to use; here we use the official Elasticsearch Rest Client instead.

// Dependency

<dependency>

<groupId>org.elasticsearch.client</groupId>

<artifactId>elasticsearch-rest-high-level-client</artifactId>

<version>7.4.2</version>

</dependency>

// Configuration class (an example is in the official docs)

@Configuration

public class GuliESConfig {

public static final RequestOptions COMMON_OPTIONS;

static {

RequestOptions.Builder builder = RequestOptions.DEFAULT.toBuilder();

COMMON_OPTIONS = builder.build();

}

@Bean

public RestHighLevelClient esRestClient() {

RestClientBuilder builder = null;

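// Several ES nodes can be passed here; host is assumed to be a field holding the ES address (its declaration is not shown)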

builder = RestClient.builder(new HttpHost(host, 9200, "http"));

RestHighLevelClient client = new RestHighLevelClient(builder);

return client;

}

}

- Testing the API

// Save

public void indexData() throws IOException {

// Set the index

IndexRequest indexRequest = new IndexRequest ("users");

indexRequest.id("1");

User user = new User();

user.setUserName("lujun");

user.setAge(24);

user.setGender("男");

String jsonString = JSON.toJSONString(user);

// Set the content to save, passing the data and its content type

indexRequest.source(jsonString, XContentType.JSON);

// Create the index if needed and save the data

IndexResponse index = client.index(indexRequest, GuliESConfig.COMMON_OPTIONS);

System.out.println(index);

}

// Query

public void find() throws IOException {

// 1 Create the search request

SearchRequest searchRequest = new SearchRequest();

searchRequest.indices("bank");

SearchSourceBuilder sourceBuilder = new SearchSourceBuilder();

// Build the search conditions

// sourceBuilder.query();

// sourceBuilder.from();

// sourceBuilder.size();

// sourceBuilder.aggregation();

sourceBuilder.query(QueryBuilders.matchQuery("address","mill"));

System.out.println(sourceBuilder.toString());

searchRequest.source(sourceBuilder);

// 2 Run the search

SearchResponse response = client.search(searchRequest, GuliESConfig.COMMON_OPTIONS);

// 3 Inspect the response

System.out.println(response.toString());

}

// Aggregation query

public void findWithAggregations() throws IOException {

// 1 Create the search request

SearchRequest searchRequest = new SearchRequest();

searchRequest.indices("bank");

SearchSourceBuilder sourceBuilder = new SearchSourceBuilder();

// Build the search conditions

// sourceBuilder.query();

// sourceBuilder.from();

// sourceBuilder.size();

// sourceBuilder.aggregation();

sourceBuilder.query(QueryBuilders.matchQuery("address","mill"));

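// Use the AggregationBuilders utility class to build each AggregationBuilder

// First aggregation: distribution of age values

TermsAggregationBuilder agg1 = AggregationBuilders.terms("agg1").field("age").size(10); // "agg1" is the aggregation name

// aggregation() takes an AggregationBuilder

sourceBuilder.aggregation(agg1);

// Second aggregation: average balance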

AvgAggregationBuilder agg2 = AggregationBuilders.avg("agg2").field("balance");

sourceBuilder.aggregation(agg2);

System.out.println("Search conditions: " + sourceBuilder.toString());

searchRequest.source(sourceBuilder);

// 2 执行检索

SearchResponse response = client.search(searchRequest, GuliESConfig.COMMON_OPTIONS);

// 3 Analyze the response

System.out.println(response.toString());

}

- Reading the results

// 3.1 Get the search hits

SearchHits hits = response.getHits();

SearchHit[] hits1 = hits.getHits();

for (SearchHit hit : hits1) {

hit.getId();

hit.getIndex();

String sourceAsString = hit.getSourceAsString();

Account account = JSON.parseObject(sourceAsString, Account.class);

System.out.println(account);

}

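// 3.2 Get the aggregation results

Aggregations aggregations = response.getAggregations();

// "agg1" is the terms aggregation; "agg2" is an avg aggregation and cannot be read as Terms

Terms agg21 = aggregations.get("agg1");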

for (Terms.Bucket bucket : agg21.getBuckets()) {

String keyAsString = bucket.getKeyAsString();

System.out.println(keyAsString);

}

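V. Use in the project

- Only products that have been put on sale can be shown on the site.

- Products that are on sale need to be searchable.

The approach: the product list in the admin backend has a "put on sale" action; when it is clicked, we sync the product information to ES.

Code (omitted). The main difficulty is how to store the data.

// Option 1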

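{

skuId:1

spuId:11

skuTitle:Huawei xx

price:999

saleCount:99

attr:[

{size:5},

{CPU:Qualcomm 945},

{resolution:Full HD}

]

}

Drawback: if every sku stores the specification attributes, the storage is redundant, because all skus of the same spu share the same attributes (wasted space)

// Option 2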

sku index

{

spuId:1

skuId:11

}

attr index

{

skuId:11

attr:[

{size:5},

{CPU:Qualcomm 945},

{resolution:Full HD}

]

}

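First find the 4000 spus that meet the criteria, then query the attributes for those 4000 spus. That means sending 4000 ids; each id is a long, so 8 B * 4000 = 32000 B = 32 KB

With 1K people searching at once, that is 32 MB (wasted time)

Conclusion: if the specification attributes are stored in a separate index, every search transfers a large amount of data, which puts heavy pressure on the network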
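The estimate above is simple arithmetic and can be checked directly. The sketch counts only the raw id payload (8 bytes per long) and uses decimal units, as the text does; request overhead and JSON encoding are ignored.

```java
public class TransferEstimateSketch {

    // Raw payload of sending idCount ids, each a Java long (8 bytes).
    static long bytesPerSearch(int idCount) {
        return (long) idCount * Long.BYTES;
    }

    // Total payload when the given number of searches run at the same time.
    static long bytesForConcurrentSearches(int idCount, int searches) {
        return bytesPerSearch(idCount) * searches;
    }

    public static void main(String[] args) {
        System.out.println(bytesPerSearch(4000));                   // 32000 bytes, about 32 KB
        System.out.println(bytesForConcurrentSearches(4000, 1000)); // 32000000 bytes, about 32 MB
    }
}
```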
