Saving Logs in PyTorch

1. TensorBoard

References ⭐

Core code

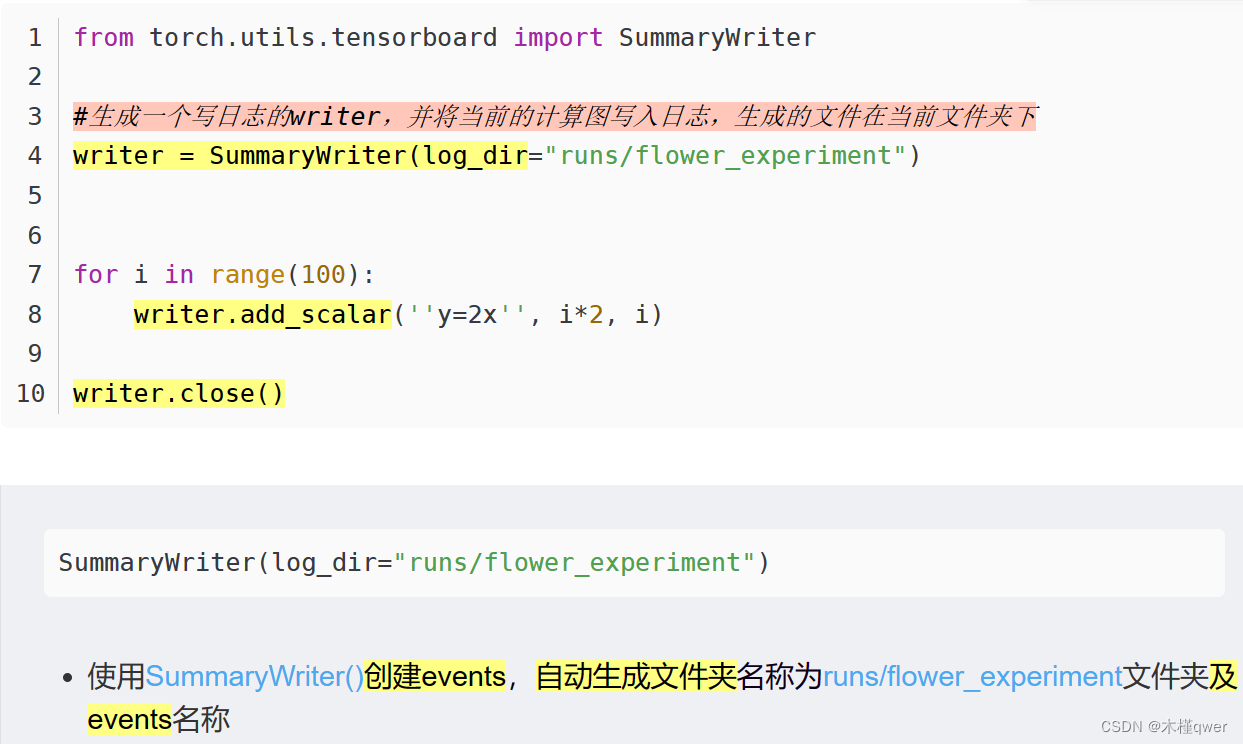

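from torch.utils.tensorboard import SummaryWriter

writer = SummaryWriter(log_dir="my_path")  # replace my_path with your log directory

for i in range(100):
    # add_scalar(tag, scalar_value, global_step); the tag is an ordinary string, so either quote style works
    writer.add_scalar('y=2x', i * 2, i)

writer.close()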

1.1 Viewing the logs

tensorboard --logdir=my_path --port=6007

The --port flag is optional; TensorBoard uses 6006 when it is omitted.

1.2 Differences from tensorboardX

2. Logger

TODO

Notes from an earlier YOLOv3 experiment, kept here for now.

# from YOLOv3
import os
import datetime

from torch.utils.tensorboard import SummaryWriter


class Logger(object):
    def __init__(self, log_dir, log_hist=True):
        """Create a summary writer logging to log_dir."""
        if log_hist:  # Create a new timestamped sub-folder for each run
            log_dir = os.path.join(
                log_dir,
                datetime.datetime.now().strftime("%Y_%m_%d__%H_%M_%S"))

        self.writer = SummaryWriter(log_dir)

    def scalar_summary(self, tag, value, step):
        """Log a scalar variable."""
        self.writer.add_scalar(tag, value, step)

    def list_of_scalars_summary(self, tag_value_pairs, step):
        """Log scalar variables."""
        for tag, value in tag_value_pairs:
            self.writer.add_scalar(tag, value, step)
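The timestamped sub-folder that Logger creates when log_hist is true can be tried on its own, without TensorBoard installed. The following is a minimal sketch: `make_run_dir` is a hypothetical helper name that does not appear in the YOLOv3 code, and only the format string is taken from the class above.

```python
import datetime
import os


def make_run_dir(base_dir, now=None):
    """Return base_dir/<timestamp>, using the same format string as Logger.

    make_run_dir is an illustrative helper, not part of the YOLOv3 code.
    """
    if now is None:
        now = datetime.datetime.now()
    return os.path.join(base_dir, now.strftime("%Y_%m_%d__%H_%M_%S"))


# A fixed datetime keeps the result reproducible.
example = make_run_dir("logs", datetime.datetime(2022, 2, 20, 9, 18, 36))
print(example)
```

Because each run gets its own folder, pointing --logdir at the parent directory should show every run side by side, while pointing it at one timestamped folder shows only that run.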

# Using TensorBoard
# (excerpt from the training script: args, loss, loss_components, to_cpu,
# batches_done, metrics_output and epoch come from the surrounding code)

logger = Logger(args.logdir)  # Tensorboard logger

# Tensorboard logging
tensorboard_log = [
    ("train/iou_loss", float(loss_components[0])),
    ("train/obj_loss", float(loss_components[1])),
    ("train/class_loss", float(loss_components[2])),
    ("train/loss", to_cpu(loss).item())]
logger.list_of_scalars_summary(tensorboard_log, batches_done)  # plots the four curves above

# Following the same pattern: logger.list_of_scalars_summary([("loss", LOSS), ("acc", ACC)], batches_done)

if metrics_output is not None:
    precision, recall, AP, f1, ap_class = metrics_output
    evaluation_metrics = [
        ("validation/precision", precision.mean()),
        ("validation/recall", recall.mean()),
        ("validation/mAP", AP.mean()),
        ("validation/f1", f1.mean())]
    logger.list_of_scalars_summary(evaluation_metrics, epoch)
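The "list of (tag, value) pairs logged at one step" pattern above can be exercised without PyTorch. In the sketch below, `RecordingWriter` is a hypothetical stand-in that only mimics the `add_scalar(tag, value, step)` argument order of SummaryWriter, and the loss and accuracy numbers are made up for illustration.

```python
class RecordingWriter:
    """Hypothetical stand-in for SummaryWriter: stores calls instead of writing event files."""

    def __init__(self):
        self.records = []

    def add_scalar(self, tag, value, step):
        self.records.append((tag, value, step))


def log_scalars(writer, tag_value_pairs, step):
    """Log every (tag, value) pair at the same step, like list_of_scalars_summary."""
    for tag, value in tag_value_pairs:
        writer.add_scalar(tag, value, step)


writer = RecordingWriter()

# Made-up metric values, one (loss, acc) pair per step.
fake_metrics = [(0.9, 0.55), (0.6, 0.70), (0.4, 0.81)]
for step, (loss, acc) in enumerate(fake_metrics):
    log_scalars(writer, [("train/loss", loss), ("train/acc", acc)], step)

print(writer.records)
```

Swapping `RecordingWriter()` for a real `SummaryWriter(log_dir)` should leave the logging loop unchanged, since only `add_scalar` is called. Tags that share a prefix such as `train/` are grouped into one section in the TensorBoard scalar view.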

Visualization

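If the page does not open, try a different port. The default is 6006; in my test the page would not load on it, and switching to 9009 made it display.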

tensorboard --logdir=/home/workspace/Amber/PyTorch-YOLOv3/logs/2022_02_20__09_18_36 --port=9009
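One possible reason a port fails is that another process is already listening on it; the notes above do not say whether that was the cause here, so treat this as an assumption. The sketch below checks a few candidate ports on the local machine and builds the matching command line. `port_is_free`, `pick_port` and `tensorboard_command` are hypothetical helper names, and only the `--logdir` and `--port` flags already used above are assumed.

```python
import socket


def port_is_free(port, host="127.0.0.1"):
    """Return True if nothing accepts a TCP connection on host:port."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
        sock.settimeout(0.5)
        # connect_ex returns 0 only when a listener accepted the connection.
        return sock.connect_ex((host, port)) != 0


def pick_port(candidates):
    """Return the first candidate port that looks free, or None."""
    for port in candidates:
        if port_is_free(port):
            return port
    return None


def tensorboard_command(logdir, port):
    """Build the argument list for the tensorboard CLI (it is not launched here)."""
    return ["tensorboard", f"--logdir={logdir}", f"--port={port}"]


port = pick_port([6006, 6007, 9009])
if port is not None:
    print(" ".join(tensorboard_command("logs", port)))
```

The check only covers the machine the script runs on. When TensorBoard runs on a remote server, a firewall or a missing port forward can block the page even though the port is free, and this sketch will not detect that.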
