I. Introduction

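In some scenarios, data in Hive needs to be imported into HBase for long-term storage. Hive and HBase usually exchange data in one of two ways:

1. Link Hive and HBase by creating a Hive table mapped onto an HBase table.
2. Convert the Hive data into HFiles and load them into HBase with bulkload.

The second way is more efficient. HBase stores its data on HDFS as HFiles. Once the Hive data has been turned into HFiles, bulkload can hand those files straight to HBase, which skips most of the write path a put goes through (the WAL, the MemStore, and later flushes). Loading data into Hive works the same way: uploading files that are already in the right format directly into the table's HDFS directory is much faster than inserting the data through a MapReduce job.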

II. Importing Hive Data into HBase

1. Using Spark

1. Create the table in HBase

Create a namespace, which is similar to a database:

create_namespace 'wm'

Then create the table with a single column family, info:

create 'wm:h_shop', 'info'
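The name 'wm:h_shop' is namespace-qualified. HBase splits a table name at the colon into a namespace and a table qualifier, and a name without a colon goes into the built-in default namespace. The plain-Java sketch below only illustrates that rule. TableNameSketch is a hypothetical helper, and real code should use HBase's own TableName.valueOf.

```java
import java.util.Arrays;

// Hypothetical helper, not HBase's TableName class: splits "namespace:qualifier"
// the way HBase interprets a name such as 'wm:h_shop'.
final class TableNameSketch {
    // Tables created without a namespace live in HBase's "default" namespace
    static final String DEFAULT_NAMESPACE = "default";

    // Returns {namespace, qualifier}
    static String[] split(String fullName) {
        int colon = fullName.indexOf(':');
        if (colon < 0) {
            return new String[] {DEFAULT_NAMESPACE, fullName};
        }
        return new String[] {fullName.substring(0, colon), fullName.substring(colon + 1)};
    }

    public static void main(String[] args) {
        System.out.println(Arrays.toString(split("wm:h_shop")));
        System.out.println(Arrays.toString(split("h_shop")));
    }
}
```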

2. Write the Spark logic

Data in the Hive table:

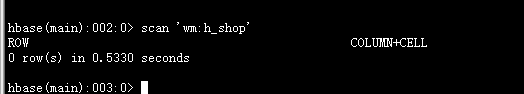

The HBase table is initially empty:

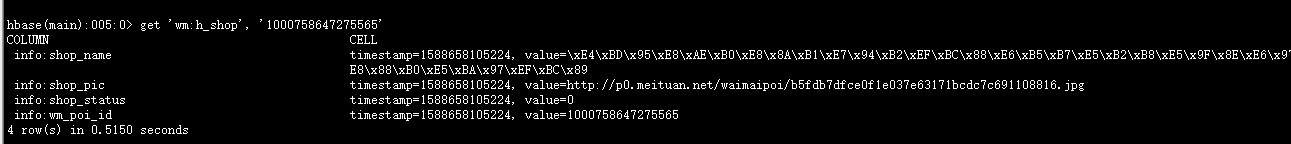

After the job has run, check the data in HBase with: get 'wm:h_shop', '1000758647275565'

The Spark code:

import java.text.SimpleDateFormat
import java.util.Date

import com.tang.crawler.utils.HbaseUtils
import org.apache.hadoop.fs.Path
import org.apache.hadoop.hbase.KeyValue
import org.apache.hadoop.hbase.client.HTable
import org.apache.hadoop.hbase.io.ImmutableBytesWritable
import org.apache.hadoop.hbase.mapreduce.{HFileOutputFormat2, LoadIncrementalHFiles, TableOutputFormat}
import org.apache.hadoop.hbase.util.Bytes
import org.apache.hadoop.mapreduce.Job
import org.apache.spark.SparkConf
import org.apache.spark.sql.SparkSession

object ShopHiveToHbase {

  def main(args: Array[String]): Unit = {
    val sparkConf = new SparkConf().setAppName("shopHiveTohbase").setMaster("local[2]")
    val sparkSession = SparkSession.builder()
      .enableHiveSupport()
      .config(sparkConf)
      .getOrCreate()

    // Get the HBase configuration from the project's utility class
    val conf = HbaseUtils.getHbaseConfig()

    // Target HBase table
    val tableName = "wm:h_shop"
    val table = new HTable(conf, tableName)
    conf.set(TableOutputFormat.OUTPUT_TABLE, tableName)

    // Timestamped temporary directory for the generated HFiles
    val currentTime = new SimpleDateFormat("yyyyMMddHHmmss").format(new Date)
    val hfileTmpPath = "hdfs://ELK01:9000/tmp/hbase/" + currentTime

    // configureIncrementalLoad copies the table's column family settings
    // (compression, bloom filter, block size) into the job's configuration
    val job = Job.getInstance(conf)
    job.setMapOutputKeyClass(classOf[ImmutableBytesWritable])
    job.setMapOutputValueClass(classOf[KeyValue])
    HFileOutputFormat2.configureIncrementalLoad(job, table)

    // Load one partition of the Hive table
    sparkSession.sql("use wm")
    val hiveShopRdd = sparkSession.sql(
      "select wm_poi_id, shop_name, shop_status, shop_pic from dws_shop where batch_date = '2020-05-03'").rdd

    // Flatten each Hive row into (rowKey, (family, qualifier, value)) cells
    val hbaseRowRdd = hiveShopRdd.flatMap(rows => {
      // Keep a null row key as null so the filter below drops it instead of throwing an NPE
      val wm_poi_id = if (rows.isNullAt(0)) null else rows(0).toString
      val shop_name = rows(1)
      val shop_status = rows(2)
      val shop_pic = rows(3)
      Array((wm_poi_id, ("info", "wm_poi_id", wm_poi_id)),
        (wm_poi_id, ("info", "shop_name", shop_name)),
        (wm_poi_id, ("info", "shop_status", shop_status)),
        (wm_poi_id, ("info", "shop_pic", shop_pic)))
    })

    // Filter out cells with a null row key or null value (Bytes.toBytes cannot encode null),
    // then sort by row key, family, qualifier, the order in which HFiles must be written
    val rdd = hbaseRowRdd
      .filter(x => x._1 != null && x._2._3 != null)
      .sortBy(x => (x._1, x._2._1, x._2._2))
      .map(x => {
        // HFileOutputFormat2 expects ImmutableBytesWritable keys and KeyValue values
        val rowKey = Bytes.toBytes(x._1)
        val family = Bytes.toBytes(x._2._1)
        val column = Bytes.toBytes(x._2._2)
        val value = Bytes.toBytes(x._2._3.toString)
        (new ImmutableBytesWritable(rowKey), new KeyValue(rowKey, family, column, value))
      })

    // Save HFiles on HDFS; pass the job's configuration so the settings from configureIncrementalLoad apply
    rdd.saveAsNewAPIHadoopFile(hfileTmpPath, classOf[ImmutableBytesWritable], classOf[KeyValue],
      classOf[HFileOutputFormat2], job.getConfiguration)

    // Bulk load the HFiles into HBase
    val bulkLoader = new LoadIncrementalHFiles(conf)
    bulkLoader.doBulkLoad(new Path(hfileTmpPath), table)

    table.close()
    sparkSession.stop()
  }
}

2. Using MapReduce

First run truncate 'wm:h_shop' to clear the data imported by the Spark job.

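How it works:

Only a mapper has to be written; no custom reducer is needed. HFileOutputFormat2.configureIncrementalLoad installs a sorting reducer itself (PutSortReducer when the map output values are Put objects) and runs one reduce task per region of the table.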

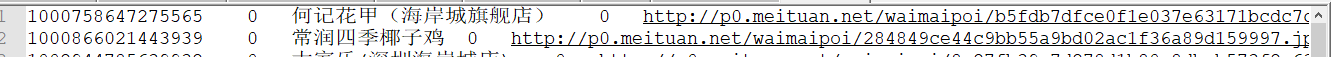
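Both the sortBy in the Spark job and the reducer that configureIncrementalLoad adds to the MapReduce job exist for the same reason: HFiles must be written in key order. That order is row key, then column family, then qualifier (then timestamp, newest first), all compared as unsigned bytes. Writing a cell out of order makes the HFile writer throw an IOException complaining that the key is not lexically larger than the previous one. The plain-Java sketch below reproduces the ordering for the h_shop columns. Cell and CellOrder are hypothetical stand-ins for HBase's KeyValue and its comparator, not HBase APIs, and the timestamp is left out.

```java
import java.nio.charset.StandardCharsets;
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;

// Hypothetical sketch of HFile cell ordering: row key, then family, then qualifier,
// each compared as unsigned bytes. Cell stands in for HBase's KeyValue.
final class CellOrder {
    static final class Cell {
        final String row, family, qualifier, value;

        Cell(String row, String family, String qualifier, String value) {
            this.row = row;
            this.family = family;
            this.qualifier = qualifier;
            this.value = value;
        }

        @Override
        public String toString() {
            return row + "/" + family + ":" + qualifier + "=" + value;
        }
    }

    // Lexicographic comparison treating each byte as 0..255, the rule HBase uses for keys
    static int compareBytes(byte[] a, byte[] b) {
        int n = Math.min(a.length, b.length);
        for (int i = 0; i < n; i++) {
            int diff = (a[i] & 0xff) - (b[i] & 0xff);
            if (diff != 0) {
                return diff;
            }
        }
        return a.length - b.length;
    }

    static int compareUtf8(String a, String b) {
        return compareBytes(a.getBytes(StandardCharsets.UTF_8), b.getBytes(StandardCharsets.UTF_8));
    }

    // Row key first, then family, then qualifier
    static final Comparator<Cell> HFILE_ORDER = (x, y) -> {
        int c = compareUtf8(x.row, y.row);
        if (c != 0) {
            return c;
        }
        c = compareUtf8(x.family, y.family);
        if (c != 0) {
            return c;
        }
        return compareUtf8(x.qualifier, y.qualifier);
    };

    public static void main(String[] args) {
        List<Cell> cells = new ArrayList<>();
        // Cells emitted in the Spark flatMap order; row keys and values are hypothetical
        for (String row : new String[] {"2", "10"}) {
            cells.add(new Cell(row, "info", "wm_poi_id", row));
            cells.add(new Cell(row, "info", "shop_name", "name-" + row));
            cells.add(new Cell(row, "info", "shop_status", "1"));
            cells.add(new Cell(row, "info", "shop_pic", "pic-" + row));
        }
        cells.sort(HFILE_ORDER);
        cells.forEach(System.out::println);
    }
}
```

Row keys compare as bytes, not numbers, so "10" sorts before "2". For ASCII keys such as wm_poi_id, the String ordering used by Spark's sortBy agrees with this byte order.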

The Hive table's data is stored on HDFS in this format:

Mapper:

import org.apache.hadoop.hbase.client.Put;
import org.apache.hadoop.hbase.io.ImmutableBytesWritable;
import org.apache.hadoop.hbase.util.Bytes;
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Mapper;

import java.io.IOException;

/**
 * @Description: mapper that converts each line of the Hive table's files into an HBase Put
 * @author tang
 * @date 2019/12/14 20:19
 */
public class BulkLoadMapper extends Mapper<LongWritable, Text, ImmutableBytesWritable, Put> {

    private static final byte[] FAMILY = Bytes.toBytes("info");

    @Override
    protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {
        String line = value.toString();
        // Assumes a tab-delimited table; if the table uses Hive's default delimiter, split on "\001" instead
        String[] items = line.split("\\t");
        // ROWKEY = wm_poi_id; Bytes.toBytes always encodes UTF-8, unlike String.getBytes()
        byte[] rowKeyBytes = Bytes.toBytes(items[0]);
        ImmutableBytesWritable rowKey = new ImmutableBytesWritable(rowKeyBytes);
        Put put = new Put(rowKeyBytes);
        put.addColumn(FAMILY, Bytes.toBytes("wm_poi_id"), Bytes.toBytes(items[0]));
        put.addColumn(FAMILY, Bytes.toBytes("shop_name"), Bytes.toBytes(items[2]));
        put.addColumn(FAMILY, Bytes.toBytes("shop_status"), Bytes.toBytes(items[3]));
        put.addColumn(FAMILY, Bytes.toBytes("shop_pic"), Bytes.toBytes(items[4]));
        context.write(rowKey, put);
    }
}
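The mapper depends on the exact layout of the Hive table's text files, and two Hive defaults are worth checking against the table's DDL. Fields are separated by \001 (Ctrl-A) unless the table declares another delimiter, and NULL values are written as the literal \N, which the mapper would store in HBase as a two-character string. The sketch below isolates the parsing step in plain Java so it can be tested without a cluster. It assumes Hive's default delimiter and the column positions the mapper uses (0, 2, 3, 4); HiveLineParser is a hypothetical helper, and FIELD_DELIM would become "\t" for a tab-delimited table.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper: parses one line of a Hive text-format file into
// (qualifier, value) pairs for the "info" family, skipping NULL fields.
final class HiveLineParser {
    // Hive's default field delimiter (Ctrl-A); use "\t" for a tab-delimited table
    static final String FIELD_DELIM = "\u0001";
    // Hive's default text representation of NULL: backslash followed by N
    static final String HIVE_NULL = "\\N";
    static final String[] QUALIFIERS = {"wm_poi_id", "shop_name", "shop_status", "shop_pic"};
    // Column positions assumed to match the mapper: items[0], [2], [3], [4]
    static final int[] COLUMNS = {0, 2, 3, 4};

    static List<String[]> toCells(String line) {
        // limit -1 keeps trailing empty fields, so column positions stay stable
        String[] items = line.split(FIELD_DELIM, -1);
        List<String[]> cells = new ArrayList<>();
        // Without a row key there is nothing to write
        if (items[0].isEmpty() || HIVE_NULL.equals(items[0])) {
            return cells;
        }
        for (int i = 0; i < QUALIFIERS.length; i++) {
            int col = COLUMNS[i];
            if (col < items.length && !HIVE_NULL.equals(items[col])) {
                cells.add(new String[] {QUALIFIERS[i], items[col]});
            }
        }
        return cells;
    }

    public static void main(String[] args) {
        // Hypothetical sample line; the second field stands in for a column the mapper skips
        String line = String.join(FIELD_DELIM, "1000758647275565", "x", "Shop A", "1", HIVE_NULL);
        for (String[] cell : toCells(line)) {
            System.out.println("info:" + cell[0] + " = " + cell[1]);
        }
    }
}
```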

Driver:

import com.tang.common.utils.HbaseClientUtils;
import org.apache.commons.lang3.StringUtils;
import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.hbase.client.HTable;
import org.apache.hadoop.hbase.client.Put;
import org.apache.hadoop.hbase.io.ImmutableBytesWritable;
import org.apache.hadoop.hbase.mapreduce.HFileOutputFormat2;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;
import java.text.SimpleDateFormat;
import java.util.Date;

/**
 * @Description: driver that converts files on HDFS into HFiles
 * @author tang
 * @date 2019/12/14 20:22
 */
public class BulkLoadDriver {

    public static void main(String[] args) throws IOException, ClassNotFoundException, InterruptedException {
        // Input: text files of the Hive partition on HDFS
        final String SRC_PATH = "hdfs://192.168.25.128:9000/user/hive/warehouse/wm.db/dws_shop/batch_date=2020-05-03";
        // Output: HFile directory, timestamped so it does not exist yet
        String currentTime = new SimpleDateFormat("yyyyMMddHHmmss").format(new Date());
        final String DESC_PATH = "hdfs://ELK01:9000/tmp/hbase/output/" + currentTime;
        String hbaseTable = "wm:h_shop";
        System.out.println(DESC_PATH);
        drive(SRC_PATH, DESC_PATH, hbaseTable);
    }

    /**
     * @Description: convert HDFS files into HFiles
     * @author tang
     * @date 2019/12/14 20:39
     */
    public static void drive(String srcPath, String descPath, String hbaseTable) throws IOException, ClassNotFoundException, InterruptedException {
        if (StringUtils.isBlank(srcPath) || StringUtils.isBlank(descPath) || StringUtils.isBlank(hbaseTable)) {
            throw new IllegalArgumentException("Invalid arguments, some are empty: srcPath:" + srcPath + ", descPath:" + descPath + ", hbaseTable:" + hbaseTable);
        }
        Configuration conf = HbaseClientUtils.getConfiguration();
        Job job = Job.getInstance(conf);
        job.setJarByClass(BulkLoadDriver.class);
        job.setMapperClass(BulkLoadMapper.class);
        job.setMapOutputKeyClass(ImmutableBytesWritable.class);
        job.setMapOutputValueClass(Put.class);
        job.setOutputFormatClass(HFileOutputFormat2.class);
        HTable table = new HTable(conf, hbaseTable);
        // Installs the sort reducer and partitioner (one reduce task per region) and the table's family settings
        HFileOutputFormat2.configureIncrementalLoad(job, table, table.getRegionLocator());
        FileInputFormat.addInputPath(job, new Path(srcPath));
        FileOutputFormat.setOutputPath(job, new Path(descPath));
        // Exit with the job's result
        System.exit(job.waitForCompletion(true) ? 0 : 1);
    }
}

Run the Driver's main method to generate the HFiles, as shown in the figure:

Then run the loader utility to load the HFiles into HBase.

Loader utility:

import com.tang.common.utils.HbaseClientUtils;
import org.apache.commons.lang3.StringUtils;
import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.hbase.HBaseConfiguration;
import org.apache.hadoop.hbase.client.HTable;
import org.apache.hadoop.hbase.mapreduce.LoadIncrementalHFiles;

/**
 * @Description: load HFile data into HBase
 * @author tang
 * @date 2019/12/14 20:31
 */
public class LoadHFileToHBaseUtils {

    public static void main(String[] args) throws Exception {
        // HFile directory produced by BulkLoadDriver (the timestamped DESC_PATH it printed)
        String inputPath = "hdfs://192.168.25.128:9000/tmp/hbase/output/20200505141559";
        String hbaseTable = "wm:h_shop";
        boolean load = load(inputPath, hbaseTable);
        System.out.println(load);
    }

    public static boolean load(String inputPath, String hbaseTable) throws Exception {
        if (StringUtils.isBlank(inputPath) || StringUtils.isBlank(hbaseTable)) {
            return false;
        }
        Configuration configuration = HbaseClientUtils.getConfiguration();
        HBaseConfiguration.addHbaseResources(configuration);
        LoadIncrementalHFiles loader = new LoadIncrementalHFiles(configuration);
        // try-with-resources closes the table once the load has finished
        try (HTable hTable = new HTable(configuration, hbaseTable)) {
            // Hands the HFiles to the table's regions; on the same HDFS they are moved, not copied
            loader.doBulkLoad(new Path(inputPath), hTable);
        }
        return true;
    }
}
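When the target table has more than one region, each row key has to be matched to a region. configureIncrementalLoad sets up a TotalOrderPartitioner with one reducer per region, so each reducer writes HFiles for a single region. LoadIncrementalHFiles checks each HFile's key range against the region boundaries and splits any file that straddles one before loading it. Both rely on the same rule: a row belongs to the last region whose start key is less than or equal to it, and the first region starts at the empty key. The sketch below implements that lookup as a binary search. RegionLookup and the split points are hypothetical; h_shop as created above has a single region.

```java
import java.nio.charset.StandardCharsets;

// Hypothetical helper: finds the region a row key falls into, given the
// regions' start keys in sorted order (the first start key is empty).
final class RegionLookup {
    // Unsigned lexicographic byte comparison, the order HBase uses for row keys
    static int compareBytes(byte[] a, byte[] b) {
        int n = Math.min(a.length, b.length);
        for (int i = 0; i < n; i++) {
            int diff = (a[i] & 0xff) - (b[i] & 0xff);
            if (diff != 0) {
                return diff;
            }
        }
        return a.length - b.length;
    }

    // Index of the last region whose start key is <= row
    static int regionIndex(byte[][] startKeys, byte[] row) {
        int lo = 0;
        int hi = startKeys.length - 1;
        while (lo < hi) {
            int mid = (lo + hi + 1) >>> 1; // round up so the loop always makes progress
            if (compareBytes(startKeys[mid], row) <= 0) {
                lo = mid;
            } else {
                hi = mid - 1;
            }
        }
        return lo;
    }

    static byte[] utf8(String s) {
        return s.getBytes(StandardCharsets.UTF_8);
    }

    public static void main(String[] args) {
        // Hypothetical pre-split table with three regions: [ , "2"), ["2", "5"), ["5", )
        byte[][] startKeys = {utf8(""), utf8("2"), utf8("5")};
        for (String row : new String[] {"1000758647275565", "2", "3", "9"}) {
            System.out.println(row + " -> region " + regionIndex(startKeys, utf8(row)));
        }
    }
}
```

With a single-region table every row maps to region 0, so the MapReduce job runs a single reducer. Pre-splitting the table (for example with SPLITS in the shell's create command) is what lets HFile generation and loading run in parallel.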

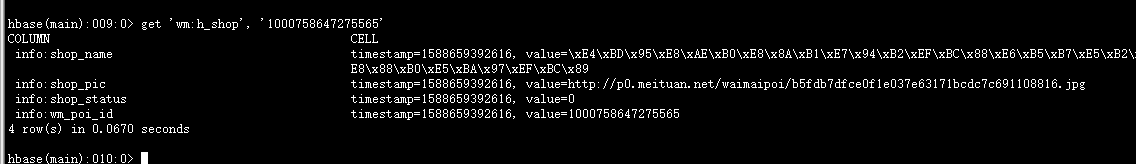

Check the data in HBase (the data from the earlier Spark import had already been cleared):
