本文项目采用python3.6版本语言,利用scrapy框架进行爬取。

该项目实现的功能是获取http://www.proxy360.cn和http://www.xicidaili.com网站中的代理信息,由于网站设有反爬虫机制,网站是通过浏览器发送过来的User-Agent的值来确认浏览器身份的,所以为了避免被查出是爬虫,所以该项目中修改了USER_AGENT的值,关于常见反爬虫机制请参照本博客“Scrapy爬虫实战五:爬虫攻防”的内容。由于爬取http://www.xicidaili.com的内容较多,在验证代理是否可用的过程中使用了多线程。

下面是本次项目的目录结构:

----getProxy

----getProxy

----spiders

__init__.py

Proxy360Spider.py

XiciDailiSpider.py

__init__.py

items.py

pipelines.py

settings.py

userAgents.py

scrapy.cfg

testProxy.py

上述目录结构中,没有后缀名的为文件夹,有后缀的为文件。我们需要修改只有Proxy360Spider.py(XiciDailiSpider.py)、items.py、pipelines.py、settings.py这四个文件。

其中items.py决定爬取哪些项目,Proxy360Spider.py(XiciDailiSpider.py)决定怎么爬,setting.py决定由谁去处理爬取的内容,pipelines决定爬取后

内容怎样处理。由于我们爬取的两个代理网站的源代码不类似,所以我们写了Proxy360Spider.py(XiciDailiSpider.py)两个文件,目录中的两个

__inti__.py文件都是空文件,保留这两个文件主要是为了让他们所在的文件夹可以作为Python的模块使用。目录中的scrapy.cfg为配置文件,testProxy.py是测试爬取出来的

代理是否可用的。

1、选择爬取的项目items.py

#决定爬取哪些项目

import scrapy

class GetProxyItem(scrapy.Item):

ip=scrapy.Field()

port=scrapy.Field()

type=scrapy.Field()

location=scrapy.Field()

protocol=scrapy.Field()

source=scrapy.Field()

id=scrapy.Field()#用来保存记录条数2 .1、 定义怎样爬取,爬取360代理的文件Proxy360Spider.py

#定义如何爬取

import scrapy

from getProxy.items import GetProxyItem

class Proxy360Spider(scrapy.Spider):

name="proxy360Spider"

allowed_domains=['proxy360.cn']

nations=['Brazil','China','America','Taiwan','Japan','Thailand','Vietnam','bahrein']

start_urls=[]

count=0

for nation in nations:

start_urls.append('http://www.proxy360.cn/Region/'+nation)

def parse(self,response):

subSelector=response.xpath('//div[@class="proxylistitem" and @name="list_proxy_ip"]')

items=[]

for sub in subSelector:

item=GetProxyItem()

item['ip']=sub.xpath('.//span[1]/text()').extract()[0]

item['port']=sub.xpath('.//span[2]/text()').extract()[0]

item['type']=sub.xpath('.//span[3]/text()').extract()[0]

item['location']=sub.xpath('.//span[4]/text()').extract()[0]

item['protocol']='HTTP'

item['source']='proxy360'

self.count+=1

item['id']=str(self.count)

items.append(item)

return items

由于360代理中包含多个地区的代理,首先找寻多个地区代理url的规律,可以这些地区的url都是由“http://www.proxy360.cn/Region/”加上地区名构成的,

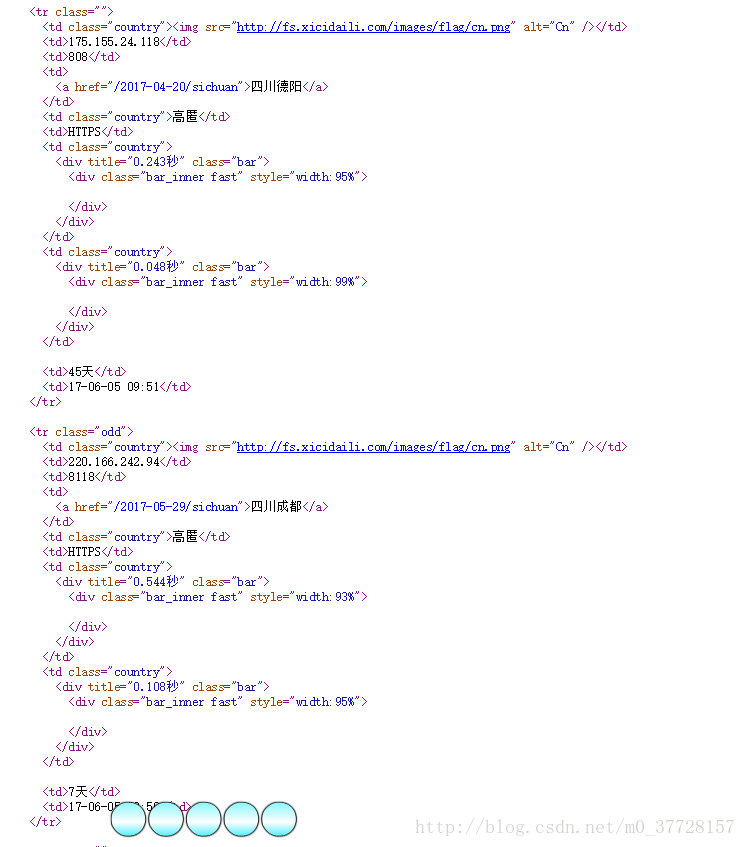

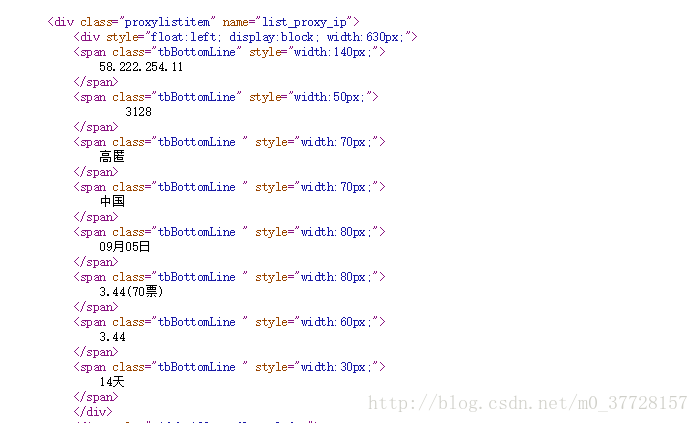

任意点开一个url,打开网页源代码,如下图所示:

可以发现我们需要的代理信息都在<div class="proxylistitem" name="list_proxy_ip">这个标签下,用xpath选择器先得到所有的这种标签,然后再一层一层的得到我们需要的信息。

2.1、定义怎样爬取,爬取西刺免费代理IP的文件XiciDailiSpider.py

import scrapy

from getProxy.items import GetProxyItem

class XiciDailiSpider(scrapy.Spider):

name='xiciDailiSpider'

allowed_domains=['xicidaili.com']

wds=['nn','nt','wn','wt']

pages=20

start_urls=[]

count=0

for type in wds:

for i in range(1,pages+1):

start_urls.append('http://www.xicidaili.com/'+type+'/'+str(i))

def parse(self,response):

subSelector=response.xpath('//tr[@class=""]|//tr[@class="odd"]')

items=[]

for sub in subSelector:

item=GetProxyItem()

item['ip']=sub.xpath('.//td[2]/text()').extract()[0]

item['port']=sub.xpath('.//td[3]/text()').extract()[0]

item['type']=sub.xpath('.//td[5]/text()').extract()[0]

if sub.xpath('.//td[4]/a/text()'):

item['location']=sub.xpath('//td[4]/a/text()').extract()[0]

else:

item['location']=sub.xpath('.//td[4]/text()').extract()[0]

item['protocol']=sub.xpath('.//td[6]/text()').extract()[0]

item['source']='xicidaili'

self.count+=1

item['id']=str(self.count)

items.append(item)

return items

同样爬取西刺代理的url也是有多个,第一步仍然是找寻url规律,读者去 http://www.xicidaili.com/网站中多点几下,关注url的变化就能得出规律,这里不再赘述。

查看网页源代码可以发现,我们需要的代理信息都在 <tr class="">或者 <tr class="odd">这两个标签下,读者重点看代码中是如何一层一层找到我们需要的信息的。

3、保存爬取的结果pipelines.py

#保存爬取结果

import time

class GetProxyPipeline(object):

def process_item(self,item,spider):

today= time.strftime('%Y-%m-%d',time.localtime())

fileName='proxy'+today+'.txt'

with open(fileName,'a') as fp:

fp.write('记录'+item['id'].strip()+':'+'\t')

fp.write(item['ip'].strip()+'\t')

fp.write(item['port'].strip()+'\t')

fp.write(item['protocol'].strip()+'\t')

fp.write(item['type'].strip()+'\t')

fp.write(item['location'].strip()+'\t')

fp.write(item['source'].strip()+'\t\n')

return item

结果保存为.txt的文件,文件以proxy加当天日期命名。

4、分派任务的settings.py

from getProxy import userAgents

BOT_NAME='getProxy'

SPIDER_MODULES=['getProxy.spiders']

NEWSPIDER_MODULE='getProxy.spiders'

USER_AGENT=userAgents.pcUserAgent.get('Firefox 4.0.1 – Windows')

ITEM_PIPELINES={'getProxy.pipelines.GetProxyPipeline':300}

这里修改了USER_AGENT,导入userAgents模块,下面给出userAgents模块代码:

pcUserAgent = {

"safari 5.1 – MAC":"User-Agent:Mozilla/5.0 (Macintosh; U; Intel Mac OS X 10_6_8; en-us) AppleWebKit/534.50 (KHTML, like Gecko) Version/5.1 Safari/534.50",

"safari 5.1 – Windows":"User-Agent:Mozilla/5.0 (Windows; U; Windows NT 6.1; en-us) AppleWebKit/534.50 (KHTML, like Gecko) Version/5.1 Safari/534.50",

"IE 9.0":"User-Agent:Mozilla/5.0 (compatible; MSIE 9.0; Windows NT 6.1; Trident/5.0);",

"IE 8.0":"User-Agent:Mozilla/4.0 (compatible; MSIE 8.0; Windows NT 6.0; Trident/4.0)",

"IE 7.0":"User-Agent:Mozilla/4.0 (compatible; MSIE 7.0; Windows NT 6.0)",

"IE 6.0":"User-Agent: Mozilla/4.0 (compatible; MSIE 6.0; Windows NT 5.1)",

"Firefox 4.0.1 – MAC":"User-Agent: Mozilla/5.0 (Macintosh; Intel Mac OS X 10.6; rv:2.0.1) Gecko/20100101 Firefox/4.0.1",

"Firefox 4.0.1 – Windows":"User-Agent:Mozilla/5.0 (Windows NT 6.1; rv:2.0.1) Gecko/20100101 Firefox/4.0.1",

"Opera 11.11 – MAC":"User-Agent:Opera/9.80 (Macintosh; Intel Mac OS X 10.6.8; U; en) Presto/2.8.131 Version/11.11",

"Opera 11.11 – Windows":"User-Agent:Opera/9.80 (Windows NT 6.1; U; en) Presto/2.8.131 Version/11.11",

"Chrome 17.0 – MAC":"User-Agent: Mozilla/5.0 (Macintosh; Intel Mac OS X 10_7_0) AppleWebKit/535.11 (KHTML, like Gecko) Chrome/17.0.963.56 Safari/535.11",

"Maxthon":"User-Agent: Mozilla/4.0 (compatible; MSIE 7.0; Windows NT 5.1; Maxthon 2.0)",

"Tencent TT":"User-Agent: Mozilla/4.0 (compatible; MSIE 7.0; Windows NT 5.1; TencentTraveler 4.0)",

"The World 2.x":"User-Agent: Mozilla/4.0 (compatible; MSIE 7.0; Windows NT 5.1)",

"The World 3.x":"User-Agent: Mozilla/4.0 (compatible; MSIE 7.0; Windows NT 5.1; The World)",

"sogou 1.x":"User-Agent: Mozilla/4.0 (compatible; MSIE 7.0; Windows NT 5.1; Trident/4.0; SE 2.X MetaSr 1.0; SE 2.X MetaSr 1.0; .NET CLR 2.0.50727; SE 2.X MetaSr 1.0)",

"360":"User-Agent: Mozilla/4.0 (compatible; MSIE 7.0; Windows NT 5.1; 360SE)",

"Avant":"User-Agent: Mozilla/4.0 (compatible; MSIE 7.0; Windows NT 5.1; Avant Browser)",

"Green Browser":"User-Agent: Mozilla/4.0 (compatible; MSIE 7.0; Windows NT 5.1)"

}

mobileUserAgent = {

"iOS 4.33 – iPhone":"User-Agent:Mozilla/5.0 (iPhone; U; CPU iPhone OS 4_3_3 like Mac OS X; en-us) AppleWebKit/533.17.9 (KHTML, like Gecko) Version/5.0.2 Mobile/8J2 Safari/6533.18.5",

"iOS 4.33 – iPod Touch":"User-Agent:Mozilla/5.0 (iPod; U; CPU iPhone OS 4_3_3 like Mac OS X; en-us) AppleWebKit/533.17.9 (KHTML, like Gecko) Version/5.0.2 Mobile/8J2 Safari/6533.18.5",

"iOS 4.33 – iPad":"User-Agent:Mozilla/5.0 (iPad; U; CPU OS 4_3_3 like Mac OS X; en-us) AppleWebKit/533.17.9 (KHTML, like Gecko) Version/5.0.2 Mobile/8J2 Safari/6533.18.5",

"Android N1":"User-Agent: Mozilla/5.0 (Linux; U; Android 2.3.7; en-us; Nexus One Build/FRF91) AppleWebKit/533.1 (KHTML, like Gecko) Version/4.0 Mobile Safari/533.1",

"Android QQ":"User-Agent: MQQBrowser/26 Mozilla/5.0 (Linux; U; Android 2.3.7; zh-cn; MB200 Build/GRJ22; CyanogenMod-7) AppleWebKit/533.1 (KHTML, like Gecko) Version/4.0 Mobile Safari/533.1",

"Android Opera ":"User-Agent: Opera/9.80 (Android 2.3.4; Linux; Opera Mobi/build-1107180945; U; en-GB) Presto/2.8.149 Version/11.10",

"Android Pad Moto Xoom":"User-Agent: Mozilla/5.0 (Linux; U; Android 3.0; en-us; Xoom Build/HRI39) AppleWebKit/534.13 (KHTML, like Gecko) Version/4.0 Safari/534.13",

"BlackBerry":"User-Agent: Mozilla/5.0 (BlackBerry; U; BlackBerry 9800; en) AppleWebKit/534.1+ (KHTML, like Gecko) Version/6.0.0.337 Mobile Safari/534.1+",

"WebOS HP Touchpad":"User-Agent: Mozilla/5.0 (hp-tablet; Linux; hpwOS/3.0.0; U; en-US) AppleWebKit/534.6 (KHTML, like Gecko) wOSBrowser/233.70 Safari/534.6 TouchPad/1.0",

"Nokia N97":"User-Agent: Mozilla/5.0 (SymbianOS/9.4; Series60/5.0 NokiaN97-1/20.0.019; Profile/MIDP-2.1 Configuration/CLDC-1.1) AppleWebKit/525 (KHTML, like Gecko) BrowserNG/7.1.18124",

"Windows Phone Mango":"User-Agent: Mozilla/5.0 (compatible; MSIE 9.0; Windows Phone OS 7.5; Trident/5.0; IEMobile/9.0; HTC; Titan)",

"UC":"User-Agent: UCWEB7.0.2.37/28/999",

"UC standard":"User-Agent: NOKIA5700/ UCWEB7.0.2.37/28/999",

"UCOpenwave":"User-Agent: Openwave/ UCWEB7.0.2.37/28/999",

"UC Opera":"User-Agent: Mozilla/4.0 (compatible; MSIE 6.0; ) Opera/UCWEB7.0.2.37/28/999"

}

5、 配置文件scrapy.cfg

[settings]

default=getProxy.settings

[deploy]

project=getProxy

6、怎么运行

cmd->cd 将文件调到我们项目所在的这一层文件,也就是上面目录结构中scrapy.cfg所在的这一层文件夹,然后输入命令:scrapy crawl proxy360Spider

或者 scrapy crawl xiciDailiSpider这里的proxy360Spider是我们Proxy360SpiderSpider类中name="proxy360Spider"的值,更改name的值输入的命令也将更改,xiciDailiSpider也是同理。

我们写了两个网站的爬虫,所以要用依次输入上面的两个命令,主要是因为360代理中的代理ip太少,所以又多爬取了一个网站。

7、测试爬取出的代理是否可用testProxy.py

from urllib import request

import re

import threading

import time

class TestProxy(object):

def __init__(self):

self.sFile=r'proxy'+time.strftime('%Y-%m-%d',time.localtime())+'.txt'

# self.sFile=r'alive.txt'

self.dFile=r'alive_.txt'

self.URL=r'http://www.baidu.com/'

self.threads=10

self.timeout=3

self.regex=re.compile(r'baidu.com')

self.aliveList=[]

self.run()

def run(self):

with open(self.sFile,'r') as fp:

lines=fp.readlines()

line=lines.pop()

while lines:

for i in range(self.threads):

try:

t=threading.Thread(target=self.linkWithProxy,args=(line,))

t.start()

if lines:

line=lines.pop()

else:

continue

except:

print('创建线程失败!\n')

with open(self.dFile,'a') as fp:

for i in range(len(self.aliveList)):

fp.write(self.aliveList[i])

def linkWithProxy(self,line):

lineList=line.split('\t')

protocol=lineList[3].lower()

server=protocol+r'://'+lineList[1]+':'+lineList[2]

opener=request.build_opener(request.ProxyHandler({protocol:server}))

request.install_opener(opener)

try:

response=request.urlopen(self.URL,timeout=self.timeout)

except:

print('%s connect failed!\n' %server)

return

else:

try:

strRe=response.read()

except:

print('%s connect failed!\n' %server)

return

if self.regex.search(str(strRe)):

print('%s connect success!!!!!!!\n' %server)

self.aliveList.append(line)

if __name__=='__main__':

tp=TestProxy()

这里是读取proxy+当天日期的.txt文件,也就是上面爬虫跑出来的结果,然后将可用的ip写在alive_.txt文件中,这些运行的结果都是保存在项目的根目录中。

本博客有参考《 Python 网络爬虫实战》一书,该书采用的是python2.x在 Linux 系统下运行的,采用python3.x在windows下运行的可以参考本博客。

5875

5875

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?