1. MapReduce Programming Conventions

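The Map stage has 2 steps:

1. Set the InputFormat class, which splits the input into key-value pairs (K1 and V1) and feeds them to step 2.

2. Write the custom Map logic, which converts the pairs from step 1 into new key-value pairs (K2 and V2) and emits them.

The Shuffle stage has 4 steps:

3. Partition the emitted key-value pairs.

4. Sort the data within each partition by key.

5. (Optional) Combine the data locally as a preliminary reduction, which cuts the amount of data copied over the network.

6. Group the data so that all values sharing the same key end up in one collection.

The Reduce stage has 2 steps:

7. Sort and merge the results of the Map tasks, then write the Reduce function with your own logic to process the incoming key-value pairs and emit new key-value pairs (K3 and V3).

8. Set the OutputFormat, which processes and saves the key-value pairs emitted by Reduce.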
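The eight steps can be sketched in plain Java without any Hadoop classes. This is only an in-memory illustration under stated assumptions: the class name `EightStepsSketch` and the word-count logic are made up for the example, the optional combine step is skipped, and the partition formula mirrors the hash-modulo scheme of Hadoop's default `HashPartitioner`.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Plain-Java illustration of the eight steps; no Hadoop classes are involved.
final class EightStepsSketch {

    // Steps 1-2: every input line (V1) becomes (K2, V2) pairs; here K2 is a word and V2 is 1.
    static List<Map.Entry<String, Integer>> map(List<String> lines) {
        List<Map.Entry<String, Integer>> out = new ArrayList<>();
        for (String line : lines) {
            for (String word : line.trim().split("\\s+")) {
                if (!word.isEmpty()) {
                    out.add(Map.entry(word, 1));
                }
            }
        }
        return out;
    }

    // Steps 3, 4 and 6: partition by key hash, then sort and group inside each partition.
    // A TreeMap keeps keys sorted, and its value list collects all V2 for one key.
    static List<TreeMap<String, List<Integer>>> shuffle(
            List<Map.Entry<String, Integer>> pairs, int numPartitions) {
        List<TreeMap<String, List<Integer>>> partitions = new ArrayList<>();
        for (int i = 0; i < numPartitions; i++) {
            partitions.add(new TreeMap<>());
        }
        for (Map.Entry<String, Integer> pair : pairs) {
            int partition = (pair.getKey().hashCode() & Integer.MAX_VALUE) % numPartitions;
            partitions.get(partition)
                    .computeIfAbsent(pair.getKey(), k -> new ArrayList<>())
                    .add(pair.getValue());
        }
        return partitions;
    }

    // Steps 7-8: reduce every group to (K3, V3) and collect the output.
    static TreeMap<String, Integer> reduce(List<TreeMap<String, List<Integer>>> partitions) {
        TreeMap<String, Integer> result = new TreeMap<>();
        for (TreeMap<String, List<Integer>> partition : partitions) {
            for (Map.Entry<String, List<Integer>> group : partition.entrySet()) {
                int sum = 0;
                for (int value : group.getValue()) {
                    sum += value;
                }
                result.put(group.getKey(), sum);
            }
        }
        return result;
    }

    static TreeMap<String, Integer> run(List<String> lines, int numPartitions) {
        return reduce(shuffle(map(lines), numPartitions));
    }

    public static void main(String[] args) {
        System.out.println(run(List.of("a b a", "b c"), 2)); // prints {a=2, b=2, c=1}
    }
}
```

In a real job the framework performs the shuffle; only the map and reduce logic, plus the input and output formats, are supplied by the programmer.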

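2. MapReduce Example: Traffic Statistics

2.1 Requirements

For each phone number, compute the total number of upstream packets, the total number of downstream packets, the total upstream traffic, and the total downstream traffic. Analysis: use the phone number as the key and the four fields (upstream packets, downstream packets, upstream traffic, downstream traffic) as the value. This key-value pair is the output of the map stage and the input of the reduce stage.

Data: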

1363157985066 13726230503 00-FD-07-A4-72-B8:CMCC 120.196.100.82 i02.c.aliimg.com 游戏娱乐 24 27 2481 24681 200

1363157995052 13826544101 5C-0E-8B-C7-F1-E0:CMCC 120.197.40.4 jd.com 京东购物 4 0 264 0 200

1363157991076 13926435656 20-10-7A-28-CC-0A:CMCC 120.196.100.99 taobao.com 淘宝购物 2 4 132 1512 200

1363154400022 13926251106 5C-0E-8B-8B-B1-50:CMCC 120.197.40.4 cnblogs.com 技术门户 4 0 240 0 200

1363157993044 18211575961 94-71-AC-CD-E6-18:CMCC-EASY 120.196.100.99 iface.qiyi.com 视频网站 15 12 1527 2106 200

1363157995074 84138413 5C-0E-8B-8C-E8-20:7DaysInn 120.197.40.4 122.72.52.12 未知 20 16 4116 1432 200

1363157993055 13560439658 C4-17-FE-BA-DE-D9:CMCC 120.196.100.99 sougou.com 综合门户 18 15 1116 954 200

1363157995033 15920133257 5C-0E-8B-C7-BA-20:CMCC 120.197.40.4 sug.so.360.cn 信息安全 20 20 3156 2936 200

1363157983019 13719199419 68-A1-B7-03-07-B1:CMCC-EASY 120.196.100.82 baidu.com 综合搜索 4 0 240 0 200

1363157984041 13660577991 5C-0E-8B-92-5C-20:CMCC-EASY 120.197.40.4 s19.cnzz.com 站点统计 24 9 6960 690 200

1363157973098 15013685858 5C-0E-8B-C7-F7-90:CMCC 120.197.40.4 rank.ie.sogou.com 搜索引擎 28 27 3659 3538 200

1363157986029 15989002119 E8-99-C4-4E-93-E0:CMCC-EASY 120.196.100.99 www.umeng.com 站点统计 3 3 1938 180 200

1363157992093 13560439658 C4-17-FE-BA-DE-D9:CMCC 120.196.100.99 zhilian.com 招聘门户 15 9 918 4938 200

1363157986041 13480253104 5C-0E-8B-C7-FC-80:CMCC-EASY 120.197.40.4 csdn.net 技术门户 3 3 180 180 200

1363157984040 13602846565 5C-0E-8B-8B-B6-00:CMCC 120.197.40.4 2052.flash2-http.qq.com 综合门户 15 12 1938 2910 200

1363157995093 13922314466 00-FD-07-A2-EC-BA:CMCC 120.196.100.82 img.qfc.cn 图片大全 12 12 3008 3720 200

1363157982040 13502468823 5C-0A-5B-6A-0B-D4:CMCC-EASY 120.196.100.99 y0.ifengimg.com 综合门户 57 102 7335 110349 200

1363157986072 18320173382 84-25-DB-4F-10-1A:CMCC-EASY 120.196.100.99 input.shouji.sogou.com 搜索引擎 21 18 9531 2412 200

1363157990043 13925057413 00-1F-64-E1-E6-9A:CMCC 120.196.100.55 t3.baidu.com 搜索引擎 69 63 11058 48243 200

1363157988072 13760778710 00-FD-07-A4-7B-08:CMCC 120.196.100.82 http://youku.com/ 视频网站 2 2 120 120 200

1363157985079 13823070001 20-7C-8F-70-68-1F:CMCC 120.196.100.99 img.qfc.cn 图片浏览 6 3 360 180 200

1363157985069 13600217502 00-1F-64-E2-E8-B1:CMCC 120.196.100.55 www.baidu.com 综合门户 18 138 1080 186852 200

1363157985059 13600217502 00-1F-64-E2-E8-B1:CMCC 120.196.100.55 www.baidu.com 综合门户 19 128 1177 16852 200

2.2 Analysis

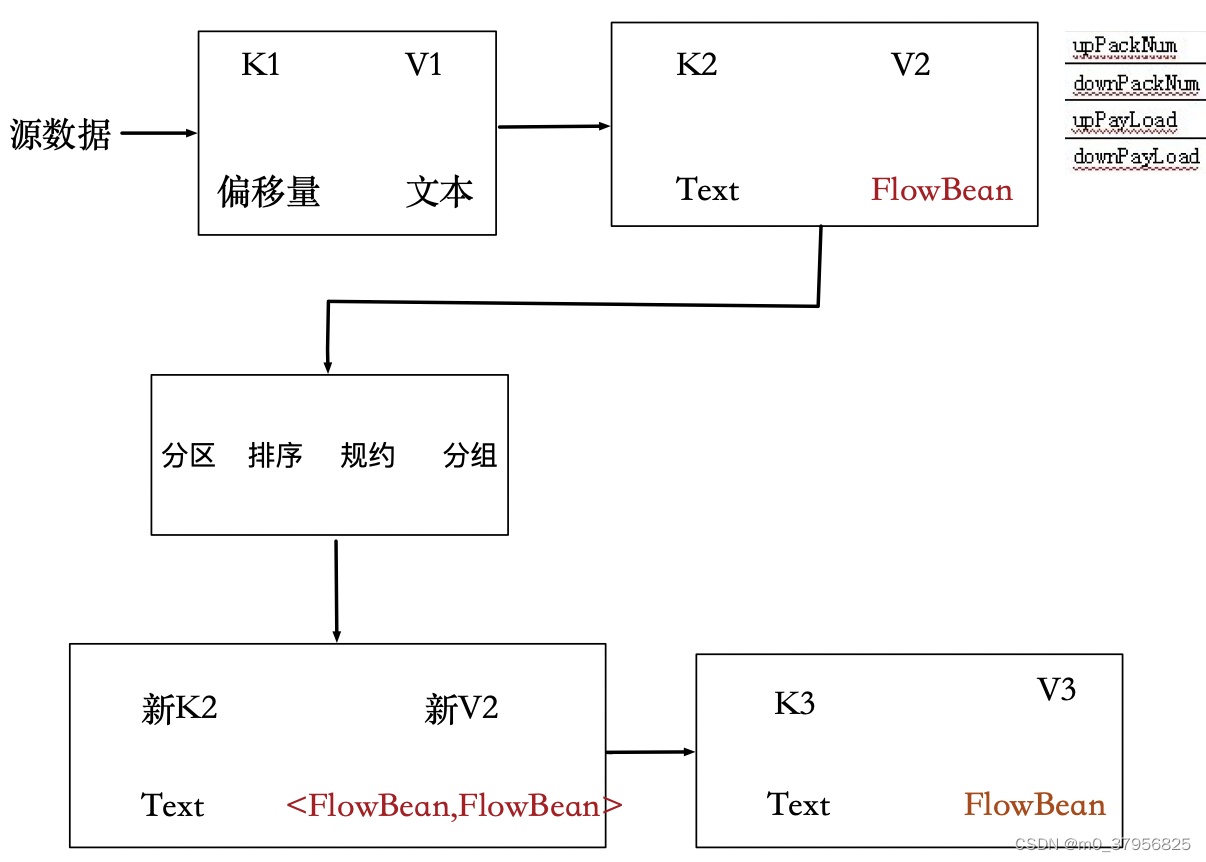

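Combining the requirements with the MapReduce programming conventions gives the flow shown in the figure above, which breaks down as follows:

1. Read the source data to obtain k1 and v1: k1 is the byte offset of the line within the file, and v1 is the text of that line.

2. In the map stage, convert k1 and v1 into k2 and v2: k2 is the phone number, and v2 holds the four required fields, defined as a FlowBean object.

3. After the shuffle stage (partition, sort, combine, group), we get a new k2 and v2, where v2 is now a collection of FlowBean objects.

4. In the reduce stage, convert the new k2 and v2 into k3 and v3 by applying the required logic (summation).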
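To make the four steps concrete, here is a small plain-Java sketch that applies them to three records from the sample data. It is an assumption-laden illustration, not the Hadoop job itself: the class `FlowAnalysisSketch` is hypothetical, map, shuffle and reduce are collapsed into a single pass, the records are written tab-separated to match what the mapper expects, and the category column is replaced by `-` to keep the source ASCII-only.

```java
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Hypothetical in-memory walk-through of the analysis; no Hadoop classes are used.
final class FlowAnalysisSketch {

    // Three records from the sample data (category column replaced by "-").
    static final List<String> SAMPLE = List.of(
            "1363157993055\t13560439658\tC4-17-FE-BA-DE-D9:CMCC\t120.196.100.99\tsougou.com\t-\t18\t15\t1116\t954\t200",
            "1363157992093\t13560439658\tC4-17-FE-BA-DE-D9:CMCC\t120.196.100.99\tzhilian.com\t-\t15\t9\t918\t4938\t200",
            "1363157995052\t13826544101\t5C-0E-8B-C7-F1-E0:CMCC\t120.197.40.4\tjd.com\t-\t4\t0\t264\t0\t200");

    // k2 is the phone number (field 1); v2 is the four counters (fields 6 to 9).
    // Grouping by key and summing plays the role of shuffle plus reduce.
    static Map<String, int[]> totalsByPhone(List<String> lines) {
        Map<String, int[]> totals = new TreeMap<>();
        for (String line : lines) {
            String[] fields = line.split("\t");
            int[] sums = totals.computeIfAbsent(fields[1], k -> new int[4]);
            for (int i = 0; i < 4; i++) {
                sums[i] += Integer.parseInt(fields[6 + i]);
            }
        }
        return totals;
    }

    public static void main(String[] args) {
        // prints:
        // 13560439658 [33, 24, 2034, 5892]
        // 13826544101 [4, 0, 264, 0]
        for (Map.Entry<String, int[]> entry : totalsByPhone(SAMPLE).entrySet()) {
            System.out.println(entry.getKey() + " " + Arrays.toString(entry.getValue()));
        }
    }
}
```

The phone number 13560439658 appears twice in the input, so its four counters are added together, while 13826544101 appears once and passes through unchanged.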

2.3 Code

- Define FlowBean, the custom value type emitted by the map stage

```java
package cn.itcast.flow_count_demo1;

import org.apache.hadoop.io.Writable;

import java.io.DataInput;
import java.io.DataOutput;
import java.io.IOException;

// Value type emitted by the mapper: the four counters for one phone number.
public class FlowBean implements Writable {

    private Integer upFlow;        // upstream packets
    private Integer downFlow;      // downstream packets
    private Integer upCountFlow;   // total upstream traffic
    private Integer downCountFlow; // total downstream traffic

    public Integer getUpFlow() {
        return upFlow;
    }

    public void setUpFlow(Integer upFlow) {
        this.upFlow = upFlow;
    }

    public Integer getDownFlow() {
        return downFlow;
    }

    public void setDownFlow(Integer downFlow) {
        this.downFlow = downFlow;
    }

    public Integer getUpCountFlow() {
        return upCountFlow;
    }

    public void setUpCountFlow(Integer upCountFlow) {
        this.upCountFlow = upCountFlow;
    }

    public Integer getDownCountFlow() {
        return downCountFlow;
    }

    public void setDownCountFlow(Integer downCountFlow) {
        this.downCountFlow = downCountFlow;
    }

    // Tab-separated form; this is what TextOutputFormat writes as the value.
    @Override
    public String toString() {
        return upFlow +
                "\t" + downFlow +
                "\t" + upCountFlow +
                "\t" + downCountFlow;
    }

    // Serialization: the field order here must match readFields.
    @Override
    public void write(DataOutput dataOutput) throws IOException {
        dataOutput.writeInt(upFlow);
        dataOutput.writeInt(downFlow);
        dataOutput.writeInt(upCountFlow);
        dataOutput.writeInt(downCountFlow);
    }

    // Deserialization: read the fields in the same order they were written.
    @Override
    public void readFields(DataInput dataInput) throws IOException {
        this.upFlow = dataInput.readInt();
        this.downFlow = dataInput.readInt();
        this.upCountFlow = dataInput.readInt();
        this.downCountFlow = dataInput.readInt();
    }
}
```
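The `write` and `readFields` pair can be exercised without Hadoop, because `DataOutput` and `DataInput` are plain `java.io` interfaces. The sketch below is a stand-in under stated assumptions: `FlowRecord` is a hypothetical class that copies FlowBean's two method bodies but does not implement `Writable`, and the round trip goes through an in-memory byte array rather than the framework's own buffers.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.DataInput;
import java.io.DataInputStream;
import java.io.DataOutput;
import java.io.DataOutputStream;
import java.io.IOException;
import java.io.UncheckedIOException;

// Hypothetical round-trip check for FlowBean-style serialization; stdlib only.
final class FlowRecordRoundTrip {

    // Stand-in for FlowBean: same four fields, same write/readFields order.
    static final class FlowRecord {
        int upFlow;
        int downFlow;
        int upCountFlow;
        int downCountFlow;

        void write(DataOutput out) throws IOException {
            out.writeInt(upFlow);
            out.writeInt(downFlow);
            out.writeInt(upCountFlow);
            out.writeInt(downCountFlow);
        }

        void readFields(DataInput in) throws IOException {
            upFlow = in.readInt();
            downFlow = in.readInt();
            upCountFlow = in.readInt();
            downCountFlow = in.readInt();
        }
    }

    // Writes the record into a byte array: four ints, 4 bytes each.
    static byte[] serialize(FlowRecord record) {
        ByteArrayOutputStream bytes = new ByteArrayOutputStream();
        try (DataOutputStream out = new DataOutputStream(bytes)) {
            record.write(out);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return bytes.toByteArray();
    }

    // Rebuilds a record from the bytes produced by serialize.
    static FlowRecord deserialize(byte[] data) {
        FlowRecord record = new FlowRecord();
        try (DataInputStream in = new DataInputStream(new ByteArrayInputStream(data))) {
            record.readFields(in);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return record;
    }

    public static void main(String[] args) {
        FlowRecord original = new FlowRecord();
        original.upFlow = 18;
        original.downFlow = 15;
        original.upCountFlow = 1116;
        original.downCountFlow = 954;

        byte[] data = serialize(original);
        FlowRecord copy = deserialize(data);
        System.out.println(data.length + " bytes, upCountFlow=" + copy.upCountFlow);
    }
}
```

If the two methods disagreed on field order, the copy would come back with its values swapped and no exception would be raised, which is why the order matters.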

- Define the FlowCounterMapper class

```java
package cn.itcast.flow_count_demo1;

import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Mapper;

import java.io.IOException;

// Converts (k1 = line offset, v1 = line text) into (k2 = phone number, v2 = FlowBean).
public class FlowCounterMapper extends Mapper<LongWritable, Text, Text, FlowBean> {

    @Override
    protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {
        // Fields are tab-separated; field 1 is the phone number.
        String[] split = value.toString().split("\t");
        String phoneNum = split[1];

        // Fields 6 to 9 are the four counters required by the task.
        FlowBean flowBean = new FlowBean();
        flowBean.setUpFlow(Integer.parseInt(split[6]));
        flowBean.setDownFlow(Integer.parseInt(split[7]));
        flowBean.setUpCountFlow(Integer.parseInt(split[8]));
        flowBean.setDownCountFlow(Integer.parseInt(split[9]));

        context.write(new Text(phoneNum), flowBean);
    }
}
```
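The mapper assumes every line has at least ten tab-separated fields and that fields 6 to 9 are integers; a short or corrupt line would throw and fail the task. One possible way to guard against that is sketched below in plain Java. The helper `parseCounters` and the class `FlowLineParser` are hypothetical names introduced for this example, and skipping bad lines (rather than failing) is an assumption about the desired behavior, not something the article specifies.

```java
import java.util.Arrays;
import java.util.Optional;

// Hypothetical defensive parser for one input line; stdlib only.
final class FlowLineParser {

    // Returns {upFlow, downFlow, upCountFlow, downCountFlow} from fields 6 to 9,
    // or an empty Optional when the line is too short or a counter is not an integer.
    static Optional<int[]> parseCounters(String line) {
        String[] fields = line.split("\t");
        if (fields.length < 10) {
            return Optional.empty();
        }
        int[] counters = new int[4];
        try {
            for (int i = 0; i < 4; i++) {
                counters[i] = Integer.parseInt(fields[6 + i].trim());
            }
        } catch (NumberFormatException e) {
            return Optional.empty();
        }
        return Optional.of(counters);
    }

    public static void main(String[] args) {
        // Sample record with the category column replaced by "-".
        String good = "1363157995052\t13826544101\t5C-0E-8B-C7-F1-E0:CMCC\t120.197.40.4\tjd.com\t-\t4\t0\t264\t0\t200";
        String bad = "1363157995052\t13826544101";

        System.out.println(parseCounters(good).map(Arrays::toString).orElse("skipped")); // prints [4, 0, 264, 0]
        System.out.println(parseCounters(bad).map(Arrays::toString).orElse("skipped"));  // prints skipped
    }
}
```

Inside `map`, such a helper would let the mapper return early on an empty result instead of emitting a record.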

- Define the FlowCounterReducer class

```java
package cn.itcast.flow_count_demo1;

import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;

// Sums the four counters of every FlowBean that shares the same phone number.
public class FlowCounterReducer extends Reducer<Text, FlowBean, Text, FlowBean> {

    @Override
    protected void reduce(Text key, Iterable<FlowBean> values, Context context) throws IOException, InterruptedException {
        // Primitive accumulators avoid boxing on every addition.
        int upFlow = 0;
        int downFlow = 0;
        int upCountFlow = 0;
        int downCountFlow = 0;

        for (FlowBean value : values) {
            upFlow += value.getUpFlow();
            downFlow += value.getDownFlow();
            upCountFlow += value.getUpCountFlow();
            downCountFlow += value.getDownCountFlow();
        }

        // Emit (k3 = phone number, v3 = FlowBean holding the totals).
        FlowBean flowBean = new FlowBean();
        flowBean.setUpFlow(upFlow);
        flowBean.setDownFlow(downFlow);
        flowBean.setUpCountFlow(upCountFlow);
        flowBean.setDownCountFlow(downCountFlow);

        context.write(key, flowBean);
    }
}
```

- Program entry point: the JobMain class

```java
package cn.itcast.flow_count_demo1;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.conf.Configured;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.lib.input.TextInputFormat;
import org.apache.hadoop.mapreduce.lib.output.TextOutputFormat;
import org.apache.hadoop.util.Tool;
import org.apache.hadoop.util.ToolRunner;

public class JobMain extends Configured implements Tool {

    // This method defines a single job.
    @Override
    public int run(String[] args) throws Exception {
        // 1: Create the job object.
        Job job = Job.getInstance(super.getConf(), "mapreduce_flowcount");

        // Add this setting if the job fails when run from a packaged jar.
        job.setJarByClass(JobMain.class);

        // 2: Configure the job (the eight steps).

        // Step 1: set how the input is read and where it is read from.
        job.setInputFormatClass(TextInputFormat.class);
        //TextInputFormat.addInputPath(job, new Path("hdfs://node01:8020/wordcount"));
        TextInputFormat.addInputPath(job, new Path("file:///Users/yubo/Desktop/code/java_workspace/wordcount_mapreduce/input/flow"));

        // Step 2: set the Map stage logic and its output types.
        job.setMapperClass(FlowCounterMapper.class);
        // Type of K2
        job.setMapOutputKeyClass(Text.class);
        // Type of V2
        job.setMapOutputValueClass(FlowBean.class);

        // Steps 3 (partition) and 4 (sort): nothing is set here
        // Step 5: combine (Combiner): nothing is set here
        // Step 6: group: nothing is set here

        // Step 7: set the Reduce stage logic and its output types.
        job.setReducerClass(FlowCounterReducer.class);
        // Type of K3
        job.setOutputKeyClass(Text.class);
        // Type of V3
        job.setOutputValueClass(FlowBean.class);

        // Step 8: set the output format.
        job.setOutputFormatClass(TextOutputFormat.class);
        // Output path
        TextOutputFormat.setOutputPath(job, new Path("file:///Users/yubo/Desktop/code/java_workspace/wordcount_mapreduce/output/flow"));

        // Wait for the job to finish.
        boolean bl = job.waitForCompletion(true);
        return bl ? 0 : 1;
    }

    public static void main(String[] args) throws Exception {
        Configuration configuration = new Configuration();

        // Launch the job.
        int run = ToolRunner.run(configuration, new JobMain(), args);
        System.exit(run);
    }
}
```
