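Week 5: Sneaker Recognition

🍨 This post is a learning log from the 🔗365-Day Deep Learning Training Camp

🍦 Reference article: 365-Day Deep Learning Training Camp, Week 6: Hollywood Star Recognition

🍖 Original author: K同学啊 | tutoring and custom projects available

🚀 Source: K同学的学习圈子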

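Learning objectives:

Requirements:

Save the best model weights during training

Call the official VGG-16 network architecture

Stretch goals:

Save the best model weights during training

Tune the code so that test-set accuracy reaches 86%

Contents:

I. Preparation

1.1 Setting up the GPU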

import torch
import torch.nn as nn
from torchvision import transforms, datasets
import os, PIL, pathlib, warnings

warnings.filterwarnings('ignore')  # suppress warning messages

# Use the GPU when one is available, otherwise fall back to the CPU
device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')
device

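1.2 Loading the data

import os, PIL, random, pathlib

data_dir = pathlib.Path('./6-data')
# print(data_dir)  # 6-data

data_paths = list(data_dir.glob("*"))
# print(data_paths)  # list of paths to every entry directly under data_dir

# Class names are the sub-directory names. path.name works on every OS,
# whereas splitting the path string on '\\' only works on Windows.
classNames = sorted(path.name for path in data_paths if path.is_dir())
print(classNames)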
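Deriving class names from folder names can be done in an OS-independent way with pathlib. The following is a minimal sketch that uses a temporary directory with hypothetical folder names (`class_a`, `class_b`) instead of the real dataset; `list_class_names` is an illustrative helper, not part of the original code.

```python
import pathlib
import tempfile

def list_class_names(root):
    """Return the sorted names of the sub-directories directly under root."""
    root = pathlib.Path(root)
    return sorted(p.name for p in root.iterdir() if p.is_dir())

# Build a throwaway directory tree with hypothetical class folders.
with tempfile.TemporaryDirectory() as tmp:
    for name in ["class_b", "class_a"]:
        (pathlib.Path(tmp) / name).mkdir()
    # A stray file must not be treated as a class.
    (pathlib.Path(tmp) / "notes.txt").write_text("not a class")
    demo_names = list_class_names(tmp)

print(demo_names)  # ['class_a', 'class_b']
```

Sorting matters because `datasets.ImageFolder` assigns label indices to class folders in sorted order, so a sorted list lines up with the labels.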

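train_transforms = transforms.Compose([
    transforms.Resize([224, 224]),   # resize every image to 224x224
    transforms.ToTensor(),           # PIL image -> float tensor with values in [0, 1]
    transforms.Normalize(            # standardize each channel with the ImageNet statistics
        mean=[0.485, 0.456, 0.406],
        std=[0.229, 0.224, 0.225])
])

total_data = datasets.ImageFolder('./6-data', transform=train_transforms)
total_data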
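To make the effect of `transforms.Normalize` concrete: for each channel it computes `(value - mean) / std`. Here is a small sketch of that arithmetic in plain Python, applied to a single hypothetical mid-gray pixel; `normalize_pixel` is an illustrative helper, not a torchvision function.

```python
MEAN = [0.485, 0.456, 0.406]
STD = [0.229, 0.224, 0.225]

def normalize_pixel(rgb):
    """Apply (value - mean) / std to one RGB pixel whose values lie in [0, 1]."""
    return [(v - m) / s for v, m, s in zip(rgb, MEAN, STD)]

gray = normalize_pixel([0.5, 0.5, 0.5])
at_mean = normalize_pixel(MEAN)  # a pixel equal to the mean maps to zero

print([round(v, 3) for v in gray])
print(at_mean)
```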

1.3 Splitting the dataset

# 80% of the images for training, the remaining 20% for testing
train_size = int(0.8 * len(total_data))
test_size = len(total_data) - train_size
train_dataset, test_dataset = torch.utils.data.random_split(total_data, [train_size, test_size])
train_dataset, test_dataset

batch_size = 1

train_dl = torch.utils.data.DataLoader(train_dataset, batch_size=batch_size, shuffle=True, num_workers=1)
test_dl = torch.utils.data.DataLoader(test_dataset, batch_size=batch_size, shuffle=True, num_workers=1)

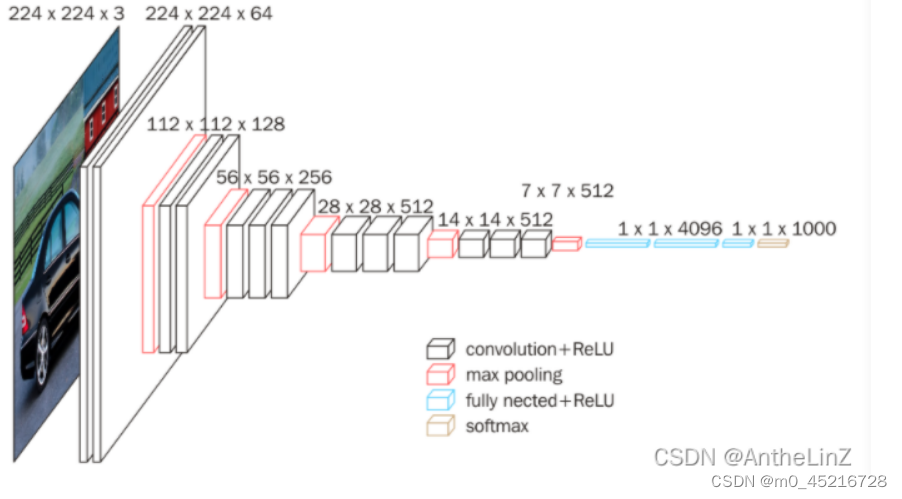

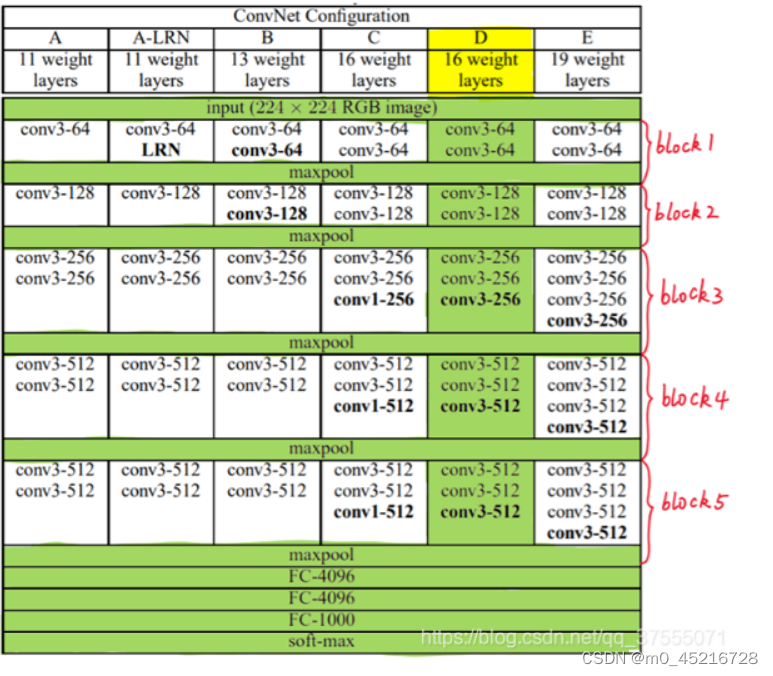
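Because `random_split` shuffles the indices, the train/test split changes from run to run unless the random generator is seeded. Below is a minimal sketch of a reproducible 80/20 split. The 10-sample `TensorDataset` and the seed value 42 are assumptions for illustration and stand in for the real image folder.

```python
import torch
from torch.utils.data import TensorDataset, random_split

# Hypothetical stand-in dataset: 10 samples with 3 features each.
toy_data = TensorDataset(torch.arange(30).reshape(10, 3), torch.arange(10))

train_size = int(0.8 * len(toy_data))
test_size = len(toy_data) - train_size

def split(seed):
    """Split toy_data 80/20 using a generator seeded with the given value."""
    generator = torch.Generator().manual_seed(seed)
    return random_split(toy_data, [train_size, test_size], generator=generator)

train_a, test_a = split(seed=42)
train_b, test_b = split(seed=42)

print(len(train_a), len(test_a))             # 8 2
print(train_a.indices == train_b.indices)    # True: same seed, same split
```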

II. Calling the official VGG-16 model

VGG-16 structure:

13 convolutional layers,

3 fully connected layers,

5 pooling layers
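The layer counts above can be checked against the standard VGG-16 layout, in which the "16" counts the layers that carry weights (13 convolutional plus 3 fully connected). The sketch below writes the layout as a plain list (the names `VGG16_CFG` and `NUM_FC_LAYERS` are illustrative) and counts the entries.

```python
# Layer layout of VGG-16's feature extractor: numbers are convolution
# output channels, "M" marks a max-pooling layer.
VGG16_CFG = [64, 64, "M", 128, 128, "M", 256, 256, 256, "M",
             512, 512, 512, "M", 512, 512, 512, "M"]
NUM_FC_LAYERS = 3  # the classifier head has three fully connected layers

num_conv = sum(1 for v in VGG16_CFG if v != "M")
num_pool = VGG16_CFG.count("M")

print(num_conv, num_pool, num_conv + NUM_FC_LAYERS)  # 13 5 16
```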

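from torchvision.models import vgg16

device = 'cuda' if torch.cuda.is_available() else 'cpu'
print('Using {} device'.format(device))

# Load the pretrained model and fine-tune it.
# Note: torchvision >= 0.13 deprecates pretrained=True in favor of the weights= argument.
model = vgg16(pretrained=True).to(device)

for param in model.parameters():
    param.requires_grad = False  # freeze the pretrained parameters so that only the last layer is trained

# Replace layer 6 of the classifier module with a new output layer, one unit per class
model.classifier._modules['6'] = nn.Linear(4096, len(classNames))
model.to(device)
model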
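Freezing works because a layer created after the freeze loop has `requires_grad=True` by default, so only the replaced head receives gradient updates. To see this without downloading VGG-16, here is a sketch on a small hypothetical stand-in network; the layer sizes and the 3 output classes are arbitrary.

```python
import torch.nn as nn

# Hypothetical small stand-in for a pretrained network.
net = nn.Sequential(nn.Linear(8, 16), nn.ReLU(), nn.Linear(16, 4))

# Freeze every existing parameter.
for param in net.parameters():
    param.requires_grad = False

# Swap the head for a fresh layer; its parameters are trainable by default.
net[2] = nn.Linear(16, 3)

trainable = sum(p.numel() for p in net.parameters() if p.requires_grad)
frozen = sum(p.numel() for p in net.parameters() if not p.requires_grad)
print(trainable, frozen)  # 51 144
```

The 51 trainable values are the new head's 16 x 3 weights plus 3 biases. The 144 frozen values belong to the first layer (8 x 16 weights plus 16 biases).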

III. Training the model

3.1 Writing the training function

def train(dataloader, model, loss_fun, optimizer):
    size = len(dataloader.dataset)  # number of training samples
    num_batches = len(dataloader)   # number of batches

    train_acc, train_loss = 0, 0

    for x, y in dataloader:
        x, y = x.to(device), y.to(device)

        # Forward pass and loss
        preds = model(x)
        loss = loss_fun(preds, y)

        # Backpropagation
        optimizer.zero_grad()  # reset the accumulated gradients
        loss.backward()        # compute the gradients
        optimizer.step()       # update the parameters

        # Accumulate accuracy and loss
        train_acc += (preds.argmax(1) == y).sum().item()
        train_loss += loss.item()

    train_acc /= size
    train_loss /= num_batches

    return train_acc, train_loss

3.2 Writing the test function

def test(dataloader, model, loss_fun):
    size = len(dataloader.dataset)  # number of test samples
    num_batches = len(dataloader)   # number of batches

    test_acc, test_loss = 0, 0

    # No gradients are needed during evaluation
    with torch.no_grad():
        for x, y in dataloader:
            x, y = x.to(device), y.to(device)

            # Forward pass and loss
            preds = model(x)
            loss = loss_fun(preds, y)

            test_loss += loss.item()
            test_acc += (preds.argmax(1) == y).sum().item()

    test_acc /= size
    test_loss /= num_batches

    return test_acc, test_loss
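Both functions divide the accuracy by the number of samples but the loss by the number of batches. The reason is that the correct-prediction count is accumulated per sample, while `nn.CrossEntropyLoss` with its default `reduction='mean'` already returns one averaged value per batch. The following sketch shows the bookkeeping on made-up per-batch numbers.

```python
# Hypothetical per-batch results: (correct predictions, mean batch loss, batch size)
batches = [(3, 0.9, 4), (4, 0.5, 4), (1, 1.2, 2)]

size = sum(n for _, _, n in batches)   # total number of samples
num_batches = len(batches)             # number of batches

acc = sum(c for c, _, _ in batches) / size          # fraction of correct samples
loss = sum(l for _, l, _ in batches) / num_batches  # mean of the batch means

print(f"accuracy={acc:.2f}, loss={loss:.3f}")
```

When the last batch is smaller than the others, the mean of batch means weights its samples slightly more. With `batch_size = 1`, as in this post, every batch has one sample and the two averages coincide.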

3.3 Setting a dynamic learning rate

def adjust_learning_rate(optimizer, epoch, start_lr):
    # Every 2 epochs, decay the learning rate to 0.98 of its previous value
    lr = start_lr * (0.98 ** (epoch // 2))
    for param_group in optimizer.param_groups:
        param_group['lr'] = lr

learn_rate = 1e-4  # initial learning rate
optimizer = torch.optim.SGD(model.parameters(), lr=learn_rate)

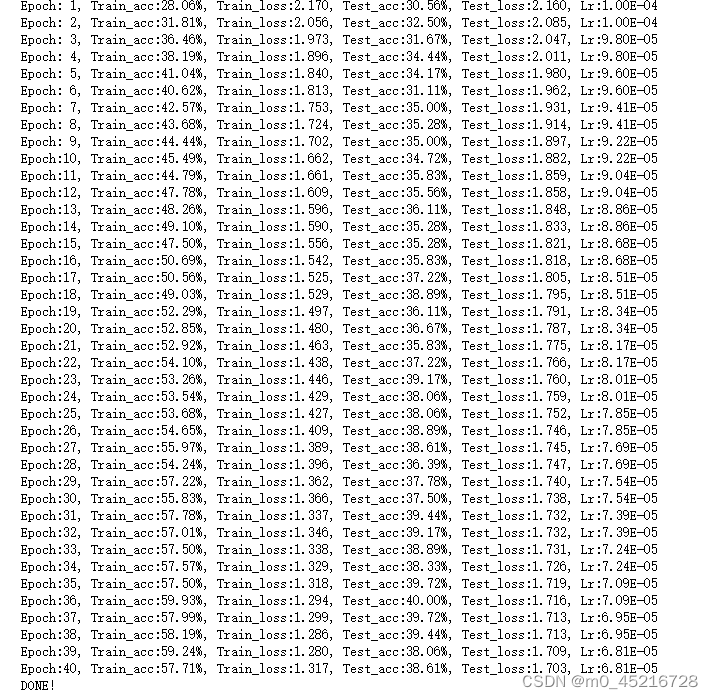
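The decay rule `start_lr * 0.98 ** (epoch // 2)` is easy to tabulate without an optimizer. Below is a small sketch using the same constants as above; `scheduled_lr` is an illustrative helper name.

```python
def scheduled_lr(epoch, start_lr=1e-4, decay=0.98, step=2):
    """Learning rate for a 0-based epoch under the step-decay rule."""
    return start_lr * decay ** (epoch // step)

for epoch in [0, 1, 2, 3, 39]:
    print(f"epoch {epoch:2d}: lr = {scheduled_lr(epoch):.3e}")
```

Epochs 0 and 1 share the initial rate, and epochs 2 and 3 share the first decayed value. By the last of 40 epochs the rate has been decayed 19 times, to roughly 68% of its starting value, so this schedule is quite gentle.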

3.4 Training

# Note how the best model is saved

import copy

loss_fun = nn.CrossEntropyLoss()  # loss function
epochs = 40

train_loss = []
train_acc = []
test_loss = []
test_acc = []

best_acc = 0  # best test accuracy so far, used to decide when to keep a model

for epoch in range(epochs):
    # Update the learning rate with the custom schedule
    adjust_learning_rate(optimizer, epoch, learn_rate)

    model.train()
    epoch_train_acc, epoch_train_loss = train(train_dl, model, loss_fun, optimizer)

    model.eval()
    epoch_test_acc, epoch_test_loss = test(test_dl, model, loss_fun)

    # Keep a copy of the best model in best_model
    if epoch_test_acc > best_acc:
        best_acc = epoch_test_acc
        best_model = copy.deepcopy(model)

    train_acc.append(epoch_train_acc)
    train_loss.append(epoch_train_loss)
    test_acc.append(epoch_test_acc)
    test_loss.append(epoch_test_loss)

    # Read back the current learning rate
    lr = optimizer.state_dict()['param_groups'][0]['lr']

    # Accuracies are fractions in [0, 1], so scale them by 100 for the '%' format
    template = ('Epoch:{:2d}, Train_acc:{:.2f}%, Train_loss:{:.3f}, Test_acc:{:.2f}%, Test_loss:{:.3f}, Lr:{:.2E}')
    print(template.format(epoch+1, epoch_train_acc*100, epoch_train_loss, epoch_test_acc*100, epoch_test_loss, lr))

# Save the best model's weights to a file (best_model, not the last-epoch model)
PATH = './best_model.pth'  # file name for the saved parameters
torch.save(best_model.state_dict(), PATH)

print('DONE!')

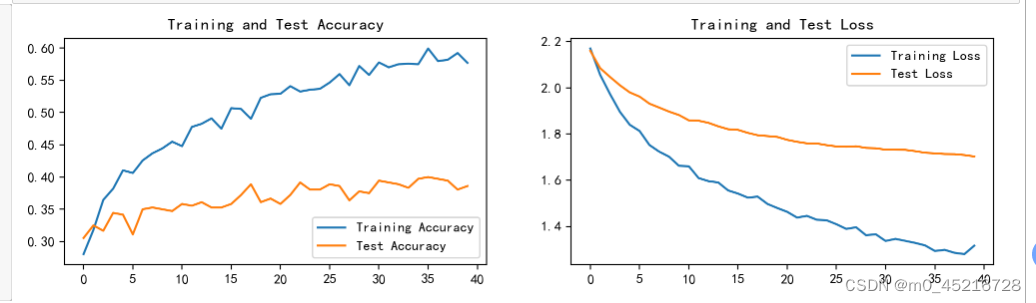
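Keeping the best model relies on two things: `copy.deepcopy` takes a snapshot that later training cannot change, and the snapshot's `state_dict()` is what gets written to disk. The sketch below checks both on a hypothetical tiny `nn.Linear` model, using a temporary directory rather than the real `./best_model.pth`.

```python
import copy
import os
import tempfile

import torch
import torch.nn as nn

net = nn.Linear(4, 2)            # hypothetical tiny model
best_net = copy.deepcopy(net)    # snapshot taken at the "best" epoch

# Simulate further training that changes the live model's weights.
with torch.no_grad():
    net.weight.add_(1.0)

with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "best_model.pth")
    torch.save(best_net.state_dict(), path)

    # Reload the weights into a freshly constructed model of the same shape.
    restored = nn.Linear(4, 2)
    restored.load_state_dict(torch.load(path, map_location="cpu"))

snapshot_kept = torch.equal(restored.weight, best_net.weight)
differs_from_live = not torch.equal(restored.weight, net.weight)
print(snapshot_kept, differs_from_live)  # True True
```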

IV. Visualizing the results

4.1 Loss and accuracy curves

import matplotlib.pyplot as plt
import warnings

warnings.filterwarnings('ignore')  # suppress warning messages

def myplot(train_acc, train_loss, test_acc, test_loss):
    plt.rcParams['font.sans-serif'] = ['SimHei']  # font that can render Chinese labels
    plt.rcParams['axes.unicode_minus'] = False    # render the minus sign correctly
    plt.rcParams['figure.dpi'] = 200              # figure resolution

    epoch_range = range(len(train_acc))

    plt.figure(figsize=(12, 3))

    # Left panel: accuracy
    plt.subplot(1, 2, 1)
    plt.plot(epoch_range, train_acc, label='Training Accuracy')
    plt.plot(epoch_range, test_acc, label='Test Accuracy')
    plt.legend(loc='lower right')
    plt.title('Training and Test Accuracy')

    # Right panel: loss
    plt.subplot(1, 2, 2)
    plt.plot(epoch_range, train_loss, label='Training Loss')
    plt.plot(epoch_range, test_loss, label='Test Loss')
    plt.legend(loc='upper right')
    plt.title('Training and Test Loss')

    plt.show()

myplot(train_acc, train_loss, test_acc, test_loss)

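4.2 Predicting a specified image

torch.squeeze(input, dim=None): reduces the number of dimensions by removing dimensions of size 1.

torch.unsqueeze(input, dim): increases the number of dimensions by inserting a dimension of size 1 at position dim.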
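In the prediction step, `unsqueeze(0)` is what turns a single image into a batch of one, which is the input shape the model expects. The following sketch shows the shape changes on a zero-filled placeholder tensor.

```python
import torch

img = torch.zeros(3, 224, 224)   # one image: channels x height x width
batch = img.unsqueeze(0)         # add a batch dimension at position 0
restored = batch.squeeze(0)      # remove it again

print(tuple(batch.shape))     # (1, 3, 224, 224)
print(tuple(restored.shape))  # (3, 224, 224)
```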

from PIL import Image

classes = list(total_data.class_to_idx)  # class names, ordered by label index

def predict_one_image(image_path, model, transforms, classes):
    test_img = Image.open(image_path).convert('RGB')
    test_img = transforms(test_img)
    img = test_img.to(device).unsqueeze(0)  # add the batch dimension the model expects

    model.eval()
    with torch.no_grad():  # no gradients are needed for inference
        output = model(img)

    _, pred = torch.max(output, 1)
    pred_class = classes[pred.item()]  # convert the 1-element tensor to a Python int
    print(f'Predicted class: {pred_class}')

# Predict one image from the training set
predict_one_image(image_path='./6-data/Angelina Jolie/002_8f8da10e.jpg', model=model, transforms=train_transforms, classes=classes)
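The last step of the prediction, going from the model's raw outputs to a class name, is an argmax followed by a list lookup. Below is a minimal sketch in plain Python; the class names and logit values are hypothetical.

```python
# Hypothetical class list and raw model outputs (logits) for one image.
classes = ["class_a", "class_b", "class_c"]
logits = [0.3, 2.1, -1.0]

# argmax: the index of the largest logit is the predicted label.
pred_index = max(range(len(logits)), key=lambda i: logits[i])
pred_class = classes[pred_index]

print(pred_index, pred_class)  # 1 class_b
```

The lookup is only valid when the list order matches the label indices. `list(total_data.class_to_idx)` satisfies this because `ImageFolder` builds that dictionary in label order.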

4.3 Model evaluation

best_model.eval()
epoch_test_acc, epoch_test_loss = test(test_dl, best_model, loss_fun)

# Check that the result matches the best accuracy recorded during training
epoch_test_acc, epoch_test_loss

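V. Summary

1. This week I reviewed last week's dynamic learning rate and how to implement it in code. Almost all neural networks are trained with gradient descent, and the learning rate controls how fast the weights are updated. A larger learning rate can reach the optimal weights in less time, but if it is too large the updates overshoot and oscillate, so the model never settles at the optimum. A learning rate that is too small makes convergence take much longer. A common compromise is therefore to start with a relatively large learning rate and decrease it gradually as the number of iterations grows, so that the model does not fluctuate much late in training and ends up close to the optimum.

2. This week I learned how to call the official VGG network architecture and how to save the best model weights during training.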
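The trade-off described in point 1 can be seen on the simplest possible problem, minimizing f(w) = w² with plain gradient descent. This is an illustrative toy with arbitrarily chosen learning rates, not the training setup used above.

```python
def gradient_descent(lr, steps=20, w=1.0):
    """Minimize f(w) = w**2 (gradient 2*w) and return the final |w|."""
    for _ in range(steps):
        w -= lr * 2 * w
    return abs(w)

for lr in [0.01, 0.4, 1.1]:
    print(f"lr={lr}: |w| after 20 steps = {gradient_descent(lr):.4g}")
```

With `lr=0.01` the weight moves toward 0 only slowly. With `lr=0.4` it gets very close to 0 within 20 steps. With `lr=1.1` every step overshoots the minimum by more than the previous one, so `|w|` grows instead of shrinking.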

Understanding and Categorizing Learning Rates

————————————————

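Copyright notice: This is an original article by CSDN blogger "m0_45216728", released under the CC 4.0 BY-SA license. When reposting, please include the link to the original source and this notice.

Original link: https://blog.csdn.net/m0_45216728/article/details/134868606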
