🏡 My environment:

Language: Python 3.8.7

IDE: Jupyter Notebook

Deep learning framework: PyTorch, torch == 2.0.1+cu117

torchvision == 0.15.2+cu117

1 Preparation

1.1 Set up the GPU

```python
import torch
import torch.nn as nn
from torchvision import transforms, datasets
import torchvision
import os, PIL, pathlib, warnings

warnings.filterwarnings('ignore')

device = 'cuda' if torch.cuda.is_available() else 'cpu'
device
```

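1.2 Load the data

```python
import os, PIL, random, pathlib

data_dir = './8-data/'
data_dir = pathlib.Path(data_dir)

data_paths = list(data_dir.glob('*'))
print(data_paths)

# Each subfolder of 8-data is one class; path.name gives the folder name on any OS
# (splitting on '\\' only works with Windows paths)
classname = [path.name for path in data_paths]
```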

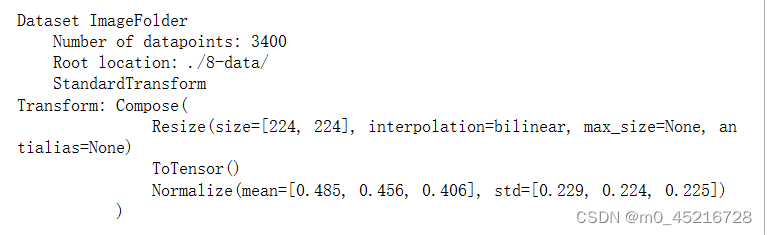
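Since `classname` comes from the folder listing, it helps to know how `datasets.ImageFolder` turns the same folders into integer labels. As far as I know, torchvision's `ImageFolder` sorts the subdirectory names and numbers them from 0, so label order is alphabetical rather than whatever order `glob` returns; `total_data.class_to_idx` shows the actual mapping. The plain-Python sketch below imitates that rule; the helper `find_class_names` and the folder names `class_a`/`class_b` are hypothetical.

```python
import pathlib
import tempfile

def find_class_names(data_dir):
    """Hypothetical helper: sorted subfolder names and a name -> label mapping."""
    root = pathlib.Path(data_dir)
    # Only directories count as classes; stray files are ignored.
    names = sorted(p.name for p in root.iterdir() if p.is_dir())
    # Labels are assigned in sorted order, starting at 0.
    class_to_idx = {name: idx for idx, name in enumerate(names)}
    return names, class_to_idx

# Build a throwaway folder tree with hypothetical class names.
with tempfile.TemporaryDirectory() as tmp:
    for name in ["class_b", "class_a"]:
        (pathlib.Path(tmp) / name).mkdir()
    (pathlib.Path(tmp) / "notes.txt").write_text("not a class")
    names, class_to_idx = find_class_names(tmp)

print(names)         # ['class_a', 'class_b']
print(class_to_idx)  # {'class_a': 0, 'class_b': 1}
```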

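```python
data_transform = transforms.Compose([
    transforms.Resize([224, 224]),   # resize every image to 224x224
    transforms.ToTensor(),           # convert to a tensor and scale pixel values to [0, 1]
    transforms.Normalize(mean=[0.485, 0.456, 0.406],   # per-channel ImageNet mean and std
                         std=[0.229, 0.224, 0.225])
])

# ImageFolder treats each subfolder as one class and applies data_transform to every image
total_data = datasets.ImageFolder('./8-data/', transform=data_transform)
total_data
```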
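To see what the normalization does numerically: `ToTensor` scales 8-bit pixel values to [0, 1], and `Normalize` then computes (x - mean) / std per channel with the ImageNet statistics above. The plain-Python sketch below (the helper `normalize_pixel` is hypothetical) applies both steps to a black and a white pixel, showing that normalized values land roughly between -2.1 and 2.6.

```python
# ImageNet statistics used by transforms.Normalize in the pipeline above.
MEAN = (0.485, 0.456, 0.406)
STD = (0.229, 0.224, 0.225)

def normalize_pixel(rgb_uint8):
    """Hypothetical helper: ToTensor-style scaling, then per-channel (x - mean) / std."""
    scaled = [v / 255.0 for v in rgb_uint8]   # ToTensor: [0, 255] -> [0, 1]
    return [(x - m) / s for x, m, s in zip(scaled, MEAN, STD)]

black = normalize_pixel((0, 0, 0))
white = normalize_pixel((255, 255, 255))
print([round(v, 3) for v in black])
print([round(v, 3) for v in white])
```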

1.3 Split the dataset

```python
train_size = int(0.8 * len(total_data))     # 80% for training
test_size = len(total_data) - train_size    # the remaining 20% for testing
train_dataset, test_dataset = torch.utils.data.random_split(total_data, [train_size, test_size])

batch_size = 32

train_dl = torch.utils.data.DataLoader(train_dataset, batch_size=batch_size, shuffle=True, num_workers=1)
# Shuffling does not change test accuracy, so the test loader keeps a fixed order
test_dl = torch.utils.data.DataLoader(test_dataset, batch_size=batch_size, shuffle=False, num_workers=1)
```
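The split sizes and batch counts follow from simple arithmetic: `int()` truncates the 80% share, the test set gets the remainder, and with the default `drop_last=False` a `DataLoader` yields ceil(size / batch_size) batches, the last of which may be smaller. The sketch uses hypothetical dataset sizes of 1,000 and 1,001 images, since the actual size of `8-data` is not stated here; `split_sizes` is a hypothetical helper.

```python
import math

def split_sizes(n_total, train_frac=0.8, batch_size=32):
    """Hypothetical helper mirroring section 1.3 plus DataLoader batch counts."""
    train_size = int(train_frac * n_total)   # int() truncates toward zero
    test_size = n_total - train_size         # the remainder goes to the test set
    # With drop_last=False (the default), the last batch may be smaller than batch_size.
    train_batches = math.ceil(train_size / batch_size)
    test_batches = math.ceil(test_size / batch_size)
    return train_size, test_size, train_batches, test_batches

print(split_sizes(1000))  # (800, 200, 25, 7)
print(split_sizes(1001))  # (800, 201, 25, 7)
```

If the same split is needed across runs, `random_split` also accepts a `generator` argument, for example `torch.Generator().manual_seed(42)`; without it the split changes every time the notebook is rerun.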

2 Build a model containing the C3 module

2.1 Build the model

```python
import torch
import torch.nn as nn

def autopad(k, p=None):  # kernel, padding
    # Pad to 'same': with stride 1 the output keeps the input's spatial size
    if p is None:
        p = k // 2 if isinstance(k, int) else [x // 2 for x in k]  # auto-pad
    return p

class Conv(nn.Module):
    # Standard convolution block: Conv2d -> BatchNorm2d -> activation
    def __init__(self, c1, c2, k=1, s=1, p=None, g=1, act=True):  # c1: ch_in, c2: ch_out, k: kernel_size, s: stride, p: padding, g: groups
        super().__init__()
        self.conv = nn.Conv2d(c1, c2, k, s, autopad(k, p), groups=g, bias=False)
        self.bn = nn.BatchNorm2d(c2)
        # Choose the activation: nn.SiLU() if act is True; act itself if it is a custom
        # nn.Module; otherwise nn.Identity(), i.e. no activation at all.
        self.act = nn.SiLU() if act is True else (act if isinstance(act, nn.Module) else nn.Identity())

    def forward(self, x):
        return self.act(self.bn(self.conv(x)))

class Bottleneck(nn.Module):
    # Standard bottleneck: 1x1 conv -> 3x3 conv, with an optional residual connection
    def __init__(self, c1, c2, shortcut=True, g=1, e=0.5):  # ch_in, ch_out, shortcut, groups, expansion
        super().__init__()
        c_ = int(c2 * e)  # hidden channels
        self.cv1 = Conv(c1, c_, 1, 1)
        self.cv2 = Conv(c_, c2, 3, 1, g=g)
        self.add = shortcut and c1 == c2  # residual only when input and output channels match

    def forward(self, x):
        return x + self.cv2(self.cv1(x)) if self.add else self.cv2(self.cv1(x))

class C3(nn.Module):
    # CSP bottleneck with 3 convolutions
    def __init__(self, c1, c2, n=1, shortcut=True, g=1, e=0.5):  # ch_in, ch_out, number of Bottlenecks, shortcut, groups, expansion
        super().__init__()
        c_ = int(c2 * e)  # hidden channels
        self.cv1 = Conv(c1, c_, 1, 1)
        self.cv2 = Conv(c1, c_, 1, 1)
        self.cv3 = Conv(2 * c_, c2, 1)  # act=FReLU(c2)
        self.m = nn.Sequential(*(Bottleneck(c_, c_, shortcut, g, e=1.0) for _ in range(n)))

    def forward(self, x):
        # Branch 1: cv1 -> n Bottlenecks; branch 2: cv2; concatenate on channels, then fuse with cv3
        return self.cv3(torch.cat((self.m(self.cv1(x)), self.cv2(x)), dim=1))

class model_K(nn.Module):
    def __init__(self):
        super(model_K, self).__init__()
        # Convolution block: 3 -> 32 channels, stride 2 (224x224 -> 112x112)
        self.Conv = Conv(3, 32, 3, 2)
        # C3 block 1: 32 -> 64 channels with 3 Bottlenecks; spatial size unchanged
        self.C3_1 = C3(32, 64, n=3, shortcut=True)
        # Fully connected layers for classification (64 * 112 * 112 = 802816 inputs)
        self.classifier = nn.Sequential(
            nn.Linear(in_features=802816, out_features=100),
            nn.ReLU(),
            nn.Linear(in_features=100, out_features=2)
        )

    def forward(self, x):
        x = self.Conv(x)
        x = self.C3_1(x)
        x = torch.flatten(x, start_dim=1)
        x = self.classifier(x)
        return x

model = model_K().to(device)
model
```

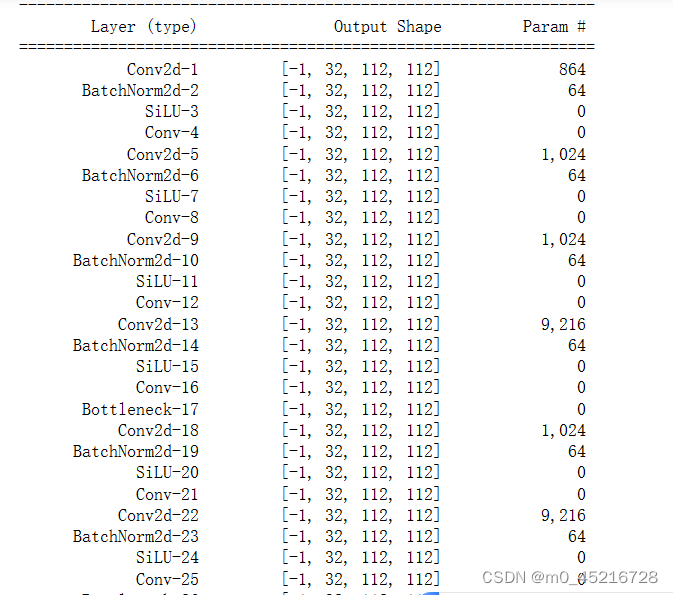
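The hard-coded `in_features=802816` can be derived by hand. With `autopad`, a k x k convolution gets padding k // 2, so the stem `Conv(3, 32, 3, 2)` maps 224x224 to (224 + 2*1 - 3) // 2 + 1 = 112, and every convolution inside C3 has stride 1 and 'same' padding, leaving 112x112 unchanged. C3 outputs c2 = 64 channels, so the flattened vector has 64 * 112 * 112 = 802816 elements. The plain-Python sketch below checks that arithmetic along with the Bottleneck residual condition; the helpers `conv_out`, `c3_channels` and `uses_residual` are hypothetical, and dilation is assumed to be 1.

```python
def conv_out(size, k, s=1, p=None):
    """Output height/width of nn.Conv2d (dilation 1); p=None mimics autopad for an int kernel."""
    if p is None:
        p = k // 2
    return (size + 2 * p - k) // s + 1

def c3_channels(c1, c2, e=0.5):
    """Channel flow through C3: two 1x1 branches of c_ channels, concat, then a 1x1 conv to c2."""
    c_ = int(c2 * e)
    return {"cv1": (c1, c_), "cv2": (c1, c_), "concat": 2 * c_, "cv3": (2 * c_, c2)}

def uses_residual(shortcut, c1, c2):
    """Same condition as Bottleneck.add."""
    return bool(shortcut and c1 == c2)

h = conv_out(224, k=3, s=2)          # stem Conv(3, 32, 3, 2)
assert conv_out(h, k=1) == h         # 1x1 convs in C3 keep the size
assert conv_out(h, k=3) == h         # the 3x3 stride-1 conv in Bottleneck keeps the size

flow = c3_channels(32, 64)
in_features = flow["cv3"][1] * h * h
print(h, in_features)                # 112 802816
print(flow)
print(uses_residual(True, 32, 32))   # True
```

Because every Bottleneck inside C3 is built as `Bottleneck(c_, c_, ...)`, its input and output channel counts always match, so the residual add is active whenever `shortcut` is truthy.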

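2.2 View the model details

```python
import torchsummary as summary

# torchsummary defaults to device="cuda"; pass the device in use so this also runs on a CPU-only machine
summary.summary(model, (3, 224, 224), device=device)
```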
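As a cross-check on the summary, the trainable-parameter count can be computed by hand: each `Conv` block has c1 * c2 * k^2 / g convolution weights (no bias) plus 2 * c2 BatchNorm weights and biases (running mean and variance are buffers, not parameters), and each `nn.Linear` has in * out + out. The pure-Python sketch below adds these up under the assumption that the layer definitions are exactly those in 2.1; all helper names are hypothetical. If the layers are transcribed correctly, the total should agree with the "Trainable params" line of the summary and with `sum(p.numel() for p in model.parameters())`.

```python
def conv_params(c1, c2, k, g=1):
    """Conv block from 2.1: bias-free Conv2d weights plus BatchNorm2d weight and bias."""
    return c1 * c2 * k * k // g + 2 * c2

def bottleneck_params(c, e=1.0):
    """Bottleneck(c, c, e=e): 1x1 Conv to c_ channels, then 3x3 Conv back to c."""
    c_ = int(c * e)
    return conv_params(c, c_, 1) + conv_params(c_, c, 3)

def c3_params(c1, c2, n, e=0.5):
    """C3: cv1, cv2, cv3 plus n Bottlenecks built with e=1.0 on c_ channels."""
    c_ = int(c2 * e)
    return (conv_params(c1, c_, 1) + conv_params(c1, c_, 1)
            + conv_params(2 * c_, c2, 1) + n * bottleneck_params(c_))

def linear_params(fan_in, fan_out):
    """nn.Linear: weight matrix plus bias vector."""
    return fan_in * fan_out + fan_out

parts = {
    "Conv": conv_params(3, 32, 3),
    "C3_1": c3_params(32, 64, n=3),
    "classifier": linear_params(802816, 100) + linear_params(100, 2),
}
total = sum(parts.values())
print(parts)
print(f"total: {total:,}")
print(f"classifier share: {parts['classifier'] / total:.2%}")
```

The result makes the imbalance obvious: the fully connected classifier holds over 99.9% of the roughly 80.3 million parameters, while the convolutional part has fewer than 40 thousand.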

3 Train the model

3.1 Write the training function

```python
def train(dataloader, model, loss_fun, optimizer):
    size = len(dataloader.dataset)   # number of training samples
    num_batches = len(dataloader)    # number of batches per epoch

    train_acc, train_loss = 0, 0

    for x, y in dataloader:
        x, y = x.to(device), y.to(device)

        # Compute predictions and the loss
        pred = model(x)
        loss = loss_fun(pred, y)

        # Backpropagation
        optimizer.zero_grad()  # reset gradients to zero
        loss.backward()        # backpropagate
        optimizer.step()       # update parameters

        # Accumulate accuracy and loss
        train_acc += (pred.argmax(1) == y).type(torch.float).sum().item()
        train_loss += loss.item()

    train_acc /= size
    train_loss /= num_batches

    return train_acc, train_loss
```
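Note the two different normalizations in `train()`: accuracy is divided by the number of samples, while the loss, which `nn.CrossEntropyLoss` already averages within each batch (its default is `reduction='mean'`), is divided by the number of batches. When the last batch is smaller, that per-batch average weights its samples more heavily than a per-sample average would. The pure-Python sketch below, with a hypothetical `epoch_metrics` helper and made-up batch numbers, shows the difference.

```python
def epoch_metrics(batches):
    """Hypothetical helper. batches: list of (mean_batch_loss, batch_size, n_correct)."""
    n_samples = sum(bs for _, bs, _ in batches)
    acc = sum(c for _, _, c in batches) / n_samples                        # like train_acc /= size
    loss_per_batch = sum(loss for loss, _, _ in batches) / len(batches)   # like train_loss /= num_batches
    loss_per_sample = sum(loss * bs for loss, bs, _ in batches) / n_samples  # sample-weighted alternative
    return acc, loss_per_batch, loss_per_sample

# Made-up epoch: two full batches of 32 and a short final batch of 4.
acc, loss_b, loss_s = epoch_metrics([(0.5, 32, 20), (0.5, 32, 24), (1.0, 4, 1)])
print(round(acc, 4), round(loss_b, 4), round(loss_s, 4))  # 0.6618 0.6667 0.5294
```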

3.2 Write the test function

```python
def test(dataloader, model, loss_fun):
    size = len(dataloader.dataset)   # number of test samples
    num_batches = len(dataloader)    # number of batches
    test_acc, test_loss = 0, 0

    # Gradients are not needed for evaluation; disabling them saves memory and time
    with torch.no_grad():
        for x, y in dataloader:
            x, y = x.to(device), y.to(device)

            # Compute the loss
            pred = model(x)
            loss = loss_fun(pred, y)

            test_acc += (pred.argmax(1) == y).type(torch.float).sum().item()
            test_loss += loss.item()

    test_acc /= size
    test_loss /= num_batches

    return test_acc, test_loss
```

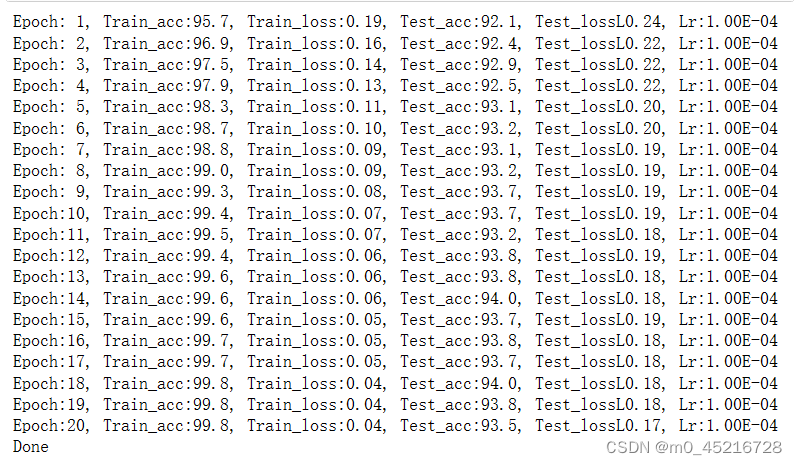
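`pred.argmax(1) == y` counts a prediction as correct when the largest logit sits at the label's index. Because softmax is monotonic, the argmax of the raw logits is the same class as the argmax of the probabilities, so no softmax layer is needed for accuracy (and `nn.CrossEntropyLoss` expects raw logits anyway). A plain-Python sketch of that count, with hypothetical logits and labels and hypothetical helpers `count_correct` and `softmax`:

```python
import math

def count_correct(logits, labels):
    """Pure-Python counterpart of (pred.argmax(1) == y).sum() for one batch."""
    correct = 0
    for row, label in zip(logits, labels):
        predicted = max(range(len(row)), key=lambda i: row[i])  # index of the largest value
        correct += int(predicted == label)
    return correct

def softmax(row):
    """Numerically stable softmax of one row of logits."""
    exps = [math.exp(v - max(row)) for v in row]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical logits for a 2-class batch of four images, and their true labels.
logits = [[2.0, -1.0], [0.1, 0.3], [1.5, 1.4], [-0.2, 0.9]]
labels = [0, 1, 1, 1]
print(count_correct(logits, labels))                          # 3
print(count_correct([softmax(r) for r in logits], labels))    # 3, same as on raw logits
```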

3.3 Run the training

```python
import copy

optimizer = torch.optim.SGD(model.parameters(), lr=1e-4)
loss_fun = nn.CrossEntropyLoss()

epochs = 20

train_acc = []
train_loss = []
test_acc = []
test_loss = []

best_acc = 0  # best test accuracy so far; used to select the best model

for epoch in range(epochs):
    model.train()
    epoch_train_acc, epoch_train_loss = train(train_dl, model, loss_fun, optimizer)

    model.eval()
    epoch_test_acc, epoch_test_loss = test(test_dl, model, loss_fun)

    # Keep an independent copy of the model whenever the test accuracy improves
    if epoch_test_acc > best_acc:
        best_acc = epoch_test_acc
        best_model = copy.deepcopy(model)

    train_acc.append(epoch_train_acc)
    train_loss.append(epoch_train_loss)
    test_acc.append(epoch_test_acc)
    test_loss.append(epoch_test_loss)

    # Get the current learning rate
    # print(optimizer.state_dict())
    lr = optimizer.state_dict()['param_groups'][0]['lr']

    template = ('Epoch:{:2d}, Train_acc:{:.1f}, Train_loss:{:.2f}, Test_acc:{:.1f}, Test_loss:{:.2f}, Lr:{:.2E}')
    print(template.format(epoch + 1, epoch_train_acc * 100, epoch_train_loss, epoch_test_acc * 100, epoch_test_loss, lr))

# Save the weights of the best model, not those of the last epoch
path = './best_model.pth'
torch.save(best_model.state_dict(), path)

print('Done')
```

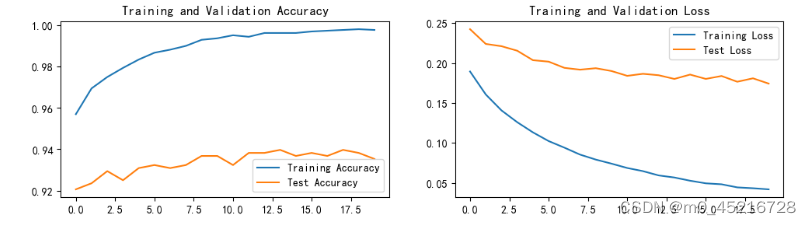
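The best-model logic in 3.3 relies on `copy.deepcopy`: assigning `best_model = model` would only store a reference, and later epochs would keep changing the weights behind it. The sketch below imitates that pattern with a plain dict standing in for the model (`track_best` and the accuracy values are hypothetical), and also shows what the `{:.2E}` field in the log template prints for `lr=1e-4`.

```python
import copy

def track_best(epoch_accs, live_state):
    """Hypothetical helper: snapshot the state from the epoch with the highest accuracy."""
    best_acc, best_epoch, best_state = 0.0, None, None
    for epoch, acc in enumerate(epoch_accs, start=1):
        live_state["epoch"] = epoch                 # stand-in for weights changing each epoch
        if acc > best_acc:                          # strict '>' keeps the earliest epoch on ties
            best_acc, best_epoch = acc, epoch
            best_state = copy.deepcopy(live_state)  # an independent snapshot, not a reference
    return best_acc, best_epoch, best_state

live = {"epoch": 0}
accs = [0.62, 0.71, 0.69, 0.71, 0.70]   # hypothetical per-epoch test accuracies
best_acc, best_epoch, best_state = track_best(accs, live)
print(best_acc, best_epoch, best_state)  # 0.71 2 {'epoch': 2}
print(live)                              # {'epoch': 5}: the live object kept changing
print('Lr:{:.2E}'.format(1e-4))          # Lr:1.00E-04
```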

4 Visualize the results

4.1 Loss and accuracy curves

```python
import matplotlib.pyplot as plt

plt.rcParams['font.sans-serif'] = ['SimHei']  # a font that can render Chinese labels
plt.rcParams['axes.unicode_minus'] = False    # display minus signs correctly with that font
plt.rcParams['figure.dpi'] = 100              # figure resolution

plt.figure(figsize=(12, 3))

plt.subplot(1, 2, 1)
plt.plot(range(epochs), train_acc, label='Training Accuracy')
plt.plot(range(epochs), test_acc, label='Test Accuracy')
plt.legend(loc='lower right')
plt.title('Training and Test Accuracy')

plt.subplot(1, 2, 2)
plt.plot(range(epochs), train_loss, label='Training Loss')
plt.plot(range(epochs), test_loss, label='Test Loss')
plt.legend(loc='upper right')
plt.title('Training and Test Loss')

plt.show()
```

4.2 Evaluate the model

```python
best_model.eval()
epoch_test_acc_e, epoch_test_loss_e = test(test_dl, best_model, loss_fun)
epoch_test_acc_e, epoch_test_loss_e
```

```python
# Check that this matches the best accuracy recorded during training
best_acc
```
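Re-evaluating `best_model` in eval mode should reproduce `best_acc`: BatchNorm then uses its stored running statistics, so each image's prediction is independent of the others, and the count of correct predictions does not depend on the order of the test samples. The loss is different if the test loader shuffles, because `test()` averages per-batch means and the batch composition changes between passes. A small pure-Python illustration with hypothetical per-sample losses, batch size 2, and a hypothetical helper `mean_of_batch_means`:

```python
def mean_of_batch_means(per_sample_losses, batch_size):
    """How test() averages the loss: mean over batches of each batch's mean loss."""
    batches = [per_sample_losses[i:i + batch_size]
               for i in range(0, len(per_sample_losses), batch_size)]
    return sum(sum(b) / len(b) for b in batches) / len(batches)

# Hypothetical per-sample losses: the same five samples in two different orders.
order_a = [1.0, 1.0, 1.0, 0.0, 0.0]
order_b = [0.0, 0.0, 1.0, 1.0, 1.0]
print(mean_of_batch_means(order_a, 2))     # 0.5
print(mean_of_batch_means(order_b, 2))     # 0.6666666666666666
print(sum(order_a) / 5, sum(order_b) / 5)  # 0.6 0.6: the per-sample mean is order-independent
```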
