有的图太大,节点太多,耗费内存,解决方案:将大图划分为多个子图,再将子图打包成mini-batch

导入数据集

import torch

from torch_geometric.datasets import Planetoid

from torch_geometric.transforms import NormalizeFeatures

dataset = Planetoid(root='data/Planetoid', name='PubMed', transform=NormalizeFeatures())

print()

print(f'Dataset: {dataset}:')

print('==================')

print(f'Number of graphs: {len(dataset)}')

print(f'Number of features: {dataset.num_features}')

print(f'Number of classes: {dataset.num_classes}')

data = dataset[0] # Get the first graph object.

print()

print(data)

print('===============================================================================================================')

# Gather some statistics about the graph.

print(f'Number of nodes: {data.num_nodes}')

print(f'Number of edges: {data.num_edges}')

print(f'Average node degree: {data.num_edges / data.num_nodes:.2f}')

print(f'Number of training nodes: {data.train_mask.sum()}')

print(f'Training node label rate: {int(data.train_mask.sum()) / data.num_nodes:.3f}')

print(f'Has isolated nodes: {data.has_isolated_nodes()}')

print(f'Has self-loops: {data.has_self_loops()}')

print(f'Is undirected: {data.is_undirected()}')这是一张大图,数据集中仅有一张图

Data(x=[19717, 500], edge_index=[2, 88648], y=[19717], train_mask=[19717], val_mask=[19717], test_mask=[19717])

这一张图中共有19717个点,有88648条边。

分割大图

总结:先切分成n个部分,再把这几个部分打包成mini-batch

from torch_geometric.loader import ClusterData, ClusterLoader

torch.manual_seed(12345)

cluster_data = ClusterData(data, num_parts=128) # 1. Create subgraphs.

train_loader = ClusterLoader(cluster_data, batch_size=32, shuffle=True) # 2. Stochastic partioning scheme.

print()

total_num_nodes = 0

for step, sub_data in enumerate(train_loader):

print(f'Step {step + 1}:')

print('=======')

print(f'Number of nodes in the current batch: {sub_data.num_nodes}')

print(sub_data)

print()

total_num_nodes += sub_data.num_nodes

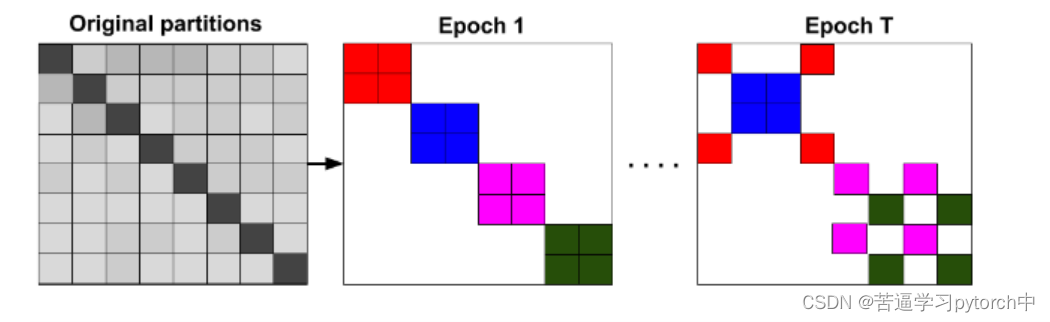

print(f'Iterated over {total_num_nodes} of {data.num_nodes} nodes!')Cluster-GCN 根据分割算法把大图分割成小的子图,然后GNN在小的子图上分别卷积,这样就可以避免邻域爆炸(neighborhood explosion)的问题

分割的原理:

不同的颜色代表不同子图的邻接矩阵,在划分为子图时,会移去一些子图与子图之间的链接,这可能会限制模型的性能,为解决这个问题,Cluster-GCN 在各个mini-batch之内的cluster之间合并添链接,也就是stochastic partitioning scheme。

这里用到了两个函数:

| ClusterData | 将一张大的图切分为num_parts个子图 |

| ClusterLoader | 执行stochastic partitioning scheme以生成mini—batch |

搭建GNN模型

import torch.nn.functional as F

from torch_geometric.nn import GCNConv

class GCN(torch.nn.Module):

def __init__(self, hidden_channels):

super(GCN, self).__init__()

torch.manual_seed(12345)

self.conv1 = GCNConv(dataset.num_node_features, hidden_channels)

self.conv2 = GCNConv(hidden_channels, dataset.num_classes)

def forward(self, x, edge_index):

x = self.conv1(x, edge_index)

x = x.relu()

x = F.dropout(x, p=0.5, training=self.training)

x = self.conv2(x, edge_index)

return x

model = GCN(hidden_channels=16)

print(model)训练

from IPython.display import Javascript

display(Javascript('''google.colab.output.setIframeHeight(0, true, {maxHeight: 300})'''))

model = GCN(hidden_channels=16)

optimizer = torch.optim.Adam(model.parameters(), lr=0.01, weight_decay=5e-4)

criterion = torch.nn.CrossEntropyLoss()

def train():

model.train()

for sub_data in train_loader: # Iterate over each mini-batch.

out = model(sub_data.x, sub_data.edge_index) # Perform a single forward pass.

loss = criterion(out[sub_data.train_mask], sub_data.y[sub_data.train_mask]) # Compute the loss solely based on the training nodes.

loss.backward() # Derive gradients.

optimizer.step() # Update parameters based on gradients.

optimizer.zero_grad() # Clear gradients.

def test():

model.eval()

out = model(data.x, data.edge_index)

pred = out.argmax(dim=1) # Use the class with highest probability.

accs = []

for mask in [data.train_mask, data.val_mask, data.test_mask]:

correct = pred[mask] == data.y[mask] # Check against ground-truth labels.

accs.append(int(correct.sum()) / int(mask.sum())) # Derive ratio of correct predictions.

return accs

for epoch in range(1, 51):

loss = train()

train_acc, val_acc, test_acc = test()

print(f'Epoch: {epoch:03d}, Train: {train_acc:.4f}, Val Acc: {val_acc:.4f}, Test Acc: {test_acc:.4f}')训练的过程其实跟图分类训练的过程很像,这里的训练集和测试集都是整张图,训练集是切分后的mini-batch,测试集是完整的一张图

3467

3467

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?