Level 1: Learning Sqoop Data Export Syntax

start-all.sh

schematool -dbType mysql -initSchema

Level 2: Exporting HDFS Data to MySQL

mysql -uroot -p123123 -h127.0.0.1

create database hdfsdb;

use hdfsdb;

create table fruit(fru_no int primary key,fru_name varchar(20),fru_price int,fru_address varchar(20));

exit;

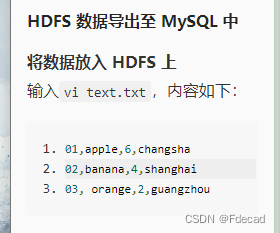

vi text.txt

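Enter the data required by the exercise. I am not typing it out here because copied text can differ from the expected data in ways that are invisible to the naked eye, the kind of problem that feels like black magic.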
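One way to hunt for such invisible differences is to look for trailing whitespace and Windows carriage returns. These are two common culprits, but that is an assumption: the original does not say what the difference actually was. The sketch below uses made-up sample rows in a temporary file rather than the real text.txt, counts the suspicious lines with grep, and writes a cleaned copy with sed.

```shell
# Hypothetical sample rows (not the exercise data): line 1 hides a trailing
# space and line 2 hides a Windows carriage return.
tmpfile=$(mktemp)
printf '1,apple,5,shandong \n2,pear,4,hebei\r\n3,grape,9,xinjiang\n' > "$tmpfile"

# Count lines that end in whitespace (a carriage return counts as whitespace).
bad_lines=$(grep -c '[[:space:]]$' "$tmpfile" || true)
echo "lines with trailing whitespace or CR: $bad_lines"

# Strip trailing whitespace and carriage returns into a cleaned copy.
cleanfile=$(mktemp)
sed 's/[[:space:]]*$//' "$tmpfile" > "$cleanfile"

# Re-run the same check on the cleaned copy; grep exits non-zero when it
# finds nothing, so "|| true" keeps the script going.
clean_bad=$(grep -c '[[:space:]]$' "$cleanfile" || true)
echo "after cleaning: $clean_bad"
```

To inspect the real file byte by byte, `od -c text.txt` prints every character, including `\r` and spaces.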

hadoop fs -put text.txt /user

sqoop export --connect jdbc:mysql://127.0.0.1:3306/hdfsdb --username root --password 123123 --table fruit --export-dir /user/text.txt -m 1

Level 3: Exporting Hive Data to MySQL

mysql -uroot -p123123 -h127.0.0.1

use hdfsdb;

create table project(pro_no int primary key,pro_name varchar(20),pro_teacher varchar(20));

exit;

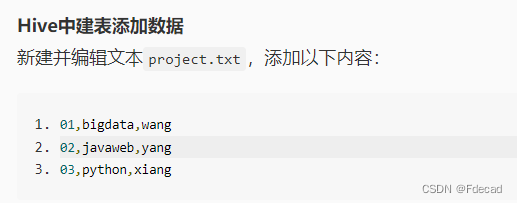

vi project.txt

:wq

hive

create table project (pro_no int,pro_name string, pro_teacher string) row format delimited fields terminated by ',' stored as textfile;

load data local inpath './project.txt' into table project;

exit;

sqoop export --connect jdbc:mysql://127.0.0.1:3306/hdfsdb --username root --password 123123 --table project --export-dir /opt/hive/warehouse/project -m 1 --input-fields-terminated-by ','
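If the export job fails, one thing worth checking is whether every row matches the three-column shape of the project table, since malformed records are a common cause of export failures. The sketch below is only an illustration under stated assumptions: the sample rows are hypothetical stand-ins for project.txt, and the check (exactly three comma-separated fields, with an integer first field) is inferred from the table definition above.

```shell
# Hypothetical rows standing in for project.txt (pro_no,pro_name,pro_teacher).
# Row 2 is missing a field and row 3 has a non-integer pro_no.
sample=$(mktemp)
printf '1,bigdata,zhang\n2,hadoop\nx3,hive,li\n' > "$sample"

# Print the line number of every row that does not have exactly three
# comma-separated fields or whose first field is not a plain integer.
bad_rows=$(awk -F',' 'NF != 3 || $1 !~ /^[0-9]+$/ { print NR }' "$sample")
echo "suspect rows:" $bad_rows
```

Running the same awk command against the real project.txt before loading it into Hive should print nothing when every row is well formed.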
