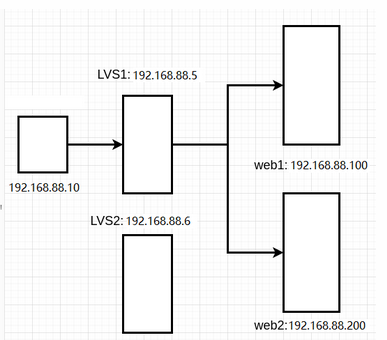

- Environment: LVS-DR mode

  - client1: eth0 -> 192.168.88.10
  - lvs1: eth0 -> 192.168.88.5
  - lvs2: eth0 -> 192.168.88.6
  - web1: eth0 -> 192.168.88.100
  - web2: eth0 -> 192.168.88.200
  - VIP: 192.168.88.15

- Environment preparation

```shell
# Stop keepalived on both web servers and uninstall it
[root@pubserver cluster]# vim 08-rm-keepalived.yml
---
- name: remove keepalived
  hosts: webservers
  tasks:
    - name: stop keepalived        # stop the service
      service:
        name: keepalived
        state: stopped

    - name: uninstall keepalived   # remove the package
      yum:
        name: keepalived
        state: absent

[root@pubserver cluster]# ansible-playbook 08-rm-keepalived.yml
```

```shell
# Create the new VM lvs2
[root@myhost ~]# vm clone lvs2

# Set the IP address of lvs2
[root@myhost ~]# vm setip lvs2 192.168.88.6

# Connect to it
[root@myhost ~]# ssh 192.168.88.6
```

Configure high availability and load balancing:

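1. Configure the network parameters
2. Configure yum
3. Configure nginx on the web servers
4. Configure the kernel parameters on the web servers
5. Configure the VIP on the web servers
6. Install keepalived and ipvsadm on the LVS nodes
7. Edit the keepalived configuration file
8. Start the services
9. Verify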

- Configure the VIP on lo on both web servers

- Configure the kernel parameters on both web servers
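The two steps above are not spelled out in this section. As a minimal sketch, assuming the usual LVS-DR settings (the real servers must not answer ARP for the VIP, and must never announce it from eth0), the kernel parameters could be written by a helper like the one below. The function name `write_rs_sysctl` and the file `/etc/sysctl.d/lvs-dr.conf` in the comments are hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: write the ARP-related kernel parameters commonly used on
# LVS-DR real servers into the file given as $1.
#   arp_ignore=1   reply to ARP only if the target IP is configured on the incoming
#                  interface, so web1/web2 do not answer ARP for the VIP held on lo
#   arp_announce=2 use the best local address of the outgoing interface as the ARP
#                  source, so the VIP is never advertised from eth0
write_rs_sysctl() {
  local target=$1
  cat > "$target" <<'EOF'
net.ipv4.conf.all.arp_ignore = 1
net.ipv4.conf.lo.arp_ignore = 1
net.ipv4.conf.all.arp_announce = 2
net.ipv4.conf.lo.arp_announce = 2
EOF
}

# Example use on web1/web2 as root (not run here):
#   write_rs_sysctl /etc/sysctl.d/lvs-dr.conf    # hypothetical file name
#   sysctl -p /etc/sysctl.d/lvs-dr.conf
#   ip addr add 192.168.88.15/32 dev lo          # VIP on lo; /32 creates no subnet route
# Note: an address added with "ip addr add" does not survive a reboot.
```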

- Remove the VIP from eth0 on lvs1, because keepalived will take over the VIP

```shell
[root@pubserver cluster]# vim 09-del-lvs1-vip.yml
---
- name: del lvs1 vip
  hosts: lvs1
  tasks:
    - name: rm vip
      lineinfile:                  # delete matching lines from the given file
        path: /etc/sysconfig/network-scripts/ifcfg-eth0
        regexp: 'IPADDR2='         # regular expression to match
        state: absent
      notify: restart system

  handlers:
    - name: restart system
      shell: reboot

[root@pubserver cluster]# ansible-playbook 09-del-lvs1-vip.yml
```
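`lineinfile` with `regexp` and `state: absent` removes every line of the file that matches the regular expression. To see what happens to ifcfg-eth0 before rebooting, here is a rough shell equivalent meant to be run against a throwaway copy. The function name is hypothetical, and `grep -E` (POSIX ERE) stands in for Ansible's Python regular expressions; the two behave the same for a plain pattern like `IPADDR2=`.

```shell
#!/usr/bin/env bash
# remove_matching_lines FILE PATTERN: delete every line of FILE that matches the
# extended regular expression PATTERN, roughly what lineinfile does with
# regexp + state: absent.
remove_matching_lines() {
  local file=$1 pattern=$2 tmp
  [[ -f $file ]] || return 1                # refuse to create a missing file
  tmp=$(mktemp)
  grep -Ev -e "$pattern" "$file" > "$tmp"   # keep only the non-matching lines
  cat "$tmp" > "$file"                      # write back in place; keeps the file's owner and mode
  rm -f "$tmp"
}

# Example against a throwaway copy (hypothetical path):
#   cp /etc/sysconfig/network-scripts/ifcfg-eth0 /tmp/ifcfg-eth0.test
#   remove_matching_lines /tmp/ifcfg-eth0.test 'IPADDR2='
```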

```shell
# Check the result
[root@lvs1 ~]# ip a s eth0 | grep 88
    inet 192.168.88.5/24 brd 192.168.88.255 scope global noprefixroute eth0
```

- Delete the LVS rules on lvs1, because keepalived will create them

```shell
[root@lvs1 ~]# ipvsadm -Ln                      # list the rules
[root@lvs1 ~]# ipvsadm -D -t 192.168.88.15:80   # delete the virtual service
```

- Configure keepalived on the LVS nodes

```shell
# Add lvs2 to the inventory file
[root@pubserver cluster]# vim inventory
...omitted...
[lb]
lvs1 ansible_host=192.168.88.5
lvs2 ansible_host=192.168.88.6
...omitted...
```

```shell
# Install the packages
[root@pubserver cluster]# cp 01-upload-repo.yml 10-upload-repo.yml
# Contents of 10-upload-repo.yml:
---
- name: config repos.d
  hosts: lb
  tasks:
    - name: delete repos.d
      file:
        path: /etc/yum.repos.d
        state: absent

    - name: create repos.d
      file:
        path: /etc/yum.repos.d
        state: directory
        mode: '0755'

    - name: upload local88
      copy:
        src: files/local88.repo
        dest: /etc/yum.repos.d/

[root@pubserver cluster]# ansible-playbook 10-upload-repo.yml
```

```shell
[root@pubserver cluster]# vim 11-install-lvs2.yml
---
- name: install lvs keepalived
  hosts: lb
  tasks:
    - name: install pkgs           # install the packages
      yum:
        name: ipvsadm,keepalived
        state: present

[root@pubserver cluster]# ansible-playbook 11-install-lvs2.yml
```

```shell
[root@lvs1 ~]# vim /etc/keepalived/keepalived.conf
# (numbers on the left are line numbers in the file)
12    router_id lvs1        # a unique ID for this host
13    vrrp_iptables         # automatically add iptables rules that allow the traffic
... ...
20 vrrp_instance VI_1 {
21     state MASTER
22     interface eth0
23     virtual_router_id 51
24     priority 100
25     advert_int 1
26     authentication {
27         auth_type PASS
28         auth_pass 1111
29     }
30     virtual_ipaddress {
31         192.168.88.15     # the VIP; must match the VIP on the web servers
32     }
33 }
   # The following configures the LVS rules through keepalived
35 virtual_server 192.168.88.15 80 {   # virtual server address and port
36     delay_loop 6                    # run the health checks every 6 seconds
37     lb_algo wrr                     # scheduling algorithm: weighted round robin
38     lb_kind DR                      # forwarding mode: DR
39     persistence_timeout 50          # same client goes to the same server for 50 seconds
40     protocol TCP                    # protocol: TCP
41
42     real_server 192.168.88.100 80 { # a real server
43         weight 1                    # weight
44         TCP_CHECK {                 # health-check the real server over TCP
45             connect_timeout 3       # connection timeout: 3 seconds
46             nb_get_retry 3          # the server is considered down after 3 failed attempts
47             delay_before_retry 3    # wait 3 seconds between two attempts
48         }
49     }
50     real_server 192.168.88.200 80 {
51         weight 2
52         TCP_CHECK {
53             connect_timeout 3
54             nb_get_retry 3
55             delay_before_retry 3
56         }
57     }
58 }
   # Delete everything below this point
```
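Conceptually, each `TCP_CHECK` block asks keepalived to open a TCP connection to the real server's port and to treat the server as failed when the attempts keep failing. The sketch below imitates that with bash's `/dev/tcp` redirection and coreutils `timeout`. It is a simplified model, not keepalived's implementation, and keepalived's exact retry counting may differ. The function names are hypothetical; the arguments mirror `connect_timeout`, `nb_get_retry` and `delay_before_retry`.

```shell
#!/usr/bin/env bash
# tcp_check HOST PORT TIMEOUT: succeed if a TCP connection opens within TIMEOUT seconds.
tcp_check() {
  # Inside "bash -c", $0 and $1 are the host and port passed after the script string.
  timeout "$3" bash -c 'exec 3<>"/dev/tcp/$0/$1"' "$1" "$2" 2>/dev/null
}

# check_real_server HOST PORT CONNECT_TIMEOUT RETRIES DELAY
# Returns 0 (healthy) as soon as one attempt succeeds, 1 after RETRIES failed attempts.
check_real_server() {
  local host=$1 port=$2 connect_timeout=$3 retries=$4 delay=$5 attempt
  for (( attempt = 1; attempt <= retries; attempt++ )); do
    if tcp_check "$host" "$port" "$connect_timeout"; then
      return 0
    fi
    if (( attempt < retries )); then
      sleep "$delay"              # like delay_before_retry
    fi
  done
  return 1
}

# Example, mirroring the values above (keepalived repeats its check every delay_loop seconds):
#   check_real_server 192.168.88.100 80 3 3 3 && echo "web1 up" || echo "web1 down"
```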

```shell
# Start the keepalived service
[root@lvs1 ~]# systemctl start keepalived

# Verify
[root@lvs1 ~]# ip a s eth0 | grep 88
    inet 192.168.88.5/24 brd 192.168.88.255 scope global noprefixroute eth0
    inet 192.168.88.15/32 scope global eth0
[root@lvs1 ~]# ipvsadm -Ln    # the rules now appear
IP Virtual Server version 1.2.1 (size=4096)
Prot LocalAddress:Port Scheduler Flags
  -> RemoteAddress:Port           Forward Weight ActiveConn InActConn
TCP  192.168.88.15:80 wrr persistent 50
  -> 192.168.88.100:80            Route   1      0          0
  -> 192.168.88.200:80            Route   2      0          0
```

```shell
# Test from the client
[root@client1 ~]# for i in {1..6}; do curl http://192.168.88.15/; done
Welcome from web2
Welcome from web2
Welcome from web2
Welcome from web2
Welcome from web2
Welcome from web2
```
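All six requests went to web2 because of `persistence_timeout 50`. The following is a simplified model of that behaviour, assuming the 50-second window is counted from the client's previous request; real LVS persistence templates involve more rules. `pick_server` and `PICKED` are hypothetical names, and the scheduler's choice is passed in rather than computed.

```shell
#!/usr/bin/env bash
# Simplified model of persistence_timeout (assumption: the window is counted
# from the client's previous request; real LVS persistence templates have more rules).
declare -A assigned_server=() last_seen=()
PICKED=""

# pick_server CLIENT NOW TIMEOUT SCHEDULED
#   CLIENT    client IP address
#   NOW       current time in seconds
#   TIMEOUT   persistence window in seconds (50 in the configuration above)
#   SCHEDULED server the scheduler (wrr) would choose for a new client
# Sets PICKED to the chosen real server and also prints it.
pick_server() {
  local client=$1 now=$2 timeout=$3 scheduled=$4
  local previous=${assigned_server[$client]:-}
  local seen=${last_seen[$client]:-0}
  if [[ -n $previous ]] && (( now - seen < timeout )); then
    PICKED=$previous                  # still inside the window: same real server
  else
    PICKED=$scheduled                 # new client or window expired: use the scheduler
    assigned_server[$client]=$PICKED
  fi
  last_seen[$client]=$now
  echo "$PICKED"
}
```

For example, `pick_server 192.168.88.10 0 50 web2` followed by `pick_server 192.168.88.10 10 50 web1` yields web2 both times, because the second request falls inside the 50-second window. Call it directly rather than inside `$(...)`, since a subshell would discard the remembered assignments.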

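```shell
# For efficiency, requests from the same client within 50 seconds are dispatched to
# the same server. To see the round-robin effect from a single client, comment out
# the corresponding line in the configuration file and restart keepalived.
[root@lvs1 ~]# vim +39 /etc/keepalived/keepalived.conf
...omitted...
# persistence_timeout 50
...omitted...
[root@lvs1 ~]# systemctl restart keepalived.service

# Verify from the client
[root@client1 ~]# for i in {1..6}; do curl http://192.168.88.15/; done
Welcome from web2
Welcome from web1
Welcome from web2
Welcome from web2
Welcome from web1
Welcome from web2
```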
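With persistence disabled, the replies follow the 1:2 weights. The loop below is a sketch of the classic weighted round-robin selection described in the LVS documentation: on every pass over the server list the current weight drops by the gcd of all weights, and a server is picked when its weight is at least the current weight. Assumption: servers are visited in the order ipvsadm lists them (web1, then web2). With weights 1 and 2 the sketch prints web2, web1, web2, web2, web1, web2, the same order as the client output above; on a live system the starting point can differ, so only the 1:2 ratio over a full cycle should be relied on.

```shell
#!/usr/bin/env bash
# Sketch of the classic LVS weighted round-robin (wrr) selection loop.
# Assumption: real servers are tried in the order given (web1, then web2).

# gcd A B: greatest common divisor (gcd 0 B = B)
gcd() {
  local a=$1 b=$2 t
  while (( b != 0 )); do
    t=$(( a % b )); a=$b; b=$t
  done
  echo "$a"
}

# wrr_sequence COUNT NAME:WEIGHT ...: print the next COUNT picks, one per line
wrr_sequence() {
  local count=$1; shift
  local -a names=() weights=()
  local pair w
  for pair in "$@"; do
    names+=("${pair%%:*}")
    weights+=("${pair##*:}")
  done
  local n=${#names[@]} max=0 g=0
  for w in "${weights[@]}"; do
    if (( w > max )); then max=$w; fi
    g=$(gcd "$g" "$w")
  done
  if (( max == 0 )); then return 1; fi     # every weight is 0: nothing can be scheduled
  local i=-1 cw=0 picked=0
  while (( picked < count )); do
    i=$(( (i + 1) % n ))
    if (( i == 0 )); then                  # a new pass over the list:
      cw=$(( cw - g ))                     # lower the current weight by the gcd
      if (( cw <= 0 )); then cw=$max; fi   # and wrap around to the maximum weight
    fi
    if (( weights[i] >= cw )); then        # server is eligible at this weight level
      echo "${names[i]}"
      picked=$(( picked + 1 ))
    fi
  done
}

wrr_sequence 6 web1:1 web2:2
```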

```shell
# Configure lvs2
[root@lvs1 ~]# scp /etc/keepalived/keepalived.conf 192.168.88.6:/etc/keepalived/
[root@lvs2 ~]# vim /etc/keepalived/keepalived.conf
12    router_id lvs2
21     state BACKUP
24     priority 80
[root@lvs2 ~]# systemctl start keepalived
```
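Only three lines differ between the two nodes. Instead of editing by hand, the BACKUP configuration could be derived from the MASTER's with `sed`, as in this sketch (the function name and the temporary path are hypothetical). Everything else stays identical: both nodes must share `virtual_router_id` and the `authentication` settings to act as one VRRP group, and the same `virtual_server` section so that either node can serve the VIP.

```shell
#!/usr/bin/env bash
# derive_backup_conf MASTER_CONF OUTPUT: copy the MASTER's keepalived.conf to OUTPUT,
# changing only the three per-node settings used in this article.
derive_backup_conf() {
  local src=$1 dst=$2
  sed -e 's/^\([[:space:]]*\)router_id lvs1[[:space:]]*$/\1router_id lvs2/' \
      -e 's/^\([[:space:]]*\)state MASTER[[:space:]]*$/\1state BACKUP/' \
      -e 's/^\([[:space:]]*\)priority 100[[:space:]]*$/\1priority 80/' \
      "$src" > "$dst"
}

# Example on lvs1 (hypothetical temporary path):
#   derive_backup_conf /etc/keepalived/keepalived.conf /tmp/keepalived-lvs2.conf
#   scp /tmp/keepalived-lvs2.conf 192.168.88.6:/etc/keepalived/keepalived.conf
```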

```shell
[root@lvs2 ~]# ipvsadm -Ln    # the rules appear
IP Virtual Server version 1.2.1 (size=4096)
Prot LocalAddress:Port Scheduler Flags
  -> RemoteAddress:Port           Forward Weight ActiveConn InActConn
TCP  192.168.88.15:80 wrr
  -> 192.168.88.100:80            Route   1      0          0
  -> 192.168.88.200:80            Route   2      0          0
```

- Verification

```shell
# 1. Verify the real-server health checks
[root@web1 ~]# systemctl stop nginx
[root@lvs1 ~]# ipvsadm -Ln    # web1 disappears from the rules
[root@lvs2 ~]# ipvsadm -Ln
[root@web1 ~]# systemctl start nginx
[root@lvs1 ~]# ipvsadm -Ln    # web1 reappears in the rules
[root@lvs2 ~]# ipvsadm -Ln
```
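Instead of reading the `ipvsadm -Ln` table by eye, the check can be scripted. This sketch (hypothetical function names) prints the real servers from `ipvsadm -Ln` output on stdin: lines whose first field is `->` followed by an address are real servers, and `Route` in the Forward column is DR mode. Assumption: a single virtual service, as in this setup.

```shell
#!/usr/bin/env bash
# list_real_servers: read `ipvsadm -Ln` output on stdin and print each real
# server (ADDRESS:PORT), one per line. The column header is skipped because its
# second field is "RemoteAddress:Port", not an address.
list_real_servers() {
  awk '$1 == "->" && $2 ~ /^[0-9]/ { print $2 }'
}

# has_real_server ADDRESS:PORT: succeed if that real server is in the table on stdin.
has_real_server() {
  list_real_servers | grep -qxF -- "$1"
}

# Example on lvs1 or lvs2 (needs root and ipvsadm):
#   ipvsadm -Ln | has_real_server 192.168.88.100:80 && echo "web1 is in the pool"
```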

```shell
# 2. Verify LVS high availability
[root@lvs1 ~]# shutdown -h now       # power off lvs1
[root@lvs2 ~]# ip a s | grep 88      # the VIP is now on lvs2
    inet 192.168.88.6/24 brd 192.168.88.255 scope global noprefixroute eth0
    inet 192.168.88.15/32 scope global eth0

# The VIP is still reachable from the client
[root@client1 ~]# for i in {1..6}; do curl http://192.168.88.15/; done
Welcome from web1
Welcome from web2
Welcome from web2
Welcome from web1
Welcome from web2
Welcome from web2
```
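The failover follows VRRP's priority rule: among the nodes still sending advertisements, the one with the highest priority becomes MASTER and holds the VIP. This toy function models only that rule (no tie-breaking by IP address, no timers), and its `name:priority:state` input format is made up for the example. With keepalived's default preemption, lvs1 (priority 100) would take the VIP back once it boots again.

```shell
#!/usr/bin/env bash
# elect_master NAME:PRIORITY:STATE ...   (STATE is "up" or "down")
# Toy model of VRRP election: print the running node with the highest priority.
# Not modelled: equal-priority tie-breaking by IP address, advertisement timers.
elect_master() {
  local best="" best_prio=-1 entry name prio state
  for entry in "$@"; do
    IFS=: read -r name prio state <<< "$entry"
    if [[ $state == up ]] && (( prio > best_prio )); then
      best=$name
      best_prio=$prio
    fi
  done
  [[ -n $best ]] && echo "$best"
}

elect_master lvs1:100:up lvs2:80:up     # both running: lvs1 holds the VIP
elect_master lvs1:100:down lvs2:80:up   # lvs1 shut down: lvs2 takes over
```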
