Kubernetes Cluster

This guide installs Kubernetes 1.29.2 with kubeadm.

Preface:

K8s startup sequence: Linux > Docker > cri-docker > kubelet > AS/CM/SCH

- AS apiserver

- CM controller-manager

- SCH scheduler

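Installing with kubeadm

The components run as containers.

Advantages

- Simple

- Self-healing

Disadvantages

- The deployment hides some details, which makes the cluster harder to understand

Binary installation

The components run as system processes.

Advantages

- The cluster can be configured more flexibly

Disadvantages

- Harder to understand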

一、准备工作

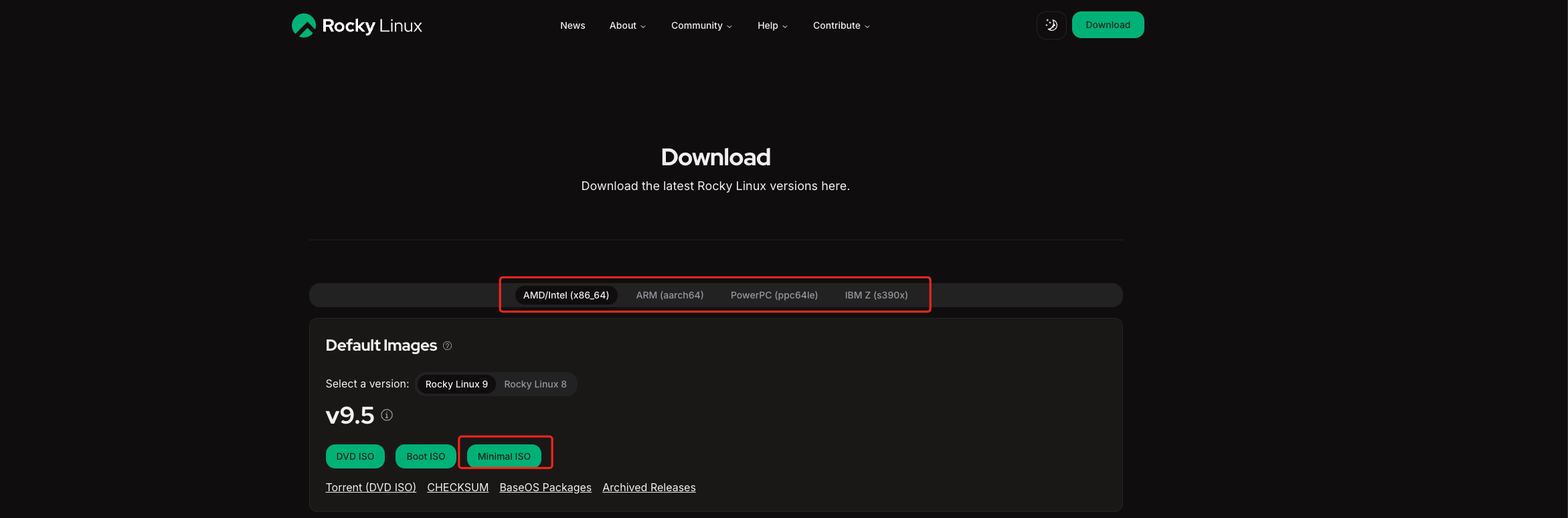

下载rockylinux9镜像

官方地址

https://rockylinux.org/zh-CN/download

根据系统架构选择镜像

阿里云镜像站地址

https://mirrors.aliyun.com/rockylinux/9/isos/x86_64/?spm=a2c6h.25603864.0.0.29696621VzJej5

二、系统配置

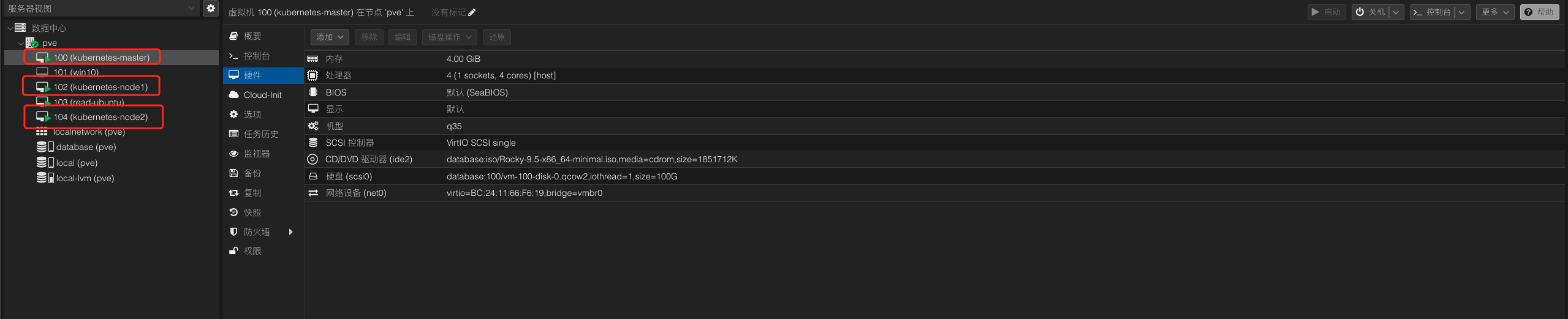

系统核心数:4c 内存:4G 磁盘:100G 一主两从架构

设备掩码 255.255.255.0

设备网关 192.168.68.1

设备IP master 192.168.68.160 node1 192.168.68.161 node2 192.168.68.162

三、环境初始化

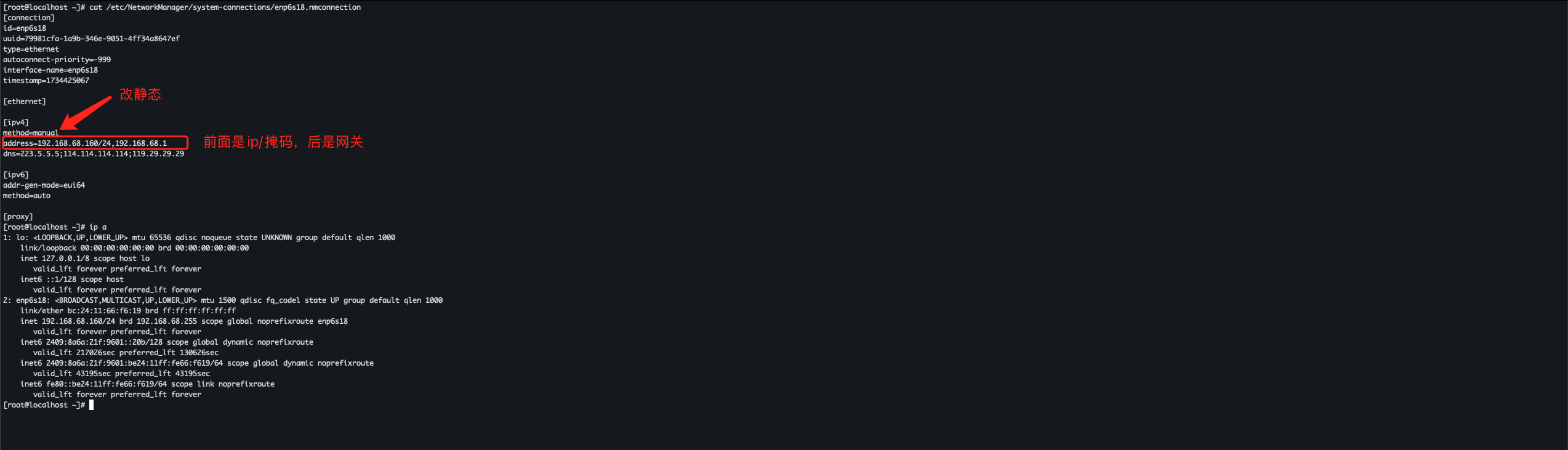

固定所有设备的IP地址,配置仅需要修改ipv4块

# 网卡配置

# cat /etc/NetworkManager/system-connections/enp6s18.nmconnection

[ipv4]

method=manual

address=192.168.68.160/24,192.168.68.1

dns=223.5.5.5;119.29.29.29
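Only the address differs between the three machines. Below is a minimal sketch that prints the [ipv4] section for one host. It assumes the /24 prefix, gateway and DNS servers listed above, and the function name `render_ipv4_block` is hypothetical. It writes the numbered key `address1=`, which is the form NetworkManager uses when it saves a profile itself.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the [ipv4] section of a NetworkManager keyfile.
# Argument 1 is the host IP without prefix; prefix, gateway and DNS default
# to the values used in this guide.
render_ipv4_block() {
  local ip=$1 prefix=${2:-24} gateway=${3:-192.168.68.1}
  local dns=${4:-"223.5.5.5;119.29.29.29"}
  printf '[ipv4]\n'
  printf 'method=manual\n'
  printf 'address1=%s/%s,%s\n' "$ip" "$prefix" "$gateway"
  printf 'dns=%s\n' "$dns"
}
```

For node01, `render_ipv4_block 192.168.68.161` prints the block to paste over the existing [ipv4] section. NetworkManager picks up the edited profile with `nmcli connection reload`, and `nmcli connection up enp6s18` activates it. This assumes the connection is named after the file, which you can check with `nmcli connection show`.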

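Configure the system package mirror

https://developer.aliyun.com/mirror/rockylinux?spm=a2c6h.13651102.0.0.47731b11u4haJL # Alibaba Cloud mirror for Rocky Linux

On Rocky Linux 9 the repo file names are lowercase (rocky.repo, rocky-addons.repo and so on), unlike the Rocky-*.repo files of Rocky Linux 8:

sed -e 's|^mirrorlist=|#mirrorlist=|g' \
-e 's|^#baseurl=http://dl.rockylinux.org/$contentdir|baseurl=https://mirrors.aliyun.com/rockylinux|g' \
-i.bak \
/etc/yum.repos.d/rocky*.repo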

dnf makecache
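You run this sed edit on every machine, so you can wrap it in a function and check the result afterwards. The sketch below assumes the Rocky Linux 9 file names rocky*.repo. `use_aliyun_mirror` and `remaining_mirrorlists` are hypothetical names. The directory argument exists only so you can try the edit on a copy first.

```shell
#!/usr/bin/env bash
# Hypothetical helper: apply the Alibaba Cloud mirror substitution to every
# rocky*.repo file in a directory (default /etc/yum.repos.d), keeping .bak copies.
use_aliyun_mirror() {
  local dir=${1:-/etc/yum.repos.d}
  sed -e 's|^mirrorlist=|#mirrorlist=|g' \
      -e 's|^#baseurl=http://dl.rockylinux.org/$contentdir|baseurl=https://mirrors.aliyun.com/rockylinux|g' \
      -i.bak "$dir"/rocky*.repo
}

# Hypothetical check: print any repo line that still uses a mirrorlist.
# No output (and exit status 1 from grep) means every repo was switched.
remaining_mirrorlists() {
  grep -H '^mirrorlist=' "${1:-/etc/yum.repos.d}"/rocky*.repo
}
```

Usage: `use_aliyun_mirror && dnf makecache`. If `remaining_mirrorlists` then prints nothing, every repo points at the mirror.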

Disable the firewalld firewall and use iptables instead

systemctl stop firewalld

systemctl disable firewalld

yum -y install iptables-services

systemctl start iptables

iptables -F

systemctl enable iptables

service iptables save

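Disable SELinux (setenforce 0 switches to permissive mode immediately; the config file change takes effect after a reboot)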

setenforce 0

sed -i "s/SELINUX=enforcing/SELINUX=disabled/g" /etc/selinux/config
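The sed above only matches when the file currently reads SELINUX=enforcing. If the file already says permissive, nothing changes. Here is a sketch that rewrites whatever value is there; `set_selinux_mode` is a hypothetical name.

```shell
#!/usr/bin/env bash
# Hypothetical helper: set the SELINUX= line of an SELinux config file to the
# requested mode, whatever its current value. SELINUXTYPE= is not touched
# because the pattern requires "=" directly after "SELINUX".
set_selinux_mode() {
  local mode=$1 file=${2:-/etc/selinux/config}
  sed -i "s/^SELINUX=.*/SELINUX=${mode}/" "$file"
}
```

Usage: `set_selinux_mode disabled`. Right after `setenforce 0`, `getenforce` reports Permissive. The config file value applies from the next boot.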

Disable swap (by default the kubelet refuses to start while swap is enabled)

swapoff -a

sed -i 's:/dev/mapper/rl-swap:#/dev/mapper/rl-swap:g' /etc/fstab
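The sed above depends on the swap volume being named /dev/mapper/rl-swap, which is the Rocky Linux LVM default. The sketch below instead comments out any active fstab entry whose filesystem type is swap. `comment_out_swap` is a hypothetical name, and it keeps a .bak copy of the file.

```shell
#!/usr/bin/env bash
# Hypothetical helper: comment out every uncommented fstab line whose
# filesystem type is swap, whatever the device name (rl-swap, UUID=..., etc.).
# Lines that already start with # are left unchanged.
comment_out_swap() {
  local fstab=${1:-/etc/fstab}
  sed -i.bak '/^[^#]/{/[[:space:]]swap[[:space:]]/s/^/#/;}' "$fstab"
}
```

After `swapoff -a` and `comment_out_swap`, `swapon --show` should print nothing.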

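Set the hostname (run the matching command on each machine)

hostnamectl set-hostname k8s-master # master

hostnamectl set-hostname k8s-node01 # node01

hostnamectl set-hostname k8s-node02 # node02

bash # start a new shell so the prompt shows the new hostname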

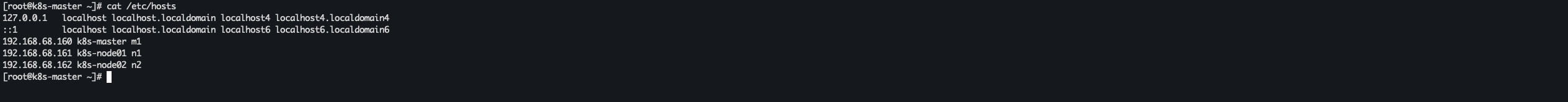

Configure local hostname resolution

vi /etc/hosts

192.168.68.160 k8s-master m1

192.168.68.161 k8s-node01 n1

192.168.68.162 k8s-node02 n2
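Editing /etc/hosts by hand on three machines is easy to get wrong, especially when you repeat it. Here is a sketch of an idempotent append, assuming the first field of each line is the IP. `add_host_entry` is a hypothetical name.

```shell
#!/usr/bin/env bash
# Hypothetical helper: append "IP NAME..." to a hosts file only if no line
# already starts with that IP, so the script can be re-run safely.
add_host_entry() {
  local file=$1 ip=$2
  shift 2
  # awk exits 0 if some line's first field equals the IP, 1 otherwise
  if ! awk -v ip="$ip" '$1 == ip { found = 1 } END { exit !found }' "$file"; then
    printf '%s %s\n' "$ip" "$*" >> "$file"
  fi
}
```

Usage: `add_host_entry /etc/hosts 192.168.68.161 k8s-node01 n1`, and the same for the other two entries. Running it a second time adds nothing.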

Install ipvsadm (the IPVS management tool)

yum install -y ipvsadm

Enable IP forwarding

echo 'net.ipv4.ip_forward=1' >> /etc/sysctl.conf

sysctl -p

Load the bridge netfilter module

yum install -y epel-release

yum install -y bridge-utils

modprobe br_netfilter

echo 'br_netfilter' >> /etc/modules-load.d/bridge.conf

echo 'net.bridge.bridge-nf-call-iptables=1' >> /etc/sysctl.conf

echo 'net.bridge.bridge-nf-call-ip6tables=1' >> /etc/sysctl.conf

sysctl -p
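The commands above append to /etc/sysctl.conf with `>>`, so running them twice leaves duplicate lines. An alternative sketch writes the same settings to drop-in files, which `sysctl --system` and systemd-modules-load read. The file name k8s.conf and the function name `write_k8s_kernel_conf` are assumptions.

```shell
#!/usr/bin/env bash
# Hypothetical helper: write the kernel settings used in this guide to drop-in
# files. It overwrites (>) instead of appending (>>), so it is idempotent.
# The root directory is a parameter so the function can be tried on a scratch dir.
write_k8s_kernel_conf() {
  local root=${1:-}
  mkdir -p "$root/etc/sysctl.d" "$root/etc/modules-load.d"
  printf 'br_netfilter\n' > "$root/etc/modules-load.d/k8s.conf"
  cat > "$root/etc/sysctl.d/k8s.conf" <<'EOF'
net.ipv4.ip_forward = 1
net.bridge.bridge-nf-call-iptables = 1
net.bridge.bridge-nf-call-ip6tables = 1
EOF
}
```

On a node, run `write_k8s_kernel_conf && modprobe br_netfilter && sysctl --system`. Then `sysctl net.ipv4.ip_forward net.bridge.bridge-nf-call-iptables` should show both set to 1.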

Install Docker

dnf config-manager --add-repo https://mirrors.ustc.edu.cn/docker-ce/linux/centos/docker-ce.repo

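# Switch the repo file to the USTC mirror (-i edits the file in place)

sed -i -e 's|download.docker.com|mirrors.ustc.edu.cn/docker-ce|g' /etc/yum.repos.d/docker-ce.repo

# Install Docker

yum -y install docker-ce

# Or use the Alibaba Cloud mirror instead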

yum install -y yum-utils device-mapper-persistent-data lvm2

yum-config-manager --add-repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

sed -i 's+download.docker.com+mirrors.aliyun.com/docker-ce+' /etc/yum.repos.d/docker-ce.repo

yum -y install docker-ce

Configure Docker

mkdir -p /etc/docker

cat > /etc/docker/daemon.json <<EOF

{

"data-root": "/data/docker",

"exec-opts": ["native.cgroupdriver=systemd"],

"log-driver": "json-file",

"log-opts": {

"max-size": "100m",

"max-file": "100"

},

"insecure-registries": ["harbor.xinxainghf.com"],

"registry-mirrors": ["https://proxy.1panel.live","https://docker.1panel.top","https://docker.m.daocloud.io","https://docker.1ms.run","https://docker.ketches.cn"]

}

EOF

mkdir -p /etc/systemd/system/docker.service.d

systemctl daemon-reload && systemctl restart docker && systemctl enable docker
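A typo in daemon.json stops dockerd from starting, so it is worth validating the file before the restart. Here is a sketch using Python's json.tool module. It assumes python3 is installed, which it usually is on Rocky Linux 9. `check_daemon_json` is a hypothetical name.

```shell
#!/usr/bin/env bash
# Hypothetical helper: verify that a Docker daemon.json file is valid JSON.
# Prints "ok: FILE" on success; prints an error and returns 1 otherwise.
check_daemon_json() {
  local file=${1:-/etc/docker/daemon.json}
  if python3 -m json.tool "$file" > /dev/null 2>&1; then
    echo "ok: $file"
  else
    echo "invalid JSON: $file" >&2
    return 1
  fi
}
```

Usage: `check_daemon_json && systemctl restart docker`. Afterwards, `docker info --format '{{.CgroupDriver}}'` should print systemd.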

Reboot the machine

reboot # taking a snapshot first is recommended, so you can roll back the environment if a later step goes wrong

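Install cri-dockerd

yum -y install wget

wget https://github.com/Mirantis/cri-dockerd/releases/download/v0.3.9/cri-dockerd-0.3.9.amd64.tgz

tar -xf cri-dockerd-0.3.9.amd64.tgz # extracts to ./cri-dockerd/

# Note: the scp commands below only work if the aliases n1 and n2 are in /etc/hosts exactly as configured above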

cp cri-dockerd/cri-dockerd /usr/bin/

scp cri-dockerd/cri-dockerd root@n1:/usr/bin/cri-dockerd

scp cri-dockerd/cri-dockerd root@n2:/usr/bin/cri-dockerd

Configure the cri-docker service (run this block on the master only; the unit files are then copied to the nodes with scp)

cat <<"EOF" > /usr/lib/systemd/system/cri-docker.service

[Unit]

Description=CRI Interface for Docker Application Container Engine

Documentation=https://docs.mirantis.com

After=network-online.target firewalld.service docker.service

Wants=network-online.target

Requires=cri-docker.socket

[Service]

Type=notify

ExecStart=/usr/bin/cri-dockerd --network-plugin=cni --pod-infra-container-image=registry.aliyuncs.com/google_containers/pause:3.8

ExecReload=/bin/kill -s HUP $MAINPID

TimeoutSec=0

RestartSec=2

Restart=always

StartLimitBurst=3

StartLimitInterval=60s

LimitNOFILE=infinity

LimitNPROC=infinity

LimitCORE=infinity

TasksMax=infinity

Delegate=yes

KillMode=process

[Install]

WantedBy=multi-user.target

EOF

cat <<"EOF" > /usr/lib/systemd/system/cri-docker.socket

[Unit]

Description=CRI Docker Socket for the API

PartOf=cri-docker.service

[Socket]

ListenStream=%t/cri-dockerd.sock

SocketMode=0660

SocketUser=root

SocketGroup=docker

[Install]

WantedBy=sockets.target

EOF

scp /usr/lib/systemd/system/cri-docker.service root@n1:/usr/lib/systemd/system/cri-docker.service

scp /usr/lib/systemd/system/cri-docker.service root@n2:/usr/lib/systemd/system/cri-docker.service

scp /usr/lib/systemd/system/cri-docker.socket root@n1:/usr/lib/systemd/system/cri-docker.socket

scp /usr/lib/systemd/system/cri-docker.socket root@n2:/usr/lib/systemd/system/cri-docker.socket
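The binary and both unit files go to the same paths on n1 and n2. Here is a sketch of a loop that does this for any list of hosts, assuming root SSH access to the n1/n2 aliases from /etc/hosts. `distribute` and the DRY_RUN switch are hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: copy one file to the same path on several hosts.
# If DRY_RUN is set to any non-empty value, the scp commands are printed
# instead of executed.
distribute() {
  local path=$1 host
  shift
  for host in "$@"; do
    ${DRY_RUN:+echo} scp "$path" "root@${host}:${path}"
  done
}
```

Usage: `for f in /usr/bin/cri-dockerd /usr/lib/systemd/system/cri-docker.service /usr/lib/systemd/system/cri-docker.socket; do distribute "$f" n1 n2; done`.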

Start cri-docker (on every node)

systemctl daemon-reload

systemctl enable cri-docker

systemctl start cri-docker

systemctl is-active cri-docker

# "active" in the output means it started successfully
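kubeadm talks to cri-dockerd through its socket, so besides `systemctl is-active` it helps to confirm that the socket file exists. Here is a sketch that polls for it. It assumes the path /var/run/cri-dockerd.sock, which is the one passed to --cri-socket later. `wait_for_socket` is a hypothetical name.

```shell
#!/usr/bin/env bash
# Hypothetical helper: poll once per second until a Unix socket exists.
# Prints "socket ready: PATH" on success; returns 1 after TIMEOUT seconds.
wait_for_socket() {
  local sock=${1:-/var/run/cri-dockerd.sock} timeout=${2:-30} waited=0
  until [ -S "$sock" ]; do
    if [ "$waited" -ge "$timeout" ]; then
      echo "timed out waiting for $sock" >&2
      return 1
    fi
    sleep 1
    waited=$((waited + 1))
  done
  echo "socket ready: $sock"
}
```

Usage: `wait_for_socket /var/run/cri-dockerd.sock 30`.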

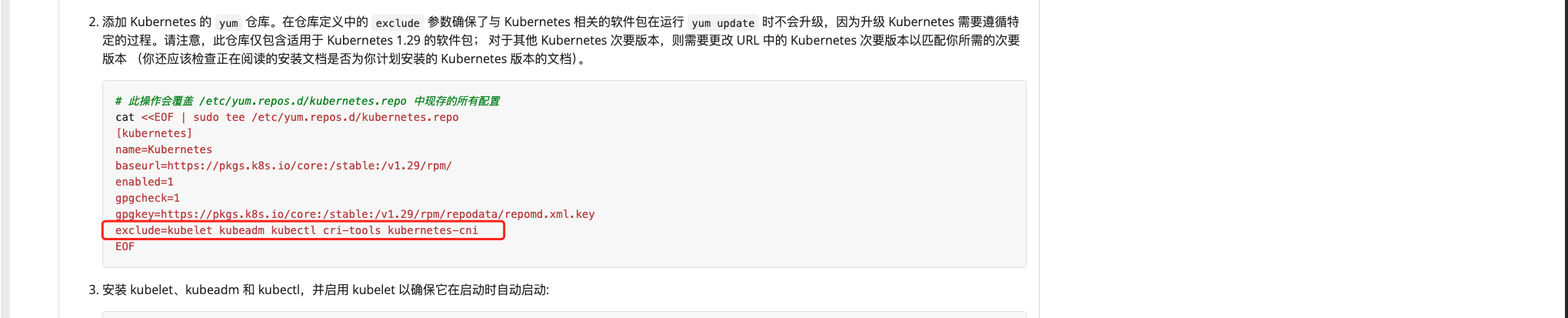

Add the Kubernetes package repository (for kubeadm)

https://v1-29.docs.kubernetes.io/zh-cn/docs/setup/production-environment/tools/kubeadm/install-kubeadm/ # official documentation, for reference

cat <<EOF > /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes

baseurl=https://pkgs.k8s.io/core:/stable:/v1.29/rpm/

enabled=1

gpgcheck=1

gpgkey=https://pkgs.k8s.io/core:/stable:/v1.29/rpm/repodata/repomd.xml.key

# exclude=kubelet kubeadm kubectl cri-tools kubernetes-cni

EOF

Install kubelet, kubectl and kubeadm 1.29.2

yum install -y kubelet-1.29.2 kubectl-1.29.2 kubeadm-1.29.2

systemctl enable kubelet.service

After installation, uncomment the exclude line so that a routine yum update does not upgrade these packages

sed -i 's/\# exclude/exclude/' /etc/yum.repos.d/kubernetes.repo
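With exclude active, yum skips these packages entirely, even when you want to upgrade them on purpose. dnf's `--disableexcludes=kubernetes` option lifts the exclusion for a single command, for example `yum install -y kubeadm-<version> --disableexcludes=kubernetes`. Here is a sketch for switching the line on and off, assuming the repo file above. `k8s_repo_exclude` is a hypothetical name.

```shell
#!/usr/bin/env bash
# Hypothetical helper: toggle the exclude= line of the kubernetes repo file.
# "on" uncomments it (packages protected from yum update), "off" comments it out.
k8s_repo_exclude() {
  local state=$1 file=${2:-/etc/yum.repos.d/kubernetes.repo}
  case "$state" in
    on)  sed -i 's/^# *exclude=/exclude=/' "$file" ;;
    off) sed -i 's/^exclude=/# exclude=/' "$file" ;;
    *)   echo "usage: k8s_repo_exclude on|off [file]" >&2; return 2 ;;
  esac
}
```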

四、集群初始化

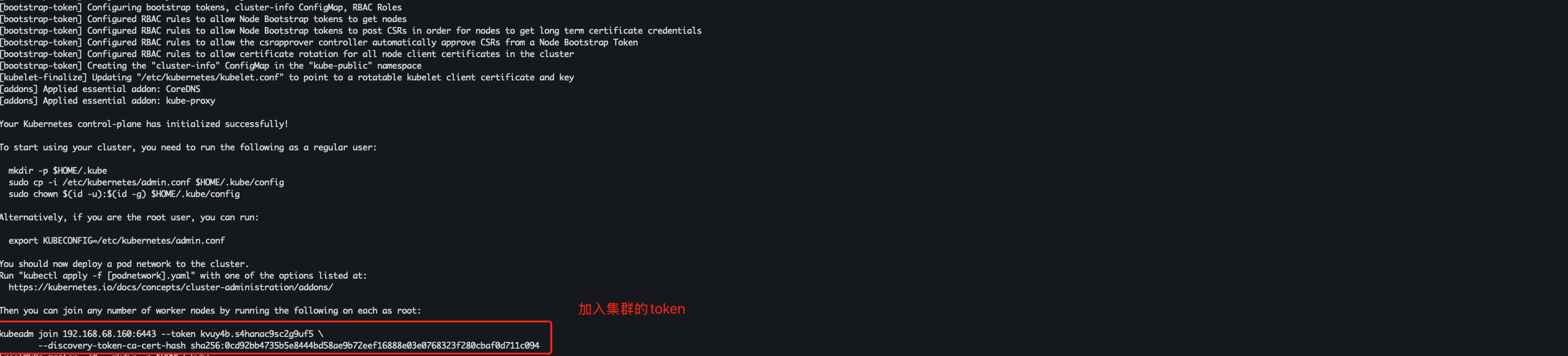

初始化master

# master 节点执行

kubeadm init --apiserver-advertise-address=192.168.68.160 --image-repository registry.aliyuncs.com/google_containers --kubernetes-version 1.29.2 --service-cidr=10.10.0.0/12 --pod-network-cidr=10.244.0.0/16 --ignore-preflight-errors=all --cri-socket unix:///var/run/cri-dockerd.sock
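`--service-cidr=10.10.0.0/12` is not aligned on a /12 boundary. A /12 fixes the first 12 bits, so the second octet of the network address must be a multiple of 16. Masking the host bits gives 10.0.0.0/12 (10.0.0.0 to 10.15.255.255). Go's net.ParseCIDR masks host bits the same way, so if kubeadm accepts the value, this is most likely the Service range in effect. kubeadm's default, 10.96.0.0/12, avoids the ambiguity. The pure-bash sketch below shows the arithmetic and checks that the Service, Pod and host ranges do not overlap. All helper names are hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helpers for IPv4 CIDR arithmetic in plain bash.

# Dotted quad -> 32-bit integer.
ip_to_int() {
  local IFS=.
  local a b c d
  read -r a b c d <<< "$1"
  echo $(( (a << 24) | (b << 16) | (c << 8) | d ))
}

# 32-bit integer -> dotted quad.
int_to_ip() {
  local n=$1
  echo "$(( (n >> 24) & 255 )).$(( (n >> 16) & 255 )).$(( (n >> 8) & 255 )).$(( n & 255 ))"
}

# Print the first and last address of a CIDR as integers, host bits masked.
cidr_range() {
  local ip=${1%/*} prefix=${1#*/}
  local mask=$(( prefix == 0 ? 0 : (0xFFFFFFFF << (32 - prefix)) & 0xFFFFFFFF ))
  local first=$(( $(ip_to_int "$ip") & mask ))
  echo "$first $(( first | (~mask & 0xFFFFFFFF) ))"
}

# Print the network a CIDR really denotes, e.g. 10.10.0.0/12 -> 10.0.0.0/12.
cidr_network() {
  local first last
  read -r first last <<< "$(cidr_range "$1")"
  echo "$(int_to_ip "$first")/${1#*/}"
}

# Succeed (exit 0) if the two CIDRs share at least one address.
cidrs_overlap() {
  local a_first a_last b_first b_last
  read -r a_first a_last <<< "$(cidr_range "$1")"
  read -r b_first b_last <<< "$(cidr_range "$2")"
  (( a_first <= b_last && b_first <= a_last ))
}
```

`cidr_network 10.10.0.0/12` prints `10.0.0.0/12`. `cidrs_overlap 10.10.0.0/12 10.244.0.0/16` fails, so the Service and Pod ranges used here do not collide, and neither overlaps the 192.168.68.0/24 host network.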

Configure kubectl access for the current user (copy the admin kubeconfig)

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

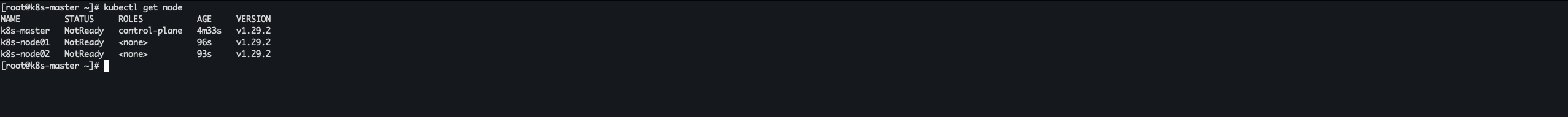

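Join the worker nodes to the cluster

# Run on each worker node. When you copy the join command printed by kubeadm init, append --cri-socket unix:///var/run/cri-dockerd.sock so that kubeadm uses the cri-dockerd socket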

kubeadm join 192.168.68.160:6443 --token kvuy4b.s4hanac9sc2g9uf5 --discovery-token-ca-cert-hash sha256:0cd92bb4735b5e8444bd58ae9b72eef16888e03e0768323f280cbaf0d711c094 --cri-socket unix:///var/run/cri-dockerd.sock

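Verify that the nodes have joined the cluster (run on the master)

kubectl get node

If the token has expired (tokens are valid for 24 hours by default), generate a new join command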

kubeadm token create --print-join-command
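The printed join command does not include the cri-dockerd socket, so you have to append it every time. Here is a sketch that appends the flag only when it is missing. `with_cri_socket` and `CRI_SOCKET` are hypothetical names.

```shell
#!/usr/bin/env bash
# Hypothetical helper: append the cri-dockerd socket flag to a kubeadm join
# command unless the command already contains --cri-socket.
CRI_SOCKET=unix:///var/run/cri-dockerd.sock
with_cri_socket() {
  case "$1" in
    *--cri-socket*) printf '%s\n' "$1" ;;
    *) printf '%s --cri-socket %s\n' "$1" "$CRI_SOCKET" ;;
  esac
}
```

On the master, run `with_cri_socket "$(kubeadm token create --print-join-command)"`, then run the printed command on each worker node.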

5. Deploy the Calico Network Plugin

Official documentation

https://docs.tigera.io/calico/latest/getting-started/kubernetes/self-managed-onprem/onpremises#install-calico-with-kubernetes-api-datastore-more-than-50-nodes

Import the images

# Run on the master node

wget "https://alist.wanwancloud.cn/d/%E8%BD%AF%E4%BB%B6/Kubernetes/Calico/calico.zip?sign=45yzzkju25P3oo3e7-D7w4A38_0Ug50Xag585imOt-0=:0" -O calico.zip

yum -y install unzip

unzip calico.zip

cd calico

tar xf calico-images.tar.gz

scp -r calico-images root@n1:/root

scp -r calico-images root@n2:/root

docker load -i calico-images/calico-cni-v3.26.3.tar

docker load -i calico-images/calico-kube-controllers-v3.26.3.tar

docker load -i calico-images/calico-node-v3.26.3.tar

docker load -i calico-images/calico-typha-v3.26.3.tar

Import the images on the worker nodes

# Run on each worker node, from /root (where calico-images was copied)

docker load -i calico-images/calico-cni-v3.26.3.tar

docker load -i calico-images/calico-kube-controllers-v3.26.3.tar

docker load -i calico-images/calico-node-v3.26.3.tar

docker load -i calico-images/calico-typha-v3.26.3.tar
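The four docker load commands are the same on every node. Here is a sketch that loads every .tar archive in a directory, assuming the archives were copied to /root/calico-images. `load_images` and the DOCKER override are hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: run "docker load -i" on every .tar file in a directory.
# DOCKER can be overridden (e.g. DOCKER="echo docker") to preview the commands.
load_images() {
  local dir=${1:-calico-images} f
  for f in "$dir"/*.tar; do
    [ -e "$f" ] || continue   # no .tar files: the glob stays literal, skip it
    ${DOCKER:-docker} load -i "$f"
  done
}
```

Usage: `load_images /root/calico-images`. Afterwards, `docker images | grep calico` should list the four images.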

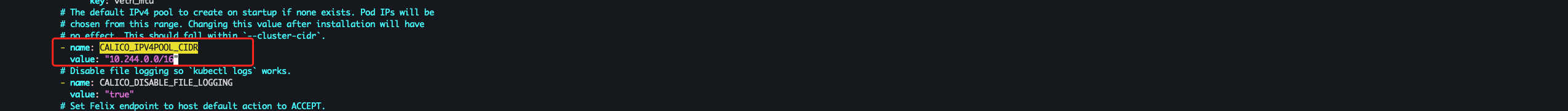

Edit the YAML manifest

vi calico-typha.yaml

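Set CALICO_IPV4POOL_CIDR to the Pod network, i.e. the value passed as --pod-network-cidr=10.244.0.0/16 when the cluster was initialized (uncomment the two lines if they are commented out)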

- name: CALICO_IPV4POOL_CIDR

value: "10.244.0.0/16"

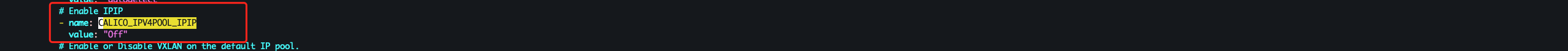

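Change CALICO_IPV4POOL_IPIP to Off so that Calico routes Pod traffic with plain BGP instead of IP-in-IP encapsulation (this works here because all nodes are on the same subnet)

- name: CALICO_IPV4POOL_IPIP

value: "Always" # change to "Off"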
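You can also script both manifest edits. Here is a sketch with GNU sed. It assumes the manifest contains the commented lines `# - name: CALICO_IPV4POOL_CIDR` and `#   value: "192.168.0.0/16"`, plus the IPIP entry shown above. If the file differs, the edit silently does nothing, so check the result. `patch_calico` is a hypothetical name.

```shell
#!/usr/bin/env bash
# Hypothetical helper (GNU sed): uncomment CALICO_IPV4POOL_CIDR and set it to
# the given Pod CIDR, and switch CALICO_IPV4POOL_IPIP from "Always" to "Off".
# It relies on each "value:" line directly following its "- name:" line.
patch_calico() {
  local manifest=$1 pod_cidr=$2
  sed -i \
    -e 's|^\([[:space:]]*\)# - name: CALICO_IPV4POOL_CIDR|\1- name: CALICO_IPV4POOL_CIDR|' \
    -e '/- name: CALICO_IPV4POOL_CIDR/{n;s|^\([[:space:]]*\)#   value:|\1  value:|;s|value: .*|value: "'"$pod_cidr"'"|;}' \
    -e '/- name: CALICO_IPV4POOL_IPIP/{n;s|"Always"|"Off"|;}' \
    "$manifest"
}
```

Usage: `patch_calico calico-typha.yaml 10.244.0.0/16`, then `grep -A1 -E 'CALICO_IPV4POOL_(CIDR|IPIP)' calico-typha.yaml` to review both entries.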

Apply the Calico manifest

kubectl apply -f calico-typha.yaml

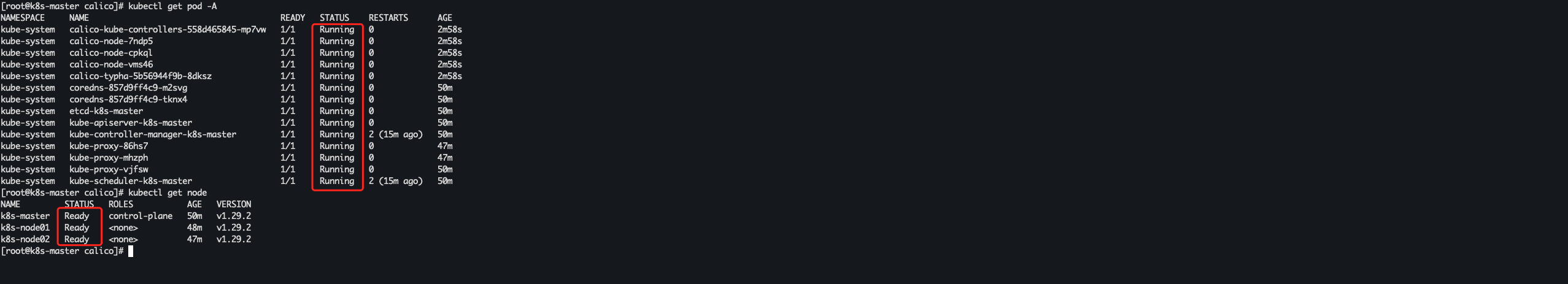

Wait a while. Once all components are Running and all nodes are Ready, the cluster is set up.

kubectl get pod -A

kubectl get node
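Instead of re-running kubectl get until everything turns Ready, you can let `kubectl wait` block on the Ready condition. The sketch below assumes Calico runs in kube-system, as it does with this manifest. `wait_cluster_ready` and the KUBECTL override are hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: block until all nodes, then all kube-system Pods, report
# Ready. KUBECTL can be overridden (e.g. KUBECTL="echo kubectl") to preview.
wait_cluster_ready() {
  local timeout=${1:-300s}
  ${KUBECTL:-kubectl} wait --for=condition=Ready node --all --timeout="$timeout" &&
    ${KUBECTL:-kubectl} wait --for=condition=Ready pod --all -n kube-system --timeout="$timeout"
}
```

`wait_cluster_ready 300s` returns once every node and kube-system Pod is Ready, or fails after the timeout.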
