Basic approach:

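Take a geographic region as input (for example South China, 华南) and build the first-level page URL from it. First crawl the name and URL of every city in that region, then crawl each city's weather and store it in a database. Next, compute the share of each weather type within each geographic region, and finally visualize the results with Flask. The crawl runs once a day.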
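The statistics step itself is not shown in this post. As a minimal sketch, assuming each stored record carries the two-element `weather` list that the spider produces, the share of each weather type in a region could be computed as follows. The function name `weather_share` and the sample records are hypothetical and not taken from the project.

```python
from collections import Counter


def weather_share(weather_lists):
    """Return {weather type: share of all entries}.

    Assumes each element is an [earlier, later] pair, like the
    item['weather'] list built by the spider.
    """
    counts = Counter(w for pair in weather_lists for w in pair)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {weather: n / total for weather, n in counts.items()}


# Hypothetical sample: three cities in one region.
sample = [['多云', '晴'], ['晴', '晴'], ['中雨', '多云']]
shares = weather_share(sample)
print(shares)
```

A dictionary of this shape can be passed more or less directly to a chart on the Flask side, for example as the data of a pie chart.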

Screenshot of the running web page:

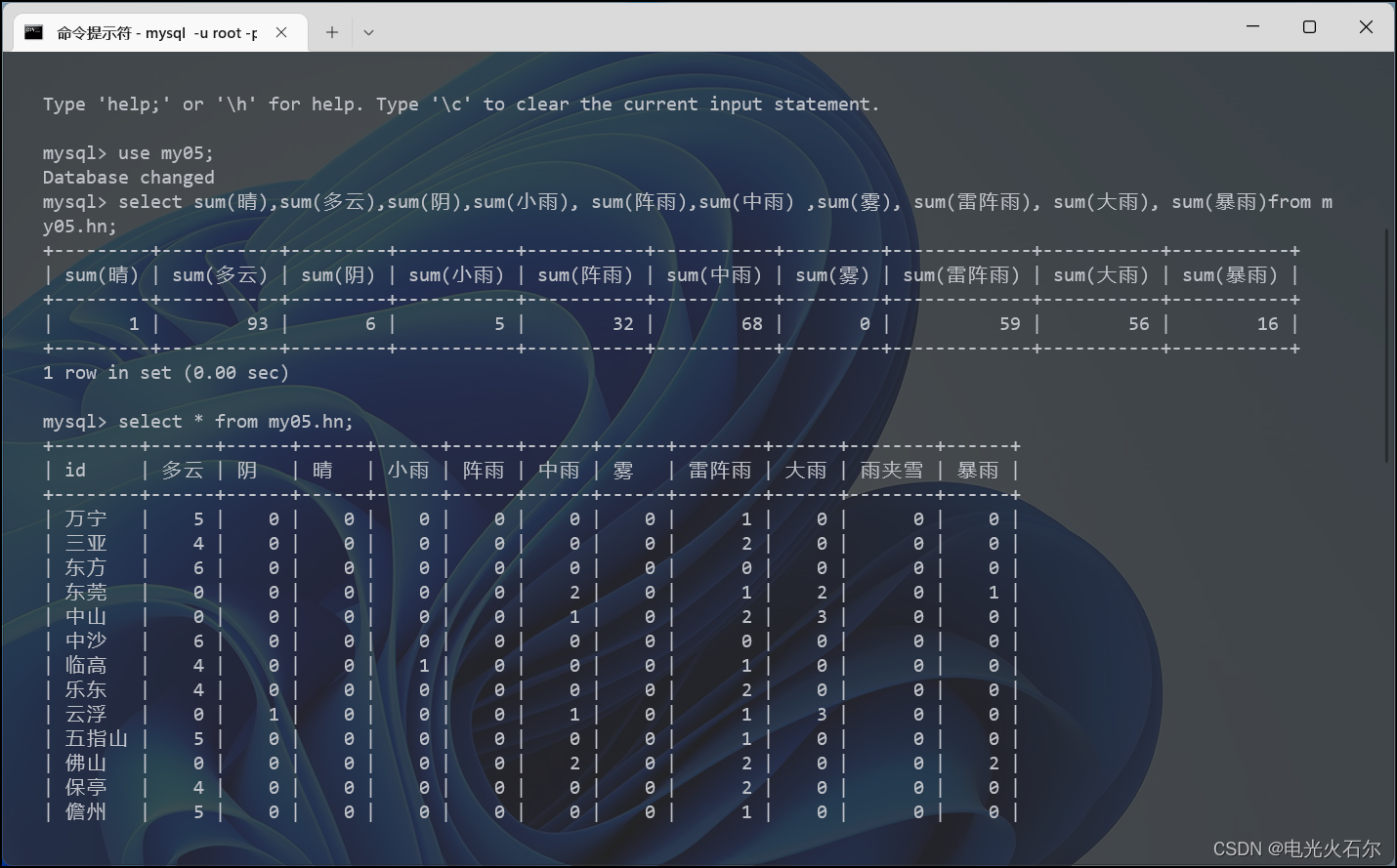

Database screenshot:

Part of the code is shown below:

scrapyweather/spiders/areas.py:

    # coding: utf-8
    import re

    import scrapy
    from pypinyin import Style, lazy_pinyin

    from scrapyweather.items import ScrapyweatherItem


    class AreasSpider(scrapy.Spider):
        name = 'areas'
        allowed_domains = ['www.weather.com.cn']

        def __init__(self, **kwargs):
            super().__init__(**kwargs)
            # Build the first-level (region) page URL from the user's input.
            inputarea = self.setwhere()
            self.start_urls = ['http://www.weather.com.cn/textFC/%s.shtml' % inputarea]

        def setwhere(self):
            where_str = input("Enter the region to query (华北, 东北, 华东, 华中, 华南, 西北, 西南): ")
            # The region code is the pinyin initials of the name, e.g. 华南 -> "hn".
            return ''.join(lazy_pinyin(where_str.strip(), style=Style.FIRST_LETTER))

        def parse(self, response):
            page_text = response.text
            # Capture (URL suffix, city name) pairs from the region page.
            ex = r'<td width=".*?" height=".*?">\n<a href="http://www.weather.com.cn/weather/(.*?)" target="_blank">(.*?)</a></td>'
            # Deduplicate repeated matches.
            areas = set(re.findall(ex, page_text, re.S))
            for area in areas:
                item = ScrapyweatherItem()
                item['area_url'] = 'http://www.weather.com.cn/weather/' + area[0]
                item['area_name'] = area[1]
                # Pass the partially filled item on to the city detail page.
                yield scrapy.Request(url=item['area_url'], callback=self.parse_detail, meta={'item': item})

        def parse_detail(self, response):
            item = response.meta['item']
            weather = response.xpath('/html/body/div[5]/div[1]/div[1]/div[2]/ul/li[1]/p[1]/@title').extract_first()
            if weather is None:
                # The page layout did not match the XPath; skip this city.
                return
            # Drop range prefixes so that e.g. "小到中雨" becomes "中雨".
            for prefix in ('小到', '中到', '大到'):
                weather = weather.replace(prefix, '')
            # "转" separates the earlier and later conditions, e.g. "多云转晴".
            weather_list = weather.split('转')
            if len(weather_list) == 1:
                # Unchanged weather: repeat it so the list always has two entries.
                weather_list.append(weather_list[0])
            item['weather'] = weather_list
            yield item  # hand the item over to the pipelines
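The spider hands each item over to the pipelines, which are not shown in this post. As a rough sketch only, assuming SQLite as the database (the post does not say which database the project uses), a pipeline that stores one row per city might look like this. The class name `SqliteWeatherPipeline`, the table layout, and the `weather.db` file name are all hypothetical.

```python
import sqlite3


class SqliteWeatherPipeline:
    """Hypothetical pipeline: stores one row per city in a SQLite table."""

    def __init__(self, db_path='weather.db'):
        self.db_path = db_path  # assumed file name, not from the project
        self.conn = None

    def open_spider(self, spider):
        # Called once when the spider starts: open the DB and ensure the table exists.
        self.conn = sqlite3.connect(self.db_path)
        self.conn.execute(
            'CREATE TABLE IF NOT EXISTS weather ('
            'area_name TEXT PRIMARY KEY, area_url TEXT, '
            'weather_from TEXT, weather_to TEXT)'
        )

    def process_item(self, item, spider):
        # The spider guarantees at least two entries in item['weather'].
        weather_from, weather_to = item['weather'][0], item['weather'][-1]
        # INSERT OR REPLACE keeps a single, latest row per city across daily runs.
        self.conn.execute(
            'INSERT OR REPLACE INTO weather VALUES (?, ?, ?, ?)',
            (item['area_name'], item['area_url'], weather_from, weather_to),
        )
        self.conn.commit()
        return item

    def close_spider(self, spider):
        # Called once when the spider finishes.
        self.conn.close()
```

To enable a pipeline like this, it would be registered under `ITEM_PIPELINES` in the project's `settings.py`; the module path depends on where the class is placed.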

Code

Baidu Netdisk:

Link: https://pan.baidu.com/s/1ceei4XHryvtbDMEzngR0DQ?pwd=uhfx

Extraction code: uhfx


GitHub:

dumpling02/myWeather: weather statistics collected with a Python web crawler, with visualization (github.com)

Feedback and corrections are welcome.
