1.处理完的特征数据如何写入kafka

1.1源数据展示(csv文件)

1.2 处理完数据如何写入

直接上代码

from pyspark.sql.functions import to_json, struct

from pyspark.shell import spark

# 定义Kafka连接信息

kafka_broker = "192.168.xx.xx:端口号"

kafka_topic = "主题"

df = df.withColumn("value", to_json(struct(df.columns)))

# 对接kafka,后期可对接实时 查询命令:kafka-console-consumer.sh --bootstrap-server ip:端口 --topic passenger_data --from-beginning

df.select("value").write \

.format("kafka") \

.option("kafka.bootstrap.servers", kafka_broker) \

.option("topic", kafka_topic) \

.save()2.报错分析

2.1 :报错TaskSetManager: Lost task 0.0 in stage 7.0 (TID 7, localhost, executor driver): java.lang.NoSuchMethodError: org.apache.kafka.clients.producer.KafkaProducer.flush()V

解决:这个问题简单,需要把要连接的kafka下的libs里面的kafka-clients-x.x.x.jar包放到spark的jars下面

放到这里

2.2 :报错 : org.apache.spark.sql.AnalysisException: Failed to find data source: kafka. Please deploy the application as per the deployment section of "Structured Streaming + Kafka Integration Guide".;

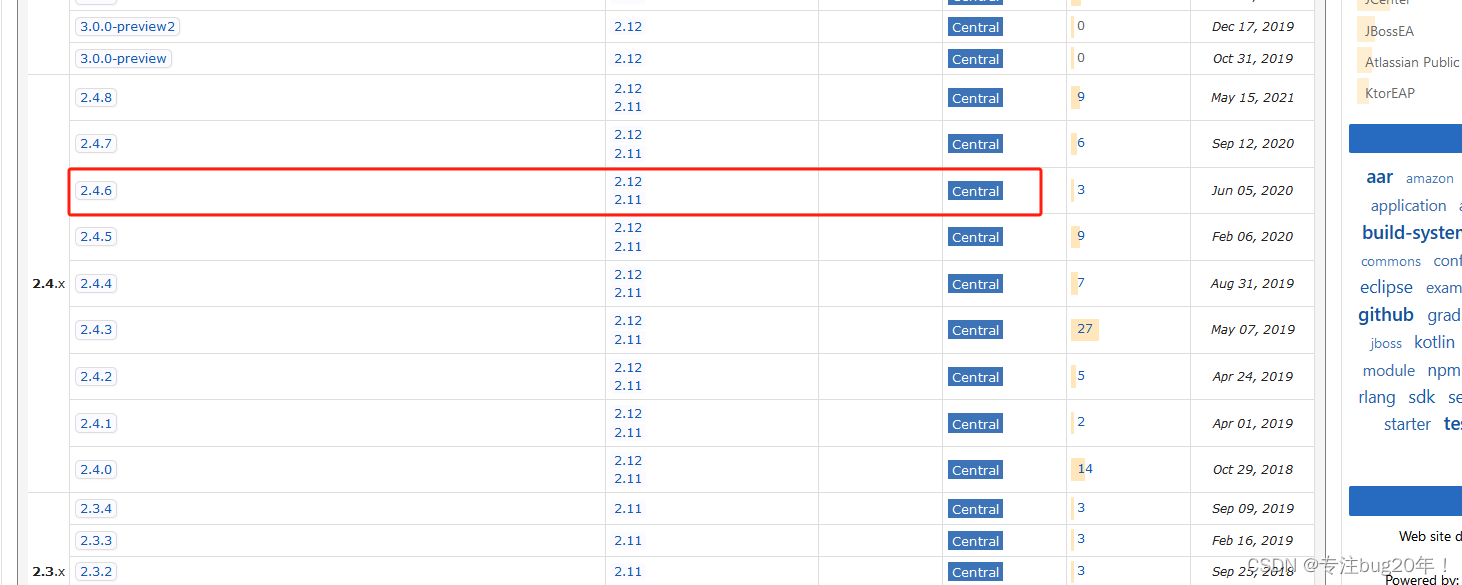

解决:这个是因为缺少了Kafka和Spark的集成包,前往https://mvnrepository.com/artifact/org.apache.spark

下载对应的jar包即可,比如我是SparkSql写入的Kafka,那么我就需要下载Spark-Sql-Kafka.x.x.x.jar

找到对应版本

点击即可下载

目前就遇到这么多,如果还有欢迎补充

8707

8707

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?