本文是通过阅读此处网址的内容以及自己查询而得的Pandas学习笔记。

一.简要介绍

Pandas是基于Numpy开发出来的,它是python的核心数据分析支持库,主要的数据结构是Series(带标签的一维同构数组)继和DataFrame(带标签的、大小可变的、二维异构表格),这些数据结构的值都是可变的;但Series的长度不可变,DataFrame可插入列。

Pandas与NumPy的本质区别是:NumPy数组只有一种数据类型,而DataFrame中每列的数据类型各不相同;当DataFrame的列由多种数据类型组成时,在输出底层数据的NumPy对象时的操作耗费系统资源较大。

部分数据类型

字符串类型:object

整数类型:int8,int16,int32,int64

浮点数类型:float64,float32

二.相关操作

1.导入

import numpy as np

import pandas as pd2.生成对象

用列表生成Series;

s = pd.Series([1,5,14,np.nan,23])

print(s)此时默认生成整数索引,结果如下:

0 1.0

1 5.0

2 14.0

3 NaN

4 23.0

dtype: float64

用Numpy数组生成DataFrame

dates = pd.date_range("20220521",periods=6)

print(dates)其中,date_range()是panda中常见的函数,用于生成固定频率的时间索引,在这次的代码中,制定了开始时间以及时间序列的数量,那么接下来就会生成从“20220521”开始,频率为默认值“D”的6个时间的DatetimeIndex时间索引;

DatetimeIndex(['2022-05-21', '2022-05-22', '2022-05-23', '2022-05-24',

'2022-05-25', '2022-05-26'],

dtype='datetime64[ns]', freq='D')此外,还有这种方式:

dates = pd.date_range("20220521",periods=6)

df = pd.DataFrame(np.random.randn(6,4),index = dates, columns = list('ABCD'))

print(df)其中,np.random.randn(6,4)代表生成一个两维的数据表格,数据部分包括6行4列,表格的行标签是之前生成的dates,列标签是A,B,C,D;

A B C D

2022-05-21 0.136886 1.041708 -1.321150 0.451349

2022-05-22 0.320517 0.915637 -0.376431 -1.490658

2022-05-23 0.390247 0.000224 1.161696 -1.622723

2022-05-24 -0.741657 1.345109 -0.656991 -1.895109

2022-05-25 -0.168533 1.175146 -0.099444 -0.803727

2022-05-26 -0.511003 -0.276564 2.746680 -1.134566还可以采用Series字典对象生成DataFrame:

df2 = pd.DataFrame({

'A': 1,

'B': pd.Timestamp('20220524'),

'C': pd.Series(1, index = list(range(4)),dtype = 'float32'),

'D': np.array([3]*4, dtype = 'int32'),

'E': pd.Categorical(["test","trian","test","train"]),

'F': "foo"

})

print(df2)结果如下:

A B C D E F

0 1 2022-05-24 1.0 3 test foo

1 1 2022-05-24 1.0 3 trian foo

2 1 2022-05-24 1.0 3 test foo

3 1 2022-05-24 1.0 3 train foo查询每一列的数据类型,可以得到每一列都有不同的数据类型;

A int64

B datetime64[ns]

C float32

D int32

E category

F object

dtype: object3.查看数据

采用tail()函数以及head()函数查看之前定义好的df的头部以及尾部数据。

print(df.head(1))

print(df.tail(2)) A B C D

2022-05-21 -0.249276 0.591296 0.992551 -1.133971

A B C D

2022-05-25 -1.266972 -0.605986 1.588265 0.712359

2022-05-26 0.604126 -1.017234 0.232689 -0.579932采用index()以及column()函数显示索引以及列名;

print(df.index)

print(df.columns)DatetimeIndex(['2022-05-21', '2022-05-22', '2022-05-23', '2022-05-24',

'2022-05-25', '2022-05-26'],

dtype='datetime64[ns]', freq='D')

Index(['A', 'B', 'C', 'D'], dtype='object')通过DataFrame.to_numpy()函数输出底层数据的NumPy对象;但输出不包含行索引和列标签。在调用这个函数时,Pandas查找支持DataFrame里所有的数据类型的NumPy数据类型;

print(df.to_numpy())[[-0.01568141 0.32649045 1.35315451 -0.73029335]

[ 1.52319239 0.39413554 -1.79469583 1.16339447]

[-0.38151711 -0.26693644 -0.43819009 0.86742392]

[-0.62045309 -1.94559426 -0.37615171 1.97012684]

[-1.5739133 1.66931392 -1.33115334 -0.75294336]

[ 0.03869211 0.31861187 0.96338101 0.09202964]]通过describe()函数可以快速查看数据的统计摘要;

print(df.describe())最终显示的结果里,包括有:计数、平均值、标准差、最小值、最大值以及四分之一分位数、二分之一分位数、四分之三分位数。

A B C D

count 6.000000 6.000000 6.000000 6.000000

mean -0.362490 -0.058584 -0.428353 0.235016

std 1.355071 0.900411 0.588704 1.441720

min -2.598253 -1.204845 -0.922017 -1.849070

25% -0.776751 -0.648614 -0.835257 -0.393448

50% -0.329908 -0.147642 -0.693182 0.140082

75% 0.412253 0.656982 -0.077645 0.896637

max 1.349188 1.039041 0.493345 2.401473

转置数据;

print(df.T) 2022-05-21 2022-05-22 2022-05-23 2022-05-24 2022-05-25 2022-05-26

A -0.036124 0.687316 -0.856321 2.429078 -2.038939 0.376852

B 0.359951 -1.019624 0.278458 1.134377 -0.956782 -0.201985

C -0.176906 0.330358 0.135873 -1.556026 1.406605 -1.092472

D 0.182999 0.576017 -0.141801 -1.459510 -0.488253 1.263315按轴排序;

print(df.sort_index(axis = 1,ascending = False))参数axis 默认值是 0,即指按照行的索引进行排序;若axis 设置为 1,即指按照列的索引进行排序;ascending 默认为 True,即升序;设置为 False 时候为降序。

D C B A

2022-05-21 -0.039538 0.102864 1.809570 0.422249

2022-05-22 0.164445 0.128373 -0.488638 1.562228

2022-05-23 -1.019688 1.247957 1.711259 0.374710

2022-05-24 2.933171 0.179653 -0.349013 -0.356169

2022-05-25 0.483066 0.902441 2.752587 -0.858579

2022-05-26 -1.359240 -0.029439 0.315487 0.136530按值排序;

print(df.sort_values(by = 'B'))按照B列的数据值排序;

A B C D

2022-05-22 -0.003005 -0.510940 -1.850251 -0.602501

2022-05-21 0.199195 0.448159 1.344446 -0.156287

2022-05-25 -1.032422 0.733475 -0.966291 0.494933

2022-05-24 2.097197 0.888254 1.117285 -0.026202

2022-05-26 -1.373749 1.635690 -0.610852 0.726204

2022-05-23 0.476349 1.862359 -0.921950 0.5659114.选择

获取数据

选取单列,产生Series;

print(df.A)2022-05-21 -1.569664

2022-05-22 -0.519668

2022-05-23 1.400681

2022-05-24 -1.563633

2022-05-25 -0.864536

2022-05-26 1.321613

Freq: D, Name: A, dtype: float64

将行进行切片;

print(df[0:3]) A B C D

2022-05-21 -0.074677 0.511283 1.363140 1.209577

2022-05-22 -1.790185 0.186599 0.386680 0.389305

2022-05-23 0.779339 0.857374 -0.292863 0.324927print(df['20220521':'20220524']) A B C D

2022-05-21 -0.034967 0.169557 0.496417 0.557630

2022-05-22 -0.363198 1.165939 -0.029852 -0.742354

2022-05-23 -1.065871 0.094265 0.335761 1.295312

2022-05-24 0.173184 1.589024 0.565842 0.973264按照标签选择

提取一行数据;

print(df.loc[dates[0]])A -1.752385

B 2.007287

C -0.466264

D -0.059769

Name: 2022-05-21 00:00:00, dtype: float64

用标签选择多列数据;

print(df.loc[:,['A','B']]) A B

2022-05-21 1.832760 -1.234210

2022-05-22 1.060735 0.604725

2022-05-23 0.042338 0.429720

2022-05-24 -0.120698 0.354572

2022-05-25 0.167567 -1.642642

2022-05-26 0.284255 0.106209用标签切片,包含行与列结束点;

print(df.loc["20220521":'20220523',['C','D']]) C D

2022-05-21 -0.064251 1.404674

2022-05-22 -1.469895 0.604737

2022-05-23 -0.164208 1.140707

返回对象,实现降维效果;

print(df.loc['20220522',['A','C']])A 0.102988

C 0.702026

Name: 2022-05-22 00:00:00, dtype: float64

提取标量的值;

print(df.loc[dates[0],'D'])0.3523557087717819等效方法,可快速访问标量;

print(df.at[dates[0],'C'])0.2784856822405241

按位置选择

按照整数位置选择;

print(df.iloc[3])A 0.504944

B 0.636838

C 0.062362

D -1.163998

Name: 2022-05-24 00:00:00, dtype: float64

整数切片;

print(df.iloc[3:5,0:3]) A B C

2022-05-24 0.883995 -0.387231 0.628574

2022-05-25 -0.289374 0.010543 0.199258

采用整数列表按照位置切片;

print(df.iloc[[1,2,5],[0,3]]) A D

2022-05-22 0.763304 -0.387213

2022-05-23 0.894361 -0.342509

2022-05-26 -0.618824 0.701133

显式整行切片;

print(df.iloc[1:3,:]) A B C D

2022-05-22 1.479378 0.782299 -0.519816 0.892910

2022-05-23 -0.890134 -1.006557 0.597311 -0.598442显式整列切片;

print(df.iloc[:,1:3]) B C

2022-05-21 -0.691051 -1.694787

2022-05-22 0.750126 -0.709624

2022-05-23 -1.652578 1.136266

2022-05-24 -2.098970 -0.647041

2022-05-25 -1.143725 -1.053974

2022-05-26 0.846129 0.317639显式提取值;

print(df.iloc[1,1])-1.220320008727587等效方法,也可以快速访问标量;

print(df.iat[1,1])-1.220320008727587布尔索引

用单列的值选择数据;

print(df[df.A>0]) A B C D

2022-05-23 1.424633 -0.411235 -1.050350 0.073719

2022-05-26 0.216383 -0.602787 0.242099 -0.003117选择DataFrame里满足条件的值;

print(df[df>0]) A B C D

2022-05-21 0.034121 NaN NaN NaN

2022-05-22 NaN 0.832257 NaN 0.673357

2022-05-23 2.551887 1.052720 NaN 2.171475

2022-05-24 NaN NaN 0.724251 NaN

2022-05-25 NaN 2.065618 0.419077 0.231083

2022-05-26 0.034950 NaN NaN NaN

采用isin()函数进行筛选;先添加一行“E”,isin()函数接受一个列表,并判断该列中元素是否在列表中;

df2 = df.copy()

df2['E'] = ['one','one','two','three','four','three']

print(df2)

print()

print(df2[df2['E'].isin(['two','four'])])

A B C D E

2022-05-21 1.784070 0.717759 1.173878 -0.543134 one

2022-05-22 -1.160707 0.622619 -0.371278 0.569910 one

2022-05-23 0.726340 0.765890 0.811662 -0.158632 two

2022-05-24 0.309843 0.208132 0.011794 -0.633664 three

2022-05-25 0.445362 1.235948 1.280879 -0.051112 four

2022-05-26 -0.499006 0.404253 1.310874 -0.230863 three

A B C D E

2022-05-23 0.726340 0.765890 0.811662 -0.158632 two

2022-05-25 0.445362 1.235948 1.280879 -0.051112 four

赋值

用索引自动对齐新增列的数据;新建一个值为[1,2,3,4,5,6],索引index为20220521到20220526的Series,并将series赋值给df作为df新增的F列

s1 = pd.Series([1,2,3,4,5,6],index = pd.date_range("20220521",periods = 6))

print(s1)

df['F'] = s12022-05-21 1

2022-05-22 2

2022-05-23 3

2022-05-24 4

2022-05-25 5

2022-05-26 6

Freq: D, dtype: int64按照标签赋值;通过at方法把满足df中dates[0],列A的值修改为0;

df.at[dates[0],'A'] = 0按照位置赋值;使用iat方法修改df中行下标为0,列下标为1的值等于0;

df.iat[0,1] = 0按照NumPy数组赋值; 使用loc方法把df的D列值修改为5*len(df)

df.loc[:,'D'] = np.array([5]*len(df))而后打印赋值结果,可得:

A B C D F

2022-05-21 0.000000 0.000000 1.189929 5 1

2022-05-22 0.720087 -0.733954 -0.227088 5 2

2022-05-23 0.304696 1.125239 2.176908 5 3

2022-05-24 0.418205 -0.414897 -0.365389 5 4

2022-05-25 -1.047534 0.502702 -0.247725 5 5

2022-05-26 1.004945 0.826539 -0.677019 5 6采用where条件进行赋值;

df2 = df.copy()

df2[df2>0] = -df2

print(df2) A B C D

2022-05-21 -1.395279 -0.998688 -1.105492 -0.077170

2022-05-22 -0.554307 -0.123219 -0.263776 -0.368944

2022-05-23 -0.867589 -0.143677 -1.149439 -0.052096

2022-05-24 -0.316583 -1.197679 -0.244917 -1.372555

2022-05-25 -1.166079 -0.265879 -1.385109 -1.612151

2022-05-26 -0.413096 -0.344400 -0.837535 -0.152082

5.缺失值

Pandas主要使用np.nan表示缺失数据,在计算时,默认情况下不包含空值;

在使用reindex重建索引时,可以更改、添加、删除指定轴的索引,并返回数据副本,并不更改原数据。

df1 = df.reindex(index = dates[0:4],columns= list(df.columns)+['E'])

df1.loc[dates[0]:dates[1],'E'] = 1

print(df1)

按照设定的行列重建索引后,修改其中特定行列的值,并打印出来;

A B C D F E

2022-05-21 0.000000 0.000000 -0.881176 5 1 1.0

2022-05-22 0.408340 -0.195753 -1.627599 5 2 1.0

2022-05-23 0.379201 -0.329105 -0.351990 5 3 NaN

2022-05-24 0.510544 1.734054 0.474862 5 4 NaN使用dropna()函数删除所有含缺失值的行;

print(df1.dropna(how = 'any')) A B C D F E

2022-05-21 0.000000 0.000000 -0.398187 5 1 1.0

2022-05-22 -1.021497 -1.067449 -1.213461 5 2 1.0使用fillna()函数填充缺失值;

A B C D F E

2022-05-21 0.000000 0.000000 -0.537663 5 1 1.0

2022-05-22 -0.447872 0.335033 1.338705 5 2 1.0

2022-05-23 -0.868069 0.114179 0.250253 5 3 5.0

2022-05-24 0.496782 -1.299465 -2.181665 5 4 5.0

使用isna()函数提取nan值的布尔掩码;

print(pd.isna(df1)) A B C D F E

2022-05-21 False False False False False False

2022-05-22 False False False False False False

2022-05-23 False False False False False True

2022-05-24 False False False False False True

6.运算

统计

一般情况,运算时排除缺失值;

描述性统计;(列)

print(df.mean())A 0.100793

B 0.377849

C 0.044165

D 5.000000

F 3.500000

dtype: float64在另一个轴也即行轴上执行同样的操作;

print(df.mean(1))2022-05-21 1.002418

2022-05-22 1.599843

2022-05-23 1.815638

2022-05-24 2.085704

2022-05-25 1.524031

2022-05-26 1.711351

Freq: D, dtype: float64在不同维度的对象进行运算时,要先将其对齐(Pandas自动沿指定维度广播)。

s = pd.Series([1,3,5,np.nan,6,8],index = dates).shift(2)

print(s)shift()函数对数据进行移动;

2022-05-21 NaN

2022-05-22 NaN

2022-05-23 1.0

2022-05-24 3.0

2022-05-25 5.0

2022-05-26 NaN

Freq: D, dtype: float64

元素级相减;

print(df.sub(s,axis = 'index')) A B C D F

2022-05-21 NaN NaN NaN NaN NaN

2022-05-22 NaN NaN NaN NaN NaN

2022-05-23 -1.472729 -1.114310 -1.966175 4.0 2.0

2022-05-24 -2.250075 -3.093553 -3.755624 2.0 1.0

2022-05-25 -6.066469 -3.576010 -5.852282 0.0 0.0

2022-05-26 NaN NaN NaN NaN NaNApply函数

调来一个函数,对数据进行批量处理;

print(df.apply(np.cumsum)) A B C D F

2022-05-21 0.000000 0.000000 -0.117359 5 1

2022-05-22 -1.624370 0.391208 1.857898 10 3

2022-05-23 -1.860172 2.902743 2.030917 15 6

2022-05-24 -2.390905 2.147162 0.670798 20 10

2022-05-25 -2.749462 2.377652 -0.947589 25 15

2022-05-26 -4.870885 0.924509 -2.626332 30 21通过cumsum()函数返回沿给定轴的元素的累积和;

print(df.apply(np.cumsum)) A B C D F

2022-05-21 0.000000 0.000000 -1.511653 5 1

2022-05-22 -0.064132 -0.430295 0.847677 10 3

2022-05-23 -0.795267 -0.249659 1.122162 15 6

2022-05-24 -1.526372 0.735427 2.770422 20 10

2022-05-25 -0.392146 1.599917 4.034942 25 15

2022-05-26 -0.380017 1.188050 4.987291 30 21

使用lambda()定义每一列最大值减去最小值的函数;

print(df.apply(lambda x : x.max() - x.min()))A 2.984022

B 1.708451

C 4.276404

D 0.000000

F 5.000000

dtype: float64直方图

s = pd.Series(np.random.randint(0,7,size = 10))

print(s)0 4

1 2

2 1

3 3

4 0

5 3

6 1

7 2

8 3

9 5

dtype: int32计数;

print(s.value_counts())6 2

5 2

1 2

3 2

0 1

2 1

dtype: int64

字符串方法

str属性中包含一组字符串处理功能,其模式匹配默认使用正则表达式;

s = pd.Series(['A','B','C','Aaba','Baca',np.nan,'CABA','dog','cat'])

print(s.str.lower())0 a

1 b

2 c

3 aaba

4 baca

5 NaN

6 caba

7 dog

8 cat

dtype: object

7.合并

结合

concat()函数用于连接Pandas对象;

df = pd.DataFrame(np.random.randn(10,4))

print(df) 0 1 2 3

0 -1.359922 0.985887 -0.003946 0.988086

1 -0.165162 -0.918188 -0.969180 1.341331

2 1.099910 -0.962338 0.202542 0.250508

3 -0.836569 1.490216 0.404249 0.758221

4 0.377200 -0.329764 -1.031423 -0.921214

5 -1.536218 -0.410249 -1.239609 -1.124185

6 -0.862180 0.415266 -1.721721 -0.236350

7 1.883878 -0.095544 -0.950778 -0.525479

8 0.944001 1.099845 1.922751 0.837286

9 -0.954146 0.325708 0.119797 0.355755分解为多组后,连接起来;

pieces = [df[:3],df[3:7],df[7:]]

print(pd.concat(pieces)) A B C D F

2022-05-21 0.000000 0.000000 -1.351565 5 1

2022-05-22 -0.790049 2.016855 0.078462 5 2

2022-05-23 0.846315 -0.592889 -1.361169 5 3

2022-05-24 0.300818 0.174860 -0.410828 5 4

2022-05-25 0.843421 0.682123 -0.095293 5 5

2022-05-26 0.568242 -0.133728 0.459318 5 6连接

按照SQL风格连接起来;

left = pd.DataFrame({'key':['foo','foo'],'lval':[1,2]})

right = pd.DataFrame({'key':['foo','foo'],'lval':[4,5]})

print(left)

print(right)

print(pd.merge(left,right,on = 'key')) key lval

0 foo 1

1 foo 2

key lval

0 foo 4

1 foo 5

key lval_x lval_y

0 foo 1 4

1 foo 1 5

2 foo 2 4

3 foo 2 5

追加

可以使用append()函数为DataFrame追加行;

df = pd.DataFrame(np.random.randn(8,4),columns= ['A','B','C','D'])

print(df)

s = df.iloc[3]

print(s)

print(df.append(s,ignore_index = True))

忽略索引ignore_index=True

A B C D

0 -1.544461 0.155749 -0.851200 -0.356837

1 0.011902 1.191635 0.493092 -0.365457

2 -0.566577 -0.142893 -1.565211 1.869204

3 0.988017 -2.153860 -1.026299 -1.091889

4 1.203632 0.368603 -0.835276 0.459193

5 0.507430 0.024230 -0.658526 -1.026438

6 0.087510 -0.725613 -2.053941 0.522262

7 0.114488 0.104691 -0.028875 0.180440

A 0.988017

B -2.153860

C -1.026299

D -1.091889

Name: 3, dtype: float64

A B C D

0 -1.544461 0.155749 -0.851200 -0.356837

1 0.011902 1.191635 0.493092 -0.365457

2 -0.566577 -0.142893 -1.565211 1.869204

3 0.988017 -2.153860 -1.026299 -1.091889

4 1.203632 0.368603 -0.835276 0.459193

5 0.507430 0.024230 -0.658526 -1.026438

6 0.087510 -0.725613 -2.053941 0.522262

7 0.114488 0.104691 -0.028875 0.180440

8 0.988017 -2.153860 -1.026299 -1.091889

8.分组

group by 主要涵盖一下几个流程:

先按照条件把数据分割成多组;然后将每组单独应用函数;最后将处理结果组合成一个数据结构;

df = pd.DataFrame({'A': ['foo', 'bar', 'foo', 'bar',

'foo', 'bar', 'foo', 'foo'],

'B': ['one', 'one', 'two', 'three',

'two', 'two', 'one', 'three'],

'C': np.random.randn(8),

'D': np.random.randn(8)})

print(df) A B C D

0 foo one 1.167424 -0.934787

1 bar one 0.780026 1.425194

2 foo two 1.385424 -0.137203

3 bar three 0.662306 0.379540

4 foo two 0.378391 1.542871

5 bar two -0.008416 -0.325737

6 foo one -1.546695 -0.413336

7 foo three -0.202212 0.007095

先分组,然后再用sum()函数计算每组的汇总数据;

print(df.groupby('A').sum()) C D

A

bar 3.982001 2.100006

foo 0.227701 1.864154多列分组后,生成多层索引,也可以应用sum()函数;

print(df.groupby(['A','B']).sum()) C D

A B

bar one -1.689814 0.275729

three -0.209854 0.808712

two -1.027787 -1.969185

foo one 1.417323 0.591048

three 0.006879 -0.328129

two -2.258776 -1.161554

9.重塑

堆叠

MultiIndex表示建立多级索引,下文是按照tuple的方式建立的;

tuples = list(zip(*[['bar','bar','baz','baz',

'foo','foo','qux','qux'],

['one','two','one','two',

'one','two','one','two']]))

index = pd.MultiIndex.from_tuples(tuples,names = ['first','second'])

df = pd.DataFrame(np.random.randn(8,2),index = index,columns=['A','B'])

df2 = df[:4]

print(df2) A B

first second

bar one -0.848519 1.328762

two -1.534984 -2.450740

baz one 0.731656 0.786888

two 0.200778 0.694727

stack()函数可以把DataFrame列压缩至一层;

stacked = df2.stack()

print(stacked)first second

bar one A 0.125944

B -1.118293

two A 0.667313

B -0.307445

baz one A -0.689515

B 0.279703

two A 1.158254

B 1.359036

dtype: float64压缩之后的DataFrame或者Series具有多层索引,采用stack()的逆操作unstack()则可进行拆叠,默认情况下拆叠最后一层;

print(stacked.unstack()) A B

first second

bar one -0.322104 -1.303836

two -0.263043 -0.830459

baz one -1.517066 -0.042109

two -0.476060 0.950878

可以指定拆叠的层数;

print(stacked.unstack(1))second one two

first

bar A 0.383808 -0.072475

B -0.112976 -0.162860

baz A -0.741657 1.031196

B 1.470863 -0.304738

print(stacked.unstack(0))

first bar baz

second

one A -1.445239 1.248117

B 0.193994 -0.823906

two A 1.529949 0.300386

B 0.348373 -0.26518710.数据透视表

df = pd.DataFrame({'A':['one','one','two','three']*3,

'B':['A','B','C']*4,

'C':['foo','foo','foo','bar','bar','bar']*2,

'D':np.random.randn(12),

'E':np.random.randn(12)

})

print(df) A B C D E

0 one A foo -0.483057 0.039645

1 one B foo -0.414430 0.729397

2 two C foo -0.144770 0.115875

3 three A bar -0.455463 0.513543

4 one B bar 0.284159 0.717968

5 one C bar -2.001673 -1.027018

6 two A foo 1.271966 0.955445

7 three B foo 0.800737 0.546006

8 one C foo -0.727104 -0.166272

9 one A bar 0.746088 0.025253

10 two B bar -0.657521 1.935400

11 three C bar 1.059533 -0.005286

生成数据透视表;(数据透视表是一种交互式的表,而且可以动态的改变他们的版面布置,以便按照不同方式分析数据,也可以重新安排行号、列标和页字段。)

print(pd.pivot_table(df,values='D',index=['A','B'],columns=['C']))C bar foo

A B

one A -0.393722 -0.475678

B -0.358087 -0.493856

C -0.167854 0.414786

three A 0.072712 NaN

B NaN 0.412877

C -0.726184 NaN

two A NaN -0.818590

B -1.001960 NaN

C NaN -0.157245

11.时间序列

Pandas为频率转换时的重采样提供了简单易用又强大高效的功能;下面是将秒级的数据转换为5分钟为频率的数据;使用resample()将其向下采样到5min的容器中,并将落入容器的时间戳的值相加。

rng = pd.date_range('23/5/2022',periods = 100,freq = 'S')

ts = pd.Series(np.random.randint(0,500,len(rng)),index = rng)

print(ts.resample('5Min').sum())2022-05-23 27528

Freq: 5T, dtype: int32时区表示;

rng = pd.date_range('24/5/2022 00:00',periods=5,freq = 'D')

ts = pd.Series(np.random.randn(len(rng)),rng)

print(ts)2022-05-24 0.629037

2022-05-25 0.305843

2022-05-26 -0.146363

2022-05-27 -0.282303

2022-05-28 -0.495081

Freq: D, dtype: float64

ts_utc = ts.tz_localize('UTC')

print(ts_utc)2022-05-24 00:00:00+00:00 -1.146774

2022-05-25 00:00:00+00:00 1.857884

2022-05-26 00:00:00+00:00 0.400149

2022-05-27 00:00:00+00:00 -1.155352

2022-05-28 00:00:00+00:00 1.635605

Freq: D, dtype: float64转换为其他时区;

print(ts_utc.tz_convert('US/Eastern'))2022-05-23 20:00:00-04:00 1.528297

2022-05-24 20:00:00-04:00 0.445658

2022-05-25 20:00:00-04:00 1.828093

2022-05-26 20:00:00-04:00 1.692153

2022-05-27 20:00:00-04:00 -1.026198

Freq: D, dtype: float64转换时间段:

rng = pd.date_range('1/1/2022',periods= 5,freq='M')

ts = pd.Series(np.random.randn(len(rng)),index= rng)

print(ts)

ps = ts.to_period() #将日期时间离散化

print(ps)

2022-01-31 0.911192

2022-02-28 -0.741799

2022-03-31 -0.433314

2022-04-30 -0.780637

2022-05-31 0.689170

Freq: M, dtype: float64

2022-01 0.911192

2022-02 -0.741799

2022-03 -0.433314

2022-04 -0.780637

2022-05 0.689170

Freq: M, dtype: float64print(ps.to_timestamp())2022-01-01 -0.445026

2022-02-01 1.338020

2022-03-01 0.351717

2022-04-01 0.537906

2022-05-01 0.370323

Freq: MS, dtype: float64Pandas函数也可以很方便的转换时间段与时间戳;

prng = pd.period_range('1999Q1','2000Q4',freq='Q-NOV')

ts = pd.Series(np.random.randn(len(prng)),prng)

ts.index = (prng.asfreq('M','e')+1).asfreq('H','s')+9

print(ts.head())1999-03-01 09:00 0.130733

1999-06-01 09:00 -2.153159

1999-09-01 09:00 0.520082

1999-12-01 09:00 -0.965103

2000-03-01 09:00 -0.392060

Freq: H, dtype: float6412.类别型

将grade的原生数据转换为类别型数据;

df = pd.DataFrame({"id":[1,2,3,4,5,6],

"raw_grade":['a','b','b','a','a','e']

})

df["grade"] = df["raw_grade"].astype("category")

print(df["grade"])0 a

1 b

2 b

3 a

4 a

5 e

Name: grade, dtype: category

Categories (3, object): ['a', 'b', 'e']用有含义的名字重命名不同类型;给categories赋值,可以改变类别标签。赋值的时候是按照顺序进行对应的;改变类别标签集合,操作过后数据的标签不变,但是标签的集合改变;

df["grade"].cat.categories = ["very good","good","very bad"]

df["grade"] = df["grade"].cat.set_categories(["very bad","bad","medium","good","very good"])

print(df["grade"])0 very good

1 good

2 good

3 very good

4 very good

5 very bad

Name: grade, dtype: category

Categories (5, object): ['very bad', 'bad', 'medium', 'good', 'very good']

按照生成类别时的顺序排序(并非按照词汇排序);

print(df.sort_values(by = 'grade')) id raw_grade grade

5 6 e very bad

1 2 b good

2 3 b good

0 1 a very good

3 4 a very good

4 5 a very good按照类别分组时,及时某个类别为空也会显示出来;

print(df.groupby("grade").size())grade

very bad 1

bad 0

medium 0

good 2

very good 3

dtype: int64

13.可视化

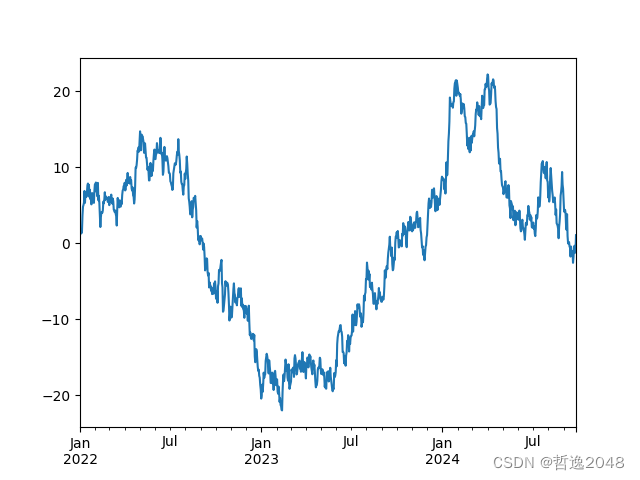

ts = pd.Series(np.random.randn(1000),

index = pd.date_range('1/1/2022',periods = 1000))

ts = ts.cumsum() #各行的累加值

ts.plot()

plt.show()AxesSubplot(0.125,0.11;0.775x0.77)

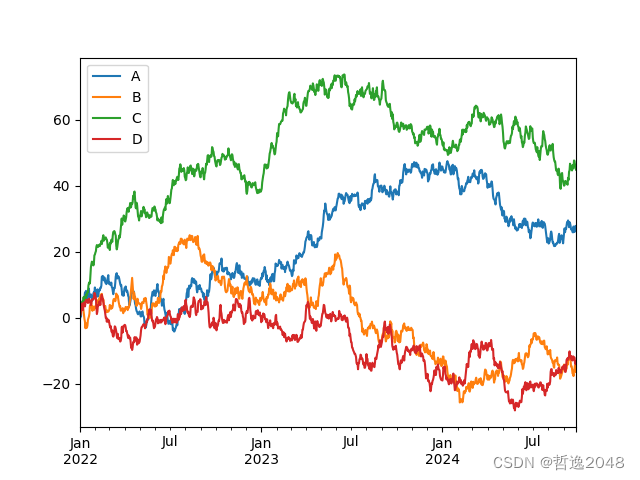

DataFrame()中的plot()函数也可以快速的绘制所有带标签的列;

df = pd.DataFrame(np.random.randn(1000,4),index = ts.index,

columns = ['A','B','C','D'])

df = df.cumsum()

print(plt.figure())

print(df.plot())

print(plt.legend(loc = 'best'))

plt.show()

14.数据输入/输出

CSV

将数据写入csv文件;

df.to_csv("foo.csv")读取csv文件数据;

print(pd.read_csv("foo.csv")) Unnamed: 0 A B C D

0 2022-01-01 -0.376720 -1.050965 0.676105 -0.155736

1 2022-01-02 -0.094961 -1.546079 -0.556264 -0.856252

2 2022-01-03 -0.524001 -1.987073 -1.254280 0.691564

3 2022-01-04 -3.139603 0.256445 -1.671734 -0.196728

4 2022-01-05 -2.477721 0.381823 -2.623101 -0.331353

.. ... ... ... ... ...

995 2024-09-22 21.916246 27.080269 -5.498463 45.427545

996 2024-09-23 21.987481 26.228331 -5.950425 46.577939

997 2024-09-24 22.121124 25.001296 -5.843399 45.658839

998 2024-09-25 22.269591 24.761772 -4.648639 44.929457

999 2024-09-26 22.108488 25.141986 -5.192166 44.995610

[1000 rows x 5 columns]

HDF5

将数据写入HDF5 Store:

df.to_hdf('foo.h5','df')读取其中的数据;

print(pd.read_hdf("foo.h5","df")) A B C D

2022-01-01 -0.434350 -0.332814 0.892750 1.157850

2022-01-02 -0.840929 -0.683873 -0.275972 2.419774

2022-01-03 -0.225382 -1.585506 0.196728 3.477412

2022-01-04 -0.830408 -1.598484 -0.474299 5.660145

2022-01-05 -0.250991 -1.412912 -0.876879 6.528144

... ... ... ... ...

2024-09-22 57.132175 -0.205451 -46.591026 -9.453061

2024-09-23 58.581851 -0.007550 -47.742120 -8.684937

2024-09-24 57.213156 0.110778 -47.451625 -6.414811

2024-09-25 58.161486 -0.709191 -46.552347 -6.542223

2024-09-26 56.969844 0.304673 -46.967793 -7.499496

[1000 rows x 4 columns]

Excel

写入Excel文件;

df.to_excel('foo.xlsx',sheet_name='Sheet1')读取文件信息;

print(pd.read_excel('foo.xlsx','Sheet1',index_col = None,na_values= ['NA'])) Unnamed: 0 A B C D

0 2022-01-01 -1.417080 -0.361437 -0.606728 0.638914

1 2022-01-02 -0.793184 -0.432722 -0.834653 0.419487

2 2022-01-03 0.030355 -0.136183 -0.702133 -0.615193

3 2022-01-04 -1.417608 0.682946 -1.466145 0.005104

4 2022-01-05 -0.608892 0.604069 -0.862176 0.817751

.. ... ... ... ... ...

995 2024-09-22 22.628256 25.999521 -48.243060 31.111053

996 2024-09-23 22.017860 26.358772 -47.372658 30.697530

997 2024-09-24 22.003023 24.433845 -46.477812 32.226947

998 2024-09-25 21.668497 27.670671 -45.666538 33.775446

999 2024-09-26 21.691661 27.582134 -43.666923 33.974122

[1000 rows x 5 columns]15.各种坑

在执行某些操作时,会触发异常;比如下面这种情况:

if pd.Series([False,True,False]):

print("I was true")会出现错误提示如下:

ValueError: The truth value of a Series is ambiguous. Use a.empty, a.bool(), a.item(), a.any() or a.all().

2804

2804

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?