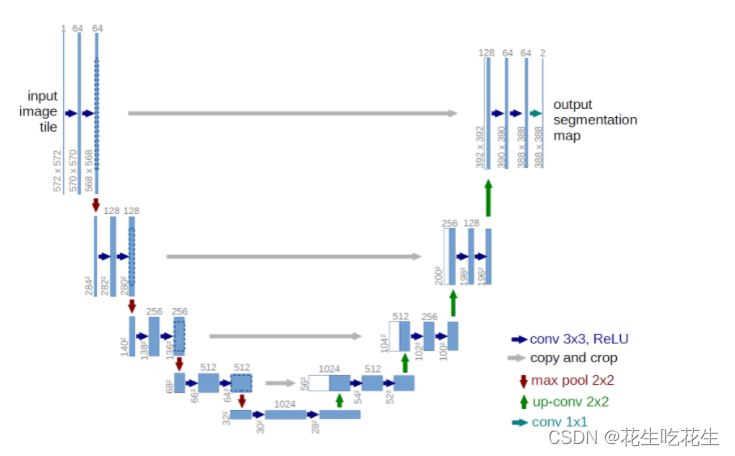

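UNet is a convolutional neural network architecture for image segmentation, used in fields such as medical image segmentation and natural image segmentation. Below I walk through implementing UNet image segmentation with PyTorch.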

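1. Prepare the data

First, you need an image segmentation dataset made of original images and their corresponding segmentation masks. You can use any dataset you are familiar with, such as one from Kaggle, or one you build yourself.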
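Here is a minimal sketch of a PyTorch `Dataset` for such image/mask pairs. It assumes the images and masks have already been loaded into NumPy arrays (for example with PIL). The class name `SegmentationDataset` and the array layout are assumptions, not part of the original post. Each pair is converted into the tensors the rest of this tutorial expects:

- a float image of shape (3, H, W) with values in [0, 1];
- a float 0/1 mask of shape (1, H, W).

```python
import numpy as np
import torch
from torch.utils.data import Dataset


class SegmentationDataset(Dataset):
    """Hypothetical dataset of (image, mask) pairs held in memory.

    images: list of uint8 arrays shaped (H, W, 3)
    masks:  list of arrays shaped (H, W); any nonzero pixel counts as foreground
    """

    def __init__(self, images, masks):
        assert len(images) == len(masks), "each image needs exactly one mask"
        self.images = images
        self.masks = masks

    def __len__(self):
        return len(self.images)

    def __getitem__(self, idx):
        # HWC uint8 -> CHW float32 in [0, 1], the layout nn.Conv2d expects
        image = torch.from_numpy(self.images[idx]).permute(2, 0, 1).float() / 255.0
        # Binary mask -> float32 of shape (1, H, W), matching a 1-channel logit map
        mask = torch.from_numpy((self.masks[idx] > 0).astype(np.float32)).unsqueeze(0)
        return image, mask
```

In practice you would usually read files lazily inside `__getitem__` rather than keep every array in memory. Holding the arrays in memory here just keeps the sketch self-contained.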

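2. Define the UNet model

Next, define the UNet model. UNet has two parts:

- an encoder, which extracts image features;
- a decoder, which maps those features back to a segmentation mask. Along the way it concatenates encoder features of the same resolution (skip connections).

Below is a simple UNet implementation: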

```python
import torch
import torch.nn as nn


class UNet(nn.Module):
    # in_channels=3 for RGB input; out_channels=1 gives one logit per pixel (binary segmentation)
    def __init__(self, in_channels=3, out_channels=1):
        super(UNet, self).__init__()

        # Encoder: each stage is two 3x3 conv -> BatchNorm -> ReLU blocks,
        # followed by 2x2 max pooling that halves height and width
        self.conv1 = nn.Conv2d(in_channels, 64, 3, padding=1)
        self.bn1 = nn.BatchNorm2d(64)
        self.relu1 = nn.ReLU(inplace=True)
        self.conv2 = nn.Conv2d(64, 64, 3, padding=1)
        self.bn2 = nn.BatchNorm2d(64)
        self.relu2 = nn.ReLU(inplace=True)
        self.pool1 = nn.MaxPool2d(2, 2)

        self.conv3 = nn.Conv2d(64, 128, 3, padding=1)
        self.bn3 = nn.BatchNorm2d(128)
        self.relu3 = nn.ReLU(inplace=True)
        self.conv4 = nn.Conv2d(128, 128, 3, padding=1)
        self.bn4 = nn.BatchNorm2d(128)
        self.relu4 = nn.ReLU(inplace=True)
        self.pool2 = nn.MaxPool2d(2, 2)

        self.conv5 = nn.Conv2d(128, 256, 3, padding=1)
        self.bn5 = nn.BatchNorm2d(256)
        self.relu5 = nn.ReLU(inplace=True)
        self.conv6 = nn.Conv2d(256, 256, 3, padding=1)
        self.bn6 = nn.BatchNorm2d(256)
        self.relu6 = nn.ReLU(inplace=True)
        self.pool3 = nn.MaxPool2d(2, 2)

        self.conv7 = nn.Conv2d(256, 512, 3, padding=1)
        self.bn7 = nn.BatchNorm2d(512)
        self.relu7 = nn.ReLU(inplace=True)
        self.conv8 = nn.Conv2d(512, 512, 3, padding=1)
        self.bn8 = nn.BatchNorm2d(512)
        self.relu8 = nn.ReLU(inplace=True)
        self.pool4 = nn.MaxPool2d(2, 2)

        # Bottleneck: 1024 channels at 1/16 of the input resolution
        self.conv9 = nn.Conv2d(512, 1024, 3, padding=1)
        self.bn9 = nn.BatchNorm2d(1024)
        self.relu9 = nn.ReLU(inplace=True)
        self.conv10 = nn.Conv2d(1024, 1024, 3, padding=1)
        self.bn10 = nn.BatchNorm2d(1024)
        self.relu10 = nn.ReLU(inplace=True)

        # Decoder: each 2x2 transposed conv doubles H and W and halves the channels;
        # concatenating the skip features doubles them again (e.g. 512 + 512 = 1024 into conv11)
        self.upconv1 = nn.ConvTranspose2d(1024, 512, 2, stride=2)
        self.conv11 = nn.Conv2d(1024, 512, 3, padding=1)
        self.bn11 = nn.BatchNorm2d(512)
        self.relu11 = nn.ReLU(inplace=True)
        self.conv12 = nn.Conv2d(512, 512, 3, padding=1)
        self.bn12 = nn.BatchNorm2d(512)
        self.relu12 = nn.ReLU(inplace=True)

        self.upconv2 = nn.ConvTranspose2d(512, 256, 2, stride=2)
        self.conv13 = nn.Conv2d(512, 256, 3, padding=1)
        self.bn13 = nn.BatchNorm2d(256)
        self.relu13 = nn.ReLU(inplace=True)
        self.conv14 = nn.Conv2d(256, 256, 3, padding=1)
        self.bn14 = nn.BatchNorm2d(256)
        self.relu14 = nn.ReLU(inplace=True)

        self.upconv3 = nn.ConvTranspose2d(256, 128, 2, stride=2)
        self.conv15 = nn.Conv2d(256, 128, 3, padding=1)
        self.bn15 = nn.BatchNorm2d(128)
        self.relu15 = nn.ReLU(inplace=True)
        self.conv16 = nn.Conv2d(128, 128, 3, padding=1)
        self.bn16 = nn.BatchNorm2d(128)
        self.relu16 = nn.ReLU(inplace=True)

        self.upconv4 = nn.ConvTranspose2d(128, 64, 2, stride=2)
        self.conv17 = nn.Conv2d(128, 64, 3, padding=1)
        self.bn17 = nn.BatchNorm2d(64)
        self.relu17 = nn.ReLU(inplace=True)
        self.conv18 = nn.Conv2d(64, 64, 3, padding=1)
        self.bn18 = nn.BatchNorm2d(64)
        self.relu18 = nn.ReLU(inplace=True)

        # 1x1 conv maps the 64 feature channels to the output logits
        self.conv19 = nn.Conv2d(64, out_channels, 1)

    def forward(self, x):
        # H and W must be divisible by 16 (four poolings), otherwise torch.cat
        # below fails because the skip and upsampled feature maps differ in size

        # Encoder
        x1 = self.relu1(self.bn1(self.conv1(x)))
        x2 = self.relu2(self.bn2(self.conv2(x1)))
        x3 = self.relu3(self.bn3(self.conv3(self.pool1(x2))))
        x4 = self.relu4(self.bn4(self.conv4(x3)))
        x5 = self.relu5(self.bn5(self.conv5(self.pool2(x4))))
        x6 = self.relu6(self.bn6(self.conv6(x5)))
        x7 = self.relu7(self.bn7(self.conv7(self.pool3(x6))))
        x8 = self.relu8(self.bn8(self.conv8(x7)))
        x9 = self.relu9(self.bn9(self.conv9(self.pool4(x8))))
        x10 = self.relu10(self.bn10(self.conv10(x9)))

        # Decoder: concatenate encoder (skip) features with upsampled features along channels (dim 1)
        x = self.relu11(self.bn11(self.conv11(torch.cat([x8, self.upconv1(x10)], 1))))
        x = self.relu12(self.bn12(self.conv12(x)))
        x = self.relu13(self.bn13(self.conv13(torch.cat([x6, self.upconv2(x)], 1))))
        x = self.relu14(self.bn14(self.conv14(x)))
        x = self.relu15(self.bn15(self.conv15(torch.cat([x4, self.upconv3(x)], 1))))
        x = self.relu16(self.bn16(self.conv16(x)))
        x = self.relu17(self.bn17(self.conv17(torch.cat([x2, self.upconv4(x)], 1))))
        x = self.relu18(self.bn18(self.conv18(x)))

        # Raw logits (no sigmoid): BCEWithLogitsLoss applies the sigmoid itself
        x = self.conv19(x)
        return x
```
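The layer definitions above repeat one pattern: two conv/BN/ReLU pairs per stage. Below is a more compact sketch that factors the pattern into a helper module and builds the stages in loops.

- `DoubleConv` and `CompactUNet` are hypothetical names, not from the original post.
- With the default `widths` the architecture is assumed to match the explicit model above: four poolings, channels 64 up to 1024, and skip features concatenated before the upsampled ones.

```python
import torch
import torch.nn as nn


class DoubleConv(nn.Module):
    """(3x3 conv -> BatchNorm -> ReLU) twice; padding=1 keeps H and W unchanged."""

    def __init__(self, in_ch, out_ch):
        super().__init__()
        self.block = nn.Sequential(
            nn.Conv2d(in_ch, out_ch, 3, padding=1),
            nn.BatchNorm2d(out_ch),
            nn.ReLU(inplace=True),
            nn.Conv2d(out_ch, out_ch, 3, padding=1),
            nn.BatchNorm2d(out_ch),
            nn.ReLU(inplace=True),
        )

    def forward(self, x):
        return self.block(x)


class CompactUNet(nn.Module):
    # Assumes each width is twice the previous one; the last width is the bottleneck
    def __init__(self, in_channels=3, out_channels=1, widths=(64, 128, 256, 512, 1024)):
        super().__init__()
        self.downs = nn.ModuleList()
        prev = in_channels
        for w in widths:
            self.downs.append(DoubleConv(prev, w))
            prev = w
        self.pool = nn.MaxPool2d(2, 2)
        # Decoder stages, deepest first: transposed conv halves channels, DoubleConv fuses the skip
        self.ups = nn.ModuleList()
        self.up_convs = nn.ModuleList()
        for w in reversed(widths[:-1]):
            self.ups.append(nn.ConvTranspose2d(w * 2, w, 2, stride=2))
            self.up_convs.append(DoubleConv(w * 2, w))
        self.head = nn.Conv2d(widths[0], out_channels, 1)

    def forward(self, x):
        skips = []
        for i, down in enumerate(self.downs):
            x = down(x)
            if i < len(self.downs) - 1:  # no pooling after the bottleneck
                skips.append(x)
                x = self.pool(x)
        for up, conv, skip in zip(self.ups, self.up_convs, reversed(skips)):
            # Same concatenation order as the explicit model: [skip, upsampled]
            x = conv(torch.cat([skip, up(x)], dim=1))
        return self.head(x)  # raw logits
```

A quick shape check such as `CompactUNet().eval()(torch.randn(1, 3, 64, 64)).shape` should give `(1, 1, 64, 64)`. Passing a smaller `widths` tuple gives a lighter model for experiments.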

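In this model the encoder has four downsampling stages. Each stage consists of two 3×3 convolutions (each followed by BatchNorm and ReLU) and a 2×2 max-pooling layer. A bottleneck of two 1024-channel convolutions follows.

The decoder has four matching upsampling stages. Each stage consists of a 2×2 transposed convolution, a concatenation with the encoder features of the same resolution, and two 3×3 convolutions. A final 1×1 convolution produces the single-channel output.

Because the input is pooled four times, its height and width must be divisible by 16.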
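The four 2×2 poolings halve the resolution four times. Inputs whose sides are not multiples of 16 therefore produce skip and upsampled feature maps of different sizes, and `torch.cat` fails. One workaround, an assumption rather than part of the original post, is to zero-pad the input on the bottom/right and crop the prediction back afterwards. `pad_to_multiple` and `crop_to` are hypothetical helper names.

```python
import torch
import torch.nn.functional as F


def pad_to_multiple(x, multiple=16):
    """Zero-pad an (N, C, H, W) tensor on the bottom/right so H and W are multiples of `multiple`.

    Returns the padded tensor and the original (H, W) so predictions can be cropped back.
    """
    h, w = x.shape[-2:]
    pad_h = (multiple - h % multiple) % multiple
    pad_w = (multiple - w % multiple) % multiple
    # F.pad lists the last dimension first: (left, right, top, bottom)
    return F.pad(x, (0, pad_w, 0, pad_h)), (h, w)


def crop_to(x, size):
    """Crop an (N, C, H, W) tensor back to the original spatial size."""
    h, w = size
    return x[..., :h, :w]
```

Usage would look like `padded, size = pad_to_multiple(images)` followed by `logits = crop_to(model(padded), size)`. Resizing every image to a fixed size such as 256×256 is an equally common alternative.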

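3. Define the loss function and optimizer

Next, define the loss function and the optimizer. This model outputs a single channel, i.e. binary (foreground/background) segmentation. The usual loss for that case is binary cross-entropy. `nn.BCEWithLogitsLoss` combines a sigmoid with binary cross-entropy in one numerically stable step, which is why the model returns raw logits. For multi-class segmentation you would instead output one channel per class and use `nn.CrossEntropyLoss`. For the optimizer, Adam, SGD, and others all work.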

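```python
import torch.nn as nn
import torch.optim as optim

model = UNet()  # the model defined above

# Sigmoid + binary cross-entropy in one op; expects raw logits and float 0/1 targets
criterion = nn.BCEWithLogitsLoss()
optimizer = optim.Adam(model.parameters(), lr=0.001)
```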
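To make the loss choice concrete, below is a small check on random tensors (not real data). It confirms that `nn.BCEWithLogitsLoss` equals a sigmoid followed by the binary cross-entropy formula `-(y*log(p) + (1-y)*log(1-p))` averaged over all pixels. It also shows the target format `nn.CrossEntropyLoss` expects if you switch to multi-class output. The tensor shapes and the three-class example are illustrative assumptions.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# Binary case: logits (N, 1, H, W), targets float 0/1 with the same shape
logits = torch.randn(2, 1, 4, 4)
target = torch.randint(0, 2, (2, 1, 4, 4)).float()

bce = nn.BCEWithLogitsLoss()(logits, target)
p = torch.sigmoid(logits)
manual = -(target * torch.log(p) + (1 - target) * torch.log(1 - p)).mean()
print(torch.allclose(bce, manual, atol=1e-5))

# Multi-class alternative: C output channels, integer class-index targets of shape (N, H, W)
num_classes = 3
mc_logits = torch.randn(2, num_classes, 4, 4)
mc_target = torch.randint(0, num_classes, (2, 4, 4))  # int64 class indices
ce = nn.CrossEntropyLoss()(mc_logits, mc_target)
print(ce.item())
```

The practical consequence is that masks fed to `BCEWithLogitsLoss` must be float tensors shaped like the model output. Masks fed to `CrossEntropyLoss` must be integer class maps without a channel dimension.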

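4. Train the model

Finally, train the model:

1. Split the dataset into a training set and a validation set.
2. Load each set with PyTorch's `DataLoader`.
3. In every epoch, train on the training set and then evaluate on the validation set.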
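One way to do the split is with `torch.utils.data.random_split`, sketched below. The random `TensorDataset` is only a stand-in so the snippet runs on its own; replace it with your own dataset. The 80/20 ratio and the seed are arbitrary example choices.

```python
import torch
from torch.utils.data import TensorDataset, random_split

# Stand-in dataset: 10 random RGB images with binary masks (replace with your own Dataset)
images = torch.rand(10, 3, 64, 64)
masks = (torch.rand(10, 1, 64, 64) > 0.5).float()
full_dataset = TensorDataset(images, masks)

# Hold out 20% for validation; a fixed generator makes the split reproducible
n_val = int(0.2 * len(full_dataset))
n_train = len(full_dataset) - n_val
train_dataset, val_dataset = random_split(
    full_dataset, [n_train, n_val], generator=torch.Generator().manual_seed(42)
)
```

Integer lengths are used rather than fractions because they work across PyTorch versions. The resulting `train_dataset` and `val_dataset` are the names the training loop below expects.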

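```python
import torch
from torch.utils.data import DataLoader

# model, criterion and optimizer come from the previous steps
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
model = model.to(device)
num_epochs = 20  # example value; tune for your dataset

# train_dataset / val_dataset: your Dataset objects returning (image, mask) pairs
train_loader = DataLoader(train_dataset, batch_size=4, shuffle=True)
val_loader = DataLoader(val_dataset, batch_size=4, shuffle=False)  # evaluation needs no shuffling

for epoch in range(num_epochs):
    train_loss = 0.0
    val_loss = 0.0

    # Train
    model.train()
    for images, masks in train_loader:
        images = images.to(device)
        # BCEWithLogitsLoss needs float targets shaped like the outputs: (N, 1, H, W)
        masks = masks.to(device).float()
        optimizer.zero_grad()
        outputs = model(images)
        loss = criterion(outputs, masks)
        loss.backward()
        optimizer.step()
        train_loss += loss.item()

    # Validate (no gradients, BatchNorm uses running statistics)
    model.eval()
    with torch.no_grad():
        for images, masks in val_loader:
            images = images.to(device)
            masks = masks.to(device).float()
            outputs = model(images)
            loss = criterion(outputs, masks)
            val_loss += loss.item()

    # Average loss per batch
    train_loss /= len(train_loader)
    val_loss /= len(val_loader)
    print('Epoch: {}, Train Loss: {:.4f}, Val Loss: {:.4f}'.format(epoch + 1, train_loss, val_loss))
```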

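During training, the average training and validation losses are printed after every epoch. Once training is finished, you can save the model and evaluate it on a test set.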
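Below is a minimal sketch of the save-and-test step under a few assumptions:

- Weights are stored as a `state_dict` (the approach the PyTorch docs recommend). The file name `unet.pth` is hypothetical.
- Logits become a binary mask via sigmoid and a 0.5 threshold.
- Quality is measured with the Dice coefficient, a common segmentation metric that the original post does not specify.
- A 1×1 convolution stands in for the trained UNet so the snippet runs on its own. Swap in your UNet instance.

```python
import os
import tempfile

import torch
import torch.nn as nn

# Stand-in for the trained UNet so the sketch runs on its own; use your UNet instance instead
model = nn.Conv2d(3, 1, kernel_size=1)

# Save only the learned weights, then restore them into a freshly constructed model
path = os.path.join(tempfile.mkdtemp(), "unet.pth")  # hypothetical file name
torch.save(model.state_dict(), path)
restored = nn.Conv2d(3, 1, kernel_size=1)
restored.load_state_dict(torch.load(path, map_location="cpu"))
restored.eval()


@torch.no_grad()
def predict_mask(net, images, threshold=0.5):
    """Turn logits into a binary {0, 1} mask: sigmoid, then threshold."""
    net.eval()
    return (torch.sigmoid(net(images)) > threshold).float()


def dice_score(pred, target, eps=1e-7):
    """Dice coefficient 2|A and B| / (|A| + |B|) over a batch of binary masks."""
    inter = (pred * target).sum()
    return (2 * inter + eps) / (pred.sum() + target.sum() + eps)


images = torch.rand(2, 3, 32, 32)
pred = predict_mask(restored, images)
```

On a real test set you would loop over a `DataLoader` and compute `dice_score(predict_mask(model, images), masks)` for each batch. A Dice score of 1.0 means the predicted mask matches the ground truth exactly.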

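That is the basic workflow for UNet image segmentation with PyTorch. You can of course adjust the model architecture, loss function, optimizer, and so on to suit your needs.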
