先自我介绍一下,小编浙江大学毕业,去过华为、字节跳动等大厂,目前在阿里

深知大多数程序员,想要提升技能,往往是自己摸索成长,但自己不成体系的自学效果低效又漫长,而且极易碰到天花板技术停滞不前!

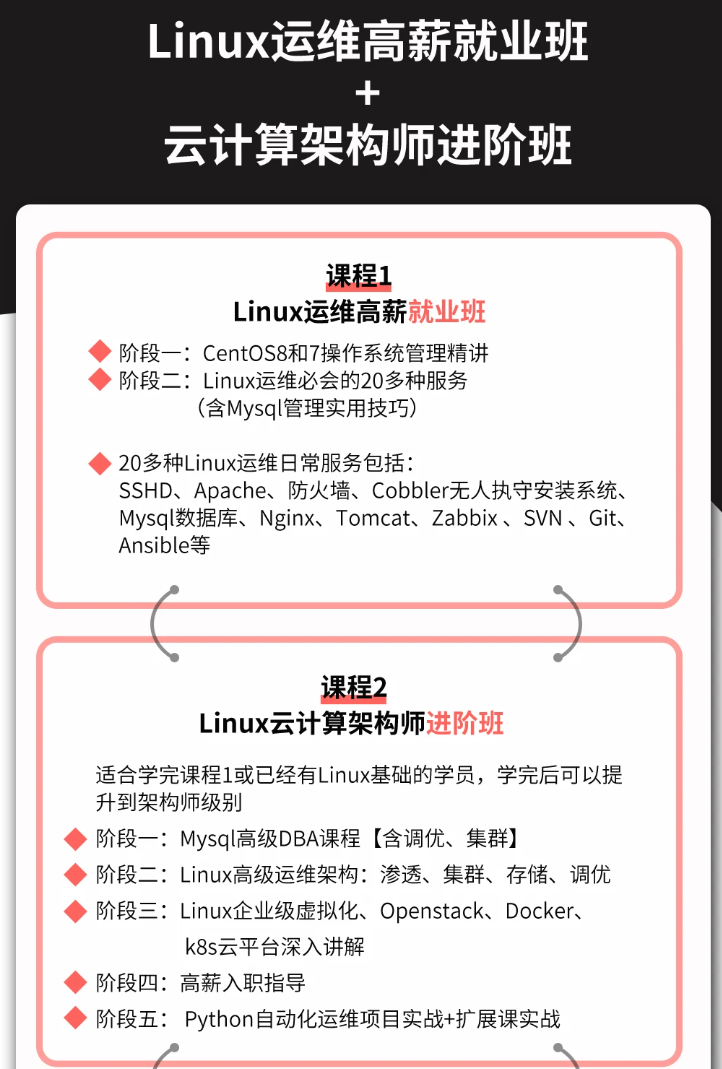

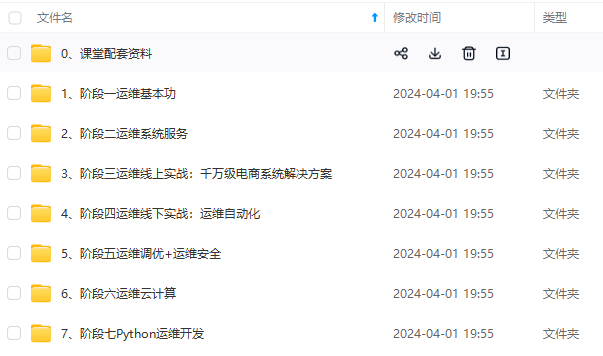

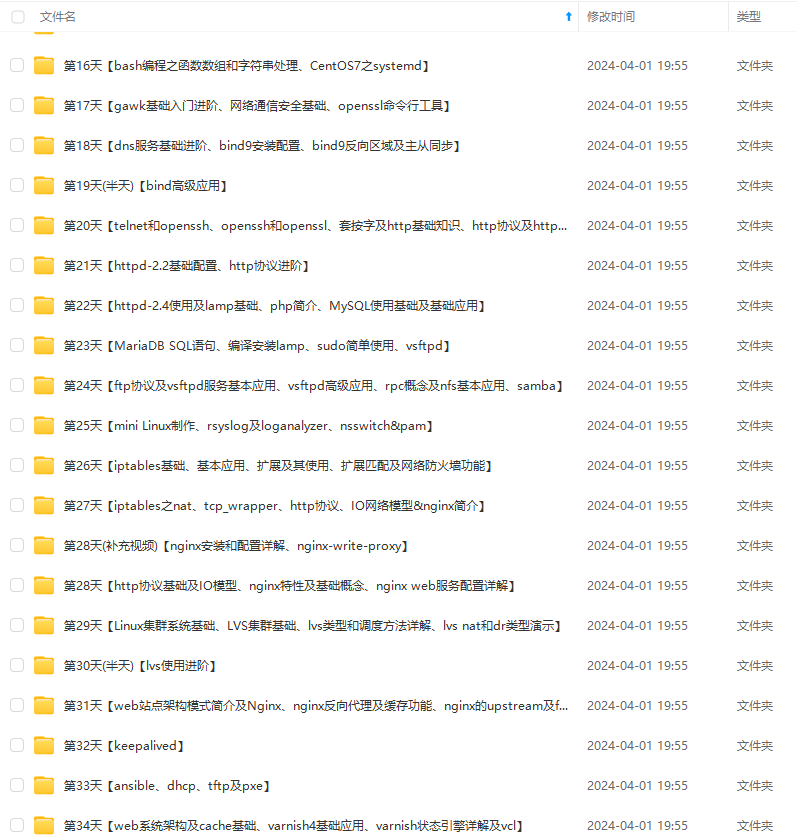

因此收集整理了一份《2024年最新Linux运维全套学习资料》,初衷也很简单,就是希望能够帮助到想自学提升又不知道该从何学起的朋友。

既有适合小白学习的零基础资料,也有适合3年以上经验的小伙伴深入学习提升的进阶课程,涵盖了95%以上运维知识点,真正体系化!

由于文件比较多,这里只是将部分目录截图出来,全套包含大厂面经、学习笔记、源码讲义、实战项目、大纲路线、讲解视频,并且后续会持续更新

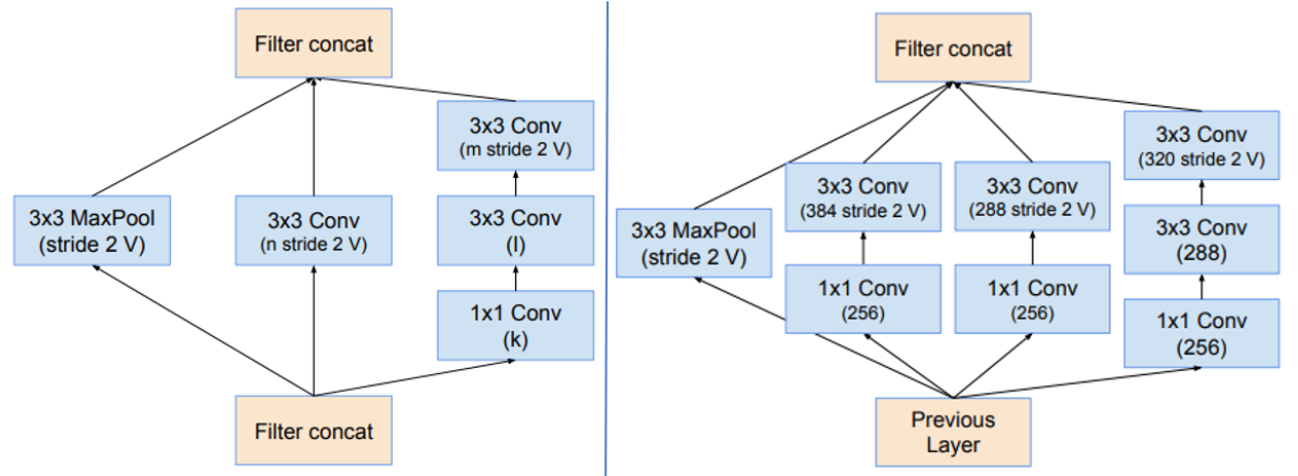

Reduction-A and Reduction-B blocks of the Inception-ResNet-v2 network

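The computational cost of Inception-ResNet-v1 is close to that of Inception v3, and the cost of Inception-ResNet-v2 is close to that of Inception v4. The two networks use different stems. Both contain modules A, B, and C plus reduction blocks, and those blocks differ only in their hyperparameter settings.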

③ Code implementation:

(1) Inception-ResNet-v1:

    import torch
    import torch.nn as nn

    # Define the 3x3 convolution block first so it can be reused
    class conv3x3(nn.Module):
        def __init__(self, in_planes, out_channels, stride=1, padding=0):
            super(conv3x3, self).__init__()
            self.conv3x3 = nn.Sequential(
                nn.Conv2d(in_planes, out_channels, kernel_size=3, stride=stride, padding=padding),  # 3x3 kernel
                nn.BatchNorm2d(out_channels),  # batch normalization, stabilizes training
                nn.ReLU()  # activation function
            )

        def forward(self, input):
            return self.conv3x3(input)

    # 1x1 convolution block with the same Conv -> BN -> ReLU layout
    class conv1x1(nn.Module):
        def __init__(self, in_planes, out_channels, stride=1, padding=0):
            super(conv1x1, self).__init__()
            self.conv1x1 = nn.Sequential(
                nn.Conv2d(in_planes, out_channels, kernel_size=1, stride=stride, padding=padding),  # 1x1 kernel
                nn.BatchNorm2d(out_channels),
                nn.ReLU()
            )

        def forward(self, input):
            return self.conv1x1(input)

Stem module: input 299×299×3, output 35×35×256.

    class StemV1(nn.Module):
        def __init__(self, in_planes):
            super(StemV1, self).__init__()
            # Trailing comments give the spatial size for a 299x299 input
            self.conv1 = conv3x3(in_planes=in_planes, out_channels=32, stride=2, padding=0)  # 299 -> 149
            self.conv2 = conv3x3(in_planes=32, out_channels=32, stride=1, padding=0)  # 149 -> 147
            self.conv3 = conv3x3(in_planes=32, out_channels=64, stride=1, padding=1)  # 147
            self.maxpool = nn.MaxPool2d(kernel_size=3, stride=2, padding=0)  # 147 -> 73
            self.conv4 = conv3x3(in_planes=64, out_channels=64, stride=1, padding=1)  # 73
            self.conv5 = conv1x1(in_planes=64, out_channels=80, stride=1, padding=0)  # 73
            self.conv6 = conv3x3(in_planes=80, out_channels=192, stride=1, padding=0)  # 73 -> 71
            self.conv7 = conv3x3(in_planes=192, out_channels=256, stride=2, padding=0)  # 71 -> 35

        def forward(self, x):
            x = self.conv1(x)
            x = self.conv2(x)
            x = self.conv3(x)
            x = self.maxpool(x)
            x = self.conv4(x)
            x = self.conv5(x)
            x = self.conv6(x)
            x = self.conv7(x)
            return x
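The 299 to 35 reduction in the stem can be checked by hand with the standard output-size formula for convolution and pooling layers. The sketch below is a plain-Python illustration; `conv_out`, `trace_sizes`, and `STEM_V1_LAYERS` are hypothetical names introduced here, and the (kernel, stride, padding) triples are copied from the `StemV1` layers above.

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Output size of a convolution or pooling layer along one spatial axis."""
    return (size + 2 * padding - kernel) // stride + 1


# (kernel, stride, padding) for each StemV1 layer, in forward order
STEM_V1_LAYERS = [
    (3, 2, 0),  # conv1
    (3, 1, 0),  # conv2
    (3, 1, 1),  # conv3
    (3, 2, 0),  # maxpool
    (3, 1, 1),  # conv4
    (1, 1, 0),  # conv5
    (3, 1, 0),  # conv6
    (3, 2, 0),  # conv7
]


def trace_sizes(size, layers):
    """Return the spatial size after every layer, starting from `size`."""
    sizes = [size]
    for kernel, stride, padding in layers:
        sizes.append(conv_out(sizes[-1], kernel, stride, padding))
    return sizes


stem_v1_sizes = trace_sizes(299, STEM_V1_LAYERS)
print(stem_v1_sizes)  # [299, 149, 147, 147, 73, 73, 73, 71, 35]
```

Only the two stride-2 convolutions, the max pooling layer, and the unpadded 3×3 convolutions change the spatial size; the padded 3×3 and the 1×1 convolutions preserve it.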

IR-A module ×5: input 35×35×256, output 35×35×256.

    class Inception_ResNet_A(nn.Module):
        def __init__(self, input):
            super(Inception_ResNet_A, self).__init__()
            # Each branch owns its layers; reusing one module across branches
            # would share weights and make the branch outputs identical.
            self.branch1 = conv1x1(in_planes=input, out_channels=32, stride=1, padding=0)
            self.branch2 = nn.Sequential(
                conv1x1(in_planes=input, out_channels=32, stride=1, padding=0),
                conv3x3(in_planes=32, out_channels=32, stride=1, padding=1),
            )
            self.branch3 = nn.Sequential(
                conv1x1(in_planes=input, out_channels=32, stride=1, padding=0),
                conv3x3(in_planes=32, out_channels=32, stride=1, padding=1),
                conv3x3(in_planes=32, out_channels=32, stride=1, padding=1),
            )
            # Linear 1x1 conv (no activation) maps the 96 concatenated channels back to 256
            self.line = nn.Conv2d(96, 256, 1, stride=1, padding=0, bias=True)
            self.relu = nn.ReLU()

        def forward(self, x):
            cat = torch.cat([self.branch1(x), self.branch2(x), self.branch3(x)], dim=1)  # 32 + 32 + 32 = 96 channels
            out = x + self.line(cat)  # residual connection
            out = self.relu(out)
            return out

Reduction-A module: input 35×35×256, output 17×17×896.

    class Reduction_A(nn.Module):
        def __init__(self, input, n=384, k=192, l=224, m=256):
            super(Reduction_A, self).__init__()
            # Branch 1: 3x3 max pooling, stride 2 (35 -> 17)
            self.maxpool = nn.MaxPool2d(kernel_size=3, stride=2, padding=0)
            # Branch 2: 3x3 conv, stride 2 (35 -> 17), n output channels
            self.conv1 = conv3x3(in_planes=input, out_channels=n, stride=2, padding=0)
            # Branch 3: 1x1 conv, size-preserving 3x3 conv, then 3x3 conv with stride 2 (35 -> 17)
            self.conv2 = conv1x1(in_planes=input, out_channels=k, padding=0)
            self.conv3 = conv3x3(in_planes=k, out_channels=l, padding=1)
            self.conv4 = conv3x3(in_planes=l, out_channels=m, stride=2, padding=0)

        def forward(self, x):
            c1 = self.maxpool(x)
            c2 = self.conv1(x)
            c3 = self.conv4(self.conv3(self.conv2(x)))
            # Concatenate along channels: input + n + m = 256 + 384 + 256 = 896
            cat = torch.cat([c1, c2, c3], dim=1)
            return cat
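The output shape of Reduction-A follows from two facts: every branch ends in an unpadded 3×3 layer with stride 2, and the branch outputs are concatenated along the channel axis. A minimal sketch of that arithmetic, using the `n` and `m` defaults from the class above; `reduction_a_output` is a hypothetical helper, not part of the original code.

```python
def reduction_a_output(size, in_channels, n, m):
    """Shape (spatial size, channels) produced by a Reduction-A block.

    Assumes every branch ends in an unpadded 3x3 layer with stride 2.
    """
    out_size = (size - 3) // 2 + 1
    # Max pooling keeps in_channels; the conv branches contribute n and m
    out_channels = in_channels + n + m
    return out_size, out_channels


v1_shape = reduction_a_output(35, 256, n=384, m=256)
print(v1_shape)  # (17, 896)
```

The intermediate widths `k` and `l` do not appear in the result, because only the last layer of each branch feeds the concatenation.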

IR-B module ×10: input 17×17×896, output 17×17×896.

    class Inception_ResNet_B(nn.Module):
        def __init__(self, input):
            super(Inception_ResNet_B, self).__init__()
            # Branch 1: a single 1x1 conv
            self.branch1 = conv1x1(in_planes=input, out_channels=128, stride=1, padding=0)
            # Branch 2: its own 1x1 conv, then a 7x7 receptive field factorized into 1x7 and 7x1
            self.branch2 = nn.Sequential(
                conv1x1(in_planes=input, out_channels=128, stride=1, padding=0),
                nn.Conv2d(in_channels=128, out_channels=128, kernel_size=(1, 7), padding=(0, 3)),
                nn.ReLU(),
                nn.Conv2d(in_channels=128, out_channels=128, kernel_size=(7, 1), padding=(3, 0)),
                nn.ReLU(),
            )
            # Linear 1x1 conv (no activation) maps the 256 concatenated channels back to 896
            self.line = nn.Conv2d(256, 896, 1, stride=1, padding=0, bias=True)
            self.relu = nn.ReLU()

        def forward(self, x):
            cat = torch.cat([self.branch1(x), self.branch2(x)], dim=1)  # 128 + 128 = 256 channels
            out = x + self.line(cat)  # residual connection
            out = self.relu(out)
            return out
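The 1×7 and 7×1 pair in IR-B covers the same 7×7 receptive field as a single 7×7 convolution with far fewer weights. The sketch below counts parameters for the 128-channel layers used above; `conv_params` is a hypothetical helper, and the 7×7 layer is a comparison point only, since it does not appear in the network.

```python
def conv_params(in_channels, out_channels, kernel_h, kernel_w, bias=True):
    """Number of learnable parameters in a 2D convolution layer."""
    weights = in_channels * out_channels * kernel_h * kernel_w
    return weights + (out_channels if bias else 0)


# 1x7 followed by 7x1, as in Inception_ResNet_B
factorized = conv_params(128, 128, 1, 7) + conv_params(128, 128, 7, 1)
# A single 7x7 convolution with the same channel counts, for comparison
full = conv_params(128, 128, 7, 7)

print(factorized, full)  # 229632 802944
```

The factorized pair needs roughly 3.5 times fewer parameters than the full 7×7 kernel.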

Reduction-B module: input 17×17×896, output 8×8×1792.

    class Reduction_B(nn.Module):
        def __init__(self, input):
            super(Reduction_B, self).__init__()
            # Every branch ends in an unpadded stride-2 layer, so 17x17 becomes 8x8.
            # Padding the pooling or the 1x1 convs would give 9x9 instead.
            self.maxpool = nn.MaxPool2d(kernel_size=3, stride=2, padding=0)
            self.branch2 = nn.Sequential(
                conv1x1(in_planes=input, out_channels=256, padding=0),
                conv3x3(in_planes=256, out_channels=384, stride=2, padding=0),
            )
            self.branch3 = nn.Sequential(
                conv1x1(in_planes=input, out_channels=256, padding=0),
                conv3x3(in_planes=256, out_channels=256, stride=2, padding=0),
            )
            self.branch4 = nn.Sequential(
                conv1x1(in_planes=input, out_channels=256, padding=0),
                conv3x3(in_planes=256, out_channels=256, padding=1),
                conv3x3(in_planes=256, out_channels=256, stride=2, padding=0),
            )

        def forward(self, x):
            c1 = self.maxpool(x)
            c2 = self.branch2(x)
            c3 = self.branch3(x)
            c4 = self.branch4(x)
            # Concatenate along channels: 896 + 384 + 256 + 256 = 1792
            cat = torch.cat([c1, c2, c3, c4], dim=1)
            return cat

IR-C module ×5: input 8×8×1792, output 8×8×1792.

    class Inception_ResNet_C(nn.Module):
        def __init__(self, input):
            super(Inception_ResNet_C, self).__init__()
            # Branch 1: a single 1x1 conv
            self.branch1 = conv1x1(in_planes=input, out_channels=192, stride=1, padding=0)
            # Branch 2: its own 1x1 conv, then a 3x3 receptive field factorized into 1x3 and 3x1
            self.branch2 = nn.Sequential(
                conv1x1(in_planes=input, out_channels=192, stride=1, padding=0),
                nn.Conv2d(in_channels=192, out_channels=192, kernel_size=(1, 3), padding=(0, 1)),
                nn.ReLU(),
                nn.Conv2d(in_channels=192, out_channels=192, kernel_size=(3, 1), padding=(1, 0)),
                nn.ReLU(),
            )
            # Linear 1x1 conv (no activation) maps the 384 concatenated channels back to 1792
            self.line = nn.Conv2d(384, 1792, 1, stride=1, padding=0, bias=True)
            self.relu = nn.ReLU()

        def forward(self, x):
            cat = torch.cat([self.branch1(x), self.branch2(x)], dim=1)  # 192 + 192 = 384 channels
            out = x + self.line(cat)  # residual connection
            out = self.relu(out)
            return out

    class Inception_ResNet(nn.Module):
        def __init__(self, classes=2):
            super(Inception_ResNet, self).__init__()
            blocks = []
            blocks.append(StemV1(in_planes=3))
            for i in range(5):
                blocks.append(Inception_ResNet_A(input=256))
            blocks.append(Reduction_A(input=256))
            for i in range(10):
                blocks.append(Inception_ResNet_B(input=896))
            blocks.append(Reduction_B(input=896))
            for i in range(5):  # IR-C is repeated 5 times
                blocks.append(Inception_ResNet_C(input=1792))
            self.features = nn.Sequential(*blocks)
            # Global average pooling: reduce every feature map to 1x1 so that
            # the flattened vector has exactly 1792 features for the linear layer
            self.avepool = nn.AdaptiveAvgPool2d(1)
            self.dropout = nn.Dropout(p=0.2)
            self.linear = nn.Linear(1792, classes)

        def forward(self, x):
            x = self.features(x)
            x = self.avepool(x)
            x = self.dropout(x)
            x = x.view(x.size(0), -1)  # flatten to (batch, 1792)
            x = self.linear(x)
            return x
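The linear layer takes 1792 input features, so the pooling step has to reduce each 8×8 feature map to a single value before flattening. A minimal sketch of that arithmetic, assuming non-overlapping average pooling (stride equal to kernel size, which is the default for `nn.AvgPool2d`); `flattened_features` is a hypothetical helper.

```python
def flattened_features(channels, size, kernel):
    """Feature count after average pooling (stride == kernel) and flattening."""
    pooled = (size - kernel) // kernel + 1
    return channels * pooled * pooled


# Pooling over the whole 8x8 map leaves one value per channel
print(flattened_features(1792, 8, 8))  # 1792
# A smaller kernel leaves a 2x2 map per channel, too many features for nn.Linear(1792, classes)
print(flattened_features(1792, 8, 4))  # 7168
```

Any kernel smaller than the feature map leaves more than 1792 features, and the linear layer then fails with a shape mismatch.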

The code above is based on the CSDN blog post "基于PyTorch实现 Inception-ResNet-v1" (Implementing Inception-ResNet-v1 in PyTorch) by NAND_LU.

(2) Inception-ResNet-v2:

    import torch
    import torch.nn as nn
    import torch.nn.functional as F

    # The 3x3 and 1x1 convolution blocks are used throughout, so define them up front
    class conv3x3(nn.Module):
        def __init__(self, in_planes, out_channels, stride=1, padding=0):
            super(conv3x3, self).__init__()
            self.conv3x3 = nn.Sequential(
                nn.Conv2d(in_planes, out_channels, kernel_size=3, stride=stride, padding=padding),  # 3x3 kernel
                nn.BatchNorm2d(out_channels),  # batch normalization, stabilizes training
                nn.ReLU()  # activation function
            )

        def forward(self, input):
            return self.conv3x3(input)

    class conv1x1(nn.Module):
        def __init__(self, in_planes, out_channels, stride=1, padding=0):
            super(conv1x1, self).__init__()
            self.conv1x1 = nn.Sequential(
                nn.Conv2d(in_planes, out_channels, kernel_size=1, stride=stride, padding=padding),  # 1x1 kernel
                nn.BatchNorm2d(out_channels),
                nn.ReLU()
            )

        def forward(self, input):
            return self.conv1x1(input)

Stem module: input 299×299×3, output 35×35×384.

    # Define the stem module
    class StemV2(nn.Module):
        def __init__(self, in_planes=3):
            super(StemV2, self).__init__()
            self.conv1 = conv3x3(in_planes=in_planes, out_channels=32, stride=2, padding=0)  # 299 -> 149
            self.conv2 = conv3x3(in_planes=32, out_channels=32, stride=1, padding=0)  # 149 -> 147
            self.conv3 = conv3x3(in_planes=32, out_channels=64, stride=1, padding=1)  # 147
            # First split: max pooling and a stride-2 conv (147 -> 73), 64 + 96 = 160 channels
            self.maxpool1 = nn.MaxPool2d(kernel_size=3, stride=2, padding=0)
            self.conv4 = conv3x3(in_planes=64, out_channels=96, stride=2, padding=0)
            # Second split: both branches end in an unpadded 3x3 conv (73 -> 71), 96 + 96 = 192 channels.
            # The 1x1 convs use padding=0; padding them would make the stem output 36x36 instead of 35x35.
            self.conv5 = conv1x1(in_planes=160, out_channels=64, stride=1, padding=0)
            self.conv6 = conv3x3(in_planes=64, out_channels=96, stride=1, padding=0)
            self.conv7 = conv1x1(in_planes=160, out_channels=64, stride=1, padding=0)
            self.conv8 = nn.Conv2d(in_channels=64, out_channels=64, stride=1, kernel_size=(1, 7), padding=(0, 3))
            self.conv9 = nn.Conv2d(in_channels=64, out_channels=64, stride=1, kernel_size=(7, 1), padding=(3, 0))
            self.conv10 = conv3x3(in_planes=64, out_channels=96, stride=1, padding=0)
            # Third split: stride-2 conv and max pooling (71 -> 35), 192 + 192 = 384 channels
            self.conv11 = conv3x3(in_planes=192, out_channels=192, stride=2, padding=0)
            self.maxpool2 = nn.MaxPool2d(kernel_size=3, stride=2, padding=0)
            self.relu = nn.ReLU()

        def forward(self, x):
            x = self.conv1(x)
            x = self.conv2(x)
            x = self.conv3(x)
            x1 = self.maxpool1(x)
            x2 = self.conv4(x)
            x = torch.cat([x1, x2], dim=1)
            x1 = self.conv5(x)
            x1 = self.conv6(x1)
            x2 = self.conv7(x)
            x2 = self.conv8(x2)
            x2 = self.relu(x2)
            x2 = self.conv9(x2)
            x2 = self.relu(x2)
            x2 = self.conv10(x2)
            x = torch.cat([x1, x2], dim=1)
            x1 = self.conv11(x)
            x2 = self.maxpool2(x)
            x = torch.cat([x1, x2], dim=1)
            return x
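The stated 35×35×384 stem output can be traced by hand. The sketch below follows the three splits of the stem in plain Python, assuming the 1×1 and the 1×7/7×1 convolutions preserve the spatial size (unpadded 1×1, "same" padding on the asymmetric kernels); `conv_out` is a hypothetical helper.

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Output size of a convolution or pooling layer along one spatial axis."""
    return (size + 2 * padding - kernel) // stride + 1


size = 299
size = conv_out(size, 3, stride=2)   # conv1: 149
size = conv_out(size, 3)             # conv2: 147
size = conv_out(size, 3, padding=1)  # conv3: 147

# First split: max pooling (64 channels) and stride-2 conv (96 channels)
size = conv_out(size, 3, stride=2)   # 73
channels = 64 + 96                   # 160

# Second split: each branch ends in an unpadded 3x3 conv with 96 channels
size = conv_out(size, 3)             # 71
channels = 96 + 96                   # 192

# Third split: stride-2 conv (192 channels) and max pooling (192 channels)
size = conv_out(size, 3, stride=2)   # 35
channels = 192 + 192                 # 384

stem_v2_shape = (size, size, channels)
print(stem_v2_shape)  # (35, 35, 384)
```

Each concatenation adds channels without changing the spatial size, which is why both branches of a split must end at the same resolution.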

IR-A module ×5: input 35×35×384, output 35×35×384.

    # Define the IR-A module
    class Inception_ResNet_A(nn.Module):
        def __init__(self, input, scale=0.3):
            super(Inception_ResNet_A, self).__init__()
            # Each branch owns its layers; reusing one module across branches
            # would share weights and make the 1x1 outputs identical.
            self.branch1 = conv1x1(in_planes=input, out_channels=32, stride=1, padding=0)
            self.branch2 = nn.Sequential(
                conv1x1(in_planes=input, out_channels=32, stride=1, padding=0),
                conv3x3(in_planes=32, out_channels=32, stride=1, padding=1),
            )
            self.branch3 = nn.Sequential(
                conv1x1(in_planes=input, out_channels=32, stride=1, padding=0),
                conv3x3(in_planes=32, out_channels=48, stride=1, padding=1),
                conv3x3(in_planes=48, out_channels=64, stride=1, padding=1),
            )
            # Linear 1x1 conv (no activation) maps the 128 concatenated channels back to 384
            self.line = nn.Conv2d(128, 384, 1, stride=1, padding=0, bias=True)
            self.scale = scale
            self.relu = nn.ReLU()

        def forward(self, x):
            cat = torch.cat([self.branch1(x), self.branch2(x), self.branch3(x)], dim=1)  # 32 + 32 + 64 = 128 channels
            line = self.line(cat)
            # Scale the residual branch (not the shortcut) before adding it to the input
            out = x + self.scale * line
            out = self.relu(out)
            return out
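Residual scaling, as described in the Inception-v4 paper, multiplies the residual branch by a small constant (the paper mentions values between 0.1 and 0.3) before adding it to the shortcut, which keeps the sum from growing too quickly in deep stacks. The sketch below shows the operation on plain Python lists rather than tensors; `scaled_residual` is a hypothetical helper for illustration only.

```python
def scaled_residual(shortcut, residual, scale=0.3):
    """Element-wise ReLU(shortcut + scale * residual) on plain Python lists."""
    return [max(0.0, s + scale * r) for s, r in zip(shortcut, residual)]


out = scaled_residual([1.0, -2.0, 0.5], [10.0, 10.0, -10.0], scale=0.1)
print(out)  # [2.0, 0.0, 0.0]
```

The shortcut passes through unscaled, so with a small `scale` the block starts close to an identity mapping.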

Reduction-A module: input 35×35×384, output 17×17×1152.

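    # Define the Reduction-A module
    class Reduction_A(nn.Module):
        def __init__(self, input, n=384, k=256, l=256, m=384, scale=0.3):  # scale is unused in this block
            super(Reduction_A, self).__init__()
            # Branch 1: 3x3 max pooling, stride 2 (35 -> 17)
            self.maxpool = nn.MaxPool2d(kernel_size=3, stride=2, padding=0)
            # Branch 2: 3x3 conv, stride 2 (35 -> 17), n output channels
            self.conv1 = conv3x3(in_planes=input, out_channels=n, stride=2, padding=0)
            # Branch 3: 1x1 conv, size-preserving 3x3 conv, then 3x3 conv with stride 2 (35 -> 17)
            self.conv2 = conv1x1(in_planes=input, out_channels=k, padding=0)
            self.conv3 = conv3x3(in_planes=k, out_channels=l, padding=1)
            self.conv4 = conv3x3(in_planes=l, out_channels=m, stride=2, padding=0)

        # The source listing is cut off after __init__. The forward pass below is an
        # assumption that mirrors the v1 Reduction_A shown earlier.
        def forward(self, x):
            c1 = self.maxpool(x)
            c2 = self.conv1(x)
            c3 = self.conv4(self.conv3(self.conv2(x)))
            # Concatenate along channels: input + n + m = 384 + 384 + 384 = 1152
            return torch.cat([c1, c2, c3], dim=1)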
