self.output_size = output_size

self.n_layers = n_layers

self.hidden_dim = hidden_dim

self.bidirectional = bidirectional

#Bert ----------------重点,bert模型需要嵌入到自定义模型里面

self.bert=BertModel.from_pretrained(bertpath)

for param in self.bert.parameters():

param.requires_grad = True

LSTM layers

self.lstm = nn.LSTM(768, hidden_dim, n_layers, batch_first=True,bidirectional=bidirectional)

dropout layer

self.dropout = nn.Dropout(drop_prob)

linear and sigmoid layers

if bidirectional:

self.fc = nn.Linear(hidden_dim*2, output_size)

else:

self.fc = nn.Linear(hidden_dim, output_size)

#self.sig = nn.Sigmoid()

def forward(self, x, hidden):

batch_size = x.size(0)

#生成bert字向量

x=self.bert(x)[0] #bert 字向量

lstm_out

#x = x.float()

lstm_out, (hidden_last,cn_last) = self.lstm(x, hidden)

#print(lstm_out.shape) #[32,100,768]

#print(hidden_last.shape) #[4, 32, 384]

#print(cn_last.shape) #[4, 32, 384]

#修改 双向的需要单独处理

if self.bidirectional:

#正向最后一层,最后一个时刻

hidden_last_L=hidden_last[-2]

#print(hidden_last_L.shape) #[32, 384]

#反向最后一层,最后一个时刻

hidden_last_R=hidden_last[-1]

#print(hidden_last_R.shape) #[32, 384]

#进行拼接

hidden_last_out=torch.cat([hidden_last_L,hidden_last_R],dim=-1)

#print(hidden_last_out.shape,‘hidden_last_out’) #[32, 768]

else:

hidden_last_out=hidden_last[-1] #[32, 384]

dropout and fully-connected layer

out = self.dropout(hidden_last_out)

#print(out.shape) #[32,768]

out = self.fc(out)

return out

def init_hidden(self, batch_size):

weight = next(self.parameters()).data

number = 1

if self.bidirectional:

number = 2

if (USE_CUDA):

hidden = (weight.new(self.n_layers*number, batch_size, self.hidden_dim).zero_().float().cuda(),

weight.new(self.n_layers*number, batch_size, self.hidden_dim).zero_().float().cuda()

)

else:

hidden = (weight.new(self.n_layers*number, batch_size, self.hidden_dim).zero_().float(),

weight.new(self.n_layers*number, batch_size, self.hidden_dim).zero_().float()

)

return hidden

bert_lstm需要的参数功6个,参数说明如下:

–bertpath:bert预训练模型的路径

–hidden_dim:隐藏层的数量。

–output_size:分类的个数。

–n_layers:lstm的层数

–bidirectional:是否是双向lstm

–drop_prob:dropout的参数

定义bert的参数,如下:

class ModelConfig:

batch_size = 2

output_size = 2

hidden_dim = 384 #768/2

n_layers = 2

lr = 2e-5

bidirectional = True #这里为True,为双向LSTM

training params

epochs = 10

batch_size=50

print_every = 10

clip=5 # gradient clipping

use_cuda = USE_CUDA

bert_path = ‘bert-base-chinese’ #预训练bert路径

save_path = ‘bert_bilstm.pth’ #模型保存路径

batch_size:batchsize的大小,根据显存设置。

output_size:输出的类别个数,本例是2.

hidden_dim:隐藏层的数量。

n_layers:lstm的层数。

bidirectional:是否双向

print_every:输出的间隔。

use_cuda:是否使用cuda,默认使用,不用cuda太慢了。

bert_path:预训练模型存放的文件夹。

save_path:模型保存的路径。

===============================================================

需要下载transformers和sentencepiece,执行命令:

conda install sentencepiece

conda install transformers

================================================================

数据集按照7:3,切分为训练集和测试集,然后又将测试集按照1:1切分为验证集和测试集。

代码如下:

model_config = ModelConfig()

data=pd.read_csv(‘caipindianping.csv’,encoding=‘utf-8’)

result_comments = pretreatment(list(data[‘comment’].values))

tokenizer = BertTokenizer.from_pretrained(model_config.bert_path)

result_comments_id = tokenizer(result_comments,

padding=True,

truncation=True,

max_length=200,

return_tensors=‘pt’)

X = result_comments_id[‘input_ids’]

y = torch.from_numpy(data[‘sentiment’].values).float()

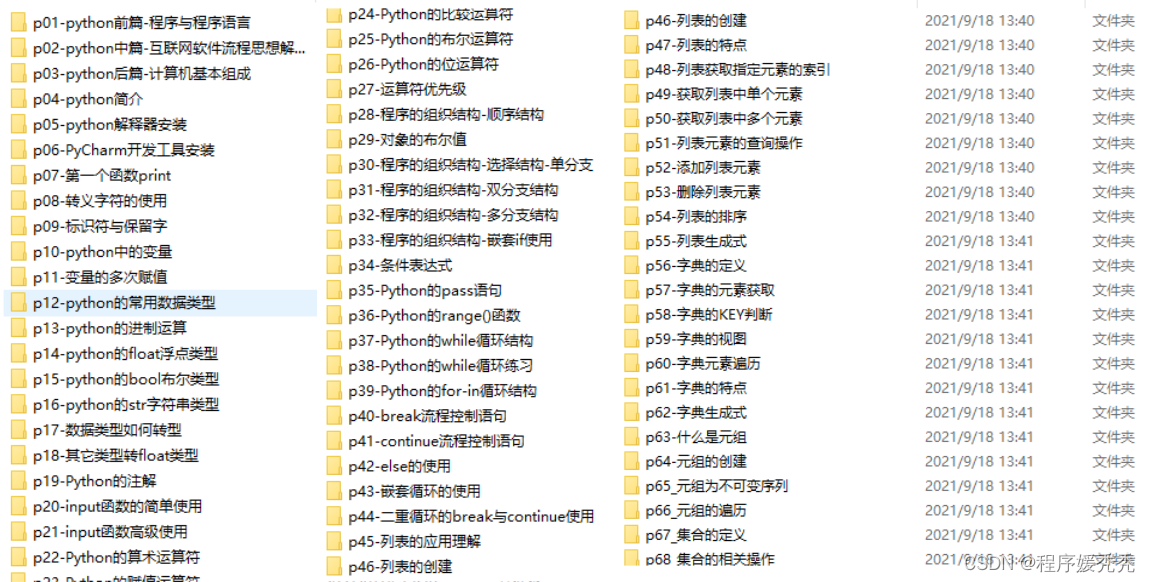

一、Python所有方向的学习路线

Python所有方向路线就是把Python常用的技术点做整理,形成各个领域的知识点汇总,它的用处就在于,你可以按照上面的知识点去找对应的学习资源,保证自己学得较为全面。

二、学习软件

工欲善其事必先利其器。学习Python常用的开发软件都在这里了,给大家节省了很多时间。

三、入门学习视频

我们在看视频学习的时候,不能光动眼动脑不动手,比较科学的学习方法是在理解之后运用它们,这时候练手项目就很适合了。

7302

7302

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?