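Student ID: 2120011013

(1) Implementing file merging and deduplication

Given two input files, A and B, write a MapReduce program that merges them, removes duplicate lines, and produces a new output file C. Sample input and output files are shown below for reference.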

Sample of input file A:

Sample of input file B:

Sample of output file C, obtained by merging A and B:

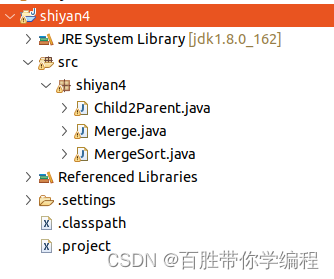
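Before running the Hadoop job, the merge-and-deduplicate semantics can be checked locally. The sketch below is a hypothetical, Hadoop-free simulation (the class `MergeDedupSketch` and its sample lines are illustrative assumptions, not the lab's data): "map" turns each line into a key, a sorted `TreeMap` stands in for the shuffle that groups identical keys, and "reduce" emits each distinct key once.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.TreeMap;

// Hypothetical, Hadoop-free simulation of the Merge job (not part of the lab code).
public class MergeDedupSketch {

    // Simulates map -> shuffle -> reduce for the merge-and-deduplicate job.
    static List<String> mergeAndDedup(List<List<String>> files) {
        // Shuffle stand-in: identical keys are grouped and kept in sorted order.
        // For ASCII text, String order matches the byte order Hadoop uses for Text keys.
        TreeMap<String, List<String>> grouped = new TreeMap<>();
        for (List<String> file : files) {
            for (String line : file) {
                // map(): emit (line, "")
                grouped.computeIfAbsent(line, k -> new ArrayList<>()).add("");
            }
        }
        // reduce(): write each distinct key once, however many values it collected
        return new ArrayList<>(grouped.keySet());
    }

    public static void main(String[] args) {
        // Hypothetical sample lines; the original sample files are not reproduced in the text
        List<String> fileA = List.of("apple", "banana", "cherry");
        List<String> fileB = List.of("banana", "date", "apple");
        for (String line : mergeAndDedup(List.of(fileA, fileB))) {
            System.out.println(line); // apple, banana, cherry, date (one per line)
        }
    }
}
```

Because deduplication is idempotent, applying it per map split (as a combiner) and then again globally gives the same result, which is why the job below can reuse its reducer as the combiner.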

package shiyan4;

import java.io.IOException;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.Mapper;
import org.apache.hadoop.mapreduce.Reducer;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

public class Merge {

    // Override map(): copy each input line (the value) to the output key; the output value is empty
    public static class Map extends Mapper<Object, Text, Text, Text> {
        private static final Text EMPTY = new Text("");

        @Override
        public void map(Object key, Text value, Context context) throws IOException, InterruptedException {
            context.write(value, EMPTY);
        }
    }

    // Override reduce(): identical lines arrive grouped under one key, so writing the key once removes duplicates
    public static class Reduce extends Reducer<Text, Text, Text, Text> {
        private static final Text EMPTY = new Text("");

        @Override
        public void reduce(Text key, Iterable<Text> values, Context context) throws IOException, InterruptedException {
            context.write(key, EMPTY);
        }
    }

    public static void main(String[] args) throws Exception {
        Configuration conf = new Configuration();
        // fs.defaultFS replaces the deprecated fs.default.name
        conf.set("fs.defaultFS", "hdfs://localhost:9000");
        String[] otherArgs = new String[]{"input", "output"}; /* set the input/output paths directly */
        if (otherArgs.length != 2) {
            System.err.println("Usage: Merge <in> <out>");
            System.exit(2);
        }
        Job job = Job.getInstance(conf, "Merge and duplicate removal");
        job.setJarByClass(Merge.class);
        job.setMapperClass(Map.class);
        // Deduplication is idempotent, so the reducer can also serve as the combiner
        job.setCombinerClass(Reduce.class);
        job.setReducerClass(Reduce.class);
        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(Text.class);
        FileInputFormat.addInputPath(job, new Path(otherArgs[0]));
        FileOutputFormat.setOutputPath(job, new Path(otherArgs[1]));
        System.exit(job.waitForCompletion(true) ? 0 : 1);
    }
}

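(2) Writing a program to sort the input files

There are several input files, each containing one integer per line. Read the integers from all the files, sort them in ascending order, and write them to a new file. Each output line contains two integers: the first is the rank of the second integer in the sorted order, and the second is the original integer. Sample input and output files are shown below for reference.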

Sample of input file 1 (one integer per line):

33, 37, 12, 40

Sample of input file 2:

4, 16, 39, 5

Sample of input file 3:

1, 45, 25

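Output file obtained from input files 1, 2, and 3 (one "rank value" pair per line):

1 1, 2 4, 3 5, 4 12, 5 16, 6 25, 7 33, 8 37, 9 39, 10 40, 11 45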
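The ranking rule can be sanity-checked without a cluster. The following is a minimal sketch, assuming a single reducer (the job's default); the class name `RankSketch` is hypothetical. A `TreeMap` plays the role of the shuffle, delivering keys in ascending order, and counting occurrences stands in for the reducer's value list of `1`s, so a duplicated integer receives consecutive ranks exactly as the reducer's loop would assign them.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Hypothetical local simulation of the MergeSort job with one reducer.
public class RankSketch {

    // Returns {rank, value} pairs in output order.
    static List<int[]> rank(List<List<Integer>> files) {
        // map() emits (n, 1); the shuffle groups and sorts keys. The count equals
        // the length of the value list the reducer would receive for n.
        TreeMap<Integer, Integer> counts = new TreeMap<>();
        for (List<Integer> file : files) {
            for (int n : file) {
                counts.merge(n, 1, Integer::sum);
            }
        }
        // reduce(): emit (lineNum, key) once per value, incrementing lineNum each time
        List<int[]> out = new ArrayList<>();
        int lineNum = 1;
        for (Map.Entry<Integer, Integer> e : counts.entrySet()) {
            for (int i = 0; i < e.getValue(); i++) {
                out.add(new int[] {lineNum++, e.getKey()});
            }
        }
        return out;
    }

    public static void main(String[] args) {
        // Sample data from the three input files above
        List<List<Integer>> files = List.of(
            List.of(33, 37, 12, 40),
            List.of(4, 16, 39, 5),
            List.of(1, 45, 25));
        for (int[] row : rank(files)) {
            System.out.println(row[0] + " " + row[1]);
        }
    }
}
```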

package shiyan4;

import java.io.IOException;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.Mapper;
import org.apache.hadoop.mapreduce.Partitioner;
import org.apache.hadoop.mapreduce.Reducer;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

public class MergeSort {

    // map(): parse each line as an int and emit it as the output key (with value 1); the shuffle sorts keys ascending
    public static class Map extends Mapper<Object, Text, IntWritable, IntWritable> {
        private static final IntWritable ONE = new IntWritable(1);
        private final IntWritable data = new IntWritable();

        @Override
        public void map(Object key, Text value, Context context) throws IOException, InterruptedException {
            String text = value.toString().trim();
            if (text.isEmpty()) {
                return; // skip blank lines instead of failing in parseInt
            }
            data.set(Integer.parseInt(text));
            context.write(data, ONE);
        }
    }

    // reduce(): copy the input key to the output value and emit it once per element of the value list,
    // so duplicates get consecutive ranks; lineNum holds the current rank
    public static class Reduce extends Reducer<IntWritable, IntWritable, IntWritable, IntWritable> {
        // Per-task counter: ranks are global only when the job runs a single reducer (the default)
        private final IntWritable lineNum = new IntWritable(1);

        @Override
        public void reduce(IntWritable key, Iterable<IntWritable> values, Context context) throws IOException, InterruptedException {
            for (IntWritable ignored : values) {
                context.write(lineNum, key);
                lineNum.set(lineNum.get() + 1);
            }
        }
    }

    // Custom Partitioner: split [0, maxNumber] into numPartitions equal-width ranges
    // and return the ID of the range that contains the key
    public static class Partition extends Partitioner<IntWritable, IntWritable> {
        @Override
        public int getPartition(IntWritable key, IntWritable value, int numPartitions) {
            int maxNumber = 65223; // assumed upper bound of the input values (the int maximum is Integer.MAX_VALUE)
            int bound = maxNumber / numPartitions + 1;
            int partition = key.get() / bound;
            // Clamp so out-of-range keys still get a valid ID; an invalid ID such as -1 makes the map task fail
            return Math.max(0, Math.min(partition, numPartitions - 1));
        }
    }

    public static void main(String[] args) throws Exception {
        Configuration conf = new Configuration();
        // fs.defaultFS replaces the deprecated fs.default.name
        conf.set("fs.defaultFS", "hdfs://localhost:9000");
        String[] otherArgs = new String[]{"input", "output"}; /* set the input/output paths directly */
        if (otherArgs.length != 2) {
            System.err.println("Usage: MergeSort <in> <out>");
            System.exit(2);
        }
        Job job = Job.getInstance(conf, "Merge and sort");
        job.setJarByClass(MergeSort.class);
        job.setMapperClass(Map.class);
        job.setReducerClass(Reduce.class);
        job.setPartitionerClass(Partition.class);
        job.setOutputKeyClass(IntWritable.class);
        job.setOutputValueClass(IntWritable.class);
        FileInputFormat.addInputPath(job, new Path(otherArgs[0]));
        FileOutputFormat.setOutputPath(job, new Path(otherArgs[1]));
        System.exit(job.waitForCompletion(true) ? 0 : 1);
    }
}
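The partitioner's boundary arithmetic can be tested on its own. The sketch below (hypothetical class `RangePartitionSketch`) reproduces the range computation with the same assumed upper bound of 65223 and clamps out-of-range keys. For non-negative keys, `key / bound` selects the same range as the original loop over `[bound * i, bound * (i + 1))`. Because each reducer then receives a contiguous, increasing key range, concatenating the part files in partition order yields a globally sorted sequence; note, however, that each reducer's rank counter would still start at 1.

```java
// Hypothetical standalone version of MergeSort.Partition's range arithmetic.
public class RangePartitionSketch {

    static int partitionFor(int key, int maxNumber, int numPartitions) {
        int bound = maxNumber / numPartitions + 1;          // width of each range
        int p = key / bound;                                // same range as the original loop for key >= 0
        return Math.max(0, Math.min(p, numPartitions - 1)); // clamp instead of returning -1
    }

    public static void main(String[] args) {
        int max = 65223;  // assumed upper bound, taken from the original code
        int parts = 3;    // with 3 partitions, bound = 65223 / 3 + 1 = 21742
        int[] keys = {0, 21741, 21742, 43484, 65223, 70000, -5};
        for (int k : keys) {
            System.out.println(k + " -> partition " + partitionFor(k, max, parts));
        }
    }
}
```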

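(3) Mining information from a given table

The following is a child-parent table. Mine the parent-child relationships it contains and produce a table of grandchild-grandparent relationships.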

Input file contents:

Output file contents:
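The job below performs a reduce-side self-join of the table with itself, keyed on the person who is both a child and a parent. As a minimal local sketch (the class `GrandparentSketch` and its three records are hypothetical, since the original tables are not reproduced in the text), the same tagging and pairing logic can be simulated with a `TreeMap` standing in for the shuffle: for key X, `l#` values are X's parents and `r#` values are X's children, and every (child, parent) combination is a (grandchild, grandparent) pair.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Hypothetical local simulation of the Child2Parent self-join.
public class GrandparentSketch {

    static List<String[]> grandPairs(List<String[]> childParent) {
        // map(): for (c, p) emit (p, "r#c") and (c, "l#p"); the TreeMap groups by key
        Map<String, List<String>> tagged = new TreeMap<>();
        for (String[] rec : childParent) {
            String c = rec[0];
            String p = rec[1];
            tagged.computeIfAbsent(p, k -> new ArrayList<>()).add("r#" + c);
            tagged.computeIfAbsent(c, k -> new ArrayList<>()).add("l#" + p);
        }
        // reduce(): split each key's values into parents and children, then pair them
        List<String[]> out = new ArrayList<>();
        for (List<String> values : tagged.values()) {
            List<String> parents = new ArrayList<>();
            List<String> children = new ArrayList<>();
            for (String v : values) {
                String[] rel = v.split("#", 2);
                if ("l".equals(rel[0])) {
                    parents.add(rel[1]);
                } else {
                    children.add(rel[1]);
                }
            }
            for (String grandParent : parents) {
                for (String grandChild : children) {
                    out.add(new String[] {grandChild, grandParent});
                }
            }
        }
        return out;
    }

    public static void main(String[] args) {
        // Hypothetical records: Tom's parent is Lucy; Lucy's parents are Mary and Ben
        List<String[]> records = List.of(
            new String[] {"Tom", "Lucy"},
            new String[] {"Lucy", "Mary"},
            new String[] {"Lucy", "Ben"});
        for (String[] pair : grandPairs(records)) {
            System.out.println(pair[0] + " " + pair[1]); // Tom Mary, then Tom Ben
        }
    }
}
```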

package shiyan4;

import java.io.IOException;
import java.util.ArrayList;
import java.util.List;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.Mapper;
import org.apache.hadoop.mapreduce.Reducer;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

public class Child2Parent {

    public static class Mymapper extends Mapper<Object, Text, Text, Text> {
        @Override
        public void map(Object key, Text value, Context context) throws IOException, InterruptedException {
            // Split on runs of whitespace (spaces or tabs)
            String[] cap = value.toString().trim().split("\\s+");
            // Skip blank or malformed lines and the "child parent" header row
            if (cap.length < 2 || "child".equals(cap[0])) {
                return;
            }
            String cName = cap[0];
            String pName = cap[1];
            // Tag the records: under the parent's key, "r#" marks one of its children;
            // under the child's key, "l#" marks one of its parents
            context.write(new Text(pName), new Text("r#" + cName));
            context.write(new Text(cName), new Text("l#" + pName));
        }
    }

    public static class Myreduce extends Reducer<Text, Text, Text, Text> {
        // Write the header once per reduce task (a single task by default) instead of using a static counter
        @Override
        protected void setup(Context context) throws IOException, InterruptedException {
            context.write(new Text("grandchild"), new Text("grandparent"));
        }

        @Override
        public void reduce(Text key, Iterable<Text> values, Context context) throws IOException, InterruptedException {
            List<String> parents = new ArrayList<>();  // parents of key = grandparents of key's children
            List<String> children = new ArrayList<>(); // children of key = grandchildren of key's parents
            for (Text text : values) {
                String[] relation = text.toString().split("#", 2);
                if ("l".equals(relation[0])) {
                    parents.add(relation[1]);
                } else {
                    children.add(relation[1]);
                }
            }
            // Every (child, parent) combination is a (grandchild, grandparent) pair
            for (String grandParent : parents) {
                for (String grandChild : children) {
                    context.write(new Text(grandChild), new Text(grandParent));
                }
            }
        }
    }

    public static void main(String[] args) throws Exception {
        Configuration conf = new Configuration();
        Job job = Job.getInstance(conf, "TableJoin");
        job.setJarByClass(Child2Parent.class);
        job.setMapperClass(Child2Parent.Mymapper.class);
        job.setReducerClass(Child2Parent.Myreduce.class);
        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(Text.class);
        FileInputFormat.addInputPath(job, new Path("hdfs://localhost:9000/user/hadoop/input"));
        FileOutputFormat.setOutputPath(job, new Path("hdfs://localhost:9000/user/hadoop/output"));
        System.exit(job.waitForCompletion(true) ? 0 : 1);
    }
}
