1. 卷积原理

- Conv1d代表一维卷积,Conv2d代表二维卷积,Conv3d代表三维卷积。

- kernel_size在训练过程中不断调整,定义为3就是3 * 3的卷积核,实际我们在训练神经网络过程中其实就是对kernel_size不断调整。

torch.nn.Conv2d(in_channels, out_channels, kernel_size, stride=1, padding=0,

dilation=1, groups=1, bias=True, padding_mode='zeros', device=None, dtype=None)

常用参数:

in_channels (int) – 输入图像中的通道数

out_channels (int) – 由卷积产生的通道数

padding (int, tuple or str, optional) – 添加到输入四个边的Padding Default: 0

padding_mode (str, optional) – , , or . Default:

'zeros''reflect''replicate''circular''zeros'dilation (int or tuple, optional) – Spacing between kernel elements. Default: 1

groups (int, optional) – Number of blocked connections from input channels to output channels. Default: 1

bias (bool, optional) – If , adds a learnable bias to the output. Default:

TrueTrue

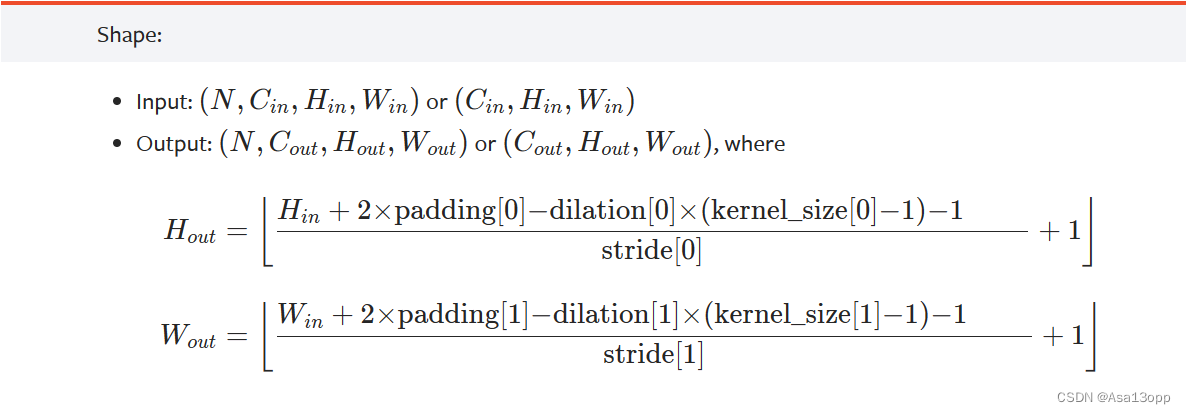

可以根据输入的参数获得输出的情况,如下图所示。

2. 搭建卷积层 并处理图片

import torch

from torch import nn

import torchvision

from torch.nn import Conv2d

from torch.utils.data import DataLoader

#搭建卷积层

dataset = torchvision.datasets.CIFAR10("./dataset",train=False,transform=torchvision.transforms.ToTensor(),download=True)

dataloader = DataLoader(dataset, batch_size=64)

class Tudui(nn.Module):

def __init__(self):

super(Tudui, self).__init__()

self.conv1 = Conv2d(in_channels=3,out_channels=6,kernel_size=3,stride=1,padding=0) # 彩色图像输入为3层,我们想让它的输出为6层,选3 * 3 的卷积

def forward(self,x):

x = self.conv1(x)

return x

#卷积层处理图片

tudui = Tudui()

writer = SummaryWriter("logs")

step = 0

for data in dataloader:

imgs, targets = data

output = tudui(imgs)

print(imgs.shape) # 输入为3通道32×32的64张图片

print(output.shape) # 输出为6通道30×30的64张图片

writer.add_images("input", imgs, step)

output = torch.reshape(output,(-1,3,30,30)) #-1为占位符,让计算机自行计算后填入

# 把原来6个通道拉为3个通道,为了保证所有维度总数不变,其余的分量分到第一个维度中

writer.add_images("output", output, step)

step = step + 1

8421

8421

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?