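I. Introduction to FFmpeg

FFmpeg is both a command-line tool for audio/video encoding and decoding and a set of development libraries. Used as a development kit, it gives developers a rich set of audio/video APIs.

FFmpeg supports muxing and demuxing of many container formats, many audio and video codecs, many streaming protocols, and conversion between pixel formats, sample rates, and bit rates. Its framework is modular: muxers and demuxers, encoders and decoders, and other components are provided as pluggable modules.

The "FF" in FFmpeg stands for "Fast Forward", and "mpeg" refers to the Moving Picture Experts Group.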

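1. Official website

https://ffmpeg.org/

2. Git repository

3. Windows development libraries

- Download page:

https://github.com/BtbN/FFmpeg-Builds/releases

- Download the development package:

- The development package has the following directory layout: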

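II. Screen recording with MFC + FFmpeg

1. Flow of the FFmpeg screen-recording code

- White part: captures the desktop image and decodes it;

- Green part: converts/encodes the desktop image and saves it to a video file.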

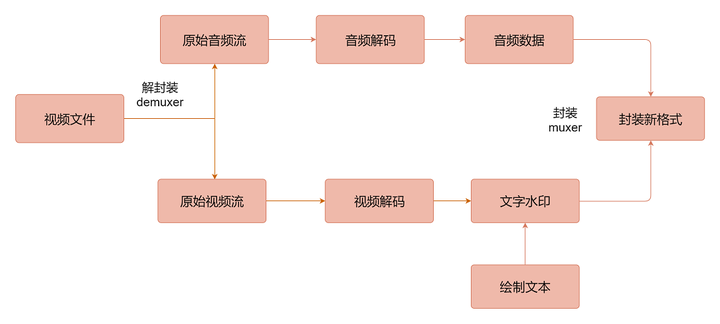

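2. Adding a text watermark to the video with FFmpeg

The flow for adding a text watermark is shown in the figure below:

① Defining the filter

The drawing flow for the text watermark is shown in the figure below:

② Code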

VideoFilter.h header file:

/******************************************************************************

* @file VideoFilter.h

* @brief Filter + watermark

*****************************************************************************/

#pragma once

struct AVFrame;

struct AVCodecContext;

// Filter + watermark

int WaterMark(AVCodecContext* codecContext, AVFrame* frame);

VideoFilter.cpp source file:

#include "VideoFilter.h"

#include <cstdio>

#include <ctime>

#include <string>

#include <iostream>

// Compile the enclosed headers with C linkage

extern "C"

{

#include "libavcodec/avcodec.h"

#include "libavutil/opt.h"

#include "libavfilter/avfilter.h"

#include <libavutil/frame.h>

#include <libavfilter/buffersrc.h>

#include <libavfilter/buffersink.h>

}

AVFilterGraph* filter_graph = nullptr;

AVFilterContext* buffersink_ctx = nullptr;

AVFilterContext* buffersrc_ctx = nullptr;

#include <afx.h>

#include <atlconv.h>

// Get the host name (cached after the first call).

// gethostname() is a Winsock function: link ws2_32.lib and initialize Winsock once at startup (WSAStartup, or AfxSocketInit in MFC).

inline std::string GetHostName()

{

static bool bInitF = false;

static char szHostName[128] = { 0 };

if (!bInitF)

{

bInitF = true;

gethostname(szHostName, sizeof(szHostName));

}

return std::string(szHostName);

}

// Convert a string in the system ANSI code page (GBK on Simplified Chinese Windows) to UTF-8

std::string GBKToUTF8(const std::string& gbkStr)

{

// Required length in wide characters, including the terminating null

int unicodeLen = MultiByteToWideChar(CP_ACP, 0, gbkStr.c_str(), -1, NULL, 0);

if (unicodeLen <= 0) return std::string();

std::wstring unicode(unicodeLen, L'\0');

MultiByteToWideChar(CP_ACP, 0, gbkStr.c_str(), -1, &unicode[0], unicodeLen);

// Required length in bytes, including the terminating null

int utf8Len = WideCharToMultiByte(CP_UTF8, 0, unicode.c_str(), -1, NULL, 0, NULL, NULL);

if (utf8Len <= 0) return std::string();

std::string utf8(utf8Len, '\0');

WideCharToMultiByte(CP_UTF8, 0, unicode.c_str(), -1, &utf8[0], utf8Len, NULL, NULL);

utf8.resize(utf8Len - 1); // drop the terminating null written by the API

return utf8;

}

// Add the text watermark

int WaterMark(AVCodecContext* codecContext, AVFrame* frame)

{

char args[512] = {0};

char filter_desrc[256] = { 0 }; // filter description; declared here so that no "goto end" jumps over its initialization

int ret = 0;

// Buffer source (graph input) and buffer sink (graph output)

const AVFilter* buffersrc = avfilter_get_by_name("buffer");

const AVFilter* buffersink = avfilter_get_by_name("buffersink");

// Allocate the endpoint descriptors used to link the parsed graph

AVFilterInOut* outputs = avfilter_inout_alloc();

AVFilterInOut* inputs = avfilter_inout_alloc();

// Pixel formats accepted by the sink; the list must end with the terminator AV_PIX_FMT_NONE

enum AVPixelFormat pix_fmts[] = { AV_PIX_FMT_BGRA, AV_PIX_FMT_NONE };

// Create the filter graph

filter_graph = avfilter_graph_alloc();

if (!outputs || !inputs || !filter_graph)

{

ret = AVERROR(ENOMEM);

goto end;

}

// Describe the input frames

sprintf_s(args, sizeof(args),

"video_size=%dx%d:pix_fmt=%d:time_base=%d/%d:pixel_aspect=%d/%d",

codecContext->width, codecContext->height, AV_PIX_FMT_BGRA, 1, 25, 1, 1); // width and height, pixel format, time base, pixel aspect ratio

// Input

ret = avfilter_graph_create_filter(&buffersrc_ctx, buffersrc, "in",

args, NULL, filter_graph);

if (ret < 0) {

goto end;

}

// Output

ret = avfilter_graph_create_filter(&buffersink_ctx, buffersink, "out",

NULL, NULL, filter_graph);

if (ret < 0)

{

goto end;

}

// Restrict the pixel format delivered by the sink

ret = av_opt_set_int_list(buffersink_ctx, "pix_fmts", pix_fmts,

AV_PIX_FMT_NONE, AV_OPT_SEARCH_CHILDREN);

if (ret < 0)

{

goto end;

}

// Set the endpoints of the graph: "in" is the output of the buffer source, "out" is the input of the buffer sink

outputs->name = av_strdup("in");

outputs->filter_ctx = buffersrc_ctx;

outputs->pad_idx = 0;

outputs->next = NULL;

inputs->name = av_strdup("out");

inputs->filter_ctx = buffersink_ctx;

inputs->pad_idx = 0;

inputs->next = NULL;

// Filter description (the watermark to draw)

// English example: arial font, font size 50, green text, drawn at (150,150)

//snprintf(filter_desrc, sizeof(filter_desrc), "drawtext=fontfile=arial.ttf:fontcolor=green:fontsize=50:x=150:y=150:text='%s'", "NODE[zxdd1] USER[root]");

// Chinese example: the text is drawn during even seconds and left empty during odd seconds, so the watermark blinks

{

if (time(NULL) % 2 == 0)

{

// "node[<host name>] operator[root]"; the literal is in the source file's ANSI encoding and is converted to UTF-8 for drawtext

std::string gbkStr = "节点[" + GetHostName() + "] 操作员[root]";

std::string utf8Str = GBKToUTF8(gbkStr);

snprintf(filter_desrc, sizeof(filter_desrc), "drawtext=fontfile=./Fonts/msyh.ttc:fontcolor=0xFF1010:fontsize=40:x=10:y=20:text='%s'", utf8Str.c_str());

}

else

{

snprintf(filter_desrc, sizeof(filter_desrc), "drawtext=fontfile=./Fonts/msyh.ttc:fontcolor=0x00FF00:fontsize=40:x=10:y=20:text=''");

}

}

// Parse the description and link it between the two endpoints

if ((ret = avfilter_graph_parse_ptr(filter_graph, filter_desrc,

&inputs, &outputs, NULL)) < 0)

{

goto end;

}

// Validate and configure the graph

if ((ret = avfilter_graph_config(filter_graph, NULL)) < 0)

goto end;

// Push the frame into the graph

if ((ret = av_buffersrc_add_frame(buffersrc_ctx, frame)) < 0)

{

goto end;

}

// Pull the filtered frame out of the graph

if ((ret = av_buffersink_get_frame(buffersink_ctx, frame)) < 0)

{

goto end;

}

end:

// Release resources

avfilter_inout_free(&inputs);

avfilter_inout_free(&outputs);

avfilter_graph_free(&filter_graph);

return ret;

}

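③ Chinese text in the watermark

To draw Chinese text, drawtext must be given a font file that contains Chinese glyphs.

On Windows, installed fonts are stored in "C:\Windows\Fonts"; the required font file can be copied out of that directory.

Note: copy the required font into the project directory and reference it with fontfile=*.ttc.

// Watermark to draw; drawtext expects UTF-8 text, so either save the source file as UTF-8 or convert the string with GBKToUTF8()

char filter_desrc[256] = { 0 };

snprintf(filter_desrc, sizeof(filter_desrc), "drawtext=fontfile=./Fonts/msyh.ttc:fontcolor=0x00FF00:fontsize=40:x=10:y=20:text='中文水印测试'");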
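Building the description with snprintf works for fixed strings, but text that comes from outside (a host name, a user name) may contain characters that the filtergraph parser or drawtext's text expansion treat specially. The sketch below is one conservative way to handle that: it refuses such input instead of escaping it. `BuildDrawtext` is a hypothetical helper, not an FFmpeg function, and the rejected character set (`'`, `\`, `:`, `%`) is an assumption chosen for safety rather than a complete implementation of FFmpeg's escaping rules.

```cpp
#include <string>

// Hypothetical helper (not part of FFmpeg): builds a drawtext filter
// description for a UTF-8 string. Returns false rather than producing a
// description that the filtergraph parser could misread.
bool BuildDrawtext(const std::string& fontFile, const std::string& utf8Text,
                   std::string& out)
{
    // Characters with special meaning to the option parser or to drawtext's
    // text expansion. A complete solution would escape them; this sketch
    // conservatively rejects them.
    const std::string special = "'\\:%";
    if (fontFile.find_first_of(special) != std::string::npos ||
        utf8Text.find_first_of(special) != std::string::npos)
    {
        return false;
    }
    out = "drawtext=fontfile=" + fontFile +
          ":fontcolor=0x00FF00:fontsize=40:x=10:y=20:text='" + utf8Text + "'";
    return true;
}
```

With this check, an absolute path such as C:\Windows\Fonts\msyh.ttc is rejected because of its colon and backslashes, while a relative path such as ./Fonts/msyh.ttc passes unchanged.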

3. MFC + FFmpeg screen recording implementation

① Capturing the screen

VideoDecode.h header file:

#ifndef __VIDEODECODE_H__

#define __VIDEODECODE_H__

#include <string>

#include <windows.h>

struct AVFormatContext;

struct AVCodecContext;

struct AVRational;

struct AVPacket;

struct AVFrame;

struct SwsContext;

struct AVBufferRef;

struct AVInputFormat;

struct AVStream;

class VideoDecode

{

public:

VideoDecode();

~VideoDecode();

bool Open(std::string filename,int screen_index = 255 /* all screens by default */); // open the input ("desktop" for gdigrab)

void Close(); // close the input

bool IsEnd(); // whether reading has finished

AVFrame* ReadStream(); // read and decode one video frame

POINT GetAvgFrameRate() { return m_AvgFrameRate; } // average frame rate as x/y

AVCodecContext* GetCodecContext() { return m_pCodecContext; }

private:

void InitFFmpeg(); // initialize the FFmpeg libraries (needed only once per process)

void ShowError(int errcode); // print the message for an FFmpeg error code

double RationalToDouble(AVRational* rational); // convert an AVRational to double

void Free(); // release resources

void Clear(); // flush the read buffers

private:

const AVInputFormat* m_pInputFormat = nullptr; // capture device format (av_find_input_format returns a const pointer in FFmpeg 5.0 and later)

AVFormatContext* m_pFormatContext = nullptr; // demuxer context

AVCodecContext* m_pCodecContext = nullptr; // decoder context

AVPacket* m_pPacket = nullptr; // packet read from the input

AVFrame* m_pFrame = nullptr; // decoded video frame

int m_iVideoIndex = 0; // index of the video stream

__int64 m_iTotalTime = 0; // total duration of the video

__int64 m_iTotalFrames = 0; // total number of frames

__int64 m_iObtainFrames = 0; // number of frames obtained so far

double m_fFrameRate = 0.0; // frame rate

SIZE m_Size = { 0, 0 }; // video resolution

char* m_pError = nullptr; // buffer for error messages

bool m_bEndF = false; // reading has finished

POINT m_AvgFrameRate = { 0, 0 }; // average frame rate as x/y

};

#endif // __VIDEODECODE_H__

VideoDecode.cpp source file:

#include "VideoDecode.h"

#include <cstdio>

#include <iostream>

#include <mutex>

#include <vector>

// Monitor rectangles are stored as plain Win32 RECT structures

typedef RECT DisplayRect;

std::vector<DisplayRect> g_vectDisplayRect;

// Callback for EnumDisplayMonitors: records the rectangle of each monitor

BOOL CALLBACK MonitorEnumProc(HMONITOR hMonitor, HDC hdcMonitor, LPRECT lprcMonitor, LPARAM dwData)

{

MONITORINFOEX mi;

ZeroMemory(&mi, sizeof(MONITORINFOEX));

mi.cbSize = sizeof(MONITORINFOEX);

if (GetMonitorInfo(hMonitor, &mi))

{

g_vectDisplayRect.push_back(mi.rcMonitor);

}

return TRUE; // continue the enumeration

}

// Get the rectangle of the monitor with the given index (an all-zero RECT if the index is invalid)

DisplayRect GetDisplayRectByIndex(int index)

{

if (g_vectDisplayRect.empty())

EnumDisplayMonitors(NULL, NULL, MonitorEnumProc, 0);

DisplayRect rect = { 0, 0, 0, 0 };

if (index >= 0 && index < (int)g_vectDisplayRect.size())

{

rect = g_vectDisplayRect[index];

}

return rect;

}

// Compile the enclosed headers with C linkage

extern "C"

{

#include "libavcodec/avcodec.h"

#include "libavformat/avformat.h"

#include "libavutil/avutil.h"

#include "libswscale/swscale.h"

#include "libavutil/imgutils.h"

#include "libavdevice/avdevice.h"

}

#define ERROR_LEN 1024

#define PRINT_LOG 1

VideoDecode::VideoDecode()

{

InitFFmpeg();

m_pError = new char[ERROR_LEN];

#if defined(WIN32)

m_pInputFormat = av_find_input_format("gdigrab"); // on Windows the desktop cannot be opened without this device

#elif defined(OS_LINUX)

m_pInputFormat = av_find_input_format("x11grab");

#endif

if (!m_pInputFormat)

{

std::cout << "Failed to find the AVInputFormat capture device!" << std::endl;

}

}

VideoDecode::~VideoDecode()

{

Close();

delete[] m_pError; // allocated in the constructor

m_pError = nullptr;

}

void VideoDecode::InitFFmpeg()

{

static bool bInitF = true;

static std::mutex mtx;

std::unique_lock<std::mutex> locker(mtx);

if (bInitF)

{

avformat_network_init();

avdevice_register_all();

bInitF = false;

}

}

bool VideoDecode::Open(std::string filename, int screen_index /* = 255 */)

{

if (filename.empty()) return false;

AVDictionary* pDictOps = nullptr;

av_dict_set(&pDictOps, "framerate", "10", 0); // capture frame rate

av_dict_set(&pDictOps, "draw_mouse", "1", 0); // whether to draw the mouse pointer

// On a multi-monitor system, video_size/offset_x/offset_y restrict the capture to one screen

//av_dict_set(&pDictOps, "video_size", "500x400", 0); // size (width x height) of the captured area

int iDisplayCount = GetSystemMetrics(SM_CMONITORS);

// screen_index 1..N selects one monitor; 255 (or any other value) captures all screens

if (iDisplayCount > 1 && screen_index >= 1 && screen_index <= iDisplayCount)

{

DisplayRect rect = GetDisplayRectByIndex(screen_index - 1);

if (rect.right > rect.left && rect.bottom > rect.top)

{

char szTemp[48] = {0};

snprintf(szTemp, sizeof(szTemp), "%dx%d", rect.right - rect.left, rect.bottom - rect.top);

av_dict_set(&pDictOps, "video_size", szTemp, 0);

snprintf(szTemp, sizeof(szTemp), "%d", rect.left);

av_dict_set(&pDictOps, "offset_x", szTemp, 0);

snprintf(szTemp, sizeof(szTemp), "%d", rect.top);

av_dict_set(&pDictOps, "offset_y", szTemp, 0);

}

}

// Open the input

int iRet = avformat_open_input(&m_pFormatContext,

filename.c_str(),

m_pInputFormat,

&pDictOps);

if (pDictOps)

{

av_dict_free(&pDictOps);

}

if (iRet < 0)

{

ShowError(iRet); // failed to open the input

Free();

return false;

}

// Read the stream information

iRet = avformat_find_stream_info(m_pFormatContext, nullptr);

if (iRet < 0)

{

ShowError(iRet);

Free();

return false;

}

m_iTotalTime = m_pFormatContext->duration / (AV_TIME_BASE / 1000); // total duration in milliseconds

// Find the index of the video stream by media type

m_iVideoIndex = av_find_best_stream(m_pFormatContext, AVMEDIA_TYPE_VIDEO, -1, -1, nullptr, 0);

if (m_iVideoIndex < 0)

{

ShowError(m_iVideoIndex);

Free();

return false;

}

AVStream* videoStream = m_pFormatContext->streams[m_iVideoIndex]; // the video stream at that index

// Get the video resolution and frame rate

m_Size.cx = (videoStream->codecpar->width);

m_Size.cy = (videoStream->codecpar->height);

m_fFrameRate = RationalToDouble(&videoStream->avg_frame_rate);

m_AvgFrameRate.x = (videoStream->avg_frame_rate.num);

m_AvgFrameRate.y = (videoStream->avg_frame_rate.den);

// Look up the decoder by codec ID

const AVCodec* codec = avcodec_find_decoder(videoStream->codecpar->codec_id);

if (!codec)

{

ShowError(AVERROR_DECODER_NOT_FOUND);

Free();

return false;

}

m_iTotalFrames = videoStream->nb_frames;

// Allocate an AVCodecContext and set its fields to default values

m_pCodecContext = avcodec_alloc_context3(codec);

if (!m_pCodecContext)

{

#if PRINT_LOG

std::cout << "Failed to create the video decoder context!" << std::endl;

#endif

Free();

return false;

}

// Fill the decoder context from the stream's codecpar

iRet = avcodec_parameters_to_context(m_pCodecContext, videoStream->codecpar);

if (iRet < 0)

{

ShowError(iRet);

Free();

return false;

}

m_pCodecContext->flags2 |= AV_CODEC_FLAG2_FAST;

m_pCodecContext->thread_count = 8;

// Open the decoder

iRet = avcodec_open2(m_pCodecContext, nullptr, nullptr);

if (iRet < 0)

{

ShowError(iRet);

Free();

return false;

}

// Allocate an AVPacket and set its fields to default values

m_pPacket = av_packet_alloc();

if (!m_pPacket)

{

Free();

return false;

}

// Allocate an AVFrame and set its fields to default values

m_pFrame = av_frame_alloc();

if (!m_pFrame)

{

Free();

return false;

}

m_bEndF = false;

return true;

}

AVFrame* VideoDecode::ReadStream()

{

if (!m_pFormatContext)

{

return nullptr;

}

int readRet = av_read_frame(m_pFormatContext, m_pPacket);

if (readRet < 0)

{

avcodec_send_packet(m_pCodecContext, m_pPacket);

}

else

{

if (m_pPacket->stream_index == m_iVideoIndex)

{

// Send the packet that was read to the decoder

int ret = avcodec_send_packet(m_pCodecContext, m_pPacket);

if (ret < 0)

{

ShowError(ret);

}

}

}

av_packet_unref(m_pPacket); // release the packet's data

av_frame_unref(m_pFrame);

int ret = avcodec_receive_frame(m_pCodecContext, m_pFrame);

if (ret < 0)

{

av_frame_unref(m_pFrame);

if (readRet < 0)

{

m_bEndF = true; // no more packets can be read and the decoder is drained: reading is finished

}

return nullptr;

}

return m_pFrame;

}

void VideoDecode::Close()

{

Clear();

Free();

m_iTotalTime = 0;

m_iVideoIndex = 0;

m_iTotalFrames = 0;

m_iObtainFrames = 0;

m_fFrameRate = 0;

m_Size.cx = 0;

m_Size.cy = 0;

}

bool VideoDecode::IsEnd()

{

return m_bEndF;

}

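void VideoDecode::ShowError(int errcode)

{

#if PRINT_LOG

// Translate the FFmpeg error code into a readable message

m_pError[0] = '\0';

av_strerror(errcode, m_pError, ERROR_LEN);

std::cout << "VideoDecode error: " << m_pError << std::endl;

#endif

}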

double VideoDecode::RationalToDouble(AVRational* rational)

{

double frameRate = (rational->den == 0) ? 0 : (double(rational->num) / rational->den);

return frameRate;

}

void VideoDecode::Clear()

{

if (m_pFormatContext && m_pFormatContext->pb)

{

avio_flush(m_pFormatContext->pb);

}

if (m_pFormatContext)

{

avformat_flush(m_pFormatContext); // flush the read buffers

}

}

void VideoDecode::Free()

{

// Free the codec context and everything associated with it, and set the pointer to NULL

if (m_pCodecContext)

{

avcodec_free_context(&m_pCodecContext);

}

// Close and free m_pFormatContext, and set the pointer to null

if (m_pFormatContext)

{

avformat_close_input(&m_pFormatContext);

}

if (m_pPacket)

{

av_packet_free(&m_pPacket);

}

if (m_pFrame)

{

av_frame_free(&m_pFrame);

}

}
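VideoDecode::Open selects a single monitor by passing video_size, offset_x and offset_y to gdigrab. The mapping from a monitor rectangle to those three option values can be sketched without any Windows or FFmpeg dependency. `MonitorRect`, `GrabOptions` and `MakeGrabOptions` are hypothetical names introduced for illustration; the rectangle is assumed to use virtual-desktop coordinates like RECT, so a monitor placed to the left of the primary one has a negative left edge.

```cpp
#include <string>

// Hypothetical stand-in for the Win32 RECT structure.
struct MonitorRect { long left, top, right, bottom; };

// The three gdigrab option values that describe a capture region.
struct GrabOptions
{
    std::string videoSize; // "<width>x<height>"
    std::string offsetX;   // left edge of the region
    std::string offsetY;   // top edge of the region
};

// Fills `out` and returns true; returns false for an empty rectangle,
// so that a "0x0" video_size is never passed to the capture device.
bool MakeGrabOptions(const MonitorRect& rc, GrabOptions& out)
{
    const long width = rc.right - rc.left;
    const long height = rc.bottom - rc.top;
    if (width <= 0 || height <= 0) return false;
    out.videoSize = std::to_string(width) + "x" + std::to_string(height);
    out.offsetX = std::to_string(rc.left);
    out.offsetY = std::to_string(rc.top);
    return true;
}
```

Each of the three strings would then be handed to av_dict_set under the keys "video_size", "offset_x" and "offset_y", as in the listing above.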

② Writing the video file

VideoCodec.h header file:

#ifndef __VIDEOCODEC_H__

#define __VIDEOCODEC_H__

#include <mutex>

#include <string>

#include <windows.h>

struct AVCodecParameters;

struct AVFormatContext;

struct AVCodecContext;

struct AVStream;

struct AVFrame;

struct AVPacket;

struct AVOutputFormat;

struct SwsContext;

class VideoCodec

{

public:

VideoCodec();

~VideoCodec();

bool Open(AVCodecContext* codecContext, POINT point, std::string filename);

void Write(AVFrame* frame);

void Close();

private:

void ShowError(int errcode);

bool ToSwsFormat(AVFrame* frame);

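private:

AVFormatContext* m_pFormatContext = nullptr; // output (muxer) context

AVCodecContext* m_pCodecContext = nullptr; // encoder context

SwsContext* m_pSwsContext = nullptr; // pixel-format conversion context

AVStream* m_pVideoStream = nullptr; // output video stream

AVPacket* m_pPacket = nullptr; // encoded packet

AVFrame* m_pFrame = nullptr; // converted frame that is passed to the encoder

int m_iIndex = 0; // index of the next frame, used as its pts

bool m_bWriteHeaderF = false; // whether the file header has been written

std::mutex m_mutex;

};

#endif // __VIDEOCODEC_H__

VideoCodec.cpp source file: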

#include "VideoCodec.h"

#include <iostream>

// Compile the enclosed headers with C linkage

extern "C"

{

#include "libavcodec/avcodec.h"

#include "libavformat/avformat.h"

#include "libavutil/avutil.h"

#include "libswscale/swscale.h"

#include "libavutil/imgutils.h"

#include "libavdevice/avdevice.h"

}

#define ERROR_LEN 1024

#define PRINT_LOG 1

VideoCodec::VideoCodec()

{

}

VideoCodec::~VideoCodec()

{

Close();

}

bool VideoCodec::Open(AVCodecContext* codecContext, POINT point, std::string fileName)

{

if (!codecContext || fileName.empty()) return false;

// Allocate an AVFormatContext for the output format deduced from the file name

int iRet = avformat_alloc_output_context2(&m_pFormatContext, nullptr, nullptr, fileName.c_str());

if (iRet < 0)

{

Close();

ShowError(iRet);

return false;

}

// Create and initialize an AVIOContext for writing to the file

iRet = avio_open(&m_pFormatContext->pb, fileName.c_str(), AVIO_FLAG_WRITE);

if (iRet < 0)

{

Close();

ShowError(iRet);

return false;

}

// Find the default video encoder of the output format

const AVCodec* codec = avcodec_find_encoder(m_pFormatContext->oformat->video_codec);

if (!codec)

{

Close();

ShowError(AVERROR_ENCODER_NOT_FOUND);

return false;

}

// Allocate an AVCodecContext and set its fields to default values

m_pCodecContext = avcodec_alloc_context3(codec);

if (!m_pCodecContext)

{

Close();

ShowError(AVERROR(ENOMEM));

return false;

}

// Set the encoder parameters

m_pCodecContext->width = codecContext->width; // frame width and height

m_pCodecContext->height = codecContext->height;

m_pCodecContext->pix_fmt = codec->pix_fmts ? codec->pix_fmts[0] : AV_PIX_FMT_YUV420P; // first pixel format the encoder supports

m_pCodecContext->time_base = { point.y, point.x }; // time base = 1 / frame rate

m_pCodecContext->framerate = { point.x, point.y };

m_pCodecContext->bit_rate = 1000000; // target bit rate in bits per second

m_pCodecContext->gop_size = 12; // interval between I-frames

// Request a global header only when the container asks for one (mp4 does)

if (m_pFormatContext->oformat->flags & AVFMT_GLOBALHEADER)

{

m_pCodecContext->flags |= AV_CODEC_FLAG_GLOBAL_HEADER;

}

// Open the encoder

iRet = avcodec_open2(m_pCodecContext, nullptr, nullptr);

if (iRet < 0)

{

Close();

ShowError(iRet);

return false;

}

// Add a new stream to the output file

m_pVideoStream = avformat_new_stream(m_pFormatContext, nullptr);

if (!m_pVideoStream)

{

Close();

ShowError(AVERROR(ENOMEM));

return false;

}

// Copy the encoder parameters to the stream

iRet = avcodec_parameters_from_context(m_pVideoStream->codecpar, m_pCodecContext);

if (iRet < 0)

{

Close();

ShowError(iRet);

return false;

}

// Write the file header

iRet = avformat_write_header(m_pFormatContext, nullptr);

if (iRet < 0)

{

Close();

ShowError(iRet);

return false;

}

m_bWriteHeaderF = true;

// Allocate an AVPacket

m_pPacket = av_packet_alloc();

if (!m_pPacket)

{

Close();

ShowError(AVERROR(ENOMEM));

return false;

}

// Allocate the frame that receives the converted image

m_pFrame = av_frame_alloc();

if (!m_pFrame)

{

Close();

ShowError(AVERROR(ENOMEM));

return false;

}

m_pFrame->format = m_pCodecContext->pix_fmt;

return true;

}

void VideoCodec::Write(AVFrame* frame)

{

std::unique_lock<std::mutex> locker(m_mutex);

if (!m_pPacket || !m_pCodecContext)

{

return;

}

AVFrame* pSendFrame = nullptr; // a null frame puts the encoder into flush (drain) mode

if (frame)

{

if (!ToSwsFormat(frame))

{

return;

}

m_pFrame->pts = m_iIndex; // pts in encoder time_base units (one tick per frame)

m_iIndex++;

pSendFrame = m_pFrame;

}

if (avcodec_send_frame(m_pCodecContext, pSendFrame) < 0) // pass the frame to the encoder

{

return;

}

while (true)

{

// Read the encoded packets from the encoder

int ret = avcodec_receive_packet(m_pCodecContext, m_pPacket);

if (ret < 0)

{

break;

}

av_packet_rescale_ts(m_pPacket, m_pCodecContext->time_base, m_pVideoStream->time_base);

av_write_frame(m_pFormatContext, m_pPacket); // write the packet to the output file

av_packet_unref(m_pPacket);

}

}

void VideoCodec::Close()

{

Write(nullptr); // send a null frame to flush the encoder and write the remaining packets

std::unique_lock<std::mutex> locker(m_mutex);

if (m_pFormatContext)

{

// Write the file trailer

if (m_bWriteHeaderF)

{

m_bWriteHeaderF = false;

int ret = av_write_trailer(m_pFormatContext);

if (ret < 0)

{

ShowError(ret); // report the error but keep releasing resources

}

}

if (m_pFormatContext->pb)

{

int ret = avio_close(m_pFormatContext->pb);

if (ret < 0)

{

ShowError(ret);

}

}

avformat_free_context(m_pFormatContext);

m_pFormatContext = nullptr;

m_pVideoStream = nullptr;

}

// Free the encoder context

if (m_pCodecContext)

{

avcodec_free_context(&m_pCodecContext);

}

if (m_pPacket)

{

av_packet_free(&m_pPacket);

}

// Free the conversion context

if (m_pSwsContext)

{

sws_freeContext(m_pSwsContext);

m_pSwsContext = nullptr;

}

if (m_pFrame)

{

av_frame_free(&m_pFrame);

}

m_iIndex = 0;

}

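void VideoCodec::ShowError(int errcode)

{

#if PRINT_LOG

// Translate the FFmpeg error code into a readable message

char szError[ERROR_LEN] = { 0 };

av_strerror(errcode, szError, sizeof(szError));

std::cout << "VideoCodec error: " << szError << std::endl;

#endif

}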

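bool VideoCodec::ToSwsFormat(AVFrame* frame)

{

if (!frame || !m_pFrame || frame->width <= 0 || frame->height <= 0)

{

return false;

}

if (!m_pSwsContext)

{

m_pSwsContext = sws_getCachedContext(m_pSwsContext,

frame->width, // source width

frame->height, // source height

(AVPixelFormat)frame->format, // source pixel format

frame->width, // destination width

frame->height, // destination height

(AVPixelFormat)m_pFrame->format, // destination pixel format

SWS_BILINEAR, // scaling algorithm (only matters when the sizes differ); SWS_FAST_BILINEAR is a common choice

nullptr, // source filter, NULL if not needed

nullptr, // destination filter, NULL if not needed

nullptr); // extra parameters for specific scaling algorithms, NULL by default

if (!m_pSwsContext)

{

av_frame_unref(frame);

return false;

}

// Allocate the buffers of the frame that receives the converted image (in the encoder's pixel format)

m_pFrame->width = frame->width;

m_pFrame->height = frame->height;

if (av_frame_get_buffer(m_pFrame, 0) < 0) // 0 lets FFmpeg choose the alignment

{

av_frame_unref(frame);

return false;

}

}

if (m_pFrame->width <= 0 || m_pFrame->height <= 0)

{

av_frame_unref(frame);

return false;

}

// The encoder may still reference the previous frame's buffers, so make them writable first

if (av_frame_make_writable(m_pFrame) < 0)

{

av_frame_unref(frame);

return false;

}

// Convert the pixel format

int height = sws_scale(m_pSwsContext, // conversion context

frame->data, // source planes

frame->linesize, // stride of each source plane

0, // first row to process

frame->height, // number of rows

m_pFrame->data, // destination planes

m_pFrame->linesize); // stride of each destination plane

av_frame_unref(frame);

return height > 0; // sws_scale returns the height of the output slice

}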
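The timestamp handling in Write() is plain fraction arithmetic: each frame gets pts = frame index in the encoder time base (1 / frame rate), and av_packet_rescale_ts converts that value into the stream's time base before the packet is written. The sketch below reproduces the conversion with ordinary integers. `Rational`, `RescaleTs` and `PtsToMs` are hypothetical stand-ins written for this illustration; they are simplified (rounding to nearest for non-negative values, no overflow protection), and the stream time base 1/10240 used in the usage note is only an example value, since the real one is chosen by the muxer.

```cpp
#include <cstdint>

// Hypothetical stand-in for AVRational (a fraction num/den).
struct Rational { int num; int den; };

// Converts a timestamp from time base `from` to time base `to`:
// ts * from / to, rounded to the nearest integer (for ts >= 0).
// Simplified: unlike FFmpeg's own rescaling, there is no overflow handling.
int64_t RescaleTs(int64_t ts, Rational from, Rational to)
{
    const int64_t num = static_cast<int64_t>(from.num) * to.den;
    const int64_t den = static_cast<int64_t>(from.den) * to.num;
    return (ts * num + den / 2) / den;
}

// Presentation time of a frame in milliseconds.
int64_t PtsToMs(int64_t pts, Rational timeBase)
{
    return RescaleTs(pts, timeBase, Rational{1, 1000});
}
```

For example, frame 25 in a 1/10 time base is shown at 2500 ms, and the same timestamp expressed in a 1/10240 time base is 25600.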

③ Adding the text watermark

This step uses VideoFilter.h and VideoFilter.cpp exactly as listed in section 2 ② above: WaterMark() is applied to every captured frame before the frame is encoded.

④ Calling the code (button handler)

void CHMIRecorderDlg::OnBnClickedBtnStart()

{

int index = m_ComboRecordArea.GetCurSel();

int iScreenIndex = (int)m_ComboRecordArea.GetItemData(index);

// 1. Capture the desktop

VideoDecode dec;

bool bRet = dec.Open("desktop", iScreenIndex);

if (!bRet)

{

return;

}

AVCodecContext* pCodecContext = dec.GetCodecContext();

// 2. Save to an mp4 file

POINT pt; // frame rate written to the file: x/y frames per second

pt.x = 10;

pt.y = 1;

// Note: frames are captured at 10 fps (see VideoDecode::Open). A larger pt.x/pt.y plays the same frames back faster, so the video is shorter and, at a fixed bit rate, the file is smaller.

SYSTEMTIME st;

::GetLocalTime(&st);

char szFileName[128] = { 0 };

snprintf(szFileName, sizeof(szFileName), "HmiRecord_%04d%02d%02d%02d%02d%02d.mp4", st.wYear, st.wMonth, st.wDay, st.wHour, st.wMinute, st.wSecond);

VideoCodec cod;

bRet = cod.Open(pCodecContext, pt, szFileName);

if (!bRet)

{

return;

}

int iFrameCount = 200; // number of read attempts; the loop runs on the UI thread and blocks it until it finishes

while (iFrameCount--)

{

AVFrame* pFrame = dec.ReadStream();

if (pFrame)

{

// 3. Apply the text-watermark filter

WaterMark(pCodecContext,pFrame);

cod.Write(pFrame);

// 4. Update the tray icon

NOTIFYICONDATA nd = { 0 };

nd.cbSize = sizeof(NOTIFYICONDATA);

nd.hWnd = m_hWnd;

nd.uID = IDR_MAINFRAME;

nd.uFlags = NIF_ICON | NIF_MESSAGE | NIF_TIP;

nd.uCallbackMessage = WM_ICON_NOTIFY;

lstrcpy(nd.szTip, _T("HMI recording..."));

if (time(NULL) % 2 == 0)

nd.hIcon = AfxGetApp()->LoadIcon(IDI_ICON1);

else

nd.hIcon = AfxGetApp()->LoadIcon(IDI_ICON2);

Shell_NotifyIcon(NIM_MODIFY, &nd);

}

}

cod.Close();

dec.Close();

}
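The relationship between pt and playback speed follows from simple arithmetic: the capture side produces frames at 10 fps (the "framerate" option in VideoDecode::Open), and the file declares that they should be shown at pt.x/pt.y fps. The sketch below makes that explicit. `PlaybackSeconds` and `SpeedFactor` are hypothetical helper names used only for illustration, and the numbers in the usage note assume that every one of the 200 reads returns a frame.

```cpp
// Hypothetical helpers for illustration; none of these names come from FFmpeg.

// Duration in seconds of `frameCount` frames written at fpsNum/fpsDen
// frames per second.
double PlaybackSeconds(int frameCount, int fpsNum, int fpsDen)
{
    if (fpsNum <= 0 || fpsDen <= 0) return 0.0;
    return static_cast<double>(frameCount) * fpsDen / fpsNum;
}

// How much faster than real time the file plays when frames captured at
// captureFps are written with a frame rate of fpsNum/fpsDen.
double SpeedFactor(double captureFps, int fpsNum, int fpsDen)
{
    if (captureFps <= 0.0 || fpsDen <= 0) return 0.0;
    return (static_cast<double>(fpsNum) / fpsDen) / captureFps;
}
```

With pt = 10/1, 200 frames play for 20 seconds at real-time speed; with pt = 20/1 the same frames play for 10 seconds, twice as fast.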

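⑤ Project include directories

⑥ Project library dependencies

Link against "avformat.lib;avdevice.lib;avutil.lib;avcodec.lib;swscale.lib;avfilter.lib" and any other libraries the project needs.

⑦ Running the program

⑧ Result

- Generated *.mp4 file:

- Playing the *.mp4 file: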
