1. Brief introduction

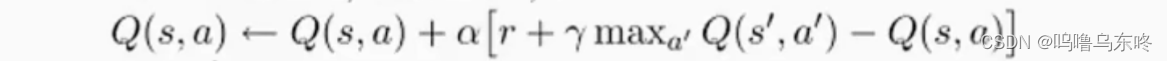

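Let's first look at the core update rule of Q-learning.

Q(s,a): the value stored in the Q-table for taking action a in state s

α: the learning rate

r: the reward received for taking action a in state s

γ: the discount factor

max Q(s',a'): the largest value obtainable from the next state s'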
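To make the symbols above concrete, here is a minimal sketch of a single update in plain Python. It assumes the standard Q-learning rule Q(s,a) ← Q(s,a) + α[r + γ·max Q(s',a') − Q(s,a)]. The table size (16 states, 4 actions), the state and action indices, and the pre-filled value 0.2 are illustrative assumptions, not values taken from the article.

```python
# Illustrative values only: 16 states x 4 actions, all Q-values start at 0.
n_states, n_actions = 16, 4
Q = [[0.0] * n_actions for _ in range(n_states)]

alpha = 0.2   # learning rate
gamma = 0.9   # discount factor

# Assume the agent has already learned something about state 14.
Q[14][2] = 0.2

# One transition: in state s take action a, receive reward r, land in s_next.
s, a, r, s_next = 13, 2, 0.0, 14

td_target = r + gamma * max(Q[s_next])   # r + gamma * max over a' of Q(s', a')
td_error = td_target - Q[s][a]           # how far the current estimate is off
Q[s][a] += alpha * td_error              # move the estimate a fraction alpha toward the target

print(round(Q[s][a], 6))
```

Even though the immediate reward is 0, Q(13, 2) moves from 0 to 0.036, because part of the value of the next state flows back through the discounted max term.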

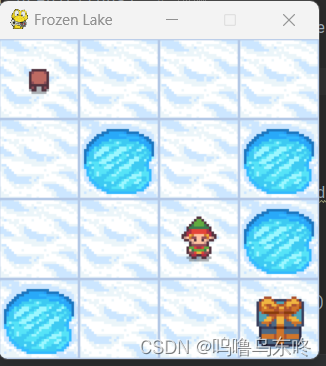

First, we import the gym library and use it to create an environment.

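    import gym

    env = gym.make("FrozenLake-v1", render_mode="human", is_slippery=False)
    env.reset()
    while True:
        env.render()
        # observation: the state reached after taking the action
        # reward: the reward received
        # terminated: bool, whether the game has ended
        # truncated: bool, whether the episode was cut short (e.g. by the step limit)
        # info: dict with additional information about the environment state, mainly for debugging
        observation, reward, terminated, truncated, info = env.step(env.action_space.sample())  # take a random action
        if terminated or truncated:
            break
    env.close()

Running this code shows the character making one attempt at crossing the frozen lake.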

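In this example, the character receives a reward of 1 only for reaching the goal in the bottom-right corner. Falling into a hole in the ice, or taking any other step, yields a reward of 0.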
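The reward structure described above can be sketched without gym at all. The snippet below hard-codes the default 4x4 FrozenLake map and the environment's action encoding (0 = left, 1 = down, 2 = right, 3 = up). `toy_step` is a hypothetical helper written for illustration; it only mimics the non-slippery case and is not gym's implementation.

```python
# Default 4x4 FrozenLake layout: S = start, F = frozen, H = hole, G = goal.
GRID = ["SFFF",
        "FHFH",
        "FFFH",
        "HFFG"]
SIZE = 4

# Action encoding used by FrozenLake: 0 = left, 1 = down, 2 = right, 3 = up.
MOVES = {0: (0, -1), 1: (1, 0), 2: (0, 1), 3: (-1, 0)}


def toy_step(state, action):
    """Hypothetical helper: one deterministic (non-slippery) move."""
    row, col = divmod(state, SIZE)          # state index = row * SIZE + col
    d_row, d_col = MOVES[action]
    # Moving into the border leaves the agent where it is.
    row = min(max(row + d_row, 0), SIZE - 1)
    col = min(max(col + d_col, 0), SIZE - 1)
    next_state = row * SIZE + col
    tile = GRID[row][col]
    reward = 1.0 if tile == "G" else 0.0    # only the goal pays out
    terminated = tile in "HG"               # holes and the goal end the episode
    return next_state, reward, terminated


print(toy_step(14, 2))   # step right from state 14 onto the goal
print(toy_step(4, 2))    # step right from state 4 into a hole
```

Because a hole and an ordinary step both return 0, the agent can only tell them apart through the `terminated` flag and through the value that eventually propagates back from the goal.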

2. Code implementation

1. First, define the main() function

    import gym

    def main():
        env = gym.make("FrozenLake-v1", is_slippery=False)  # create the environment
        # initialize the agent
        agent = QlearingAgent(
            obs_n=env.observation_space.n,
            act_n=env.action_space.n,
            learning_rate=0.2,
            gamma=0.9,
            e_greed=0.2)
        # start training
        for episode in range(5000):
            ep_reward, ep_steps = run_episode(env, agent)
            print('Episode %s: steps = %s , reward = %.1f' % (episode, ep_steps, ep_reward))
        env.close()
        # training finished; watch how the learned policy performs
        env = gym.make("FrozenLake-v1", render_mode="human", is_slippery=False)
        test_episode(env, agent)

2. Implement the run_episode() function

    def run_episode(env, agent):
        total_steps = 0  # number of steps taken in this episode
        total_reward = 0
        obs, _ = env.reset()  # reset the environment and start a new episode
        action = agent.sample(obs)  # choose an action according to the algorithm
        while True:
            # interact with the environment for one step
            next_obs, reward, done, truncated, info = env.step(action)
            next_action = agent.sample(next_obs)  # choose the next action
            # Q-learning update
            agent.learn(obs, action, reward, next_obs, done)
            action = next_action
            obs = next_obs  # the new observation becomes the current one
            total_reward += reward
            total_steps += 1  # count the step
            # stop when the game ends or the step limit cuts the episode short
            if done or truncated:
                break
        return total_reward, total_steps
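run_episode() relies on agent.sample() to balance exploration and exploitation. As a rough illustration, that ε-greedy rule can be written as a stand-alone function. `epsilon_greedy` and its `rng` parameter are hypothetical names introduced here; the sketch uses only the standard library instead of NumPy and is meant to mirror the idea, not to replace the agent's method.

```python
import random


def epsilon_greedy(q_row, epsilon, rng=random):
    """Hypothetical stand-alone version of an epsilon-greedy action choice.

    q_row   -- list of Q-values for the current state, one per action
    epsilon -- probability of picking a uniformly random action
    """
    if rng.random() < epsilon:
        # Explore: ignore the Q-values entirely.
        return rng.randrange(len(q_row))
    # Exploit: pick an action with the highest Q-value,
    # breaking ties at random so no action is systematically favoured.
    best = max(q_row)
    best_actions = [a for a, q in enumerate(q_row) if q == best]
    return rng.choice(best_actions)


# With epsilon = 0 the choice is purely greedy.
print(epsilon_greedy([0.0, 0.0, 0.7, 0.1], epsilon=0.0))
```

Random tie-breaking matters early in training: the Q-table starts at all zeros, so always taking the first maximal action would push the agent in one direction every time.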

3. Now define the agent

    import numpy as np

    class QlearingAgent(object):
        def __init__(self, obs_n, act_n, learning_rate=0.01, gamma=0.9, e_greed=0.3):
            # number of available actions; in this example the four directions up, down, left and right
            self.act_n = act_n
            self.lr = learning_rate  # learning rate
            self.gamma = gamma  # discount factor applied to future rewards
            self.epsilon = e_greed  # probability of choosing a random action
            self.Q = np.zeros((obs_n, act_n))  # initialize the Q-table

        def sample(self, obs):
            if np.random.uniform(0, 1) < (1.0 - self.epsilon):
                action = self.predict(obs)  # choose the action from the Q-table
            else:
                action = np.random.choice(self.act_n)  # explore: pick a random action
            return action

        def predict(self, obs):
            Q_list = self.Q[obs, :]
            maxQ = np.max(Q_list)
            action_list = np.where(Q_list == maxQ)[0]  # several actions may share maxQ
            action = np.random.choice(action_list)
            return action

        # update the Q-table
        def learn(self, obs, action, reward, next_obs, done):
            pre_Q = self.Q[obs, action]
            if done:
                tar_Q = reward  # no next state after a terminal step
            else:
                tar_Q = reward + self.gamma * np.max(self.Q[next_obs, :])
            self.Q[obs, action] += self.lr * (tar_Q - pre_Q)

4. Finally, the test_episode() function

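    import time

    def test_episode(env, agent):
        total_reward = 0
        obs, _ = env.reset()
        while True:
            action = agent.predict(obs)  # greedy action from the Q-table
            next_obs, reward, done, truncated, _ = env.step(action)
            total_reward += reward
            obs = next_obs
            time.sleep(0.1)  # slow down so the rendering is easier to follow
            env.render()
            if done or truncated:
                print('test reward = %.1f' % (total_reward))
                break

    # entry point: train the agent, then run one rendered test episode
    if __name__ == "__main__":
        main()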
