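The steps of a deep learning task:

- 1. Data processing: read the data and preprocess it

- 2. Model design: the network structure (the model hypothesis)

- 3. Training configuration: the optimizer (the algorithm that searches for a solution)

- 4. Training process: run the training procedure in a loop; each pass consists of forward computation + loss computation (the optimization objective) + backpropagation

- 5. Saving and testing the model: save the trained model, then load it again for prediction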
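To make the five steps concrete without depending on PaddlePaddle, here is a minimal framework-free sketch in plain Python. It is not the tutorial's code. The following are all illustrative assumptions:

- the synthetic data (y = 2x + 1) and the single feature
- the learning rate and the iteration count
- the use of the json module to stand in for saving a model

```python
import json

# 1. Data processing: a tiny synthetic dataset following y = 2x + 1 (assumption)
xs = [float(i) for i in range(10)]
ys = [2.0 * x + 1.0 for x in xs]

# 2. Model design: the hypothesis y_hat = w * x + b
w, b = 0.0, 0.0

def forward(x):
    return w * x + b

# 3. Training configuration: plain gradient descent with a fixed learning rate
learning_rate = 0.01

# 4. Training process: forward -> loss -> gradients -> parameter update
for epoch in range(5000):
    preds = [forward(x) for x in xs]
    errors = [p - y for p, y in zip(preds, ys)]
    loss = sum(e * e for e in errors) / len(errors)            # mean squared error
    grad_w = sum(2 * e * x for e, x in zip(errors, xs)) / len(xs)
    grad_b = sum(2 * e for e in errors) / len(xs)
    w -= learning_rate * grad_w
    b -= learning_rate * grad_b

# 5. "Save" the parameters, load them back and use them for a prediction
saved = json.dumps({"w": w, "b": b})
params = json.loads(saved)
print(round(params["w"], 3), round(params["b"], 3))  # close to 2 and 1
print(params["w"] * 20.0 + params["b"])              # approximately 41.0
```

The PaddlePaddle code below follows the same five steps. The only difference is that the framework computes the gradients through backpropagation instead of the hand-written formulas.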

I. Data Processing
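The load_data() function below does four things:

- It reads the file as one flat stream of numbers.
- It reshapes the stream into rows of 14 values.
- It splits the rows 80/20.
- It normalizes every column as (x − mean) / (max − min), using statistics taken from the training rows only.

A plain-Python sketch of the same arithmetic follows. The 3-column toy table is made up for illustration.

```python
# Toy flat stream standing in for np.fromfile(): 5 records x 3 columns (made-up values)
flat = [1.0, 10.0, 100.0,
        2.0, 20.0, 200.0,
        3.0, 30.0, 300.0,
        4.0, 40.0, 400.0,
        5.0, 50.0, 500.0]
feature_num = 3

# Reshape into rows of feature_num values (like data.reshape([N, feature_num]))
rows = [flat[i:i + feature_num] for i in range(0, len(flat), feature_num)]

# 80/20 split; the statistics come from the training rows only
offset = int(len(rows) * 0.8)
train_rows = rows[:offset]
columns = list(zip(*train_rows))
maximums = [max(c) for c in columns]
minimums = [min(c) for c in columns]
avgs = [sum(c) / len(c) for c in columns]

# Normalize every row (training and test) with the training statistics
normalized = [[(v - avgs[i]) / (maximums[i] - minimums[i]) for i, v in enumerate(r)]
              for r in rows]
train_data, test_data = normalized[:offset], normalized[offset:]
print(len(train_data), len(test_data))  # 4 1
print(train_data[0])                    # [-0.5, -0.5, -0.5]
print(test_data[0])                     # [0.8333333333333334, 0.8333333333333334, 0.8333333333333334]
```

Computing the statistics on the training rows alone keeps any information about the test rows out of training. This is why the test row is normalized with numbers it did not contribute to.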

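```python
import paddle
import paddle.fluid as fluid
import paddle.fluid.dygraph as dygraph
from paddle.fluid.dygraph import FC
import numpy as np

# Load the data
def load_data():
    # Read the data from file
    datafile = 'housing.data'
    data = np.fromfile(datafile, sep=' ')

    # Each record has 14 fields: the first 13 are influencing factors,
    # the 14th is the corresponding median house price
    feature_names = ['CRIM', 'ZN', 'INDUS', 'CHAS', 'NOX', 'RM', 'AGE', 'DIS', 'RAD', 'TAX',
                     'PTRATIO', 'B', 'LSTAT', 'MEDV']
    feature_num = len(feature_names)

    # Reshape the flat array into shape [N, 14]
    data = data.reshape([data.shape[0] // feature_num, feature_num])

    # Split the dataset into a training set and a test set:
    # 80% of the data for training, 20% for testing.
    # The training and test sets must not overlap.
    ratio = 0.8
    offset = int(data.shape[0] * ratio)
    train_data = data[:offset]

    # Compute the max, min and mean of every column of the training set.
    # They are also stored as globals so that the prediction step can
    # normalize new inputs and de-normalize the model output.
    global max_values, min_values, avg_values
    maximums, minimums, avgs = train_data.max(axis=0), \
                               train_data.min(axis=0), \
                               train_data.sum(axis=0) / train_data.shape[0]
    max_values, min_values, avg_values = maximums, minimums, avgs

    # Normalize the data (the label column MEDV included)
    for i in range(feature_num):
        data[:, i] = (data[:, i] - avgs[i]) / (maximums[i] - minimums[i])

    # Split into training and test sets
    train_data = data[:offset]
    test_data = data[offset:]
    return train_data, test_data
```

II. Model Design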
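The Regressor below wraps a single fully connected layer, FC, with size=1 and act=None. For a batch of 13-feature rows it therefore computes y = x · w + b with no activation, which is exactly linear regression.

The plain-Python sketch below shows that computation. The weights and bias are hypothetical values chosen for illustration; in Paddle they are parameters created and learned by FC.

```python
def linear_forward(batch, weights, bias):
    """Fully connected layer with one output and no activation: y = x . w + b."""
    return [[sum(x_i * w_i for x_i, w_i in zip(row, weights)) + bias] for row in batch]

# Hypothetical weights and bias for 13 input features
weights = [0.1] * 13
bias = 0.5

batch = [[1.0] * 13,   # a row with all 13 features equal to 1.0
         [0.0] * 13]   # a row with all 13 features equal to 0.0
out = linear_forward(batch, weights, bias)
print(len(out), len(out[0]))  # 2 1, i.e. shape [batch_size, 1]
print(out[1][0])              # 0.5: with all-zero features only the bias remains
```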

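```python
class Regressor(fluid.dygraph.Layer):
    def __init__(self, name_scope):
        super(Regressor, self).__init__(name_scope)
        name_scope = self.full_name()
        # One fully connected layer with a single output and no activation,
        # i.e. a linear regression model
        self.fc = FC(name_scope, size=1, act=None)

    # Forward computation
    def forward(self, inputs):
        x = self.fc(inputs)
        return x
```

III. Training Configuration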
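The optimizer configured below is SGD with learning_rate=0.01. Each call to opt.minimize() moves every parameter against its gradient: w ← w − lr · ∂L/∂w.

The one-parameter sketch below uses a toy loss, L(w) = (w − 3)². That loss is an assumption chosen only because its gradient, 2(w − 3), is easy to check by hand.

```python
def sgd_step(w, grad, learning_rate=0.01):
    """One SGD update: move the parameter against its gradient."""
    return w - learning_rate * grad

def grad_loss(w):
    # Gradient of the toy loss L(w) = (w - 3)^2
    return 2.0 * (w - 3.0)

w = 0.0
w = sgd_step(w, grad_loss(w))
print(w)  # 0.06  (= 0 - 0.01 * 2 * (0 - 3))

for _ in range(1000):
    w = sgd_step(w, grad_loss(w))
print(round(w, 4))  # approaches the minimum at w = 3
```

A smaller learning rate takes smaller, safer steps but needs more iterations. A rate that is too large can overshoot the minimum.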

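```python
# Set up the PaddlePaddle dygraph (dynamic graph) environment
with fluid.dygraph.guard():
    # Instantiate the linear regression model defined above
    model = Regressor("Regressor")
    # Switch the model to training mode
    model.train()
    # Load the data
    training_data, test_data = load_data()
    # Define the optimization algorithm: stochastic gradient descent (SGD)
    # with a learning rate of 0.01
    opt = fluid.optimizer.SGD(learning_rate=0.01)
```

IV. Training Process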

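Training uses two nested loops. The outer loop runs over epochs and the inner loop over mini-batches. Each inner iteration performs four computations: forward computation, loss computation, backpropagation (gradient computation) and a parameter update.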
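The standard Boston housing file has 506 records. With that file, the 80% split leaves 404 training rows. BATCH_SIZE = 10 then gives 41 mini-batches per epoch, the last one holding only 4 rows, and the iter_id % 20 == 0 check prints at iterations 0, 20 and 40.

The plain-Python sketch below reproduces this loop structure. The row indices stand in for real records, and EPOCH_NUM = 2 is used only to keep the output short. The sketch also shows the loss arithmetic on a made-up batch of two predictions:

- square_error_cost gives element-wise squared errors, and their mean is the MSE.
- Taking the square root first, as the code below does, gives the mean absolute error.

```python
import random

def mini_batches(rows, batch_size):
    """Split rows into consecutive chunks, like the list comprehension in the training loop."""
    return [rows[k:k + batch_size] for k in range(0, len(rows), batch_size)]

def square_error_cost(predicts, labels):
    # Element-wise squared error for each prediction
    return [(p - y) ** 2 for p, y in zip(predicts, labels)]

def mean(values):
    return sum(values) / len(values)

train_rows = list(range(404))          # stand-in for 404 training records
EPOCH_NUM, BATCH_SIZE = 2, 10
printed = []
for epoch_id in range(EPOCH_NUM):              # outer loop: epochs
    random.shuffle(train_rows)                 # shuffle before each epoch
    batches = mini_batches(train_rows, BATCH_SIZE)
    for iter_id, batch in enumerate(batches):  # inner loop: mini-batches
        if iter_id % 20 == 0:
            printed.append((epoch_id, iter_id))

print(len(batches), len(batches[-1]))  # 41 4
print(printed)  # [(0, 0), (0, 20), (0, 40), (1, 0), (1, 20), (1, 40)]

# Loss on a made-up batch: predictions [2, 3], labels [1, 5]
errors = square_error_cost([2.0, 3.0], [1.0, 5.0])  # [1.0, 4.0]
print(mean(errors))                                 # 2.5 (mean squared error)
print(mean([e ** 0.5 for e in errors]))             # 1.5 (mean absolute error)
```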

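```python
with dygraph.guard():
    EPOCH_NUM = 10   # number of outer-loop iterations (epochs)
    BATCH_SIZE = 10  # batch size

    # Outer loop: one pass over the training set per epoch
    for epoch_id in range(EPOCH_NUM):
        # Shuffle the order of the training data before each epoch
        np.random.shuffle(training_data)
        # Split the training data into mini-batches of 10 records each
        mini_batches = [training_data[k:k+BATCH_SIZE] for k in range(0, len(training_data), BATCH_SIZE)]

        # Inner loop: one parameter update per mini-batch
        for iter_id, mini_batch in enumerate(mini_batches):
            x = np.array(mini_batch[:, :-1]).astype('float32')  # features of the current batch
            y = np.array(mini_batch[:, -1:]).astype('float32')  # labels of the current batch (true prices)
            # Convert the numpy arrays into PaddlePaddle dygraph variables
            house_features = dygraph.to_variable(x)
            prices = dygraph.to_variable(y)

            # Forward computation
            predicts = model(house_features)

            # Loss computation: the sqrt of the squared error is the absolute
            # error, so avg_loss is the mean absolute error of the batch
            loss = fluid.layers.square_error_cost(predicts, label=prices)
            avg_loss = fluid.layers.mean(fluid.layers.sqrt(loss))
            if iter_id % 20 == 0:
                print("epoch: {}, iter: {}, loss is: {}".format(epoch_id, iter_id, avg_loss.numpy()))

            # Backpropagation
            avg_loss.backward()
            # Minimize the loss and update the parameters
            opt.minimize(avg_loss)
            # Clear the gradients before the next iteration
            model.clear_gradients()

    # Save the model
    fluid.save_dygraph(model.state_dict(), 'LR_model')
```

V. Saving the Model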

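```python
# Set up the PaddlePaddle dygraph environment
with fluid.dygraph.guard():
    # Save the model parameters under the name LR_model
    # (save_dygraph writes them to LR_model.pdparams)
    fluid.save_dygraph(model.state_dict(), 'LR_model')
    print("Model saved successfully; the parameters are stored in LR_model")
```

VI. Prediction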
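load_data() also normalizes the label column MEDV, so the model predicts a normalized price. The prediction code must therefore undo the transform for the last column: if p_norm = (p − avg) / (max − min), then p = p_norm · (max − min) + avg.

The round trip below uses made-up statistics (avg = 22.5, max = 50.0, min = 5.0). These are assumptions, not values computed from housing.data.

```python
def normalize(value, avg, max_v, min_v):
    return (value - avg) / (max_v - min_v)

def denormalize(value, avg, max_v, min_v):
    # Inverse of normalize(): the step applied to the model output
    return value * (max_v - min_v) + avg

avg, max_v, min_v = 22.5, 50.0, 5.0   # hypothetical MEDV statistics
price = 31.5
p_norm = normalize(price, avg, max_v, min_v)
print(p_norm)                                  # 0.2
print(denormalize(p_norm, avg, max_v, min_v))  # 31.5
```

The input features also have to go through normalize() with the same training-set statistics before they reach the model. Otherwise the model sees values on a scale it was never trained on.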

def load_one_example(data_dir):

f = open(data_dir, 'r')

datas = f.readlines()

# 选择倒数第10条数据用于测试

tmp = datas[-10]

tmp = tmp.strip().split()

one_data = [float(v) for v in tmp]

# 对数据进行归一化处理

for i in range(len(one_data)-1):

one_data[i] = (one_data[i] - avg_values[i]) / (max_values[i] - min_values[i])

data = np.reshape(np.array(one_data[:-1]), [1, -1]).astype(np.float32)

label = one_data[-1]

return data, label

with dygraph.guard():

# 参数为保存模型参数的文件地址

model_dict, _ = fluid.load_dygraph('LR_model')

model.load_dict(model_dict)

model.eval()

# 参数为数据集的文件地址

test_data, label = load_one_example('./work/housing.data')

# 将数据转为动态图的variable格式

test_data = dygraph.to_variable(test_data)

results = model(test_data)

# 对结果做反归一化处理

results = results * (max_values[-1] - min_values[-1]) + avg_values[-1]

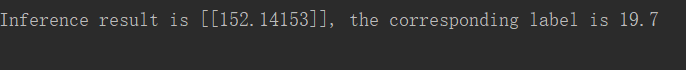

print("Inference result is {}, the corresponding label is {}".format(results.numpy(), label))预测结果:

VII. Complete Program

```python
import paddle
import paddle.fluid as fluid
import paddle.fluid.dygraph as dygraph
from paddle.fluid.dygraph import FC
import numpy as np

# Load the data
def load_data():
    # Read the data from file
    datafile = 'housing.data'
    data = np.fromfile(datafile, sep=' ')

    # Each record has 14 fields: the first 13 are influencing factors,
    # the 14th is the corresponding median house price
    feature_names = ['CRIM', 'ZN', 'INDUS', 'CHAS', 'NOX', 'RM', 'AGE', 'DIS', 'RAD', 'TAX',
                     'PTRATIO', 'B', 'LSTAT', 'MEDV']
    feature_num = len(feature_names)

    # Reshape the flat array into shape [N, 14]
    data = data.reshape([data.shape[0] // feature_num, feature_num])

    # 80% of the data for training, 20% for testing; the two sets must not overlap
    ratio = 0.8
    offset = int(data.shape[0] * ratio)
    train_data = data[:offset]

    # Max, min and mean of every column of the training set, also stored
    # as globals so that prediction can reuse them
    global max_values, min_values, avg_values
    maximums, minimums, avgs = train_data.max(axis=0), \
                               train_data.min(axis=0), \
                               train_data.sum(axis=0) / train_data.shape[0]
    max_values, min_values, avg_values = maximums, minimums, avgs

    # Normalize the data (the label column included)
    for i in range(feature_num):
        data[:, i] = (data[:, i] - avgs[i]) / (maximums[i] - minimums[i])

    # Split into training and test sets
    train_data = data[:offset]
    test_data = data[offset:]
    return train_data, test_data

# Network definition: a single fully connected layer (linear regression)
class Regressor(fluid.dygraph.Layer):
    def __init__(self, name_scope):
        super(Regressor, self).__init__(name_scope)
        name_scope = self.full_name()
        self.fc = FC(name_scope, size=1, act=None)

    def forward(self, inputs):
        x = self.fc(inputs)
        return x

def trainer():
    use_gpu = False
    place = fluid.CUDAPlace(0) if use_gpu else fluid.CPUPlace()

    # Training configuration, inside the PaddlePaddle dygraph environment
    with fluid.dygraph.guard(place):
        # Instantiate the regression model and switch it to training mode
        model = Regressor("Regressor")
        model.train()
        # Load the data
        train_data, test_data = load_data()
        # Optimizer: SGD with a learning rate of 0.01
        opt = fluid.optimizer.SGD(learning_rate=0.01)

        EPOCH_NUM = 10   # number of outer-loop iterations (epochs)
        BATCH_SIZE = 10  # batch size

        # Outer loop over epochs
        for epoch_id in range(EPOCH_NUM):
            # Shuffle the training data before each epoch
            np.random.shuffle(train_data)
            # Split the training data into mini-batches of 10 records each
            mini_batches = [train_data[k:k+BATCH_SIZE] for k in range(0, len(train_data), BATCH_SIZE)]
            # Inner loop over mini-batches
            for iter_id, mini_batch in enumerate(mini_batches):
                x = np.array(mini_batch[:, :-1]).astype('float32')
                t = np.array(mini_batch[:, -1:]).astype('float32')
                # Convert the numpy arrays into dygraph variables
                house_features = dygraph.to_variable(x)
                prices = dygraph.to_variable(t)
                # Forward computation
                predicts = model(house_features)
                # Loss: sqrt of the squared error is the absolute error,
                # so avg_loss is the mean absolute error of the batch
                loss = fluid.layers.square_error_cost(predicts, label=prices)
                avg_loss = fluid.layers.mean(fluid.layers.sqrt(loss))
                if iter_id % 20 == 0:
                    print('epoch: {}, iter: {}, loss is: {}'.format(epoch_id, iter_id, avg_loss.numpy()))
                # Backpropagation
                avg_loss.backward()
                # Minimize the loss and update the parameters
                opt.minimize(avg_loss)
                # Clear the gradients
                model.clear_gradients()

        # Save the model
        fluid.save_dygraph(model.state_dict(), 'LR_model')
        print('Model saved successfully; the parameters are stored in LR_model')

def load_one_example(data_dir):
    with open(data_dir, 'r') as f:
        datas = f.readlines()
    # Use the 10th record from the end as the test example
    tmp = datas[-10].strip().split()
    one_data = [float(v) for v in tmp]
    # Normalize the features with the training-set statistics
    for i in range(len(one_data) - 1):
        one_data[i] = (one_data[i] - avg_values[i]) / (max_values[i] - min_values[i])
    data = np.reshape(np.array(one_data[:-1]), [1, -1]).astype(np.float32)
    label = one_data[-1]
    return data, label

def predict():
    use_gpu = False
    place = fluid.CUDAPlace(0) if use_gpu else fluid.CPUPlace()
    # Called only to recompute the training-set statistics
    # (max_values, min_values, avg_values) used for (de)normalization
    load_data()
    with dygraph.guard(place):
        model = Regressor("Regressor")
        # Argument: path of the saved model parameters
        model_dict, _ = fluid.load_dygraph('LR_model')
        model.load_dict(model_dict)
        model.eval()
        # Argument: path of the dataset file
        test_data, label = load_one_example('housing.data')
        test_data = dygraph.to_variable(test_data)
        results = model(test_data)
        # De-normalize the prediction back to the original price scale
        results = results * (max_values[-1] - min_values[-1]) + avg_values[-1]
        print('Inference result is {}, the corresponding label is {}'.format(results.numpy(), label))

if __name__ == "__main__":
    # Run trainer() once to produce LR_model, then run predict()
    # trainer()
    predict()
```
