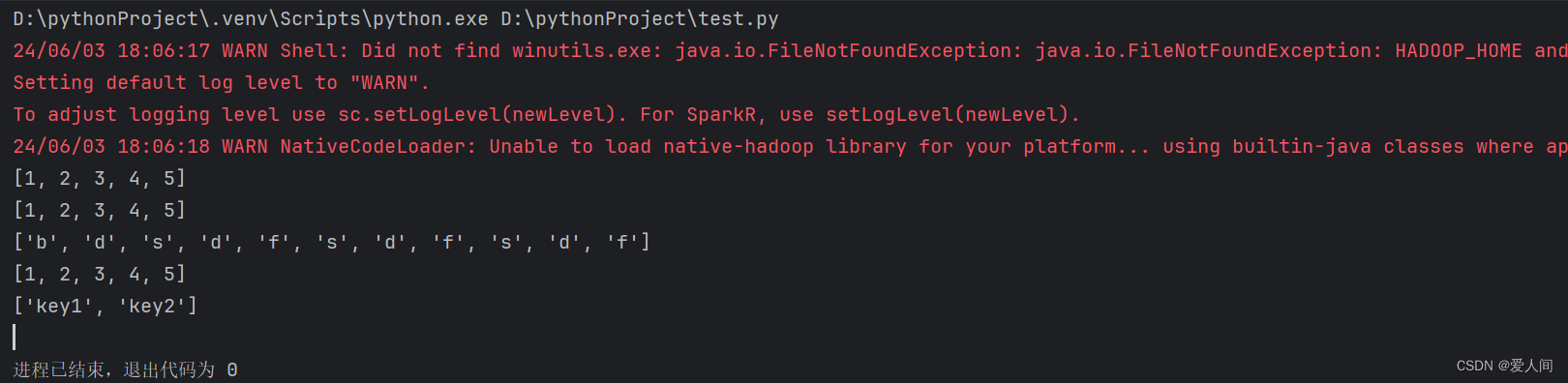

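```python
from pyspark import SparkConf, SparkContext

# Run Spark locally, using all available CPU cores
conf = SparkConf().setMaster("local[*]").setAppName("test_spark_app")
sc = SparkContext(conf=conf)

# parallelize() turns a Python container into an RDD
rdd1 = sc.parallelize([1, 2, 3, 4, 5])                       # list
rdd2 = sc.parallelize((1, 2, 3, 4, 5))                       # tuple
rdd3 = sc.parallelize("bdsdfsdfsdf")                         # str: one element per character
rdd4 = sc.parallelize({1, 2, 3, 4, 5})                       # set: order follows set iteration, which is not guaranteed
rdd5 = sc.parallelize({"key1": "value1", "key2": "value2"})  # dict: only the keys become elements

# collect() returns all elements of the RDD to the driver as a Python list
print(rdd1.collect())
print(rdd2.collect())
print(rdd3.collect())
print(rdd4.collect())
print(rdd5.collect())

# Release the resources held by the SparkContext
sc.stop()
```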
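
Which elements end up in each RDD follows from how Python iterates each container: a string yields its characters, and a dict yields only its keys. The sketch below needs no Spark at all; it uses plain `list()` to approximate what `collect()` prints for each of the five inputs above, under the assumption that `parallelize` keeps exactly the items that iterating the container produces. The helper name `expected_elements` is hypothetical.

```python
# A plain-Python sketch (no Spark required). Assumption: sc.parallelize(c)
# keeps exactly the items that iterating c yields, so list(c) approximates
# what sc.parallelize(c).collect() returns.


def expected_elements(container):
    """Hypothetical helper: the items Python iteration yields, as a list."""
    return list(container)


samples = {
    "list": [1, 2, 3, 4, 5],
    "tuple": (1, 2, 3, 4, 5),
    "str": "bdsdfsdfsdf",
    "set": {1, 2, 3, 4, 5},  # set iteration order is not guaranteed
    "dict": {"key1": "value1", "key2": "value2"},
}

for name, container in samples.items():
    print(name, expected_elements(container))

# Iterating a dict yields only its keys; .items() yields (key, value) tuples.
print("dict.items", expected_elements(samples["dict"].items()))
```

If the dict values matter, passing `list(d.items())` to `parallelize` instead of the dict itself should give an RDD whose elements are `(key, value)` tuples.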

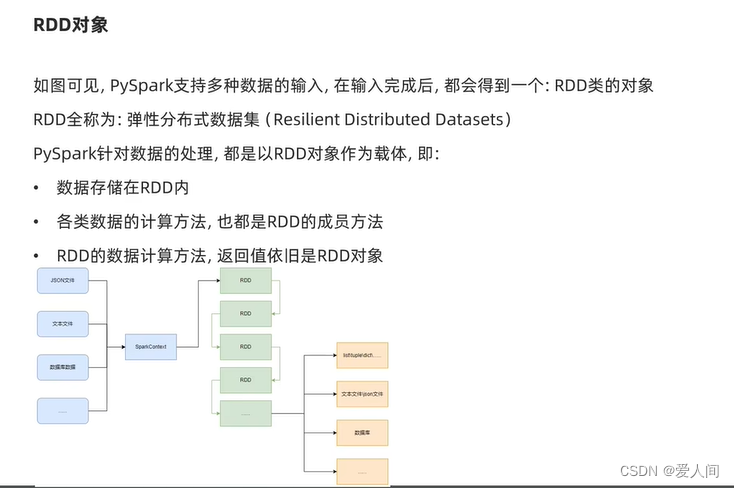

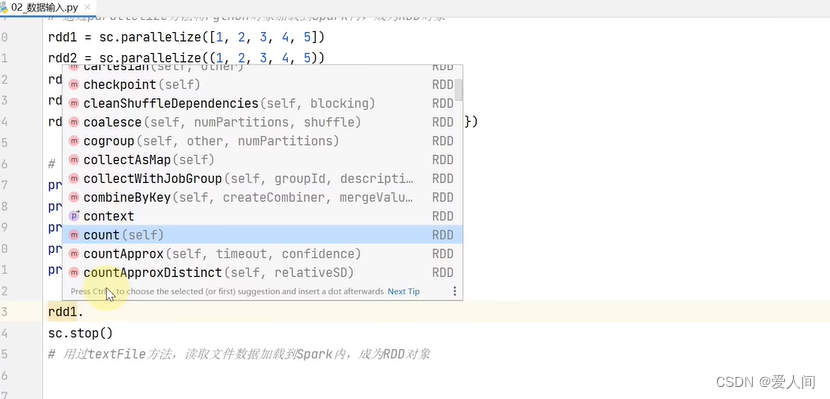

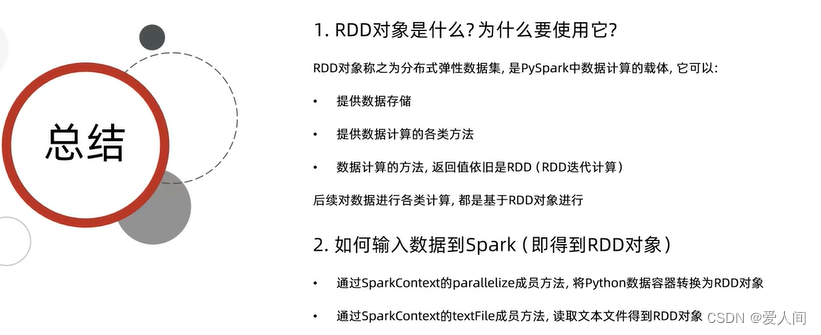

Once the data has been converted into an RDD object, we get access to the many methods that RDDs provide.

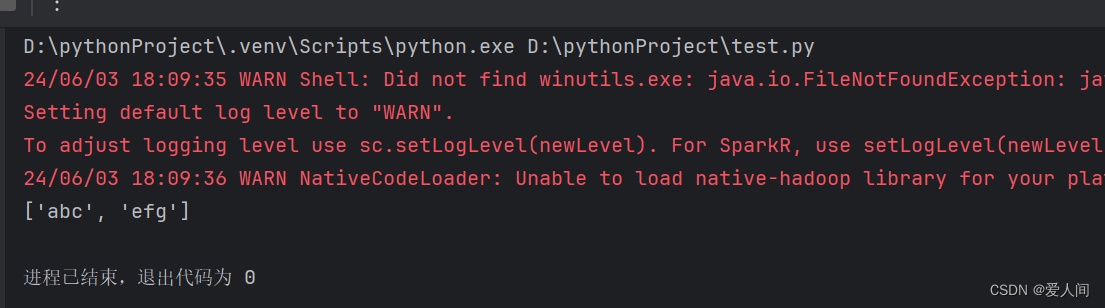

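```python
from pyspark import SparkConf, SparkContext

conf = SparkConf().setMaster("local[*]").setAppName("test_spark_app")
sc = SparkContext(conf=conf)

# textFile() reads a text file into an RDD; each line becomes one string element
rdd = sc.textFile("d:/hello.txt")  # local file on a Windows drive
print(rdd.collect())

sc.stop()
```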
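
`textFile` produces one string element per line of the file. As a rough, Spark-free illustration, the sketch below reads a file line by line in plain Python and strips the line terminators, which approximates what `rdd.collect()` returns for a small UTF-8 text file. The helper `read_lines_like_textfile` and the temporary sample file are hypothetical stand-ins for `d:/hello.txt`. Spark itself splits lines with Hadoop's `TextInputFormat`, so edge cases may differ.

```python
import os
import tempfile


def read_lines_like_textfile(path, encoding="utf-8"):
    """Hypothetical helper approximating sc.textFile(path).collect().

    Each line becomes one string with its line terminator removed.
    Text mode uses universal newlines, so LF, CRLF and CR all end a line.
    """
    with open(path, encoding=encoding) as f:
        return [line.rstrip("\n") for line in f]


# Create a small stand-in for d:/hello.txt in the system temp directory
fd, path = tempfile.mkstemp(suffix=".txt")
with os.fdopen(fd, "w", encoding="utf-8", newline="") as f:
    f.write("hello spark\nhello python\n")

try:
    print(read_lines_like_textfile(path))  # ['hello spark', 'hello python']
finally:
    os.remove(path)  # clean up the temporary file
```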
