The previous post covered the interaction between MediaPlayer and MediaPlayerService. This post traces how NuPlayer relates to AudioTrack, MediaCodec, and OMX.

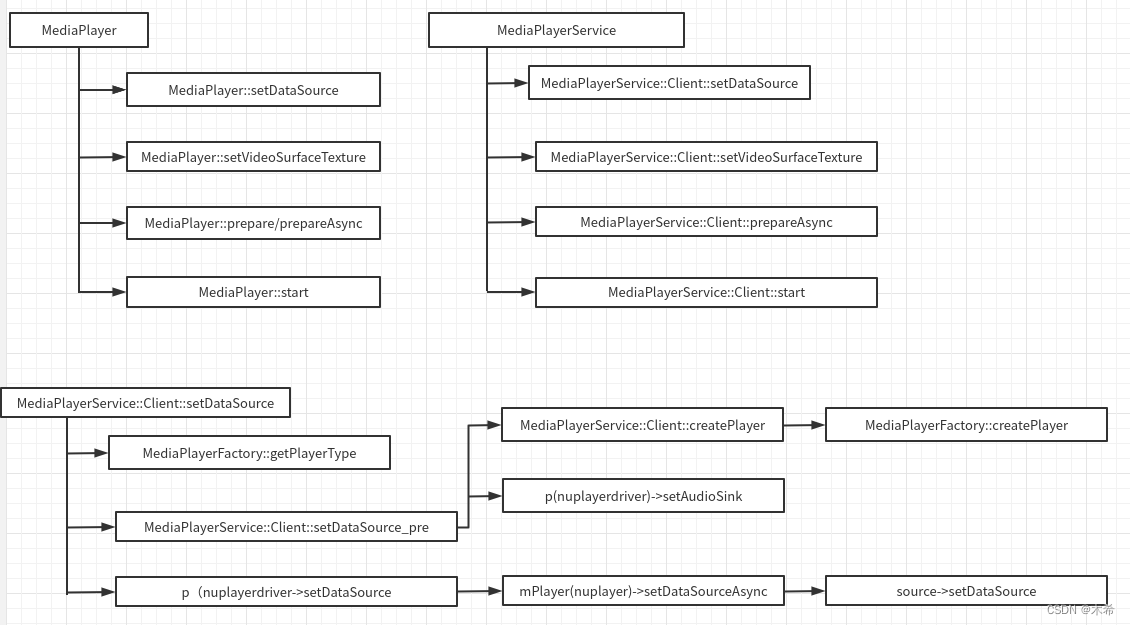

The figure above shows the interaction among MediaPlayer, MediaPlayerService, and NuPlayer on Android.

As the previous post described, NuPlayer creation begins in MediaPlayerFactory, which creates a NuPlayerDriver:

virtual sp<MediaPlayerBase> createPlayer(pid_t pid) {

ALOGV(" create NuPlayer");

return new NuPlayerDriver(pid);

}

The NuPlayerDriver constructor creates the NuPlayer and then initializes it. NuPlayer initialization is simple: it does little more than set up a message.

NuPlayerDriver::NuPlayerDriver(pid_t pid)

: …………

mPlayer(new NuPlayer(pid, mMediaClock)),

………… {

ALOGD("NuPlayerDriver(%p) created, clientPid(%d)", this, pid);

mLooper->setName("NuPlayerDriver Looper");

mMediaClock->init();

// set up an analytics record

mMetricsItem = mediametrics::Item::create(kKeyPlayer);

mLooper->start(

false, /* runOnCallingThread */

true, /* canCallJava */

PRIORITY_AUDIO);

mLooper->registerHandler(mPlayer);

mPlayer->init(this);

}

After the player is created, MediaPlayerService creates an AudioOutput, which wraps AudioTrack, and passes it to NuPlayer, where it is stored as mAudioSink.

sp<MediaPlayerBase> MediaPlayerService::Client::setDataSource_pre(

player_type playerType)

{

ALOGV("player type = %d", playerType);

// create the right type of player

sp<MediaPlayerBase> p = createPlayer(playerType);

if (p == NULL) {

return p;

}

…………

if (!p->hardwareOutput()) {

mAudioOutput = new AudioOutput(mAudioSessionId, mAttributionSource,

mAudioAttributes, mAudioDeviceUpdatedListener);

static_cast<MediaPlayerInterface*>(p.get())->setAudioSink(mAudioOutput);

}

return p;

}

void NuPlayer::setAudioSink(const sp<MediaPlayerBase::AudioSink> &sink) {

sp<AMessage> msg = new AMessage(kWhatSetAudioSink, this);

msg->setObject("sink", sink);

msg->post();

}

void NuPlayer::onMessageReceived(const sp<AMessage> &msg) {

switch (msg->what()) {

…………

case kWhatSetAudioSink:

{

ALOGV("kWhatSetAudioSink");

sp<RefBase> obj;

CHECK(msg->findObject("sink", &obj));

mAudioSink = static_cast<MediaPlayerBase::AudioSink *>(obj.get());

break;

}

…………

default:

TRESPASS();

break;

}

}

On start, NuPlayer creates a Renderer for rendering and passes the audio sink to its constructor:

void NuPlayer::onStart(int64_t startPositionUs, MediaPlayerSeekMode mode) {

…………

sp<AMessage> notify = new AMessage(kWhatRendererNotify, this);

++mRendererGeneration;

notify->setInt32("generation", mRendererGeneration);

// mAudioSink is the AudioOutput passed in by MediaPlayerService; it wraps AudioTrack

mRenderer = new Renderer(mAudioSink, mMediaClock, notify, flags);

mRendererLooper = new ALooper;

mRendererLooper->setName("NuPlayerRenderer");

mRendererLooper->start(false, false, ANDROID_PRIORITY_AUDIO);

mRendererLooper->registerHandler(mRenderer);

…………

// Post kWhatScanSources; its handler calls instantiateDecoder() to create the decoders

postScanSources();

}
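The excerpts above all follow the same pattern: a caller posts a message and returns, and a handler processes it later on a looper thread. The following is a minimal sketch of that pattern in standard C++, not the real ALooper/AHandler/AMessage code. `Msg`, `MiniLooper`, and `runDemo` are hypothetical names introduced only for illustration. It also assumes that the "generation" stamp on a notification is compared against the current generation so that messages from an older renderer can be ignored.

```cpp
#include <cassert>
#include <condition_variable>
#include <deque>
#include <functional>
#include <mutex>
#include <thread>
#include <utility>
#include <vector>

// Hypothetical stand-in for AMessage: a message id plus a generation stamp.
struct Msg {
    int what;
    int generation;
};

// Hypothetical stand-in for ALooper + AHandler: one worker thread drains a
// queue and hands every message to a single handler callback.
class MiniLooper {
public:
    explicit MiniLooper(std::function<void(const Msg&)> handler)
        : mHandler(std::move(handler)), mThread([this] { loop(); }) {}

    ~MiniLooper() { stop(); }

    // Enqueue the message and return immediately, like posting an AMessage.
    void post(const Msg& msg) {
        {
            std::lock_guard<std::mutex> lock(mLock);
            mQueue.push_back(msg);
        }
        mCond.notify_one();
    }

    // Let the worker drain whatever is still queued, then join it.
    void stop() {
        {
            std::lock_guard<std::mutex> lock(mLock);
            mStopping = true;
        }
        mCond.notify_one();
        if (mThread.joinable()) mThread.join();
    }

private:
    void loop() {
        for (;;) {
            std::unique_lock<std::mutex> lock(mLock);
            mCond.wait(lock, [this] { return mStopping || !mQueue.empty(); });
            if (mQueue.empty()) return;  // only reachable once stopping
            Msg msg = mQueue.front();
            mQueue.pop_front();
            lock.unlock();
            mHandler(msg);  // run the handler without holding the lock
        }
    }

    std::function<void(const Msg&)> mHandler;
    std::mutex mLock;
    std::condition_variable mCond;
    std::deque<Msg> mQueue;
    bool mStopping = false;
    std::thread mThread;  // declared last so the other members exist first
};

// Posts three notifications, one stamped with an outdated generation, and
// returns the ids of the messages that passed the generation check.
std::vector<int> runDemo() {
    const int currentGeneration = 2;
    std::vector<int> accepted;
    MiniLooper looper([&](const Msg& msg) {
        if (msg.generation != currentGeneration) return;  // stale, drop it
        accepted.push_back(msg.what);
    });
    looper.post({1, 2});
    looper.post({2, 1});  // stamped by an older generation, ignored
    looper.post({3, 2});
    looper.stop();  // join the worker before reading `accepted`
    return accepted;
}
```

Calling `runDemo()` yields only the ids of the two messages stamped with the current generation. Because the handler runs on the looper thread, the poster never blocks on the work itself, which is why `setAudioSink` can simply post and return.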

On start, a kWhatScanSources message is posted, which leads to the decoders being created:

status_t NuPlayer::instantiateDecoder(

bool audio, sp<DecoderBase> *decoder, bool checkAudioModeChange) {

…………

if (audio) {

sp<AMessage> notify = new AMessage(kWhatAudioNotify, this);

++mAudioDecoderGeneration;

notify->setInt32("generation", mAudioDecoderGeneration);

if (checkAudioModeChange) {

determineAudioModeChange(format);

}

if (mOffloadAudio) {

mSource->setOffloadAudio(true /* offload */);

const bool hasVideo = (mSource->getFormat(false /*audio */) != NULL);

format->setInt32("has-video", hasVideo);

*decoder = new DecoderPassThrough(notify, mSource, mRenderer);

ALOGV("instantiateDecoder audio DecoderPassThrough hasVideo: %d", hasVideo);

} else {

mSource->setOffloadAudio(false /* offload */);

*decoder = new Decoder(notify, mSource, mPID, mUID, mRenderer);

ALOGV("instantiateDecoder audio Decoder");

}

mAudioDecoderError = false;

} else {

…………

*decoder = new Decoder(

notify, mSource, mPID, mUID, mRenderer, mSurface, mCCDecoder);

…………

}

(*decoder)->init();

…………

(*decoder)->configure(format);

…………

return OK;

}

void NuPlayer::Decoder::onConfigure(const sp<AMessage> &format) {

…………

mCodec = MediaCodec::CreateByType(

mCodecLooper, mime.c_str(), false /* encoder */, NULL /* err */, mPid, mUid, format);

…………

err = mCodec->configure(

format, mSurface, crypto, 0 /* flags */);

…………

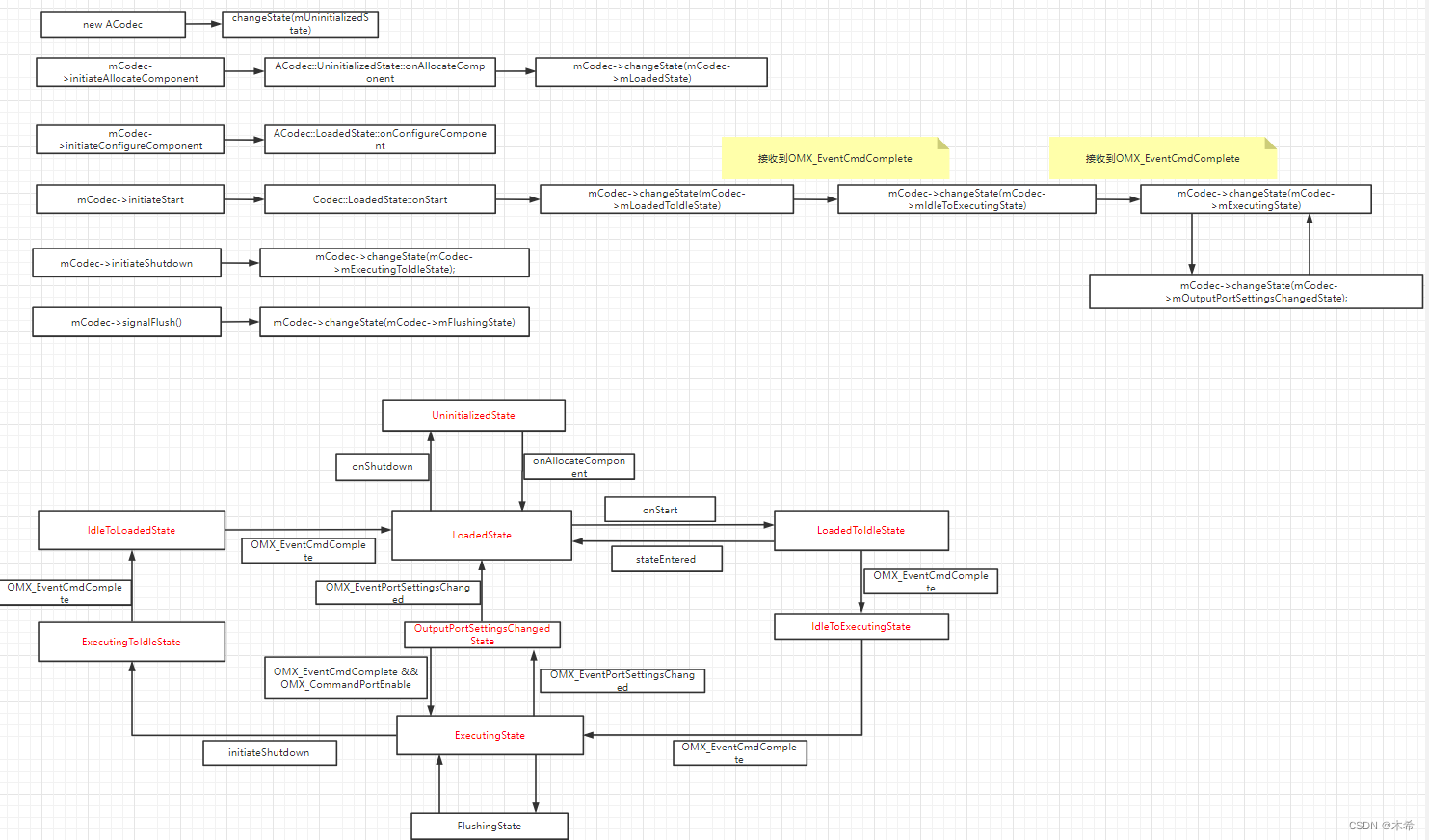

}下图是mediaplayer中关于解封装与解码部分的数据传递,视屏硬件解码由omx回调,通知acodec与mediacodec
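The callback chain described here (OMX notifies ACodec, which notifies MediaCodec, which finally notifies the player's decoder) can be sketched with plain `std::function` callbacks. This is a rough illustration only. `FakeOmx`, `FakeACodec`, `FakeMediaCodec`, and `traceOneFrame` are hypothetical names, and the real framework passes these notifications as messages between loopers rather than as direct function calls.

```cpp
#include <cassert>
#include <functional>
#include <string>
#include <utility>
#include <vector>

using Trace = std::vector<std::string>;
using BufferCallback = std::function<void(int)>;  // argument: buffer id

// Hypothetical stand-in for the hardware component.
class FakeOmx {
public:
    void setCallback(BufferCallback cb) { mCallback = std::move(cb); }
    // Simulates the hardware finishing one decoded frame.
    void finishFrame(int bufferId) {
        if (mCallback) mCallback(bufferId);
    }

private:
    BufferCallback mCallback;
};

// Hypothetical stand-in for ACodec: listens to OMX and forwards upward.
class FakeACodec {
public:
    FakeACodec(FakeOmx& omx, Trace& trace) {
        omx.setCallback([this, &trace](int id) {
            trace.push_back("ACodec got buffer " + std::to_string(id));
            if (mCallback) mCallback(id);
        });
    }
    void setCallback(BufferCallback cb) { mCallback = std::move(cb); }

private:
    BufferCallback mCallback;
};

// Hypothetical stand-in for MediaCodec: listens to ACodec and forwards upward.
class FakeMediaCodec {
public:
    FakeMediaCodec(FakeACodec& codec, Trace& trace) {
        codec.setCallback([this, &trace](int id) {
            trace.push_back("MediaCodec got buffer " + std::to_string(id));
            if (mCallback) mCallback(id);
        });
    }
    void setCallback(BufferCallback cb) { mCallback = std::move(cb); }

private:
    BufferCallback mCallback;
};

// Wires the three layers together, pushes one frame through, and returns
// the order in which each layer saw the buffer.
Trace traceOneFrame(int bufferId) {
    Trace trace;
    FakeOmx omx;
    FakeACodec acodec(omx, trace);
    FakeMediaCodec mediaCodec(acodec, trace);
    mediaCodec.setCallback([&trace](int id) {
        trace.push_back("Decoder got buffer " + std::to_string(id));
    });
    omx.finishFrame(bufferId);
    return trace;
}
```

Each layer only knows the layer directly below it, so the player's decoder never talks to OMX itself. That layering is what lets the same player code sit on top of different hardware components.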

The state transitions in ACodec are as follows:
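State transitions like these are easy to express as a table, so here is a minimal sketch of one. The state names follow the state classes in AOSP's ACodec.cpp as I recall them. The transition table is a simplified assumption that covers only the normal start, port-reconfiguration, flush, and shutdown paths and omits error handling. `canTransition` and `isValidPath` are hypothetical helpers, not framework APIs.

```cpp
#include <cassert>
#include <cstddef>
#include <set>
#include <utility>
#include <vector>

enum class State {
    Uninitialized,
    Loaded,
    LoadedToIdle,
    IdleToExecuting,
    Executing,
    OutputPortSettingsChanged,
    Flushing,
    ExecutingToIdle,
    IdleToLoaded,
};

// Returns true if the simplified table allows moving from `from` to `to`.
bool canTransition(State from, State to) {
    static const std::set<std::pair<State, State>> kAllowed = {
        {State::Uninitialized, State::Loaded},            // component allocated
        {State::Loaded, State::LoadedToIdle},             // start requested
        {State::LoadedToIdle, State::IdleToExecuting},    // buffers allocated
        {State::IdleToExecuting, State::Executing},       // decoding begins
        {State::Executing, State::OutputPortSettingsChanged},
        {State::OutputPortSettingsChanged, State::Executing},
        {State::Executing, State::Flushing},              // seek or flush
        {State::Flushing, State::Executing},
        {State::Executing, State::ExecutingToIdle},       // shutdown begins
        {State::ExecutingToIdle, State::IdleToLoaded},
        {State::IdleToLoaded, State::Loaded},
        {State::Loaded, State::Uninitialized},            // component released
    };
    return kAllowed.count({from, to}) > 0;
}

// Checks every consecutive pair in a sequence of states.
bool isValidPath(const std::vector<State>& path) {
    for (std::size_t i = 1; i < path.size(); ++i) {
        if (!canTransition(path[i - 1], path[i])) return false;
    }
    return true;
}
```

For example, `isValidPath` accepts the start-up sequence Uninitialized, Loaded, LoadedToIdle, IdleToExecuting, Executing, but rejects a direct jump from Loaded to Executing, since the intermediate states are where buffers get allocated.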
