Deployment preparation

server10: salt-master

server11: salt-minion, nginx

server12: salt-minion, httpd (Apache)

server13: salt-minion, haproxy-master

server14: salt-minion, haproxy-backup

VIP: 172.25.65.100

Make sure SaltStack is working properly. All minions (server11 to server14) should be visible from server10.

Deploying an HAProxy + Keepalived high-availability cluster

Deploying httpd

On server12 (IP: 172.25.65.12):

[root@server10 ~]# mkdir /srv/salt

[root@server10 salt]# mkdir apache

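[root@server10 apache]# vim install.sls      ##httpd installation

apache-install:                                  ##ID declaration, must be unique
  pkg.installed:                                 ##install the packages
    - pkgs:
      - httpd
      - httpd-tools
  file.managed:                                  ##manage a file
    - name: /etc/httpd/conf/httpd.conf           ##where the source file is placed on the minion, like dest in Ansible
    - source: salt://apache/files/httpd.conf     ##location of the source file on the master, like src in Ansible
  service.running:
    - name: httpd
    - reload: true                               ##reload (instead of restart) when a watched file changes
    - watch:                                     ##watch the file; if it changes, trigger the reload
      - file: apache-install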

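- Deploy httpd to the remote host server12. The state name apache.install is resolved relative to /srv/salt (it refers to /srv/salt/apache/install.sls), so the directory you run the command from does not matter. /srv/salt/apache/files/httpd.conf must exist first, because it is the source of the file.managed state.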

[root@server10 apache]# salt server12 state.sls apache.install

Deploying nginx (built from source)

[root@server10 files]# mkdir /srv/salt/nginx

[root@server10 files]# mkdir /srv/salt/nginx/files

[root@server10 files]# pwd

/srv/salt/nginx/files

[root@server10 files]# ls

nginx-1.17.4.tar.gz nginx.conf nginx.service

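Here as well, nginx.conf has to be obtained first. Extract the source on some host with tar zxf nginx-1.17.4.tar.gz, then copy conf/nginx.conf to /srv/salt/nginx/files on server10.

[root@server11 conf]# pwd

/mnt/nginx-1.17.4/conf

[root@server11 conf]# scp nginx.conf server10:/srv/salt/nginx/files/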

- The following unit file lets nginx be started and managed with systemctl:

[root@server10 files]# cat nginx.service

[Unit]
Description=The NGINX HTTP and reverse proxy server
After=syslog.target network.target remote-fs.target nss-lookup.target

[Service]
Type=forking
PIDFile=/usr/local/nginx/logs/nginx.pid
ExecStartPre=/usr/local/nginx/sbin/nginx -t
ExecStart=/usr/local/nginx/sbin/nginx
ExecReload=/usr/local/nginx/sbin/nginx -s reload
ExecStop=/bin/kill -s QUIT $MAINPID
PrivateTmp=true

[Install]
WantedBy=multi-user.target

[root@server10 nginx]# pwd

/srv/salt/nginx

[root@server10 nginx]# ls

files install.sls service.sls

- Installation state (install.sls)

[root@server10 nginx]# cat install.sls

nginx-install:
  pkg.installed:                 ##install the build dependencies
    - pkgs:
      - gcc
      - pcre-devel
      - openssl-devel
  file.managed:                  ##copy the source tarball to the minion
    - name: /mnt/nginx-1.17.4.tar.gz
    - source: salt://nginx/files/nginx-1.17.4.tar.gz
  cmd.run:                       ##extract, compile and install with shell commands; the sed removes the -g debug flag to get a smaller binary
    - name: cd /mnt && tar zxf nginx-1.17.4.tar.gz && cd nginx-1.17.4 && sed -i.bak 's/CFLAGS="$CFLAGS -g"/#CFLAGS="$CFLAGS -g"/g' auto/cc/gcc && ./configure --prefix=/usr/local/nginx --with-http_ssl_module &> /dev/null && make &> /dev/null && make install &> /dev/null
    - creates: /usr/local/nginx
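The `creates: /usr/local/nginx` argument keeps this state idempotent. Once that path exists, cmd.run skips the whole extract/configure/make chain, so re-running the state does not rebuild nginx.

The guard behaves roughly like the shell function below. `run_unless_exists` is a hypothetical helper written only for illustration. It is not part of Salt.

```shell
#!/bin/sh
# Hypothetical helper illustrating the `creates:` guard of Salt's cmd.run:
# run the given command only if the marker path does not exist yet.
run_unless_exists() {
    marker=$1
    shift
    if [ -e "$marker" ]; then
        # Marker already present: treat the work as done and do nothing.
        echo "skipped: $marker exists"
        return 0
    fi
    # Marker missing: run the command and return its exit status.
    "$@"
}
```

For example, `run_unless_exists /usr/local/nginx sh -c 'cd /mnt/nginx-1.17.4 && make install'` runs the install only on a host where /usr/local/nginx is still absent.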

- Start and reload nginx (service.sls)

[root@server10 nginx]# cat service.sls

include:
  - nginx.install

/usr/local/nginx/conf/nginx.conf:
  file.managed:
    - source: salt://nginx/files/nginx.conf

nginx-service:
  file.managed:
    - name: /usr/lib/systemd/system/nginx.service
    - source: salt://nginx/files/nginx.service
  service.running:
    - name: nginx
    - reload: true
    - watch:
      - file: /usr/local/nginx/conf/nginx.conf

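Since service.sls includes nginx.install, pushing nginx.service builds, configures and starts nginx in one run:

[root@server10 salt]# salt server11 state.sls nginx.service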

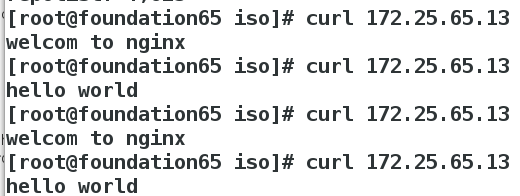

- Testing

Change the default nginx page on server11 so the test results are easy to tell apart:

[root@server11 conf]# cat /usr/local/nginx/html/index.html

welcom to nginx

Change the default httpd page on server12 in the same way:

[root@server12 mnt]# cat /var/www/html/index.html

hello world

Deploying HAProxy

[root@server10 salt]# ls

apache haproxy nginx top.sls

[root@server10 salt]# cd haproxy/

[root@server10 haproxy]# ls

files install.sls

- HAProxy installation state

[root@server10 haproxy]# cat install.sls

haproxy-install:
  pkg.installed:
    - pkgs:
      - haproxy
      - httpd-tools
  file.managed:
    - name: /etc/haproxy/haproxy.cfg
    - source: salt://haproxy/files/haproxy.cfg
  service.running:
    - name: haproxy
    - reload: true
    - watch:
      - file: haproxy-install

[root@server10 files]# pwd

/srv/salt/haproxy/files

[root@server10 files]# vim haproxy.cfg
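The edited haproxy.cfg is not shown. Below is a minimal sketch of the part that matters for this setup. It assumes:

- HAProxy listens on port 80.
- Traffic is round-robined between nginx on server11 (IP 172.25.65.11, assumed from the naming pattern) and httpd on server12 (172.25.65.12).
- The global and defaults sections of the stock /etc/haproxy/haproxy.cfg are unchanged.
- The names `app`, `web1` and `web2` are illustrative only.

```text
# Sketch: replaces the stock "frontend main *:5000" and its backends
frontend main *:80
    default_backend app

backend app
    balance     roundrobin
    server      web1 172.25.65.11:80 check
    server      web2 172.25.65.12:80 check
```

The frontend listens on `*:80` rather than on the VIP itself, so the same file can run on server13 and server14 no matter which node currently holds the VIP. The `check` keyword lets HAProxy take a backend out of rotation when it stops answering. After the state is pushed, repeated requests to http://172.25.65.13 should alternate between the two test pages.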

[root@server10 salt]# salt server13 state.sls haproxy.install

- Testing:

Install HAProxy on server14 as well and test it the same way as server13. Make sure HAProxy works on both hosts before moving on.

[root@server10 salt]# salt server14 state.sls haproxy.install

Deploying Keepalived

- Create the keepalived directory

[root@server10 salt]# mkdir keepalived

[root@server10 salt]# cd keepalived

[root@server10 keepalived]# mkdir files

- First install keepalived on server10, then copy its configuration file to /srv/salt/keepalived/files/

[root@server10 apache]# yum install keepalived -y

[root@server10 apache]# cd /etc/keepalived/

[root@server10 keepalived]# ls

keepalived.conf

[root@server10 keepalived]# cp keepalived.conf /srv/salt/keepalived/files/

- Edit the configuration files

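This makes later remote deployment easier. The nodes run in either the MASTER or the BACKUP state, so split keepalived.conf under files into two files: keepalivedmaster.conf and keepalivedback.conf. Split the installation state the same way, into installm.sls and installb.sls. Deploying a master or a backup then only requires pushing the corresponding state.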

[root@server10 files]# ls

keepalived.conf

[root@server10 files]# mv keepalived.conf keepalivedmaster.conf

[root@server10 files]# ls

keepalivedmaster.conf

[root@server10 files]# cp keepalivedmaster.conf keepalivedback.conf

The main configuration files:

[root@server10 files]# cat keepalivedmaster.conf

[root@server10 files]# cat keepalivedback.conf
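The contents of these two files are not shown. In a MASTER/BACKUP pair they typically differ only in `state` and `priority`. The VIP has to match the one that later appears in `ip a` (172.25.65.100).

The hypothetical function below prints such a file. The following values are assumptions, not the author's actual settings:

- `interface eth0`, taken from the `ip a` output of server13 and server14.
- `virtual_router_id 51` and `auth_pass 1111`, the sample values from the stock keepalived.conf.

```shell
#!/bin/sh
# Hypothetical helper: print a keepalived.conf for one node of the VRRP pair.
#   $1 = state    (MASTER on server13, BACKUP on server14)
#   $2 = priority (the node with the higher value holds the VIP)
# eth0, virtual_router_id 51 and auth_pass 1111 are assumed values; both
# nodes must use the same virtual_router_id and authentication block.
# vrrp_strict is left out on purpose: in some keepalived versions it adds
# firewall rules that can make the VIP unreachable.
render_keepalived_conf() {
    state=$1
    priority=$2
    cat <<EOF
! Configuration File for keepalived
global_defs {
    router_id LVS_DEVEL
}

vrrp_instance VI_1 {
    state ${state}
    interface eth0
    virtual_router_id 51
    priority ${priority}
    advert_int 1
    authentication {
        auth_type PASS
        auth_pass 1111
    }
    virtual_ipaddress {
        172.25.65.100
    }
}
EOF
}
```

To produce the pair, run these two commands inside /srv/salt/keepalived/files on server10:

- `render_keepalived_conf MASTER 100 > keepalivedmaster.conf`
- `render_keepalived_conf BACKUP 50 > keepalivedback.conf`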

[root@server10 salt]# cd keepalived/

[root@server10 keepalived]# ls

files installb.sls installm.sls

[root@server10 keepalived]# cat installm.sls

keepalived-install:
  pkg.installed:
    - pkgs:
      - keepalived
  file.managed:
    - name: /etc/keepalived/keepalived.conf
    - source: salt://keepalived/files/keepalivedmaster.conf
  service.running:
    - name: keepalived
    - reload: true
    - watch:
      - file: keepalived-install

[root@server10 keepalived]# cat installb.sls

keepalived-install:
  pkg.installed:
    - pkgs:
      - keepalived
  file.managed:
    - name: /etc/keepalived/keepalived.conf
    - source: salt://keepalived/files/keepalivedback.conf
  service.running:
    - name: keepalived
    - reload: true
    - watch:
      - file: keepalived-install

[root@server10 salt]# salt server13 state.sls keepalived.installm

[root@server10 salt]# salt server14 state.sls keepalived.installb
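The `ls` of /srv/salt lists a top.sls, but its contents are not shown. As a sketch (not the author's file), the top file below maps each minion ID used in this article to its states. With it, the whole cluster can be pushed with a single `salt '*' state.highstate` instead of the individual state.sls calls.

```yaml
# /srv/salt/top.sls (sketch; minion IDs as used in the commands above)
base:
  'server11':
    - nginx.service          # includes nginx.install
  'server12':
    - apache.install
  'server13':
    - haproxy.install
    - keepalived.installm    # MASTER
  'server14':
    - haproxy.install
    - keepalived.installb    # BACKUP
```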

Testing:

Check the VIP on server13.

Because server13 is the master, it holds the VIP first:

[root@server13 keepalived]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000
    link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00
    inet 127.0.0.1/8 scope host lo
       valid_lft forever preferred_lft forever
    inet6 ::1/128 scope host
       valid_lft forever preferred_lft forever
2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP group default qlen 1000
    link/ether 52:54:00:94:90:d2 brd ff:ff:ff:ff:ff:ff
    inet 172.25.65.13/24 brd 172.25.65.255 scope global eth0
       valid_lft forever preferred_lft forever
    inet 172.25.65.100/32 scope global eth0
       valid_lft forever preferred_lft forever
    inet6 fe80::5054:ff:fe94:90d2/64 scope link
       valid_lft forever preferred_lft forever

server14 (haproxy-backup) does not hold the VIP at this point:

[root@server14 salt]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000
    link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00
    inet 127.0.0.1/8 scope host lo
       valid_lft forever preferred_lft forever
    inet6 ::1/128 scope host
       valid_lft forever preferred_lft forever
2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP group default qlen 1000
    link/ether 52:54:00:af:6e:57 brd ff:ff:ff:ff:ff:ff
    inet 172.25.65.14/24 brd 172.25.65.255 scope global eth0
       valid_lft forever preferred_lft forever
    inet6 fe80::5054:ff:feaf:6e57/64 scope link
       valid_lft forever preferred_lft forever

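Accessing the VIP 172.25.65.100 works normally.

Simulate a failure by stopping keepalived on the haproxy-master:

[root@server13 keepalived]# systemctl stop keepalived

server13 no longer holds the VIP.

The VIP has floated to server14 (haproxy-backup).

Access from outside still works, so the HAProxy + Keepalived high-availability setup is working.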
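During the failover test, it is enough to check whether the VIP shows up in `ip a` on a node. The small filter below makes that check scriptable. `holds_vip` is a hypothetical helper that reads `ip a` output on stdin.

```shell
#!/bin/sh
# Hypothetical helper: succeed if the `ip a` / `ip addr` text on stdin
# contains the given address as an inet entry (e.g. "inet 172.25.65.100/32").
# -F matches the pattern literally, so the dots in the IP are not regex
# wildcards; the trailing "/" stops 172.25.65.10 from matching 172.25.65.100.
holds_vip() {
    grep -Fq "inet $1/"
}
```

Running `ip addr show eth0 | holds_vip 172.25.65.100 && echo "VIP is here"` on server13 and server14 shows which node holds the VIP. Run it before and after stopping keepalived.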
