Environment: CentOS 6.6

Redis version: redis-3.0

Install directory: /usr/local/redis

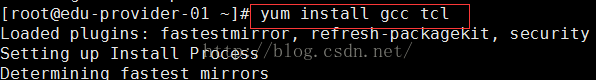

1. Install the packages required to compile and install Redis:

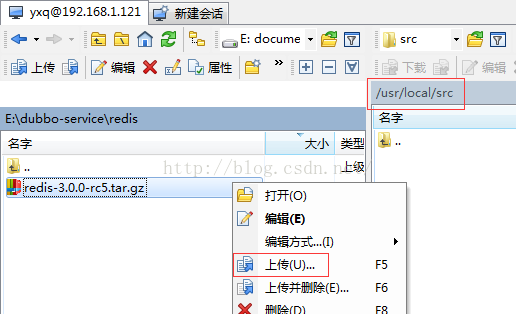

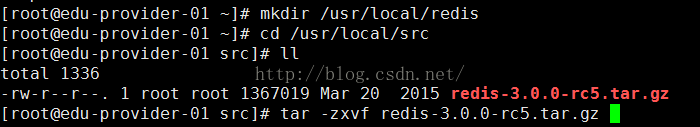

2. Upload the Redis 3 installation package and extract it

[root@yxq src]# mkdir /usr/local/redis

Extract:

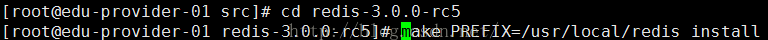

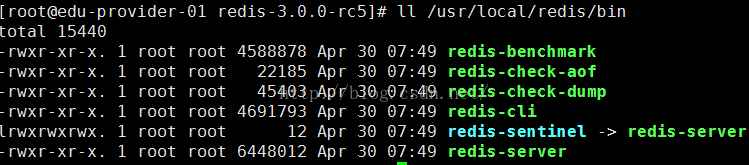

3. Install

(use PREFIX to specify the install directory):

After installation, /usr/local/redis contains a bin directory, which holds the Redis command-line programs:

4. Configure Redis as a service:

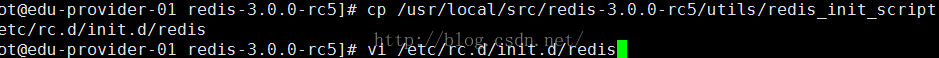

Copy the startup script to /etc/rc.d/init.d/ and name it redis.

Edit /etc/rc.d/init.d/redis and adjust its settings so that it can be registered as a service:

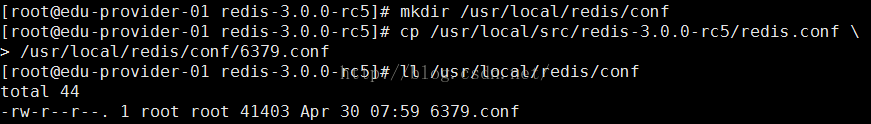

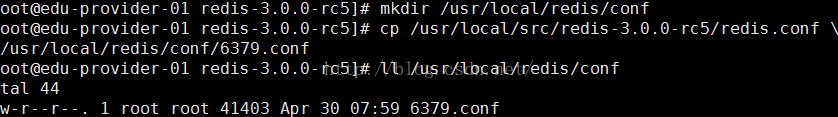

Configuration file setup:

Create the Redis configuration directory:

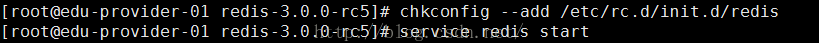

Once the configuration above is done, Redis can be registered as a service:

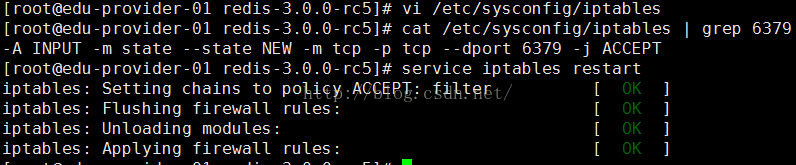

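Open the corresponding port in the firewall.

Edit the Redis configuration file:

Change daemonize no to daemonize yes so that Redis runs as a background process.

Change pidfile /var/run/redis.pid to pidfile /var/run/redis_6379.pid.

If daemonize is left at no, no pid file is generated, so commands such as stop have no effect, and the /etc/rc.d/init.d/redis script reports the following error: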

/var/run/redis_6379.pid does not exist, process is not running

Start the Redis service:

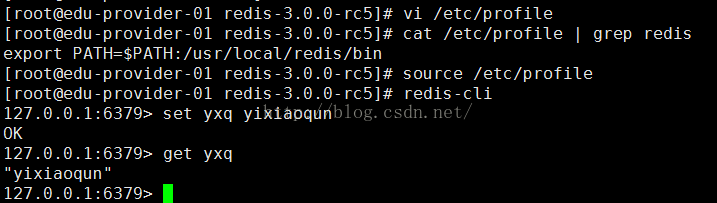

Add Redis to the environment variables:

Stop the service:

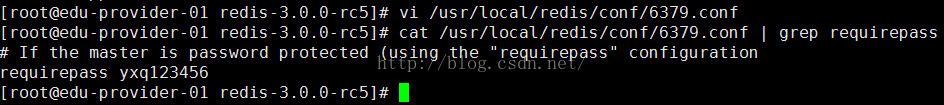

By default, Redis does not require authentication. To enable it, set a password with requirepass in /usr/local/redis/conf/6379.conf:

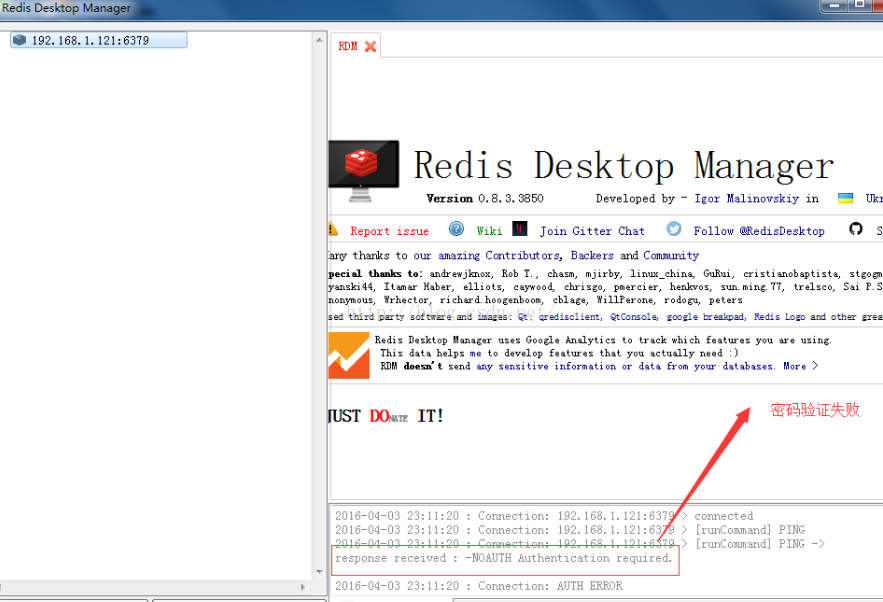

Logging in with RedisDesktopManager

Without entering the password:

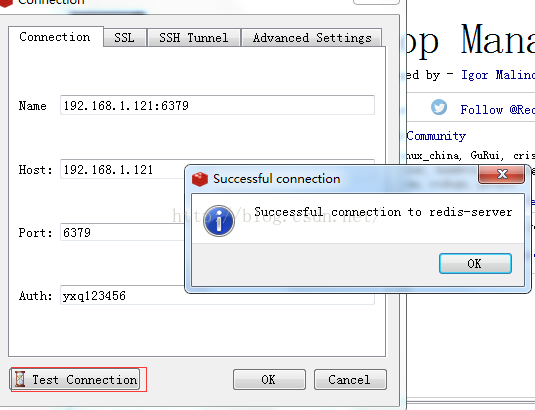

With the password entered, test the connection:

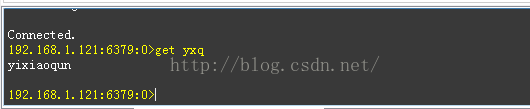

Using the console in the client:

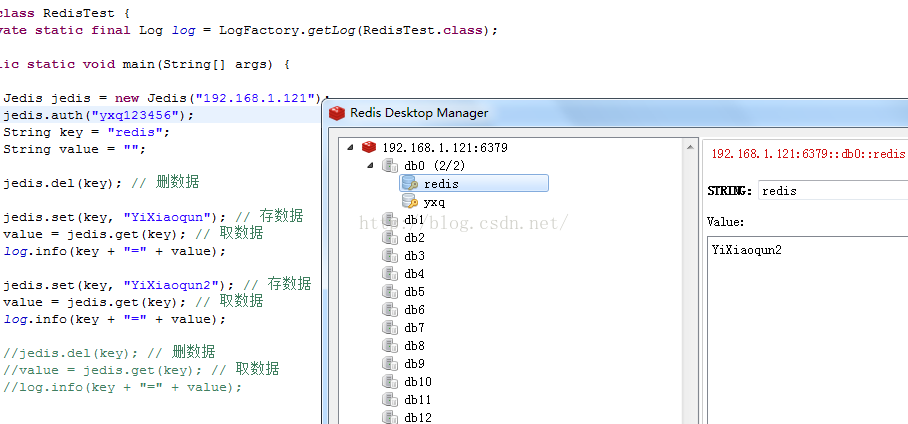

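Using Jedis

- import org.apache.commons.logging.Log;

- import org.apache.commons.logging.LogFactory;

- import redis.clients.jedis.Jedis;

- public class RedisTest {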

- private static final Log log = LogFactory.getLog(RedisTest.class);

- public static void main(String[] args) {

- Jedis jedis = new Jedis("192.168.1.121");

- jedis.auth("yxq123456");

- String key = "redis";

- String value = "";

- jedis.del(key); // delete the key

- jedis.set(key, "YiXiaoqun"); // store a value

- value = jedis.get(key); // read it back

- log.info(key + "=" + value);

- jedis.set(key, "YiXiaoqun2"); // overwrite the value

- value = jedis.get(key); // read it back

- log.info(key + "=" + value);

- //jedis.del(key); // delete the key

- //value = jedis.get(key); // read it back

- //log.info(key + "=" + value);

- }

- }
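To make the calls in RedisTest less opaque, here is a minimal sketch of how a Redis client frames commands on the wire with the Redis serialization protocol (RESP): each command is sent as an array of bulk strings. The class and method names (`RespSketch`, `encode`) are hypothetical, and this is not how Jedis is implemented internally; it only illustrates the framing of the `AUTH`, `SET`, and `GET` calls used above.

```java
import java.nio.charset.StandardCharsets;

// Hypothetical helper, not part of Jedis: frames a Redis command in RESP.
class RespSketch {

    // Encodes a command as a RESP array of bulk strings:
    // *<argument count>\r\n, then for each argument $<byte length>\r\n<argument>\r\n
    static String encode(String... args) {
        StringBuilder sb = new StringBuilder();
        sb.append('*').append(args.length).append("\r\n");
        for (String arg : args) {
            int byteLength = arg.getBytes(StandardCharsets.UTF_8).length;
            sb.append('$').append(byteLength).append("\r\n");
            sb.append(arg).append("\r\n");
        }
        return sb.toString();
    }

    public static void main(String[] args) {
        // The same kinds of operations RedisTest performs.
        String[][] commands = {
            {"AUTH", "yxq123456"},
            {"SET", "redis", "YiXiaoqun"},
            {"GET", "redis"}
        };
        for (String[] command : commands) {
            // Print with the line terminators made visible.
            System.out.println(encode(command).replace("\r\n", "\\r\\n"));
        }
    }
}
```

The length prefix counts bytes, not characters, which is why the sketch measures the UTF-8 encoding of each argument.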

Using a Jedis connection pool; here Redis is configured without password authentication

spring-redis.xml

- <?xml version="1.0" encoding="UTF-8"?>

- <beans xmlns="http://www.springframework.org/schema/beans"

- xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance" xmlns:p="http://www.springframework.org/schema/p"

- xsi:schemaLocation="

- http://www.springframework.org/schema/beans http://www.springframework.org/schema/beans/spring-beans.xsd">

- <!-- Jedis connection pool configuration -->

- <bean id="jedisPoolConfig" class="redis.clients.jedis.JedisPoolConfig">

- <property name="testWhileIdle" value="true" />

- <property name="minEvictableIdleTimeMillis" value="60000" />

- <property name="timeBetweenEvictionRunsMillis" value="30000" />

- <property name="numTestsPerEvictionRun" value="-1" />

- <property name="maxTotal" value="8" />

- <property name="maxIdle" value="8" />

- <property name="minIdle" value="0" />

- </bean>

- <bean id="shardedJedisPool" class="redis.clients.jedis.ShardedJedisPool">

- <constructor-arg index="0" ref="jedisPoolConfig" />

- <constructor-arg index="1">

- <list>

- <bean class="redis.clients.jedis.JedisShardInfo">

- <constructor-arg index="0" value="192.168.1.121" />

- <constructor-arg index="1" value="6379" type="int" />

- </bean>

- </list>

- </constructor-arg>

- </bean>

- </beans>

spring-context.xml

- <?xml version="1.0" encoding="UTF-8"?>

- <beans xmlns="http://www.springframework.org/schema/beans" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance" xmlns:p="http://www.springframework.org/schema/p" xmlns:context="http://www.springframework.org/schema/context" xmlns:aop="http://www.springframework.org/schema/aop"

- xmlns:tx="http://www.springframework.org/schema/tx"

- xsi:schemaLocation="http://www.springframework.org/schema/beans

- http://www.springframework.org/schema/beans/spring-beans-3.2.xsd

- http://www.springframework.org/schema/aop

- http://www.springframework.org/schema/aop/spring-aop-3.2.xsd

- http://www.springframework.org/schema/tx

- http://www.springframework.org/schema/tx/spring-tx-3.2.xsd

- http://www.springframework.org/schema/context

- http://www.springframework.org/schema/context/spring-context-3.2.xsd"

- default-autowire="byName" default-lazy-init="false">

- <!-- Configure beans via annotations -->

- <context:annotation-config />

- <!-- Packages to scan -->

- <context:component-scan base-package="redis.edu.demo" />

- <!-- proxy-target-class defaults to "false"; set it to "true" to use CGLIB dynamic proxies -->

- <aop:aspectj-autoproxy proxy-target-class="true" />

- <import resource="spring-redis.xml" />

- </beans>

pom.xml

- <project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

- xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/maven-v4_0_0.xsd">

- <modelVersion>4.0.0</modelVersion>

- <groupId>redis.edu.demo</groupId>

- <artifactId>edu-demo-redis</artifactId>

- <version>1.0-SNAPSHOT</version>

- <packaging>war</packaging>

- <name>edu-demo-redis</name>

- <url>http://maven.apache.org</url>

- <build>

- <finalName>edu-demo-redis</finalName>

- <resources>

- <resource>

- <targetPath>${project.build.directory}/classes</targetPath>

- <directory>src/main/resources</directory>

- <filtering>true</filtering>

- <includes>

- <include>**/*.xml</include>

- <include>**/*.properties</include>

- </includes>

- </resource>

- </resources>

- </build>

- <dependencies>

- <!-- Common Dependency Begin -->

- <dependency>

- <groupId>antlr</groupId>

- <artifactId>antlr</artifactId>

- </dependency>

- <dependency>

- <groupId>aopalliance</groupId>

- <artifactId>aopalliance</artifactId>

- </dependency>

- <dependency>

- <groupId>org.aspectj</groupId>

- <artifactId>aspectjweaver</artifactId>

- </dependency>

- <dependency>

- <groupId>cglib</groupId>

- <artifactId>cglib</artifactId>

- </dependency>

- <dependency>

- <groupId>net.sf.json-lib</groupId>

- <artifactId>json-lib</artifactId>

- <classifier>jdk15</classifier>

- <scope>compile</scope>

- </dependency>

- <dependency>

- <groupId>ognl</groupId>

- <artifactId>ognl</artifactId>

- </dependency>

- <dependency>

- <groupId>oro</groupId>

- <artifactId>oro</artifactId>

- </dependency>

- <dependency>

- <groupId>commons-beanutils</groupId>

- <artifactId>commons-beanutils</artifactId>

- </dependency>

- <dependency>

- <groupId>commons-codec</groupId>

- <artifactId>commons-codec</artifactId>

- </dependency>

- <dependency>

- <groupId>commons-collections</groupId>

- <artifactId>commons-collections</artifactId>

- </dependency>

- <dependency>

- <groupId>commons-digester</groupId>

- <artifactId>commons-digester</artifactId>

- </dependency>

- <dependency>

- <groupId>commons-fileupload</groupId>

- <artifactId>commons-fileupload</artifactId>

- </dependency>

- <dependency>

- <groupId>commons-io</groupId>

- <artifactId>commons-io</artifactId>

- </dependency>

- <dependency>

- <groupId>org.apache.commons</groupId>

- <artifactId>commons-lang3</artifactId>

- </dependency>

- <dependency>

- <groupId>commons-logging</groupId>

- <artifactId>commons-logging</artifactId>

- </dependency>

- <dependency>

- <groupId>commons-validator</groupId>

- <artifactId>commons-validator</artifactId>

- </dependency>

- <dependency>

- <groupId>dom4j</groupId>

- <artifactId>dom4j</artifactId>

- </dependency>

- <dependency>

- <groupId>net.sf.ezmorph</groupId>

- <artifactId>ezmorph</artifactId>

- </dependency>

- <dependency>

- <groupId>javassist</groupId>

- <artifactId>javassist</artifactId>

- </dependency>

- <dependency>

- <groupId>log4j</groupId>

- <artifactId>log4j</artifactId>

- </dependency>

- <dependency>

- <groupId>org.slf4j</groupId>

- <artifactId>slf4j-api</artifactId>

- </dependency>

- <dependency>

- <groupId>org.slf4j</groupId>

- <artifactId>slf4j-log4j12</artifactId>

- </dependency>

- <dependency>

- <groupId>com.alibaba</groupId>

- <artifactId>fastjson</artifactId>

- </dependency>

- <!-- Common Dependency End -->

- <!-- Spring Dependency Begin -->

- <dependency>

- <groupId>org.springframework</groupId>

- <artifactId>spring-aop</artifactId>

- </dependency>

- <dependency>

- <groupId>org.springframework</groupId>

- <artifactId>spring-aspects</artifactId>

- </dependency>

- <dependency>

- <groupId>org.springframework</groupId>

- <artifactId>spring-beans</artifactId>

- </dependency>

- <dependency>

- <groupId>org.springframework</groupId>

- <artifactId>spring-context</artifactId>

- </dependency>

- <dependency>

- <groupId>org.springframework</groupId>

- <artifactId>spring-context-support</artifactId>

- </dependency>

- <dependency>

- <groupId>org.springframework</groupId>

- <artifactId>spring-core</artifactId>

- </dependency>

- <dependency>

- <groupId>org.springframework</groupId>

- <artifactId>spring-jms</artifactId>

- </dependency>

- <dependency>

- <groupId>org.springframework</groupId>

- <artifactId>spring-orm</artifactId>

- </dependency>

- <dependency>

- <groupId>org.springframework</groupId>

- <artifactId>spring-oxm</artifactId>

- </dependency>

- <dependency>

- <groupId>org.springframework</groupId>

- <artifactId>spring-test</artifactId>

- <scope>test</scope>

- </dependency>

- <dependency>

- <groupId>org.springframework</groupId>

- <artifactId>spring-tx</artifactId>

- </dependency>

- <!-- Spring Dependency End -->

- <!-- Redis client -->

- <dependency>

- <groupId>redis.clients</groupId>

- <artifactId>jedis</artifactId>

- <version>2.6.2</version>

- </dependency>

- <dependency>

- <groupId>org.apache.commons</groupId>

- <artifactId>commons-pool2</artifactId>

- <version>2.3</version>

- </dependency>

- </dependencies>

- </project>

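- import org.apache.commons.logging.Log;

- import org.apache.commons.logging.LogFactory;

- import org.springframework.context.support.ClassPathXmlApplicationContext;

- import redis.clients.jedis.ShardedJedis;

- import redis.clients.jedis.ShardedJedisPool;

- public class RedisSpringTest {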

- private static final Log log = LogFactory.getLog(RedisSpringTest.class);

- public static void main(String[] args) {

- try {

- ClassPathXmlApplicationContext context = new ClassPathXmlApplicationContext("classpath:spring/spring-context.xml");

- context.start();

- ShardedJedisPool pool = (ShardedJedisPool) context.getBean("shardedJedisPool");

- ShardedJedis jedis = pool.getResource();

- String key = "redis";

- String value = "";

- jedis.del(key); // delete the key

- jedis.set(key, "yixiaoqun"); // store a value

- value = jedis.get(key); // read it back

- log.info(key + "=" + value);

- jedis.set(key, "yixiaoqun2"); // overwrite the value

- value = jedis.get(key); // read it back

- log.info(key + "=" + value);

- jedis.del(key); // delete the key

- value = jedis.get(key); // read again; null after the delete

- log.info(key + "=" + value);

- context.stop();

- } catch (Exception e) {

- log.error("==>RedisSpringTest context start error:", e);

- System.exit(0);

- } finally {

- log.info("===>System.exit");

- System.exit(0);

- }

- }

- }


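Introduction to FastDFS

FastDFS is a lightweight, open-source distributed file system.

FastDFS mainly addresses large-capacity file storage and highly concurrent access, and load-balances file reads and writes.

FastDFS implements RAID in software, so it can store data on inexpensive IDE disks.

It supports online expansion of storage servers.

Files with identical content are stored only once, which saves disk space.

FastDFS can only be accessed through its client API; POSIX access is not supported.

FastDFS is especially suited to medium and large websites for storing resource files (such as images, documents, audio, and video).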

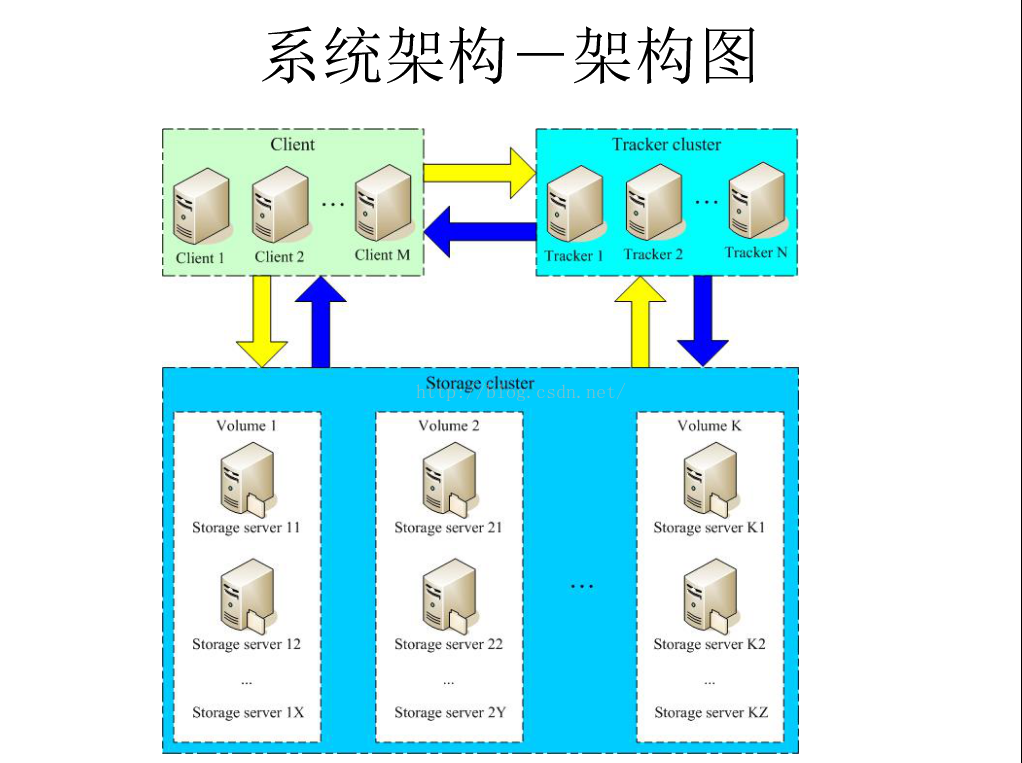

System architecture

Tracker server: 192.168.1.131 (installed on the consumer host)

Storage server: 192.168.1.51 (installed on the CI server)

Environment: CentOS 6.6

User: root

Data directory: /fastdfs (adjust to the mount path of your data disk)

Installation packages

FastDFS v5.05

libfastcommon-master.zip (a common C function library extracted from FastDFS and FastDHT)

fastdfs-nginx-module_v1.16.tar.gz

nginx-1.6.2.tar.gz

fastdfs_client_java._v1.25.tar.gz

Source code: https://github.com/happyfish100/

Downloads: http://sourceforge.net/projects/fastdfs/files/

Official forum: http://bbs.chinaunix.net/forum-240-1.html

I. Run the following on all tracker and storage servers

1. Install the dependencies needed to compile and install

Connecting to 192.168.1.131:22...

Connection established.

To escape to local shell, press 'Ctrl+Alt+]'.

Last login: Sun Apr 3 00:12:21 2016 from 192.168.1.2

[root@consume ~]# yum install make cmake gcc gcc-c++

2. Install libfastcommon:

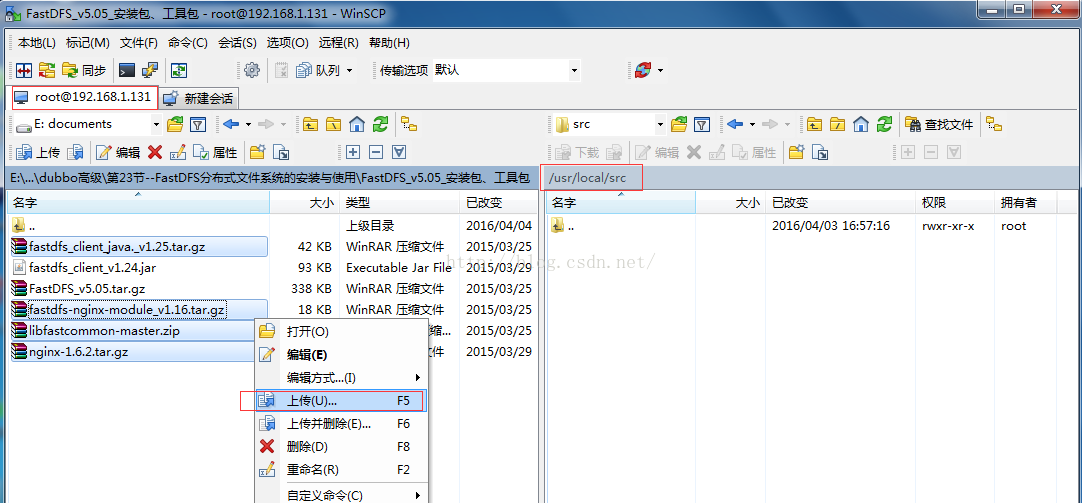

(1) Upload or download libfastcommon-master.zip to /usr/local/src

(2) Extract

[root@consume ~]# cd /usr/local/src/

[root@consume src]# unzip libfastcommon-master.zip

[root@consume src]# cd libfastcommon-master

[root@consume libfastcommon-master]# ll

total 28

-rw-r--r--. 1 root root 2913 Feb 27 2015 HISTORY

-rw-r--r--. 1 root root 582 Feb 27 2015 INSTALL

-rw-r--r--. 1 root root 1342 Feb 27 2015 libfastcommon.spec

-rwxr-xr-x. 1 root root 2151 Feb 27 2015 make.sh

drwxr-xr-x. 2 root root 4096 Feb 27 2015 PHP-fastcommon

-rw-r--r--. 1 root root 617 Feb 27 2015 README

drwxr-xr-x. 2 root root 4096 Feb 27 2015 src

(3) Compile and install

[root@consume libfastcommon-master]# ./make.sh

[root@consume libfastcommon-master]# ./make.sh install

libfastcommon is installed by default to:

/usr/lib64/libfastcommon.so

/usr/lib64/libfdfsclient.so

(4) The FastDFS main program expects its libraries in /usr/local/lib, so create symbolic links:

[root@consume libfastcommon-master]# ln -s /usr/lib64/libfastcommon.so /usr/local/lib/libfastcommon.so

[root@consume libfastcommon-master]# ln -s /usr/lib64/libfastcommon.so /usr/lib/libfastcommon.so

[root@consume libfastcommon-master]# ln -s /usr/lib64/libfdfsclient.so /usr/local/lib/libfdfsclient.so

[root@consume libfastcommon-master]# ln -s /usr/lib64/libfdfsclient.so /usr/lib/libfdfsclient.so

3. Install FastDFS

(1) Upload or download the FastDFS source package (FastDFS_v5.05.tar.gz) to /usr/local/src

(2) Extract

[root@consume libfastcommon-master]# cd /usr/local/src

[root@consume src]# ll | grep Fast

-rw-r--r--. 1 root root 345400 Mar 25 2015 FastDFS_v5.05.tar.gz

[root@consume src]# tar -zxvf FastDFS_v5.05.tar.gz

(3) Compile and install (make sure libfastcommon has been installed successfully first)

[root@consume src]# cd FastDFS

[root@consume FastDFS]# ./make.sh

[root@consume FastDFS]# ./make.sh install

With the default installation, the files and directories end up in the following locations:

A. Service scripts:

/etc/init.d/fdfs_storaged

/etc/init.d/fdfs_trackerd

[root@consume FastDFS]# ls /etc/init.d | grep fdfs

fdfs_storaged

fdfs_trackerd

B. Configuration files (sample configuration files):

/etc/fdfs/client.conf.sample

/etc/fdfs/storage.conf.sample

/etc/fdfs/tracker.conf.sample

[root@consume FastDFS]# ls /etc/fdfs | grep conf

client.conf.sample

storage.conf.sample

tracker.conf.sample

C. Command-line tools, in /usr/bin/:

[root@consume FastDFS]# ls /usr/bin | grep fdfs

fdfs_appender_test

fdfs_appender_test1

fdfs_append_file

fdfs_crc32

fdfs_delete_file

fdfs_download_file

fdfs_file_info

fdfs_monitor

fdfs_storaged

fdfs_test

fdfs_test1

fdfs_trackerd

fdfs_upload_appender

fdfs_upload_file

(4) The FastDFS service scripts expect the binaries in /usr/local/bin, but the commands are actually installed in /usr/bin. You can go to /usr/bin and list the fdfs commands as follows:

[root@consume FastDFS]# cd /usr/bin

[root@consume bin]# ll | grep fdfs

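So the command paths in the FastDFS service scripts need to be changed: in both /etc/init.d/fdfs_storaged and /etc/init.d/fdfs_trackerd, replace /usr/local/bin with /usr/bin.

In vi, a search-and-replace command changes them all at once: %s+/usr/local/bin+/usr/bin

[root@consume bin]# cd /etc/init.d

[root@consume init.d]# vi fdfs_trackerd

[root@consume init.d]# vi fdfs_storaged

Make the change on both the tracker and the storage server so that the two stay consistent.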

II. Configure the FastDFS tracker (192.168.1.131)

1. Copy the sample tracker configuration file and rename it:

[root@consume init.d]# cd /etc/fdfs/

[root@consume fdfs]# ll

total 20

-rw-r--r--. 1 root root 1461 Apr 3 19:11 client.conf.sample

-rw-r--r--. 1 root root 7829 Apr 3 19:11 storage.conf.sample

-rw-r--r--. 1 root root 7102 Apr 3 19:11 tracker.conf.sample

[root@consume fdfs]# cp tracker.conf.sample tracker.conf

2. Edit the tracker configuration file:

[root@consume fdfs]# vi /etc/fdfs/tracker.conf

Change the following settings:

disabled=false

port=22122

base_path=/fastdfs/tracker

(Leave the other parameters at their defaults; for an explanation of each setting see the official documentation:

http://bbs.chinaunix.net/thread-1941456-1-1.html )

3. Create the base data directory (see the base_path setting):

[root@consume fdfs]# mkdir -p /fastdfs/tracker

4. Open the tracker port (22122 by default) in the firewall:

[root@consume fdfs]# vi /etc/sysconfig/iptables

Add:

-A INPUT -m state --state NEW -m tcp -p tcp --dport 22122 -j ACCEPT

[root@consume fdfs]# cat /etc/sysconfig/iptables | grep 22122

-A INPUT -m state --state NEW -m tcp -p tcp --dport 22122 -j ACCEPT

Restart the firewall:

[root@consume fdfs]# service iptables restart

5. Start the tracker:

[root@consume fdfs]# /etc/init.d/fdfs_trackerd start

Starting FastDFS tracker server:

Check whether the FastDFS tracker started successfully:

[root@consume fdfs]# ps -ef | grep fdfs

root 3870 1 0 19:50 ? 00:00:00 /usr/bin/fdfs_trackerd /etc/fdfs/tracker.conf

root 3879 3028 0 19:51 pts/0 00:00:00 grep fdfs

(On the first successful start, the data and logs directories are created under /fastdfs/tracker)

[root@consume fdfs]# ll /fastdfs/tracker/

total 8

drwxr-xr-x. 2 root root 4096 Apr 3 19:50 data

drwxr-xr-x. 2 root root 4096 Apr 3 19:50 logs

[root@consume fdfs]#

6. Stop the tracker:

[root@consume fdfs]# /etc/init.d/fdfs_trackerd stop

stopping fdfs_trackerd ...

7. Start the FastDFS tracker at boot:

[root@consume fdfs]# vi /etc/rc.d/rc.local

Add:

## FastDFS Tracker

/etc/init.d/fdfs_trackerd start

Verify that it starts at boot:

[root@consume fdfs]# /etc/init.d/fdfs_trackerd status

fdfs_trackerd is stopped

[root@consume fdfs]# reboot

Connecting to 192.168.1.131:22...

Connection established.

To escape to local shell, press 'Ctrl+Alt+]'.

Last login: Sun Apr 3 18:44:38 2016 from 192.168.1.2

[root@consume ~]# /etc/init.d/fdfs_trackerd status

fdfs_trackerd (pid 2152) is running...

[root@consume ~]#

III. Configure FastDFS storage (192.168.1.51)

1. Copy the sample storage configuration file and rename it:

[root@yxq init.d]# cd /etc/fdfs

[root@yxq fdfs]# ll

total 20

-rw-r--r-- 1 root root 1461 Apr 3 21:45 client.conf.sample

-rw-r--r-- 1 root root 7829 Apr 3 21:45 storage.conf.sample

-rw-r--r-- 1 root root 7102 Apr 3 21:45 tracker.conf.sample

[root@yxq fdfs]# cp storage.conf.sample storage.conf

2. Edit the storage configuration file:

[root@yxq fdfs]# vi /etc/fdfs/storage.conf

Change the following settings:

disabled=false

port=23000

base_path=/fastdfs/storage

store_path0=/fastdfs/storage

tracker_server=192.168.1.131:22122

http.server_port=8888

(Leave the other parameters at their defaults; for an explanation of each setting see the official documentation:

http://bbs.chinaunix.net/thread-1941456-1-1.html )

3. Create the base data directory (see the base_path setting):

[root@yxq fdfs]# mkdir -p /fastdfs/storage

4. Open the storage port (23000 by default) in the firewall:

[root@yxq fdfs]# vi /etc/sysconfig/iptables

Add the following port rule:

-A INPUT -m state --state NEW -m tcp -p tcp --dport 23000 -j ACCEPT

[root@yxq fdfs]# cat /etc/sysconfig/iptables | grep 23000

-A INPUT -m state --state NEW -m tcp -p tcp --dport 23000 -j ACCEPT

Restart the firewall:

[root@yxq fdfs]# service iptables restart

5. Start the storage server:

[root@yxq fdfs]# /etc/init.d/fdfs_storaged start

Starting FastDFS storage server:

Check whether FastDFS storage started successfully:

[root@yxq fdfs]# ps -ef | grep fdfs

root 11997 1 0 22:16 pts/0 00:00:00 /usr/bin/fdfs_storaged /etc/fdfs/storage.conf

root 12000 11293 0 22:16 pts/0 00:00:00 grep fdfs

(On the first successful start, the data and logs directories are created under /fastdfs/storage)

[root@yxq fdfs]# ll /fastdfs/storage/

6. Stop the storage server:

[root@yxq fdfs]# /etc/init.d/fdfs_storaged stop

stopping fdfs_storaged ...

7. Start FastDFS storage at boot:

[root@yxq fdfs]# vi /etc/rc.d/rc.local

Add:

## FastDFS Storage

/etc/init.d/fdfs_storaged start

IV. File upload test (192.168.1.131)

1. Edit the client configuration file on the tracker server:

[root@consume ~]# cp /etc/fdfs/client.conf.sample /etc/fdfs/client.conf

[root@consume ~]# vi /etc/fdfs/client.conf

Change:

base_path=/fastdfs/tracker

tracker_server=192.168.1.131:22122

Run the following command:

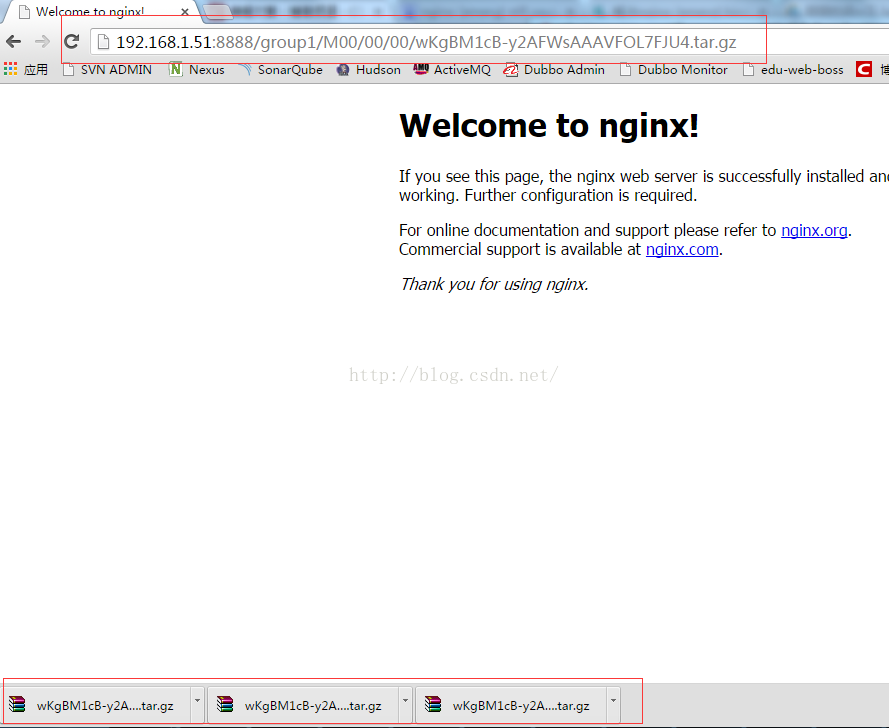

[root@consume ~]# /usr/bin/fdfs_upload_file /etc/fdfs/client.conf /usr/local/src/FastDFS_v5.05.tar.gz

group1/M00/00/00/wKgBM1cB-y2AFWsAAAVFOL7FJU4.tar.gz

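(If a file ID like the one above is returned, the upload succeeded)

You can also check on the storage server, under the group1 data directory: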

[root@yxq fdfs]# ll /fastdfs/storage/data/00/00/

total 340

-rw-r--r-- 1 root root 345400 Apr 3 22:27 wKgBM1cB-y2AFWsAAAVFOL7FJU4.tar.gz

[root@yxq fdfs]# date

Sun Apr 3 22:31:54 PDT 2016

[root@yxq fdfs]#
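The file ID returned by the upload and the directory listing on the storage server line up in a simple way, which the following sketch makes explicit. It assumes the ID has the form group/store-path/relative-path and that `M00` refers to `store_path0` (here /fastdfs/storage), as the listing above suggests. The class `FileIdSketch` is hypothetical and is not part of the FastDFS Java client.

```java
// Hypothetical helper for illustration only; not part of the FastDFS Java client.
class FileIdSketch {
    final String group;        // storage group, e.g. "group1"
    final String storePath;    // virtual store path, e.g. "M00"
    final String relativePath; // two-level directory plus file name

    FileIdSketch(String fileId) {
        // Split into at most three parts: group / store path / everything else.
        String[] parts = fileId.split("/", 3);
        if (parts.length != 3) {
            throw new IllegalArgumentException("unexpected file ID: " + fileId);
        }
        this.group = parts[0];
        this.storePath = parts[1];
        this.relativePath = parts[2];
    }

    // Maps the ID to a path on the storage server, given the directory
    // configured for this store path (assumed to be store_path0).
    String localPath(String storePathDir) {
        return storePathDir + "/data/" + relativePath;
    }

    public static void main(String[] args) {
        FileIdSketch id =
            new FileIdSketch("group1/M00/00/00/wKgBM1cB-y2AFWsAAAVFOL7FJU4.tar.gz");
        System.out.println(id.group + " " + id.storePath);
        System.out.println(id.localPath("/fastdfs/storage"));
    }
}
```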

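V. Install Nginx on every storage node (only the storage servers need it)

1. What fastdfs-nginx-module does:

FastDFS stores files on storage servers via the tracker, but storage servers in the same group must replicate files to one another, which introduces a synchronization delay. Suppose the tracker directs an upload to 192.168.1.51 and the file ID is returned to the client once the upload succeeds. The FastDFS storage cluster then replicates the file to 192.168.1.52. If the client uses the file ID to fetch the file from 192.168.1.52 before replication completes, the file cannot be accessed. fastdfs-nginx-module redirects such requests to the source server, so clients do not hit errors caused by replication delay. (The extracted fastdfs-nginx-module is used when building Nginx.)

2. Upload fastdfs-nginx-module_v1.16.tar.gz to /usr/local/src (already uploaded earlier)

3. Extract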

[root@yxq src]# ls

FastDFS FastDFS_v5.05.tar.gz ng

fastdfs_client_java._v1.25.tar.gz libfastcommon-master re

fastdfs-nginx-module_v1.16.tar.gz libfastcommon-master.zip re

[root@yxq src]# tar -zxvf fastdfs-nginx-module_v1.16.tar.gz

4. Edit the config file of fastdfs-nginx-module

[root@yxq src]# cd fastdfs-nginx-module/src

[root@yxq src]# vi config

CORE_INCS="$CORE_INCS /usr/local/include/fastdfs /usr/local/include/fastcommon/"

Change it to:

CORE_INCS="$CORE_INCS /usr/include/fastdfs /usr/include/fastcommon/"

5. Upload the stable Nginx release used here (nginx-1.6.2.tar.gz) to /usr/local/src

6. Install the dependencies needed to compile Nginx

[root@yxq src]# yum install gcc gcc-c++ make automake autoconf libtool pcre* zlib openssl openssl-devel

7. Compile and install Nginx (adding the fastdfs-nginx-module module)

[root@yxq src]# cd /usr/local/src/

[root@yxq src]# tar -zxvf nginx-1.6.2.tar.gz

[root@yxq src]# cd nginx-1.6.2

[root@yxq nginx-1.6.2]# ./configure --add-module=/usr/local/src/fastdfs-nginx-module/src

[root@yxq nginx-1.6.2]# make && make install

8. Copy the configuration file from the fastdfs-nginx-module source to /etc/fdfs and edit it

[root@yxq nginx-1.6.2]# cp /usr/local/src/fastdfs-nginx-module/src/mod_fastdfs.conf /etc/fdfs/

Change the following settings:

connect_timeout=10

base_path=/tmp

tracker_server=192.168.1.131:22122

storage_server_port=23000

group_name=group1

url_have_group_name = true

store_path0=/fastdfs/storage
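Several of these values have to agree with /etc/fdfs/storage.conf (for example `tracker_server` and `store_path0`), and a typo in one file is easy to miss. A minimal sketch of a consistency check follows. It assumes both files are plain key=value text with one `tracker_server` line and no backslashes, so that `java.util.Properties` can parse them; the class `ConfCheckSketch` and its methods are hypothetical, and the sample text is inlined rather than read from disk.

```java
import java.io.IOException;
import java.io.StringReader;
import java.io.UncheckedIOException;
import java.util.Properties;

// Hypothetical helper: compares settings shared by two FastDFS config files.
class ConfCheckSketch {

    // Parses key=value text; lines starting with '#' are treated as comments.
    static Properties parse(String confText) {
        Properties props = new Properties();
        try {
            props.load(new StringReader(confText));
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return props;
    }

    // True when the key is present in the first file and both files hold the same value.
    static boolean sameValue(Properties a, Properties b, String key) {
        String value = a.getProperty(key);
        return value != null && value.equals(b.getProperty(key));
    }

    public static void main(String[] args) {
        Properties storage = parse(
            "base_path=/fastdfs/storage\n"
            + "store_path0=/fastdfs/storage\n"
            + "tracker_server=192.168.1.131:22122\n");
        Properties module = parse(
            "base_path=/tmp\n"
            + "tracker_server=192.168.1.131:22122\n"
            + "url_have_group_name = true\n"
            + "store_path0=/fastdfs/storage\n");
        for (String key : new String[] {"tracker_server", "store_path0"}) {
            System.out.println(key + " consistent: " + sameValue(storage, module, key));
        }
    }
}
```

To run the check against the real files, the inlined strings could be replaced with the contents of /etc/fdfs/storage.conf and /etc/fdfs/mod_fastdfs.conf.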

9. Copy some of the FastDFS configuration files to /etc/fdfs

[root@yxq nginx-1.6.2]# cd /usr/local/src/FastDFS/conf

[root@yxq conf]# cp http.conf mime.types /etc/fdfs/

10. In the /fastdfs/storage storage directory, create a symbolic link that points to the directory where the data is actually stored

[root@yxq conf]# ln -s /fastdfs/storage/data /fastdfs/storage/data/M00

11. Configure Nginx

A minimal sample Nginx configuration:

[root@yxq conf]# cd /usr/local/nginx/

[root@yxq nginx-1.6.2]# cd conf

[root@yxq conf]# ls

fastcgi.conf koi-utf mime.types scgi_params win-utf

fastcgi_params koi-win nginx.conf uwsgi_params

[root@yxq conf]# vi nginx.conf

user root;

worker_processes 1;

events {

worker_connections 1024;

}

http {

include mime.types;

default_type application/octet-stream;

#keepalive_timeout 0;

keepalive_timeout 65;

server {

listen 8888;

server_name localhost;

location ~/group([0-9])/M00 {

#alias /fastdfs/storage/data;

ngx_fastdfs_module;

}

#error_page 404 /404.html;

error_page 500 502 503 504 /50x.html;

location = /50x.html {

root html;

}

}

}


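Notes

A. Port 8888 must match http.server_port=8888 in /etc/fdfs/storage.conf.

http.server_port defaults to 8888; if you want to use 80 instead, change both places accordingly.

B. When the storage has multiple groups, the access path includes the group name, e.g. /group1/M00/00/xxx,

and the corresponding Nginx configuration is:

location ~/group([0-9])/M00 {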

ngx_fastdfs_module;

}

C. If downloads keep returning 404, change user nobody on the first line of nginx.conf to user root and restart Nginx.
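To see which request paths the location pattern in note B would hand to the FastDFS module, the regular expression can be tried out in isolation. The sketch below assumes the intended pattern is `/group([0-9])/M00` with no whitespace inside it, and uses Java's regex engine as a stand-in for the PCRE matching that Nginx performs; for a pattern this simple the two behave the same. The class `LocationRegexSketch` is hypothetical.

```java
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical helper: tries the location pattern against sample request paths.
class LocationRegexSketch {
    // The pattern from the location block, searched anywhere in the path.
    static final Pattern LOCATION = Pattern.compile("/group([0-9])/M00");

    // Returns the captured group digit, or -1 when the path does not match.
    static int groupNumber(String uriPath) {
        Matcher m = LOCATION.matcher(uriPath);
        return m.find() ? Integer.parseInt(m.group(1)) : -1;
    }

    public static void main(String[] args) {
        String[] paths = {
            "/group1/M00/00/00/wKgBM1cB-y2AFWsAAAVFOL7FJU4.tar.gz",
            "/group12/M00/00/00/example.jpg",
            "/50x.html"
        };
        for (String path : paths) {
            System.out.println(path + " -> " + groupNumber(path));
        }
    }
}
```

Because `[0-9]` matches exactly one digit, this pattern covers group0 through group9 only; a deployment with a two-digit group name would need a pattern such as `/group([0-9]+)/M00`.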

12. Open Nginx port 8888 in the firewall

[root@yxq conf]# vi /etc/sysconfig/iptables

Add:

-A INPUT -m state --state NEW -m tcp -p tcp --dport 8888 -j ACCEPT

[root@yxq conf]# cat /etc/sysconfig/iptables | grep 8888

-A INPUT -m state --state NEW -m tcp -p tcp --dport 8888 -j ACCEPT

[root@yxq conf]# service iptables restart

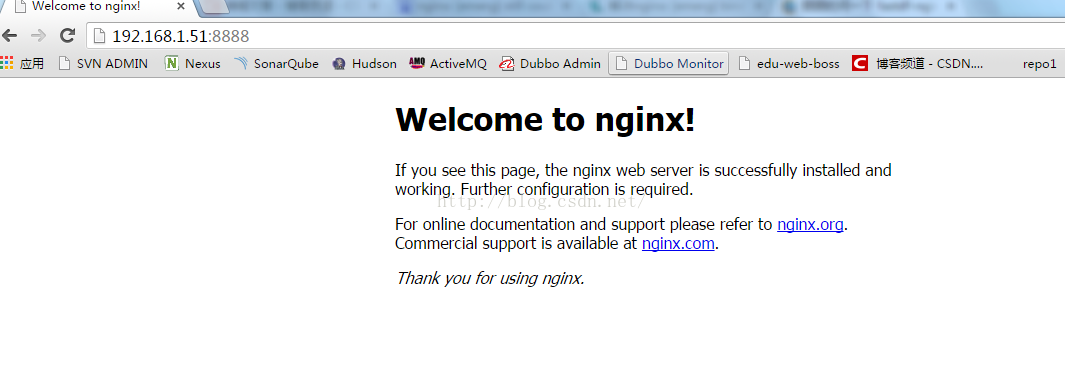

13. Start Nginx

[root@yxq conf]# /usr/local/nginx/sbin/nginx

ngx_http_fastdfs_set pid=15553

(To reload Nginx, run: /usr/local/nginx/sbin/nginx -s reload)

[root@yxq conf]# /usr/local/nginx/sbin/nginx -s reload

ngx_http_fastdfs_set pid=15557

[root@yxq conf]# ps -ef | grep ngx_http_fastdfs_set

root 15561 11293 0 23:25 pts/0 00:00:00 grep ngx_http_fastdfs_set

[root@yxq conf]#

14. Test access to the uploaded file from a browser

Accessing the uploaded file:

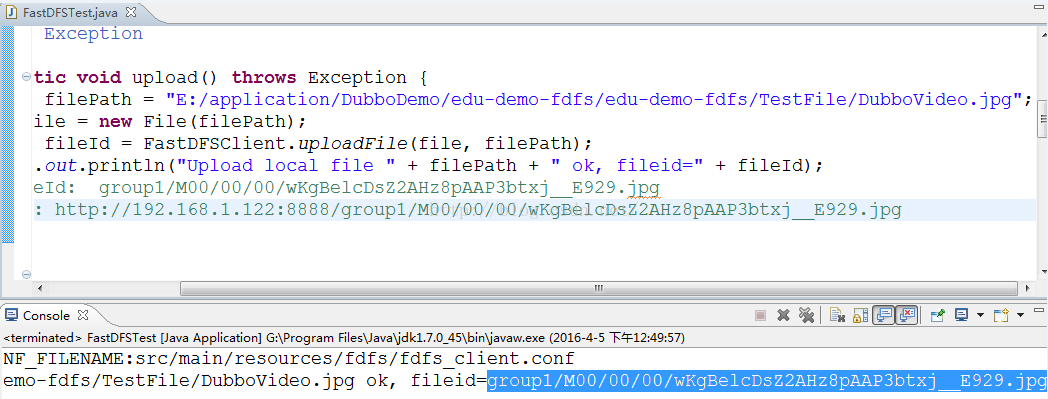

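Using FastDFS from Java code

The purpose of a cluster is high availability and fault tolerance. Do not put all cluster servers on one physical machine; spread the nodes out, so that when one provider goes down the remaining providers keep the system running normally and stably.

I. Environment

edu-provider-01 (192.168.1.121)

edu-provider-02 (192.168.1.122)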

Connecting to 192.168.1.121:22...

Connection established.

To escape to local shell, press 'Ctrl+Alt+]'.

Last login: Sat Apr 9 04:28:07 2016 from 192.168.1.51

[root@edu-provider-01 ~]#

Connecting to 192.168.1.122:22...

Connection established.

To escape to local shell, press 'Ctrl+Alt+]'.

Last login: Sat Apr 9 04:28:07 2016 from 192.168.1.51

[root@edu-provider-02 ~]#

II. Dubbo service cluster

User service: pay-service-user

Trade service: pay-service-trade

I start both services on servers 121 and 122:

[root@edu-provider-01 user]# ./service-user.sh start

=== start pay-service-user

[root@edu-provider-01 user]# cd ..

[root@edu-provider-01 service]# cd trade/

[root@edu-provider-01 trade]# ./service-trade.sh start

=== start pay-service-trade

[root@edu-provider-02 user]# ./service-user.sh start

=== start pay-service-user

[root@edu-provider-02 user]# cd ..

[root@edu-provider-02 service]# cd trade/

[root@edu-provider-02 trade]# ./service-trade.sh start

=== start pay-service-trade

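In the DubboAdmin console you can see that the services on both machines registered successfully.

At this point I can query trade information.

First I stop the trade service on 121: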

[root@edu-provider-01 trade]# ./service-trade.sh stop

=== stop pay-service-trade

[root@edu-provider-01 trade]# ps -ef | grep pay

root 2803 1 8 06:33 pts/0 00:00:50 /usr/jdk/jre/bin/java -Xms128m -Xmx512m -jar pay-service-user.jar

root 2980 2705 0 06:43 pts/0 00:00:00 grep pay

[root@edu-provider-01 trade]#

I can still query trade information.

Next I stop the trade service on 122:

[root@edu-provider-02 trade]# ./service-trade.sh stop

=== stop pay-service-trade

[root@edu-provider-02 trade]# ps -ef | grep pay

root 2639 1 7 06:34 pts/1 00:00:51 /usr/jdk/jre/bin/java -Xms128m -Xmx512m -jar pay-service-user.jar

root 2816 2592 0 06:46 pts/1 00:00:00 grep pay

[root@edu-provider-02 trade]#

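Now querying trade information fails with an exception.

After I start the trade service on 121 again, trade information can be queried once more: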

[root@edu-provider-01 trade]# ./service-trade.sh start

=== start pay-service-trade

[root@edu-provider-01 trade]#

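III. Dubbo fault-tolerance configuration: cluster fault-tolerance modes

Tags:

<dubbo:provider>: provider-side configuration tag, coarse-grained

Example: <!-- Default values used when a ProtocolConfig or ServiceConfig attribute is not set -->

<dubbo:provider timeout="30000" threadpool="fixed" threads="100" accepts="1000" />

<dubbo:service>: service export tag; fault tolerance configured here is fine-grained. Example:

<!-- Exposed service interface -->

<dubbo:service retries="0" interface="edu.pay.facade.trade.service.PaymentFacade" ref="paymentFacade" />

<dubbo:consumer>: consumer-side tag that applies to a whole consumer, coarse-grained

Example: <dubbo:consumer timeout="8000" retries="0" />

<dubbo:reference>: service reference tag; fault tolerance configured here is fine-grained. Example:

<!-- Reference to the account service -->

<dubbo:reference interface="edu.pay.facade.account.service.AccountTransactionFacade" id="accountTransactionFacade" check="false" />

Attribute: cluster

Type: string

Required: no (optional)

Default: failover

Purpose: performance tuning

Description: cluster mode, one of failover/failfast/failsafe/failback/forking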

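1. Failover Cluster

Automatic failover: when a call fails, retry on another server (the default). Usually used for read operations, but retries add latency. The number of retries (not counting the first call) can be set with retries="2".

<dubbo:service retries="2" />

or:

<dubbo:reference retries="2" />

or:

<dubbo:reference>

<dubbo:method name="findFoo" retries="2" />

</dubbo:reference>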
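The retry counting described for failover (one initial call plus up to `retries` further attempts on other servers) can be sketched in a few lines. This is an illustration of the idea only, not Dubbo's implementation: Dubbo chooses the next provider through its load balancer, whereas this sketch simply walks a list in order, and the class `FailoverSketch` is hypothetical.

```java
import java.util.Arrays;
import java.util.List;
import java.util.function.Supplier;

// Hypothetical illustration of failover; not Dubbo's actual cluster code.
class FailoverSketch {

    // Makes one initial call plus at most `retries` further calls,
    // moving to the next provider in the list after each failure.
    static <T> T invoke(List<Supplier<T>> providers, int retries) {
        if (providers.isEmpty() || retries < 0) {
            throw new IllegalArgumentException("need a provider and retries >= 0");
        }
        RuntimeException lastFailure = null;
        for (int attempt = 0; attempt <= retries; attempt++) {
            Supplier<T> provider = providers.get(attempt % providers.size());
            try {
                return provider.get();
            } catch (RuntimeException e) {
                lastFailure = e; // remember the failure and try the next provider
            }
        }
        throw lastFailure; // every attempt failed
    }

    public static void main(String[] args) {
        Supplier<String> down = () -> { throw new IllegalStateException("provider down"); };
        Supplier<String> up = () -> "trade record";
        // First provider fails, second answers: succeeds on the first retry.
        System.out.println(invoke(Arrays.asList(down, up), 2));
    }
}
```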

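2. Failfast Cluster

Fail fast: make only one call and report an error immediately on failure. Usually used for non-idempotent write operations, such as inserting a record.

<dubbo:service cluster="failfast" />

or:

<dubbo:reference cluster="failfast" />

3. Failsafe Cluster

Fail safe: when an exception occurs, simply ignore it. Usually used for operations such as writing audit logs.

<dubbo:service cluster="failsafe"/>

or:

<dubbo:reference cluster="failsafe"/>

4. Failback Cluster

Automatic recovery on failure: failed requests are recorded in the background and resent periodically. Usually used for message notification operations.

<dubbo:service cluster="failback"/>

or:

<dubbo:reference cluster="failback"/>

5. Forking Cluster

Call several servers in parallel and return as soon as one succeeds. Usually used for read operations with strict real-time requirements, at the cost of more server resources. The maximum parallelism can be set with forks="2".

<dubbo:service cluster="forking"/>

or:

<dubbo:reference cluster="forking"/>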
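The forking behaviour (call several providers at once, take the first success) maps closely onto `ExecutorService.invokeAny` from the JDK, so it can be sketched without any Dubbo classes. This is an illustration of the idea rather than Dubbo's implementation; the class `ForkingSketch` and the way it picks the first `forks` providers from a list are assumptions made for the example.

```java
import java.util.Arrays;
import java.util.List;
import java.util.concurrent.Callable;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;

// Hypothetical illustration of forking; not Dubbo's actual cluster code.
class ForkingSketch {

    // Calls up to `forks` providers in parallel and returns the first successful result.
    static <T> T invoke(List<Callable<T>> providers, int forks) {
        List<Callable<T>> selected =
            providers.subList(0, Math.min(forks, providers.size()));
        ExecutorService pool = Executors.newFixedThreadPool(selected.size());
        try {
            // invokeAny returns the result of a task that completed without
            // throwing, and fails only if none of them did.
            return pool.invokeAny(selected);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted while waiting", e);
        } catch (ExecutionException e) {
            throw new IllegalStateException("all forked calls failed", e.getCause());
        } finally {
            pool.shutdownNow(); // cancel calls that are still running
        }
    }

    public static void main(String[] args) {
        Callable<String> down = () -> { throw new IllegalStateException("provider down"); };
        Callable<String> up = () -> "trade record";
        System.out.println(invoke(Arrays.asList(down, up), 2));
    }
}
```

The resource cost mentioned above is visible here: every call occupies one thread per forked provider, even though only one result is used.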


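In real projects running in production, when using failover mode we can design service interfaces according to the interface segregation principle, keeping query services separate from write services.

For query interfaces we can set retries="2".

For write interfaces we set retries="0". Otherwise a timeout triggers a retry, and the write can be applied more than once. So with failover mode, separate the read and write interfaces, and set retries=0 on the write interfaces.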
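The duplicate-write problem can be reproduced with a small simulation. The sketch assumes a write that the provider executes successfully but answers too slowly, so the caller sees a timeout; with failover retries the same row is then written again on each attempt. Everything here (`RetryWriteSketch`, the in-memory list standing in for a table) is hypothetical and only mimics the behaviour described above.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical simulation of retried writes; no Dubbo classes involved.
class RetryWriteSketch {

    // Simulated slow provider: the row is stored, but the caller still sees a timeout.
    static void slowInsert(List<String> table, String row) {
        table.add(row);
        throw new IllegalStateException("timeout waiting for response");
    }

    // Failover-style call: one initial attempt plus `retries` further attempts.
    static void insertWithRetries(List<String> table, String row, int retries) {
        for (int attempt = 0; attempt <= retries; attempt++) {
            try {
                slowInsert(table, row);
                return;
            } catch (IllegalStateException timeout) {
                // failover: fall through and try again
            }
        }
    }

    public static void main(String[] args) {
        List<String> withRetries = new ArrayList<>();
        insertWithRetries(withRetries, "payment#1", 2);
        System.out.println("retries=2 rows written: " + withRetries.size());

        List<String> withoutRetries = new ArrayList<>();
        insertWithRetries(withoutRetries, "payment#1", 0);
        System.out.println("retries=0 rows written: " + withoutRetries.size());
    }
}
```

With retries=2 the simulated table ends up with three copies of the same row, while retries=0 leaves exactly one, which is the reason for setting retries=0 on write interfaces.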
