Introduction

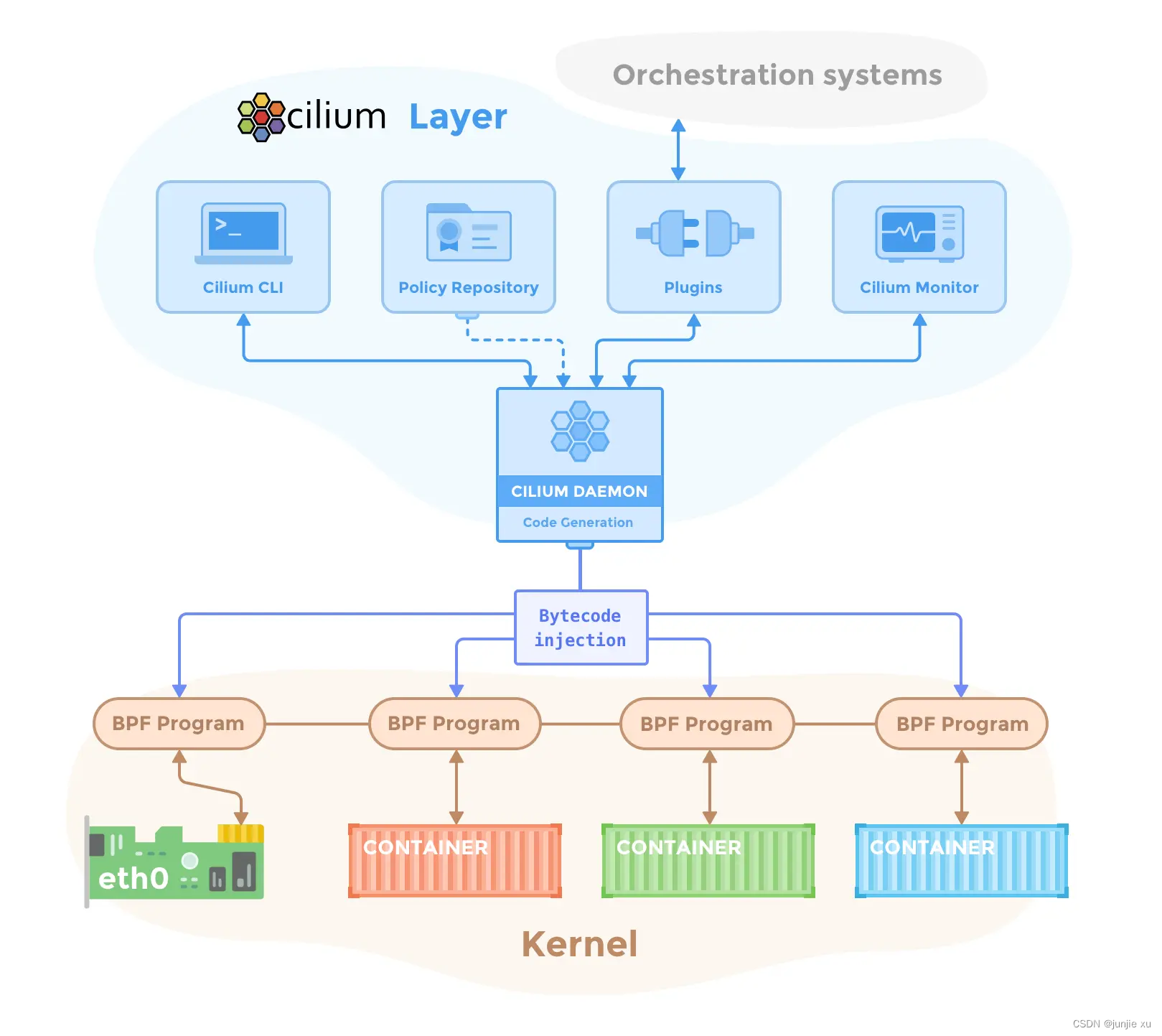

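Cilium is an open-source project for container networking. It provides, and transparently secures, network connectivity and load balancing between application workloads such as application containers or processes.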

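Cilium operates at Layers 3/4 to provide traditional networking and security services, and at Layer 7 to secure modern application protocols such as HTTP, gRPC, and Kafka. It integrates with common container orchestration frameworks such as Kubernetes and Mesos.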

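Cilium is built on BPF: it generates kernel-level BPF programs that interact directly with containers. Instead of creating an overlay network for containers, Cilium lets each container be assigned an IPv6 address (or an IPv4 address) and isolates containers from one another by container labels rather than by network routing rules. It also integrates with orchestration systems to create and enforce Cilium policies.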
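To make label-based isolation concrete, here is a minimal sketch in Go. The types `labels` and `rule`, the function `allowed`, and the default-deny behaviour are all assumptions made for the example. This is not Cilium's policy engine, only an illustration of a decision that depends on labels and never on IP addresses or routes.

```go
package main

import "fmt"

// labels is a set of key/value pairs attached to a container (hypothetical type).
type labels map[string]string

// matches reports whether l carries every key/value pair in selector.
func (l labels) matches(selector labels) bool {
	for k, v := range selector {
		if got, ok := l[k]; !ok || got != v {
			return false
		}
	}
	return true
}

// rule allows traffic from containers matching from to containers matching to.
type rule struct {
	from, to labels
}

// allowed applies default-deny: traffic passes only if some rule selects both ends.
func allowed(rules []rule, src, dst labels) bool {
	for _, r := range rules {
		if src.matches(r.from) && dst.matches(r.to) {
			return true
		}
	}
	return false
}

func main() {
	rules := []rule{{from: labels{"app": "frontend"}, to: labels{"app": "backend"}}}
	frontend := labels{"app": "frontend", "env": "prod"}
	backend := labels{"app": "backend"}

	fmt.Println(allowed(rules, frontend, backend)) // a rule selects this direction
	fmt.Println(allowed(rules, backend, frontend)) // no rule selects this direction
}
```

Because the rule refers only to labels, a container keeps the same permissions when it is rescheduled and receives a different IP address.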

Installation

$ curl -L --remote-name-all https://github.com/cilium/cilium-cli/releases/latest/download/cilium-linux-amd64.tar.gz{,.sha256sum}

$ sudo tar -zxvf cilium-linux-amd64.tar.gz -C /usr/local/bin

$ cilium install

ℹ️ Using Cilium version 1.13.0

🔮 Auto-detected cluster name: kubernetes

🔮 Auto-detected datapath mode: tunnel

🔮 Auto-detected kube-proxy has not been installed

ℹ️ Cilium will fully replace all functionalities of kube-proxy

ℹ️ helm template --namespace kube-system cilium cilium/cilium --version 1.13.0 --set bpf.masquerade=true,cluster.id=0,cluster.name=kubernetes,encryption.nodeEncryption=false,k8sServiceHost=1.2.3.226,k8sServicePort=6443,kubeProxyReplacement=strict,operator.replicas=1,serviceAccounts.cilium.name=cilium,serviceAccounts.operator.name=cilium-operator,tunnel=vxlan

ℹ️ Storing helm values file in kube-system/cilium-cli-helm-values Secret

🔑 Created CA in secret cilium-ca

🔑 Generating certificates for Hubble...

🚀 Creating Service accounts...

🚀 Creating Cluster roles...

🚀 Creating ConfigMap for Cilium version 1.13.0...

🚀 Creating Agent DaemonSet...

🚀 Creating Operator Deployment...

⌛ Waiting for Cilium to be installed and ready...

♻️ Restarting unmanaged pods...

♻️ Restarted unmanaged pod kube-system/coredns-6d8c4cb4d-4nf2s

✅ Cilium was successfully installed! Run 'cilium status' to view installation health

// Install Hubble

$ cilium hubble enable --ui

🔑 Found CA in secret cilium-ca

ℹ️ helm template --namespace kube-system cilium cilium/cilium --version 1.13.0 --set bpf.masquerade=true,cluster.id=0,cluster.name=kubernetes,encryption.nodeEncryption=false,hubble.enabled=true,hubble.relay.enabled=true,hubble.tls.ca.cert=LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSUNGVENDQWJxZ0F3SUJBZ0lVUTc1YkNSZU4yc01yVndtbFREWWJic0ZaZ1NBd0NnWUlLb1pJemowRUF3SXcKYURFTE1Ba0dBMVVFQmhNQ1ZWTXhGakFVQmdOVkJBZ1REVk5oYmlCR2NtRnVZMmx6WTI4eEN6QUpCZ05WQkFjVApBa05CTVE4d0RRWURWUVFLRXdaRGFXeHBkVzB4RHpBTkJnTlZCQXNUQmtOcGJHbDFiVEVTTUJBR0ExVUVBeE1KClEybHNhWFZ0SUVOQk1CNFhEVEl6TURJeU5qQTJORGt3TUZvWERUSTRNREl5TlRBMk5Ea3dNRm93YURFTE1Ba0cKQTFVRUJoTUNWVk14RmpBVUJnTlZCQWdURFZOaGJpQkdjbUZ1WTJselkyOHhDekFKQmdOVkJBY1RBa05CTVE4dwpEUVlEVlFRS0V3WkRhV3hwZFcweER6QU5CZ05WQkFzVEJrTnBiR2wxYlRFU01CQUdBMVVFQXhNSlEybHNhWFZ0CklFTkJNRmt3RXdZSEtvWkl6ajBDQVFZSUtvWkl6ajBEQVFjRFFnQUVlcm1PbFJOZjZtSzBkNXcxbWNuRjV6RlEKb1ZGaVZUSGxKTmczQkM4Nzd4QnA5VnN0b0h3OU54Y0c1R1o0WXFoNFd0NXdhMG90dVBhRXJSbFk3RFBlbjZOQwpNRUF3RGdZRFZSMFBBUUgvQkFRREFnRUdNQThHQTFVZEV3RUIvd1FGTUFNQkFmOHdIUVlEVlIwT0JCWUVGTGViCkdSSUgxTVZGTlNpYW5XaTYyYXZRQzhLYU1Bb0dDQ3FHU000OUJBTUNBMGtBTUVZQ0lRQ3FOSHFpa3VCWUpkdHMKYVBadndUMWhIZEd4aCtyWVBWc1RQTnprd2pxQzV3SWhBTnVuOFQ0TThhTC9CUmhSb1pXTlE4TXYxc083bGZJRApvT3BtYS9NcmFEVTkKLS0tLS1FTkQgQ0VSVElGSUNBVEUtLS0tLQo=,hubble.tls.ca.key=[--- REDACTED WHEN PRINTING TO TERMINAL (USE --redact-helm-certificate-keys=false TO PRINT) ---],hubble.ui.enabled=true,k8sServiceHost=1.2.3.226,k8sServicePort=6443,kubeProxyReplacement=strict,operator.replicas=1,serviceAccounts.cilium.name=cilium,serviceAccounts.operator.name=cilium-operator,tunnel=vxlan

✨ Patching ConfigMap cilium-config to enable Hubble...

🚀 Creating ConfigMap for Cilium version 1.13.0...

♻️ Restarted Cilium pods

⌛ Waiting for Cilium to become ready before deploying other Hubble component(s)...

🚀 Creating Peer Service...

✨ Generating certificates...

🔑 Generating certificates for Relay...

✨ Deploying Relay...

✨ Deploying Hubble UI and Hubble UI Backend...

⌛ Waiting for Hubble to be installed...

ℹ️ Storing helm values file in kube-system/cilium-cli-helm-values Secret

✅ Hubble was successfully enabled!

Change the Hubble UI Service to type NodePort so that the UI can be reached from outside the cluster.

Components and Internals

Cilium-agent

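cilium-agent runs as a DaemonSet on every node in the cluster. It accepts configuration through Kubernetes or its API; this configuration describes networking, service load balancing, network policies, and visibility and monitoring requirements. The agent listens for events from orchestration systems such as Kubernetes to learn when containers or workloads are started and stopped. It manages the eBPF programs that the Linux kernel uses to control all network access in and out of those containers.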

Initialization

Initialization generates the node's Cilium configuration.

Path: /var/run/cilium/state/globals/node_config.h

cilium-agent --config-dir=/tmp/cilium/config-map

Create the BPF maps, which are pinned under /sys/fs/bpf/tc/globals/

ctmap.InitMapInfo(option.Config.CTMapEntriesGlobalTCP, option.Config.CTMapEntriesGlobalAny,

option.Config.EnableIPv4, option.Config.EnableIPv6, option.Config.EnableNodePort)

policymap.InitMapInfo(option.Config.PolicyMapEntries)

lbmapInitParams := lbmap.InitParams{

IPv4: option.Config.EnableIPv4,

IPv6: option.Config.EnableIPv6,

MaxSockRevNatMapEntries: option.Config.SockRevNatEntries,

ServiceMapMaxEntries: option.Config.LBMapEntries,

BackEndMapMaxEntries: option.Config.LBMapEntries,

RevNatMapMaxEntries: option.Config.LBMapEntries,

AffinityMapMaxEntries: option.Config.LBMapEntries,

SourceRangeMapMaxEntries: option.Config.LBMapEntries,

MaglevMapMaxEntries: option.Config.LBMapEntries,

}

Register the local node with the node manager

nodeMngr.Subscribe(dp.Node())

m.nodeHandlers[nh] = struct{}{}
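The subscription above amounts to adding the handler to a set, which the manager later walks to deliver node events. A minimal sketch of that pattern follows. The `NodeHandler` interface, the `Manager` type, and the `NodeAdded` method are hypothetical simplifications and do not reproduce Cilium's actual types.

```go
package main

import (
	"fmt"
	"sync"
)

// NodeHandler is a hypothetical stand-in for the datapath's node handler.
type NodeHandler interface {
	NodeAdd(name string)
}

// Manager keeps the subscribed handlers in a set keyed by the handler itself.
type Manager struct {
	mu       sync.RWMutex
	handlers map[NodeHandler]struct{}
}

func NewManager() *Manager {
	return &Manager{handlers: make(map[NodeHandler]struct{})}
}

// Subscribe registers nh; subscribing the same handler twice is a no-op.
func (m *Manager) Subscribe(nh NodeHandler) {
	m.mu.Lock()
	defer m.mu.Unlock()
	m.handlers[nh] = struct{}{}
}

// NodeAdded notifies every subscribed handler and returns how many were notified.
func (m *Manager) NodeAdded(name string) int {
	m.mu.RLock()
	defer m.mu.RUnlock()
	for nh := range m.handlers {
		nh.NodeAdd(name)
	}
	return len(m.handlers)
}

// recorder is a toy handler that remembers the nodes it has seen.
type recorder struct{ seen []string }

func (r *recorder) NodeAdd(name string) { r.seen = append(r.seen, name) }

func main() {
	m := NewManager()
	r := &recorder{}
	m.Subscribe(r)
	m.Subscribe(r) // the duplicate collapses into the existing set entry
	fmt.Println(m.NodeAdded("cilium1"), r.seen)
}
```

Using `map[NodeHandler]struct{}` gives set semantics with zero-size values, which is why the original line assigns `struct{}{}`.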

Create the Daemon, which holds all of the managers

// datapath is the underlying datapath implementation to use to

// implement all aspects of an agent

datapath datapath.Datapath

// nodeDiscovery defines the node discovery logic of the agent

nodeDiscovery *nodediscovery.NodeDiscovery

// ipam is the IP address manager of the agent

ipam *ipam.IPAM

netConf *cnitypes.NetConf

endpointManager endpointmanager.EndpointManager

identityAllocator CachingIdentityAllocator

ipcache *ipcache.IPCache

k8sWatcher *watchers.K8sWatcher

redirectPolicyManager *redirectpolicy.Manager

egressGatewayManager *egressgateway.Manager

cgroupManager *manager.CgroupManager

Apply system settings (sysctl)

rp_filter

fib_multipath_use_neigh

unprivileged_bpf_disabled

forwarding
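To check these settings on a node, one can read them back from procfs. The sketch below assumes the short names above refer to the fully qualified parameters in `names`; that mapping is an assumption, as are the helper names `sysctlPath` and `readSysctl`. It only reads values and does not change them.

```go
package main

import (
	"fmt"
	"os"
	"path/filepath"
	"strings"
)

// sysctlPath converts a dotted sysctl name into its file path under root.
// Names that contain dots inside a single component are not handled.
func sysctlPath(root, name string) string {
	return filepath.Join(root, strings.ReplaceAll(name, ".", "/"))
}

// readSysctl returns the current value of a sysctl with surrounding whitespace trimmed.
func readSysctl(root, name string) (string, error) {
	b, err := os.ReadFile(sysctlPath(root, name))
	if err != nil {
		return "", err
	}
	return strings.TrimSpace(string(b)), nil
}

func main() {
	// Assumed fully qualified names for the short names listed above.
	names := []string{
		"net.ipv4.conf.all.rp_filter",
		"net.ipv4.fib_multipath_use_neigh",
		"kernel.unprivileged_bpf_disabled",
		"net.ipv4.conf.all.forwarding",
	}
	for _, n := range names {
		v, err := readSysctl("/proc/sys", n)
		if err != nil {
			fmt.Printf("%s: %v\n", n, err)
			continue
		}
		fmt.Printf("%s = %s\n", n, v)
	}
}
```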

IPAM

IPAM first obtains the node's InternalIP

Each node is assigned a CIDR, for example v4Prefix=10.0.0.0/24

AllocateIPs

Cluster-Name: kubernetes

Local node-name: cilium1

External-Node IPv4: 1.2.3.226

Internal-Node IPv4: 10.0.0.2

IPv4 allocation prefix: 10.0.0.0/24

Loopback IPv4: 169.254.42.1

Local IPv4 addresses:

- 1.2.3.226

- 10.0.0.2

CNI

Request this node's configuration from cilium-agent

conf, err = getConfigFromCiliumAgent(c)

if err != nil {

return

}

The IPAM mode in the configuration is cluster-pool

Request an address through cilium-agent

podName := string(cniArgs.K8S_POD_NAMESPACE) + "/" + string(cniArgs.K8S_POD_NAME)

ipam, err := client.IPAMAllocate("", podName, true)

allocate_ip

func (h *postIPAM) Handle(params ipamapi.PostIpamParams) middleware.Responder {

The offset of the next IP is computed from the IPs already allocated and their count, which yields the IP to hand out.
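The offset-based idea can be sketched as follows, using the node prefix 10.0.0.0/24 from the IPAM output earlier. The `pool` type and its methods are hypothetical, and the first-free scan is a simplification chosen for readability; it is not Cilium's allocator.

```go
package main

import (
	"errors"
	"fmt"
	"net/netip"
)

// pool is a hypothetical per-node allocator over a single prefix.
type pool struct {
	prefix netip.Prefix
	used   map[netip.Addr]bool
}

func newPool(cidr string) (*pool, error) {
	p, err := netip.ParsePrefix(cidr)
	if err != nil {
		return nil, err
	}
	return &pool{prefix: p.Masked(), used: make(map[netip.Addr]bool)}, nil
}

// allocateNext walks the prefix starting at offset 1 (skipping the network
// address) and returns the first address that is not in use yet.
// Simplification: the last address of the prefix is not reserved.
func (p *pool) allocateNext() (netip.Addr, error) {
	for addr := p.prefix.Addr().Next(); addr.IsValid() && p.prefix.Contains(addr); addr = addr.Next() {
		if !p.used[addr] {
			p.used[addr] = true
			return addr, nil
		}
	}
	return netip.Addr{}, errors.New("pool exhausted")
}

func main() {
	p, err := newPool("10.0.0.0/24")
	if err != nil {
		panic(err)
	}
	for i := 0; i < 3; i++ {
		ip, err := p.allocateNext()
		fmt.Println(ip, err)
	}
}
```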

Interface creation

Create the veth pair, then DisableRpFilter, SetMTU, SetUp, SetGROMaxSize, SetGSOMaxSize, and configure the IP address and routes inside the network namespace.

Create the endpoint

Call cilium-agent to create the endpoint

Endpoint creation

func (d *Daemon) createEndpoint(ctx context.Context, owner regeneration.Owner, epTemplate *models.EndpointChangeRequest) (*endpoint.Endpoint, int, error)

Endpoint contents

Allocate an ID for the endpoint

err = mgr.expose(ep)

func (mgr *endpointManager) expose(ep *endpoint.Endpoint) error {

newID, err := mgr.AllocateID(ep.ID)

if err != nil {

return err

}

mgr.mutex.Lock()

// Get a copy of the identifiers before exposing the endpoint

identifiers := ep.IdentifiersLocked()

ep.Start(newID)

func (e *Endpoint) Regenerate(regenMetadata *regeneration.ExternalRegenerationMetadata) <-chan bool {

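// The BPF programs are written to /var/run/cilium/state on the host. regenerateBPF() rewrites the BPF headers and updates the BPF maps. Updating the maps matters because the BPF programs attached to the interfaces look up data in them when they run: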

func (e *Endpoint) regenerateBPF(regenContext *regenerationContext) (revnum uint64, stateDirComplete bool, reterr error) {

// Compile and install BPF programs for this endpoint

compilationExecuted, err = e.realizeBPFState(regenContext)

Compile the BPF program

err = e.owner.Datapath().Loader().CompileOrLoad(datapathRegenCtxt.completionCtx, datapathRegenCtxt.epInfoCache, &stats.datapathRealization)

/var/run/cilium/state/<ENDPOINT ID>/

err := compileDatapath(ctx, dirs, ep.IsHost(), ep.Logger(Subsystem))

Attach the BPF program

err = l.reloadDatapath(ctx, ep, dirs)

// bpf_lxc.o

filter := &netlink.BpfFilter{

FilterAttrs: netlink.FilterAttrs{

LinkIndex: link.Attrs().Index,

Parent: qdiscParent,

Handle: 1,

Protocol: unix.ETH_P_ALL,

Priority: option.Config.TCFilterPriority,

},

Fd: prog.FD(),

Name: fmt.Sprintf("cilium-%s", link.Attrs().Name),

DirectAction: true,

}

Attachment result

// Regular pod: from_container

# tc filter show dev lxc1466f225c091 ingress

filter protocol all pref 1 bpf chain 0

filter protocol all pref 1 bpf chain 0 handle 0x1 cilium-lxc1466f225c091 direct-action not_in_hw id 1717 name cil_from_contai tag a6c2cf4a8cf17a1c jited

// cilium_net, cilium_host: to_host, from_host

# tc filter show dev cilium_host ingress

filter protocol all pref 1 bpf chain 0

filter protocol all pref 1 bpf chain 0 handle 0x1 cilium-cilium_host direct-action not_in_hw id 1597 name cil_to_host tag 6b89d4d09c799b6f jited

# tc filter show dev cilium_host egress

filter protocol all pref 1 bpf chain 0

filter protocol all pref 1 bpf chain 0 handle 0x1 cilium-cilium_host direct-action not_in_hw id 1612 name cil_from_host tag ec83ff303e14698d jited

// cilium_vxlan: to_overlay, from_overlay

# tc filter show dev cilium_vxlan ingress

filter protocol all pref 1 bpf chain 0

filter protocol all pref 1 bpf chain 0 handle 0x1 bpf_overlay.o:[from-overlay] direct-action not_in_hw id 1509 name cil_from_overla tag 10c7559775622132 jited

# tc filter show dev cilium_vxlan egress

filter protocol all pref 1 bpf chain 0

filter protocol all pref 1 bpf chain 0 handle 0x1 bpf_overlay.o:[to-overlay] direct-action not_in_hw id 1520 name cil_to_overlay tag 4d78a91325ebff94 jited

// ens3: from_netdev, to_netdev

# tc filter show dev ens3 ingress

filter protocol all pref 1 bpf chain 0

filter protocol all pref 1 bpf chain 0 handle 0x1 cilium-ens3 direct-action not_in_hw id 1635 name cil_from_netdev tag 764697679c641345 jited

# tc filter show dev ens3 egress

filter protocol all pref 1 bpf chain 0

filter protocol all pref 1 bpf chain 0 handle 0x1 cilium-ens3 direct-action not_in_hw id 1636 name cil_to_netdev tag 8a513eccee57644b jited

Using the Cilium CLI

The CLI is powerful and can display almost all of the agent's state.

# kubectl exec cilium-wkfqx -n kube-system -- cilium --help

Available Commands:

bpf Direct access to local BPF maps

build-config Resolve all of the configuration sources that apply to this node

cleanup Remove system state installed by Cilium at runtime

completion Output shell completion code

config Cilium configuration options

debuginfo Request available debugging information from agent

encrypt Manage transparent encryption

endpoint Manage endpoints

fqdn Manage fqdn proxy

help Help about any command

identity Manage security identities

ip Manage IP addresses and associated information

kvstore Direct access to the kvstore

lrp Manage local redirect policies

map Access userspace cached content of BPF maps

metrics Access metric status

monitor Display BPF program events

node Manage cluster nodes

nodeid List node IDs and associated information

policy Manage security policies

prefilter Manage XDP CIDR filters

preflight Cilium upgrade helper

recorder Introspect or mangle pcap recorder

service Manage services & loadbalancers

status Display status of daemon

version Print version information

It can show the endpoints created for Kubernetes pods:

kubectl exec cilium-wkfqx -n kube-system -- cilium endpoint list

It can even show the contents of the cilium_lxc map:

kubectl exec cilium-wkfqx -n kube-system -- cilium map get cilium_lxc

Key Value State Error

10.0.0.77:0 id=1033 flags=0x0000 ifindex=9 mac=8A:65:66:99:93:67 nodemac=66:52:92:66:66:01 sync

10.0.0.231:0 id=692 flags=0x0000 ifindex=13 mac=F6:DF:83:C1:42:AE nodemac=4A:D2:EC:B7:41:7C sync

10.0.0.157:0 id=2351 flags=0x0000 ifindex=19 mac=36:B0:81:DD:CB:53 nodemac=5A:E7:3B:D0:B8:C5 sync

10.0.0.243:0 id=505 flags=0x0000 ifindex=21 mac=9A:A7:AD:85:CC:E3 nodemac=E6:5B:CE:77:AA:3D sync
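When this output needs to be processed by a script, each row can be split into its key, its `name=value` fields, and the trailing state. The sketch below assumes the row layout shown above; the `lxcEntry` type and `parseLXCLine` function are hypothetical helpers, not part of the Cilium CLI.

```go
package main

import (
	"fmt"
	"strings"
)

// lxcEntry holds the parts of one row printed by `cilium map get cilium_lxc`.
type lxcEntry struct {
	Key    string            // e.g. "10.0.0.77:0"
	Fields map[string]string // e.g. "id" -> "1033"
	State  string            // e.g. "sync"
}

// parseLXCLine splits a row into its key, its name=value fields, and its state.
func parseLXCLine(line string) (lxcEntry, error) {
	parts := strings.Fields(line)
	if len(parts) < 2 {
		return lxcEntry{}, fmt.Errorf("malformed line: %q", line)
	}
	e := lxcEntry{Key: parts[0], Fields: map[string]string{}}
	for _, p := range parts[1:] {
		if k, v, ok := strings.Cut(p, "="); ok {
			e.Fields[k] = v
		} else {
			e.State = p // a bare token after the value is treated as the State column
		}
	}
	return e, nil
}

func main() {
	line := "10.0.0.77:0 id=1033 flags=0x0000 ifindex=9 mac=8A:65:66:99:93:67 nodemac=66:52:92:66:66:01 sync"
	e, err := parseLXCLine(line)
	if err != nil {
		panic(err)
	}
	fmt.Println(e.Key, e.Fields["id"], e.Fields["ifindex"], e.State)
}
```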

Monitoring

kubectl exec cilium-wkfqx -n kube-system -- cilium monitor

BPF map operations

Cilium-agent

When a pod is added, the corresponding endpoint is written to the map

err = lxcmap.WriteEndpoint(datapathRegenCtxt.epInfoCache)

for _, v := range GetBPFKeys(f) {

if err := LXCMap().Update(v, info); err != nil {

return err

}

}

if err = m.open(); err != nil {

return err

}

err = UpdateElement(m.fd, m.name, key.GetKeyPtr(), value.GetValuePtr(), 0)

if option.Config.MetricsConfig.BPFMapOps {

metrics.BPFMapOps.WithLabelValues(m.commonName(), metricOpUpdate, metrics.Error2Outcome(err)).Inc()

}

For example, EndpointInfo:

info := &EndpointInfo{

IfIndex: uint32(e.GetIfIndex()), // interface index

// Store security identity in network byte order so it can be

// written into the packet without an additional byte order

// conversion.

LxcID: uint16(e.GetID()), // endpoint ID

MAC: mac, // interface MAC

NodeMAC: nodeMAC, // host-side MAC

}

Key

type EndpointKey struct {

// represents both IPv6 and IPv4 (in the lowest four bytes)

IP types.IPv6 `align:"$union0"` // IP bytes

Family uint8 `align:"family"` // 1 (IPv4)

Key uint8 `align:"key"` // 0

ClusterID uint8 `align:"cluster_id"` // 0

Pad uint8 `align:"pad"` // 0

}

Entries are stored in the hash map under the key above, with EndpointInfo as the value.
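The key/value layout can be tried out in user space with an ordinary Go map standing in for the BPF hash map. The types below are simplified, hypothetical copies of the structs above (`endpointKey`, `endpointInfo`), and `keyFor` is an illustrative helper. The entry values come from the first row of the cilium_lxc output shown earlier.

```go
package main

import (
	"fmt"
	"net/netip"
)

const endpointKeyIPv4 = 1 // family value noted on the Family field above

// endpointKey is a simplified, comparable stand-in for EndpointKey.
type endpointKey struct {
	IP        [16]byte // an IPv4 address occupies the first four bytes
	Family    uint8
	Key       uint8
	ClusterID uint8
	Pad       uint8
}

// endpointInfo carries a subset of the EndpointInfo fields.
type endpointInfo struct {
	IfIndex uint32
	LxcID   uint16
}

// keyFor builds the lookup key for an IPv4 address given in dotted form.
func keyFor(ip string) (endpointKey, error) {
	addr, err := netip.ParseAddr(ip)
	if err != nil {
		return endpointKey{}, err
	}
	if !addr.Is4() {
		return endpointKey{}, fmt.Errorf("%s is not an IPv4 address", ip)
	}
	k := endpointKey{Family: endpointKeyIPv4}
	ip4 := addr.As4()
	copy(k.IP[:], ip4[:])
	return k, nil
}

func main() {
	endpoints := map[endpointKey]endpointInfo{} // stands in for the cilium_lxc map

	k, err := keyFor("10.0.0.77")
	if err != nil {
		panic(err)
	}
	endpoints[k] = endpointInfo{IfIndex: 9, LxcID: 1033}

	// A later lookup rebuilds the same key from the IP and finds the entry.
	lookup, _ := keyFor("10.0.0.77")
	info, ok := endpoints[lookup]
	fmt.Println(info.LxcID, info.IfIndex, ok)
}
```

The lookup only succeeds when every field of the key matches, including the zeroed padding, which is why the eBPF side initializes the whole key struct before filling in the IP and family.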

eBPF

When an eBPF program needs to look up this endpoint, it queries the map with map_lookup_elem

static __always_inline __maybe_unused struct endpoint_info *

__lookup_ip4_endpoint(__u32 ip)

{

struct endpoint_key key = {};

key.ip4 = ip;

key.family = ENDPOINT_KEY_IPV4;

return map_lookup_elem(&ENDPOINTS_MAP, &key);

}
