The steps in this post mainly follow: https://blog.csdn.net/woshixiazaizhe/article/details/80610432?spm=1001.2014.3001.5506

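1. The ZooKeeper bundled with Kafka reports on startup: ZooKeeper audit is disabled

Edit the zookeeper.properties file in the config folder of the Kafka installation directory and set this option: audit.enable=true (add the line if it is not already there)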

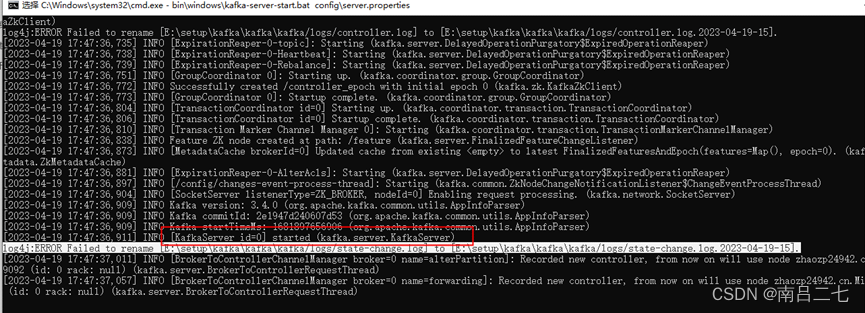
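
If you would rather script that edit than do it by hand, here is a minimal sketch. The helper name `set_property` is made up for this post, and it only works on the text of the file, so you still have to read and write config/zookeeper.properties yourself. It replaces an existing uncommented `audit.enable` line or appends one if none is found.

```python
def set_property(text: str, key: str, value: str) -> str:
    """Return `text` with `key=value` set (hypothetical helper, not part of Kafka)."""
    lines = text.splitlines()
    new_line = f"{key}={value}"
    for i, line in enumerate(lines):
        stripped = line.strip()
        # Commented-out entries are left alone.
        if stripped.startswith("#"):
            continue
        name, sep, _ = stripped.partition("=")
        if sep and name.strip() == key:
            lines[i] = new_line  # overwrite the existing entry
            break
    else:
        lines.append(new_line)  # no entry found, so add one at the end
    return "\n".join(lines) + "\n"


# Sample file content, made up for illustration.
sample = "dataDir=/tmp/zookeeper\nclientPort=2181\n"
updated = set_property(sample, "audit.enable", "true")
print(updated)
```

To apply it for real, read the file into a string, pass it through `set_property`, and write the result back (keep a backup copy first).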

2. Starting ZooKeeper and then Kafka works, but an ERROR is shown

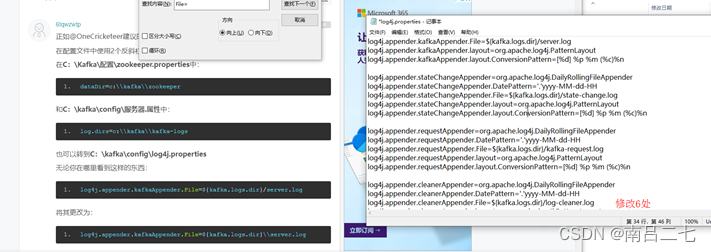

Modify it like this


3. After following all the steps and creating the Topic, I went on to produce and subscribe to data locally with Python
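
Before running the Python clients, it can save some head-scratching to check that the broker is actually listening. This is a rough sketch using only the standard library. The helper name `is_port_open` is made up, port 9092 is the one used in the code in this post, and 2181 is assumed to be the ZooKeeper client port (the usual default, so check your own zookeeper.properties). An open port only shows that something is listening, not that Kafka is healthy.

```python
import socket


def is_port_open(host: str, port: int, timeout: float = 1.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within `timeout` seconds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers connection refused, timeouts and unreachable hosts.
        return False


if __name__ == "__main__":
    # Assumed ports: 2181 for ZooKeeper, 9092 for the Kafka broker.
    for name, port in (("zookeeper", 2181), ("kafka", 9092)):
        state = "open" if is_port_open("localhost", port) else "closed"
        print(f"{name} port {port}: {state}")
```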

3.1. Producing messages to Kafka with Python:

    import json
    import traceback

    from kafka import KafkaProducer
    from kafka.errors import KafkaError


    def kafka_producer():
        producer = KafkaProducer(
            bootstrap_servers=['localhost:9092'],  # Kafka broker host and port
            key_serializer=lambda k: json.dumps(k).encode(),  # keys are sent as JSON strings
            value_serializer=lambda v: json.dumps(v).encode())  # values are sent as JSON strings
        data = {'msg': 'hello kafka,17:56!'}
        future = producer.send(
            'test',  # Kafka topic to send to
            key='1',  # same key goes to the same partition (when no partition is given explicitly)
            value=data,
            partition=0)  # explicitly send to partition 0
        print("send {}".format(str(data)))
        try:
            future.get(timeout=10)  # block until the send is confirmed or fails
        except KafkaError:  # raised when the send fails
            traceback.print_exc()


    if __name__ == '__main__':
        kafka_producer()
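
One thing that is easy to miss in the producer: the key goes through `json.dumps` too, so the key `'1'` lands in Kafka with quotes around it. Here is a small sketch that runs the same serializer expression without a broker, just to show the raw bytes. The function name `serialize` is only for illustration.

```python
import json


def serialize(obj) -> bytes:
    # Same expression as the key_serializer / value_serializer lambdas in the producer.
    return json.dumps(obj).encode()


key_bytes = serialize('1')
value_bytes = serialize({'msg': 'hello kafka,17:56!'})

print(key_bytes)    # b'"1"'
print(value_bytes)  # b'{"msg": "hello kafka,17:56!"}'

# Round trip: json.loads gives back the original objects.
print(json.loads(key_bytes), json.loads(value_bytes))
```

So anything on the consuming side that compares keys has to expect `b'"1"'` rather than `b'1'`, or decode the key with `json.loads` first.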

3.2. Subscribing to messages with Python:

    from kafka import KafkaConsumer

    # Set up Kafka consumer
    consumer = KafkaConsumer(
        # 'test',  # Topic to consume from
        'mbt_intelligent_control',
        bootstrap_servers=['localhost:9092'],  # Kafka broker addresses
        auto_offset_reset='earliest',  # Start from the earliest offset if the group has no committed offset
        enable_auto_commit=True,  # Commit offsets automatically in the background
        group_id='carbon-taos-consumer'  # Consumer group ID
    )

    # Consume messages from Kafka topic
    for message in consumer:
        print(message.value.decode('utf-8'))

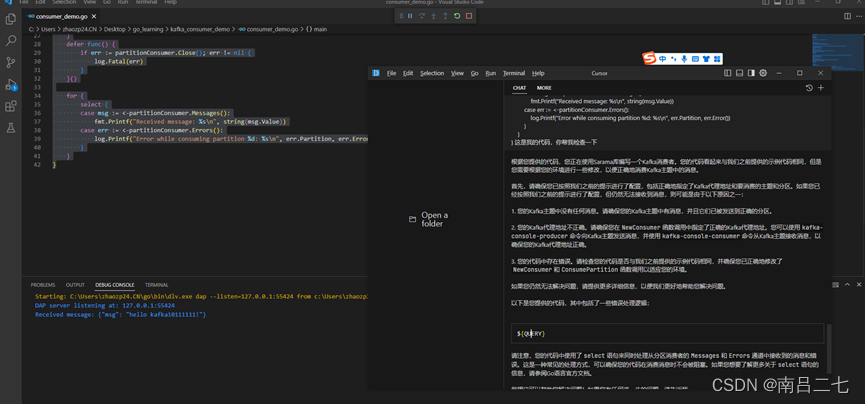
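
The consumer above prints the raw text of each message. Since the producer in 3.1 sends JSON, you may want a dict instead. Below is a minimal sketch of a decoder that falls back to plain text when the payload is not JSON. The name `decode_value` is made up here. kafka-python's `KafkaConsumer` accepts a `value_deserializer` callable, so a function like this could be passed in that way, in which case `message.value` is already decoded and the `.decode('utf-8')` call in the loop is no longer needed.

```python
import json


def decode_value(raw):
    """Decode a message value: parsed JSON if possible, otherwise plain text."""
    if raw is None:
        # Messages can carry no value at all; pass that through unchanged.
        return None
    text = raw.decode('utf-8', errors='replace')
    try:
        return json.loads(text)
    except json.JSONDecodeError:
        return text


# Byte strings standing in for message.value (no broker needed).
print(decode_value(b'{"msg": "hello kafka,17:56!"}'))
print(decode_value(b'not json at all'))
```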

3.3. Trying to subscribe to messages with Go:

With Cursor's help, I managed to consume the Kafka messages, hahaha
