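1. The role of activation functions

Activation functions exist to add nonlinearity to a neural network. Think about it: without an activation function, every layer is just a matrix multiplication, and no matter how many such layers you stack, the result is still a single matrix multiplication. Without any nonlinear structure, the model hardly counts as a neural network at all.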
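The collapse of stacked linear layers into one matrix can be checked with a small sketch. The weights `W1`, `W2` and the input `x` below are made-up values chosen only for illustration, and `matmul` is a hypothetical helper, not part of any library.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))]
            for i in range(len(a))]

W1 = [[1.0, 2.0], [0.0, -1.0]]   # hypothetical first-layer weights
W2 = [[0.5, 1.0], [3.0, 0.0]]    # hypothetical second-layer weights
x = [[2.0], [-1.0]]              # input as a column vector

# Two layers with no activation in between: W2 @ (W1 @ x)
two_layers = matmul(W2, matmul(W1, x))

# A single layer whose weight matrix is the product W2 @ W1
one_layer = matmul(matmul(W2, W1), x)

print(two_layers, one_layer)
```

Both expressions give the same vector, which is why depth alone adds no expressive power until a nonlinear function is placed between the layers.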

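2. Vanishing gradients

In a neural network, the vanishing gradient phenomenon is when gradient values decay to nearly 0 as they are propagated backward through the layers.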

3. The sigmoid function

$$
\begin{aligned}
f(x) &= \frac{1}{1+e^{-x}} = (1+e^{-x})^{-1} \\
f'(x) &= -1 \cdot (1+e^{-x})^{-2} \cdot (1+e^{-x})' \\
&= -1 \cdot (1+e^{-x})^{-2} \cdot e^{-x} \cdot (-x)' \\
&= -1 \cdot (1+e^{-x})^{-2} \cdot e^{-x} \cdot (-1) \\
&= \frac{e^{-x}}{(1+e^{-x})^{2}} \\
&= \frac{1+e^{-x}-1}{(1+e^{-x})^{2}} \\
&= \frac{1}{1+e^{-x}} - \frac{1}{(1+e^{-x})^{2}} \\
&= \frac{1}{1+e^{-x}} \cdot \left(1 - \frac{1}{1+e^{-x}}\right) \\
&= f(x) \cdot (1-f(x))
\end{aligned}
$$

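The derivative of sigmoid is relatively large only near x = 0, and even there its maximum is just 1/4;

where x is far from 0 in either direction (very large or very negative), the derivative is close to 0.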
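As a rough numerical check of the identity f'(x) = f(x)(1 - f(x)) and of the 1/4 maximum, the sketch below compares the closed form with a central finite difference. The function names and the step size `h` are illustrative choices, not taken from the original post.

```python
import math

def sigmoid(x):
    """f(x) = 1 / (1 + e^{-x})"""
    return 1.0 / (1.0 + math.exp(-x))

def sigmoid_grad(x):
    """Closed-form derivative: f(x) * (1 - f(x))."""
    s = sigmoid(x)
    return s * (1.0 - s)

def numeric_grad(f, x, h=1e-5):
    """Central finite-difference approximation of f'(x)."""
    return (f(x + h) - f(x - h)) / (2.0 * h)

# Compare the two derivatives near 0 and far away from 0.
for x in (-10.0, -2.0, 0.0, 2.0, 10.0):
    print(x, sigmoid_grad(x), numeric_grad(sigmoid, x))
```

The two columns should agree closely, with the largest value at x = 0 and values near 0 at both ends of the range.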

In backpropagation, $\frac{\partial L}{\partial x} = \frac{\partial L}{\partial y} \cdot \frac{\partial y}{\partial x}$, where $y = f(x)$ is the sigmoid output. Because $\frac{\partial y}{\partial x}$ is at most 1/4, $\frac{\partial L}{\partial x}$ ends up significantly smaller than $\frac{\partial L}{\partial y}$:

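- If x is very large or very negative, the gradient with respect to x is close to 0.

- Even when x is close to 0, its gradient is at most 1/4 of the gradient of y. If many layers use the sigmoid activation, the gradients of the earlier layers become very small.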
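To make the shrinkage concrete, here is a toy sketch that chains several sigmoids and multiplies their local derivatives, as the chain rule does. It is a simplification under stated assumptions: scalar input, no weights or biases, and a hypothetical helper name `chain_gradient`. Real networks also multiply by weight matrices, which can make things better or worse.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def chain_gradient(x, num_layers):
    """d(output)/d(input) for num_layers stacked sigmoids with no weights."""
    grad = 1.0
    for _ in range(num_layers):
        y = sigmoid(x)
        grad *= y * (1.0 - y)   # local derivative of this layer, at most 1/4
        x = y                   # this layer's output feeds the next layer
    return grad

# Compare the chained gradient with the upper bound (1/4)^n.
for n in (1, 5, 10):
    print(n, chain_gradient(0.0, n), 0.25 ** n)
```

Each extra layer multiplies the gradient by a factor of at most 1/4, so after n layers the gradient is bounded by (1/4)^n.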

4. The hyperbolic tangent function: $\tanh(x)$

$$
\begin{aligned}
f(x) &= \tanh(x) \\
&= \frac{e^{x} - e^{-x}}{e^{x} + e^{-x}}
\end{aligned}
$$

Let $a = e^{x}$ and $b = e^{-x}$. By the quotient rule $\left(\frac{u}{v}\right)' = \frac{u'v - uv'}{v^{2}}$, we have

$$
\begin{aligned}
f'(x) &= \left(\frac{e^{x} - e^{-x}}{e^{x} + e^{-x}}\right)' \\
&= \left(\frac{a - b}{a + b}\right)' \\
&= \frac{(a - b)'(a + b) - (a - b)(a + b)'}{(a + b)^{2}}
\end{aligned}
$$

$$
(a - b)' = (e^{x} - e^{-x})' = e^{x} - (-1) \cdot e^{-x} = e^{x} + e^{-x} = a + b
$$

$$
(a + b)' = (e^{x} + e^{-x})' = e^{x} + (-1) \cdot e^{-x} = e^{x} - e^{-x} = a - b
$$

$$
\begin{aligned}
& \frac{(a - b)'(a + b) - (a - b)(a + b)'}{(a + b)^{2}} \\
&= \frac{(a + b)^{2} - (a - b)^{2}}{(a + b)^{2}} \\
&= 1 - \left(\frac{a - b}{a + b}\right)^{2} \\
&= 1 - \left(\frac{e^{x} - e^{-x}}{e^{x} + e^{-x}}\right)^{2} \\
&= 1 - (\tanh(x))^{2} \\
&= 1 - (f(x))^{2}
\end{aligned}
$$

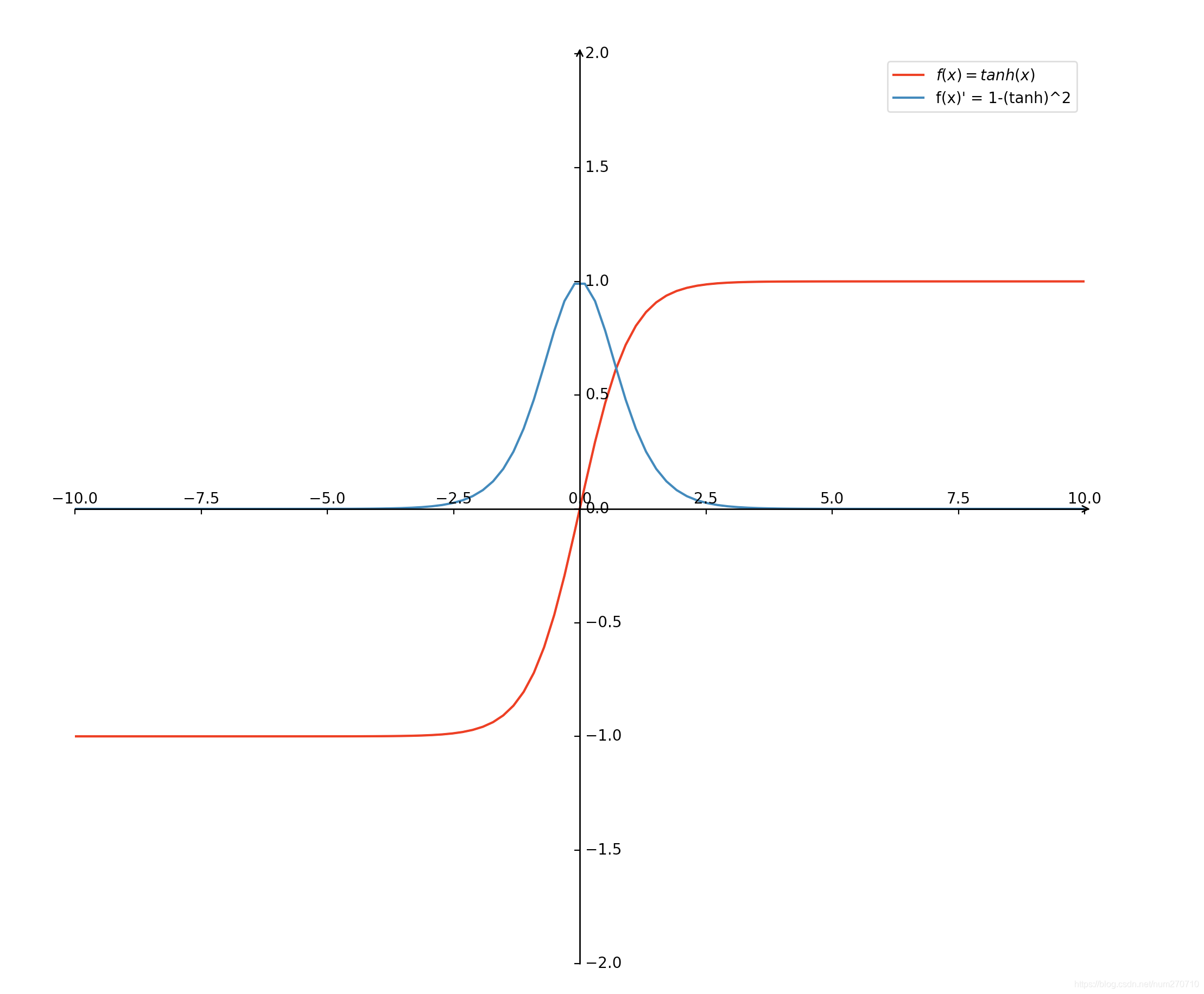

- Plot of the hyperbolic tangent function and its derivative

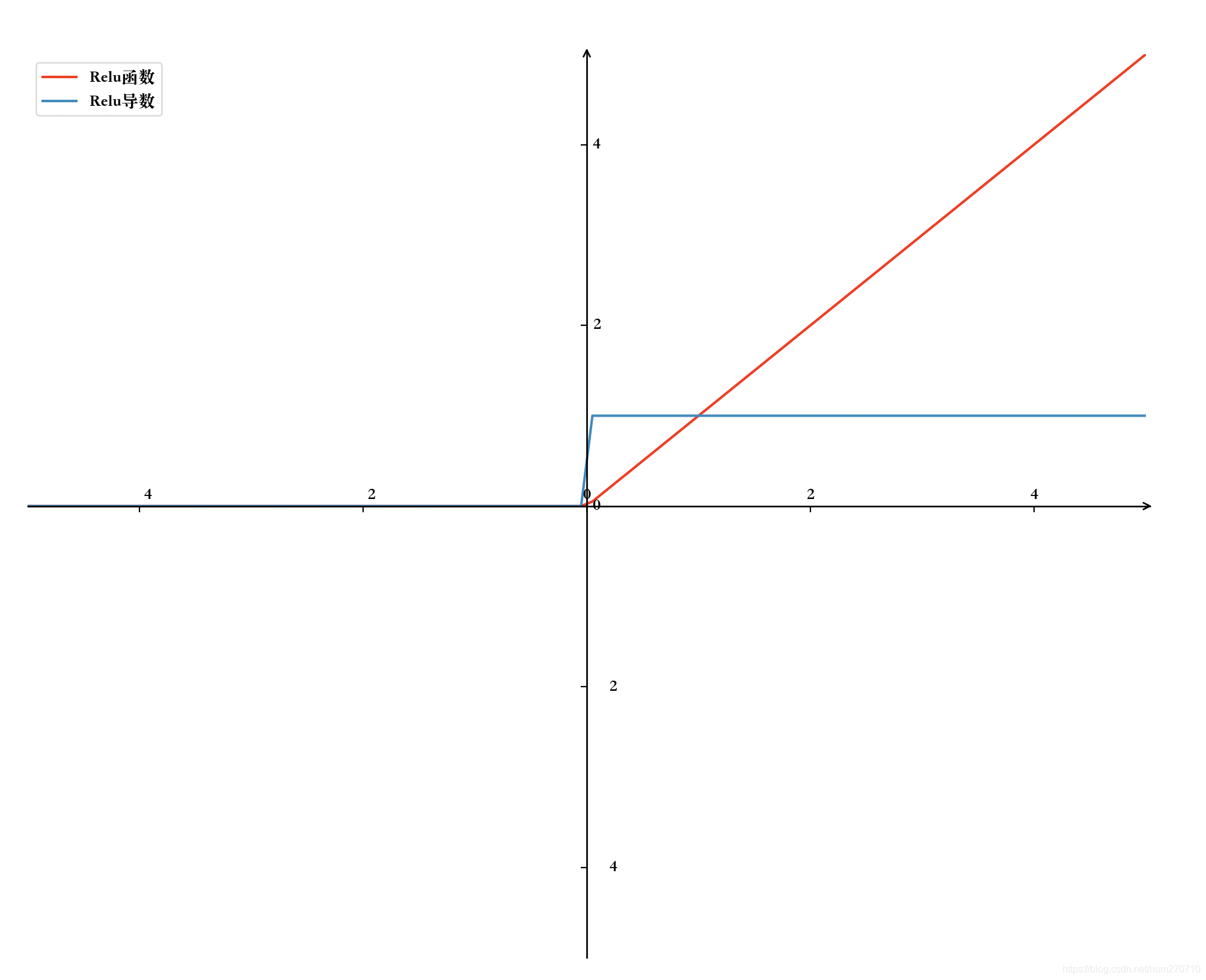
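The tanh derivation can be sanity-checked numerically in the same spirit. This is only a sketch: `tanh_from_exp` rebuilds tanh from the $a = e^{x}$, $b = e^{-x}$ substitution used in the derivation, and the other function names and the step size `h` are illustrative assumptions.

```python
import math

def tanh_from_exp(x):
    """tanh(x) written as (a - b) / (a + b) with a = e^x, b = e^-x."""
    a, b = math.exp(x), math.exp(-x)
    return (a - b) / (a + b)

def tanh_grad(x):
    """Closed-form derivative: 1 - tanh(x)^2."""
    return 1.0 - math.tanh(x) ** 2

def numeric_grad(f, x, h=1e-5):
    """Central finite-difference approximation of f'(x)."""
    return (f(x + h) - f(x - h)) / (2.0 * h)

# Compare the closed form with the finite-difference estimate.
for x in (-3.0, -1.0, 0.0, 1.0, 3.0):
    print(x, tanh_grad(x), numeric_grad(math.tanh, x))
```

Unlike sigmoid, the derivative of tanh reaches 1 at x = 0, but it still decays toward 0 for inputs far from 0, so saturation remains possible.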

5. The ReLU function

$$
f(x) = \max(0, x)
$$

$$
f'(x) = \begin{cases} 0, & x < 0 \\ 1, & x \geq 0 \end{cases}
$$
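A minimal scalar sketch of ReLU and its derivative follows. It uses the same convention as the formula above, with the derivative at x = 0 set to 1. Mathematically the derivative is undefined at exactly 0, so that value is a convention rather than a fact, and other implementations may choose 0 there. The function names are illustrative.

```python
def relu(x):
    """f(x) = max(0, x)"""
    return max(0.0, x)

def relu_grad(x):
    """Derivative of ReLU; the value at x == 0 is a convention (1 here)."""
    return 1.0 if x >= 0 else 0.0

# Print the value and derivative on both sides of 0.
for x in (-2.0, 0.0, 3.0):
    print(x, relu(x), relu_grad(x))
```

For positive inputs the local derivative is exactly 1, so multiplying it across many layers does not shrink the gradient the way the sigmoid factor of at most 1/4 does. For negative inputs the derivative is 0 and no gradient flows through that unit.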
