Environment:

- CentOS 7

- JanusGraph 0.5.2

- CDH 6.1.0(HBase 2.1.0, Spark 2.4.0)

- Java 8

I. pom.xml configuration

Keep the JAR versions as close as possible to those used in the big data cluster environment, to avoid JAR conflicts.
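When a conflict does show up, it helps to know which JAR actually supplied a given class at runtime. The following is a minimal sketch that uses only the JDK. `ClassOrigin` and `locate` are hypothetical names introduced here for illustration, and the class name in the usage note is only an example.

```java
import java.net.URL;
import java.security.CodeSource;

final class ClassOrigin {

    /** Returns the location (usually a JAR path) a class was loaded from, or a note if unknown. */
    static String locate(Class<?> type) {
        CodeSource source = type.getProtectionDomain().getCodeSource();
        // Classes loaded by the JDK bootstrap loader have no CodeSource.
        if (source == null || source.getLocation() == null) {
            return "(bootstrap or unknown)";
        }
        URL location = source.getLocation();
        return location.toString();
    }

    public static void main(String[] args) throws ClassNotFoundException {
        // Fully qualified class names are passed as arguments.
        for (String name : args) {
            System.out.println(name + " -> " + locate(Class.forName(name)));
        }
    }
}
```

Running it with the same classpath as the job and an argument such as `com.google.common.base.Stopwatch` prints the JAR that Guava was loaded from, which can then be compared with the version declared in the pom.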

<dependencies>

<dependency>

<groupId>org.janusgraph</groupId>

<artifactId>janusgraph-driver</artifactId>

<version>0.5.2</version>

<exclusions>

<exclusion>

<artifactId>guava</artifactId>

<groupId>com.google.guava</groupId>

</exclusion>

</exclusions>

</dependency>

<dependency>

<groupId>org.janusgraph</groupId>

<artifactId>janusgraph-hadoop</artifactId>

<version>0.5.2</version>

<exclusions>

<exclusion>

<artifactId>logback-classic</artifactId>

<groupId>ch.qos.logback</groupId>

</exclusion>

<exclusion>

<artifactId>metrics-core</artifactId>

<groupId>io.dropwizard.metrics</groupId>

</exclusion>

<exclusion>

<artifactId>metrics-graphite</artifactId>

<groupId>io.dropwizard.metrics</groupId>

</exclusion>

</exclusions>

</dependency>

<dependency>

<groupId>org.janusgraph</groupId>

<artifactId>janusgraph-hbase</artifactId>

<version>0.5.2</version>

<exclusions>

<exclusion>

<artifactId>guava</artifactId>

<groupId>com.google.guava</groupId>

</exclusion>

</exclusions>

</dependency>

<dependency>

<groupId>org.apache.tinkerpop</groupId>

<artifactId>gremlin-driver</artifactId>

<version>3.4.6</version>

<exclusions>

<exclusion>

<artifactId>guava</artifactId>

<groupId>com.google.guava</groupId>

</exclusion>

</exclusions>

</dependency>

<dependency>

<groupId>com.google.guava</groupId>

<artifactId>guava</artifactId>

<version>18.0</version>

</dependency>

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-core_2.11</artifactId>

<version>2.4.0</version>

<scope>provided</scope>

<exclusions>

<exclusion>

<artifactId>guava</artifactId>

<groupId>com.google.guava</groupId>

</exclusion>

<exclusion>

<artifactId>jackson-databind</artifactId>

<groupId>com.fasterxml.jackson.core</groupId>

</exclusion>

<exclusion>

<artifactId>jackson-core</artifactId>

<groupId>com.fasterxml.jackson.core</groupId>

</exclusion>

<exclusion>

<artifactId>jackson-annotations</artifactId>

<groupId>com.fasterxml.jackson.core</groupId>

</exclusion>

<exclusion>

<artifactId>jackson-module-scala_2.11</artifactId>

<groupId>com.fasterxml.jackson.module</groupId>

</exclusion>

<exclusion>

<artifactId>log4j</artifactId>

<groupId>log4j</groupId>

</exclusion>

<exclusion>

<artifactId>javassist</artifactId>

<groupId>org.javassist</groupId>

</exclusion>

</exclusions>

</dependency>

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-sql_2.11</artifactId>

<version>2.4.0</version>

<scope>provided</scope>

<exclusions>

<exclusion>

<artifactId>guava</artifactId>

<groupId>com.google.guava</groupId>

</exclusion>

<exclusion>

<artifactId>jackson-databind</artifactId>

<groupId>com.fasterxml.jackson.core</groupId>

</exclusion>

<exclusion>

<artifactId>jackson-core</artifactId>

<groupId>com.fasterxml.jackson.core</groupId>

</exclusion>

</exclusions>

</dependency>

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-yarn_2.11</artifactId>

<version>2.4.0</version>

<scope>provided</scope>

<exclusions>

<exclusion>

<artifactId>guava</artifactId>

<groupId>com.google.guava</groupId>

</exclusion>

</exclusions>

</dependency>

<dependency>

<groupId>org.apache.tinkerpop</groupId>

<artifactId>spark-gremlin</artifactId>

<version>3.4.6</version>

<exclusions>

<exclusion>

<artifactId>guava</artifactId>

<groupId>com.google.guava</groupId>

</exclusion>

<exclusion>

<artifactId>jackson-databind</artifactId>

<groupId>com.fasterxml.jackson.core</groupId>

</exclusion>

</exclusions>

</dependency>

<dependency>

<groupId>org.apache.tinkerpop</groupId>

<artifactId>hadoop-gremlin</artifactId>

<version>3.4.6</version>

<exclusions>

<exclusion>

<artifactId>guava</artifactId>

<groupId>com.google.guava</groupId>

</exclusion>

</exclusions>

</dependency>

<dependency>

<groupId>org.apache.hbase</groupId>

<artifactId>hbase-mapreduce</artifactId>

<version>2.1.0</version>

<scope>provided</scope>

<exclusions>

<exclusion>

<artifactId>jackson-databind</artifactId>

<groupId>com.fasterxml.jackson.core</groupId>

</exclusion>

<exclusion>

<artifactId>jackson-annotations</artifactId>

<groupId>com.fasterxml.jackson.core</groupId>

</exclusion>

<exclusion>

<artifactId>jackson-core</artifactId>

<groupId>com.fasterxml.jackson.core</groupId>

</exclusion>

<exclusion>

<artifactId>hadoop-mapreduce-client-core</artifactId>

<groupId>org.apache.hadoop</groupId>

</exclusion>

<exclusion>

<artifactId>guava</artifactId>

<groupId>com.google.guava</groupId>

</exclusion>

<exclusion>

<artifactId>metrics-core</artifactId>

<groupId>io.dropwizard.metrics</groupId>

</exclusion>

</exclusions>

</dependency>

<dependency>

<groupId>org.apache.hbase</groupId>

<artifactId>hbase-client</artifactId>

<version>2.1.0</version>

<scope>provided</scope>

<exclusions>

<exclusion>

<artifactId>jackson-annotations</artifactId>

<groupId>com.fasterxml.jackson.core</groupId>

</exclusion>

<exclusion>

<artifactId>jackson-core</artifactId>

<groupId>com.fasterxml.jackson.core</groupId>

</exclusion>

<exclusion>

<artifactId>jackson-databind</artifactId>

<groupId>com.fasterxml.jackson.core</groupId>

</exclusion>

<exclusion>

<artifactId>jcodings</artifactId>

<groupId>org.jruby.jcodings</groupId>

</exclusion>

<exclusion>

<artifactId>metrics-core</artifactId>

<groupId>io.dropwizard.metrics</groupId>

</exclusion>

</exclusions>

</dependency>

<dependency>

<groupId>org.apache.hbase</groupId>

<artifactId>hbase-common</artifactId>

<version>2.1.0</version>

<scope>provided</scope>

<exclusions>

<exclusion>

<artifactId>jackson-databind</artifactId>

<groupId>com.fasterxml.jackson.core</groupId>

</exclusion>

</exclusions>

</dependency>

<dependency>

<groupId>org.apache.hbase</groupId>

<artifactId>hbase-protocol</artifactId>

<version>2.1.0</version>

<scope>provided</scope>

</dependency>

<dependency>

<groupId>org.apache.hbase</groupId>

<artifactId>hbase-server</artifactId>

<version>2.1.0</version>

<scope>provided</scope>

<exclusions>

<exclusion>

<artifactId>jackson-annotations</artifactId>

<groupId>com.fasterxml.jackson.core</groupId>

</exclusion>

<exclusion>

<artifactId>jackson-core</artifactId>

<groupId>com.fasterxml.jackson.core</groupId>

</exclusion>

<exclusion>

<artifactId>jackson-databind</artifactId>

<groupId>com.fasterxml.jackson.core</groupId>

</exclusion>

<exclusion>

<artifactId>jersey-server</artifactId>

<groupId>org.glassfish.jersey.core</groupId>

</exclusion>

<exclusion>

<artifactId>jersey-container-servlet-core</artifactId>

<groupId>org.glassfish.jersey.containers</groupId>

</exclusion>

<exclusion>

<artifactId>metrics-core</artifactId>

<groupId>io.dropwizard.metrics</groupId>

</exclusion>

</exclusions>

</dependency>

<dependency>

<groupId>com.jcabi</groupId>

<artifactId>jcabi-log</artifactId>

<version>0.14</version>

</dependency>

</dependencies>

JanusGraph is built on TinkerPop, and TinkerPop's code checks the version attribute in MANIFEST.MF, so the attribute must be added when packaging; otherwise an error is thrown:

<plugin>

<groupId>org.apache.maven.plugins</groupId>

<artifactId>maven-jar-plugin</artifactId>

<version>2.3.1</version>

<configuration>

<archive>

<manifest>

<addClasspath>true</addClasspath>

</manifest>

<manifestEntries>

<version>1.0</version>

</manifestEntries>

</archive>

</configuration>

</plugin>

II. resources configuration

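Put the four configuration files from the big data environment into the resources directory.

Comment out the following configuration in core-site.xml: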

<!-- <property>-->

<!-- <name>net.topology.script.file.name</name>-->

<!-- <value>/etc/hadoop/conf.cloudera.yarn/topology.py</value>-->

<!-- </property>-->

III. Graph configuration

# Copyright 2020 JanusGraph Authors

#

# Licensed under the Apache License, Version 2.0 (the "License");

# you may not use this file except in compliance with the License.

# You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

#

# Hadoop Graph Configuration

#

gremlin.graph=org.apache.tinkerpop.gremlin.hadoop.structure.HadoopGraph

gremlin.hadoop.graphReader=org.janusgraph.hadoop.formats.hbase.HBaseInputFormat

gremlin.hadoop.graphWriter=org.apache.tinkerpop.gremlin.hadoop.structure.io.gryo.GryoOutputFormat

gremlin.hadoop.jarsInDistributedCache=true

gremlin.hadoop.inputLocation=none

gremlin.hadoop.outputLocation=output

gremlin.spark.persistContext=true

gremlin.hadoop.defaultGraphComputer=org.apache.tinkerpop.gremlin.spark.process.computer.SparkGraphComputer

#

# JanusGraph HBase InputFormat configuration

#

janusgraphmr.ioformat.conf.storage.backend=hbase

janusgraphmr.ioformat.conf.storage.hostname=<ZooKeeper IP address>

janusgraphmr.ioformat.conf.storage.hbase.table=<HBase table name>

#

# SparkGraphComputer Configuration

#

spark.master=yarn

spark.submit.deployMode=client

spark.yarn.queue=<queue name>

spark.executor.memory=4g

spark.driver.memory=2g

# Path to the Spark JARs; upload the lib directory of the CDH Spark client to this location

spark.yarn.jars=hdfs://ip:port/spark-jars/*.jar

spark.serializer=org.apache.spark.serializer.KryoSerializer

spark.kryo.registrator=org.janusgraph.hadoop.serialize.JanusGraphKryoRegistrator

# JanusGraph lib directory

spark.executor.extraClassPath=/app/janusgraph/lib/*

IV. Code implementation

package net.demo.graph;

import org.apache.tinkerpop.gremlin.process.traversal.dsl.graph.GraphTraversalSource;

import org.apache.tinkerpop.gremlin.spark.process.computer.SparkGraphComputer;

import org.apache.tinkerpop.gremlin.structure.Graph;

import org.apache.tinkerpop.gremlin.structure.util.GraphFactory;

public class GraphSparkDemo {

public static void main(String[] args) throws Exception {

System.setProperty("HADOOP_GREMLIN_LIBS", "hdfs://ip:port/spark-jars/*.jar");

Graph graph = GraphFactory.open("./read-hbase-spark.properties");

GraphTraversalSource g = graph.traversal().withComputer(SparkGraphComputer.class);

long count = g.V().count().next();

System.out.println("v count:" + count);

graph.close();

}

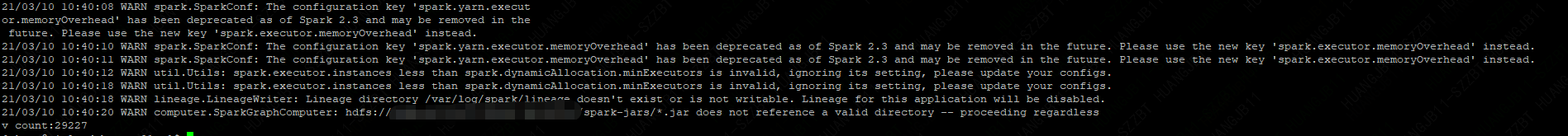

}五、执行结果

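VI. Pitfalls

1. JAR conflicts: the Guava and Jackson versions that JanusGraph uses differ from those in the environment. This caused the most problems.

2. Debugging on Windows produces an "unread block data" error; it does not occur when the packaged JAR is run in the cluster environment.

3. A missing version attribute in MANIFEST.MF causes an error, because the Gremlin code checks whether this attribute exists.

4. If the packaged JAR contains .SF, .RSA, or .DSA signature files under META-INF, running the Spark job throws an exception; these files must be excluded when packaging.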
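The two packaging problems in this list (the missing version attribute and leftover signature files) can be checked on the built JAR before submitting the job. The following is a minimal sketch that uses only `java.util.jar` from the JDK. `JarPackagingCheck` and its method names are hypothetical helpers introduced here, not part of JanusGraph or TinkerPop, and the sketch assumes the signature files sit under META-INF.

```java
import java.io.File;
import java.io.IOException;
import java.util.ArrayList;
import java.util.Enumeration;
import java.util.List;
import java.util.Locale;
import java.util.jar.JarEntry;
import java.util.jar.JarFile;
import java.util.jar.Manifest;

final class JarPackagingCheck {

    /** Returns the main-section "version" attribute of MANIFEST.MF, or null if it is absent. */
    static String manifestVersion(File jar) throws IOException {
        // verify=false: the archive is only inspected, signatures are not validated.
        try (JarFile jarFile = new JarFile(jar, false)) {
            Manifest manifest = jarFile.getManifest();
            return manifest == null ? null : manifest.getMainAttributes().getValue("version");
        }
    }

    /** Lists META-INF entries that look like signature files (.SF, .RSA, .DSA). */
    static List<String> signatureFiles(File jar) throws IOException {
        List<String> found = new ArrayList<>();
        try (JarFile jarFile = new JarFile(jar, false)) {
            Enumeration<JarEntry> entries = jarFile.entries();
            while (entries.hasMoreElements()) {
                String name = entries.nextElement().getName();
                String upper = name.toUpperCase(Locale.ROOT);
                boolean signature = upper.endsWith(".SF") || upper.endsWith(".RSA") || upper.endsWith(".DSA");
                if (upper.startsWith("META-INF/") && signature) {
                    found.add(name);
                }
            }
        }
        return found;
    }

    public static void main(String[] args) throws IOException {
        File jar = new File(args[0]); // path to the packaged application JAR
        System.out.println("manifest version: " + manifestVersion(jar));
        System.out.println("signature files:  " + signatureFiles(jar));
    }
}
```

A `null` version or a non-empty signature list suggests the JAR will hit pitfall 3 or 4 respectively, so the packaging configuration should be adjusted before running on the cluster.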

VII. References

https://www.jianshu.com/p/8be5eeae59fc?from=message

https://docs.janusgraph.org/advanced-topics/hadoop/
