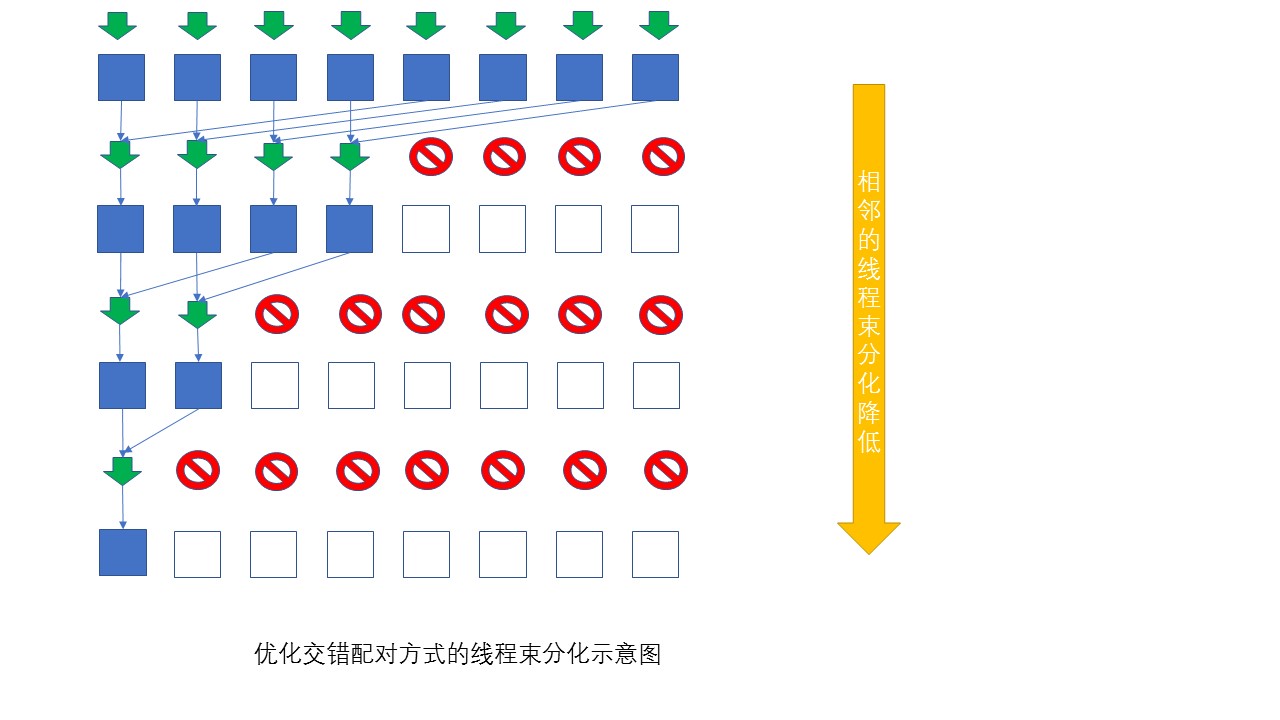

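This section implements a CUDA version of the parallel sum reduction, following the interleaved-pair scheme described earlier for the multithreaded version. At each step the active threads form the contiguous range `tid < stride`, so warp divergence is avoided for as long as the stride is at least the warp size. As a result, this version needs no optimization of the kind applied to the neighbored-pair approach.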
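The divergence claim can be checked with a small host-side model. The sketch below is plain C++ (no GPU needed) and rests on assumptions that are not part of this section: a warp size of 32, a block size that is a multiple of 32, and, for contrast, the unoptimized neighbored rule `tid % (2 * stride) == 0`. The helper names `countDivergentWarps` and `printDivergenceTable` are hypothetical.

```cpp
#include <cstdio>

// Hypothetical helper: counts the warps of one block in which some, but not
// all, lanes do work at the given stride (a simple model of warp divergence).
// Assumes a warp size of 32 and a blockSize that is a multiple of 32.
int countDivergentWarps(int blockSize, int stride, bool interleaved)
{
    const int warpSize = 32;
    int divergent = 0;
    for (int warp = 0; warp < blockSize / warpSize; ++warp) {
        int activeLanes = 0;
        for (int lane = 0; lane < warpSize; ++lane) {
            int tid = warp * warpSize + lane;
            // Interleaved pairing: the first `stride` threads are active.
            // Unoptimized neighbored pairing (assumed here for contrast):
            // every (2 * stride)-th thread is active.
            bool active = interleaved ? (tid < stride)
                                      : (tid % (2 * stride) == 0);
            if (active) ++activeLanes;
        }
        // A warp diverges in this model when it is only partly active.
        if (activeLanes > 0 && activeLanes < warpSize) ++divergent;
    }
    return divergent;
}

// Prints the divergent-warp count of both pairings for every stride.
void printDivergenceTable(int blockSize)
{
    for (int stride = blockSize / 2; stride > 0; stride >>= 1)
        std::printf("stride %4d: interleaved %2d, neighbored %2d\n", stride,
                    countDivergentWarps(blockSize, stride, true),
                    countDivergentWarps(blockSize, stride, false));
}
```

In this model, a block of 1024 threads has no divergent warps under the interleaved rule while the stride is 32 or larger, and exactly one divergent warp for each of the last five steps (strides 16 down to 1). Under the unoptimized neighbored rule, all 32 warps are divergent at stride 1.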

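Compared with the optimized neighbored-pair version, the interleaved version runs 1.14 times as fast. The gain is modest, mainly because performance is limited by the global memory load and store pattern.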
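A rough count of memory operations suggests why the gain is small. The sketch below assumes the in-place scheme used by the kernel listed in this section, where every partial sum is read from and written back to global memory (`data[tid] += data[tid + stride]`), and a power-of-two block size. The names `AccessCount` and `countGlobalAccesses` are hypothetical, and caching effects are ignored.

```cpp
// Hypothetical tally of the work done by one block of the in-place reduction.
struct AccessCount {
    long long loads;   // reads from global memory
    long long stores;  // writes to global memory
    long long adds;    // integer additions
};

// Counts global memory accesses for one block, assuming blockSize is a
// power of two and every access goes to global memory (no caching).
AccessCount countGlobalAccesses(int blockSize)
{
    AccessCount c = {0, 0, 0};
    for (int stride = blockSize / 2; stride > 0; stride >>= 1) {
        // Each of the `stride` active threads loads data[tid] and
        // data[tid + stride], adds them, and stores the result to data[tid].
        c.loads += 2LL * stride;
        c.stores += stride;
        c.adds += stride;
    }
    // Thread 0 finally loads data[0] and stores the block's partial sum.
    c.loads += 1;
    c.stores += 1;
    return c;
}
```

For a block of 1024 threads this simple model gives 2047 loads and 1024 stores for 1023 additions, which is about three global memory accesses per addition.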

Schematic of the parallel reduction:

```cuda
#include "cuda_runtime.h"
#include "device_launch_parameters.h"
#include <stdio.h>
#include <math.h>
#include <stdlib.h>

// Error-checking macro: prints the location and the CUDA error string, then exits
#define CHECK(call)                                                 \
    do {                                                            \
        const cudaError_t status = (call);                          \
        if (status != cudaSuccess)                                  \
        {                                                           \
            printf("File: %s, function: %s, line: %d\n", __FILE__,  \
                   __FUNCTION__, __LINE__);                         \
            printf("%s\n", cudaGetErrorString(status));             \
            exit(1);                                                \
        }                                                           \
    } while (0)

// Kernel: interleaved-pair reduction, performed in place in global memory.
// Assumes blockDim.x is a power of two.
__global__ void Kernel(int *d_data, int *d_local_sum, int N)
{
    int tid = threadIdx.x;
    int index = blockIdx.x * blockDim.x + threadIdx.x;
    // Pointer to the first element handled by this block
    int *data = d_data + blockIdx.x * blockDim.x;

    for (int stride = blockDim.x / 2; stride > 0; stride >>= 1)
    {
        // The bounds check sits inside the loop so that every thread of the
        // block reaches __syncthreads(), even if N is not a multiple of blockDim.x
        if (tid < stride && index + stride < N)
            data[tid] += data[tid + stride];
        __syncthreads();
    }

    // Thread 0 writes the partial sum of this block
    if (tid == 0)
    {
        d_local_sum[blockIdx.x] = data[0];
    }
}

// Main function
int main()
{
    // Basic parameters
    CHECK(cudaSetDevice(0));
    const int N = 16777216;
    int local_length = 1024;
    int total_sum = 0;
    dim3 grid(((N + local_length - 1) / local_length), 1);
    dim3 block(local_length, 1);
    int *h_data = nullptr;
    int *h_local_sum = nullptr;
    int *d_data = nullptr;
    int *d_local_sum = nullptr;

    // Allocate host and device memory and initialize the array
    h_data = (int*)malloc(N * sizeof(int));
    h_local_sum = (int*)malloc(int(grid.x) * sizeof(int));
    CHECK(cudaMalloc((void**)&d_data, N * sizeof(int)));
    CHECK(cudaMalloc((void**)&d_local_sum, int(grid.x) * sizeof(int)));
    for (int i = 0; i < N; i++)
        h_data[i] = int(10 * sin(0.02 * 3.14 * i)); // keep the values small so the final sum stays within the range of int

    // Copy the data to the device
    CHECK(cudaMemcpy(d_data, h_data, N * sizeof(int), cudaMemcpyHostToDevice));

    // Launch the kernel once: it reduces d_data in place, so relaunching it
    // on the same buffer would add the partial sums again
    Kernel<<<grid, block>>>(d_data, d_local_sum, N);
    CHECK(cudaGetLastError());
    CHECK(cudaDeviceSynchronize());

    // Copy the per-block sums back to the host
    CHECK(cudaMemcpy(h_local_sum, d_local_sum, int(grid.x) * sizeof(int),
                     cudaMemcpyDeviceToHost));

    // Add up the per-block sums on the host
    for (int i = 0; i < int(grid.x); i++)
    {
        total_sum += h_local_sum[i];
    }
    printf("%d \n", total_sum);

    // Free memory and reset the device
    free(h_data);
    free(h_local_sum);
    CHECK(cudaFree(d_data));
    CHECK(cudaFree(d_local_sum));
    CHECK(cudaDeviceReset());
    //getchar();
    return 0;
}
```
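To check the GPU result, the same interleaved-pair reduction can be modelled on the host and compared against a plain sequential sum. This is a sketch rather than part of the original program: `hostSequentialSum` and `hostInterleavedReduce` are hypothetical helper names, the block length is assumed to be a power of two, and elements past the end of the input are treated as zero.

```cpp
#include <cstddef>
#include <vector>

// Reference result: plain sequential sum of all elements.
long long hostSequentialSum(const std::vector<int>& data)
{
    long long sum = 0;
    for (int v : data) sum += v;
    return sum;
}

// Host model of the interleaved-pair reduction: each block of `blockLen`
// elements is reduced in place with strides blockLen/2, blockLen/4, ..., 1,
// and the per-block results are then added up.
// `data` is taken by value because the reduction overwrites it.
long long hostInterleavedReduce(std::vector<int> data, std::size_t blockLen)
{
    long long total = 0;
    for (std::size_t base = 0; base < data.size(); base += blockLen) {
        for (std::size_t stride = blockLen / 2; stride > 0; stride >>= 1) {
            // Plays the role of the threads with tid < stride.
            for (std::size_t tid = 0; tid < stride; ++tid) {
                std::size_t partner = base + tid + stride;
                // Skip pairs whose partner lies past the end of the input.
                if (partner < data.size())
                    data[base + tid] += data[partner];
            }
        }
        total += data[base];  // partial sum of this block
    }
    return total;
}
```

Both functions should return the same value for any input. For the program above, applying `hostSequentialSum` to a `std::vector<int>` copy of `h_data` gives a value to compare with `total_sum`.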
