import numpy as np


class linear_model:
    """Univariate linear model y = w * x + b, trained by gradient descent."""

    def __init__(self, w, b, lr):
        self.w = w    # slope
        self.b = b    # intercept
        self.lr = lr  # learning rate
        self.ypred_minus_ytrue = 0
        self.x = 0
        self.grad_w = 0
        self.grad_b = 0

    def forward(self, x):
        # cache the input and return the prediction
        self.x = x
        return self.w * x + self.b

    def backward(self, diff, diff_mul_x):
        # update the gradient
        # d cost / d w = 2 * mean(x * (y_pred - y_true))  [1]
        # d cost / d b = 2 * mean(y_pred - y_true)        [2]
        # the constant factor 2 is dropped below; this only rescales the learning rate
        self.grad_w = diff_mul_x  # [1]
        self.grad_b = diff        # [2]
        # gradient descent step
        self.w = self.w - self.lr * self.grad_w
        self.b = self.b - self.lr * self.grad_b


def loss_function(y_pred, y_true, x):
    # mean residual and mean of x * residual, used as the gradient terms
    diff = np.mean(y_pred - y_true)
    diff_mul_x = np.mean(x * (y_pred - y_true))
    # return the mean squared error together with both gradient terms
    return np.mean((y_pred - y_true) ** 2), diff, diff_mul_x


# main
if __name__ == "__main__":
    # random x, y data
    x = np.array([1, 2, 3, 4, 7])
    y = np.array([1, 3, 2, 5, 9])

    # take the reciprocal of x element-wise
    x = 1 / x

    # # square y
    # y = y**2

    loss_ls = []
    w_ls = []
    b_ls = []

    clf = linear_model(w=1, b=0, lr=0.01)
    epoch = 1000

    for i in range(epoch):
        y_pred = clf.forward(x)
        diff_square, diff, diff_mul_x = loss_function(y_pred, y, x)
        clf.backward(diff, diff_mul_x)
        print(diff_square, diff, clf.w, clf.b)

        loss_ls.append(diff_square)
        w_ls.append(clf.w)
        b_ls.append(clf.b)

    # plot
    import matplotlib.pyplot as plt

    # plot the loss and the parameters together in stacked subplots
    plt.subplot(2, 1, 1)
    plt.plot(range(epoch), loss_ls)
    plt.title('loss')

    plt.subplot(2, 1, 2)
    plt.plot(range(epoch), w_ls)
    plt.plot(range(epoch), b_ls)
    # legend
    plt.legend(['w', 'b'])
    plt.title('w,b')
    plt.show()

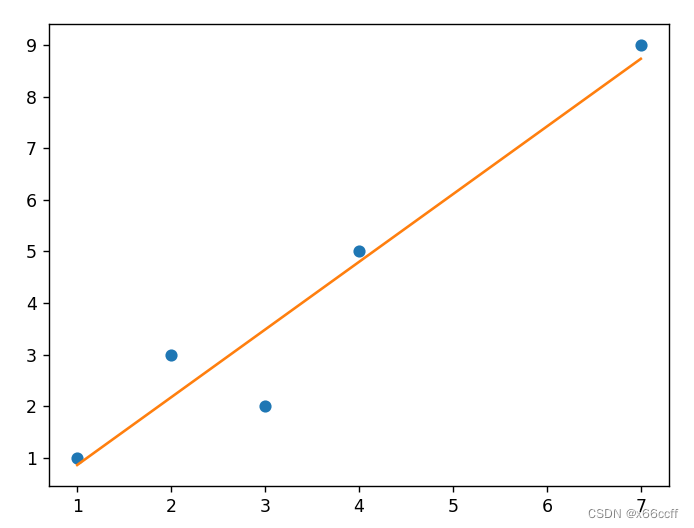
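The gradient formulas [1] and [2] noted in `backward` can be checked numerically. The sketch below compares them with central finite differences on the same data; the helper names `mse`, `analytic_grad`, `numeric_grad` and the step size `eps` are illustrative assumptions, not part of the original code.

```python
import numpy as np


def mse(w, b, x, y):
    """Mean squared error of the line w * x + b on the data (x, y)."""
    return np.mean((w * x + b - y) ** 2)


def analytic_grad(w, b, x, y):
    """Gradients from formulas [1] and [2], averaged over the samples."""
    err = w * x + b - y
    return 2 * np.mean(x * err), 2 * np.mean(err)


def numeric_grad(w, b, x, y, eps=1e-6):
    """Central finite differences; eps is an assumed, illustrative step size."""
    gw = (mse(w + eps, b, x, y) - mse(w - eps, b, x, y)) / (2 * eps)
    gb = (mse(w, b + eps, x, y) - mse(w, b - eps, x, y)) / (2 * eps)
    return gw, gb


# same data as above, with x replaced by its reciprocal
x = 1 / np.array([1, 2, 3, 4, 7])
y = np.array([1, 3, 2, 5, 9])

# evaluate both gradients at the initial parameters w=1, b=0
gw_a, gb_a = analytic_grad(1.0, 0.0, x, y)
gw_n, gb_n = numeric_grad(1.0, 0.0, x, y)
print("d cost / d w:", gw_a, gw_n)
print("d cost / d b:", gb_a, gb_n)
```

If the two values in each printed pair agree closely, the formulas are consistent with the loss. Note that this check includes the factor 2, whereas `backward` uses the gradient terms without it.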

# plot data and line
plt.plot(x, y, 'o')
plt.plot(x, clf.w * x + clf.b)
# set the title before show(); a title set afterwards is not drawn on the displayed figure
plt.title(label='w*x+b')
plt.show()
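As a reference point for the fitted line, the least-squares solution for a straight line can also be computed directly. This is a minimal sketch using `np.polyfit`, not part of the original training code; the names `w_star`, `b_star` and `err` are assumptions chosen for illustration.

```python
import numpy as np

# same data as above, with x replaced by its reciprocal
x = 1 / np.array([1, 2, 3, 4, 7])
y = np.array([1, 3, 2, 5, 9])

# a degree-1 polyfit returns the coefficients highest power first: [slope, intercept]
w_star, b_star = np.polyfit(x, y, deg=1)

# residuals and mean squared error at the least-squares solution
err = w_star * x + b_star - y
print("w:", w_star, "b:", b_star, "mse:", np.mean(err ** 2))
```

Comparing `clf.w` and `clf.b` after training with `w_star` and `b_star` gives a rough sense of whether gradient descent has converged for the chosen learning rate and number of epochs.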
